Ethical, Safe, and Responsible Artificial Intelligence Usage and Deployment

Artificial Intelligence is transforming how businesses operate—but with great power comes great responsibility. For SMB executives, deploying AI isn’t just about efficiency or automation—it’s about building systems that align with human values, avoid harm, and earn trust. As AI tools become more autonomous and integrated into daily operations, ethical risks—like bias, misinformation, surveillance, and opacity—can impact customers, employees, and long-term reputation. Forward-looking organizations now treat ethical AI not as a regulatory burden, but as a strategic asset that drives transparency, inclusion, and accountability.

To guide responsible use, frameworks such as the EU AI Act, UNESCO’s Recommendation on the Ethics of Artificial Intelligence, and NIST’s AI Risk Management Framework now outline clear expectations, building on earlier efforts such as the 2023 U.S. Executive Order on Safe, Secure, and Trustworthy AI. Influential thinkers such as Dr. Fei-Fei Li, Yoshua Bengio, and Luciano Floridi are helping shape a new generation of AI governance rooted in fairness, explainability, and shared benefit.

Key considerations for Ethical AI include:

- Transparency: Can users tell when AI is being used—and how it works?

- Bias & Fairness: Are outputs audited to avoid discrimination or skewed results?

- Privacy & Consent: Are people’s data and autonomy respected at every step?

- Human Oversight: Is there a clear path for people to monitor, intervene, or correct the AI?

- Accountability: Who is responsible when the AI gets it wrong?

Building Trust Through Responsible AI

As artificial intelligence becomes embedded in the fabric of modern business, ethical and responsible development practices are no longer optional—they are essential. At ReadAboutAI.com, we spotlight the frameworks and safeguards that leading companies are using to promote safe, transparent, and inclusive AI. Amazon’s Nova family of models exemplifies this shift, built from the ground up with responsible AI principles in mind—from privacy, security, and fairness to explainability, governance, and robustness. By embracing comprehensive approaches like alignment with human values, red-teaming adversarial tests, and watermarking generated content, companies are demonstrating how AI can be powerful and trustworthy. This page curates best practices and evolving standards so that SMB executives and managers can better understand, evaluate, and demand ethical AI in their own operations and partnerships.

Why Ethical AI Matters

Artificial intelligence isn’t just a productivity tool—it’s a force that shapes decisions, influences people, and impacts real-world outcomes. As AI becomes more embedded in everyday business operations, the ethical implications of how it’s developed, deployed, and governed deserve close attention. Responsible AI isn’t just about avoiding harm; it’s about building trust, ensuring fairness, and protecting your customers, employees, and brand.

To guide ethical and safe AI practices, consider these often-overlooked but critical areas:

- Privacy and Data Use: Is your AI trained on data that was ethically sourced, and is user data protected throughout the lifecycle?

- Bias and Fairness: Does the system treat all user groups equitably, or could it reinforce existing social or workplace inequalities?

- Transparency: Can users and stakeholders understand how decisions are made or how outputs are generated?

- Safety and Guardrails: What protections are in place to prevent harmful, offensive, or misleading content?

- Explainability: Can someone—especially a non-technical stakeholder—reasonably interpret the system’s output?

- Security and Robustness: Is your AI protected from adversarial attacks, data leaks, or unauthorized manipulations?

- Governance and Accountability: Who is responsible when something goes wrong, and what systems are in place to monitor outcomes over time?

At ReadAboutAI.com, we highlight frameworks, real-world practices, and corporate accountability efforts to help leaders make informed decisions about AI—balancing innovation with integrity.

Summary by ReadAboutAI.com

Ethical AI Developments Update: January 20, 2026

THE STATE OF ETHICAL AI

AI ethics has entered a decisive transition period. What was once dominated by principles, white papers, and voluntary guidelines is now colliding with real-world deployment, institutional pressure, and accelerating capability gains. The latest State of AI Ethics Report from the Montreal AI Ethics Institute makes this clear: ethical AI is no longer a theoretical debate about alignment or safety in isolation—it is an execution challenge shaped by governance readiness, workforce impact, procurement choices, and public trust. In short, ethics has become operational.

At the same time, leading AI voices are articulating divergent—but increasingly convergent—paths forward. Anthropic’s Dario Amodei outlines an optimistic vision in Machines of Loving Grace, where powerful AI could compress decades of scientific and medical progress—if governance, alignment, and societal intent keep pace with scaling intelligence. Human-centered AI advocates like Fei-Fei Li reinforce this framing, emphasizing that AI’s value lies in augmenting human capability, not replacing it. Meanwhile, organizations such as IASEAI, MAIEI, and the Responsible AI Institute signal a broader shift: ethical AI governance is becoming multi-stakeholder, institutionalized, and strategically relevant, even as formal regulation remains fragmented. Together, these signals point to a clear conclusion—ethical AI is no longer optional, abstract, or future-dated; it is becoming a core leadership responsibility.

WHAT’S OFTEN OVERLOOKED — WHERE SMBS GET CAUGHT OFF GUARD

Many SMB leaders assume ethical AI risk will arrive through formal regulation or high-profile enforcement actions. In practice, it usually shows up earlier and more quietly through procurement requirements, partner expectations, and platform rules. Enterprise customers, insurers, government agencies, and large vendors increasingly require vendors to demonstrate responsible AI use—even when no law explicitly mandates it. Businesses that adopt AI tools without clear governance, documentation, or usage policies often discover these gaps only after a deal stalls, a contract is delayed, or additional scrutiny is imposed mid-engagement.

Another common blind spot is where responsibility actually sits. As AI systems become embedded in everyday workflows, accountability is shifting away from model developers and toward the organizations that deploy them. “Using a third-party tool” does not transfer ethical, legal, or reputational risk. Data handling, IP exposure, customer impact, and workforce consequences increasingly fall on the deployer—not the lab that trained the model. Compounding this is a growing speed mismatch: AI capabilities evolve in months, while policies, training, and organizational norms evolve in years. Businesses that move quickly without parallel governance often find themselves reacting to problems—data leakage, unclear ownership of AI-generated content, or employee misuse—rather than managing AI deliberately from the start.

Machines of Loving Grace — How AI Could Transform the World for the Better

Dario Amodei (Anthropic), October 2024

TL;DR / Key Takeaway:

AI’s biggest impact may not be automation or productivity alone, but a compressed century of progress in health, science, and human well-being—if powerful AI is governed responsibly and deployed with clear societal intent.

Executive Summary

In Machines of Loving Grace, Anthropic CEO Dario Amodei lays out a rare, optimistic—but disciplined—vision of what powerful AI systems could enable if development goes right. Rather than focusing on hype or dystopia, Amodei frames AI as a multiplier of human intelligence, capable of dramatically accelerating scientific discovery, medical breakthroughs, and problem-solving across society—while still being constrained by real-world bottlenecks such as regulation, physical limits, and human institutions.

Amodei argues that AI’s greatest near- to mid-term impact will come from scaling intelligence, not replacing people. He describes future AI systems as “a country of geniuses in a data center”—able to reason, plan, run experiments, coordinate tasks, and iterate at speeds far beyond human capacity. This could compress 50–100 years of progress into 5–10 years in fields like biology, medicine, neuroscience, and materials science, enabling breakthroughs such as disease prevention, personalized treatments, and extended healthy lifespans.

At the same time, Amodei emphasizes that outcomes are not automatic. AI’s benefits will be shaped by governance, alignment, access, and institutional readiness. Without careful oversight, AI could just as easily amplify inequality, destabilize labor markets, or strengthen authoritarian control. His essay reflects Anthropic’s broader stance: AI optimism must be paired with risk mitigation, transparency, and long-term stewardship, not unchecked acceleration.

Relevance for Business

For SMB executives and managers, this essay reframes AI as a strategic capability, not just a software tool. The biggest opportunities will come from using AI to accelerate decision-making, experimentation, and problem-solving, while the biggest risks will come from ignoring governance, workforce adaptation, and ethical guardrails. Businesses that treat AI as infrastructure—planned, piloted, and governed—will benefit most as capabilities scale rapidly over the next decade.

Calls to Action

🔹 Reframe AI strategy from short-term automation to long-term intelligence amplification across the organization.

🔹 Prepare for faster cycles of change in healthcare, regulation, and knowledge work that may affect your industry sooner than expected.

🔹 Invest in governance early, including usage policies, oversight, and accountability—not after problems arise.

🔹 Upskill managers and teams to work with increasingly capable AI systems rather than around them.

🔹 Monitor AI leaders closely (Anthropic, OpenAI, Google, Microsoft) for signals about how fast capabilities are scaling and where constraints remain.

Summary by ReadAboutAI.com

https://www.darioamodei.com/essay/machines-of-loving-grace: Ethical AI

Is Anthropic’s Direction at Odds with Other Major AI Companies?

Anthropic’s current strategy—rooted in safety-first research, cautious deployment, and human-aligned frameworks—differs in emphasis from some other leading AI players, but it is not fundamentally in conflict with the broader trajectory of the industry. (cgi.org.uk)

• Anthropic vs. OpenAI/Microsoft/Google:

Anthropic was founded by former OpenAI researchers who left due to disagreements about scaling speed and governance, and its public-benefit corporation structure embeds safety and long-term societal benefit more tightly into its corporate mission. (Wikipedia) Compared with OpenAI, which balances rapid capability development with external governance engagement, and with Google and Microsoft, which push broad deployment across products and platforms, Anthropic places unusually heavy emphasis on internal alignment work (e.g., Constitutional AI and interpretability) and principled model behavior baked into the training process. (cgi.org.uk)

Anthropic’s AI for Science program, focus on agentic workflows, and research into structured alignment are all consistent with this posture: deploying capable AI with built-in guardrails rather than deploying first and governing later. (Anthropic) Yet the industry as a whole acknowledges alignment and safety research as a priority—many companies publish safety roadmaps and participate in multi-party governance frameworks—so the difference is mainly in how centrally safety is positioned within R&D, not whether it exists at all. (arXiv)

• Competing priorities and execution:

Where companies like OpenAI and Google leverage scale and broad commercial integration to push capability frontiers rapidly, Anthropic’s distinctiveness lies in paired capability + safety research with structural governance commitments. (cgi.org.uk) Some critics argue (outside official sources) that this emphasis could slow competitive momentum or be leveraged rhetorically—but Anthropic’s recent initiatives show it is still actively advancing powerful models and real-world AI workflows, even as it foregrounds alignment. (Wikipedia)

In short, Anthropic’s direction diverges in tone and emphasis but is part of the same broad industry movement toward powerful, capable AI systems—just with a heavier procedural focus on safety and governance.

Do AI Ethicists Like Fei-Fei Li Agree with Anthropic’s Direction?

Fei-Fei Li, a highly respected AI researcher and advocate of human-centered AI, shares many philosophical aims with Anthropic but frames them in a broader ethical and societal context rather than through a singular corporate posture.

• Alignment with human-centered values:

Fei-Fei Li’s work, especially through Stanford’s Institute for Human-Centered AI (HAI), champions AI that augments human capabilities, respects dignity, and embeds ethics into design and deployment. (Klover.ai) She argues against narratives of AI as a replacement for humans and emphasizes that AI should serve humanity with fairness, transparency, inclusivity, and societal benefit at the core. (The Economic Times) That view resonates with Anthropic’s commitment to safety-first development, research governance, and embedding ethical principles into models rather than bolting them on later. (cgi.org.uk)

• Different framing and emphasis:

However, Li’s perspective is broader than any one company’s agenda: she advocates for interdisciplinary research, public engagement, diverse stakeholder inclusion, and societal governance frameworks, not just corporate internal alignment protocols. (Klover.ai) Her public commentary often pushes back against both apocalyptic hype and unchecked techno-optimism, arguing for balanced, evidence-based discourse and ethics as a design challenge rather than a constraint on innovation. (Fenxi)

So while Li would likely support Anthropic’s emphasis on safety and alignment because it aligns with human-centered goals, she would also push for broader systemic considerations, including equitable access, public oversight, and a diversity of voices shaping how AI is used in society. (Klover.ai)

Summary for Discussion

Anthropic’s approach is not fundamentally at odds with other major AI labs, but it stands out for embedding safety and ethical guardrails deeply into R&D rather than treating them as add-ons. (cgi.org.uk)

Fei-Fei Li’s human-centered AI philosophy overlaps with Anthropic’s safety emphasis, but her focus is broader, encompassing societal values, public governance, and interdisciplinary involvement alongside technical safety. (Klover.ai)

Industry trends converge on capability + responsibility, but differ in how they balance speed, governance structures, and public accountability. (arXiv)

Leading AI Ethics / Governance Bodies

Here are four prominent organizations (aside from IASEAI) that are influential in ethical AI development, governance, and standards:

Partnership on AI (PAI) – A multi-stakeholder consortium including major tech firms, academia, and civil society that conducts research, convenes dialogues, and publishes best practices for responsible AI. (AI Ethicist)

Center for AI Safety (CAIS) – A nonprofit focused on accelerating research into technical AI safety, raising public awareness of AI risks, and fostering systemic approaches to ensuring AI systems are safe. (Wikipedia)

AI Ethics and Integrity International Association (AIEI) – A non-profit that promotes AI standards, fosters a global community for knowledge sharing, and works to shape responsible AI policies. (AI Ethics and Integrity)

Responsible AI Institute (RAI Institute) – An organization that partners with industry and policymakers to develop governance frameworks, toolkits, and assessments to help organizations implement and scale ethical AI practices. (Responsible AI)

Additionally, there are many other groups and ecosystems (e.g., academia, standards bodies, civil society initiatives) playing important roles in AI ethics and governance discussions—such as ACM/IEEE, AI4ALL, the Montreal AI Ethics Institute, and national/regional policy initiatives. (AI Ethicist)

Context

Ethical AI governance is inherently distributed and multi-layered: no single body currently has global authority. Different organizations influence standards, policy frameworks, technical safety research, industry best practices, and public dialogue, often engaging with governments, international institutions, and private sector stakeholders. IASEAI’s emergence adds another coordinated voice, especially around safety and alignment, but it complements rather than replaces the broader ecosystem of ethics and governance organizations. (IASEAI)

International Association for Safe & Ethical AI (IASEAI): Mission & Role

IASEAI, 2024–2026

TL;DR / Key Takeaway:

IASEAI is a global nonprofit convening researchers, policymakers, and industry leaders to shape safeguards and policy for advanced AI, signaling that AI governance is moving from voluntary principles toward coordinated international action.

Executive Summary

The International Association for Safe & Ethical AI (IASEAI) is a global, membership-based nonprofit created to ensure that increasingly capable AI systems operate safely, ethically, and in the public interest. Formed in the aftermath of the 2023 AI Safety Summit at Bletchley Park, IASEAI emerged amid growing concern that geopolitical competition, fragmented regulation, and industry-led self-governance could undermine coordinated global AI safeguards.

IASEAI’s mission is not to build AI systems, but to connect expertise across academia, government, civil society, and industry to accelerate safety research, inform public understanding, and influence policy. Its leadership and steering committee include widely respected figures in AI research, ethics, and governance—such as Stuart Russell, Yoshua Bengio, Geoffrey Hinton, Kate Crawford, and Francesca Rossi—positioning the organization as a serious convening body rather than an advocacy group alone.

Unlike regulatory agencies, IASEAI does not enforce rules. Instead, it aims to shape norms, frameworks, and international coordination at a moment when advanced AI systems are moving rapidly into economic, scientific, and social infrastructure. Its early conferences and organizational structure signal an effort to institutionalize AI safety and ethics discussions before high-impact failures force reactive regulation.

Relevance for Business

For SMB executives and managers, IASEAI represents an early indicator of where AI governance expectations are heading, even if formal regulation lags. As AI becomes embedded in core workflows, customers, partners, and regulators will increasingly expect organizations to demonstrate responsible AI use, risk awareness, and governance maturity—not just technical capability.

Calls to Action

🔹 Monitor IASEAI outputs and convenings as forward signals of emerging AI governance norms.

🔹 Align internal AI policies with safety, transparency, and accountability principles before they become mandatory.

🔹 Assess AI risk exposure in customer-facing and decision-support systems.

🔹 Prepare for multi-stakeholder scrutiny, including regulators, partners, and the public.

🔹 Treat AI governance as strategy, not compliance—early alignment reduces future disruption.

Summary by ReadAboutAI.com

https://www.iaseai.org/about: Ethical AI

AI at the Crossroads: A Practitioner’s Guide to Community-Centered Solutions

Montreal AI Ethics Institute — State of AI Ethics Report, Volume 7 (Nov 2025)

TL;DR / Key Takeaway

AI ethics has entered its implementation phase: organizations that treat ethics as capacity-building, governance infrastructure, and community engagement—not compliance theater—will be better positioned to scale AI sustainably and maintain trust.

Executive Summary

The State of AI Ethics Report (Volume 7) argues that AI ethics is no longer about abstract principles or future risk—it is about how AI systems are actually deployed, governed, and experienced today. Drawing on global case studies across government, industry, civil society, and emerging technologies, the report shows a widening gap between AI capability expansion and organizational readiness to implement AI responsibly. Ethics failures are increasingly tied not to malicious intent, but to poor integration, weak governance, low AI literacy, and exclusion of affected communities.

A central theme is that AI must be understood as a sociotechnical system—combining models, data centers, energy use, labor practices, institutional incentives, and power dynamics. The report highlights how narrow framings of “AI safety,” “alignment,” or “responsible AI” often collapse into branding or risk checklists, while real harms emerge from workforce deskilling, opaque procurement, surveillance expansion, environmental costs, and unequal participation in governance decisions. Ethics, the authors argue, is a process, not a destination.

Rather than slowing innovation, the report reframes ethics as a strategic enabler: organizations that embed community-centered design, workforce upskilling, transparent evaluation, and exit-readiness into AI adoption see stronger long-term ROI, resilience, and legitimacy. In this framing, ethical AI is not about avoiding deployment—but about deploying AI in ways that expand human capability rather than erode it.

Relevance for Business

For SMB executives and managers, this report is a warning—and a playbook. Many AI pilots fail not due to model limitations, but because governance, literacy, accountability, and integration are missing. As regulation fragments globally, responsibility increasingly shifts to organizational decision-makers, procurement teams, and managers overseeing AI-enabled workflows. Ethical AI is becoming a competitive differentiator, affecting employee trust, vendor lock-in risk, brand credibility, and long-term scalability.

Calls to Action

🔹 Treat AI ethics as infrastructure, not policy language — build governance, review, and escalation pathways before scaling tools

🔹 Audit AI use for capability erosion — identify where automation is reducing judgment, skills, or workforce trust

🔹 Embed ethics into procurement — require transparency, evaluation access, and exit options from AI vendors

🔹 Invest in AI literacy beyond “how-to” — focus on risk awareness, decision-making, and accountability

🔹 Pilot with community and workforce input — early feedback reduces downstream risk and resistance
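
The procurement call to action above can be turned into a simple pre-purchase check. The following is a minimal sketch, not a standard or a vendor-assessment framework: the `VendorAssessment` fields, the `procurement_gaps` helper, and the example vendor are all hypothetical names chosen for illustration, and a real review would likely need more criteria than these.

```python
from dataclasses import dataclass


@dataclass
class VendorAssessment:
    """Hypothetical record of what an AI vendor has committed to."""
    name: str
    documents_training_data: bool   # transparency about data sources
    allows_independent_eval: bool   # evaluation access for the buyer
    offers_data_export: bool        # exit option: data can be taken out
    contract_has_exit_clause: bool  # exit option: contract can be ended


def procurement_gaps(vendor: VendorAssessment) -> list[str]:
    """Return the procurement requirements the vendor does not yet meet."""
    checks = {
        "transparency": vendor.documents_training_data,
        "evaluation access": vendor.allows_independent_eval,
        # Both export and a contractual exit are assumed necessary here.
        "exit options": vendor.offers_data_export
        and vendor.contract_has_exit_clause,
    }
    return [requirement for requirement, met in checks.items() if not met]


# Illustrative vendor: good documentation, but no evaluation access
# and no contractual exit clause.
vendor = VendorAssessment("ExampleVendor", True, False, True, False)
gaps = procurement_gaps(vendor)
print(gaps)
```

An empty list means the vendor meets all three illustrative requirements. Anything else is a list of items to raise before signing.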

Summary by ReadAboutAI.com

https://montrealethics.ai/state/: Ethical AI

Ethical AI has implicit risks that benefit from explicit naming.

1. Regulation-by-Procurement (Quiet but Powerful)

Much of the discussion focuses on governance bodies and emerging norms, but one mechanism is reshaping SMB reality faster than legislation:

Large enterprises and governments are enforcing ethics through procurement requirements.

Why this matters:

SMBs increasingly inherit AI obligations from:

- Enterprise clients

- Platform partners

- Insurance providers

- Public-sector contracts

Even where formal regulation lags, ethical AI expectations are increasingly enforced through procurement rules, vendor risk assessments, and enterprise compliance requirements—often catching SMBs first.

This makes the risk concrete, not theoretical.

2. Liability Is Shifting from Models to Deployers

One subtle but critical trend:

Responsibility is moving downstream.

Regulators, courts, and customers increasingly care less about:

- Who built the model

and more about:

- Who chose to deploy it

- How it was used

- Whether safeguards were in place

The takeaway for businesses: ethics is a leadership responsibility.

3. Data & IP Risk as an Ethical Issue (Not Just Legal)

Governance and human impact get most of the attention, but SMBs often encounter ethics first through data misuse and IP exposure:

- Training data ambiguity

- Customer data reuse

- Prompt leakage

- AI-generated content ownership

Ethical AI failures often surface first as data, privacy, or IP incidents, not philosophical disputes.

This bridges ethics to everyday operational risk.

4. Speed Mismatch as the Core Ethical Tension

The central ethical tension in AI today is not values—it’s speed mismatch:

models scale in months, while governance, law, and culture evolve in years.

⚠️ SMB Reality Check: Where Ethical AI Risk Shows Up First

Ethical AI risk rarely arrives as a headline-grabbing regulation. For most SMBs, it appears quietly—through procurement requirements, client questionnaires, vendor audits, insurance reviews, or partner expectations. Organizations that adopt AI tools without basic governance, documentation, or usage boundaries often encounter friction after deployment, when a deal slows, scrutiny increases, or trust is questioned.

Just as importantly, responsibility sits with the deployer, not the model maker. Using third-party AI tools does not transfer accountability for data handling, IP exposure, customer impact, or workforce misuse. As AI capabilities accelerate faster than policies, training, and norms can adapt, SMBs that move quickly without parallel governance often end up reacting to issues—rather than steering AI deliberately from the start.

Wrap-up: Ethical AI Developments Update: January 20, 2026

The state of ethical AI today is defined less by consensus on values and more by how organizations choose to act amid uncertainty. For executives, the question is no longer whether ethical AI matters—but whether their organization is prepared to govern increasingly powerful systems before governance is imposed from the outside.

Ethical AI Developments: July 8, 2025

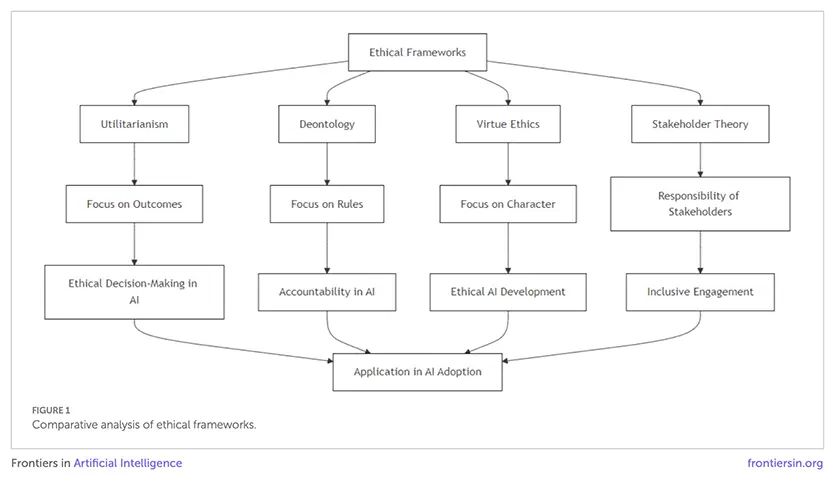

Ethical Theories & Governance for Responsible AI (Frontiers in AI, July 2025)

Summary

This academic review outlines how utilitarianism, deontology, and virtue ethics can guide responsible AI adoption. It examines governance models across different cultures (Anglo-American, European, Asian) and proposes strategic frameworks that combine fairness, transparency, and accountability with business success. The paper stresses closing the gap between academic AI ethics principles and practical industry application.

Relevance for Business

For SMBs, adopting AI responsibly isn’t just about compliance—it builds trust with customers, employees, and regulators. Embedding ethics into strategy can prevent reputational risk while supporting long-term growth.

Calls to Action

🔹 Incorporate ethical AI principles (fairness, transparency) into AI projects.

🔹 Establish governance structures with clear accountability for AI outcomes.

🔹 Engage stakeholders—including employees and customers—in AI oversight.

🔹 Treat responsible AI adoption as both a compliance issue and a competitive advantage.

Summary Created by ReadAboutAI.com

https://www.frontiersin.org/journals/artificial-intelligence/articles/10.3389/frai.2025.1619029/full: Ethical AI

OpenAI’s Disrupting Malicious Uses of AI report (June 2025)

Executive Summary

OpenAI’s Disrupting Malicious Uses of AI report (June 2025) highlights the evolving risks of AI misuse and the company’s ongoing efforts to detect, disrupt, and prevent harmful activity. The report documents real-world cases where adversaries exploited AI for scams, cyber operations, and covert influence campaigns. Threat actors used AI to create deceptive job applications, automate social engineering, generate disinformation, and assist with malware development. OpenAI emphasizes that while AI can be exploited, it is also a powerful defensive tool when used to detect and neutralize these threats.

Key case studies show a global pattern of misuse. North Korea-linked operators employed AI to generate false résumés and infiltrate IT roles, while networks tied to China and Russia engaged in influence operations designed to manipulate political discourse in the U.S., Europe, and Asia. Other examples include malware development by Russian-speaking actors and scams originating from Cambodia. These findings illustrate that malicious use of AI is transnational, opportunistic, and highly adaptive.

The report underscores the importance of collaboration across the tech industry and government. OpenAI has worked with partners such as Google, Anthropic, and law enforcement agencies to share intelligence, remove malicious accounts, and dismantle fraudulent networks. By publishing detailed case studies, OpenAI aims to improve collective defenses and provide transparency about how threat actors exploit AI models. The findings show that adversaries’ reliance on AI for efficiency also exposes their workflows to detection, enabling stronger countermeasures.

Ultimately, OpenAI frames AI safety not just as a technical challenge but as a societal responsibility. Preventing malicious use requires common-sense rules, proactive monitoring, and cooperative frameworks across borders. As AI becomes more deeply integrated into business, governance, and everyday life, defending against misuse is essential to ensure that AI’s benefits outweigh its risks.

Relevance for Business

For SMB executives and managers, the report is a wake-up call: AI-powered attacks are not just theoretical—they are already being deployed against companies of all sizes. Threat actors are using AI to scale phishing, impersonation, scams, and even insider infiltration through falsified employment. At the same time, AI can be leveraged to bolster defenses, from automated fraud detection to anomaly monitoring in HR and IT processes. Businesses that fail to adapt may find themselves vulnerable to increasingly sophisticated attacks, while those that integrate AI responsibly into security and governance will strengthen their resilience and protect stakeholder trust.

Executive Calls to Action

- Strengthen Hiring Protocols: Use layered verification (e.g., video interviews, device management, and reference checks) to detect falsified AI-generated résumés.

- Invest in AI-Driven Security: Implement anomaly detection, fraud monitoring, and behavioral analytics that leverage AI defensively.

- Train Teams on AI Risks: Educate staff about phishing, social engineering, and scams amplified by AI to improve human vigilance.

- Collaborate and Share Intelligence: Join industry groups, ISACs, or vendor networks to stay informed on AI-driven threats and mitigation strategies.

- Develop Ethical AI Guidelines: Ensure your company uses AI responsibly, minimizing risks of unintentional misuse while upholding trust and compliance standards.
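
As one illustration of the anomaly monitoring mentioned in the calls to action above, the sketch below flags values that sit far from a historical baseline using a z-score. It is a deliberately simple statistical check, not an AI-driven security product. The `flag_anomalies` helper, the threshold of 3 standard deviations, and the login counts are assumptions made up for this example.

```python
from statistics import mean, stdev


def flag_anomalies(baseline, recent, threshold=3.0):
    """Return values in `recent` that deviate strongly from `baseline`.

    A value is flagged when it lies more than `threshold` sample
    standard deviations from the baseline mean (a z-score test).
    """
    mu = mean(baseline)
    sigma = stdev(baseline)  # needs at least two baseline points
    if sigma == 0:
        # A perfectly flat baseline: any different value is unusual.
        return [x for x in recent if x != mu]
    return [x for x in recent if abs(x - mu) / sigma > threshold]


# Hypothetical daily counts of failed logins for one account.
baseline_counts = [20, 22, 19, 21, 23, 20, 21]
recent_counts = [22, 19, 48]

flagged = flag_anomalies(baseline_counts, recent_counts)
print(flagged)
```

A check like this only surfaces candidates for human review. Deciding whether a flagged spike is an attack, a misconfiguration, or a busy day still falls to the people responsible for the system.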

OpenAI Report: Disrupting Malicious Uses of AI (PDF)

https://openai.com/global-affairs/disrupting-malicious-uses-of-ai-june-2025/: Ethical AI

https://openai.com/safety/how-we-think-about-safety-alignment/: Ethical AI

Introduction: Responsible AI in the Generative Era

By Michael Kearns, Amazon Scholar and Professor of Computer and Information Science, University of Pennsylvania

Generative AI offers unprecedented creative and practical capabilities—but it also introduces novel ethical and technical challenges. Traditional responsible AI concerns like fairness, privacy, and bias become exponentially harder to define and control when systems can generate open-ended, human-like content. New issues, including hallucinated facts, subtle bias, intellectual property mimicry, and plagiarism, require innovative technical and policy-based responses. The article advocates for a multi-layered defense: combining training data curation, guardrails, retrieval-augmented verification, watermarking, and above all, context-specific use case development.

Relevance for Business

As generative AI tools become integrated into marketing, HR, product development, and customer service, businesses must understand that the risks go beyond bad output—they include reputational damage, regulatory exposure, and ethical failures. This article shows that responsible deployment demands not only technical controls but also intentional design choices, clear boundaries for AI use, and employee education. SMBs in particular must build trust with stakeholders by ensuring their AI systems are transparent, fair, and aligned with business values.

Calls to Action

- Define boundaries: Be specific about where and how generative AI will be used within your organization. Open-ended use = higher risk.

- Audit for fairness and privacy: Test models for demographic bias and ensure outputs don’t inadvertently leak sensitive data or reinforce stereotypes.

- Use guardrails: Employ output moderation tools and retrieval-augmented generation (RAG) systems to reduce hallucinations and increase factual reliability.

- Establish IP protocols: Understand how your tools are trained and implement watermarking or usage disclaimers to reduce IP infringement risks.

- Educate your team: Make sure employees know generative AI’s capabilities—and its limitations—so they use it ethically and effectively.
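The fairness audit in the list above can start small. The following is a minimal sketch, assuming model decisions have been exported as (group, outcome) pairs; it compares the rate of favourable outcomes across groups, a check often called demographic parity. The record format, the sample data, and the function names `selection_rates` and `parity_gap` are illustrative assumptions rather than anything taken from the article, and a real audit would look at more than one metric.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of favourable outcomes for each group.

    `records` is an iterable of (group, outcome) pairs, where outcome is
    True when the model gave the favourable result (hypothetical format).
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        if outcome:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(records):
    """Largest difference in selection rate between any two groups."""
    rates = selection_rates(records)
    return max(rates.values()) - min(rates.values())


# Made-up sample: group A is approved 3 times out of 4, group B once out of 4.
sample = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

if __name__ == "__main__":
    print(selection_rates(sample))
    print(parity_gap(sample))
```

A large gap does not prove discrimination on its own, but it is a reasonable trigger for a closer human review of the tool and its training data.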

Books

The Worlds I See: Curiosity, Exploration, and Discovery at the Dawn of AI by Dr. Fei-Fei Li

Executive Summary: The Worlds I See by Dr. Fei-Fei Li

Flatiron Books, 2023

In The Worlds I See, Dr. Fei-Fei Li—renowned AI scientist, Stanford professor, and co-director of the Human-Centered AI Institute—offers a deeply personal and intellectually rich memoir that traces her journey from an immigrant girl with little English to one of the most influential figures in artificial intelligence. Interwoven with this narrative is a powerful argument: AI must be developed in alignment with human values like dignity, empathy, and inclusivity. Li challenges the techno-deterministic mindset and instead calls for “AI with a soul”—technology that is as morally grounded as it is powerful.

She shares firsthand stories of creating ImageNet (which helped launch the deep learning revolution), leading Google Cloud’s AI efforts, and founding AI4ALL to bring underrepresented groups into the AI field. Her reflections illuminate the ethical crossroads AI now faces, and she makes a compelling case for building systems that augment—not replace—human capabilities.

💼 Relevance to Business

Dr. Li’s book serves as a strategic and ethical compass for executives navigating AI adoption. Her focus on human-centered design is especially relevant for SMBs aiming to deploy AI in ways that respect user autonomy, prevent bias, and build stakeholder trust. It’s a reminder that long-term value creation requires not just innovation, but responsibility.

Whether using AI in hiring, marketing, customer service, or operations, Li’s perspective highlights how aligning with human values can strengthen brand credibility, avoid ethical pitfalls, and drive sustainable growth.

🎯 Calls to Action for Executives

- Embrace human-centered AI: Prioritize tools and platforms that empower rather than displace workers and customers.

- Build diverse teams: AI is shaped by its creators—invest in diversity and inclusion to reduce blind spots and build more equitable systems.

- Focus on dignity and trust: Design AI with transparency, explainability, and user well-being as core business values—not afterthoughts.

- Support ethical innovation: Consider joining or supporting organizations like AI4ALL or adopting HAI-inspired frameworks for responsible AI deployment.

Dr. Fei‑Fei Li (Stanford HAI)

- Focus: Human‑centered AI, dignity, inclusivity

- Why she matters: Co‑founder of Stanford’s Human‑Centered AI Institute and founder of AI4ALL, Dr. Li champions AI that respects human dignity, supports civic and moral values, and advances equitable representation in technology. Her work influences global policy, such as the UN’s “dignity dividend,” with the aim that AI enhances human well‑being rather than reducing humans to mere data points (Klover.ai).

2025 Stanford HAI AI Index

The 2025 AI Index offers the most comprehensive snapshot yet of AI’s global ecosystem—highlighting rapid technical progress, shifting geopolitics, emerging governance norms, and growing Responsible AI concerns. It reports that open‑weight (open source) models are nearly matching closed‑weight giants in performance—closing an 8% gap to just 1.7% in a year (Stanford HAI). Hardware improvements have cut inference costs by ~40%, making AI adoption significantly more affordable (WIRED). Additionally, China is rapidly catching up: while the U.S. still leads in AI model count and investment, China’s publications and model quality have surged (Axios). However, ethical concerns are mounting—AI incident reports rose 56% in 2024, and fairness/truthfulness benchmarks revealed persistent model biases (Stanford HAI).

💼 Relevance to Business

- Affordable AI power: With costs dropping and open-source models reaching cutting-edge performance, SMBs can access powerful AI even with modest budgets.

- Global competition: As AI innovation spreads beyond the U.S., businesses face growing international market competition—and opportunity—from rising AI capabilities worldwide.

- Operational and ethical risk: The spike in AI incidents and continued bias spotlight the need for proactive governance—especially as regulators, customers, and stakeholders demand transparency and fairness.

🎯 Calls to Action for Executives

- Reassess AI strategy – Explore open-source models to balance cost, performance, and control.

- Benchmark performance & safety – Measure models not just on accuracy, but on bias, factuality, safety, and incident history.

- Govern with purpose – Build internal policies and tools to track and manage AI-related incidents and risks.

- Stay globally informed – Monitor AI trends in other regions to evaluate threats and partnerships in international markets.
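The “govern with purpose” item above mentions tools for tracking AI-related incidents. One possible starting point, sketched here under stated assumptions, is a simple in-house incident register. The `Incident` fields, the 1 to 3 severity scale, the system names, and the helper functions are all hypothetical and would need to be adapted to an organization’s own policies.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Incident:
    reported_on: date
    system: str        # which AI tool or model was involved
    category: str      # e.g. "bias", "factual error", "data leak"
    severity: int      # 1 (minor) to 3 (serious); the scale is an assumption
    resolved: bool = False


def open_incidents(log, min_severity=1):
    """Unresolved incidents at or above a severity, most serious first."""
    hits = [i for i in log if not i.resolved and i.severity >= min_severity]
    return sorted(hits, key=lambda i: (-i.severity, i.reported_on))


def counts_by_category(log):
    """How many incidents were logged in each category."""
    return Counter(i.category for i in log)


# Made-up entries for illustration only.
log = [
    Incident(date(2025, 1, 14), "support-chatbot", "factual error", 1, resolved=True),
    Incident(date(2025, 2, 3), "resume-screener", "bias", 3),
    Incident(date(2025, 2, 20), "support-chatbot", "factual error", 2),
]

if __name__ == "__main__":
    for incident in open_incidents(log):
        print(incident.system, incident.category, incident.severity)
    print(counts_by_category(log))
```

Even a register this small gives leadership a recurring view of which tools generate problems and whether serious issues are being closed out.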

The Ethics of Artificial Intelligence: Principles, Challenges, and Opportunities (Oxford, 2023)

📘 Executive Summary: The Ethics of Artificial Intelligence by Luciano Floridi

Luciano Floridi presents AI as a transformative form of agency, defining it as an “unprecedented divorce between agency and intelligence.” He structures the book in two parts: the first explores AI’s historical emergence, current landscape, and future trajectories; the second focuses on ethical principles, risks, legal contexts, and real-world applications (Google Books). He synthesizes five core principles (autonomy, non-maleficence, beneficence, justice, and a fifth specific to AI, explicability), highlighting the need for transparency and accountability (ResearchGate).

He carefully examines the dark side of AI—from malicious use to bias and informational harm—and contrasts it with potential positive uses in AI for Social Good, including environmental sustainability and support for UN Sustainable Development Goals (SDGs) (barnesandnoble.com). Ultimately, Floridi calls for a “marriage of the Green and the Blue”, urging AI development that balances technological advancement with societal and ecological well-being (barnesandnoble.com).

🔍 Relevance for Business

- Establishes a deep ethical foundation for AI that goes beyond compliance—essential for guiding internal values and stakeholder trust.

- Highlights digital accountability: explicability ensures your systems are auditable and defendable.

- Connects AI ethics to sustainability goals, offering a bridge between AI investment and ESG (Environmental, Social, Governance) strategies.

✅ Calls to Action

- Prioritize transparency: Build systems and policies that document data sources, model decisions, and accountability processes for internal and external review.

- Adopt core principles: Embed autonomy, non-maleficence, beneficence, justice, and explicability into AI procurement and deployment practices.

- Evaluate risk holistically: Extend ethical audits beyond bias and privacy to include disinformation, environmental impact, and misuse potentials.

- Champion AI4SG initiatives: Align AI projects with sustainability efforts and measurable social outcomes, following Floridi’s green-blue integration model.

Atlas of AI: Power, Politics, and the Planetary Costs of Artificial Intelligence by Kate Crawford

📘 Summary

Crawford dismantles the myth of AI as a disembodied intelligence, revealing it instead as a massive industrial “megamachine” powered by resource extraction, environmental degradation, and human labor (THE GENEVA OBSERVER, SoBrief).

She documents the material toll of AI—ranging from lithium mining and data-center energy use to low-wage labor for data labeling (MAEKAN, macloo.com).

Crawford critiques the ideology of “objective” AI by exposing biased datasets assembled without consent (e.g., ImageNet and the training sets behind facial emotion recognition) that are deployed for surveillance and decision-making (Wikipedia).

She advocates for rethinking AI governance, urging stronger regulation, transparency, and democratic oversight of its power structures and planetary footprint (SoBrief).

💼 Relevance for Business Executives

- Hidden costs: AI isn’t just a digital tool—it depends on physical resources (minerals, energy, labor), which can damage the environment and destabilize supply chains (MAEKAN).

- Reputational & regulatory risk: Deploying exploitative data and labor models or biased systems can trigger public backlash and legal exposure.

- Strategic alignment: Businesses must assess whether their AI practices align with ESG goals and stakeholder expectations around sustainability and equity.

- Geopolitical implications: AI’s environmental and labor sourcing spans global contexts, entangling companies with human-rights, trade, and foreign-policy dynamics.

✅ Calls to Action

- Map your AI supply chain footprint: Audit your usage of rare-earth minerals, energy consumption, and labor practices behind model training.

- Shift toward responsible sourcing: Partner with suppliers committed to fair labor and eco-friendly mineral extraction.

- Scrutinize dataset ethics: Institute policies for obtaining informed consent, preventing bias, and ensuring representational fairness in all AI data.

- Advocate for robust AI governance: Engage in policy discussions and accept external oversight of your AI systems from regulators and civil society.

- Integrate sustainability into AI metrics: Use environmental and social impact KPIs alongside traditional performance and ROI measures.

Responsible Artificial Intelligence: How to Develop and Use AI in a Responsible Way by Virginia Dignum

📘 Summary

Dignum provides a clear analysis of what constitutes AI—autonomy, adaptability, and interaction—linking these technical definitions with ethical theory and value-sensitive design (TU Delft Research Portal).

She introduces the ART framework—Accountability, Responsibility, Transparency—to guide the integration of ethics into AI systems, helping developers embed moral reasoning within AI agents (TU Delft Research Portal).

Arguing that responsible AI extends beyond algorithmic fairness, Dignum emphasizes the socio-technical ecosystem, stressing human oversight, stakeholder values, and governance structures (arXiv, SpringerLink).

The book concludes with practical guidance—value-sensitive design, ethical decision-making methodologies, and governance models—to ensure AI supports societal well-being and adheres to human and legal values (SpringerLink).

💼 Relevance for Business Executives

- Holistic integration: AI ethics isn’t a box-ticking exercise—it requires embedding ART principles within design, deployment, and policy to build trust and resilience (arXiv).

- ESG alignment: Dignum’s ART principles (Accountability, Responsibility, Transparency) deserve as much weight as ROI, ensuring your AI projects adhere to human-rights and legal norms (SpringerLink).

- Governance readiness: As global regulations around trustworthy AI tighten, companies must proactively establish transparent oversight, clear responsibility lines, and ethical decision processes (TU Delft Research Portal, arXiv).

- Reputation protection: With systemic opacity and bias risks across data, models, and use cases, a responsible approach shields against public backlash and legal exposure.

✅ Calls to Action

- Adopt the ART framework: Implement Accountability, Responsibility, Transparency checklists throughout the AI lifecycle.

- Value-sensitive design: Engage diverse stakeholders early in design to identify and align with core ethical values.

- Human-in-the-loop: Ensure humans remain part of critical decision processes to uphold moral and legal oversight.

- Ethics governance board: Form cross-functional teams to oversee ethical impact assessments and compliance strategies.

- Train and audit rigorously: Provide ethics training for engineers and conduct regular audits for bias, transparency, and decision explainability.

The Ethics of AI: Power, Critique, Responsibility by Rainer Mühlhoff

📘 Executive Summary: The Ethics of AI

Author: Rainer Mühlhoff (2025)

Publisher: Bristol University Press

Summary:

In The Ethics of AI, philosopher Rainer Mühlhoff challenges conventional “applied ethics” approaches by developing a power-aware critique of artificial intelligence systems. He argues that modern AI doesn’t just simulate intelligence—it captures and exploits human cognitive labor at scale through everyday digital interactions, which he terms “Human-Aided AI.” Central to the book is the concept of “prediction power”, the capability of AI to sort, score, and control populations through automated decision-making, often without consent, transparency, or recourse. Mühlhoff calls for a new ethical framework grounded in collective responsibility, epistemic humility, and democratic action to resist the systemic harms embedded in the socio-technical design of AI systems.

💼 Relevance for Business Executives

Executives need to recognize that AI is not just a technical tool—it is an infrastructure of influence that can unintentionally shape, reinforce, or exploit social inequalities. Business models that rely on data extraction and behavioral prediction—especially in marketing, HR, finance, or customer service—may be silently reinforcing digital colonialism, privacy violations, or algorithmic discrimination. Ethical AI is not about “fixing bias” in a model—it’s about examining the power dynamics, labor models, and design intentions behind the AI lifecycle. Firms that fail to consider these deeper structural questions risk both reputational and regulatory backlash.

✅ Calls to Action

- Reframe your AI ethics efforts: Move beyond individual fairness audits to address structural power imbalances in your data and algorithm pipelines.

- Audit your data sourcing practices: Are you benefiting from exploitative or non-consensual data harvesting? If yes, it’s time to revise.

- Integrate predictive privacy safeguards: Consider how your systems might predict—and misuse—private attributes even when users haven’t disclosed them.

- Empower collective responsibility: Foster interdepartmental awareness of AI’s social impacts and involve diverse stakeholders in oversight.

- Support ethical-by-design tools: Prioritize interface designs and system architectures that reduce user exploitation and enhance transparency.

The Ethics of Information by Luciano Floridi

Executive Summary: The Ethics of Information by Luciano Floridi

Oxford University Press, 2013

In The Ethics of Information, philosopher Luciano Floridi lays the foundation for a new branch of ethics—information ethics—to address the moral challenges of the digital age. Floridi argues that we are not just living in an information society; we are inforgs (informational organisms), deeply entangled in a shared infosphere where actions, identities, and technologies are data-driven and interconnected. Rather than adapting old moral frameworks to new technologies, Floridi proposes a distinct ethical approach centered on preserving, enhancing, and respecting the integrity of informational entities—humans, systems, and environments alike.

Key Ideas and Takeaways

- The Infosphere: We now exist within a digital environment (the infosphere) where data flows between people, machines, and systems. Ethics must address the responsibilities of acting within this space.

- Information as a Moral Patient: Floridi introduces the concept that information itself—not just humans—can be harmed or degraded. Spam, disinformation, and data pollution are moral harms to the infosphere.

- Ontocentric Ethics: Shifting from anthropocentric to ontocentric ethics, Floridi argues that moral worth should extend to all entities that can be affected informationally—not just human users but also systems and digital environments.

- Macroethics for a Networked World: We need broad, systemic thinking about the impact of our actions in the digital realm. That includes how algorithms make decisions, how data is stored and accessed, and how digital systems influence behavior at scale.

Relevance for Business

For SMBs operating in a data-rich world—using AI, automation, personalization, or customer analytics—Floridi’s framework helps leaders see that ethical responsibility extends beyond individual user privacy. It includes stewardship of the broader information ecosystem: minimizing misinformation, protecting digital identities, and ensuring algorithmic outputs are not only efficient but respectful of informational dignity.

Floridi’s work also offers a deeper foundation for understanding why ethical AI isn’t just about avoiding harm, but about promoting good digital citizenship, transparency, and sustainability in information practices.

Calls to Action

- Treat information as infrastructure: Like clean water or power, your data systems and digital outputs should preserve the integrity of the infosphere.

- Audit for informational harm: Go beyond privacy—review your algorithms, content, and user experiences for misinformation, manipulation, or data pollution.

- Practice digital environmentalism: Reduce unnecessary data hoarding, algorithmic opacity, and content that degrades trust or informational quality.

- Reframe responsibility: Ensure your team understands that ethical AI isn’t just about protecting people—it’s also about protecting the shared digital environment we all rely on.

- Encourage long-term thinking: Design AI and information systems not only for efficiency but for fairness, resilience, and trustworthiness over time.

Key Figures

🌍 Leading in Ethical AI Today

Fei-Fei Li

– Co-director of the Stanford Human-Centered AI Institute (HAI) and a former Chief Scientist of AI at Google Cloud, Dr. Fei-Fei Li is one of the world’s foremost voices in ethical and human-centered AI. She advocates for “AI with a soul”—technology that advances human dignity, inclusivity, and well-being. As founder of AI4ALL, she promotes diverse participation in AI development and regularly advises on U.S. and global AI policy, emphasizing transparency, equity, and responsibility in AI adoption. (Stanford HAI, TIME, AI4ALL)

Yoshua Bengio

– Co‑founder of Canada’s Mila and “godfather of AI,” Bengio now leads LawZero, a non‑profit focused on safe AI development. He advocates for mechanisms like “Scientist AI” that monitor and block unsafe AI behavior, especially around biological and chemical dual-use risks—a growing concern. (Stanford Medicine Magazine, Vox)

Kay Firth‑Butterfield

– Former head of AI & ML at the World Economic Forum and current CEO of the Centre for Trustworthy Technology, Kay is a pioneer in AI governance. She’s also Vice‑Chair of IEEE’s Global Initiative on Ethical AI and an advisor for UNESCO—actively shaping policies on fairness, transparency, and human rights in AI design. (Wikipedia)

Julie Owono

– A leader in internet and digital rights affiliated with the Berkman Klein Center and Stanford’s Content Policy & Society Lab (CPSL), Julie champions multi‑stakeholder frameworks for content governance, digital rights, and AI ethics, especially in emerging economies and marginalized communities. (Wikipedia)

Marina Jirotka

– As co‑director at Oxford’s Responsible Technology Institute, Marina is known for championing the concept of an “ethical black box” in AI systems and research into responsible innovation and algorithmic bias through public‑sector collaborations. (Wikipedia)

Aimee van Wynsberghe

– A professor of Applied Ethics of AI in Germany, Aimee leads the Sustainable AI Lab and founded the Foundation for Responsible Robotics. She advocates for ethics-centered design and hosts Europe’s leading Sustainable AI conference series. (Wikipedia)

Virginia Dignum

– A professor at Delft and Umeå and a member of the UN’s AI Advisory Body, Virginia focuses on social‑ethical AI, human‑agent collaboration, and policy. She served on the EU High-Level Expert Group that produced the Ethics Guidelines for Trustworthy AI. (Wikipedia)

Nick Bostrom

– Philosopher and former director of Oxford’s Future of Humanity Institute, Bostrom is the author of Superintelligence and Deep Utopia. His work continues to shape global discourse on existential risks, AI alignment, and governance frameworks for future AGI. (Wikipedia)

Mustafa Suleyman

– Co‑founder of DeepMind and current CEO of Microsoft AI, Mustafa is also the author of The Coming Wave: AI, Power, and our Future (2023). He emphasizes responsible, cautious deployment and the need for oversight as AI systems become semi‑autonomous agents. (IT Pro)

Geoffrey Hinton

– Often called the “godfather of deep learning,” Hinton’s work underpins much of today’s neural network architecture. In 2023, he resigned from Google to speak more freely about the risks of AI, warning that powerful models could surpass human control. He urges greater investment in AI alignment research and robust regulatory frameworks to address potential harms—from misinformation to loss of human agency. Hinton now serves as an independent advocate for global cooperation in AI safety. (MIT Tech Review, New York Times)

Demis Hassabis

– Co-founder and CEO of Google DeepMind, Hassabis is a central figure in AI’s scientific frontier, having led breakthroughs like AlphaGo and AlphaFold. While a proponent of AI’s potential to accelerate science and medicine, he is also vocal about the existential risks of AGI. Hassabis advocates for global governance mechanisms, international collaboration, and a science-led approach to AI alignment. He is a member of the UN’s AI Advisory Body and supports regulation that balances innovation with human safety. (DeepMind, UN, Possible.fm)

Ilya Sutskever

– Co‑founder and former Chief Scientist of OpenAI, Ilya Sutskever is a pioneering figure in deep learning and neural networks, co‑creating the influential AlexNet architecture that kickstarted the modern AI boom. Known for his expertise in large-scale generative models like GPT, he’s now focused on ensuring AI safety and alignment through his new company, Safe Superintelligence Inc. (SSI). Sutskever advocates for building AI systems that are both powerful and rigorously aligned with human values, prioritizing transparency, controllability, and long-term safety in the path toward AGI. (OpenAI, MIT Tech Review, SSI)

Cornelia C. Walther

– A contributor to Forbes and founder of the Hybrid Intelligence Hub, Walther brings a humanitarian lens to AI ethics after two decades with the United Nations. She explores how artificial intelligence impacts civic agency, autonomy, and collective well-being—arguing for “pro-social AI” guided by empathy and moral accountability. Now a visiting scholar at the Wharton School, she calls for hybrid frameworks that balance technological advancement with human conscience, particularly in health, development, and education contexts. (Forbes, Wharton, Hybrid Intelligence Hub)

Abeba Birhane

– A cognitive scientist and senior advisor at Mozilla, Birhane investigates algorithmic harm, decolonial AI, and the embedded social assumptions within training data. Her research has exposed systemic issues in popular datasets—like ImageNet and LAION—and advocates for dismantling power imbalances in AI development. She pushes for community-centered governance and cautions against unchecked scale in data-driven AI. (Mozilla, Nature, Wired)

Shakir Mohamed

– A principal researcher at DeepMind, Shakir works at the intersection of machine learning, social justice, and global equity. He champions the decolonization of AI research, advocating for inclusive epistemologies and greater representation of underrepresented communities—especially in the Global South. He co-founded the Deep Learning Indaba, a pan-African movement to build locally grounded AI talent and ethics frameworks. (DeepMind, NeurIPS, UNESCO)

Helen Nissenbaum

– A philosopher and scholar of digital ethics, Nissenbaum developed the theory of contextual integrity, which has become a foundational framework in privacy and data ethics. She critiques the application of AI in contexts where data use violates social norms, and argues for nuanced, context-aware AI design that respects human dignity. Her work spans academic research, policy advocacy, and public education. (Cornell Tech, Oxford Internet Institute)

Timnit Gebru

– Founder of the Distributed AI Research Institute (DAIR) and former co-lead of Google’s Ethical AI team, Gebru is a globally recognized advocate for algorithmic justice, data transparency, and accountability in large language models. Her work exposes racial, gender, and economic biases embedded in AI systems, emphasizing the importance of lived experience and social context in ethical AI design. She’s a key voice in promoting participatory AI governance and equitable research funding. (DAIR, Wired, MIT Tech Review)

Margaret Mitchell

– Chief Ethics Scientist at Hugging Face and former co-lead of Google’s Ethical AI team, Mitchell is a pioneer in explainable AI and responsible ML practices. She co-created “Model Cards” to improve transparency in AI models and is a driving force in pushing for internal accountability structures within tech companies. Mitchell advocates for inclusive datasets and ethical documentation to mitigate systemic bias. (Hugging Face, Nature, Fast Company)

Stuart Russell

– A professor at UC Berkeley and co-author of the widely used textbook Artificial Intelligence: A Modern Approach, Russell is a leading voice in AI alignment and long-term safety. He calls for robust international regulation, human-centric AI systems, and provable safety guarantees before deploying AGI-level models. Russell is also affiliated with the Future of Life Institute and has briefed policymakers globally on the societal risks of advanced AI. (UC Berkeley, Future of Life Institute, Wired)

Key Regulatory Documents

Key current publications and guidelines from prominent regulatory organizations that focus on ethical, safe, and responsible AI development:

🏛️ Government & Standard-Setting Bodies

1. U.S. Executive Order on “Safe, Secure, and Trustworthy AI” (2023)

- President Biden directed federal agencies to follow eight guiding principles for AI: safety, bias mitigation, transparency, and cybersecurity, among others (Federal Register). The order was rescinded in January 2025.

- NIST, under the Department of Commerce, published draft guidance to help organizations implement these principles and invited public comment to shape standards (NIST).

2. EU Artificial Intelligence Act (Entry into Force: August 1, 2024)

- The world’s first comprehensive, risk-based AI regulation classifies systems into unacceptable, high, limited, and minimal risk tiers.

- It mandates obligations like risk assessment, transparency, incident reporting, and cybersecurity, with compliance deadlines running from 2025 to 2027 (Medium, Reuters).

3. OECD AI Principles (Adopted 2019, Updated May 2024)

- These international, non‑binding principles advocate for trustworthy AI grounded in human rights, fairness, safety, transparency, robustness, and accountability (OECD).

- They form the basis for AI policies globally—impacting regulations in the EU, U.S., U.K., Canada, and beyond (Medium).

4. UNESCO Recommendation on the Ethics of AI (November 2021)

- The first global standard on AI ethics, endorsed by 194 member states, emphasizing human dignity, transparency, fairness, and human oversight (UNESCO).

🌐 International & Technical Standards

5. ISO/IEC JTC 1/SC 42 AI Standards

- A global ISO/IEC subcommittee released key standards over 2023–2024, covering AI risk management, bias detection, transparency, governance frameworks, and lifecycle control (Wikipedia).

6. UK & U.S. AI Safety Institutes (2023–2024)

- National AI Safety Institutes have been established to evaluate and test frontier AI models for safety and to share oversight of those models.

- The UK’s AISI and its U.S. counterpart collaborate internationally, developing tools like “Inspect” for compliance and performance audits (Wikipedia).

✅ Recommended Actions for SMB Leaders

- Reference and align with NIST guidance to meet U.S. federal expectations and manage AI systems responsibly.

- Map your AI systems against the EU risk categories, preparing for compliance and reducing legal exposure.

- Integrate OECD and UNESCO ethical principles into procurement, vendor agreements, and policy design.

- Adopt ISO/IEC AI standards for certification readiness and product quality assurance.

- Monitor outputs from AI Safety Institutes to understand risks in generative and foundation AI before adoption.
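Mapping systems against the EU risk categories, as recommended above, can begin as a plain inventory. The sketch below assumes the four tiers named earlier on this page (unacceptable, high, limited, minimal). The system names and the tier assigned to each are placeholders for illustration only, and any real classification would need to be confirmed by legal or compliance review against the Act itself.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Hypothetical inventory: each tier here is a placeholder, not legal advice.
inventory = {
    "resume-screener": RiskTier.HIGH,
    "support-chatbot": RiskTier.LIMITED,
    "spam-filter": RiskTier.MINIMAL,
}


def systems_needing_review(inventory):
    """Names of systems in the two most restrictive tiers, sorted by name."""
    flagged = {RiskTier.UNACCEPTABLE, RiskTier.HIGH}
    return sorted(name for name, tier in inventory.items() if tier in flagged)


if __name__ == "__main__":
    for name, tier in inventory.items():
        print(f"{name}: {tier.value}")
    print("Needs compliance review:", systems_needing_review(inventory))
```

Keeping the inventory in one place makes it easier to revisit the classification as enforcement deadlines approach or as new tools are adopted.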

Direct links to six key regulatory publications and guidelines on ethical, safe, and responsible AI.

1. 🇺🇸 U.S. Executive Order 14110: Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence (Oct 30, 2023)

Full text (PDF) from the White House archives: (The White House)

👉 Download the Executive Order PDF

Accompanied by NIST’s AI Risk Management Framework (AI RMF 1.0):

👉 AI RMF 1.0 PDF (The White House, NIST Publications)

2. 🇺🇸 NIST Draft AI Guidance (May 2024)

NIST-issued draft modules aligned with the Executive Order, covering safety, security, and trustworthy AI, and including a generative AI profile (NIST Publications, NIST).

3. 🇪🇺 EU Artificial Intelligence Act (Entered into Force: Aug 1, 2024)

Official EU version—complete text and annexes:

👉 EU AI Act PDF (aiact-info.eu)

Preview and track the enforcement timeline:

👉 EU AI Act Summary Page (Digital Strategy)

4. 🌐 OECD AI Principles (Updated May 2024)

Text from OECD with human-centric, trustworthy AI guidelines:

👉 OECD AI Principles Webpage (OECD AI Policy Observatory)

Detailed explanatory memorandum (PDF):

👉 OECD Explanatory PDF (OECD)

5. 🇺🇳 UNESCO Recommendation on the Ethics of Artificial Intelligence (Adopted Nov 2021)

Global ethical norms from UNESCO:

👉 UNESCO Recommendation Web (UNESCO)

Full PDF version:

👉 UNESCO Ethics Recommendation PDF (docbox.etsi.org, OHCHR)

6. 🔧 ISO/IEC JTC 1/SC 42 – AI Standards (2024)

Overview of globally recognized technical standards:

👉 ISO SC 42 Committee Page (assets.iec.ch, iso.org)

Conclusion

The rise of AI has unleashed a flood of commentary, whitepapers, and “thought leadership,” much of it shallow, opportunistic, or self-promotional, especially when it comes to topics like “ethical AI” and “responsible innovation.” For SMB executives, it’s become nearly impossible to discern what’s credible, actionable, or even honest.

That’s exactly why ReadAboutAI.com’s curated approach is so valuable:

1. Trusted Filters Beat Noisy Feeds

Our editorial lens, which favors peer-reviewed books, academic thinkers, and credible publications (e.g., MIT Tech Review, HBR, Wired), cuts through hype and misinformation.

2. Executive-Relevant, Not Academic-Obscure

Many ethics books are either too technical or too abstract. Our summaries translate them into practical insights for decision-makers with limited time and high stakes.

3. Real Issues, Not Buzzwords

While others peddle AI “values” without substance, this platform covers structural power, predictive privacy, labor exploitation, ESG risks, and compliance gaps: the real terrain of ethical AI.

4. Anti-Ethics-Washing Stance

We surface the difference between meaningful accountability (like Microsoft’s FATE or Meta’s Responsible Use Guide) and empty PR gestures.

Why We Curate AI Ethics Content

At ReadAboutAI.com, we know “responsible AI” is more than just a buzzword. It’s a complex—and often exploited—topic, especially for business leaders under pressure to adopt AI quickly and ethically. That’s why we curate only the most credible, relevant, and practical resources—books, policies, and reports that help executives make informed, ethical, and future-proof decisions.
