AI Updates March 07, 2026: Mid-Week AI Developments

Artificial intelligence spent this week reminding leaders that it is no longer a single “tool category” but a growing operating layer for markets, infrastructure, and jobs. On one side, we see agentic AI quietly moving from slideware to production: cost-optimized agents that take real actions, observability platforms probing what those agents are doing, LLM firewalls standing guard at the edges, and sector-specific assistants in healthcare, sales, and legal services. On the other, we’re watching a parallel build-out of AI infrastructure and capital—from megadeals for chips and GPUs to debt-fueled data center bets and satellite networks—all of which raise new questions about concentration risk, vendor stability, and long-term cost structures.

At the same time, the human side of AI came into sharper focus. Jack Dorsey’s AI-linked layoffs at Block put a public face on the decision to use “intelligence tools” as a rationale for shrinking headcount. Market narratives whipsawed between viral AI doom scenarios and quick rebounds driven by chip deals and “AI-as-copilot” messaging. Voices like Andrew Ng urged a more grounded view—agentic workflows now, AGI later—while surveys showed millennials, not Gen Z, leaning hardest on AI to manage real-world overload. Across these stories, a common signal emerges: the real disruption in 2026 is less about a sudden AGI moment and more about incremental shifts in how work is structured, who bears the risk, and how value is shared.

Finally, this week’s coverage surfaces the culture and governance behind the code. From San Francisco’s mix of doomers and accelerationists to world-model research preparing AI for the physical world, the beliefs of AI builders are shaping how fast systems are pushed into production and how seriously safety is treated. Ad fraud rings powered by AI-generated content, new firewall layers for LLMs, and growing concerns about access to justice all point in the same direction: leaders can’t outsource AI risk management to vendors or regulators. The opportunity is real—but so are the incentives to move faster than your governance. This week’s summaries are designed to help you separate narrative from signal and ask sharper questions of your teams and partners.

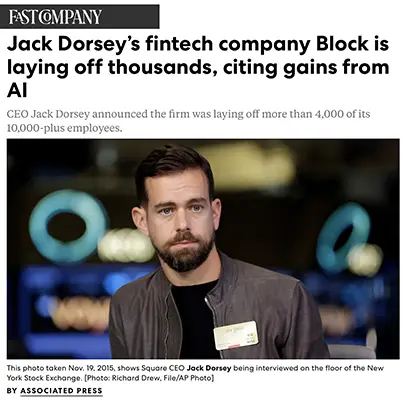

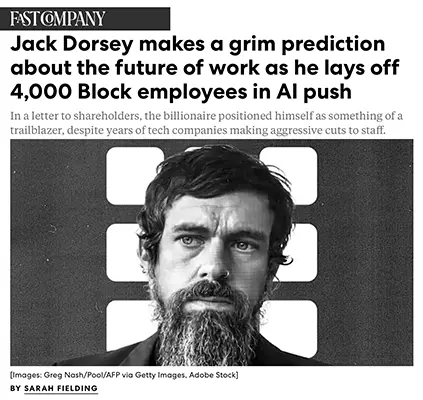

JACK DORSEY’S BLOCK USES AI TO JUSTIFY 4,000 LAYOFFS—AND PREDICTS MANY FIRMS WILL FOLLOW

FAST COMPANY (FEB. 27, 2026)

TL;DR / Key Takeaway: Jack Dorsey is turning Block into a high-profile case study of AI-driven downsizing, arguing that “intelligence tools” let a much smaller workforce do more—and predicting that most companies will make similar cuts within a year, a stance that thrilled investors but intensifies trust and morale risks for everyone else.

Executive Summary

Block, the fintech company behind Square, Cash App, Afterpay, and Tidal, is cutting over 4,000 of its more than 10,000 employees, shrinking headcount to “just below 6,000” as part of a strategy explicitly framed around AI. In a letter to shareholders (also posted on X), CEO Jack Dorsey wrote that “intelligence tools have changed what it means to build and run a company” and that a “significantly smaller team” using those tools “can do more and do it better.” Unlike other tech firms that have downplayed AI as a reason for layoffs, Block is overtly tying staff cuts to generative and automation gains, positioning itself as an AI-first operator.

Investors cheered. Block’s shares jumped more than 20% in premarket trading after the announcement, on top of a 5% gain the prior day, even though the stock remains down over 16% year-to-date and far below its pandemic-era highs. The company reported 24% year-over-year gross-profit growth in Q4, and Dorsey’s note highlighted that gross profits had doubled from Q1 to Q4 of 2025, suggesting that AI and efficiency moves are improving margins.

In a more grim macro prediction, Dorsey argued that Block is not early but that “most companies are late” to recognizing AI’s impact. He wrote that “within the next year, I believe the majority of companies will reach the same conclusion and make similar structural changes,” implying a coming wave of AI-justified layoffs across industries. Block announced relatively generous U.S. severance—20 weeks of pay plus a week per year of service, six months of healthcare, equity vesting through May, company devices, and a $5,000 stipend—while noting that packages will vary internationally. Public reaction was pointed: commentators compared Dorsey’s move to Elon Musk’s playbook and criticized his casual, all-lowercase social-media announcement, underscoring the reputational risks of framing mass layoffs as bold AI “trailblazing.”

Relevance for Business

For SMB executives, Block’s move is likely to become Exhibit A in the AI–jobs debate:

- On one hand, it showcases how leaders can restructure around AI to boost margins and investor confidence, especially when they pair cuts with visible AI tooling and clear financial results.

- On the other, it signals a new norm in corporate messaging: openly attributing layoffs to AI and predicting that “everyone will do this soon,” which may erode employee trust, invite scrutiny from regulators and media, and shape worker expectations in your own organization, even if you’re not cutting staff.

Block’s case raises a deeper question: will AI gains be used mainly to reduce headcount, or to redeploy people into new growth and resilience roles? Your answer—and how you communicate it—will increasingly influence talent retention, brand reputation, and stakeholder confidence.

Calls to Action

🔹 Clarify your AI–workforce stance now: are AI gains primarily for cost cutting, productivity reinvestment, or a mix? Put this in writing before events force your hand.

🔹 If you anticipate restructuring, build an “AI transition” playbook: role redesign, upskilling paths, and redeployment options, not just severance tables.

🔹 In board and investor communications, separate real AI productivity data from narrative value—be explicit about what’s actually automated today vs. what remains aspirational.

🔹 Treat public messaging about AI and jobs as reputation-critical: avoid celebratory tone around layoffs, and be specific about how you’ll support affected workers and communities.

🔹 Internally, engage employees in where AI fits: invite teams to propose automations that remove drudgery while preserving or reshaping roles, rather than learning about AI only when cuts are announced.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91499931/jack-dorseys-fintech-company-block-laying-off-thousands-citing-gains-ai: March 07, 2026https://www.fastcompany.com/91499890/block-mass-layoffs-today-jack-dorsey-grim-prediction-ai-push: March 07, 2026

IS AI THE END OF LAWYERS, OR THE BEGINNING OF ACCESS TO JUSTICE?

FAST COMPANY (FEB. 26, 2026)

TL;DR / Key Takeaway: AI won’t kill the legal profession, but it will expose the market failure at the heart of U.S. legal services—and could force a reckoning between preserving lawyer jobs and expanding access to justice.

Executive Summary

The article starts with the fear: high-profile AI advances and new legal-tech startups have lawyers asking whether robots are coming for their jobs. But the author argues that the bigger story is the long-standing access-to-justice crisis—with legal fees pricing tens of millions of Americans out of representation even in high-stakes matters like eviction, deportation, foreclosure, and identity-theft disputes.

AI tools already generate both promise and harm. There are nearly 1,000 documented cases of lawyers or self-represented litigants submitting filings with fabricated case citations caused by AI hallucinations, leading to fines and sanctions. Yet many people using these tools weren’t choosing between a lawyer and AI—they were choosing between no help at all and an imperfect bot. Services like CitizenshipWorks and other TurboTax-style legal platforms show that software can deliver meaningful, low-cost guidance in specific domains.

Historically, the profession responded to technological and industrial change by raising barriers to entry—expensive legal education, tougher bar exams—limiting supply and helping keep fees high. The author suggests that this time is different: AI appears capable of automating portions of legal work itself, not just making lawyers more efficient. The profession can either resist and risk being bypassed, or embrace AI as a way to scale affordable services, especially in low-margin, high-need areas, while reserving human expertise for complex and high-risk matters.

Relevance for Business

For SMB leaders, this article is less about personal job loss and more about how AI will change the legal services you buy. Over time, you should expect:

- More hybrid offerings, where AI handles document drafting, issue spotting, and form completion, while lawyers focus on strategy and negotiation.

- Potentially lower costs and faster turnaround for standard matters (contracts, employment docs, basic compliance), provided regulators allow more tech-enabled models.

- New risk vectors: if your outside counsel leans heavily on AI without adequate oversight, you could inherit hallucinated citations, flawed contracts, or biased outcomes.

Calls to Action

🔹 Ask current and prospective law firms how they are using AI: for internal efficiency only, or as part of client-facing products—and what quality controls they have in place.

🔹 For routine, low-risk tasks (basic NDAs, simple leases), explore vetted tech-enabled platforms that combine AI plus human review to reduce cost and turnaround time.

🔹 Update your vendor and counsel selection criteria to include AI governance: hallucination safeguards, citation verification, data-handling practices.

🔹 In higher-stakes matters, insist that human lawyers remain accountable, even if they use AI tools behind the scenes.

🔹 Monitor local regulatory changes around alternative legal service providers and AI legal tools; these may open up new, more affordable options for SMBs.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91498249/is-ai-the-end-of-lawyers-or-the-beginning-of-access-to-justice: March 07, 2026

I BUILT AN OPENCLAW AI AGENT TO DO MY JOB FOR ME. THE RESULTS WERE SURPRISING—AND A LITTLE SCARY

FAST COMPANY (FEB. 26, 2026)

TL;DR / Key Takeaway: Open-source tools like OpenClaw show how far individual power users can go in automating knowledge work with unfettered, high-cost, high-risk agents—well beyond what mainstream vendors currently expose.

Executive Summary

The author experiments with OpenClaw, an open-source, model-agnostic platform for building AI agents that can act on a user’s behalf with broad system permissions. Unlike the tightly sandboxed agents from major AI vendors, OpenClaw can chain together powerful models (OpenAI, Anthropic, Grok, etc.), crawl the web, log into services, and even control local hardware. This power has already triggered a trademark dispute (with Anthropic) and corporate bans from companies like Meta, which see it as a security and data-privacy nightmare.

To test the limits, the author sets up OpenClaw on a VPS for isolation, connects it to OpenAI, and configures an “AI News Desk” agent to do his job as a Fast Company contributing writer: scan AI news, pick a story, research sources, and write a fact-checked article in his own style with citations and headline. The setup is technically brutal—hours of Linux configuration and prompt engineering—but once running, the agent spends long sessions autonomously working through the task, consuming substantial compute.

The outputs are mixed but unsettlingly competent: drafts are structurally solid and on-brand enough that, with some editing, they could plausibly be published. Yet they also contain errors, sometimes invent sources, and can wander off-brief. The piece concludes that while fully replacing the writer isn’t realistic today, OpenClaw hints at a near future where motivated individuals (not just big firms) can automate large chunks of specialized knowledge work—raising serious questions around cost control, security, and labor displacement.

Relevance for Business

For SMB executives, this article is a glimpse into non-enterprise, open-source agentic AI that your staff or contractors could quietly adopt. Such tools can dramatically accelerate research, content creation, and analysis—but they may also:

- Exfiltrate sensitive data if run with broad permissions.

- Generate plausible but flawed outputs that slip past light review.

- Inflate cloud or GPU bills through long, unconstrained runs.

This is less about buying OpenClaw specifically and more about recognizing that agentic AI is available “in the wild” now, outside your usual vendor risk frameworks.

Calls to Action

🔹 Update your acceptable-use and security policies to address open-source AI agents that can access corporate systems, data, and credentials.

🔹 Where you do experiment with agents, isolate them technically (e.g., separate environments, limited permissions, synthetic or scrubbed data).

🔹 Treat agent-generated work (reports, articles, code) as drafts requiring human verification, especially for factual claims and citations.

🔹 Monitor compute usage and set budget guardrails for any agent capable of long-running autonomous tasks.

🔹 Encourage staff to surface experimental automations rather than hiding them; channel that energy into supervised pilots instead of shadow AI.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91495511/i-built-an-openclaw-ai-agent-to-do-my-job-for-me-results-were-surprising-scary: March 07, 2026

EXCLUSIVE: GOOGLE PULLS 115 ANDROID APPS TIED TO AD FRAUD SCHEME AFFECTING 25M DEVICES

ADWEEK (FEB. 26, 2026)

TL;DR / Key Takeaway: A large Android ad-fraud ring (“Genisys”) used AI-generated websites and cheap mobile utility apps to siphon ad spend at scale—showing how generative AI is lowering the cost and raising the speed of ad fraud.

Executive Summary (for Visual Editor)

Google and Integral Ad Science (IAS) uncovered and dismantled an Android-based fraud operation dubbed Genisys, which used more than 115 seemingly harmless utility apps—flashlights, QR scanners, PDF readers, Wi-Fi detectors—as a distribution layer. Once installed on a device, the apps opened hidden in-app browsers that funneled traffic to nearly 500 AI-generated domains designed to mimic generic blogs and information sites, generating ad impressions and clicks that users never saw. The scheme touched more than 25 million devices worldwide.

IAS found that the operation generated millions of bid requests, causing advertisers to unknowingly serve ads against bot traffic and waste budget. To evade detection, fraudsters spoofed bundle IDs, making their traffic appear to come from popular legitimate apps like Netflix and Instagram, and used AI-generated content to bypass basic quality filters. After detecting the pattern in September, IAS tracked its growth into December; Google then removed the apps from the Play Store and enabled Google Play Protect to automatically disable Genisys-linked apps even if sideloaded. Both organizations estimate they have cut Genisys-related bid requests by more than 95%, but warn that AI is making it easier to spin up new schemes quickly and cheaply.

Relevance for Business

For SMB marketers, this story is a reminder that AI is a force multiplier for both good and bad actors. Generative tools dramatically lower the cost of creating plausible websites and app front-ends, allowing fraud operations to scale faster and operate more convincingly—while your ad budget quietly evaporates on non-human impressions. Even if you buy through reputable platforms, your campaigns may still be exposed unless you have independent verification and fraud monitoring.

Calls to Action

🔹 Ask your media agency or in-house team how they monitor mobile app and domain quality, and whether they use third-party verification (IAS, DoubleVerify, etc.).

🔹 Review campaign reports for suspicious patterns: very high impression counts with low engagement, odd domain names, or over-concentration in obscure apps.

🔹 Use allow-lists for domains and app categories where possible, especially for brand campaigns, and avoid “spray and pray” open exchanges.

🔹 Make fraud-hunting part of your AI strategy: assume that as AI lowers fraud costs, the background level of invalid traffic will rise. Budget for verification as a standard line item.

🔹 Educate executives that cheap reach is not always real reach—prioritize transparency and quality over the lowest CPM in performance reviews.

Summary by ReadAboutAI.com

https://www.adweek.com/media/exclusive-google-cracks-down-ad-fraud-scheme/: March 07, 2026

THE SELF-DRIVING TAXI REVOLUTION BEGINS AT LAST

THE ECONOMIST (NOV. 24, 2025)

TL;DR / Key Takeaway: Robotaxis are finally scaling in real cities, but safety, public trust, and ugly unit economicsmean this “revolution” is more of a long, expensive build-out than an overnight disruption.

Executive Summary

The article traces three decades of autonomous driving research to today’s commercial robotaxis from Waymo, Tesla, Zoox, and others, now operating in multiple U.S. cities with plans for London and Tokyo. Waymo alone runs about 2,500 vehicles and offers paid rides in Atlanta, Austin, LA, Phoenix, and the Bay Area, with a target to more than double its footprint. Despite growing usage—Waymo surpassed 1 million monthly active users in April—public trust remains fragile: three-quarters of Americans say they don’t trust self-driving taxis, though confidence rises sharply once people ride in one.

Safety data is encouraging: Waymo reports 88% fewer property-damage claims and 92% fewer bodily-injury claims than human drivers over 25 million miles, but high-profile failures like Cruise’s 2023 accident and mismanaged investigation show how quickly trust and regulatory goodwill can evaporate. At the same time, the business model is deeply underwater. Estimates put current robotaxi operating costs at $7–9 per mile, versus $2–3 for ride-hailing and ~$1 for private cars, due to expensive hardware, centralized fleet ownership, and human supervisors. McKinsey believes it could take a decade to push robotaxi costs below $2/mile as sensor prices fall and fleet utilization improves.

The competitive landscape is unsettled. Waymo’s safety-first, sensor-heavy Level 4 approach has persuaded regulators; Tesla is betting on a lighter, cameras-only stack to win on cost as its software improves. Zoox, Wayve, Uber, and traditional OEMs (Nissan, Mercedes, VW) are experimenting with different combinations of vertically integrated fleets, licensed self-driving software, and platform partnerships. The article suggests that once robotaxis are both safe and cost-competitive, adoption could accelerate rapidly—but getting there will require large, long-term capital bets.

Relevance for Business

For SMB leaders, especially in mobility, logistics, travel, real estate, and retail, this piece frames robotaxis as a slow-burn infrastructure shift, not a near-term cost-cutting lever. Over the next decade, falling costs and better coverage could reshape urban transport patterns, commute times, and delivery options, which in turn change where customers live, shop, and work. But the near-term risks—regulatory backlash, safety incidents, and vendor shake-outs—mean SMBs should treat robotaxis as a scenario to plan around, not a dependency to build into core operations yet.

Calls to Action

🔹 Track local pilots (Waymo, Tesla, Zoox, others) in your operating regions and note how they affect foot traffic, delivery times, and customer behavior.

🔹 For logistics-heavy SMBs, experiment with partnerships at the edge (e.g., last-mile delivery trials) rather than betting core operations on still-immature services.

🔹 Include autonomous mobility scenarios in long-term planning: what happens if downtown parking demand plunges or 24/7 robotaxi coverage reshapes shift patterns?

🔹 When negotiating with mobility platforms, avoid long, lock-in contracts given how volatile the competitive landscape and economics still are.

🔹 Treat robotaxis as part of a broader automation and labor strategy (drivers, couriers, field staff), not in isolation from other AI and robotics trends.

Summary by ReadAboutAI.com

https://www.economist.com/business/2025/11/24/the-self-driving-taxi-revolution-begins-at-last: March 07, 2026

THE GENERATION THAT USES AI THE MOST ISN’T THE ONE YOU THINK

FAST COMPANY (FEB. 26, 2026)

TL;DR / Key Takeaway: A survey of 3,000 Americans finds millennials—not Gen Z—are the heaviest daily AI users, leaning on tools for information and life logistics, while Gen Z skews more toward creative uses and many people feel embarrassed about how they actually use AI.

Executive Summary

The article reports on an Edubrain survey of 3,000 Americans aged 18–60 that mapped AI usage patterns by generation. Contrary to stereotype, millennials are the most frequent users: 37% say they use AI daily, compared with 25% of Gen Z and 19% of Gen X. Boomers weren’t included, but separate research suggests they are least likely to use AI at all.

The survey suggests life stage is a key driver. Millennials in their 30s and 40s are more likely to be juggling work, children, and bills, making them eager to offload cognitive load to AI tools: “they’re willing to take any help they can get,” the report notes. Across generations, the top use case is information lookup—quick searches or asking ChatGPT a question—with 69% of millennials and 63% of Gen X citing this. Gen Z, by contrast, is more inclined to use AI for creative tasks (e.g., content creation, ideation), with 60% saying they use it that way—higher than any other group.

Despite widespread use, a striking 36% of respondents said they’d be embarrassed to describe their AI habits in a room full of people, especially around “weird” or frivolous use cases like asking AI to predict the future. The findings highlight a gap between practical reliance on AI and social comfort in admitting it.

Relevance for Business

For SMB leaders, this survey is a reminder that:

- Your most overloaded mid-career employees may already be your heaviest AI users, often informally and without guardrails.

- Younger staff may be pushing AI hardest in creative and experimental domains, which can be valuable but also riskier from an IP or brand-safety perspective.

- There is still stigma and uncertainty around AI use, which can push usage into the shadows instead of into well-designed, governed workflows.

Calls to Action

🔹 Measure, don’t guess, how different age groups in your workforce are using AI—through anonymous surveys, tool telemetry, or usage reviews.

🔹 Create clear, non-punitive AI-use guidelines so employees feel safe disclosing and standardizing the tools they already rely on.

🔹 Lean into millennials’ “time-poverty” by offering sanctioned AI assistants for routine tasks (email drafts, information lookup, scheduling) with training on safe use.

🔹 Channel Gen Z’s creative energy into structured experiments—content pilots, ideation sessions, prototype projects—while setting boundaries on sensitive use cases.

🔹 Normalize AI literacy across ages: offer cross-generational training that pairs heavier users with those less familiar, turning informal hacks into shared best practices.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91499317/the-generation-that-uses-ai-the-most-isnt-the-one-you-think: March 07, 2026

ANDREW NG SAYS AGI IS DECADES AWAY—AND THE REAL AI BUBBLE RISK IS IN THE TRAINING LAYER

FAST COMPANY (FEB. 27, 2026)

TL;DR / Key Takeaway: AI pioneer Andrew Ng argues that true AGI is still decades off, that near-term value will come from agentic systems automating workflows, and that any real bubble risk sits in the costly model-training layer, not everyday business applications.

Executive Summary

The piece frames today’s AI landscape as a race for compute, talent, and control, with foundation models underpinning everything from enterprise software to national digital strategies. Yet the business returns are mixed: a PwC survey of 4,454 CEOs found 56% saw neither higher revenue nor lower costs from AI in the past year, and only 12% saw both, even as over half plan to keep investing.

Andrew Ng—founder of DeepLearning.AI, Coursera co-founder, ex-Google Brain lead—offers a sober counterweight to AGI hype. He says “true AGI,” capable of the full breadth of human intellectual tasks, remains decades away, and that definitions are being quietly stretched for marketing purposes. Instead, he argues, the real frontier is agentic AI: multi-step, tool-using systems that can execute and orchestrate workflows rather than just respond to a single prompt.

Ng sees a “split screen”: many enterprises aren’t yet getting strong ROI from agentic systems, but teams that do manage to embed agents into workflows are seeing rapid growth and real value. The problem, he says, is that many companies pursue bottom-up point solutions that automate one task instead of top-down redesign of end-to-end processes, which is where transformational gains lie. He stresses the need for executive-level leadership to reimagine workflows, plus iterative build-test-refine cycles to make agentic systems reliable.

On bubbles, Ng is clear: he doesn’t see a bubble in the application layer—in fact, he thinks we are under-invested in applications and inference infrastructure. The biggest risk is in the training layer, where a handful of players are pouring vast sums into specialized hardware that may be hard to repurpose if demand disappointed, potentially leading to overbuild. Still, he believes we’re not overbuilt yet, just that this is the segment to watch.

Relevance for Business

For SMB executives, Ng’s view reinforces a pragmatic stance:

- Don’t organize your strategy around near-term AGI; it’s a long-dated possibility, not a 2026 planning assumption.

- The next few years will be shaped by agentic workflows—AI agents coordinating steps across tools and teams—not by god-like general intelligence.

- The real risk for you is less about an abstract “AI bubble” and more about choosing vendors whose economics depend on heavy training-layer bets that may or may not pay off.

Calls to Action

🔹 Prioritize workflow-level redesign over scattered experiments: map full processes (e.g., lead-to-cash, claims handling) and ask where an agent could orchestrate multiple steps.

🔹 Make AI initiatives executive-sponsored so teams have authority to change processes, not just bolt tools onto broken workflows.

🔹 When choosing vendors, distinguish application value (clear use case, measurable ROI) from infrastructure speculation (huge custom hardware plays with unclear payback).

🔹 Expect ROI to be uneven initially; build short feedback loops and treat agentic systems as products that require ongoing tuning, not one-off deployments.

🔹 Use Ng’s “decades to AGI” framing to cool internal hype: focus staff on concrete automation and augmentationyou can deliver this year.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91499247/andrew-ng-agi-decades-away-interview: March 07, 2026

WHAT DO THE PEOPLE BUILDING AI BELIEVE?

THE ATLANTIC (GALAXY BRAIN PODCAST) (FEB. 27, 2026)

TL;DR / Key Takeaway: Inside San Francisco’s AI scene, builders oscillate between doom and acceleration, mix gold-rush wealth with quasi-religious belief, and are increasingly politically alienated from both parties—a culture that shapes how AI is built, marketed, and governed.

Executive Summary

This podcast episode, featuring writer Jasmine Sun, acts as an “anthropology of disruption” for the Bay Area’s AI subculture. She describes a city in exuberant, gold-rush mode: 20-somethings making millions, wild start-up valuations, and a renewed pride in “leaning into the weird” after COVID-era decline. Within that boom, Sun maps two powerful factions:

- AI “doomers” / decels, who fear superintelligence could literally kill humanity, inspired by figures like Eliezer Yudkowsky and early alignment researchers. Many joined labs to prevent catastrophe but now debate whether working on cutting-edge models is itself immoral.

- Accelerationists (“e/acc”), who see doomers as overcautious, “woke-adjacent” brakes on progress and argue that the priority is to “let it rip” to achieve abundance and beat geopolitical rivals like China.

Sun notes that classic extinction-oriented doomerism has faded somewhat, replaced by broader concern over bubbles, job loss, and mental-health harms as people watch AI diffuse into daily life more slowly and unevenly than early predictions suggested. AI looks less like a single apocalyptic threshold and more like a “boiling the frog” process—real risks, but incremental.

The conversation then traces Silicon Valley’s rightward political drift. Sun argues that aggressive tech regulation, antitrust moves, and “woke” employee activism under Democrats pushed many founders toward Trump, who they initially saw as friendlier to high-skill immigration and anti-regulation. But tariffs, visa crackdowns, and state intervention in companies such as Intel have since disillusioned many, leaving Valley elites ideologically homeless and doubling down on an identity as a “nonpartisan center of progress” that prefers to ignore messy politics altogether.

On timelines, Sun describes today’s systems as a “jagged superintelligence”: models that discover new proteins but can’t reliably count letters in “strawberry”—neither vaporware nor demigod. She is skeptical of AGI countdowns, seeing them as marketing frames that distract from present-day deployment and regulation questions.

Relevance for Business

For SMB executives, this cultural lens matters because the beliefs of AI builders become the defaults baked into tools, policies, and narratives you encounter:

- A scene that romanticizes speed and “weirdness” may underweight governance, labor impact, and downstream operational friction.

- Political alienation and hostility to regulation can lead to products that assume minimal oversight, leaving customers to manage ethical and compliance gaps.

- The “jagged frontier” view explains why you may see spectacular successes alongside baffling failures in the same tool—critical when deciding what to trust with core operations.

Calls to Action

🔹 When evaluating vendors, ask about their risk philosophy and governance posture, not just features—are they more “doomer,” “accelerator,” or somewhere in between?

🔹 Assume that AI builders’ incentives skew toward speed and scale; build your own internal checks around safety, compliance, and labor impact.

🔹 Use the “jagged frontier” idea to scope pilots: assign AI to tasks where it can be superhuman (summarization, pattern spotting) and keep humans firmly in charge where stakes or ambiguity are high.

🔹 Recognize that many Valley firms prefer to avoid politics; don’t assume they’ve thought through geopolitical, regulatory, or social implications for your sector.

🔹 Consider partnering with vendors or advisors who explicitly prioritize alignment with your values and regulatory environment, not just technical prowess.

Summary by ReadAboutAI.com

https://www.theatlantic.com/podcasts/2026/02/what-do-the-people-building-ai-believe/686173/: March 07, 2026https://www.youtube.com/watch?v=G7-gNp8GAHU: March 07, 2026

WALL STREET’S AI PANIC IS REAL. THE PREDICTIONS PROBABLY WON’T BE.

THE WASHINGTON POST (FEB. 27, 2026)

TL;DR / Key Takeaway: Wall Street is genuinely spooked by AI—thanks in part to warnings from insiders—but history suggests extreme forecasts are more likely to mislead than to guide good decisions.

Executive Summary

This opinion piece argues that AI has become the central meme driving market fear, much as the web did in the 1990s. Recent weeks have seen a string of high-profile warnings: Nassim Taleb cautioning about mass bankruptcies, JPMorgan’s Jamie Dimon describing a “huge redeployment” of workers displaced by AI, and especially the Citrini Research scenario predicting 10% unemployment, collapsing government revenues, and a 40% stock-market drop by 2028 as “agentic AI” guts multiple industries.

That note followed a dense, sober essay from Anthropic CEO Dario Amodei, who outlined serious risks for warfare, labor markets, governance, and democracy. Together, these warnings coincided with a sharp selloff in software and consumer names—Salesforce, Oracle, Workday, and CrowdStrike among them—as investors tried to “game out” who might be left standing in an AI-transformed economy.

The author doesn’t dismiss the risks, but argues that prediction itself is the problem: people closest to breakthrough technologies often become the most alarmed, much like nuclear scientists after the atomic bomb or epidemiologists in early 2020. Being proximate to risk blurs the line between possible and probable. Markets, craving a narrative, latch onto detailed doomsday stories—even though history shows that most extreme forecasts, especially in complex systems, are wrong. Panic can then inflict its own damage without improving outcomes.

Relevance for Business

For SMB executives, the piece is a reminder that you’re operating inside the same fear-charged information environment as big investors—but you don’t have to adopt their swings. Overreacting to AI doom predictions (halting all adoption) can be as harmful as ignoring risk (blindly automating everything). The real challenge is to stay analytically grounded: treat AI warnings as useful stress tests, not as destiny.

Calls to Action

🔹 When encountering dire AI forecasts, separate scenarios from probabilities: ask “what if?” but also “how likely and on what evidence?”

🔹 Build practical AI risk registers (jobs, security, customer trust, compliance) tied to your actual operations, rather than copying Wall Street’s macro fears.

🔹 Encourage your leadership team to read both critics and builders (e.g., safety labs, regulators, economists) and synthesize multiple perspectives.

🔹 Avoid “panic pivots” in strategy—sudden freezes or all-in bets—based solely on viral essays or market moves; instead, update your plan on a regular cadence.

🔹 Communicate to staff that you are taking AI seriously without catastrophism, which can help maintain morale and focus on concrete, near-term improvements.

Summary by ReadAboutAI.com

https://www.washingtonpost.com/opinions/2026/02/27/wall-street-ai-tech-stock-panic/: March 07, 2026

AI TOOLS ARE BEING PREPARED FOR THE PHYSICAL WORLD

THE ECONOMIST (FEB. 25, 2026)

TL;DR / Key Takeaway: The next frontier is “world models”—AI systems that build internal, predictive representations of physical and virtual environments—so robots, agents, and copilots can plan actions, not just autocomplete text.

Executive Summary

The article explores a race to develop world models—AI tools that can understand and simulate environments well enough to support agents in the physical world. Google’s experimental Project Genie can turn a prompt into an interactive, explorable world, from realistic scenes to a walkable version of a Seurat painting. Google frames Genie as more than a game: a model that lets AI systems practice in simulation before acting in reality, essential for tasks like humanoid robots shopping for groceries or self-driving cars navigating complex roads.

One branch of research starts from video generation: if a model can produce coherent video, it’s effectively simulating a consistent world and can infer missing details (plotting paths through mazes, modeling how hands twist a jar). But video-based models miss off-camera details (like a broken freezer) and struggle with multi-user consistency—aisles that only “exist” when viewed. In response, Fei-Fei Li’s World Labs is building Marble, which constructs full 3D environments that are complete and consistent from the start, allowing multiple users to share the same world—useful for architects and potentially for robot training.

Another camp, led by Yann LeCun at Advanced Machine Intelligence, focuses less on literal 3D spaces and more on abstract environments—HR systems, legal documents, software stacks. His Joint-Embedding Predictive Architecture (JEPA) aims to give AI the ability to simulate long-range consequences and plan sequences of actions without rendering every frame, closer to how humans think ahead.

Finally, some argue that large language models already contain world models. Citing work where an LLM trained only on Othello move sequences encoded the full board state internally, plus Anthropic’s interpretability research mapping neurons to concepts (like the Golden Gate Bridge or “feelings of guilt”), they suggest LLMs compress real-world structure into their weights. Critics like Fei-Fei Li counter that LLMs are still “wordsmiths in the dark”—able to talk about the world without grounded experience, like a student who has only read about a foreign country. Whichever approach wins, the piece concludes, AI is about to “pay the real world a visit.”

Relevance for Business

For SMB leaders, world models are the invisible layer that will underwrite next-gen robotics, industrial automation, logistics, AR/VR, and even complex back-office copilots. As these models improve, expect AI systems that can reason about physical constraints, inventory layouts, workflows, and policy rules, not just surface text answers. That means future tools may be able to plan routes through your warehouse, schedule technicians with realistic travel times, or navigate regulatory “mazes” with more context—while also introducing new dependencies on proprietary simulation platforms.

Calls to Action

🔹 In automation roadmaps, look beyond chatbots: consider where physically grounded AI (robots, smart cameras, AR assistants) could safely augment work in warehouses, clinics, shops, or field operations.

🔹 When evaluating vendors, ask how their systems model the environment—are they just text-based, or do they integrate spatial data, sensor feeds, or digital twins?

🔹 Start digitizing floor plans, asset locations, process maps, and workflows; these will be key inputs for practical world-model deployments.

🔹 Be cautious about vendor lock-in to a single world-model platform; prefer systems that can export data and interoperate with other tools.

🔹 Watch early deployments in logistics, manufacturing, and retail as leading indicators of where world-model-driven AI becomes economically compelling.

Summary by ReadAboutAI.com

https://www.economist.com/science-and-technology/2026/02/25/ai-tools-are-being-prepared-for-the-physical-world: March 07, 2026

SPACEX RIVAL RISES ON NEW DEFENSE CONTRACT

INVESTOR’S BUSINESS DAILY / WSJ (FEB. 23, 2026)

TL;DR / Key Takeaway: AST SpaceMobile’s $30 million Pentagon contract signals that low-Earth-orbit satellite networks—a key backbone for future AI and comms—are becoming a multi-vendor, defense-critical market, not a one-company (SpaceX) story.

Executive Summary

The article reports that AST SpaceMobile has landed a $30 million contract with the U.S. Space Development Agency, part of the Space Force, to test how its BlueBird satellites can carry Department of Defense communications. It’s the first time AST’s defense subsidiary has served as prime contractor for a government deal, moving the company beyond its civilian mission of extending wireless coverage to remote areas.

The Pentagon already buys satellite communications from SpaceX’s Starlink, so this contract marks AST as a meaningful alternative provider. Shares rose 4.6% on the news. The article notes that AST is part of a broader cohort of commercial space firms—including Rocket Lab and Firefly—that have seen volatile investor interest. AST’s stock gained over 240% in 2025 and is up about 15% this year, though it has been in a five-week pullback with a pattern of surging to new highs then sliding.

Meanwhile, SpaceX is planning a potential Starlink IPO and a merger with Musk’s AI company xAI, further blurring the line between space infrastructure and AI.

Relevance for Business

For SMBs, especially those in communications, IoT, agriculture, logistics, or remote operations, this contract is another signal that space-based connectivity is evolving into a strategic, AI-adjacent infrastructure layer with multiple commercial vendors. More competition could eventually mean better coverage and pricing for satellite-backed services, but it also increases complexity in choosing partners and integrating networks.

Calls to Action

🔹 If you operate in remote or connectivity-poor regions, track emerging satellite providers (not just Starlink) and compare coverage, latency, and enterprise offerings.

🔹 Consider how AI + satellite connectivity could open new products—e.g., always-connected field sensors, autonomous equipment, or resilient backup links.

🔹 When evaluating satellite vendors, look beyond price to regulatory posture, uptime history, and defense exposure, which may affect service priorities.

🔹 Avoid over-committing to a single space provider; design architectures that can swap or multi-home across networks as the market shifts.

🔹 Stay aware that defense contracts can both stabilize and skew a provider’s roadmap—ensure your requirements still align with where the vendor is heading.

Summary by ReadAboutAI.com

https://www.wsj.com/wsjplus/dashboard/articles/spacex-rival-rises-on-new-defense-contract-134163591537071601: March 07, 2026

A VIRAL BLOG POST SLAMS STOCKS. FEAR ABOUT AI IS REAL.

BARRON’S / WSJ (FEB. 23, 2026)

TL;DR / Key Takeaway: A single viral AI-doom scenario from a small research shop was enough to knock billions off selected stocks—showing that AI narrative risk is now a real market driver, even when the scenario is purely hypothetical.

Executive Summary

The article describes how a Substack post from Citrini Research—imagining a bleak 2028 in which agentic AI wipes out white-collar jobs, hollows out asset managers, and shrinks the tax base—sparked a Monday selloff in exactly the stocks it named. In Citrini’s downside scenario, AI isn’t a flop; it exceeds expectations, yet the S&P 500 falls 38% as profits and employment crumble.

Investors reacted anyway. DoorDash was hit hardest after the post imagined AI agents fragmenting food-delivery markets: coding agents let rivals spin up apps cheaply, drivers use multi-app dashboards to cherry-pick gigs, and consumer agents shop every app for the lowest price—erasing brand loyalty and compressing margins “to nearly nothing.” DoorDash fell 6.9% Monday; Uber lost 4.1%. DoorDash co-founder Andy Fang publicly acknowledged that “agentic commerce will be transformative” and that platforms will need to adapt to serve both humans and agents.

Payments giants American Express, Capital One, Visa, Mastercard and private-equity firms KKR, Blackstone, Apollo—all singled out in the post—also sold off as the imagined scenario painted AI agents routing payments via stablecoins and hammering software-exposed credit portfolios. Other news, including tariff worries, also weighed on the market, but the piece notes that the Citrini scenario became a talking point on trading desks, underscoring how sensitized investors are to AI downside narratives.

Relevance for Business

For SMB leaders, this is less about day-to-day trading and more about recognizing “narrative volatility” around AI. Investors—and by extension boards, partners, and lenders—are now reacting not just to earnings, but to thought experiments about AI futures. That means your AI posture (how you talk about exposure, resilience, and upside) can influence perceptions of risk even before fundamentals change.

Calls to Action

🔹 Treat AI scenarios as a board-level topic: be ready to explain how your business would respond to aggressive agent adoption, not just best-case automation stories.

🔹 In investor and lender conversations, separate hype from plan: quantify which revenue streams are most exposed to AI-driven margin compression and what mitigation looks like.

🔹 If you operate in platforms or marketplaces, stress-test “agentic commerce”: what happens when buyers’ agents compare every offer and workers’ agents shop every gig?

🔹 Develop communication playbooks for AI-related shocks (research notes, viral posts, policy changes) so you can respond calmly with credible data instead of silence.

🔹 Internally, encourage leaders to distinguish between scenarios and forecasts—use worst-case narratives to sharpen resilience planning, not to paralyze decision-making.

Summary by ReadAboutAI.com

https://www.barrons.com/articles/ai-blog-post-stocks-fall-cf25d815: March 07, 2026

15 INCREDIBLY USEFUL THINGS YOU DIDN’T KNOW NOTEBOOKLM COULD DO

FAST COMPANY (FEB. 25, 2026)

TL;DR / Key Takeaway: Google’s NotebookLM shows how a constrained, source-grounded AI notebook can turn messy documents into practical, low-hallucination “second brain” workflows for both personal life and work.

Executive Summary

The article positions NotebookLM as an “AI-first notebook” where you upload your own materials (PDFs, images, transcripts) and then use Google’s Gemini to query and synthesize them—reducing hallucinations because the system is constrained to your sources and not used for model training. The author argues the main challenge is discoverability: NotebookLM looks like a blank prompt, so users don’t know where to start.

To make it concrete, the piece walks through 15 real-world use cases: a product-manual notebook that answers “how does this feature work?” questions, car-specific notebooks that combine owner’s manuals with maintenance receipts, home maintenance notebooks for repairs and appliances, a personal company wiki for policies and contacts, and dedicated notebooks for contracts, meeting transcripts, interviews, instruction manuals (including board games), and more. Each example illustrates how NotebookLM can search across heterogeneous documents, answer targeted questions, and surface patterns over time (e.g., when to schedule next maintenance based on manufacturer guidance and service history).

Relevance for Business

For SMB leaders drowning in unstructured PDFs, manuals, SOPs, and call notes, NotebookLM demonstrates a pattern that’s broader than Google: grounded, domain-specific AI over your own content. Whether you adopt NotebookLM or a different tool, the value comes from turning scattered internal documents into queryable knowledgethat reduces training overhead, supports faster decisions, and cuts down on “who knows where X is?” friction. The piece also highlights a cultural shift: workers are starting to build personal “AI notebooks” around their roles, which can improve productivity but also raises questions about knowledge portability and governance.

Calls to Action

🔹 Identify 1–2 high-friction knowledge areas (e.g., internal policies, product documentation, service manuals) and pilot a grounded AI notebook over those documents.

🔹 Treat notebooks as shared assets, not just personal hacks: define who owns the content, how it’s kept current, and what happens when staff leave.

🔹 Use constrained tools like NotebookLM to reduce hallucination risk for internal Q&A, rather than relying on open-web chatbots for policy or compliance answers.

🔹 Start small with one function (IT, facilities, customer support), measure time saved on basic questions, and then decide whether to roll out more broadly.

🔹 Refresh your information-classification and retention policies to cover AI notebooks, including what should notbe uploaded (e.g., sensitive HR or regulated data).

Summary by ReadAboutAI.com

https://www.fastcompany.com/91494654/google-notebooklmtips: March 07, 2026

ORACLE’S AI SPENDING SPOOKS MARKETS—BUT ONE ANALYST SEES A BARGAIN

MARKETWATCH (FEB. 25, 2026)

TL;DR / Key Takeaway: After a 56% stock plunge driven by fears over Oracle’s debt-fueled AI build-out, Oppenheimer now calls the company an “upper-echelon” EPS growth story—if it can finance a massive data-center push and keep its OpenAI partnership on track.

Executive Summary

Oracle’s share price has been cut by more than half since its September peak as investors question whether the company can safely finance its transformation into a capital-intensive AI infrastructure provider. The company has amassed about $130 billion in debt plus $248 billion in operating-lease commitments to fund cloud and data-center expansion, with Oppenheimer estimating up to $414 billion in data-center spending through fiscal 2030. Credit markets are nervous: Oracle’s credit-default swaps have risen to their highest levels since the Great Recession, reflecting perceived financing risk.

Despite this, Oppenheimer has upgraded Oracle from Perform to Outperform with a $185 price target (~22% upside), arguing that the selloff now appropriately prices in risk and that Oracle is “relatively immune” to the AI-disruption fears hitting other software names such as IBM and Datadog. The analyst’s bull case has Oracle’s EPS nearly tripling by 2030, with the base case still showing more than a doubling of EPS, placing the company in the “upper echelon” of large-cap earnings growth—assuming it can execute and service its debt load. Much of that thesis depends on Oracle’s large AI partnership with OpenAI and on its ability to raise up to $50 billion this year via stock and debt issuance without overstretching its balance sheet.

Relevance for Business

For SMB leaders, Oracle is a case study in AI infrastructure risk and reward:

- It shows how quickly markets can sour on debt-heavy AI expansion, even for established vendors.

- It underscores that your own AI plans may depend on suppliers who are simultaneously chasing growth and testing their credit limits.

- As Oracle and other “hyperscalers” spend aggressively on GPUs and data centers, customers may see attractive pricing and capacity in the near term, followed by potential volatility if financing conditions tighten.

Calls to Action

🔹 If you depend on Oracle (or similar vendors) for cloud and AI services, review concentration risk and ensure you can shift workloads if pricing or service quality changes.

🔹 In RFPs and vendor reviews, ask explicitly about AI capex, financing strategy, and balance-sheet health—not just feature roadmaps.

🔹 Treat very aggressive, AI-driven discounting as a signal of strategic overextension; negotiate hard, but avoid locking into inflexible long-term contracts without exit options.

🔹 Use Oracle’s situation to brief your board on the systemic risk of debt-fueled AI infrastructure and how you’re insulating your organization via multi-vendor strategies.

🔹 Internally, apply the same discipline: don’t mirror Oracle’s leverage mindset—fund AI initiatives with clear ROI thresholds and staged investments rather than all-in bets.

Summary by ReadAboutAI.com

https://www.marketwatch.com/story/oracles-stock-selloff-offers-a-chance-to-buy-an-upper-echelon-growth-play-for-cheap-analyst-73e8e1ab: March 07, 2026

NASDAQ BOUNCES BACK AFTER AMD–META DEAL ON AI CHIPS

THE WALL STREET JOURNAL (FEB. 24, 2026)

TL;DR / Key Takeaway: A $100+ billion AMD–Meta AI-chip deal and signs that AI tools will augment rather than replace software helped markets quickly reverse an AI-driven selloff—illustrating how volatile the AI narrative pendulum has become.

Executive Summary

After Monday’s sharp drop—sparked in part by Citrini Research’s AI-doom scenario—stocks rebounded Tuesday on a series of AI-tilted catalysts. Before the open, Meta Platforms announced a plan to buy over $100 billion of AI chips from AMD, sending AMD shares up 8.8% and lifting sentiment around non-Nvidia chip suppliers. Software names battered in the selloff, including IBM and Oracle, also bounced, and an S&P software-and-services fund rose 2.7%.

Two additional factors helped: stronger-than-expected consumer confidence data, and a statement from Anthropic that framed its new tools as working with existing software stacks rather than replacing them outright—implicitly countering fears that AI will obliterate incumbent vendors. The Nasdaq composite gained 1%, while the S&P 500 and Dow each rose 0.8%, clawing back part of the prior session’s losses (shown in the three-day index chart on page 2).

Still, uncertainty remains high. Commentators describe “a daily battle between AI bulls and bears” as investors try to price how AI will reshape margins, capex, and competitive dynamics. The S&P is nearly flat year-to-date, masking volatile swings in AI-sensitive names. Meanwhile, President Trump’s new 10% global tariffs took effect, with hints of future increases and possible national-security tariffs on select industries, adding another layer of macro risk.

Relevance for Business

For SMB leaders, this article reinforces that AI is now central to capital-market sentiment, affecting everything from chip supply to enterprise software valuations. The AMD–Meta deal underscores how much large players are willing to spend on compute as strategic infrastructure, while the Anthropic framing shows that “AI as a co-pilot” remains a powerful counter-narrative to AI-as-job-destroyer. Your customers, partners, and suppliers are being buffeted by these swings, which can influence pricing, investment, and vendor stability.

Calls to Action

🔹 Expect continued volatility in AI-exposed vendors you depend on (cloud, chips, software); avoid over-indexing on a single supplier based solely on near-term market optimism.

🔹 When planning your own AI investments, separate your strategy from market mood: focus on concrete ROI and risk, not whether the Nasdaq is up or down on a given AI headline.

🔹 Use moments like the AMD–Meta announcement to pressure-test your infrastructure roadmap: do you have enough flexibility to change vendors or architectures if economics shift?

🔹 In communications with boards and owners, explain how you’re insulating your plans from AI narrative whiplash—stable capex plans, scenario analysis, and staged pilots.

🔹 Watch how major AI labs (like Anthropic) position their tools relative to incumbent software; their augment vs. replace framing can signal where disruption risk is highest.

Summary by ReadAboutAI.com

https://www.wsj.com/wsjplus/dashboard/articles/nasdaq-bounces-back-after-amd-meta-deal-on-ai-chips-a30ef666: March 07, 2026

New Relic Plans to Expand AI Agent Observability

TechTarget / Search IT Operations (Feb. 24, 2026)

TL;DR / Key Takeaway: New Relic is betting on deep, agent-aware observability and partnerships—not an “all-in-one AI control plane”—which may appeal to teams that want modular tooling but still need proof that agentic observability delivers better outcomes than traditional root-cause analysis.

Executive Summary

New Relic is rolling out preview features to make its observability platform more agentic-AI aware: a new SRE Agent to investigate incidents, a no-code AI agent builder to tailor analyses, and capabilities to monitor AI agents and assess their business impact. Rather than building its own end-to-end automation stack, New Relic is doubling down on integration—feeding insights to partners such as ServiceNow, Atlassian, PagerDuty, AWS, and GitHub so their agents can use New Relic’s telemetry to drive remediation and CI/CD decisions.

Analysts note that these features largely match competitors like Cisco-Splunk, Dynatrace, and Datadog, and that no clear winner has emerged in agentic observability. Customers, already familiar with AIOps and decade-old root-cause tools, are skeptical that yet another “agentic ecosystem” will actually improve mean time to resolution without introducing new complexity. New Relic’s strategy is to lean into the operator persona and become a powerful layer in a broader agentic ecosystem, rather than trying to own the entire software delivery lifecycle—but that carries the risk that buyers will favor single platforms over “additional layers” when consolidating vendors.

Relevance for Business

For SMBs already using New Relic or considering observability upgrades, this signals a shift toward AI-mediated operations where agents query telemetry directly, correlate incidents, and propose fixes. The upside is potentially faster incident triage and more consistent analysis without hiring a large SRE team. The downside is the risk of tool sprawl—multiple AI agents across vendors, each needing integration, governance, and validation of their recommendations.

Calls to Action

🔹 Inventory your monitoring stack and identify where agent-enhanced analysis would materially reduce downtime or noise, instead of adding another dashboard.

🔹 If you use New Relic, pilot the SRE Agent and AI agent builder in a narrow scope (e.g., one service) and compare incident metrics against your current process.

🔹 Clarify ownership: decide who is responsible for validating agent recommendations and approving automated actions before enabling aggressive remediation.

🔹 When evaluating vendors, ask for concrete before/after metrics (MTTR, alert volume, false positives) from real deployments—not just demos of “smart” agents.

🔹 Build a vendor-agnostic integration plan: assume you’ll have multiple agentic tools and ensure they can share signals without locking you into a single platform.

Summary by ReadAboutAI.com

https://www.techtarget.com/searchitoperations/news/366639362/New-Relic-plans-to-expand-AI-agent-observability: March 07, 2026

7 Practical Tips for Agentic AI Cost Optimization

TechTarget / Search Enterprise AI (Feb. 24, 2026)

TL;DR / Key Takeaway: Agentic AI can quietly turn into a nonlinear, runaway cost center unless leaders treat it as a variable swarm of agents with its own TCO model, guardrails, and usage budgets—not just “another model” to plug in.

Executive Summary

This piece argues that most organizations dramatically underestimate the full cost of agentic AI because they fixate on model inference, which may be only ~20% of total cost of ownership. The bigger spend shows up in orchestration frameworks, data infrastructure, persistent memory, observability, human oversight, and governance required to keep autonomous agents safe and useful at scale. Agent behavior—retries, long-running workflows, expanding context windows, tool calls, and sub-agents—creates inherently variable and often unpredictable bills, especially when agents run continuously.

The article outlines seven practical levers: scenario-based TCO forecasting, using smaller models where possible, explicitly limiting autonomy (retries, recursion, tool calls, token budgets), stress-testing vendor pricing against real usage, real-time cost monitoring, tight control over retrieval/context, and defining cost and error budgets up front. The core message: optimization is about proportionality—costs should scale with realized business value, not with whatever the agents decide to do.

Finally, the author draws a boundary for when agentic AI makes economic sense: high-volume, high-variability work(customer service, claims, IT ops, finance back office), always-on domains (security, fraud, network operations, dynamic pricing), and complex coordination tasks (supply chain, remediation, procurement). In contrast, low-volume, deterministic, or high-risk workflows often get better ROI from simpler automation or assistive AI; in those cases, the governance overhead and autonomy risk can sink the business case.

Relevance for Business

For SMB leaders, the takeaway is that “just add agents” is a budget trap. Agentic systems introduce new, persistent cost lines—vector databases, orchestration platforms, monitoring tools, and human overseers—which can quickly exceed the initial license and API line items. Without a clear framework tying per-task cost to measurable outcomes, you risk building a clever but expensive parallel system that doesn’t replace enough human effort or generate new revenue.

Calls to Action

🔹 Model TCO explicitly for any agentic AI initiative, including worst-case scenarios for retries, context growth, and oversight time—not just model/API pricing.

🔹 Segment workflows and deliberately route routine steps to cheaper models or deterministic logic, reserving premium models and autonomy for complex edge cases.

🔹 Impose autonomy guardrails (max retries, recursion depth, token and tool-call budgets), and automatically escalate to a human when thresholds are hit.

🔹 Set cost and error budgets per use case and monitor them in near real time; treat deviations like operational incidents, not a “billing surprise” to resolve later.

🔹 Be selective about where you deploy agents: prioritize high-volume, variable processes; use simpler RPA or assistive AI for stable, deterministic workflows.

Summary by ReadAboutAI.com

https://www.techtarget.com/searchenterpriseai/tip/Practical-tips-for-agentic-AI-cost-optimization: March 07, 2026

LLM Firewalls Emerge as a New AI Security Layer

TechTarget / Network Security (Feb. 25, 2026)

TL;DR / Key Takeaway: As organizations embed LLMs into workflows, LLM firewalls are emerging as a specialized layer to filter prompts, control data flows, and block AI-specific attacks—but the market is nascent, and firewalls alone are not a complete GenAI security strategy.

Executive Summary

This feature describes how rapid adoption of LLMs and generative AI is exposing organizations to new classes of risk—prompt injection, model poisoning, data leaks, malicious code generation, privilege escalation, and model overuse. In response, vendors are rolling out LLM firewalls that sit between users, models, and back-end systems to monitor, filter, and sanitize inputs and outputs, manage how models invoke tools and external data, and understand how sensitive information flows through the system.

LLM firewalls differ from traditional network firewalls and WAFs by focusing on semantics, intent, and context in natural language, often via three layers: a prompt firewall (blocking jailbreaks and malicious prompts), a retrieval firewall (governing data fetched in RAG workflows), and a response firewall (screening outputs before they reach users). Established players like Palo Alto Networks, Cloudflare, Akamai, Varonis, Check Point and a host of startups (Lakera, Prompt Security, HiddenLayer, CalypsoAI, and others) are rushing into the segment; market size estimates range from tens to hundreds of millions of dollars, illustrating how early and unsettled the space is.

Analysts say current tools can reasonably block many jailbreaks, prompt injections, and sensitive-data leaks, but struggle with multi-turn, stateful attacks and still face uncertainty around long-term effectiveness as threat patterns evolve. Organizations must clarify what they’re protecting (LLM, client, or downstream systems), where models are hosted, and what data types must be recognized. Accuracy (false positives/negatives) and latency are key buying criteria. The article stresses that LLM firewalls are only one element in a broader defense-in-depth posture that also includes AI security posture management, DLP, data security, tokenization, and governance.

Relevance for Business

For SMBs experimenting with LLMs—whether via internal assistants, customer chatbots, or embedded vendor features—LLM firewalls highlight that “AI security” is no longer just data access control. You now have to worry about what users and agents say to the model, what the model can access, and what it can trigger downstream. A full enterprise-grade firewall may be overkill for small deployments, but understanding these patterns is essential as usage spreads and shadow AI proliferates across tools.

Calls to Action

🔹 Map where LLMs live in your environment (in-house apps, SaaS tools, vendor platforms) and document what systems and data they can touch.

🔹 For higher-risk use cases (finance, legal, health, operations), consider LLM firewall or equivalent controls that can block prompt injection, data exfiltration, and dangerous tool calls.

🔹 When evaluating solutions, test for accuracy and latency using your real prompts and workflows; avoid tools that either block everything or miss obvious abuses.

🔹 Treat LLM firewalls as one layer in a broader strategy that includes access control, DLP, logging, incident response, and clear policies on acceptable AI use.

🔹 Educate staff that GenAI is effectively “shadow IT” by default: even if you ban tools, they are embedded in many SaaS products—so you must manage risk where people already work.

Summary by ReadAboutAI.com

https://www.techtarget.com/searchsecurity/feature/LLM-firewalls-emerge-as-a-new-AI-security-layer: March 07, 2026

B.well Launches White-Label Health AI Assistant “bailey”

TechTarget / Health IT Infrastructure (Feb. 24, 2026)

TL;DR / Key Takeaway: B.well’s white-label health AI assistant “bailey” offers healthcare organizations a shortcut to personalized, longitudinal-data-driven patient assistants, shifting the hard work to data integration, compliance, and workflow design rather than core model building.

Executive Summary

Digital health company b.well Connected Health has released “bailey”, a white-label AI assistant that providers, payers, and other healthcare organizations can embed in their own apps instead of building from scratch. Bailey is marketed as being “grounded in complete, longitudinal health records” by fusing clinical, pharmacy, claims, and wearables data from 350+ sources into a single health record. The assistant supports an agentic architecture, letting customers use pre-built agents, develop their own, or integrate third-party agents on top of b.well’s data platform.

Organizations can fully brand the interface and embed bailey into existing web, Android, and iOS apps, while relying on b.well’s compliance posture: HIPAA, SOC 2, HITRUST, plus alignment to the CARIN Alliance Code of Conductfor responsible data use. Under the hood, bailey uses the b.well Health AI SDK, which applies a 13-step data refineryto clean and standardize fragmented health data and embed it into fewer tokens—aimed at reducing processing costs while keeping context rich. The launch lands amid broader momentum for AI in healthcare, including ambient clinical documentation, revenue-cycle automation, and AI-driven patient engagement.

Relevance for Business

For SMB-scale healthcare providers, clinics, and health-adjacent organizations, bailey represents a platform approach: instead of hiring data engineers and AI teams, they can rent a pre-integrated health data and assistant stack and focus on patient journeys, branding, and policy. The upside is faster time to market with a potentially high-trust patient assistant that can coordinate care steps, surface convenient options, and support patients between visits. The risk is vendor dependency on a single data refinery and assistant layer, plus the need to carefully govern what actions bailey can take and how recommendations are presented to avoid clinical overreach.

Calls to Action

🔹 If you operate in healthcare or benefits, map where a patient-facing assistant could reduce friction (e.g., scheduling, medication refills, benefits navigation) and assess whether a white-label solution fits.

🔹 Evaluate data governance and consent flows: ensure patients understand how their data is aggregated, used, and shared across agents and partners.

🔹 Scrutinize integration points with your EHR, claims, and wearable data: clarify who is responsible for data quality, error handling, and reconciliation.

🔹 Start with low-risk, high-utility use cases (education, navigation, reminders) before allowing bailey or similar assistants to influence higher-risk clinical decisions.

🔹 Build an exit strategy: confirm that you can export cleaned, unified data and transition to other platforms if business or regulatory needs change.

Summary by ReadAboutAI.com

https://www.techtarget.com/searchhealthit/news/366639288/Bwell-launches-white-label-health-AI-assistant: March 07, 2026

Salesforce Acquisition of Momentum to Boost Sales Functions

TechTarget / Search Customer Experience (Feb. 25, 2026)

TL;DR / Key Takeaway: Salesforce is using Momentum’s AI sales agents to turn messy conversational data into pipeline reality checks, but the value hinges on whether sales leaders can trust the agents’ judgments more than rep optimism—and more than AI hallucinations.

Executive Summary

Salesforce has agreed to acquire Momentum, an AI startup whose agents target core sales and RevOps pain points: Deal Execution Agent (auto-filling CRM records), Coaching Agent (analyzing calls for positioning and objections), and an AI CRO Agent (building briefs and surfacing pipeline insights from natural language queries). Momentum already integrates tightly with Slack and Salesforce, but also with competing CRMs and tools like Gong, Google Meet, Zoom, and Webex, as well as multiple foundation models (Gemini, GPT, Claude, Cohere). This positions Salesforce to pull far more conversational sales exhaust into Agentforce 360 and Slack’s agentic workflows.

Analysts argue that while AI-assisted pipeline analysis isn’t new, most executives still struggle to see through sales-rep optimism and noisy dashboards to what’s actually happening in deals. AI agents that digest every call, email, and meeting could provide more grounded forecasts and coaching recommendations. At the same time, generative AI’s history of hallucinations and overconfident errors makes trust a critical adoption barrier; if the AI is just a different kind of optimist, it doesn’t solve the underlying problem.

Relevance for Business

For SMBs in the Salesforce ecosystem, this acquisition points toward a future where deal hygiene, coaching, and forecasting are increasingly automated. That could reduce manual CRM updates, improve rep behavior on calls, and give leadership a clearer signal on pipeline health—without adding headcount. However, these benefits depend on data quality, governance, and calibration: bad call recordings or inconsistent processes will simply be analyzed faster, not fixed. Trust and transparency around how AI agents score deals and generate recommendations will be essential.

Calls to Action

🔹 Audit your sales data and workflows now: clean up CRM fields, standardize stages, and ensure call recordings and notes are reliably captured before layering in AI.

🔹 Plan how you’d use AI-driven coaching: decide what metrics (objection handling, talk ratio, value framing) matter most and how managers will respond to insights.

🔹 When Salesforce exposes these capabilities, start with “assist” modes—AI suggestions and risk flags—before allowing agents to drive forecasts or workflow automation.

🔹 Establish a trust framework: spot-check AI-generated briefs and risk scores against real deals, and set thresholds for when to override or ignore AI guidance.

🔹 Consider vendor diversification risk: if your sales stack (CRM, comms, analytics) is all Salesforce-centric, ensure you still have export paths and independent views of your data.

Summary by ReadAboutAI.com

https://www.techtarget.com/searchcustomerexperience/news/366639518/Salesforce-acquisition-of-Momentum-to-boost-sales-functions: March 07, 2026Closing: AI update for March 07, 2026, Mid-Week AI Developments

Taken together, these stories show AI moving from experimentation to embedded infrastructure, with agents, chips, networks, and governance all evolving in parallel—and not always in sync. As you scan this week’s summaries, consider where you can capture practical gains from agentic tools and automation today, while deliberately setting guardrails on capital exposure, workforce impact, and long-term dependency on a small set of AI suppliers.

All Summaries by ReadAboutAI.com

↑ Back to Top