AI Updates March 31, 2026

This week’s collection suggests that AI is entering a more disciplined and more consequential phase. The loudest signal is no longer just that models are improving; it is that the companies building them are making harder strategic choices about where real value will come from. OpenAI’s retreat from Sora and its apparent concentration on enterprise, coding, and frontier-model competition, Google’s widening multimodal push, and Meta’s internal restructuring around AI agents all point to the same conclusion: the market is shifting from experimentation and novelty toward execution, monetization, and organizational redesign.

At the same time, this week’s coverage makes clear that adoption is not a clean upward curve. Businesses are buying tools faster than employees are learning to use them. Workers are increasingly anxious about what AI means for entry-level and administrative roles. Public distrust remains high even as usage spreads. And the governance questions are getting sharper: disclosure in published content, trust in AI-assisted decisions, vendor stability, and the risks of building workflows on tools whose strategic priorities may change overnight. In other words, AI is not settling down into a simple productivity story. It is becoming a management, workforce, and trust story at the same time.

For SMB executives and managers, the practical takeaway is straightforward: this is a moment to become more selective, not more passive. The strongest opportunities are increasingly visible — coding assistance, customer service automation, research acceleration, domain-specific tools, and workflow agents — but so are the failure modes: poor training, weak governance, unstable vendors, and rushed assumptions about labor savings. The organizations that benefit most will not be the ones chasing every new release. They will be the ones that choose durable use cases, train their people well, and treat AI adoption as an operating model decision rather than a software purchase.

Summaries

OpenAI’s Strategic Retrenchment, Google’s Expanding Multimodal Push, and the Next Phase of AI Competition

AI for Humans podcast transcript — March 27, 2026

TL;DR / Key Takeaway: The clearest signal from this episode is that OpenAI appears to be narrowing its priorities around enterprise adoption, coding, and frontier-model competition, while Google is broadening across voice, audio, interface generation, and consumer utility, reinforcing that the AI market is now being shaped as much by focus, distribution, and product execution as by raw model quality.

Executive Summary

This episode’s strongest business signal is the hosts’ argument that OpenAI is pulling back from experimental or consumer-adjacent bets—most notably Sora/video efforts and “spicy chat”—in order to concentrate on enterprise relevance, coding, and its next frontier model, “Spud.” The important takeaway is not that AI video is disappearing; the transcript itself makes the opposite case. Rather, the implication is that OpenAI may be reallocating scarce focus toward areas where revenue, defensibility, and competitive pressure are highest. That reads less like a collapse of ambition than a strategic retrenchment under pressure, especially as the hosts repeatedly frame Anthropic as shipping faster and competing more aggressively in business use cases.

The second major signal is that Google continues to widen its surface area. In the transcript, the hosts highlight improvements to Gemini 3.1 Flash Live for faster voice-based interaction, a browser-style demo that points toward AI-rendered interfaces, and Lyria 3 Pro for music/audio generation. Their interpretation is especially important for executives: the value is not novelty alone, but reduced interaction friction, faster response times, and the possibility that AI becomes more embedded in everyday workflows through voice, translation, and lightweight interface generation. In other words, the next competitive edge may come from responsiveness and usability, not just benchmark performance.

The remaining items—Mistral’s open-source voice model, Runway’s simplified multi-shot video workflow, Meta’s TRIBE v2 brain-scan work, and the SMASH ping-pong robot—are more uneven in immediate business value. Mistral and Runway matter because they lower barriers: one through open-source voice access, the other through easier video assembly. Meta’s brain-model work is more “watch closely” than “act now”; the hosts themselves frame its long-term implications through a mix of scientific intrigue and concern about how such capabilities could eventually serve ad-driven optimization. The robot demo is best understood as a reminder that robotics progress remains real but uneven, with flashy point demonstrations not yet equivalent to broad commercial readiness.

Relevance for Business

For SMB executives and managers, this episode matters because it reinforces that the AI market is entering a more disciplined phase. Leaders should pay less attention to whether a company launches many flashy features and more attention to which capabilities vendors are willing to double down on, sustain, and integrate into dependable business products. OpenAI’s apparent narrowing suggests that not every AI feature category will remain strategically important to every vendor, which increases vendor-dependence risk for teams building on noncore tools or APIs.

Google’s updates matter for a different reason: they suggest AI is becoming more ambient and more usable. Faster voice interaction, live multimodal agents, translation, and interface rendering all point toward AI being woven into ordinary work rather than reserved for special “AI tasks.” That has implications for workflow design, training, customer interaction, and software purchasing. Businesses may soon evaluate AI tools less on whether they can generate content and more on whether they reduce time, clicks, and cognitive load in real operating environments.

The broader strategic message is that power may continue concentrating with firms that control distribution, data, and compute ecosystems. The hosts explicitly note Google’s YouTube advantage and ByteDance/TikTok’s video data advantage in AI video. For smaller organizations, that means tool selection should account not only for current output quality, but also for which vendors have the infrastructure, data flywheels, and financial staying power to keep improving quickly.

Calls to Action

🔹 Review your vendor exposure if any workflow depends on niche or experimental AI features that could be deprioritized or discontinued.

🔹 Track AI tools that reduce friction, especially voice, translation, and lightweight interface-generation features that may improve everyday productivity faster than headline-grabbing model releases.

🔹 Treat frontier-model announcements cautiously until they translate into measurable gains in coding, workflow speed, reliability, or customer outcomes.

🔹 Monitor open-source voice and simplified video tools as lower-cost options for marketing, training, and content production pilots.

🔹 Keep an eye on neurotech and robotics, but classify them as longer-horizon signals unless a concrete, near-term business use case emerges.

Summary by ReadAboutAI.com

https://www.youtube.com/watch?v=MZyfI0g3Uzg: March 31, 2026

WHEN CLAUDE MET CLAUDE: WHY IS ANTHROPIC SPONSORING AN EXHIBITION ABOUT MONET?

The Atlantic | Matteo Wong | March 25, 2026

TL;DR: Anthropic’s sponsorship of a Monet exhibition at San Francisco’s de Young Museum, complete with AI-powered typewriter kiosks, is examined critically as an example of AI companies purchasing cultural legitimacy rather than earning it. The piece raises an honest question about whether brand-building through art sponsorship changes the ethical calculus of those companies’ underlying practices.

Executive Summary

This is an opinion-adjacent cultural commentary piece, not a business news article. Its primary claim — that Anthropic and other AI companies (OpenAI is also cited, via a Versailles chatbot and a Cannes film project) are deploying arts sponsorships to soften the public perception of disruptive technology — is framed as critique rather than fact. The author’s firsthand account of the typewriter kiosk at the de Young is useful: the experience was shallow, the AI restated exhibit wall text, and the physical props (fake books, Anthropic-branded stationery) read as aesthetic cosplay rather than substantive engagement with art or ideas.

The editorial position is explicit and should be treated as such. The author is making an argument, not reporting a finding. The argument — that sponsoring classical art while training models on millions of books without author consent is a form of reputational laundering — is a legitimate critique worth noting, but it is not a neutral assessment. What the piece does document factually: the Monet exhibition sponsorship occurred; the typewriter installation was real and available to museum visitors; Anthropic declined to answer questions about it; and OpenAI has pursued similar cultural partnerships.

The business signal worth extracting: AI companies are investing meaningfully in brand reputation and public trust — not just through product performance but through cultural positioning. This is a response to real and growing public unease about generative AI’s effects on creative industries, authorship, and human expression. Whether museum sponsorships change that dynamic is debatable; that the companies perceive a reputational problem worth spending on is not.

Relevance for Business

For SMBs, this piece is lower-priority as a direct operational input. Its relevance is contextual: the public trust deficit around AI is real and is being actively managed by the largest players. Organizations that deploy AI in client-facing or creative contexts should be aware that cultural and reputational framing is part of the competitive landscape, not just product features. Brand exposure from AI use — positive and negative — is a real consideration, particularly for businesses in creative services, publishing, education, or professional services where clients have strong feelings about the human vs. AI origin of work product.

Calls to Action

🔹 Monitor how AI companies manage public trust narratives — this will inform the broader cultural environment in which your own AI use is perceived by clients and employees.

🔹 Consider your own AI narrative — if your business uses AI in client-facing work, having a clear and honest position on that use is more defensible (and more durable) than leaving it ambiguous.

🔹 Deprioritize this piece as an immediate operational action item — it is a cultural commentary, not a business development, and its relevance to most SMBs is background context rather than decision input.

Summary by ReadAboutAI.com

https://www.theatlantic.com/technology/2026/03/claude-monet-ai-typewriter/686535/: March 31, 2026

THE INSIDE STORY OF THE GREATEST DEAL GOOGLE EVER MADE: BUYING DEEPMIND

Wall Street Journal | March 25, 2026

TL;DR: An exclusive book excerpt from Sebastian Mallaby’s forthcoming biography of Demis Hassabis reveals that Google’s $650 million acquisition of DeepMind in 2014 was shaped by an early bidding war with Facebook, a principled negotiation over AI safety governance, and Hassabis’s deliberate test of whether Zuckerberg truly understood AI’s singular importance — all of which reads as the origin story of the current AI power structure.

Executive Summary

This is a book excerpt — narrative history, not breaking news — and should be read as such. It provides no new operational intelligence about AI capabilities or business applications, but offers genuine strategic context for understanding how the current AI landscape was shaped by decisions made over a decade ago. The core facts: Google acquired DeepMind in January 2014 for $650 million. Facebook was a competing bidder and was rejected, in part because Hassabis concluded that Zuckerberg did not truly understand AI’s primacy over other technology trends. DeepMind’s co-founders negotiated successfully for an AI safety and ethics oversight board as a condition of the sale — a meaningful early instance of founders demanding governance structures as part of an acquisition, which Google accepted specifically because of its conviction that Hassabis represented the future of its AI strategy.

The framing most useful for business leaders: the AI governance structures now being debated at policy and enterprise levels were already being negotiated in private acquisition rooms in 2013–2014. Safety and oversight were not afterthoughts imposed on AI by regulators — they were built into the founding architecture of one of the field’s most consequential institutions by the people who understood the stakes. The excerpt also illustrates how talent concentration drove competitive dynamics — Facebook’s pivot to recruiting Yann LeCun directly after losing the DeepMind bid created FAIR (Facebook AI Research), which became foundational to Meta’s current AI position.

Editorial note on the source: This is a promotional excerpt from a book publishing March 31. The narrative is compelling and the sourcing (30+ hours of interviews with Hassabis over three years) is credible. It should be read as authorized history — Hassabis’s perspective is central and largely unchallenged within the excerpt.

Relevance for Business

This article’s primary value for SMB leaders is contextual intelligence, not operational guidance. Understanding that the AI companies now dominating enterprise software were shaped by early principled debates about safety, governance, and who should control transformative technology puts current AI governance conversations in perspective. The book itself — “The Infinity Machine” by Sebastian Mallaby, publishing March 31 — is worth noting for executive reading lists. Mallaby wrote “More Money Than God” and “The Man Who Knew” (about Alan Greenspan), both regarded as definitive accounts of their respective topics. His treatment of AI’s origin story is likely to become a reference point.

Calls to Action

🔹 Add to reading list — “The Infinity Machine” (Mallaby, Penguin Press, March 31, 2026) is likely to be a defining narrative account of how the current AI landscape was built. Relevant for any leader who wants strategic context beyond current product coverage.

🔹 Note the governance precedent: DeepMind’s co-founders insisted on an AI safety and ethics oversight board as a condition of the acquisition, which was negotiated in 2013–2014. That instinct is relevant context for how your own organization structures oversight of AI systems today.

🔹 Monitor how the book’s narrative shapes policy and investor conversations about AI governance — origin stories matter for how industries regulate themselves.

🔹 Deprioritize this as an immediate operational action item — it is valuable strategic context, not a decision trigger.

Summary by ReadAboutAI.com

https://www.wsj.com/wsjplus/dashboard/articles/deepmind-google-demis-hassabis-5bd6de54: March 31, 2026

CHINA IS WINNING THE AI TALENT RACE

The Economist | March 25, 2026

TL;DR: China now produces and retains more top AI researchers than the U.S., a structural shift with long-term implications for where AI innovation originates and who controls it.

Executive Summary

Based on original analysis of NeurIPS 2025 — the world’s largest AI research conference — The Economist documents a measurable and accelerating shift in the global AI talent balance. In 2019, roughly 29% of top AI researchers began their careers in China; by 2025, that share had reached 50%. The U.S. share fell from 20% to 12% over the same period. Nine of the top ten undergraduate institutions represented at NeurIPS 2025 were Chinese. Graduates of Tsinghua University alone outnumbered those of MIT four to one.

China is not just producing more AI researchers — it is retaining them. In 2019, about one-third of Chinese-trained researchers stayed in China. By 2025, that figure was 68%. The reversal is driven by both pull (rising university rankings, government recruitment incentives, competitive salaries) and push (U.S. visa uncertainty, funding cuts, and a chilling atmosphere around Chinese-born researchers at American institutions).

The Economist appropriately flags methodological limits — NeurIPS may overrepresent Chinese researchers due to academic incentive structures, and America’s leading AI talent is increasingly concentrated in non-publishing frontier labs. The data is directionally significant, but the full picture is more complex than a single conference ranking suggests. American institutions still retain the majority of Chinese researchers who complete graduate degrees in the U.S., and 87% of Chinese-born NeurIPS authors from 2019 remained in America by 2025. The trend, however, is moving against the U.S.

Relevance for Business

For SMB executives, the direct implication is not about geopolitics — it is about where competitive AI innovation will originate over the next five to ten years. If China’s talent base produces frontier models at lower cost (as DeepSeek suggested in early 2025), U.S.-centric AI vendors may face sustained price and capability competition they have not previously encountered. This affects vendor selection, pricing assumptions, and the durability of current AI tool investments. It also has supply-chain and compliance dimensions for businesses operating in regulated sectors or with government contracts, where AI provenance and vendor origin are increasingly scrutinized.

Calls to Action

🔹 Monitor how the U.S.-China AI talent gap evolves; it is a leading indicator of where AI capability and pricing power will shift over the next decade.

🔹 If you use or evaluate AI tools, begin noting vendor origin and training data provenance — this will become a compliance and procurement consideration in regulated industries.

🔹 Do not treat this as an immediate operational threat, but incorporate geopolitical AI risk into any 3–5 year technology strategy conversations.

🔹 Watch for lower-cost Chinese-origin AI models (following the DeepSeek pattern) entering enterprise software stacks through third-party integrations — often without explicit disclosure.

Summary by ReadAboutAI.com

https://www.economist.com/interactive/science-and-technology/2026/03/25/china-is-winning-the-ai-talent-race: March 31, 2026

China’s New Masterplan for Its Tech Economy in 2030 and Beyond

The Economist | March 24, 2026

TL;DR: China’s 15th Five-Year Plan sets an extraordinarily ambitious technology agenda — including AI dominance, humanoid robots, quantum computing, and brain-computer interfaces — but a track record of missed targets and escalating U.S. countermeasures means the plan is as much a geopolitical signal as a reliable roadmap.

Executive Summary

China’s newly adopted 15th Five-Year Plan mandates commercial deployment of drones, AI-powered robotics, hydrogen energy, and brain-computer interfaces within five years, with “frontier breakthroughs” in fusion power and quantum computing to follow. The plan functions as a state coordination mechanism: sectors named in the plan unlock central and local government funding, attract private capital, and draw the professional infrastructure needed to commercialize technology. In this sense, the plan is both a roadmap and a market-making instrument. The AI component is particularly credible — China’s 2017 declaration of AI ambitions was dismissed by Western experts, then validated by DeepSeek’s January 2025 model release, which matched leading American systems.

However, that credibility does not extend uniformly across the plan. China’s catch-up successes (EVs, solar, AI) all occurred in fields with mature markets and proven technology. The current plan targets frontier domains where commercial viability is genuinely uncertain: is there a market for humanoid robots? Can quantum computers be made practical? China’s planners appear to assume “yes” across every category simultaneously, which risks spreading capital and talent too thin. Additionally, the plan’s ambition will almost certainly trigger a renewed U.S. export-control response, the same dynamic that followed Made in China 2025 and produced semiconductor restrictions.

Relevance for Business

SMB executives should treat this as strategic context, not an operational trigger. The near-term business signal is in AI and robotics, where China’s investment is real and accelerating. For any business with supply chain exposure to Chinese manufacturing, technology sourcing, or competitive markets where Chinese firms participate, the pace of automation and AI-enabled productivity in Chinese industry will increase. For businesses evaluating AI vendors or tools from Chinese-linked firms, geopolitical friction and potential export controls create vendor-dependency and continuity risk. The broader implication: the AI competitive environment is a two-pole race with real resource commitment on both sides, and the pace of capability development will remain high regardless of which plans succeed or fall short.

Calls to Action

🔹 Monitor U.S. government responses to China’s plan, particularly any new technology export controls — these will affect the availability and pricing of semiconductors and AI hardware.

🔹 Assess supply chain exposure to Chinese manufacturing in sectors the plan targets: robotics, EVs, smart logistics — these will see intensifying state-backed competition.

🔹 Treat Chinese AI capability as real and advancing — the DeepSeek episode demonstrated that dismissing Chinese AI as derivative is a strategic error.

🔹 Deprioritize the more speculative elements (fusion, quantum, brain implants) as near-term business factors — these remain genuinely uncertain even with state backing.

🔹 Flag for future review in 12–18 months: whether U.S.-China technology decoupling accelerates in response to this plan, creating vendor landscape shifts for AI and hardware procurement.

Summary by ReadAboutAI.com

https://www.economist.com/finance-and-economics/2026/03/24/chinas-new-masterplan-for-its-tech-economy-in-2030-and-beyond: March 31, 2026

Trump Names Zuckerberg, Ellison, and Huang to Federal AI Advisory Council

The Wall Street Journal | Annie Linskey and Alex Leary | March 25, 2026

TL;DR: The Trump administration has assembled the most commercially powerful AI advisory council in U.S. history — but its composition raises legitimate questions about whose interests will shape federal AI policy.

Executive Summary

President Trump has appointed 13 technology industry leaders — including Meta CEO Mark Zuckerberg, Oracle’s Larry Ellison, Nvidia’s Jensen Huang, Google co-founder Sergey Brin, and Dell’s Michael Dell — to the President’s Council of Advisors on Science and Technology (PCAST). The council, which may expand to 24 members, will be co-chaired by David Sacks (White House AI and crypto czar) and adviser Michael Kratsios. Its stated mandate: advise the administration on AI regulation and emerging technology policy.

The signal here is structural, not ceremonial. This administration is formally embedding the CEOs of the dominant AI infrastructure companies — chip supply (Nvidia), cloud and enterprise software (Oracle), social/AI platforms (Meta), and compute ecosystems (Google/Dell) — directly into the policy-shaping process. That is a meaningful concentration of influence. Several council members or their companies have also made financial contributions to Trump administration initiatives, a conflict-of-interest dynamic the article notes but does not resolve.

Trump’s framing centers on U.S. AI competitiveness and a “light-touch” regulatory posture. What is being claimed is that industry expertise will produce smarter policy. What is not yet demonstrated is how dissenting voices — smaller firms, civil society, academic researchers, labor — will factor into recommendations that could shape AI rules affecting all U.S. businesses.

Relevance for Business

For SMB executives, the practical implication is this: AI regulation in the U.S. is now being shaped primarily by the companies that sell AI infrastructure. That creates a regulatory environment likely to favor permissive deployment rules, reduced compliance friction for large platforms, and limited mandatory safeguards — at least in the near term.

This matters for vendor decisions (the companies on this council will have early read on regulatory direction), competitive positioning (large incumbents with a seat at the table gain structural advantage in shaping rules that apply to everyone), and compliance planning (SMBs should not assume a stable or predictable regulatory framework will emerge quickly — advisory councils can move slowly and their output is non-binding until codified).

The notable corporate willingness to join — a sharp contrast to Trump’s first term — signals that big tech has made a strategic calculation: proximity to this administration is now considered less risky than distance.

Calls to Action

🔹 Monitor, don’t react: This council is advisory; no binding AI rules have changed. Track its formal outputs before adjusting compliance posture or vendor strategy.

🔹 Flag the conflict-of-interest dynamic internally: If your organization is evaluating AI governance frameworks or compliance standards, note that current federal guidance is being shaped by parties with direct commercial interests in the outcome.

🔹 Prioritize vendor diversification in AI procurement: Concentration of policy influence among a small set of AI vendors is a dependency risk. Avoid single-vendor lock-in while the regulatory landscape is still forming.

🔹 Assign someone to track PCAST outputs: When recommendations are published, they will likely signal which regulations are coming — and which aren’t. That’s a strategic planning input, not just a policy curiosity.

🔹 Revisit in 6–12 months: Advisory councils produce recommendations on long timelines. Unless your business is directly engaged in AI product development or federal contracting, this warrants monitoring rather than immediate action.

Summary by ReadAboutAI.com

https://www.wsj.com/wsjplus/dashboard/articles/trump-to-name-mark-zuckerberg-larry-ellison-and-jensen-huang-to-tech-panel-ded1ec6f: March 31, 2026

HOW TO GUESS IF YOUR JOB WILL EXIST IN FIVE YEARS

The Atlantic | Annie Lowrey | March 25, 2026

TL;DR: A well-argued essay uses historical economic precedent — the “Jevons paradox” and the horse-vs.-coal distinction — to push back on both AI alarmism and AI dismissiveness, concluding that AI’s labor market effects will be varied, sector-specific, and shaped as much by regulation and friction as by capability.

Executive Summary

This is an opinion essay, written with intellectual rigor and appropriate caveats, that makes three substantive arguments worth extracting. First, the Jevons paradox (the historical pattern in which efficiency improvements in the use of a resource increase rather than decrease its total consumption) likely applies to AI-enabled labor: making tasks cheaper and faster tends to expand the scope of those tasks, not eliminate them. The radiologist example is apt: predictions made in 2016 that AI would make radiologists obsolete have not materialized, in part because cheaper imaging drove higher volumes of tests, and in part because FDA review processes slow AI deployment in regulated industries.

Second, the horse vs. coal framing is analytically useful. “Horses” are roles where the human is performing a function that AI replicates directly and does better — demand collapses. “Coal” roles are those where AI efficiency drives more demand for the underlying function, and humans remain needed to execute it, even as the work changes. The author’s honest conclusion is that most workers will experience both simultaneously in different parts of their roles, with outcomes varying significantly by industry, regulation, and the specific nature of their work.

Third, and most important for business leaders: the outcome is not predetermined. Government policy (regulation, taxation, labor protection), FDA-style review processes, and the inherent friction of deploying AI in complex real-world environments will shape how fast and how broadly AI displaces or complements human work. Block’s Jack Dorsey firing half his employees because of AI is one data point; software engineering employment growing 6% year-over-year in the same environment is another.

The essay acknowledges its own limitations honestly — the author notes that transitions are rarely smooth, that major economic shifts have historically involved “wrenching mass migration” and prolonged suffering, and that the social and political consequences of rapid job displacement are real even when humans ultimately adapt.

Relevance for Business

For SMB executives, this essay is worth reading in full, particularly if you are navigating difficult internal conversations about AI and jobs. The coal/horse framework is genuinely useful for role-level analysis. For each function in your organization, ask: is AI creating more demand for this type of work (coal), or does AI do the work itself, reducing need for the human (horse)? The answer will differ by role and context, and the honest answer is often “we don’t know yet.” The Jevons paradox reminder is particularly relevant for SMBs that fear AI will eliminate demand for their core services — in many cases, cheaper AI-assisted delivery expands total market demand rather than contracting it.

Calls to Action

🔹 Use the coal/horse framework for a structured internal conversation about AI’s role-by-role impact in your organization. This is more useful than generic “AI will change everything” framing.

🔹 Identify which of your key roles are most likely horse vs. coal — roles involving physical presence, relationship management, regulatory compliance, or complex judgment under uncertainty are more coal-like; roles involving high-volume routine information processing are more horse-like.

🔹 Do not catastrophize or dismiss: the honest answer from credible economists is that AI’s labor effects are real, varied, and not yet settled. Communicate this uncertainty to your team rather than projecting false certainty in either direction.

🔹 Watch for Jevons effects in your own market — if AI makes your core service cheaper or faster, does that expand the number of customers who can access it, or does it simply reduce revenue per engagement? The answer shapes your AI adoption strategy significantly.

🔹 Monitor regulatory friction as a legitimate business factor — the FDA review process is one example of how regulation meaningfully slows AI deployment and creates durable demand for human expertise in regulated domains. Know where your industry’s equivalent friction points are.

Summary by ReadAboutAI.com

https://www.theatlantic.com/ideas/2026/03/ai-job-loss-jevons-paradox/686520/: March 31, 2026

HOW AI IS CREEPING INTO THE NEW YORK TIMES

The Atlantic | Vauhini Vara | March 25, 2026

TL;DR: Undisclosed AI use is appearing in the opinion and personal essay sections of major U.S. newspapers — including The New York Times, Wall Street Journal, and Washington Post — raising a governance and trust problem for any organization that publishes or relies on written content under a named author’s authority.

Executive Summary

This is an investigative piece, not an opinion column, and it surfaces a concrete and documented pattern. Researchers at Stony Brook University, using an AI detection tool called Pangram, scanned thousands of articles across major U.S. publications and flagged likely AI use in the opinion sections of the Times, Journal, and Post — suggesting undisclosed AI assistance is more prevalent than editorial policies acknowledge. A specific case: a “Modern Love” column in the Times was flagged by multiple detection tools as likely containing AI-generated content; the author confirmed using AI tools (ChatGPT, Claude, Copilot, Gemini, Perplexity) as a “collaborative editor,” but denied generating content wholesale.

The governance failure is clear regardless of where one draws the line. All three publications have AI policies requiring disclosure of “substantial use.” None appear to be enforcing them consistently. The Times told the reporter that journalism there “is inherently a human endeavor” — a framing rather than an enforcement mechanism. OpenAI reportedly built a watermarking tool capable of detecting AI output with high accuracy but has not released it, with at least one reported consideration being concern that users would reduce ChatGPT usage if watermarking were deployed.

Limitations of the analysis are real and should not be dismissed. AI detection tools produce false positives, and AI-influenced writing is not binary. The percentage estimates vary significantly across tools. The author and researcher acknowledge these constraints. This is a pattern signal, not a verdict on specific articles.

The second-order concern, explicitly raised in the article, is more significant than stylistic homogenization: AI output has been shown in multiple studies to be measurably more persuasive than human writing in shifting political opinions, and if that output is appearing undisclosed in prestigious publications, the implications for public discourse are not trivial.

Relevance for Business

For SMB leaders, this story matters in two directions. First, any organization that publishes content under named authors — blogs, thought leadership, newsletters, client communications — now faces a version of this governance problem. The reputational exposure from undisclosed AI use in bylined content is real, particularly in professional services, consulting, or any business where trust in the author’s voice is a competitive asset. Second, the detection technology is real and accessible — clients, partners, journalists, or competitors can run your content through AI detection tools. The absence of a clear internal AI authorship policy is a liability, not a neutral position.

Calls to Action

🔹 Prepare an internal AI authorship policy now — define what constitutes “AI-assisted” vs. “AI-generated” for your organization’s published content, and where each requires disclosure. This policy protects you, not just the reader.

🔹 Apply this policy to all bylined content — blog posts, op-eds, LinkedIn articles, client newsletters, and proposals written under a specific person’s name should have clear internal guidelines on permissible AI use.

🔹 Do not assume detection is impossible — Pangram and similar tools are accessible to anyone. Treating AI disclosure as optional because “no one will know” is increasingly a bad bet.

🔹 Monitor how major publications evolve their AI disclosure policies over the next 6–12 months — their policies will likely become the de facto standard that clients and partners begin to expect from professional content broadly.

🔹 Assign governance ownership — someone in your organization should be responsible for AI content standards and know where the lines are. Right now, most SMBs have no one in that role.

Summary by ReadAboutAI.com

https://www.theatlantic.com/culture/2026/03/how-ai-creeping-new-york-times/686528/: March 31, 2026

AI TOKENS: HOW TO NAVIGATE AI’S NEW SPEND DYNAMICS

WSJ / Deloitte CIO Journal (Sponsored Content) | January 14, 2026

Editorial note: This is sponsored content from Deloitte. The framework is analytically sound and practically useful. Readers should note the consulting firm’s inherent interest in positioning AI cost management as a complex, professional-services-worthy problem.

TL;DR: AI is now the fastest-growing line item in corporate technology budgets — driven by token-based pricing that is variable, nonlinear, and poorly tracked — and most organizations lack the cost governance frameworks to manage it effectively.

Executive Summary

The core argument of this piece is financially important and often overlooked: AI costs are fundamentally different from traditional software costs, and most existing budget frameworks are not designed to manage them. Where traditional software costs are tied to subscriptions or licenses (predictable, per-seat), AI costs are denominated in tokens — small units of data processed during each interaction — making them variable, consumption-driven, and difficult to forecast. Cloud computing bills rose 19% in 2025 for many enterprises as AI became central to operations. Yet according to Deloitte’s own survey, only 28% of global finance leaders report clear, measurable value from AI investments — and nearly half expect it will take up to three years to achieve ROI from basic AI automation.

The piece identifies three distinct AI consumption models with different cost structures: buying AI through packaged software (tokens are abstracted into subscription fees, which is predictable but opaque); consuming AI through APIs (tokens are explicit and metered, which is transparent but volatile); and running AI on owned infrastructure (tokens are fully internalized, requiring GPU, storage, networking, and power management, which is most cost-efficient at scale but carries a high upfront commitment). Deloitte modeling suggests that an on-premises “AI factory” can deliver more than 50% cost savings over three years compared to API-based or cloud approaches, but only once token production reaches a sufficient scale threshold. The modeling also notes that roughly 50% of that infrastructure cost is non-GPU (power, networking, facilities).

The practical governance prescription is sound regardless of source: treat AI like energy or capital allocation — apply FinOps disciplines, implement real-time monitoring, set per-unit budgets by team, enforce ROI thresholds before approving AI projects, and rightsize model selection to task complexity.

Relevance for Business

This is a cost management story with immediate relevance for any SMB that is spending meaningfully on AI tools. Most SMBs are in the API consumption tier, paying per query to OpenAI, Anthropic, Google, or similar providers, which means their costs are directly token-driven, even if the invoices don’t show it that way. As usage scales (more employees, more complex prompts, more automated workflows), costs will grow nonlinearly in ways that catch organizations off guard.

The practical SMB implication: AI spend needs a budget owner, usage visibility, and per-project ROI criteria — now, not after the first surprise invoice. The scale required for an on-premises AI factory is irrelevant to most SMBs, but the FinOps principles apply at any size.

Calls to Action

🔹 Assign a budget owner for AI spend: If no one in your organization is accountable for AI cost as a line item — separate from general IT or software budgets — establish that ownership now.

🔹 Audit your current AI consumption: Which tools, which teams, which use cases are generating the most AI cost? Many SMBs don’t have this visibility. Get it.

🔹 Set per-project ROI criteria before approving AI initiatives: Establish a simple threshold — “this project must reduce X hours of work by Y% to justify Z in AI spend” — and apply it consistently.

🔹 Right-size model selection: Using the most powerful (most expensive) AI model for every task is a common and costly error. Simpler tasks should use simpler, cheaper models.

🔹 Implement basic spend monitoring: Most API providers offer usage dashboards and budget alerts. Turn them on. This is an easy governance step that most small organizations have not yet taken.
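
The right-sizing and ROI-threshold steps above can be sketched as a back-of-the-envelope calculation. This is a minimal illustration in Python, not a pricing tool: the per-token rates, the workload, the hourly cost, and the 3x ROI ratio are all hypothetical assumptions, and real provider rates vary and change often.

```python
from dataclasses import dataclass


@dataclass
class ModelPrice:
    """Price per one million tokens, in dollars (hypothetical rates)."""
    input_per_million: float
    output_per_million: float


# Hypothetical price list; check your provider's current rates.
PRICES = {
    "large": ModelPrice(input_per_million=10.00, output_per_million=30.00),
    "small": ModelPrice(input_per_million=0.50, output_per_million=1.50),
}


def monthly_cost(model: str, requests: int, input_tokens: int, output_tokens: int) -> float:
    """Estimated monthly spend for a workload with fixed tokens per request."""
    price = PRICES[model]
    per_request = (
        input_tokens * price.input_per_million
        + output_tokens * price.output_per_million
    ) / 1_000_000
    return requests * per_request


def meets_roi_threshold(hours_saved: float, hourly_cost: float,
                        ai_spend: float, min_ratio: float = 3.0) -> bool:
    """True if the value of hours saved is at least min_ratio times the AI spend."""
    return hours_saved * hourly_cost >= min_ratio * ai_spend


# Hypothetical workload: 20,000 requests a month, 1,500 tokens in, 500 out.
for name in PRICES:
    cost = monthly_cost(name, requests=20_000, input_tokens=1_500, output_tokens=500)
    ok = meets_roi_threshold(hours_saved=40, hourly_cost=35.0, ai_spend=cost)
    print(f"{name}: ${cost:,.2f}/month, meets ROI threshold: {ok}")
```

Under these assumed numbers the same workload costs about $600 a month on the larger model and about $30 on the smaller one, so only the smaller model clears the threshold. The point is the shape of the comparison, not the specific figures.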

Summary by ReadAboutAI.com

https://deloitte.wsj.com/cio/ai-tokens-how-to-navigate-ais-new-spend-dynamics-373e1642: March 31, 2026

Disney Exits OpenAI Deal After AI Giant Shutters Sora Video App

The Hollywood Reporter | Alex Weprin | March 24, 2026

TL;DR: OpenAI is shutting down the standalone Sora AI video app just months after launch, triggering Disney’s exit from a $1 billion investment deal — a clear signal that AI product strategy remains highly unstable and that enterprise partnerships built on specific AI features carry real discontinuity risk.

Executive Summary

OpenAI is closing its Sora video generation app, which launched only last fall. The shutdown is not a retreat from AI video entirely — OpenAI says video capabilities will continue within the broader ChatGPT platform — but the standalone product is being discontinued. The immediate consequence: Disney is exiting the $1 billion investment deal it signed with OpenAI in December, which included an agreement to license Disney characters for use in Sora. Disney’s stated intent had been to integrate the technology into Disney+.

The business risk here is vendor dependency on immature products. Sora launched with significant IP controversy — OpenAI had to walk back its initial approach to celebrity likenesses and studio IP within days of launch. The product never stabilized commercially. Its closure mid-lifecycle means partners, users, and API customers who built on top of it now face transition costs. The Sora episode is a compressed version of a pattern that will recur: AI capabilities get announced and partnered around before they are proven, then pivoted or discontinued as strategy shifts.

The competitive beneficiary named in the article is Google, which now has the only scaled AI video generation platform — though it has not yet resolved its own IP licensing issues and is facing related lawsuits.

Relevance for Business

The core lesson for SMB executives is vendor and feature risk. Any AI tool that is new, standalone, or in active development carries discontinuity exposure. This applies not just to video generation, but to any AI feature or platform where a vendor’s strategic priorities may shift. Building workflows or partnerships that depend on a specific AI product’s continued existence is high-risk in the current environment. The Disney situation — a $1 billion deal unwound in months — illustrates that this risk exists even at scale. For smaller businesses, the same dynamic plays out in software subscriptions, API integrations, and vendor relationships that evaporate when a product is sunsetted.

Calls to Action

🔹 Audit current AI tool dependencies — identify any workflows, integrations, or vendor agreements that depend on a specific feature or product that could be discontinued or folded into a larger platform.

🔹 Build portability into AI integrations — where possible, avoid deep vendor lock-in for features that are new, standalone, or described as “beta” or “evolving.”

🔹 Monitor AI video generation as a category — it remains commercially viable and strategically important, but the vendor landscape is unstable. Google’s Veo and others will fill the Sora gap; evaluate alternatives in 6–12 months when the dust settles.

🔹 Apply IP and legal review before any partnership that involves licensing your brand, characters, or content to an AI platform — the Sora IP controversy should inform the contract terms you demand.

🔹 Treat AI partnerships like early-stage vendor relationships — with shorter commitment horizons, exit provisions, and contingency plans.

Summary by ReadAboutAI.com

https://www.hollywoodreporter.com/business/digital/openai-shutting-down-sora-ai-video-app-1236546187/: March 31, 2026

ChatGPT, Claude, and Gemini Entered the WSJ Bracket Pool. One Might Actually Win.

Wall Street Journal | March 24, 2026

TL;DR: Three leading AI models outperformed most human participants in a March Madness bracket pool by applying disciplined probabilistic strategy — a useful, accessible illustration of where AI genuinely adds value in competitive decision-making under uncertainty.

Executive Summary

The Wall Street Journal secretly entered Claude (Anthropic), ChatGPT (OpenAI), and Gemini (Google) into its 124-person office March Madness pool, providing each with the same statistical data, scoring rules, and permission to search the web. The assignment was explicit: not to predict the most likely outcome, but to find the optimal strategy for winning a pool — a subtly different problem that rewards contrarian picks in low-ownership outcomes.

After the first weekend, all three AI models are alive with intact Final Fours. Claude holds the best win probability of the three (6th out of 124 brackets), having made the contrarian pick of No. 3 seed Illinois as champion — chosen by only 3.2% of pool participants. Gemini and ChatGPT played it safer. Claude’s edge-seeking logic — explicitly framed by the model itself as analogous to portfolio construction — correctly identified two upset wins and avoided the only No. 1 seed to lose. All three models outperformed the pool average after the first weekend.

This is an entertainment story, but it has a real signal embedded in it: AI models can process scoring incentive structures, public pick distributions, and real-time news (player arrests, injuries) and translate them into a coherent, reasoned strategy faster and more consistently than most humans. There was also a notable failure mode early on: all three models initially produced structurally invalid brackets, misreading the bracket format entirely. The error had to be corrected before any useful analysis could begin — a reminder that AI outputs require human validation even in well-defined tasks.

Relevance for Business

The practical signal here is not about sports. It’s about AI as a decision-support tool in competitive, probabilistic environments: market analysis, bid strategy, pricing decisions, competitive positioning. The models demonstrated the ability to synthesize multiple data inputs, account for what competitors are likely to do, and identify contrarian opportunities with asymmetric upside. That logic applies directly to business strategy.

The failure mode — initial structural errors in reading the bracket — is equally instructive. AI tools perform best when the problem is clearly defined and the output can be verified. When the setup is wrong, the output is confidently wrong. Human oversight at the framing stage remains essential.
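
The difference between picking the most likely champion and picking to win the pool can be sketched with one simplifying assumption: if your champion wins, the prize is split evenly among everyone who made that pick. The Python sketch below uses the 124-person pool size and the 3.2% ownership figure from the article. The win probabilities and the favorite's 30% ownership are hypothetical, and this is not the method the models themselves used.

```python
def expected_pool_share(win_prob: float, ownership: float, pool_size: int) -> float:
    """Rough expected share of the prize from a champion pick.

    Simplifying assumption: if the pick wins the title, the prize is split
    evenly among everyone who made that pick; otherwise the share is zero.
    """
    rivals_with_same_pick = ownership * (pool_size - 1)
    return win_prob / (1 + rivals_with_same_pick)


POOL_SIZE = 124  # from the article

# Win probabilities and the favorite's ownership are hypothetical;
# the 3.2% ownership figure is the one reported for the Illinois pick.
picks = {
    "popular favorite": {"win_prob": 0.20, "ownership": 0.30},
    "contrarian pick": {"win_prob": 0.06, "ownership": 0.032},
}

for name, p in picks.items():
    share = expected_pool_share(p["win_prob"], p["ownership"], POOL_SIZE)
    print(f"{name}: expected share {share:.4f}")
```

Under these assumptions the less likely champion is the better pool pick, because far fewer rivals share the payoff when it hits. The same logic applies to bids or pricing where the reward depends on what competitors choose.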

Calls to Action

🔹 Take note of the decision-framing capability: Consider whether AI tools are being used in your business not just to answer questions, but to optimize strategy given a specific scoring environment (e.g., bid margins, pricing tiers, competitive proposals).

🔹 Test AI on a low-stakes competitive problem in your business domain to build intuition about where it adds value and where it fails.

🔹 Do not skip human validation of AI-structured outputs. The bracket error is a real-world reminder: AI can misread the structure of a problem and produce confident, detailed, completely wrong results.

🔹 Monitor AI benchmarking coverage as a useful proxy for relative model capability — especially as Claude, ChatGPT, and Gemini continue to diverge in strategy and performance across different task types.

🔹 Deprioritize as a standalone story — treat this as illustrative context, not a data point requiring action.

Summary by ReadAboutAI.com

https://www.wsj.com/sports/basketball/ncaa-bracket-ai-chatgpt-claude-gemini-82d997f1: March 31, 2026

QUANTUM READINESS: THE CASE FOR FUTURE-PROOFING AI INFRASTRUCTURE

WSJ / Deloitte CIO Journal (Sponsored Content) | March 19, 2026

Editorial note: This is sponsored content from Deloitte. The quantum computing timeline projections are sourced from Deloitte’s own consultants and should be treated as informed estimates, not independently verified forecasts. The article is inherently designed to expand the scope of infrastructure consulting conversations.

TL;DR: Deloitte’s quantum computing advisers argue that commercially relevant quantum use cases are 24–36 months away, and organizations building AI infrastructure today should begin thinking about how quantum processing units (QPUs) will eventually need to integrate with their existing GPU architectures — before, not after, they make major infrastructure investments.

Executive Summary

The article makes a forward-looking argument: organizations currently scaling up AI infrastructure around GPUs risk building systems that will require costly re-engineering when quantum computing becomes commercially relevant. Deloitte projects the first commercial quantum use cases within 24–36 months, with early applications in molecular design, financial risk modeling, and options pricing — problems where quantum systems can outperform classical supercomputers on specific calculation types.

The technical framing is useful: quantum computers are not substitutes for GPUs or CPUs — they solve fundamentally different types of problems using different mathematics. The most likely enterprise architecture, per this article, is heterogeneous: CPUs + GPUs + QPUs working together, with quantum computers handling specific, computationally intensive sub-problems (such as exact molecular calculations), feeding results back into classical machine learning systems. This hybrid model is already being explored in state-of-the-art research environments.

The practical readiness case is two-part: first, infrastructure decisions made today (AI data center builds, GPU cluster designs, networking architectures) should account for eventual quantum integration rather than require full replacement; second, developing internal quantum literacy takes years, so organizations that wait until quantum is commercially deployed before building skills will be behind. The article advocates for “quantum-inspired computing” on GPUs as a transitional step: applying GPU-scale approaches to complex optimization problems in a way that builds capability and architecture patterns useful for eventual QPU integration.

What the article does not say clearly: for the vast majority of enterprises — and virtually all SMBs — quantum computing is not a near-term operational concern. The 24–36 month timeline for “first commercially relevant use cases” refers to specialized applications in science-intensive industries, not general enterprise workloads. The readiness argument applies most directly to organizations making major, long-horizon infrastructure investments today.

Relevance for Business

For most SMBs, this article is a horizon-monitoring item, not an action item. Quantum computing’s near-term commercial relevance is concentrated in financial services, pharmaceuticals, materials science, and advanced logistics — industries with specific optimization problems that classical computers handle poorly at scale. If your business is not in one of those sectors and is not building proprietary AI infrastructure, the practical relevance is low today.

The exception: if you are making multi-year technology infrastructure commitments in 2026 — AI infrastructure investments, data center decisions, long-term cloud architecture choices — the argument to consider future hybrid architecture flexibility at the design stage is reasonable and costs little to incorporate as a factor.

Calls to Action

🔹 Monitor, don’t act: For most SMBs, quantum computing is a 3–5+ year horizon item. Put it on your annual technology radar review, not your 2026 roadmap.

🔹 Exception: if you are in financial services, pharma, or advanced manufacturing and making major infrastructure decisions in 2026, add quantum integration flexibility as a design criterion — not a full roadmap, but a stated consideration.

🔹 Assign a designated technology horizon watcher: Quantum is one of several emerging technologies (alongside neuromorphic computing, advanced robotics, and next-generation networking) that warrant monitoring without immediate investment. Someone in your organization should own that tracking function.

🔹 Calibrate the timeline claims: The 24–36 month estimate for first commercial quantum use cases comes from Deloitte’s own consultants and should be read as motivated optimism. Independent analysts place general enterprise quantum relevance further out.

🔹 Deprioritize for most SMBs unless sector-specific: The ROI case for quantum readiness planning today is real for science-intensive large enterprises. For most SMBs, appropriate awareness is sufficient.

Summary by ReadAboutAI.com

https://deloitte.wsj.com/cio/quantum-readiness-the-case-forfuture-proofingai-infrastructure-cb1525c1: March 31, 2026

America’s CFOs Say AI Is Coming for Admin Jobs

Wall Street Journal | March 24, 2026

TL;DR: A rigorous study of ~750 CFOs finds that AI is expected to primarily eliminate clerical and administrative roles, not yet knowledge work, but the displacement of “stepping stone” jobs raises a real workforce planning issue for managers today.

Executive Summary

A working paper published through the National Bureau of Economic Research, based on a survey of approximately 750 CFOs across finance, tech, manufacturing, and professional services, delivers the most grounded near-term picture yet of AI’s workforce impact. The findings: AI had essentially no measurable employment effect in 2025, and most CFOs expect only a modest headcount reduction of roughly 0.4% in 2026 relative to what it otherwise would have been. This is not a wave — it is a measured shift.

The more significant finding is directional: CFOs were twice as likely to say AI would reduce jobs in clerical and administrative roles (bookkeeping, customer service, data entry) as they were to say it would enhance them. For technical, skilled roles, the inverse is true: AI is more likely to complement than replace. This mirrors the pattern economists call “skill-biased technological change,” most recently seen when personal computers hollowed out routine office work in the 1980s and 1990s. Those jobs didn’t disappear overnight, but their share of employment shrank steadily.

A notable split by company size: larger firms (500+ employees) are actively cutting routine roles while keeping technical headcount flat, using AI to extract efficiency. Smaller companies are doing the opposite — keeping routine staff while adding technical workers to pursue growth. The study has one notable limitation: it only surveys established companies, missing the job creation that often comes from AI-native startups.

Relevance for Business

This study matters to SMB leaders on two levels. First, workforce planning: the window to reskill or redeploy employees in clerical functions is open now but narrowing. Waiting until roles are clearly redundant creates harder transitions. Second, hiring: roles that were once reliable entry points into organizations — administrative assistants, data clerks, customer service reps — are under structural pressure. Companies need to decide deliberately whether to fill those roles as they turn over, or redesign the workflow.

The size-based divergence is particularly relevant: SMBs that treat AI as a growth accelerator rather than a cost-cutter are in a stronger strategic position, both for talent retention and competitive differentiation. The risk of following large-enterprise playbooks — pure efficiency-through-reduction — is that it hollows out organizational capability and morale without building new capacity.

Calls to Action

🔹 Act now on workforce mapping: Audit which roles in your organization are primarily clerical or routine-cognitive. These are the first to be structurally pressured, and planning now costs less than reactive reductions later.

🔹 Reframe AI deployment as capability-building, not just cost reduction — particularly relevant for SMBs competing against larger, leaner enterprises.

🔹 Review hiring decisions for admin/clerical backfills: Before replacing a departing admin or customer service employee, evaluate whether AI tools can redistribute the workflow.

🔹 Prepare manager-level guidance on how to discuss AI and job security with teams. Employee anxiety is real and affects productivity.

🔹 Monitor: Track the next quarterly edition of the Duke/Atlanta Fed/Richmond Fed CFO survey for updated employment expectations as 2026 progresses.

Summary by ReadAboutAI.com

https://www.wsj.com/tech/ai/ai-admin-job-market-6a1c3436: March 31, 2026

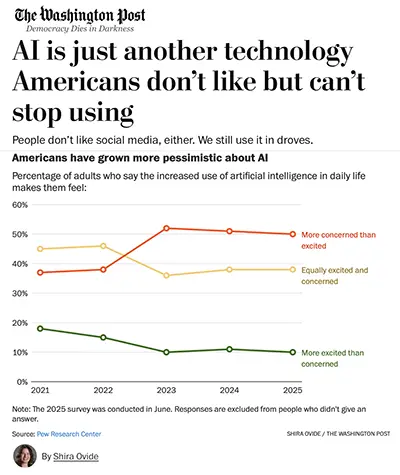

AI Is Just Another Technology Americans Don’t Like But Can’t Stop Using

The Washington Post | March 26, 2026

TL;DR: Public disapproval of AI is real and rising, but history suggests it won’t stop adoption — though it may create governance friction and erode employee and customer trust in ways leaders should actively manage.

Executive Summary

This Washington Post opinion-analysis piece, published March 26, 2026, makes a structural argument: American public distrust of AI is growing but unlikely to meaningfully slow deployment, based on the parallel with social media. Pew Research data shows a majority of Americans are now more concerned than excited about AI — a sentiment that has worsened since 2021 — and AI currently ranks below the Democratic Party and Iran in NBC News favorability polling. Yet the author’s central claim is that attitude and behavior diverge: people said they didn’t like social media either, and kept using it in dramatically increasing numbers.

The article is honest about the limits of that analogy. One expert cited — an MIT political science professor — flags a critical difference: AI distrust may suppress usage in a way social media distrust did not. Social media can be used passively without trusting its content. AI tools require users to trust the outputs enough to act on them. If employees or customers don’t trust AI-generated recommendations, summaries, or decisions, the productivity gains don’t materialize. This is a practical risk for SMBs deploying AI in customer-facing or decision-support roles.

On the regulatory side, the article notes emerging political pressure: Senator Bernie Sanders has introduced legislation to halt new data center construction until AI regulation is enacted. Governor Ron DeSantis has publicly challenged the logic of subsidizing AI companies whose own leaders predict massive job displacement. These are early-stage signals, not enacted policy — but they indicate that the political environment around AI infrastructure is shifting, which could affect compute availability and cost over a 2–4 year horizon. The article does not independently verify claims made by industry lobbying groups (such as the AI Infrastructure Coalition’s assertion that AI approval grows with usage), and leaders should treat those as advocacy positions, not findings.

Relevance for Business

The relevance here is not technical — it’s organizational and reputational. Two risks deserve attention:

Internal adoption risk. If the majority of Americans distrust AI, a portion of your workforce does too. Deploying AI tools without addressing that distrust (through transparency about how they work, clear policies on what decisions AI does and doesn’t make, and explicit human oversight structures) will generate friction, resistance, and potential errors as employees either avoid the tools or over-trust outputs they shouldn’t.

Customer-facing exposure. Using AI in customer service, communications, or decision-making without disclosure creates reputation and trust risk that the social media analogy underscores. Meta’s usage kept growing even as its reputation collapsed — but legal liability, employee attrition, and brand erosion followed over time. The better outcome is proactive governance, not reactive damage control.

For the near term: public backlash is unlikely to produce restrictive U.S. federal regulation before 2027 at the earliest. But it is already affecting how employees, customers, and local officials respond to AI deployment — and those relationships matter for SMBs in ways they may not for large platforms that can absorb reputational friction.

Calls to Action

🔹 Treat the social media parallel as a caution, not a reassurance. History shows technology can scale despite public disapproval, but also that deferred governance creates compounding liability. The SMBs that build ethical AI frameworks now will be better positioned as regulation tightens.

🔹 Before deploying customer-facing AI, develop a clear, plain-language disclosure policy. State what AI is doing, where human review applies, and how errors are corrected. This protects trust and reduces legal exposure.

🔹 Address internal AI skepticism directly. Survey employees on AI comfort levels, communicate governance guardrails, and avoid framing AI as a labor replacement in internal communications, even if that’s a real long-term consideration.

🔹 Monitor federal and state AI regulation developments, particularly around data centers, labor protections, and sector-specific rules (healthcare, finance). Assign someone in your organization to track this quarterly; the political momentum is building.

🔹 Do not assume employee adoption equals employee trust. Usage metrics alone will not tell you whether your team trusts AI outputs enough to act on them. Build feedback mechanisms into any AI workflow deployment.

Summary by ReadAboutAI.com

https://www.washingtonpost.com/technology/2026/03/26/americans-dont-trust-ai-will-probably-keep-using-it-anyway/: March 31, 2026

THE MOST INNOVATIVE COMPANIES IN APPLIED AI FOR 2026

Fast Company | Mark Sullivan and Victor Dey | March 24, 2026

TL;DR: The applied AI landscape has decisively shifted from text generation to autonomous agents — the 20 companies on this list reveal where real revenue is being generated today, which business functions are being restructured first, and which infrastructure problems are now commercially addressable.

Executive Summary

Fast Company’s 2026 applied AI list is less a celebration of novelty than a useful map of where AI is generating measurable business outcomes right now. The dominant pattern across the 20 companies: AI is moving from assistive tools to autonomous agents that take action, complete multi-step tasks, and operate across systems with minimal human initiation. The two functions furthest along in commercial deployment are software development and customer service, both now supported by a competitive ecosystem of mature, revenue-generating products.

The numbers behind several entries are notable for their scale and speed. Cursor surpassed $2 billion in annualized revenue and is used by 64% of the Fortune 500. Lovable reached $200 million ARR and 500,000 paid users, enabling non-technical users to build and deploy full applications in natural language. Sierra (AI customer service agents) reached $150 million ARR and a $10 billion valuation eight quarters after launch. ServiceNow extended AI agents across HR, finance, IT, and operations — not just support desks — with 1,000+ enterprise deployments. Gong’s AI sales agents report a 35% increase in win rates for teams using its deal-signal trackers.

Two entries address infrastructure problems that SMBs will encounter as they scale AI: Credo AI offers governance tooling — model trust scores across 95 use cases and an agent registry for real-time oversight — directly relevant to any organization deploying agents at scale. Temporal addresses agent reliability — the problem of multi-step workflows failing mid-execution when APIs time out or state is lost. Its inclusion in OpenAI’s Agents SDK is a credibility signal. Sardine’s agentic financial crime platform is the most concrete example of AI being used to fight AI-enabled fraud — a real and growing threat.

On the “vibe coding” / no-code development front: Lovable, Bolt.new, and Cursor together represent a compressed window in which non-technical leaders can prototype and deploy software without engineering teams. This is not future-state — these are products with millions of users today.

Relevance for Business

This list functions as a sector-by-sector diagnostic for SMB leaders. The relevant question is not “which of these companies is impressive?” but “which of these categories touches a function I own, and how far along is commercial adoption?” Customer service automation (Sierra, Nice/Cognigy), sales intelligence (Gong), document intelligence (Adobe), financial crime detection (Sardine), and software prototyping (Lovable, Bolt) are all past early-adopter stage. Governance tooling (Credo AI) and agent reliability infrastructure (Temporal) are early-stage but strategically important — organizations that deploy agents without governance frameworks are accumulating invisible risk.

The cost pressure implication is direct: tools like Lovable and Cursor are already enabling large enterprises to deliver software with smaller teams. SMBs that compete for technical talent, or that depend on outsourced development, face a changed cost structure for custom software.

Calls to Action

🔹 Identify your highest-friction business function (customer service, sales, software development, or document management) and assess whether a commercially mature AI agent solution exists in that category. Several categories on this list already have one.

🔹 Test a no-code or low-code AI development tool (Lovable, Bolt) on one internal prototype. If your business has needed a custom internal tool but lacked development resources, the barrier is now materially lower.

🔹 Do not skip governance — if you are deploying more than one or two AI tools, evaluate Credo AI or comparable agent registry/oversight solutions. The volume of AI tools in enterprises is growing faster than the ability to track what they are doing.

🔹 Audit your fraud prevention approach. AI-enabled financial fraud is real and accelerating. Sardine’s approach (behavioral biometrics, device intelligence, consortium risk signals) is built for a threat model that legacy fraud detection was not designed for.

🔹 Monitor agent reliability as a category — the failure mode of multi-step AI workflows (lost state, API timeouts, incomplete tasks) is not a theoretical risk. Temporal’s rapid growth reflects real enterprise pain. Before deploying agentic workflows in production, understand what happens when they fail mid-task.
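
To make the last point concrete, the sketch below shows one generic way a multi-step workflow can survive a mid-task failure: save progress after each step, retry on timeouts, and resume from the saved checkpoint on the next run. It is a minimal illustration in plain Python, not Temporal’s API or any vendor’s implementation; the function name run_workflow, the JSON checkpoint file, and the step names are all assumptions made for this example.

```python
import json
import os
import time


def run_workflow(steps, checkpoint_path, max_retries=3, base_delay=0.0):
    """Run named steps in order, saving progress to disk after each one.

    steps: list of (name, callable) pairs. Each callable receives the dict of
    results so far and returns a JSON-serializable value.
    (Hypothetical helper for illustration only.)
    """
    # Resume from an earlier run if a checkpoint file already exists.
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path) as f:
            state = json.load(f)
    else:
        state = {"done": [], "results": {}}

    for name, step in steps:
        if name in state["done"]:
            continue  # finished in an earlier run; do not repeat it
        for attempt in range(1, max_retries + 1):
            try:
                state["results"][name] = step(state["results"])
                break
            except TimeoutError:
                if attempt == max_retries:
                    raise  # give up; earlier steps remain saved on disk
                # Exponential backoff between retries.
                time.sleep(base_delay * 2 ** (attempt - 1))
        state["done"].append(name)
        # Persist progress so a crash after this point loses nothing.
        with open(checkpoint_path, "w") as f:
            json.dump(state, f)
    return state["results"]
```

If a step keeps timing out, the run stops with an error, but the checkpoint file still records the steps that finished, so a later run picks up where the first one left off instead of repeating completed work.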

Summary by ReadAboutAI.com

https://www.fastcompany.com/91495408/applied-ai-most-innovative-companies-2026: March 31, 2026

The Most Innovative AI Companies of 2026

Fast Company | March 24, 2026

TL;DR: AI capability is accelerating — not plateauing — and the companies shaping what tools you’ll use next year are already pulling ahead.

EXECUTIVE SUMMARY

Fast Company’s annual ranking surfaces a clear pattern: AI investment is still accelerating, not consolidating. Tech companies poured hundreds of billions into data center infrastructure over the past year, and the feared ‘scaling wall’ — the worry that making models larger would stop producing smarter results — has not materialized. Instead, model release cadence has sped up, partly because AI coding tools are now writing the code used to build the next generation of models. That feedback loop is compressing development timelines in ways that weren’t anticipated even 18 months ago.

Google’s Gemini 3 is the headline development. Released by Google DeepMind in November 2025, these multimodal models were built from the ground up to process text, images, video, audio, and code — not retrofitted to do so. Gemini 3 now powers Google Search AI Overviews (reportedly reaching 2 billion monthly users), the Gemini chatbot (750 million monthly active users), and Google’s enterprise platform Gemini Enterprise, which has grown to 8 million paid seats. The competitive signal: Google has moved from being a fast follower to a pace-setter, and that shifts pressure onto every other vendor in the enterprise AI stack.

Anthropic’s Claude Code is equally significant from an SMB operations perspective. Originally an internal tool, it was released commercially in May 2025 and reached a $1 billion annual revenue run rate within six months. Anthropic reports that 70–90% of its own new code is now written by Claude Code — a figure that, if it holds for enterprise customers, has direct implications for software team sizing, hiring, and procurement. Customers include Netflix, Spotify, Salesforce, and KPMG. Vendors building on top of Anthropic’s models should note that Anthropic is not expected to be profitable until 2028, creating continued dependency on investor funding and potential pricing volatility.

Beyond the platform leaders, the list highlights meaningful specialization. Abridge (clinical documentation) reports that 250 of the largest U.S. health systems will use it in 2026, with clinicians spending 60% less time on after-hours charting. Darktrace has automated 90 million cybersecurity investigations, narrowing them to under 500,000 critical incidents — a signal that AI-assisted security triage is no longer experimental. Cohere is building enterprise-grade ‘sovereign AI’ — models that can be hosted within a company’s own security perimeter — which matters acutely for regulated industries. And infrastructure-level players like Cerebras and Mithril are attacking the chronic undersupply of compute and its cost structure, which remains the primary constraint on how fast organizations can actually deploy AI at scale.

RELEVANCE FOR BUSINESS

For SMB leaders, this list is less a celebration and more a competitive clock. The companies on it are building the tools your larger competitors will use in the next 12–24 months. Three signals stand out:

Vendor concentration risk is real. Google, Microsoft, and Anthropic are widening their infrastructure moats. If your current or planned AI tools run on their models or clouds, your pricing, reliability, and feature roadmap are increasingly subject to their strategic priorities, not yours. Cohere’s ‘sovereign AI’ positioning exists precisely because enterprises that need data governance control want to limit that dependency.

AI coding tools are changing software economics now, not eventually. Claude Code reaching $1B ARR in six months is not a venture story — it’s a signal that developers are already using AI to write production code at scale. For any SMB that relies on software development — internally or through vendors — this shifts expectations around speed, cost, and team composition.

Specialization is the next phase of enterprise AI adoption. General-purpose chatbots are giving way to domain-specific tools with measurable outcomes: a 60% reduction in after-hours charting time (Abridge), 97% error-detection accuracy (Abridge, vs. 82% for off-the-shelf GPT-4o), and a 50% reduction in legal document review time (GC AI). Leaders should expect AI vendor selection to become more like selecting a qualified domain specialist and less like choosing a productivity platform.

CALLS TO ACTION

🔹 Do not wait for AI to feel ‘settled.’ The companies on this list are creating the defaults. SMBs that defer adoption decisions are not avoiding risk — they are deferring it while the landscape consolidates around them.

🔹 Audit your current AI vendor stack for concentration risk. If more than two of your tools run on models from the same foundation-model provider (e.g., OpenAI or Anthropic), map the downstream exposure if pricing or availability changes.

🔹 Assess your software development workflows now. If you have an in-house dev team or a software vendor, ask how they are using AI coding tools and what productivity benchmarks have changed. The gap between teams using and not using these tools is widening.

🔹 If you operate in healthcare, legal, or finance, evaluate domain-specific AI vendors (Abridge, GC AI, OpenEvidence) over general-purpose tools for compliance-sensitive workflows. Domain-specific accuracy and audit trails matter more than cost at this stage.

🔹 Monitor the Google vs. OpenAI vs. Anthropic model race. Gemini 3’s rise introduces real competitive pressure at the model layer. Your AI tool vendors’ performance may improve or degrade as they adapt — watch for product updates tied to model migrations.
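
The vendor-stack audit in the second call to action can start as a simple inventory. The sketch below, in Python, groups tools by the foundation-model provider behind them and flags any provider that more than two tools depend on. The function name, the tool names, and the inventory itself are hypothetical placeholders; substitute your own stack.

```python
from collections import defaultdict


def concentration_report(tool_to_provider, threshold=2):
    """Return the providers that more than `threshold` tools depend on."""
    by_provider = defaultdict(list)
    for tool, provider in tool_to_provider.items():
        by_provider[provider].append(tool)
    return {
        provider: sorted(tools)
        for provider, tools in by_provider.items()
        if len(tools) > threshold
    }


# Hypothetical inventory: tool names are illustrative placeholders.
inventory = {
    "support-chatbot": "OpenAI",
    "meeting-notes": "OpenAI",
    "sales-email-drafts": "OpenAI",
    "code-assistant": "Anthropic",
    "contract-review": "Anthropic",
}

flagged = concentration_report(inventory)
for provider, tools in flagged.items():
    print(f"{provider}: {len(tools)} tools exposed -> {', '.join(tools)}")
```

Each flagged provider is a place to ask what happens to those tools if pricing, terms, or availability change, and whether an alternative exists for the most critical one.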

Summary by ReadAboutAI.com

https://www.fastcompany.com/91495412/artificial-intelligence-most-innovative-companies-2026: March 31, 2026

This Single ChatGPT Prompt Can Do Hours of Market Research in Minutes

Fast Company / Inc. | Ben Sherry | March 23, 2026

TL;DR: ChatGPT’s upgraded Deep Research feature can produce a credible, cited market analysis report in under 30 minutes — a genuine productivity gain for early-stage research — but the output requires expert review to separate usable insight from filler and to account for data the tool could not access.

Executive Summary

OpenAI recently upgraded its Deep Research feature to run on GPT-5.2 (previously on o3) and added the ability to prioritize specific websites during research. The feature directs an AI agent to autonomously search the web, compile findings, and produce a structured, cited report. In a documented test, a prompt for a niche local business idea produced a ~4,000-word report in 21 minutes, including competitor identification, customer persona development, market sizing, and a strategic pivot recommendation — findings a marketing professor described as genuinely impressive.

The limitations are material and worth naming explicitly. The AI-produced report contained unnecessary complexity and bulk that required editorial trimming. Many websites block AI agents from scraping their content, meaning the tool’s research has blind spots it cannot always self-disclose; users must specifically prompt it to flag inaccessible sources. The tool does not surface adoption frequency data or repeat purchase projections without explicit prompting. And the quality of the output is directly dependent on the quality of the input prompt: a poorly structured prompt produces a poorly structured report.

This is a demonstrated capability, not just a vendor claim. The use case is well-matched: early-stage research, competitive landscape surveys, idea validation. It is not a substitute for primary research, proprietary data, or expert judgment.

Relevance for Business

For SMBs, this is a high-value, low-cost tool for work that previously required either significant staff time or expensive outside research. A 21-minute, $20-tier output that surfaces local competitors, market sizing, and customer personas is meaningful for small teams evaluating new markets, products, or pivots. The key execution risk is treating the output as finished work — it requires critical review, especially where data sources were inaccessible. Leaders should also note that this tool is available to competitors at the same price point, which raises the bar on research quality across the board.

Calls to Action

🔹 Act now — test Deep Research on one live business question your team is currently working on. Use it as a first-pass research layer, not a final deliverable.

🔹 Build prompt discipline — invest 15 minutes in crafting a detailed prompt before running the agent. Use ChatGPT’s voice mode or prompt-writing assistance to develop the input. Quality in, quality out.

🔹 Always prompt the agent to list sources it could not access, then manually retrieve that data. Treating a report with undisclosed gaps as complete is the primary failure mode.

🔹 Apply expert review before acting on output — have a knowledgeable person assess whether the report’s conclusions hold up, particularly on market sizing and competitive claims.

🔹 Monitor OpenAI’s continued upgrades to this feature. The shift to GPT-5.2 and the addition of source prioritization materially improve reliability, and further improvements are likely.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91506061/this-single-chatgpt-prompt-hours-market-research-minutes-heres-how: March 31, 2026

Why Your Employees Aren’t Using the AI You Bought

Fast Company | Varun Puri | March 23, 2026

TL;DR: Enterprise AI spending hit $37 billion in 2025, but three-quarters of companies are still stuck in pilot mode — not because the tools fail, but because organizations are providing compliance-level training instead of the practice-based learning that actually changes behavior.

Executive Summary

Despite a 200% year-over-year increase in enterprise AI spending in 2025, adoption is failing at the human layer. The data is damning: enterprises average 200 AI tools deployed, but only 28% of employees know how to use their company’s applications, and only 7.5% have received what could be called extensive AI training. When employees lack support for sanctioned tools, they route around IT — using consumer AI tools outside company oversight. Critically, 57% of employees are reluctant to admit to their managers that they use AI, and nearly half admit to faking competence to avoid appearing incompetent.

The article’s core argument is well-supported: AI fluency, like clinical skills or sales technique, is built through practice and real-time feedback, not video modules and PDF prompt libraries. Organizations doing this well embed AI coaching into existing workflows (Morgan Stanley’s GPT-powered advisor assistant), build internal communities of practice (PwC’s firmwide prompting initiative), or use structured certification programs (Google Cloud’s 15,000-rep training program). The data supports the investment: companies with a formal AI strategy report 80% adoption success, versus 37% for those without one. In 2025, 42% of companies abandoned most of their AI initiatives — up from 17% the year prior.

The “zombie center of excellence” framing is an important call-out: teams that have consumed large budgets deploying platforms that no one uses in their daily work create the appearance of AI maturity without the substance.

Relevance for Business

This article directly addresses the most common SMB AI failure mode: buying the license, skipping the enablement, and declaring the initiative “underway.” For SMBs, the risk is proportionally higher, because smaller teams have less slack to absorb failed initiatives, and the cost of an abandoned AI investment is not just financial but reputational and morale-eroding. The shadow IT risk is also acute: employees using unsanctioned AI tools create data governance exposure that SMBs are poorly equipped to manage. The path forward is practical: invest in structured, practice-based training, measure behavior change (not completion rates), and build communities of internal use-case sharing.

Calls to Action

🔹 Assess now — survey your team on actual AI tool usage, not self-reported familiarity. The gap between what leadership assumes and what employees are actually doing is likely larger than expected.