AI Updates April 27, 2026

This week’s briefing arrives at a moment when the distance between “AI news” and “business reality” has essentially closed. The releases, rivalries, and regulatory moves that dominated headlines this week are not background noise — they are the operating environment. OpenAI’s GPT-5.5 and the accelerating cadence of model updates from every major lab have shifted the competitive tempo: the question is no longer whether AI will change how your industry operates, but how fast, and whether your organization is positioned to absorb that change without stumbling. The gap between the most AI-enabled businesses and everyone else is widening, and this week’s news makes clear that the window for deliberate, thoughtful adoption remains open — but it is not unlimited.

The week’s stories also surface a theme that cuts across sectors and scales: the gap between AI’s impressive capabilities and its reliable, production-grade performance in real workflows. Whether it is banks openly attributing headcount reductions to automation, AI chatbots giving dangerous health answers with unearned confidence, or enterprise pilots stalling because LLMs are text generators running inside systems that require memory and feedback, the pattern is consistent. AI is genuinely powerful, and it is also genuinely incomplete. The organizations getting real returns — like BDO’s 14,000-person AI platform or Afresh’s documented waste reduction in grocery retail — are the ones that treated governance, data readiness, and use-case precision as prerequisites, not afterthoughts.

Geopolitics and policy are no longer a separate conversation from your AI procurement decisions. The Anthropic-Pentagon standoff, China’s travel ban on the Manus AI founders, the AI safety influencer campaign gaining mainstream reach, and new pressure on lawyers and other professionals to adopt AI under malpractice risk — these stories collectively signal that the regulatory and political terrain around AI is hardening, faster than most compliance frameworks have anticipated. For SMB executives, the practical mandate this week is not to pick the shiniest new tool. It is to build the organizational literacy, governance scaffolding, and strategic patience to make AI investments that hold up as the environment continues to shift beneath them.

Summaries

GPT 5.5 Just Dropped. OpenAI Accelerated The AI Race (Again).

GPT-5.5 Is Here. The AI Race Just Got Faster — AI for Humans, April 24, 2026

TL;DR / Key Takeaway: OpenAI’s GPT-5.5 appears less important as a single model upgrade than as a signal that AI development is shifting toward faster releases, longer-running agents, cheaper reasoning, and practical “speed-to-demo” workflows for coding, design, and business prototyping.

Executive Summary

The April 24 episode of AI for Humans frames OpenAI’s GPT-5.5 release as a meaningful step forward, especially for long-running tasks, coding workflows, agentic behavior, and rapid prototyping. The hosts are careful to note that the benchmarks are strong but not universally dominant: GPT-5.5 appears better in some reasoning and agentic-use cases, while competitors such as Anthropic’s Opus models may still be preferred for certain workflows or “feel.” The real signal is not simply that GPT-5.5 is smarter, but that OpenAI is positioning itself for a more iterative release cadence, with faster improvements arriving more frequently.

The episode also highlights the expanding connection between models, coding tools, browser use, agents, and image generation. Codex updates, shared agents in ChatGPT, and tighter integration with GPT Image 2 point toward a future where individual users and small teams can move from idea to working prototype much faster. Examples such as toy train simulations, simple games, SVG graphics, and playable browser-based prototypes show that the frontier is moving from “AI answers questions” toward “AI helps build functional artifacts.”

For business leaders, the most practical takeaway is the growing importance of workflow experimentation. GPT-5.5 does not eliminate execution risk, design judgment, governance needs, or human review. But it does lower the cost and time required to test ideas, build demos, explore internal tools, and prototype customer-facing concepts. That shift may matter more for SMBs than benchmark rankings, because the competitive advantage comes from learning how to use these tools responsibly before they become standard operating infrastructure.

Relevance for Business

For SMB executives and managers, this episode points to a near-term business reality: AI tools are becoming more useful not just for writing and summarizing, but for building, testing, designing, and automating. The operational value is moving toward compressed development cycles, faster internal experimentation, and the ability for non-technical or lightly technical employees to produce early-stage prototypes.

The risk is that faster creation can also produce more unfinished, insecure, poorly governed, or misleading outputs. Leaders should not treat “AI built it quickly” as the same thing as production readiness. The opportunity is speed; the management challenge is deciding which prototypes deserve review, budget, security testing, and integration into real workflows.

Calls to Action

🔹 Test GPT-5.5-style workflows on low-risk internal projects where faster prototyping could save time without creating customer or compliance exposure.

🔹 Separate demos from deployable products. Require human review, security checks, and operational testing before AI-generated tools touch customers, data, or revenue workflows.

🔹 Track tool integration, not just model rankings. The business value may come less from one model’s benchmark score and more from how well it connects to coding, browser use, documents, agents, and image workflows.

🔹 Assign someone to monitor agentic AI use cases in coding, operations, marketing, customer service, and internal admin tasks.

🔹 Prepare lightweight governance rules for AI-generated prototypes, including ownership, review standards, acceptable data use, and when legal or IT should be involved.

Summary by ReadAboutAI.com

https://www.youtube.com/watch?v=hmLDq2uyTTE: April 27, 2026

Update: April 19, 2026

Chinese humanoid robots prepare for second-ever half marathon in Beijing

Chinese humanoid robots train to go head-to-head with human runners in the second-ever Beijing half marathon. NBC News’ Kathy Park reports.

NBC News — April 14, 2026

Summary by ReadAboutAI.com

https://www.youtube.com/watch?v=aKYxLWqw8ZQ: April 27, 2026

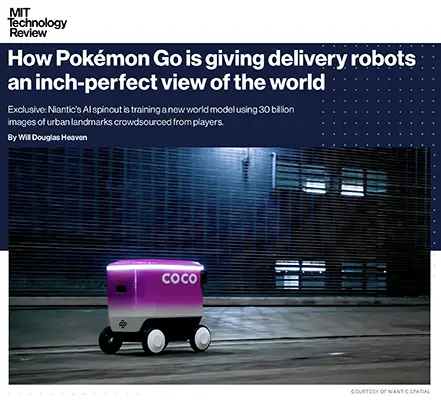

HOW POKÉMON GO IS GIVING DELIVERY ROBOTS AN INCH-PERFECT VIEW OF THE WORLD

MIT Technology Review | Will Douglas Heaven | March 10, 2026

TL;DR: Niantic’s AI spinout is converting a decade of Pokémon Go player images into centimeter-accurate visual positioning for delivery robots — a real-world test of whether crowdsourced consumer data can outperform GPS in dense urban environments.

Executive Summary

Niantic Spatial, spun out of the Pokémon Go creator in May 2025, has repurposed 30 billion geotagged images — gathered over years from game players in cities worldwide — to train a visual positioning model capable of locating a device to within a few centimeters. Unlike GPS, which degrades badly in dense urban environments due to signal interference from tall buildings and infrastructure, this system works from what a camera can see. The first commercial test is a partnership with Coco Robotics, which operates roughly 1,000 sidewalk delivery robots across U.S. and European cities.

The strategic logic is straightforward: Niantic already owned one of the most valuable accidental geospatial datasets ever assembled. Rather than license it, they’ve built an AI company around it. The Coco partnership is a proof-of-concept, not a finished product — Coco’s CEO claims the technology will enable robots to stop at precisely the right pickup and drop-off points, a reliability gap that currently costs the company operationally. Rival robot firms like Starship Technologies use comparable visual mapping approaches, so this is a competitive feature race, not a monopoly.

The longer-term framing — a continuously updated “living map” where robots themselves feed new data back into the system — is compelling but explicitly aspirational. Niantic Spatial is positioning itself as infrastructure for a machine-navigated world, competing with players like Google DeepMind and World Labs in what is currently a crowded, well-funded, and unsettled field.

Relevance for Business

For most SMBs, this is a signal to track, not a decision to make today. However, several dimensions are worth noting:

- Last-mile delivery is entering a new precision era. If visual positioning matures as described, it raises the performance bar for any business relying on robot or autonomous delivery — and may shift vendor selection criteria toward navigation accuracy over price.

- The data moat dynamic is real. Niantic Spatial’s advantage isn’t the algorithm; it’s the dataset. This illustrates a broader pattern: companies sitting on large, structured behavioral datasets have AI leverage that pure-play AI startups cannot easily replicate. SMBs should take inventory of proprietary operational data with similar potential.

- GPS dependency is a known vulnerability in urban logistics that operators have largely worked around. As autonomous delivery scales, this gap becomes a genuine cost and reliability factor, not just a technical footnote.

- “Living map” infrastructure, if it matures, creates new vendor dependencies. Businesses adopting robot delivery in dense markets may find their operational reliability increasingly tied to third-party spatial data platforms they don’t control.

Calls to Action

🔹 Monitor, don’t act yet. The Coco partnership is an early pilot. Wait for independent performance data before drawing conclusions about the technology’s reliability at scale.

🔹 If you use or evaluate last-mile delivery robots, add navigation accuracy to your vendor scorecard. GPS degradation in dense areas is a real operational risk — ask vendors how they address it.

🔹 Audit your own data assets. If your business generates high-volume, location-tagged, or behavioral data, assess whether it has untapped AI or licensing value — the Niantic Spatial model is a useful frame.

🔹 Track the world model space. Multiple well-resourced players (Google DeepMind, World Labs, Niantic Spatial) are racing to build machine-readable spatial infrastructure. The winner will likely become a foundational dependency for autonomous systems — worth knowing who’s ahead in 12–18 months.

🔹 No immediate vendor or procurement decision required. This is an infrastructure layer in formation. File it under competitive landscape, not action queue.

Summary by ReadAboutAI.com

https://www.technologyreview.com/2026/03/10/1134099/how-pokemon-go-is-helping-robots-deliver-pizza-on-time/: April 27, 2026

AI HACKING IS REAL AND GETTING BETTER — THE NEAR-TERM RISK IS GREATER THAN THE LONG-TERM ONE

The Economist | April 15, 2026

TL;DR: Anthropic’s restricted Mythos model has demonstrated meaningful AI-assisted cybersecurity capabilities, and while experts believe AI will ultimately help defenders more than attackers, the transition period poses genuine and immediate risks — particularly for organizations running unmaintained or legacy software.

Executive Summary

Anthropic’s Mythos model was explicitly withheld from public release due to its claimed capability to autonomously identify previously unknown software vulnerabilities — so-called “zero-days” — across widely used systems including operating systems, cryptographic libraries, and cloud infrastructure. Project Glasswing, the restricted access program, now includes 12 major technology companies and is being expanded to 40 digital infrastructure organizations to allow defensive patching before similarly capable models become broadly available. OpenAI followed with its own closed hacking-capable model, GPT-5.4 Cyber.

The Economist’s reporting surfaces an important nuance: independent testing by the UK’s AI Security Institute found Mythos performed comparably to other models on simpler tasks, but pulled meaningfully ahead on complex, multi-step attacks. Security researchers are divided on whether this represents a genuine capability leap or incremental improvement. One counterpoint: a researcher was able to replicate at least one of Mythos’s reported bug discoveries using smaller, older models. The article’s honest conclusion is that the AI cybersecurity landscape is advancing rapidly but unevenly, and that the key unresolved question is whether defenders can patch vulnerabilities faster than attackers can exploit them.

The cost dimension is important and often overlooked: one of the bugs Mythos discovered reportedly cost nearly $20,000 in compute to find. This means meaningful AI-assisted security defense remains expensive and out of reach for most organizations, a structural imbalance that favors well-resourced attackers and enterprise defenders over the broad middle of the market.

Relevance for Business

For SMB leaders, the core message is practical: the threat surface is expanding faster than most organizations’ capacity to respond. AI-assisted attack tools will become more accessible before AI-assisted defense tools do, and the organizations most exposed are those running unpatched, legacy, or unmaintained software, a description that fits a large share of SMB technology stacks. The article’s specific mention of home routers, industrial IoT devices, and unmaintained code is directly relevant to smaller businesses that depend on equipment they didn’t build and that no one is actively securing.

Calls to Action

🔹 Conduct an immediate audit of your most exposed software — prioritize internet-facing systems, older operating systems, and any software that hasn’t received security updates in the past 12 months.

🔹 Accelerate patch management discipline — the window between vulnerability discovery and exploitation is shrinking; organizations without a regular patching cadence are increasingly at risk.

🔹 Evaluate your cyber insurance coverage against an environment of AI-accelerated attacks — policy terms, exclusions, and premiums are all likely to evolve rapidly.

🔹 Pay particular attention to IoT, router, and operational technology security — these are specifically identified as high-risk categories where AI-enabled attackers may have a significant advantage.

🔹 Monitor developments in AI-assisted security tooling — the defensive applications will eventually be cost-effective for SMBs, but expect 12–24 months before they are broadly accessible at reasonable cost.

Summary by ReadAboutAI.com

https://www.economist.com/science-and-technology/2026/04/15/how-ai-hackers-will-shake-up-cyber-security: April 27, 2026

ARE AI’S POWER BROKERS THE NEW ROCKEFELLERS? NOT YET — BUT WATCH THE TRAJECTORY

The Economist | April 16, 2026

TL;DR: The Economist’s historical analysis of American tycoons finds that today’s leading AI figures are, by most measures, still far less powerful than the great industrial monopolists — but the conditions for a Rockefeller-scale concentration of power are present if AI delivers on its economic promise.

Executive Summary

The Economist constructed a composite ranking of American business tycoons across eleven technological waves — railways through the internet — measuring revenue, employment, market value, corporate control, and personal wealth, all scaled to their historical contexts. By this framework, Henry Ford ranks first, with Rockefeller second. The current leading AI figures — Altman, Amodei, and Hassabis — all fall in the bottom half of the ranking, constrained by relatively small workforces (AI model-making is capital- and talent-intensive but not labor-intensive), limited personal ownership stakes, and the simple fact that AI’s economic impact remains largely prospective.

The more analytically interesting finding is the historical pattern: transformative technologies have repeatedly concentrated power in a very small number of individuals who brought those technologies to mass markets. This happened in railways, oil, steel, automobiles, and the internet. The article argues that such concentration is not incidental to capitalism but characteristic of it — and that governments have historically intervened only after the fact. The current AI figures are not yet in that league, but the structural conditions — winner-take-most dynamics, enormous capital requirements, and government dependence on private AI infrastructure — are pointing in that direction.

One honest limit of the piece: it is a historical argument about power concentration, not a forecast. Whether AI’s economic impact will prove as transformative as the automobile or as contained as, say, aviation, remains genuinely uncertain.

Relevance for Business

The article’s most useful signal for SMBs is the governance trajectory: as AI becomes more economically central, regulatory scrutiny of the major labs — antitrust, data control, security obligations — will intensify. This has practical consequences for anyone building on top of these platforms. Power concentration in a handful of labs means that regulatory action, governance failures, or strategic shifts at one of them can ripple directly into your business if you’re dependent on their APIs or tooling. Diversification of AI dependencies is a structural hedge against this risk.

Calls to Action

🔹 Monitor antitrust and regulatory developments affecting major AI labs — the historical pattern suggests government intervention follows periods of rapid concentration; we are likely in the concentration phase now.

🔹 Avoid building deep single-vendor AI dependencies — platform risk at Anthropic, OpenAI, or Google is real and will grow as these companies accumulate economic and political power.

🔹 This is a context piece, not an action trigger — read it to calibrate expectations about the long-term structural dynamics of AI, not to make near-term decisions.

🔹 Watch how U.S. and EU regulators respond to AI power concentration over the next 12–24 months — these responses will shape the competitive landscape for AI tooling.

Summary by ReadAboutAI.com

https://www.economist.com/business/2026/04/16/could-ais-leading-men-become-as-powerful-as-ford-or-rockefeller: April 27, 2026

AI ASSISTANTS ARE NOW SALES ASSISTANTS — AND THE ADVERTISING ERA OF CHATBOTS HAS BEGUN

The Economist | April 19, 2026

TL;DR: Major AI chatbots are beginning to run advertisements, creating a new advertising category that has significant implications for how brands reach customers — and raising trust questions that the industry has barely started to answer.

Executive Summary

OpenAI, Google, Microsoft, and Amazon have all begun experimenting with advertising inside their AI chat interfaces, with Meta moving toward the same position via data sharing. The business logic is straightforward: most chatbot users pay nothing, compute costs are enormous, and ads offer a way to monetize free-tier users while potentially subsidizing access to better models. OpenAI reportedly expects $2.5 billion in chatbot ad revenue this year, growing to $11 billion by 2027 — a target that, if achieved, would make it one of the world’s largest advertising platforms within a few years.

The mechanics of AI advertising differ meaningfully from search advertising. Google’s approach concentrates ads at the start of AI-mode queries, resembling traditional search. OpenAI’s approach waits — sometimes until deep into a conversation — to place ads more contextually, once user intent is clearer. Both approaches are still early and imprecise: targeting data is thin, performance measurement is murky, and the risk of brand-damaging AI-hallucinated content is real. Anthropic, pointedly, has positioned itself as ad-free, running ads mocking AI platforms that interrupt sensitive conversations with sales pitches. Perplexity recently reversed course and removed ads after user backlash.

Notably, user behavior so far does not show strong resistance: conversations that include ads last roughly as long as those without, suggesting users are tolerating the intrusion — for now.

Relevance for Business

For SMB leaders, two questions are immediately relevant. First, if you advertise digitally, the chatbot advertising channel is becoming real and warrants evaluation — particularly if your customers are shifting from search to AI-assisted queries for research or purchase decisions. Second, if you use AI tools in client-facing contexts, you need to understand whether those tools can be prompted to recommend products or services — including potentially competitors’ — based on paid placements. The trust and brand-safety risks of AI-generated ad copy are non-trivial and remain largely unsolved.

Calls to Action

🔹 Begin tracking chatbot advertising options if you have a digital advertising budget — OpenAI, Google AI Mode, and Amazon Rufus are early channels worth monitoring as they mature.

🔹 Evaluate trust risk before advertising on AI platforms — AI-generated ad copy can misrepresent products or appear in contextually inappropriate conversations; understand the guardrails before committing budget.

🔹 Check your AI tool agreements to understand whether the tools your business uses for client-facing work can surface competitor ads or sponsored recommendations.

🔹 Monitor how Anthropic’s ad-free positioning resonates with users — if trust becomes a differentiating factor in chatbot choice, it may affect which platforms your customers use for research, including research about your products.

🔹 Revisit in 6–12 months — this channel is too early and measurement too underdeveloped to warrant significant budget commitment today, but the trajectory is clear enough to begin building familiarity.

Summary by ReadAboutAI.com

https://www.economist.com/business/2026/04/19/why-your-ai-assistant-is-suddenly-selling-to-you: April 27, 2026

Apple’s Ternus Era Hints at Renewed Hardware Focus, AI Growth Push

Reuters | April 21, 2026

TL;DR: Analyst reaction to Ternus’s appointment confirms Apple is betting on AI embedded in existing hardware — not a disruptive new AI-first device — but that strategy only works if Apple can close the capability gap its own press release didn’t acknowledge.

Executive Summary

This Reuters follow-up aggregates analyst reactions to the Ternus appointment announced the prior day. The consensus: Wall Street is reassured by continuity, not excited by transformation. Analysts read the choice as a signal that Apple’s board prioritizes protecting its hardware and services revenue base over chasing an AI-first product narrative.

One telling detail surfaces: Apple’s own succession announcement did not mention AI once. That omission — noted by an industry analyst — either reflects quiet confidence in a hardware-embedded AI approach, or an unwillingness to make promises the company cannot yet keep. The article also highlights that OpenAI’s rumored AI-first hardware device, developed with former Apple design chief Jony Ive, represents a credible long-term threat to the iPhone ecosystem’s centrality.

The framing from several analysts is that Ternus’s background — hardware, silicon, integrated systems — maps well to Apple’s strengths but does not obviously solve the problem of competing against companies whose core competency is AI model development.

Relevance for Business

This piece adds analytical context but limited new information beyond the summary of Reuters’ April 20 report, “Apple Names Hardware Chief John Ternus as Next CEO.” Its primary value is the confirmation that Apple’s AI strategy is likely to remain incremental and embedded rather than category-defining, a meaningful signal for any business evaluating whether to build workflows around Apple Intelligence features or invest in cross-platform AI tools instead. The Jony Ive / OpenAI hardware wildcard is worth tracking: if it materializes as a credible consumer device, it could shift enterprise mobile device preferences over a 3–5 year horizon.

Calls to Action

🔹 Treat Apple Intelligence as an emerging, not mature, enterprise capability — plan workflows accordingly.

🔹 Monitor the OpenAI/Jony Ive hardware project for updates; it represents the most plausible near-term challenger to iPhone’s enterprise dominance.

🔹 No immediate action required; this article reinforces the same watch posture as the summary of Reuters’ April 20 report, “Apple Names Hardware Chief John Ternus as Next CEO.”

🔹 If advising on software vendor selection, note that Apple’s AI feature parity with Android / cloud-native tools remains uncertain through at least 2026.

Summary by ReadAboutAI.com

https://www.reuters.com/business/apples-post-cook-future-hinges-whether-ternus-can-ignite-ai-growth-2026-04-21/: April 27, 2026

Apple Names Hardware Chief John Ternus as Next CEO

Reuters | April 20, 2026

TL;DR: Apple’s succession choice signals a doubling-down on hardware excellence and AI-via-device integration — not a pivot to AI-first products — leaving the company’s generative AI deficit as its most consequential unresolved challenge.

Executive Summary

Apple announced that John Ternus, a 25-year company veteran who led hardware development across iPhone, iPad, and Mac lines, will become CEO on September 1. Tim Cook moves to executive chairman. The transition is orderly and insider-led — consistent with Apple’s pattern of promoting from within.

The strategic signal is what Ternus is not: he is not a software architect, an AI researcher, or a services executive. His appointment tells the market that Apple’s board believes its hardware ecosystem remains the company’s primary growth engine, even as Nvidia has displaced Apple as the world’s most valuable company — a direct consequence of investor concern over Apple’s lag in generative AI. Apple’s Siri has yet to evolve into a capable AI agent, and the company has had to license Google’s Gemini to patch the gap.

The core risk is execution timing. Competitors are accelerating: OpenAI is developing an AI-first hardware device with former Apple design chief Jony Ive, and Meta’s AR glasses are gaining traction at a fraction of Apple’s Vision Pro price point. Ternus inherits a strong product base but a widening AI credibility gap. His record suggests Apple will embed AI into existing devices rather than bet on a transformative new category — a defensible strategy, but one that requires AI capability Siri has not yet demonstrated.

Relevance for Business

For SMB leaders, Apple’s direction matters primarily through the lens of the devices and software your teams use. A hardware-centric Apple CEO may accelerate device refresh cycles and new form factors (foldables, glasses, AI pins), but does not resolve near-term questions about when Apple’s AI features will become genuinely useful in enterprise workflows. The continued dependence on third-party AI (Google Gemini) inside Apple’s ecosystem is a vendor dependency worth monitoring — it means Apple’s AI roadmap is partly outside Apple’s control. Businesses deeply embedded in the Apple ecosystem should not expect rapid AI capability gains from native tools in the near term.

Calls to Action

🔹 Do not adjust Apple device procurement strategy based on this announcement alone — the hardware roadmap is unlikely to change materially before 2027.

🔹 If your teams rely on Siri or Apple Intelligence for productivity, monitor progress carefully; current capability does not yet justify workflow dependence.

🔹 Watch OpenAI’s Jony Ive device project as a potential longer-term disruption to Apple’s consumer hardware dominance.

🔹 For Apple-platform software vendors and IT managers: flag the Gemini/Apple AI dependency as a potential point of instability if that partnership changes.

🔹 Revisit in Q4 2026 when Ternus’s first strategic priorities become visible.

Summary by ReadAboutAI.com

https://www.reuters.com/technology/john-ternus-become-apple-ceo-tim-cook-become-executive-chairman-2026-04-20/: April 27, 2026

Job Cuts Driven by AI Are Rising on Wall Street

The New York Times | Rob Copeland | April 21, 2026

TL;DR: Major U.S. banks are now openly crediting AI with reducing headcount while posting record profits — ending the industry’s diplomatic fiction that AI would only augment workers, not eliminate them.

Executive Summary

This is one of the most consequential AI-and-labor stories in the current news cycle, and it deserves careful reading rather than framing as either reassurance or panic. The core data point: the six largest U.S. banks collectively shed 15,000 employees while generating $47 billion in combined quarterly profits — an 18% increase year over year. Several CEOs directly attributed headcount reduction to AI-driven automation, covering work as varied as regulatory document processing, credit memo generation, pitch deck creation, invoice routing, and customer call handling.

The institutional candor is new and significant. Bank of America’s CEO reversed a public statement from just four months prior, moving from “it’s not a threat to their jobs” to specifically naming AI as the mechanism behind 1,000 eliminated positions. Wells Fargo’s CEO went further, suggesting most peers are reluctant to say publicly what they are already doing operationally. Notably, the cuts are not confined to New York or San Francisco — they extend to back-office operations in lower-cost cities including San Antonio, Tucson, and Tampa.

A dissenting forecast worth monitoring: a banking analyst at TD projected that an AI-driven profit surge would be followed by a competitive reversal as customers use AI tools to find better rates, ultimately compressing bank margins and triggering a second wave of cuts and closures. The analyst himself was not replaced when he left TD.

Relevance for Business

This is directly and immediately relevant to every SMB leader — not because finance-sector job cuts affect most readers directly, but because banking is the canary. The roles being automated — document review, compliance paperwork, data organization, customer communication routing, financial analysis — exist in nearly every mid-size business. If trillion-dollar institutions with sophisticated legal, regulatory, and reputational constraints are now openly eliminating these roles, the path is being cleared for smaller organizations to follow. The more pressing near-term implication: how you communicate to your own team about AI and work is itself a governance decision, and the gap between what leaders say publicly and what they do operationally is now a reputational risk as much as a management challenge.

Calls to Action

🔹 Audit your own back-office, compliance, documentation, and customer communication workflows — these are the categories banks are automating first and fastest.

🔹 Develop an explicit, honest internal position on AI and employment before you need it — the credibility gap between reassurance and action is now publicly visible and will affect trust.

🔹 Do not rely on the “AI enhances, never replaces” framing in your workforce planning; the financial sector data no longer supports it as a universal claim.

🔹 Monitor the second-order dynamic flagged by the TD analyst: if AI enables customers and clients to make better-informed purchasing decisions (e.g., comparing rates, finding alternatives), your own pricing power and retention economics may be affected regardless of whether you’re in finance.

🔹 If you use external financial, legal, or compliance services, ask your vendors how AI is changing their delivery model — and whether cost savings are being passed through.

Summary by ReadAboutAI.com

https://www.nytimes.com/2026/04/21/business/ai-job-cuts-wall-street.html: April 27, 2026

Trump Says Anthropic Is ‘Shaping Up,’ Open to Pentagon Deal

Reuters | April 21, 2026

TL;DR: A possible thaw in the Trump administration’s blacklisting of Anthropic signals that politically driven AI vendor risk is real, fluid, and worth factoring into any enterprise procurement decision involving frontier AI models.

Executive Summary

The sequence of events here matters. In February, the Trump administration directed federal agencies to stop working with Anthropic, and the Pentagon formally designated the company a supply-chain risk — citing a dispute over whether Anthropic’s AI tools could be used for domestic surveillance or autonomous weapons. Anthropic pushed back, filed suit against the Defense Department, and sent CEO Dario Amodei to the White House for direct talks. Trump’s subsequent public comments — describing Anthropic as “shaping up” and leaving the door open to a Pentagon contract — suggest the dispute may be de-escalating.

The broader context adds complexity. Anthropic recently unveiled a highly capable cybersecurity model (Claude Mythos) that it has declined to release publicly, instead running a controlled evaluation program with major tech companies and financial institutions. That capability — and its deliberate restriction — likely factored into administration interest in reconciliation. Anthropic has simultaneously maintained that its concerns about guardrails were principled, not political.

What remains unsettled: the legal challenge is ongoing, no deal has been announced, and Trump’s public characterization of Anthropic as employing “the radical left” in the same breath as signaling openness to a deal illustrates how volatile this relationship remains.

Relevance for Business

This story is directly relevant to any organization using — or considering — Anthropic’s Claude models, particularly in regulated or government-adjacent industries. It surfaces a category of risk that most AI procurement frameworks have not yet formalized: geopolitical and political vendor risk. An AI vendor can be functionally excellent and simultaneously become a supply-chain liability based on its relationship with the current administration. For organizations with federal contracts or security clearance requirements, vendor political standing is now a due diligence criterion. For others, the episode is a reminder that AI vendor stability cannot be assessed on technical merit alone.

Calls to Action

🔹 If your organization uses Anthropic/Claude in any government-adjacent, defense, or regulated context, add vendor political risk to your AI procurement review criteria.

🔹 Assign someone to monitor the Anthropic-Pentagon situation over the next 60–90 days; resolution (or further escalation) will clarify the risk profile materially.

🔹 For organizations evaluating multiple AI vendors, build contingency switching capacity into contracts — avoid single-vendor dependency on any frontier model provider.

🔹 Note the Claude Mythos cybersecurity model as a development worth tracking; its controlled-access approach suggests Anthropic is building capabilities with significant enterprise and government relevance.

🔹 Do not overreact in either direction — this situation is fluid, and both the risk and the opportunity remain speculative until a formal agreement (or continued exclusion) is confirmed.

Summary by ReadAboutAI.com

https://www.reuters.com/legal/government/trump-says-anthropic-is-shaping-up-open-deal-with-pentagon-2026-04-21/: April 27, 2026

AI LABS RESTRICT ACCESS TO THEIR MOST POWERFUL MODELS — AND IT’S NOT JUST ABOUT SAFETY

The Economist | April 15, 2026

TL;DR: Anthropic and OpenAI are gating their most capable new models behind invite-only programs — a strategy that serves competitive, infrastructure, and market-control interests as much as any safety rationale.

Executive Summary

Anthropic’s newest model, Mythos, launched in early April in preview form — available only to a curated set of enterprise clients, with JPMorgan Chase as the lone named financial institution. OpenAI followed days later with a comparable restricted release of a specialized variant of GPT-5.4. Both labs frame the staged rollouts partly around cybersecurity risk, citing the models’ reportedly advanced capability to identify system vulnerabilities. U.S. Treasury Secretary Bessent and Fed Chair Powell convened major banks to discuss the implications — a signal that governments are tracking AI-enabled cyber risk at the highest levels.

But the commercial logic is just as significant. The Economist identifies three drivers beyond safety: IP protection (restricted access limits rivals’ ability to replicate models through distillation techniques), compute scarcity (frontier models are resource-intensive, and invite-only programs let labs manage infrastructure strain while pricing accordingly; Mythos is reportedly priced at roughly five times the cost of Anthropic’s current flagship), and market consolidation (when developers can’t access a model, they can’t build third-party tools around it, nudging enterprise customers toward the labs’ own products like Claude Code or Codex).

The broader signal: the era of relatively open frontier model access may be narrowing. As labs tighten control over their most powerful systems, businesses that have built workflows around model flexibility — swapping providers based on cost or performance — face a structural shift in their operating assumptions.

Relevance for Business

For SMBs, this is less an immediate operational concern and more a strategic positioning alert. The consolidation dynamic favors large enterprises with the relationships and budgets to secure early access. It also reinforces vendor dependence risk: if the most capable models are available only through the labs’ own tooling ecosystems, switching costs rise. Any business currently building on third-party AI tooling should understand how its vendors source model access and whether that access is durable.

Calls to Action

🔹 Monitor how your current AI vendors access frontier models — and whether that access is contractually stable or subject to change as labs tighten distribution.

🔹 Flag for governance review: rising model costs and access restrictions should factor into any AI budget planning for the next 12–18 months.

🔹 Avoid over-indexing on a single frontier model or lab; maintain optionality where possible while the access landscape is in flux.

🔹 Watch whether invite-only rollouts become standard practice for next-generation models — this would meaningfully reshape the competitive landscape for AI-enabled services.

🔹 Do not act urgently on Mythos or GPT-5.4 availability — these are enterprise and institutional-tier products; most SMBs are better served by current-generation, broadly available models.

Summary by ReadAboutAI.com

https://www.economist.com/business/2026/04/15/why-anthropic-and-openai-are-locking-up-their-latest-models: April 27, 2026

YOUR AI CAN’T READ AN INVOICE: WHY THE SIMPLE FAILURES MATTER MORE THAN THE IMPRESSIVE BENCHMARKS

Fast Company | Daniel Dines | April 21, 2026

TL;DR: A practitioner with two decades of enterprise document automation argues that AI’s failure on basic, structured tasks like invoice reading — not its success on complex benchmarks — is the more important signal for enterprise deployment, because high-confidence errors in routine processes cascade into financial and compliance risk.

Executive Summary

This is a practitioner opinion piece, written by the founder of a document automation company. The author has a commercial stake in the argument — his company sells the kind of verification and governance tooling he recommends — but the underlying observation is grounded in real operational experience and worth evaluating on its merits.

The central claim: AI models that can solve advanced mathematical problems still cannot reliably extract a number from an invoice. The author argues this is not a perception problem awaiting a better model — it’s a structural one. LLMs perform sophisticated pattern matching across training data; they do not actually comprehend what an invoice is. When a document’s layout deviates from familiar patterns, extraction fails — and crucially, the model doesn’t flag the failure. It produces a confident wrong answer.

The chess-versus-math analogy is the sharpest insight in the piece: math competitions involve recombining known proof techniques (pattern matching scales well), but chess requires calculating concrete lines in genuinely novel positions (pattern matching breaks). Most operational business tasks look more like chess than math — each invoice, contract, or compliance document is a new instance where the layout, language, or context may not match training data. The 5–15% of cases where pattern matching fails are exactly the high-stakes exceptions: regulatory filings, payment authorizations, contract exceptions.

The compounding risk is worth flagging separately: as AI models improve, organizations trust them more, route more volume through them, and reduce human oversight, so the total damage from the remaining failures grows rather than shrinks. A 2% error rate in a low-volume pilot becomes a materially different problem at enterprise scale.
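To make the scale effect concrete, here is a minimal arithmetic sketch. The 2% error rate comes from the paragraph above; the document volumes and review fractions are hypothetical figures chosen for illustration, and the function name is not from the article. It also assumes, optimistically, that human review catches every error in the documents it covers.

```python
def undetected_errors(docs_per_month: int, error_rate: float, review_fraction: float) -> float:
    """Estimate errors that reach downstream systems each month.

    Assumes human review catches every error in the reviewed share,
    which is optimistic; real review will miss some.
    """
    unreviewed_share = 1.0 - review_fraction
    return docs_per_month * error_rate * unreviewed_share


# Hypothetical pilot: 500 documents a month, every document reviewed.
pilot = undetected_errors(500, 0.02, review_fraction=1.0)

# Hypothetical scaled rollout: 50,000 documents a month, 10% spot-checked.
scaled = undetected_errors(50_000, 0.02, review_fraction=0.10)

print(f"Pilot: about {pilot:.0f} undetected errors per month")
print(f"Scaled: about {scaled:.0f} undetected errors per month")
```

The error rate is identical in both cases. What changes is the volume and the share of documents a person actually looks at, which is why the same model can be harmless in a pilot and costly in production.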

Relevance for Business

For SMB leaders automating document-heavy workflows — accounts payable, contract review, compliance documentation, HR records — this is directly operational. The risk is not that AI fails visibly; it is that AI fails silently and confidently, and the error propagates through downstream systems before anyone notices.

The governance implication is explicit: the system around the AI model matters more than the model itself. Validation rules, confidence thresholds, human escalation paths, and cross-field checks are not optional enhancements — they are the core requirement for any AI deployment in financial or compliance-critical workflows.
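The controls named above can be sketched in a few lines. This is an illustrative example, not the author's product or any vendor's API: the field names, the 0.90 threshold, and the one-cent tolerance are all assumptions, and it presumes the extraction tool reports some confidence score. Because the article's point is that models can be confidently wrong, the cross-field check runs regardless of the score.

```python
from dataclasses import dataclass

CONFIDENCE_THRESHOLD = 0.90  # assumed cutoff; tune against your own documents
AMOUNT_TOLERANCE = 0.01      # assumed rounding tolerance, in currency units


@dataclass
class ExtractedInvoice:
    """Hypothetical output of an AI extraction step."""
    subtotal: float
    tax: float
    total: float
    confidence: float  # model-reported score in [0, 1]; may itself be unreliable


def review_reasons(inv: ExtractedInvoice) -> list[str]:
    """Return every reason this invoice should go to a person."""
    reasons = []
    # Confidence threshold: escalate anything the model is unsure about.
    if inv.confidence < CONFIDENCE_THRESHOLD:
        reasons.append("confidence below threshold")
    # Validation rule: amounts should not be negative.
    if min(inv.subtotal, inv.tax, inv.total) < 0:
        reasons.append("negative amount")
    # Cross-field check: catches confident but inconsistent extractions.
    if abs(inv.subtotal + inv.tax - inv.total) > AMOUNT_TOLERANCE:
        reasons.append("subtotal plus tax does not equal total")
    return reasons


def route(inv: ExtractedInvoice) -> str:
    """Human escalation path: any failed check sends the invoice to review."""
    return "human_review" if review_reasons(inv) else "auto_approve"


# A confident extraction with a misread total still gets escalated.
print(route(ExtractedInvoice(subtotal=100.0, tax=8.0, total=180.0, confidence=0.99)))
```

The design choice worth noting is that the checks are independent of the model: a high confidence score never overrides a failed arithmetic check.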

Calls to Action

🔹 Before deploying AI in any document-processing workflow, define an explicit accuracy threshold and a human escalation path for cases below that threshold — do not assume the model will self-identify failures.

🔹 Do not evaluate AI document tools on benchmark performance alone — test on your actual documents, including edge cases, non-standard layouts, and multilingual formats.

🔹 Assign specific ownership for AI error monitoring in any financial or compliance workflow; errors that propagate downstream are harder and more expensive to remediate than those caught at the source.

🔹 Re-examine the cost model for any AI automation that has reduced human review — calculate the real cost of undetected errors, not just the efficiency gain from reduced headcount.

🔹 Treat governance tooling (confidence scoring, validation logic, exception routing) as a first-class budget item alongside the AI model itself — not as an afterthought.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91526152/your-ai-cant-read-an-invoice-that-should-worry-you-more-than-whether-it-can-pass-a-math-exam-technology-ai-leadership: April 27, 2026

AI CHATBOTS GET HEALTH ANSWERS WRONG NEARLY HALF THE TIME — AND SOME ERRORS ARE DANGEROUS

The Washington Post | Ariana Eunjung Cha | April 2026

TL;DR: Two peer-reviewed studies find that leading AI chatbots fail on health questions at alarming rates — with one study finding errors in nearly half of responses and one in five wrong answers classified as potentially dangerous — raising serious questions about AI’s fitness for any health-adjacent advisory role without qualified human oversight.

Executive Summary

Two independent studies — one published in BMJ Open, one in JAMA Network Open — tested multiple AI systems including ChatGPT, Gemini, Claude, DeepSeek, and Grok on medical questions. The results were poor across the board. In the BMJ Open study, five AI models answered 250 health questions correctly just over half the time; roughly one in five incorrect responses was rated potentially dangerous by the researcher. The JAMA study, using clinical case scenarios modeled on actual medical training materials, found that models were wrong approximately 80% of the time when asked to reason through ambiguous cases with limited initial information.

A consistent finding across both studies: the models were highly confident even when wrong, and almost never declined to answer or acknowledged uncertainty. The structural reason is important — large language models are trained to generate fluent responses, not to apply the iterative, uncertainty-preserving reasoning that clinical diagnosis requires. When information is incomplete, models tend to collapse prematurely to a confident answer rather than holding the question open, as a physician would.

A related survey from the West Health-Gallup Center adds a behavioral dimension: roughly one in four Americans already uses AI for health information, and an estimated 14 million people report skipping a healthcare provider visit because of AI-generated advice. Companies are taking corrective steps — Meta, OpenAI, and others are integrating physician oversight into training — but general-purpose AI tools are not currently validated for health guidance, and the regulatory picture remains unsettled.

Relevance for Business

For SMB leaders, the direct implications span two areas. First, employee use: if staff routinely use AI tools for health questions — their own or in any HR/wellness advisory capacity — the organization carries exposure from misinformation. Second, any AI deployment touching health-adjacent decisions (benefits, wellness programs, occupational health guidance, customer health queries) carries liability risk that is not mitigated by the AI’s confident presentation of answers.

The broader signal is also relevant: the confidence-without-accuracy problem identified here is not unique to health. It appears in any domain where AI presents fluent, authoritative-sounding responses to questions that require genuine reasoning under uncertainty. Leaders should apply the same skepticism to AI outputs in legal, financial, and compliance contexts.

Calls to Action

🔹 Establish a clear policy prohibiting use of general-purpose AI tools for medical, clinical, or health-advisory decisions — for employees and in any customer-facing capacity.

🔹 Audit any current AI deployments that touch health, benefits, or wellness guidance, and verify that qualified human review is in the loop before any AI output reaches an end user.

🔹 Educate staff that confident AI responses on health topics are not a signal of accuracy — and that the tools are not designed to flag their own uncertainty.

🔹 Monitor the regulatory conversation around AI health tools — FDA and FTC involvement remains an open question, and compliance requirements could emerge with limited lead time.

🔹 Watch for specialized health AI products from established vendors; these may offer meaningfully better performance than general-purpose tools, but should be evaluated against independent validation data, not vendor claims.

Summary by ReadAboutAI.com

https://www.washingtonpost.com/health/2026/04/21/chatbot-medical-advice-accurate/: April 27, 2026

THE CERULEAN SWEATER ARGUMENT: WHY AI SKEPTICISM AS IDENTITY IS A STRATEGIC DEAD END

Fast Company | Rebecca Heilweil (POV) | April 20, 2026

TL;DR: A Fast Company opinion piece uses Miranda Priestly’s famous fashion speech to argue that performative AI skepticism is as analytically hollow as refusing to acknowledge you already participate in the system you claim to reject — and that individual abstinence is not a substitute for systemic response.

Executive Summary

The argument is built on a single analogy: in The Devil Wears Prada, a character dismisses the fashion industry as trivial while unknowingly wearing clothes shaped by its decisions. Miranda’s rebuttal — that non-participation is an illusion; the system already made choices for you — is the author’s frame for a growing cohort of vocal AI skeptics who treat non-use of consumer AI tools as a principled moral stance.

The author’s core challenge to that position: AI is already embedded in the infrastructure most workers and organizations depend on daily — email platforms, search, customer service systems, content pipelines, and media production — regardless of whether any individual has paid for a ChatGPT subscription. A Gallup figure cited in the piece puts current U.S. worker AI use at roughly half the workforce, and the author’s framing suggests much of that use is employer-directed rather than voluntary. The opt-out position, in this view, misidentifies the choice available.

The author draws an explicit structural parallel to abstinence-only framings of other systemic challenges — citing climate change responses as a comparison — to argue that consumer-level purity politics do not resolve systemic threats. The piece does not dismiss concerns about AI’s effects on cognition, labor, or society; it argues those concerns are serious enough to deserve more than a personal branding response. Shaming colleagues who use AI tools, the author contends, directs energy away from the governance and policy interventions that could actually constrain AI’s effects.

This is an opinion piece, not reported analysis. The cerulean sweater analogy is rhetorically effective but imperfect — fashion did not, in the span of a few years, reshape labor markets and energy infrastructure simultaneously. The author’s implicit claim that systemic embeddedness forecloses meaningful individual or organizational choice deserves scrutiny: organizations can make deliberate decisions about where and how AI is deployed, and those decisions carry real governance weight. The piece is most useful as a challenge to reflexive skepticism, less useful as a guide to what systemic response actually looks like.

Relevance for Business

For SMB executives, the operationally relevant signal is not the culture-war framing but the underlying structural observation: AI capability is arriving through vendor and platform decisions your organization has likely already made, not only through deliberate AI adoption programs. If your team uses Google Workspace, Microsoft 365, Salesforce, or most major SaaS platforms, AI features are already active or being rolled out by default. The question of “whether to adopt AI” is increasingly secondary to the question of how to govern AI that is already present.

The piece also implicitly surfaces a workforce management risk: if half of U.S. workers are now using AI — many because their employers direct them to — organizations that have not established clear use policies are operating with unmanaged AI activity, not AI-free environments. The absence of policy is not neutrality; it is exposure.

The piece’s weakest contribution is its failure to articulate what the “systemic solution” it calls for actually involves. For executives, that gap is the work.

Calls to Action

🔹 Audit what AI is already active in your current software stack before framing AI as a future adoption decision. Most major platforms have enabled AI features by default in the past 18 months. Know what is running.

🔹 Replace “adopt or avoid” framing with “govern and monitor” framing in internal AI discussions. The more useful executive question is not whether to use AI but which uses require oversight, disclosure, or constraint.

🔹 Do not mistake the absence of an AI policy for a neutral posture. If employees are already using AI tools — directed or undirected — and no policy governs that use, you have unmanaged risk, not a clean slate.

🔹 Be cautious of culture-driven AI abstinence inside your organization. Teams that develop strong anti-AI norms may avoid documenting or disclosing AI use rather than actually avoiding it, creating a transparency problem on top of a governance gap.

🔹 Watch for systemic AI governance frameworks developing at the regulatory and industry level. The author is correct that individual choices will not resolve structural AI risks — which means external rules are coming. Track developments in your sector and prepare policy infrastructure before compliance becomes mandatory.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91526620/the-devil-wears-prada-lesson-for-ai-skeptics: April 27, 2026

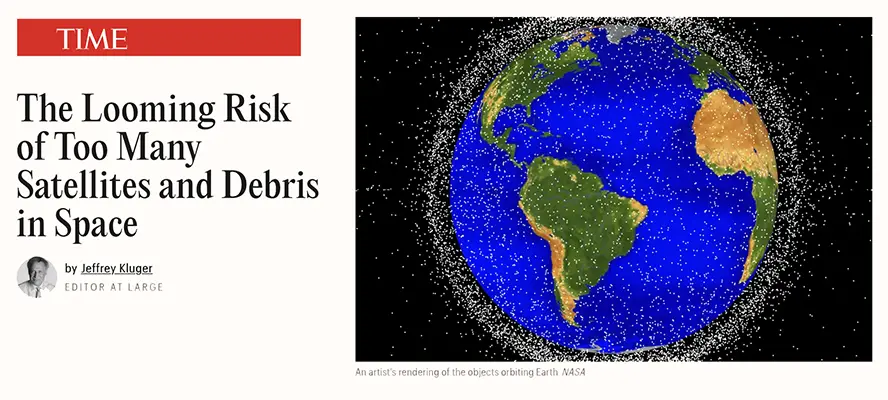

The Looming Risk of Too Many Satellites and Debris in Space

TIME | Jeffrey Kluger | April 16, 2026

TL;DR: The rapid expansion of AI-driven satellite constellations — including SpaceX’s proposed one million-satellite network — is accelerating a long-developing space debris crisis that could, left unmanaged, compromise the orbital infrastructure that underpins global communications, navigation, and cloud connectivity.

Executive Summary

Note: This article is not primarily about AI, but AI is directly implicated — SpaceX’s proposed million-satellite constellation and Blue Origin’s 51,600-satellite filing are explicitly described as AI satellite networks. The orbital infrastructure risk it describes has direct relevance to any business dependent on satellite-delivered services.

The article provides a grounded survey of the orbital debris problem. Key figures: more than 25,000 trackable objects currently orbit Earth, in addition to 500,000 smaller pieces in the 1–10 cm range and an estimated 100 million at 1 mm or smaller. Even centimeter-scale debris traveling at orbital velocity carries destructive force. The feared end state is Kessler syndrome, a self-perpetuating debris cascade that could render certain orbital bands unusable for generations. Experts characterize this as a long-tail risk: not an immediate crisis, but one that compounds with each new satellite and each unmanaged deorbit.

The accelerant is AI-era satellite ambitions. SpaceX already operates 9,400 Starlink satellites — 63% of all active satellites in orbit — and has proposed expanding to one million. Blue Origin has filed for 51,600. Certain orbital bands between 500 and 620 miles are already considered too congested for new constellations. Partial mitigations exist: the FCC’s five-year deorbit rule, SpaceX’s automated collision avoidance system (which executed 300,000 maneuvers in 2025), and nascent satellite servicing firms like Astroscale and ClearSpace. But international coordination and transparency remain inadequate, and regulation has not kept pace with launch volume.

Relevance for Business

Most SMB leaders do not think of satellite infrastructure as part of their operational risk profile — but they should. GPS, satellite internet (including Starlink, which many rural and distributed businesses rely on), cellular backhaul, weather data, and financial transaction routing all depend on functioning low-Earth orbit infrastructure. A significant debris event or Kessler cascade in a populated orbital band would be a systemic infrastructure disruption analogous to a major internet backbone failure — except potentially longer-lasting and unrecoverable in the near term. This is a low-probability, high-consequence risk that warrants at least awareness-level monitoring. Additionally, for businesses in logistics, agriculture, construction, or any field using precision GPS, the growing congestion and collision risk in LEO is a supply-chain dependency worth understanding.

Calls to Action

🔹 If your operations depend materially on satellite internet, GPS precision, or satellite-based data services, include orbital infrastructure in your operational risk assessment.

🔹 No immediate action is required for most SMBs — this is a monitor-and-prepare situation, not an act-now one.

🔹 For organizations in logistics, precision agriculture, maritime, aviation, or remote operations: ask your technology vendors how they manage service continuity risk in the event of a significant orbital disruption.

🔹 Follow FCC and international regulatory developments on satellite constellation management — the regulatory environment will tighten, affecting both service availability and cost for satellite-delivered products.

🔹 Treat this as a 5–10 year horizon risk, not a near-term operational concern — but note that the accumulation of risk is ongoing and the window for governance solutions is narrowing.

Summary by ReadAboutAI.com

https://time.com/article/2026/04/16/space-debris-satellites-growing-risk/: April 27, 2026

AI Safety Advocates Ask Content Creators to Warn the World of AI’s Dangers

The Washington Post | Nitasha Tiku | April 18, 2026

TL;DR: Organized, well-funded AI safety groups are now recruiting social media influencers to reach mass audiences with existential risk narratives — a campaign that will intensify public debate, accelerate regulatory pressure, and inject AI into mainstream culture in ways businesses have not yet planned for.

Executive Summary

This Washington Post report documents a deliberate, funded effort to move AI safety messaging from elite academic and policy circles into mass social media. Organizations including the Future of Life Institute (FLI) and ControlAI are sponsoring creators, training fellows, and paying for YouTube and TikTok content aimed at communicating existential risks from AI to general audiences. FLI is spending approximately $100,000 per month on this content pipeline. Results so far are modest in isolation but growing: a single sponsored YouTube video reached 1.6 million views; a SciShow episode netted 1.8 million.

The article treats the movement with journalistic balance. It notes that the mainstream scientific consensus does not support claims of imminent species-level danger, and that the safety movement has significant internal tensions — including concerns that some advocates are too aligned with the AI companies they ostensibly want regulated. The political context is notable: AI safety concerns now draw support from across the ideological spectrum, while Silicon Valley-backed super PACs are actively pushing back, labeling safety advocates “doomers” and blaming them for inflaming public hostility.

The practical signal for business leaders is less about whether the existential risk claims are credible, and more about the second-order effects of a coordinated mass-media campaign on public perception, regulation, and consumer trust in AI products.

Relevance for Business

The AI safety influencer campaign is a reputational and regulatory weather system, not a technical development. As this content proliferates, several business risks emerge. Public concern about AI will increase, regardless of technical merit — affecting customer trust in AI-powered products and services. Regulatory pressure will intensify at state and federal levels, potentially changing what’s permissible in automated hiring, customer service, credit decisions, and data use. And organizations that have externally promoted AI as a competitive advantage may face reputational exposure if high-profile AI failures are amplified by a newly primed public. The campaign also expands AI’s political salience, making it a factor in procurement decisions for government-adjacent businesses.

Calls to Action

🔹 Assign someone to monitor AI-related public sentiment and regulatory developments over the next 6–12 months — this campaign will accelerate both.

🔹 Audit any customer-facing AI claims in your marketing or communications; what reads as confident today may read as careless in 12 months.

🔹 Develop or refresh an AI governance policy before regulatory pressure makes it reactive rather than proactive.

🔹 Do not dismiss the safety movement as fringe — its bipartisan political support and media investment make it a durable factor in the AI policy landscape.

🔹 Treat the existential risk narrative separately from the practical governance concerns (accountability, transparency, human oversight) — the latter are legitimate regardless of how one evaluates the former.

Summary by ReadAboutAI.com

https://www.washingtonpost.com/technology/2026/04/18/ai-doom-influencers-safety/: April 27, 2026

How to Fix the Medical Diagnosis Crisis

The Atlantic (book review) | Meghan O’Rourke | April 15, 2026

TL;DR: A deeply reported Atlantic essay — reviewing The Elusive Body by Alexandra Sifferlin — argues that AI will not fix medicine’s widespread diagnosis failures because the problem is structural, not computational, and current healthcare incentives reward the wrong behaviors.

Executive Summary

This is a book review and essay, not a news report. It is opinion-driven and paywalled. The underlying book’s claims should be treated as journalism, not peer-reviewed findings.

The core argument is well-supported and worth taking seriously: roughly 13 million Americans experience a diagnostic error each year, and more than 750,000 are permanently disabled or die from misdiagnosis annually. Despite a landmark 2015 National Academies report calling for reform, no major U.S. health system tracks diagnostic error systematically. The structural causes are not primarily technological — they include time pressure from profit-driven systems, physician cognitive bias that medical schools rarely address, overtesting at the expense of patient listening, and the exclusion of poorly understood chronic conditions from standard diagnostic frameworks.

The AI dimension is handled with unusual nuance. The author acknowledges that AI can reduce clinician administrative burden and may help counteract some forms of unconscious bias — but argues it replicates existing biases as readily as it corrects them, and that deploying it within a system oriented toward speed and volume will amplify existing problems rather than solve them. The model institution described in the book — the NIH’s Undiagnosed Diseases Program — succeeds through time, collaboration, and clinical judgment, not technology.

Relevance for Business

For SMB leaders, the immediate practical implications fall into two categories. First, employee and leadership healthcare decisions: the misdiagnosis rates documented here are high enough to affect anyone who has navigated a serious health situation. Understanding that diagnostic failure is systemic — and that AI tools for diagnosis are not yet a reliable corrective — is relevant to benefits strategy and employee health navigation. Second, and more broadly: this piece is one of the clearest articulations available of why AI cannot substitute for human judgment in complex, high-stakes, context-dependent situations. That principle extends well beyond medicine. Leaders who are evaluating where AI can and cannot safely operate within their own organizations will find useful framing here.

Calls to Action

🔹 If your organization provides or advises on employee health benefits, note that AI-assisted diagnostic tools are emerging but not yet validated as reliable replacements for clinical judgment — inform benefits strategy accordingly.

🔹 Use the structural argument in this piece as a framework for evaluating AI deployments in your own high-stakes workflows: does your system currently reward the right behaviors, or will AI simply accelerate the wrong ones?

🔹 For organizations in healthcare-adjacent industries (medical devices, health tech, insurance, elder care), track the diagnostic error issue as a growing policy and liability surface.

🔹 Consider the book The Elusive Body as a longer-form resource for anyone in your organization thinking seriously about AI and human judgment in complex domains.

🔹 No immediate action required — this is a signal worth internalizing, not responding to urgently.

Summary by ReadAboutAI.com

https://www.theatlantic.com/books/2026/04/fixing-medical-diagnosis-crisis-elusive-body-book-review/686804/: April 27, 2026

OPENAI’S CODEX UPDATE TAKES AIM AT CLAUDE CODE

The Verge | Robert Hart | April 16, 2026

TL;DR: OpenAI has significantly expanded its Codex agentic coding platform — adding desktop app control, image generation, memory, and integrations — in a direct competitive response to Claude Code’s growing enterprise traction.

Executive Summary

OpenAI’s Codex has moved from a developer coding assistant to something more agentic: it can now operate macOS desktop applications autonomously in the background, run multiple agents in parallel, generate and iterate on images, browse the web natively, and schedule future tasks without user prompting. A new memory feature — opt-in and in preview — allows Codex to retain preferences, corrections, and gathered context across sessions, reducing the need to re-instruct the system each time. New integrations with GitLab, Atlassian Rovo, and Microsoft Suite extend its reach into existing enterprise toolchains.

The framing here matters. The Verge positions this explicitly as a competitive response to Anthropic’s Claude Code, which has gained meaningful traction among developers. OpenAI is not leading this round — it is catching up. The rollout itself reflects real constraints: desktop control launches only on macOS, with no timeline for other operating systems, and EU users face additional delays. The memory and personalization features are similarly staged, rolling out to Enterprise and Edu tiers first.

The updates are company-presented capabilities, not independently validated performance data. Whether Codex can close the gap with Claude Code in real developer workflows remains to be tested.

Relevance for Business

For SMBs with software development teams, the AI-assisted coding space is evolving rapidly and vendor lock-in risk is rising. OpenAI and Anthropic are both competing aggressively for developer workflows — the tool your team adopts now will shape how they work for years. Agentic capabilities (background operation, scheduling, parallel agents) also introduce new governance questions: when AI operates autonomously on your systems, what oversight exists, and who is accountable for errors?

For non-technical SMB leaders, the competitive dynamics signal something worth monitoring: enterprise AI tooling is consolidating quickly around a few dominant players, and the feature gap between platforms is narrowing. Early commitments to a specific platform may become harder to reverse.

Calls to Action

🔹 If your team uses Codex or Claude Code, assign a brief internal review of current workflows to identify where autonomous or agentic features could introduce new risks alongside productivity gains.

🔹 Avoid platform lock-in decisions based on current feature announcements — many of these capabilities are in preview or limited rollout; wait for independent assessments.

🔹 Develop a light governance policy for AI tools that operate autonomously on company systems — including what actions require human approval before execution.

🔹 Monitor how the macOS-only limitation and EU delays affect your team’s practical access before committing to Codex as a primary development tool.

🔹 Revisit this comparison in 90 days, when both Claude Code and the updated Codex will have more real-world performance data behind them.

Summary by ReadAboutAI.com

https://www.theverge.com/ai-artificial-intelligence/913034/openai-codex-updates-use-macos: April 27, 2026

THE BIGGEST AI RISK IS FOOLISH, FEAR-DRIVEN POLICIES

The Wall Street Journal (Opinion) | Jason L. Riley | April 21, 2026

TL;DR: A WSJ opinion columnist argues that AI’s economic impact is being systematically overstated by both boosters and fearmongers, and that panicked policy responses — especially government income redistribution schemes — are premature and likely counterproductive.

Executive Summary

This is an opinion piece, not a news report. Its argument should be evaluated as a position, not a settled finding. Jason L. Riley makes a case that the current AI moment resembles prior waves of technological disruption more than the unprecedented rupture its loudest advocates claim. He draws on MIT Nobel laureate Daron Acemoglu’s 2024 estimate that AI may automate roughly 5% of all tasks and add approximately 1% to global GDP over the next decade — a real but modest contribution. Acemoglu’s view, cited approvingly, is that current AI models lack the contextual judgment and tacit knowledge that most complex human work actually requires.

Riley points to post-ChatGPT U.S. labor market data (sourced from The Economist) showing that white-collar employment has grown, not contracted, since 2022 — including in categories frequently cited as AI displacement targets, such as software developers, radiologists, and paralegals. He uses this to push back against high-profile calls for universal basic income from tech leaders including Elon Musk, Dario Amodei, and Sam Altman. He also flags organized labor resistance — specifically the ILA dockworkers’ successful pushback against automation — as an underweighted political and operational friction that AI adoption advocates tend to ignore.

Editorial note: Riley’s argument is internally coherent, but it relies on a single economic projection and near-term labor data that may not capture longer-horizon displacement effects. The piece is strongest as a corrective to AI hype; it is weaker as a comprehensive risk assessment.

Relevance for Business

For SMB leaders, this piece offers a useful recalibration. The pressure to adopt AI rapidly is real, but the apocalyptic framing — act now or be eliminated — is not well-supported by current evidence. The more durable insight is that labor friction and political resistance to AI are underappreciated operational risks for businesses deploying automation in unionized or politically sensitive environments. AI-driven efficiency gains exist, but organizations that treat adoption as a cost-neutral, friction-free process are likely to encounter unexpected resistance. On the policy side, SMB operators should be skeptical of both sweeping regulatory proposals and vendor claims of transformative immediate impact.

Calls to Action

🔹 Use this piece as a counterweight when your team or vendors are pushing AI urgency narratives — measured adoption remains defensible.

🔹 Before deploying AI-driven automation in any role affecting headcount, assess labor relations exposure and prepare a clear internal communication strategy.

🔹 Do not base workforce planning on worst-case AI displacement scenarios; current data does not support mass near-term white-collar job loss.

🔹 Monitor evolving academic research on AI’s economic impact — Acemoglu’s projections are credible, but the field is moving and estimates will be revised.

🔹 Maintain a governance posture that evaluates AI tools on demonstrated ROI, not on competitive fear.

Summary by ReadAboutAI.com

https://www.wsj.com/wsjplus/dashboard/articles/the-biggest-ai-risk-is-foolish-fear-driven-policies-cd37ef8f: April 27, 2026

OPENAI LEANS ON GLOBAL CONSULTANCIES TO EXPAND CODEX USE IN LARGE COMPANIES

Reuters | April 21, 2026

TL;DR: OpenAI is channeling Codex adoption through major global consultancies and embedding its own staff inside enterprises — a distribution bet that accelerates reach but also increases large-firm concentration risk and raises integration cost questions for organizations without established consulting relationships.

Executive Summary

OpenAI announced partnerships with a roster of major global systems integrators — including Accenture, Capgemini, Cognizant, Infosys, PwC, and Tata Consultancy Services — to drive Codex adoption across large enterprise software development operations. Simultaneously, it is launching Codex Labs, a program placing OpenAI specialists directly inside client organizations to support integration.

The business logic is clear: enterprise software adoption at scale requires change management, customization, and trust that a direct vendor relationship alone rarely delivers. By routing through established consulting relationships, OpenAI gains access to procurement channels, existing client trust, and implementation capacity it does not have internally. The reported growth figures — from roughly 3 million to over 4 million weekly Codex users within a single month — suggest the product is gaining real traction, though usage figures self-reported by vendors should be read with appropriate skepticism.

Strategically, this move acknowledges competitive pressure. Anthropic’s Claude models have been gaining enterprise credibility in coding and reasoning tasks, and Microsoft, Google, and Amazon are all investing heavily in differentiating their own AI offerings for business customers. OpenAI is concentrating on Codex and ChatGPT after scaling back experimental projects, which signals a focus on revenue-generating products over exploratory initiatives.

Relevance for Business

For SMBs, the consultancy-led distribution model has a direct implication: the primary path to Codex adoption at scale runs through large consulting engagements, which is not the entry point for most small and mid-size businesses. This is not a product designed or priced for the SMB market at this stage. However, the broader dynamic matters: as enterprises adopt AI coding tools at scale through these channels, competitive pressure on development speed and cost will increase, and SMBs that do not have comparable tooling in their own development operations may face a widening productivity gap. The consolidation of AI distribution through a small number of global consultancies also reinforces concentration risk in the enterprise AI supply chain.

Calls to Action

🔹 If your organization uses external development resources, ask your current vendors or agencies whether they are integrating Codex or comparable tools — and what the cost and quality implications are.

🔹 For internal development teams, evaluate Codex alongside competing tools (GitHub Copilot, Cursor, Claude Code) on actual workflow fit, not vendor partnership announcements.

🔹 Do not treat OpenAI’s consultancy partnerships as a signal to act urgently — this story reflects enterprise-scale distribution, not a capability breakthrough requiring immediate SMB response.

🔹 Monitor whether mid-market versions of Codex Labs emerge; right now this program targets large enterprises.

🔹 Use this as a prompt to review whether your development operations have a clear AI tooling strategy — the window for thoughtful adoption is still open.

Summary by ReadAboutAI.com

https://www.reuters.com/business/openai-leans-global-consultancies-expand-codex-use-large-companies-2026-04-21/: April 27, 2026

THE MALPRACTICE TRAP: WHY LAWYERS — AND OTHER PROFESSIONALS — MAY BE FORCED TO ADOPT AI

Fast Company | Thomas Smith | April 20, 2026

TL;DR: A formal American Bar Association opinion suggests that as AI tools improve, lawyers who don’t use them could face malpractice liability — a dynamic that may eventually extend to accountants, doctors, and other fiduciary professionals.

Executive Summary

The legal profession has been slow and reluctant to adopt AI, for understandable reasons: AI-generated errors have already resulted in court sanctions and fines. But a 2024 American Bar Association opinion is now being interpreted as laying the groundwork for an obligation to use AI, not just permission to do so. The ABA’s reasoning is rooted in professional duty: lawyers are obligated to provide competent representation and to keep client costs as low as reasonably possible. As AI tools become capable of producing work that is faster than traditional legal research and comparably rigorous, declining to use them may constitute a failure of those duties. The article draws the analogy to email: a lawyer unfamiliar with electronic communication would have been fully competent in 1990 and negligent today.

The practical threshold has not yet been crossed — current models still hallucinate and lack the reliability required for unsupervised legal use. But practicing attorneys cited in the article suggest that threshold is close, and the ABA’s own framing points toward an eventual professional mandate rather than a choice. The article notes this logic could apply beyond law: medicine, accounting, and financial planning all involve fiduciary duties, and if AI demonstrably improves outcomes in those fields, non-adoption could become a professional liability in each of them.

This piece is written by a journalist drawing on informal attorney conversations and a formal ABA opinion; it is analytical framing, not a settled legal determination. The trajectory, however, is credible and worth monitoring.

Relevance for Business

For SMB leaders, this matters most in two ways. First, if you retain outside legal, accounting, or advisory services, your service providers’ AI competence is increasingly a quality and cost consideration — AI-enabled professionals can work faster and at lower cost, and those gains should in principle flow to clients. Second, if your business employs professionals in regulated roles, AI competency may soon be a compliance requirement, not merely a productivity aspiration. Organizations that have delayed AI training for professional staff on the grounds of risk aversion should monitor regulatory guidance closely — the risk calculus is shifting.

Calls to Action

🔹 Monitor ABA guidance and state bar developments — the obligation to use AI in legal practice is approaching but has not arrived; stay current on your jurisdiction’s position.

🔹 Ask your outside counsel, accountants, and advisors what AI tools they are using — and how that affects the time billed and cost of services to your business.

🔹 Begin building AI literacy in professional roles within your organization — compliance-oriented functions (legal, finance, HR) should be included in AI training programs now, before regulatory requirements formalize.

🔹 Do not over-react — current models still require careful human oversight; the malpractice risk for non-adoption is emerging, not yet present for most professionals.

🔹 Assign your general counsel or compliance lead to track the evolution of AI duty-of-competence standards in your industry’s regulatory environment.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91527510/lawyers-may-soon-have-to-adopt-ai-or-face-malpractice: April 27, 2026

MUSK’S SPACEX ENDGAME: ORBITAL INFRASTRUCTURE AS POWER CONCENTRATION

The Atlantic | Quinn Slobodian and Ben Tarnoff | April 21, 2026