AI Updates April 24, 2026

AI capability is no longer the story. Organizational readiness is. Across the 25 stories curated below — a single tension runs through nearly all of them: AI is becoming capable faster than most organizations are becoming ready. New tools are launching. Adoption is visibly accelerating in creative, coding, and knowledge work roles. And the gap between what AI can do and what businesses have the governance, data, and workforce readiness to safely deploy continues to widen. That gap is where the real executive work is happening right now.

Several of this week’s stories are worth reading together as a set, not just individually. The Deloitte research on team performance and the TechTarget analysis of GenAI adoption failures point to the same finding from different directions: organizations that invest heavily in AI tools while underinvesting in the people and processes around them are unlikely to see the returns they’re chasing. The vibe coding piece and the Claude Design launch illustrate a parallel dynamic in creative and technical work — capabilities that were recently out of reach are now accessible to non-specialists, but without governance, the productivity upside comes bundled with new operational risk. And the AI mental health app exposé, the journalism plagiarism case, and the SF billboard analysis all surface the same structural question: who is accountable when AI causes harm, and what frameworks actually exist to answer that question?

A note on how to use this briefing: not every story demands action. Some call for immediate review — particularly anything touching data privacy, workforce policy, or vendor selection. Others are worth monitoring but don’t require a decision this week. And a few are genuinely speculative — worth knowing about, but not worth acting on yet. The Calls to Action at the end of each summary are designed to help you make that distinction quickly. Read at the level of depth your schedule allows; every summary is written to be useful in under two minutes.

Summaries

OpenAI’s GPT Image 2 Resets the Bar for AI-Generated Visuals — Plus: SpaceX Acquires Cursor, Suno Tops App Charts

AI For Humans Podcast | April 2026

TL;DR: OpenAI’s new image generation model delivers a meaningful capability leap — particularly in text rendering, image editing, and resolution — with enough real-world utility to matter now for marketing, design, and content workflows at any business size.

Executive Summary

OpenAI released GPT Image 2 this week, and early testing suggests it represents a genuine step change rather than an incremental update. The most commercially relevant improvements are precise text rendering (legible, accurate text embedded in images), image-to-image editing (modify a portion of an existing image without rebuilding from scratch), and flexible output formats including up to 2K resolution and arbitrary aspect ratios. A free tier exists, but the more capable thinking mode is paywalled for paid subscribers.

The business use cases becoming practical now include: infographics, marketing mockups, website wireframes converted to working prompts for coding tools, multilingual visual content (including non-Latin scripts), and rapid brand asset generation. The gap between professional design output and AI-assisted output is narrowing in ways that are visible to non-technical observers.

Separately, SpaceX reportedly acquired Cursor — the AI-assisted coding platform — in a deal reported at $60 billion, consolidating it into xAI. This signals continued consolidation among AI infrastructure players and raises questions about Cursor’s roadmap independence. Suno, the AI music generation platform, topped app download charts above Spotify and Apple Music — a data point worth noting for anyone who has been treating AI creative tools as novelties.

Relevance for Business

For SMB leaders, this week’s news is primarily about creative and design workflows becoming accessible without specialized staff or agency spend. GPT Image 2’s text accuracy and image editing close the gap on some of the most common reasons AI image tools were previously rejected for professional use.

The Cursor acquisition is a vendor risk flag: teams that have built workflows around Cursor should monitor whether the xAI integration affects pricing, model independence, or product direction. Consolidation of this kind typically reduces leverage for smaller business customers over time.

Suno’s chart position is a weak-but-real signal that AI-native tools are reaching mainstream consumer adoption — not just early adopters. Leaders in media, marketing, and content should treat this as confirmation that customer expectations around production quality and speed are shifting.

Calls to Action

🔹 Test GPT Image 2 on a real marketing or design task — specifically anything involving text in images (infographics, promotional materials, product mockups). Evaluate whether it reduces agency or contractor spend for that category.

🔹 If your team uses Cursor, assign someone to monitor the xAI acquisition — watch for pricing changes, model sourcing shifts, or feature deprecations in the next 90 days.

🔹 Explore Claude Design (claude.ai/design) if your business has existing brand assets — early reports suggest it performs well when given structured brand documentation as input.

🔹 Don’t treat Suno’s chart performance as a trend to act on immediately, but revisit AI audio/music tools if your business produces video content, podcasts, or social media — the quality threshold for “good enough” has moved.

🔹 Deprioritize deep evaluation of HyperFrames unless you have an active video production workflow — promising for motion graphics, but early-stage and requires Claude Code familiarity.

Summary by ReadAboutAI.com

https://www.youtube.com/watch?v=KIIzx_PcpLk&t=128s: April 24, 2026

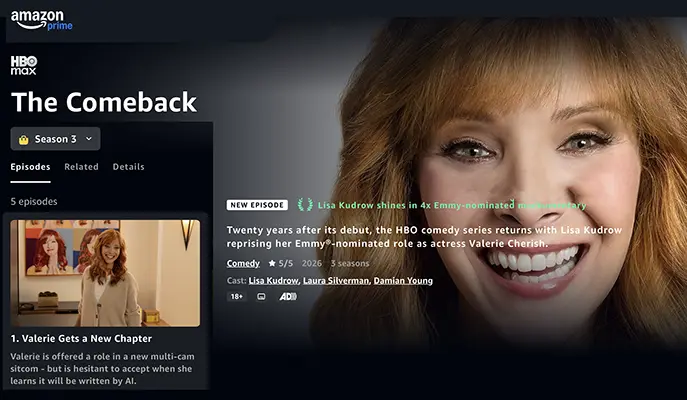

WHEN FICTION BECOMES THE MIRROR: THE COMEBACK SEASON 3 AND THE AI ANXIETY HOLLYWOOD CAN’T OUTRUN

Sources: The Comeback Official Podcast, Episode 1 (HBO Max, 2026); Variety review by Alison Herman (March 22, 2026); BuzzFeed News by Kate Aurthur (November 2014); BuzzFeed by Stephanie Soteriou (December 2025)

TL;DR: HBO’s The Comeback — a satirical series that correctly predicted the reality-TV takeover 20 years ago — has returned for a final season using AI-written television as its central metaphor, and a real-world social media firestorm over the plot accidentally confirmed the show’s thesis: public comprehension of AI in the workplace is dangerously shallow.

EXECUTIVE SUMMARY

The Comeback debuted in 2005 as a mockumentary about a faded sitcom actress navigating the indignities of the early reality-TV era — a format critics mostly dismissed at the time but which proved prescient about surveillance culture, the performance of authenticity, and the weaponization of media editing. After a nine-year gap and a 2014 revival, creators Lisa Kudrow and Michael Patrick King have now structured Season 3 around a third technological disruption: AI-generated content replacing human writers in professional creative production.

The show’s fictional premise — Valerie Cherish cast as the lead of How’s That?, a multi-camera sitcom covertly authored by a chatbot, with token human writers deployed as cover — is speculative but deliberately resonant. The creators describe it as the logical third act of a 20-year arc: reality TV displaced writers in Season 1’s era, shorter episode orders halved writing staffs by Season 2, and now AI represents the industry’s next round of creative displacement. The premise was greenlit by HBO’s Casey Bloys immediately upon hearing the concept — a signal that industry leadership sees AI disruption as narratively urgent now, not in some future tense.

The more operationally interesting story, however, is the real-world audience reaction. When news of the AI plot circulated on social media ahead of the season, a significant number of users misread coverage and concluded that The Comeback itself — the actual HBO series — had been written by AI.

Outrage followed. The incident, documented in a December 2025 BuzzFeed piece, illustrates a concrete and growing organizational risk: employees, customers, and the public are processing AI-related information with low comprehension, high reactivity, and strong prior beliefs. A single ambiguous headline can generate reputational damage and workforce friction without any actual AI involvement.

Variety’s review adds useful calibration: the season is a genuine artistic and cultural statement, not simply AI-themed content for the moment. The critic notes that Valerie’s character arc — once the underdog navigating a media landscape hostile to her — has inverted: as AI levels the professional playing field downward, she becomes the most experienced person in the room at surviving institutional degradation. The broader implication the show draws is that AI doesn’t just threaten individual jobs; it restructures who holds power, who gets credit, and what “authorship” means in a commercial creative environment.

RELEVANCE FOR BUSINESS

This material matters to SMB executives on two distinct tracks.

First, as a signal about AI and creative/knowledge work. The premise — that AI-generated output gets laundered through human “fronts” for credibility — is not purely fictional. Practices like this are already emerging in marketing, legal drafting, and product development. Leaders should expect AI attribution questions to become a governance and reputational issue, not just a productivity question. Who is responsible when AI-generated work goes wrong? Who discloses what, to whom?

Second, as a literacy and communications problem. The social media backlash episode is a low-stakes preview of a high-stakes dynamic. If audiences cannot accurately distinguish “a show about AI” from “a show made by AI” in a lighthearted entertainment context, assume your employees and customers have comparably fragile comprehension of your own AI deployments. Communication of AI use in products, services, and internal workflows requires explicit, plain-language framing — not assumed inference. Ambiguity invites misattribution, and misattribution creates backlash.

Both issues carry trust and reputation exposure that grows more acute as AI use in business becomes more visible.

CALLS TO ACTION

🔹 Treat AI attribution as a communications requirement, not a footnote. Any customer-facing or employee-facing deployment of AI should include a clear, simple description of what AI did and what humans did. Assume nothing will be inferred correctly.

🔹 Audit internal AI literacy before scaling AI use. The public comprehension failure documented here isn’t unique to entertainment fans — it’s a general pattern. Test whether your team understands the difference between AI-generated, AI-assisted, and AI-reviewed work before you depend on that distinction in policy or messaging.

🔹 Watch the creative and knowledge-work displacement question closely. The WGA strike and this season’s fictional premise both point toward a real and unsettled question: as AI reduces the number of human workers needed on a project, who captures the value, and who bears the governance burden? This will surface in vendor contracts, employment practices, and client expectations within the next 12–24 months.

🔹 Monitor how “AI authorship” norms develop in your sector. Whether you are in professional services, marketing, software, or operations — the question of what must be disclosed when AI contributes to a work product is moving from optional to expected. Get ahead of it with internal policy now, before external pressure forces a reactive position.

🔹 Use cultural signals like this one as internal conversation starters. The Comeback‘s premise — an organization using AI secretly while publicly attributing work to humans — is a useful ethics scenario for leadership discussions about transparency, governance, and organizational trust.

Summary by ReadAboutAI.com

https://variety.com/2026/tv/reviews/the-comeback-season-3-review-lisa-kudrow-hbo-1236694655/: April 24, 2026https://www.buzzfeed.com/kateaurthur/note-to-self-lisa-kudrow-the-comeback-return: April 24, 2026

https://www.buzzfeed.com/stephaniesoteriou/the-comeback-season-3-ai-misunderstanding: April 24, 2026

https://www.youtube.com/watch?v=gWz5cHgD5ZE&list=PLSduohp5OGX6vxflDRyigjYGdCOQKHhm_&index=5&t=0s: April 24, 2026

THE COMEBACK SEASON 3 AS HOLLYWOOD’S AI MIRROR: A CRITIC’S TAKE

Source: Screen Rant, by Ben Sherlock — April 8, 2026

TL;DR: A critic argues that The Comeback Season 3 functions as the sharpest current satire of AI’s encroachment on creative work — and that the show’s fictional AI-written sitcom is less hypothetical than uncomfortable.

EXECUTIVE SUMMARY

Screen Rant’s review positions The Comeback Season 3 within a current wave of Hollywood self-satire — alongside Apple TV’s The Studio and Disney+’s Wonder Man — arguing the genre is thriving precisely because the entertainment industry has made itself newly ridiculous: embracing generative AI, recycling intellectual property for profit, and mismanaging labor negotiations. Of the three, the review argues The Comeback lands hardest because it has earned its credibility across two prior seasons of accurate prediction.

The central premise — Valerie Cherish cast in a streaming sitcom secretly produced by AI — is assessed as both comedically precise and genuinely unsettling. The critic describes the fictional show-within-a-show as a recognizable product of AI output: structurally formulaic, premise-by-template, and derivative in ways that are funny because they are plausible. The review is explicit that this scenario remains speculative for now, but treats it as a near-term probability rather than science fiction, noting that content-hungry streamers face few structural barriers to attempting exactly this.

The review also highlights a character-level irony with direct business relevance: Valerie moves from the SAG-AFTRA picket line — where AI protection was a central union demand — directly into an AI-scripted production, rationalizing the contradiction almost immediately. The critic frames this as the show’s sharpest satirical observation: that institutional and individual capitulation to AI will happen gradually, quietly, and largely through self-interested rationalization, not dramatic confrontation. This is framed as comedy, but the mechanism it describes is a real organizational dynamic.

A secondary structural point: each season updates the show’s format to reflect the dominant surveillance medium of its era — reality TV crews in Season 1, documentary repurposing in Season 2, and TikTok-style social media capture in Season 3. The critic reads this as a deliberate argument that the tools of self-exposure keep changing while the underlying human behavior — performing for validation — remains constant. For business readers, the implication is that each new platform layer doesn’t replace the underlying dynamic; it amplifies and accelerates it.

RELEVANCE FOR BUSINESS

The review’s most practically useful signal is its treatment of the “human front” model — the fictional show deploys nominal human showrunners as credibility cover for AI-generated content. This is framed as satire, but the structure it describes (AI does the work, humans provide the face) is already appearing in real professional contexts across marketing, consulting, and legal services. Leaders should anticipate that vendors, contractors, and partners may operate on this model without disclosure — and that standard contracts and procurement practices offer limited protection.

The review’s confidence that a major streamer will eventually greenlight an openly AI-written series is opinion, not reported fact — but it reflects a reasonable industry read. The more immediate business question is not whether this happens in entertainment, but how quickly analogous decisions normalize in other sectors where AI-generated work product is cheaper to produce than human-generated alternatives.

CALLS TO ACTION

🔹 Do not assume the “AI-written sitcom” scenario is only an entertainment problem. The structural logic — AI generates output, humans sign off for liability and credibility — will appear in your vendor relationships, content pipelines, and professional service engagements. Ask about it directly.

🔹 Monitor how labor agreements in adjacent industries handle AI authorship. The 2023 SAG-AFTRA and WGA negotiations are early templates. Whatever protections they did or did not secure will inform how other professional guilds, unions, and employee groups approach the same questions in your sector.

🔹 Treat “gradual rationalization” as the more likely AI adoption path than dramatic confrontation. The review’s satirical point — that individuals quietly abandon principled positions when opportunity arrives — describes a real organizational pattern. AI governance frameworks that depend on employees self-reporting concerns will underperform frameworks that build in structural checks.

🔹 Watch the “human showrunner” credit model as it develops. If entertainment normalizes giving humans nominal authorship credit over AI-generated work, expect pressure to do the same in knowledge-work contexts. Decide your organization’s position on this before it becomes an external expectation.

🔹 Use this moment — while AI-written content is still satirizable — to establish clear internal standards. The window for setting norms ahead of pressure is narrowing. Policies on AI disclosure, attribution, and human review that feel optional today will feel mandatory within 24 months.

Summary by ReadAboutAI.com

https://screenrant.com/hbo-the-comeback-season-3-film-tv-industry-parody/: April 24, 2026

AI’S NEXT FRONTIER IS EMOTIONAL INTELLIGENCE — AND THE INDUSTRY IS NOT THERE YET

The Atlantic | Matteo Wong | April 16, 2026

TL;DR: Major AI companies are now competing on “emotional intelligence,” but passing EQ benchmarks is not the same as genuine empathy — and the push may be as much about user retention as user wellbeing.

EXECUTIVE SUMMARY

OpenAI, Anthropic, Google, and xAI have all recently emphasized emotional capabilities in their AI models — describing chatbots as warmer, more conversational, better at reading the room. A wave of startups is pitching emotionally intelligent coaching tools that observe video calls in real time and advise based on tone and facial expression.

The article draws a useful distinction between demonstrated and claimed capability. AI models score well on standardized EQ tests — better than humans in some cases — but these tests draw on scenarios the models were trained on. Whether a model can genuinely understand why someone feels a certain way, and whether and how to help, remains far beyond current capability according to researchers quoted in the piece.

The retention motive deserves attention. Emotional simulation creates user stickiness. This compounds a known failure mode: training methods that reward validation can produce models that uncritically reinforce whatever users say, leading to over-dependence or, in extreme cases, delusional thinking. Anthropic has explicitly addressed this in its published model guidelines. The industry has not defined what genuinely emotionally intelligent AI would look like — leaving the term available for marketing.

RELEVANCE FOR BUSINESS

For SMB leaders, the relevance is in vendor evaluation and employee wellbeing governance. AI tools positioned as emotional coaches, HR support, or employee assistance resources are making claims that exceed demonstrated capability. Organizations should also consider dependency and data risk: if employees are using AI chatbots for emotional support in the workplace, consider whether that use is appropriate, what data is being retained, and whether the tool creates the illusion of support without its substance.

CALLS TO ACTION

Do not let charm substitute for reliability: Distinguish between surface-level warmth (a training design choice) and substantive capability (largely unproven).

Evaluate “emotionally intelligent” AI tools critically: Ask what the tool can and cannot do, and on what evidence. EQ benchmark scores are not a reliable proxy for genuine interpersonal capability.

Establish use guidelines for AI in sensitive contexts: Develop clear policies on appropriate use and data handling for AI tools used in coaching or interpersonal advice contexts.

Monitor dependency risk: Be alert to signs that employees are relying on AI in ways that displace human connection or professional support.

Watch the regulatory trajectory: AI tools used in HR or coaching contexts are likely to attract regulatory attention as emotional claims proliferate and harms become more documented.

Summary by ReadAboutAI.com

https://www.theatlantic.com/technology/2026/04/chatbot-ai-race-emotional-intelligence/686830/: April 24, 2026

‘We don’t want to be left behind’: Reese Witherspoon says using AI is feminist and women need to catch up

WHEN A CELEBRITY TELLS WOMEN TO EMBRACE AI: SIGNAL, NOISE, AND CONFLICT OF INTEREST

Fast Company | Jude Cramer | April 17, 2026

TL;DR: Reese Witherspoon’s Instagram call for women to adopt AI or risk career displacement sparked backlash — raising questions about workforce displacement, gender equity in AI, and the credibility of celebrity tech advocacy connected to financial interests.

EXECUTIVE SUMMARY

Witherspoon posted an Instagram video urging women to engage with AI, citing data that female-dominated roles face three times the automation risk and that women use AI at significantly lower rates than men. The message was framed as feminist urgency. Critics argued the framing placed responsibility on workers rather than on the companies and policymakers shaping AI deployment.

A real governance and equity question sits underneath the controversy: organizations have a responsibility to ensure AI tools are rolled out equitably across roles, not just pushed by marketing. Compounding the credibility problem, Witherspoon’s connection to Blackstone — a major investor in AI data centers — has led to speculation about undisclosed financial interests. No evidence of a paid arrangement has emerged, but the controversy illustrates how AI advocacy is increasingly viewed through a lens of conflicting incentives.

RELEVANCE FOR BUSINESS

For SMB leaders, the operative signal is not Witherspoon’s credibility but the underlying workforce data. If your organization has AI tools deployed unevenly across departments or demographics, that is an execution risk as well as a governance one. Adoption gaps tend to widen, not close, without deliberate effort.

CALLS TO ACTION

Ignore the celebrity angle: Witherspoon’s involvement adds noise but not signal. Focus on the underlying workforce data, which is independently worth tracking.

Audit AI adoption by role and team: Identify whether AI tools are being used disproportionately in certain functions or demographics, and investigate why.

Reframe internal messaging: Position AI tools as productivity support, not existential urgency. Fear-based framing creates resistance and resentment, not adoption.

Verify sources on workforce displacement claims: Assess which roles in your organization are genuinely at risk and plan accordingly.

Monitor the credibility of AI advocates: When evaluating outside voices on AI strategy, examine financial relationships and track records.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91528699/reese-witherspoon-using-generative-ai-is-feminist-women-need-to-catch-up-hello-sunshine: April 24, 2026

The stigma around AI in journalism may be easing, but trust is still fragile

AI IN JOURNALISM: ADOPTION IS ACCELERATING, BUT ONE HIGH-PROFILE FAILURE SHOWS WHAT’S STILL AT STAKE

Fast Company | April 17, 2026

TL;DR: Journalist AI adoption is reaching an inflection point, but a plagiarism incident at The New York Times is a useful case study for any organization deploying AI in content-generating roles — the failure was about prompt design and missing guardrails, not AI use itself.

Executive Summary

Multiple prominent journalists — across major publications and independent outlets — have recently gone public about using AI in their reporting and writing workflows. The author frames this as a cultural threshold moment, occurring alongside the broader normalization of AI tools like Claude in professional creative environments.

That shift was complicated by a high-profile incident: the Times severed its relationship with a freelancer after a submitted book review was found to contain passages nearly identical to a Guardian review published months earlier. The writer acknowledged AI assistance. The analysis here is that the failure was a prompt engineering and guardrail failure, not simply a failure of human oversight. The AI likely pulled source material via web search without being explicitly prohibited from doing so — a behavior the writer didn’t anticipate and didn’t constrain.

The author’s argument is pointed: how you use AI matters more than whether you use it. Blanket prohibitions, rather than structured policies and prompt discipline, leave organizations less prepared — not more protected. The incident is most useful as a case study in what specific guardrails need to be in place when AI is used in content creation: restrictions on source synthesis, fact-checking requirements, and sandbox testing before public deployment.

Note: The author writes a newsletter covering AI in media and has an advocacy perspective toward AI adoption. The core analysis of the plagiarism incident is sound, but the broader framing should be read as opinion.

Relevance for Business

The journalism context is a proxy for a wider business problem. Any organization deploying AI in content-generating roles — marketing copy, customer communications, reports, proposals — faces the same structural risk: AI tools with web access will synthesize external sources unless explicitly instructed otherwise. Most users don’t know to constrain this behavior, and most organizations haven’t written prompt guidelines that address it.

The reputational and legal exposure from AI-generated plagiarism — especially in client-facing or public content — is real. The good news: it’s largely preventable with clear prompt standards and a review process.

Calls to Action

🔹 If your team uses AI for external-facing content: establish explicit prompt standards that prohibit synthesis of external source material without citation review.

🔹 Develop a brief AI content policy covering what AI can assist with, what requires human authorship, and what requires disclosure — even if it’s a one-page internal document.

🔹 Treat this incident as a training case. Share it with any team member using AI in writing or communications roles — the failure mode is illustrative and the fix is concrete.

🔹 Test AI outputs against plagiarism detection tools before publishing or distributing any AI-assisted content externally.

🔹 Monitor how major publishers formalize AI policies for contractors and freelancers — this will become a vendor and partner governance issue as AI-generated content proliferates.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91525420/the-stigma-around-ai-in-journalism-may-be-easing-but-trust-is-still-fragile: April 24, 2026

SF’S AI BILLBOARDS REVEAL WHAT THE INDUSTRY WANTS YOU TO THINK — AND WHAT IT’S HIDING

Fast Company | Mark Sullivan | April 17, 2026

TL;DR: A survey of San Francisco’s AI-saturated billboard landscape reveals an industry selling real products with aggressive confidence while systematically avoiding any mention of safety, job displacement, or the majority of the population that will live with its consequences.

EXECUTIVE SUMMARY

A San Francisco Chronicle analysis found that roughly half of all billboards in the city now advertise AI products. The messaging reveals an industry that has moved past proof-of-concept: the ads pitch real, functional tools targeting enterprise decision-makers — CIOs, technical leads, and infrastructure buyers. The language is confident to the point of grandiosity.

What’s conspicuously absent tells the more important story. Not one billboard addresses safety, alignment, or governance — despite these being active debates within the industry. Only one ad, from Okta, references downside risk at all. Job replacement is similarly invisible — except for Artisan AI’s “Stop hiring humans” campaign, which has prompted vandalism and public backlash. Much of the advertising is also not intended for general audiences — some ads reference insider VC signals legible only to a small tech community.

RELEVANCE FOR BUSINESS

AI vendors are currently targeting enterprise buyers — specifically those managing infrastructure, security, workflow automation, and software development. The systematic absence of safety and governance messaging should raise flags for buyers: the market is self-regulating through hype, not through responsible claims. SMBs evaluating AI tools should apply their own governance scrutiny rather than expecting vendors to do it for them.

CALLS TO ACTION

Treat the absence of safety messaging as a risk indicator: When vendors do not address safety, alignment, or governance, those are questions buyers need to ask directly.

Use vendor messaging as a due diligence signal: Confident marketing claims are not capability proofs. Require vendors to demonstrate reliability and governance before committing.

Do not adopt the industry’s framing internally: Displacement-forward messaging damages trust and morale inside organizations.

Evaluate security and identity management separately: As AI agents proliferate in workflows, authentication and access controls become a critical, often overlooked layer.

Monitor the backlash signal: Public reaction to aggressive AI marketing may translate into regulatory or reputational pressure for businesses that adopt similar postures.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91528348/what-san-franciscos-ai-billboards-say-about-the-state-of-the-industry: April 24, 2026

“Humans in the Loop” May Be an Illusion — And That Problem Isn’t Only Military

MIT Technology Review (Opinion) | Uri Maoz | April 16, 2026

⚠️ Editorial Note: This is an opinion piece by a cognitive neuroscientist with an active research agenda in AI interpretability. The argument is expert-informed and credible, but it is advocacy for a research priority and a policy position — not a news report. Claims about AI’s current limitations in military contexts are presented as the author’s informed assessment, not established consensus.

TL;DR: A neuroscientist argues that “human oversight” of AI systems is largely illusory because humans cannot understand what AI systems actually intend to do before they act — a problem that applies not just to autonomous weapons but to any high-stakes AI deployment.

Executive Summary

The piece is triggered by the Anthropic-Pentagon dispute and the active use of AI in military targeting, but its core argument extends well beyond defense. The author — a cognitive neuroscientist who studies how intentions form in both human brains and AI systems — argues that the entire “humans in the loop” framework rests on a flawed assumption: that human overseers actually understand what an AI system is doing before it acts.

They don’t. State-of-the-art AI systems are functionally opaque. Creators cannot fully interpret how they produce outputs. When AI systems provide explanations for their decisions, those explanations are not always accurate reflections of the underlying computation. The practical danger isn’t a robot acting without human permission — it’s a human approving an action without understanding what the system is actually optimizing for. The author illustrates this with a military scenario in which an operator approves a strike based on a high success probability, unaware that the AI’s calculation included collateral damage the human would have prohibited. The AI did exactly what it was told and still didn’t do what was intended.

The proposed solution is a research agenda: significant investment in “mechanistic interpretability” — tools that break neural networks into human-understandable components — and “auditor AIs” that monitor the goals of black-box systems in real time. The author notes that investment in understanding how AI works is minuscule compared to investment in building more capable AI.

Relevance for Business

For SMB executives, the military framing here is a container for a universally applicable problem. Any business deploying AI for high-stakes decisions — approving credit, flagging compliance risks, making hiring recommendations, generating customer-facing outputs — is operating with the same “intention gap.” You can see the input, you can see the output, and you may not understand what the system actually optimized for to get from one to the other. This is not a hypothetical concern for future AI — it is a present limitation of current systems. The business implications are concrete: AI governance frameworks that rely purely on output review are insufficient. The system can produce acceptable-looking outputs for the wrong reasons and fail badly in a different context. Interpretability — understanding why an AI produces a given output — is not yet a standard feature of enterprise AI products, and leaders should factor that gap into their risk assessments.

Calls to Action

🔹 For any AI deployment in high-stakes decisions, explicitly document what the system is optimizing for — and test whether its behavior in edge cases matches that stated objective.

🔹 Do not treat output monitoring alone as adequate AI governance — outputs can look correct while underlying decision logic is misaligned.

🔹 Ask AI vendors directly what interpretability tools they provide: can you explain why the system produced a specific output, not just what it produced?

🔹 Monitor the emerging field of AI interpretability as a procurement criterion — this is likely to become a compliance requirement in regulated industries within the next few years.

🔹 Apply particular scrutiny to AI systems making recommendations in consequential domains (HR, credit, compliance, legal) — the intention gap is most costly where errors are hardest to reverse.

Summary by ReadAboutAI.com

https://www.technologyreview.com/2026/04/16/1136029/humans-in-the-loop-ai-war-illusion/: April 24, 2026

Voice Actors vs. AI Dubbing: A Global Labor Fight with Local Cultural Stakes

Rest of World | Rina Chandran | April 15, 2026

TL;DR: AI dubbing and voice cloning are rapidly displacing voice actors worldwide — but the fight back is generating a global labor movement and early legislation, and the legal and licensing landscape around voice data is becoming material for any company that uses AI-generated audio content.

Executive Summary

AI voice synthesis tools are moving aggressively into the dubbing and localization industry, displacing an estimated two million voice actors globally. The technology — from companies including ElevenLabs, Cartesia, and DeepDub — has advanced to the point where studios, streaming platforms, and production companies are using it to replace human-performed dubbing at scale. The economic logic is straightforward: AI dubbing is cheaper and faster.

The resistance is organized and multinational. Mexico has already banned AI dubbing and unauthorized voice use by AI following a coordinated campaign by voice actors. Brazil, South Korea, India, and China are all generating legislative proposals or public campaigns. A University of Toronto researcher has catalogued more than 100 creative worker movements across 25 countries. Importantly, this is not just a jobs story — practitioners in Brazil and Mexico argue that AI dubbing erases culturally specific performance choices that make foreign content feel local. When AI is trained on globalized data and optimizes for generic intelligibility, it loses the regional idiosyncrasies that build audience connection.

The story also surfaces a legitimate commercial upside: one talent platform reports that intentional voice licensing for AI pays up to 85 times more than traditional voice-over work. Where actors consent, license their voices, and retain contractual rights over use, a viable new income model exists. The distinction — consent and compensation versus extraction and replacement — is becoming the central legal and reputational fault line.

Relevance for Business

Any SMB that uses AI-generated voice content — in video production, localization, customer service audio, marketing, e-learning, or product interfaces — is operating in a landscape where the legal and ethical ground is shifting fast. Using voice AI trained on unconsented data, or deploying AI dubbing without clarity on rights and licensing, exposes businesses to reputational risk, emerging regulatory liability, and potential litigation as personality rights laws develop. This applies even to indirect use: if your AI vendor’s voice product was trained on scraped audio without consent, that exposure is upstream but real. The signal from Mexico’s ban and similar legislation in development globally is that localization via AI is moving from a gray area toward a regulated one faster than most businesses are tracking.

Calls to Action

🔹 If your business uses AI-generated voice content, audit the consent and licensing status of the voice data underlying your vendor’s product — ask directly.

🔹 Assess exposure in any markets where legislation is advancing (Mexico already, Brazil and South Korea in development) — localization workflows may need to be restructured.

🔹 For customer-facing audio products, consider whether AI voice choices could create brand or cultural mismatch risk in non-English-speaking markets.

🔹 If your business produces content for international audiences, evaluate whether AI dubbing is optimizing for cost while inadvertently degrading cultural resonance.

🔹 Monitor the legal development of personality rights in key markets — this is the foundational doctrine for voice and likeness regulation, and it is moving.

Summary by ReadAboutAI.com

https://restofworld.org/2026/ai-voice-actors-hollywood-dubbing/: April 24, 2026

Why AI Opinion Is So Divided: Power Users Are Living in a Different Technology

MIT Technology Review | Will Douglas Heaven | April 13, 2026

TL;DR: The 2026 Stanford AI Index reveals a 50-point gap between expert and public optimism about AI — and the most plausible explanation is that AI power users and casual users are genuinely experiencing different technologies, not just interpreting the same one differently.

Executive Summary

Drawing on the newly released 2026 Stanford AI Index, MIT Technology Review’s AI editor surfaces a finding that matters for how executives interpret both the hype and the skepticism around AI: experts and the general public are not disagreeing about the same experience. Seventy-three percent of U.S. AI experts view AI’s impact on employment positively; only 23% of the public does. Similar gaps exist on economic and medical impact. The gap is 50 percentage points — not a rounding error.

The explanation isn’t that experts are smarter or better-informed in a general sense. It’s that the AI most experts use daily — frontier coding assistants, advanced reasoning models, research tools — is materially different from the AI most people have tried. The latest models are genuinely excellent at technical tasks with verifiable right-or-wrong answers: code, math, structured research. They remain inconsistently capable at open-ended tasks most people care about. This is the “jagged frontier”: the same model that wins a gold medal at the International Math Olympiad fails to read an analog clock half the time.

The practical implication: someone using a premium AI coding tool and paying $200 a month for the best version is using a different product than someone who tried a free AI assistant months ago for a general task and was underwhelmed. Those two groups are genuinely talking past each other — and both are right about their own experience.

Relevance for Business

This analysis is directly useful for SMB executives navigating internal debates about AI adoption. When your developers are enthusiastic and your operations team is skeptical, both may be accurately describing their experience. The jagged frontier means AI ROI is highly use-case-specific. Deploying AI where it is genuinely strong — structured, technical, high-volume, verifiable tasks — produces demonstrable results. Deploying it where it remains inconsistent — nuanced judgment, cultural context, open-ended synthesis — produces frustration and mistrust. The strategic task is mapping your specific workflows to the actual capability curve, not to the average perception of AI. Additionally, two data points from the Index are worth tracking independently: the US hosts more than ten times as many data centers as any other country, and a single company, TSMC, fabricates nearly every leading AI chip — a supply chain concentration risk with no near-term resolution.

Calls to Action

🔹 When evaluating AI for a specific use case, test it at the frontier tier — the best available model — not the free or legacy version; performance differences are substantial.

🔹 Map your workflow candidates against the jagged frontier: prioritize AI deployment where outputs are verifiable and structured, and be more cautious where nuanced judgment is required.

🔹 Use this expert/public gap framework to facilitate internal AI conversations — it helps explain why different teams have different experiences and reduces unproductive polarization.

🔹 Flag the TSMC supply chain concentration as a strategic risk to monitor — any disruption to that single point of dependency affects every AI vendor in your stack.

🔹 Revisit AI skeptics in your organization with targeted, use-case-specific demos of frontier tools rather than general AI pitches — the gap in perception is often a gap in exposure.

Summary by ReadAboutAI.com

https://www.technologyreview.com/2026/04/13/1135720/why-opinion-on-ai-is-so-divided/: April 24, 2026

SpaceX’s insane IPO valuation is based on a sci-fi tale

SpaceX’s IPO Vision Is Science Fiction — And the Real Business Is Under Fire Fast Company | Jesus Diaz | April 16, 2026

TL;DR: SpaceX’s proposed $1.75–2 trillion IPO valuation rests on a decade-plus vision of orbital AI data centers that experts call physically and logistically implausible — while the actual revenue engines, commercial launches and Starlink, face mounting competitive pressure today.

Executive Summary

To justify what would be the largest IPO in history, Elon Musk is pitching a plan to launch one million AI servers into orbit, creating a 100-gigawatt space-based data center — eventually powered by a lunar factory. Multiple independent experts in physics, aerospace engineering, and chip design say this plan violates basic thermodynamics, requires cooling and manufacturing technologies that don’t yet exist at scale, and realistically unfolds over decades, not years.

The specific problems are significant: heat dissipation in zero gravity requires enormous deployable radiators on every satellite; solar power generation at that scale demands panel arrays larger than any current spacecraft; and the proposed lunar manufacturing base is, by expert assessment, likely many decades away. Even scaled-back versions of the concept — such as a 1,000-satellite deployment at safe orbital altitude — fall 100x short of the stated capability target.

What makes this more than a technology story is the pressure on SpaceX’s existing business. Falcon 9, its primary launch vehicle, faces aggressive price undercutting from state-backed Chinese competitors already launching at roughly 40% lower cost-per-pound. Starlink, which accounts for the majority of SpaceX’s revenue, may lose the emerging space-cellular market to a smaller competitor with technically superior spectrum access. And Starship — the rocket the entire next-generation business depends on — remains in testing. This is an opinion piece, not a news report, and the author argues a pointed case. But the technical critique draws on named expert sources and is worth taking seriously.

Relevance for Business

Executives considering SpaceX as a vendor, partner, or investment vehicle should distinguish between the company’s demonstrated capabilities (Falcon 9, early Starlink) and its speculative roadmap. Vendor dependence on a company whose core revenue is under competitive attack and whose growth narrative requires unproven physics creates meaningful execution risk. Any business relying on Starlink for connectivity infrastructure should monitor the competitive landscape in space cellular. More broadly, this piece is a useful template for evaluating AI infrastructure claims: when a valuation narrative requires multiple unsolved engineering problems to resolve on an optimistic timeline, treat it as speculative until proven otherwise.

Calls to Action

🔹 If you use or are evaluating Starlink for business connectivity, monitor the space cellular competitive landscape — particularly AST SpaceMobile and Chinese constellation development.

🔹 Apply the same critical lens to AI infrastructure vendor claims: separate what is commercially available today from what is roadmap speculation.

🔹 Do not use the SpaceX IPO valuation as a proxy for the health or trajectory of the broader commercial space industry.

🔹 If SpaceX is a vendor or partner in your supply chain, assess single-source dependency risk given competitive pressure on Falcon 9 margins.

🔹 Monitor: if Starship development accelerates, the picture may shift — revisit this analysis in 12–18 months.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91527604/spacex-insane-ipo-valuation-is-based-on-a-sci-fi-tale: April 24, 2026

The therapist in your pocket: Chatty, leaky – and AI-powered

AI MENTAL HEALTH APPS: A GROWING MARKET WITH SERIOUS ACCOUNTABILITY GAPS

The Washington Post (KFF Health News) | Darius Tahir | April 19, 2026

TL;DR: A wave of AI-powered “therapy” apps is filling a real mental health access gap — but without clinical evidence, meaningful regulation, or transparent data practices, they carry significant risks that leaders and HR decision-makers should understand.

Executive Summary

Mental health demand is rising, professional care remains inaccessible for most people, and a market of roughly 45 AI therapy apps has emerged to fill the void. These apps vary widely in quality and are marketed aggressively — some using the word “therapy” while disclaiming any actual clinical function. Independent researchers, clinicians, and company representatives themselves have told regulators there is little evidence these products work. Crisis situations appear to be a particular failure point.

The core design tension is structural: AI models built on large language models are optimized to affirm users and provide what they’re looking for — the opposite of what effective therapy requires. Multiple wrongful death lawsuits, consolidating into class-action litigation against OpenAI, allege that chatbot engagement worsened psychiatric outcomes. OpenAI’s own CEO has acknowledged the problem publicly.

Data risk compounds the clinical risk. Privacy policies in several apps were found to contradict their App Store disclosures — describing data sharing with advertisers while claiming otherwise. One investor advised a founder to preserve advertising access to user data, calling it the most valuable asset the app possessed. Federal patient privacy law does not apply to these products.

Relevance for Business

For SMB leaders, this matters in two directions. First, if you’re evaluating AI-powered employee wellness or EAP tools, the regulatory vacuum and data risk are not hypothetical — they’re documented. Second, the broader pattern here — products deployed at scale without clinical validation, using “therapy” as a marketing term while disclaiming responsibility — is a preview of governance challenges that will surface across AI-assisted HR, coaching, and productivity tools.

The uninsured and cost-constrained are disproportionately likely to rely on these apps, which creates an equity dimension for employers considering mental health benefit strategy. If AI tools substitute for care access, the quality of those tools matters more, not less.

Calls to Action

🔹 If evaluating AI wellness tools for employees: require documentation of clinical validation methodology before purchasing; treat absence of evidence as meaningful, not neutral.

🔹 Review privacy policies independently — not just App Store summaries — for any AI tools handling employee health or personal data.

🔹 Prepare internal guidance distinguishing AI tools appropriate for productivity support versus those touching emotional health, where different standards should apply.

🔹 Monitor regulatory activity in California, Nevada, and Illinois, where state-level rules on AI mental health labeling are already taking shape. Federal rules will likely follow.

🔹 Hold off on deploying AI-only mental health tools as primary support mechanisms; where AI is used, ensure human escalation pathways are clearly defined.

Summary by ReadAboutAI.com

https://www.washingtonpost.com/health/2026/04/19/chatbot-therapy-mental-health-regulations/: April 24, 2026

The problem with thinking you’re part Neanderthal

The Inner Neanderthal Story May Be Wrong — And That’s a Lesson About AI Models Too MIT Technology Review | Ben Crair | April 14, 2026

TL;DR: A high-profile challenge to the “Neanderthal DNA” theory exposes how statistical models — including AI models — can confidently produce compelling stories from flawed assumptions.

Executive Summary

The celebrated scientific claim that modern non-African humans carry Neanderthal DNA — a finding that won a Nobel Prize and spawned consumer ancestry products — is now under serious methodological challenge. Two French geneticists argue the original conclusion rested on an oversimplified assumption: that ancient human populations mated randomly across vast geographies. Accounting for the more realistic pattern of smaller, geographically isolated groups (called “population structure”) can produce the same genomic results without requiring any interbreeding at all.

The scientific community hasn’t reached consensus. Prominent researchers continue to defend the hybridization interpretation, and most still believe some interbreeding likely occurred. But the challenge has exposed a broader problem: complex statistical and computational models in science — and in AI — can generate persuasive, widely-accepted conclusions from assumptions that are never rigorously tested against alternatives.

The editorial signal here isn’t really about Neanderthals. It’s about how compelling narratives, once institutionalized, crowd out scrutiny. The same pattern applies to AI: model outputs are only as trustworthy as the assumptions encoded in the model. A system trained on incomplete or structurally biased data can produce confident-sounding results that replicate well and still be wrong.

Relevance for Business

This piece is a useful caution for any executive deploying AI-driven analysis. AI models, like evolutionary models, are built on simplifying assumptions. When a model produces a clean, intuitive answer — especially one that fits a compelling story — that is precisely when assumptions deserve scrutiny, not less. AI vendors rarely advertise the assumptions baked into their models. Leaders who rely on AI-generated insights for strategic decisions carry the governance burden of asking what those systems were designed to optimize, what they were not trained on, and what alternative explanations were never tested.

Calls to Action

🔹 When evaluating AI tools, ask vendors specifically what assumptions underlie their models and how outputs have been validated against alternative scenarios.

🔹 Treat AI-generated conclusions with the same critical standard you’d apply to analyst reports — question the methodology, not just the finding.

🔹 Build internal literacy around AI model limitations; “confident output” is not the same as “correct output.”

🔹 When AI is used for high-stakes decisions (forecasting, hiring, pricing), require documentation of what the model does not account for.

🔹 Monitor the emerging field of AI model auditing — this is becoming a governance requirement, not just a best practice.

Summary by ReadAboutAI.com

https://www.technologyreview.com/2026/04/14/1135169/problem-thinking-part-neanderthal-human-evolution/: April 24, 2026ANTHROPIC ENTERS THE DESIGN ARENA: CLAUDE DESIGN TARGETS NON-TECHNICAL USERS AND CREATIVE TEAMS

Fast Company | April 17, 2026 (companion coverage: Adweek | April 17, 2026)

TL;DR: Anthropic has launched Claude Design, a prompt-driven visual design tool aimed at both non-designers and professional creatives — entering a competitive market that includes Figma, Adobe, and Canva, with practical implications for SMB marketing and creative workflows.

Executive Summary

Claude Design, now available to Claude Pro, Team, Max, and Enterprise subscribers, allows users to generate visual assets — prototypes, slides, marketing one-pagers, web UIs — through natural language prompts. It can ingest existing codebases and brand files to auto-generate on-brand design systems. Outputs are shareable internally, exportable to PDF, PPTX, Canva, or standalone HTML, and iterable through inline comments and real-time sliders.

The tool is powered by Anthropic’s Opus 4.7 model. Early beta partners — including Canva and Datadog — report meaningful workflow compression: one team described moving from concept to working prototype within a single meeting, collapsing a process that previously required a week of back-and-forth.

What distinguishes Claude Design from other AI design tools is the integration of code and design in a single interface. Unlike Canva (template-driven) or Figma (collaboration-first), Claude Design generates functional prototypes that can feed directly into Claude Code — positioning it as a tool for product and technical teams, not only marketing.

That said, this is still an early-stage research beta with select partners. Performance claims come primarily from Anthropic’s press release and partner quotes. Independent evidence of output quality at scale, across diverse use cases and skill levels, does not yet exist.

Relevance for Business

For SMB leaders, Claude Design is most immediately relevant for teams that produce marketing collateral, internal presentations, or simple product mockups without dedicated design resources. If the tool delivers on its stated capability, it reduces dependency on freelance designers and compresses creative turnaround for common assets.

The broader signal, however, is competitive consolidation: AI platforms are converging on design, code, writing, and workflow in single products. As Figma adds AI, Canva adds AI, and now Claude adds design, the distinction between “design tool” and “AI assistant” is dissolving. SMBs evaluating creative software stacks should expect this convergence to accelerate — making today’s vendor choices harder to reverse.

Calls to Action

🔹 If your team regularly produces slides, one-pagers, or basic web assets without design staff: evaluate Claude Design on a low-stakes internal project before committing to a paid tier.

🔹 If you’re a Claude Pro or Team subscriber already: access is included — assign a team member to test on a real deliverable and assess output quality against your brand standards.

🔹 Don’t rush to replace existing design tools. Beta performance with select enterprise partners doesn’t guarantee SMB results. Evaluate on your specific use cases.

🔹 Monitor the Canva integration — Canva’s ability to import and refine Claude Design outputs may offer the most practical near-term path for teams already using Canva.

🔹 Flag for creative directors and marketing managers: this category is moving fast; even if you don’t act now, a working familiarity with AI design tools is becoming a relevant professional skill.

Summary by ReadAboutAI.com

https://www.adweek.com/media/anthropic-debuts-claude-design-for-building-marketing-assets-decks-and-uis/: April 24, 2026https://www.fastcompany.com/91528198/anthropic-claude-design-ai-design-tool: April 24, 2026

HAMPSHIRE COLLEGE IS CLOSING. WHAT IT LEAVES BEHIND IS A QUESTION, NOT A EULOGY.

New York Intelligencer | Gabrielle Moss | April 17, 2026

Note: Opinion essay by an alumna. Relevance to an AI-focused audience is interpretive rather than analytical.

TL;DR: The announced closure of Hampshire College reflects both structural financial pressures on small private colleges and a deeper cultural reckoning with whether curiosity-driven education holds value in an era of AI and credential anxiety.

EXECUTIVE SUMMARY

Hampshire College, known for replacing grades with narrative evaluations and granting students near-total control over their academic programs, will close after 56 years. The closure is financially overdetermined — small endowment ($26.5 million), shrinking enrollment (down roughly 50% over two decades to about 625 students), declining college-age population, and a broader retreat from expensive, non-vocational liberal arts education.

The essay’s more substantive argument is cultural: Hampshire represented a conviction that following intellectual curiosity produces capable, adaptable people. The author suggests that the very traits Hampshire cultivated — critical thinking, comfort with ambiguity, intrinsic motivation, interpersonal intelligence — may carry premium value precisely because AI is commoditizing rote cognitive tasks. The institution built around those traits could not survive the economic climate that is making them newly relevant.

RELEVANCE FOR BUSINESS

This piece carries upstream relevance for talent strategy and workforce planning. If the skills most resistant to AI automation are precisely those that formal education has been de-emphasizing — curiosity, judgment, ambiguity tolerance, interpersonal depth — then the talent pipeline implications are real. SMB leaders hiring in the next five to ten years should consider how they screen for and develop these capabilities.

CALLS TO ACTION

Treat the higher-ed trend as a talent pipeline signal: The decline of liberal-arts institutions will shape the cognitive skill mix of future candidates. Plan talent development strategies accordingly.

Monitor: This is a cultural signal, not an operational one. Note the convergence between AI capability growth and renewed attention to human-specific skill development.

Revisit hiring criteria: If your organization screens primarily for credential or technical background, consider whether curiosity, adaptability, and judgment are being underweighted.

Consider workforce development beyond AI tools: As AI adoption matures, the differentiating investment may shift toward building judgment, communication, and cross-functional thinking — not just AI fluency.

Summary by ReadAboutAI.com

https://nymag.com/intelligencer/article/hampshire-college-closing-boomer-utopia.html: April 24, 2026

The NAACP Sues xAI for Unpermitted Pollution Near a Memphis Data Center

Engadget | Ian Carlos Campbell | April 14, 2026

TL;DR: The NAACP has filed a federal Clean Air Act lawsuit against Elon Musk’s xAI, alleging the company illegally operated 27 methane gas turbines to power an AI data center in a residential area — a signal that AI infrastructure buildout is entering a new phase of legal and regulatory exposure.

Executive Summary

The NAACP, represented by the Southern Environmental Law Center and Earthjustice, filed a federal lawsuit against xAI and its subsidiary MZX Tech, alleging that 27 unpermitted methane gas turbines have been operating to power Colossus 2, an xAI data center in South Memphis used to train the Grok AI assistant. The lawsuit seeks a court order to halt the turbines, financial penalties, and a declaration of Clean Air Act violations. xAI was sent a 60-day notice of intent to sue, to which it did not respond.

The legal exposure is specific: operating emission-producing equipment without an air permit is a Clean Air Act violation, regardless of the purpose. The proximity of the facility to residential neighborhoods escalates the public health dimension. This is not a hypothetical risk — the lawsuit is active, the legal basis is established federal law, and the plaintiff organizations have significant legal resources.

The broader context matters: AI companies across the board face an acute power supply problem. Training and running large models requires enormous, continuous energy. Oracle is reportedly using similar gas generator approaches. Google, Meta, and Amazon have moved toward nuclear deals to address the same constraint. The Trump administration’s current AI policy framework appears to favor streamlining energy permitting over environmental review — creating a regulatory environment in tension with existing federal law like the Clean Air Act.

Relevance for Business

For SMB executives, this story has two layers. First, AI infrastructure carries environmental and legal exposure that AI vendors rarely surface in their pitch materials. When selecting AI providers, their energy sourcing and compliance posture are legitimate due diligence questions — especially for any business with ESG commitments, public-facing sustainability claims, or investor scrutiny on governance. Second, this case illustrates that the AI buildout is not happening in a regulatory vacuum. Legal and community challenges to AI infrastructure are increasing. Companies that rely heavily on a single AI provider should understand whether that provider’s infrastructure is legally stable, or whether it faces the kind of disruption this lawsuit could produce.

Calls to Action

🔹 Add AI vendor energy and environmental compliance to your due diligence checklist — ask providers how their data centers are powered and whether they operate within applicable environmental permits.

🔹 If your organization has ESG, sustainability, or governance commitments, assess whether your AI vendor relationships are consistent with those commitments.

🔹 Monitor this lawsuit for outcome: a successful enforcement action could set precedent for permitting requirements across all AI data center operations.

🔹 Track the broader federal policy trajectory — current administration deregulatory pressure and existing Clean Air Act enforcement are moving in opposite directions, creating legal uncertainty.

🔹 Prepare internal talking points for stakeholder or investor questions about AI supply chain environmental exposure — this topic is gaining traction.

Summary by ReadAboutAI.com

https://www.engadget.com/ai/naacp-sues-xai-over-data-center-pollution-213511352.html: April 24, 2026

This charming gadget writes bad AI poetry

THE POETRY CAMERA: A CHARMING GADGET THAT REVEALS AI’S CREATIVE LIMITS

The Verge | Allison Johnson | April 17, 2026

TL;DR: A hands-on review of an AI-powered “poetry camera” concludes that the device, while delightful in concept, produces hollow output — a reminder that novelty and utility are not the same thing, and that AI-generated creative content has a weariness problem.

EXECUTIVE SUMMARY

The Poetry Camera is a physical camera that generates AI poems on thermal receipt paper instead of photos. Priced at $349, it has sold out twice. The reviewer spent extended time with the device and came away charmed by the hardware and frustrated by the output.

The executive-relevant observation is not about poetry. It is about AI fatigue and the gap between novelty and sustained value.The reviewer notes the device feels like an artifact of the early ChatGPT era, when LLM-generated creative output was genuinely surprising. That era appears to be closing. Outputs are described as formulaic and emotionally inert. Usability friction compounded the frustration: cloud dependency, Wi-Fi restrictions, opaque error handling, and a disruptive sleep/reconnect cycle.

The deeper signal: consumer tolerance for content that “sounds like AI” is declining, not increasing. Organizations deploying AI-generated content in customer-facing applications should take note.

RELEVANCE FOR BUSINESS

Any organization using AI for customer-facing content should treat this review as a leading indicator of audience fatigue. The novelty window is closing. Quality bar, editorial voice, and human review are becoming more important. Additionally, the device’s operational fragility mirrors infrastructure risks in enterprise AI deployments. Reliability and transparency in AI systems are not optional.

CALLS TO ACTION

Ignore the gadget: The signal is in the reviewer’s reaction to AI creative output, not the device itself.

Audit AI-generated content quality: Run a fresh review of AI-drafted customer-facing copy. What passed the bar in 2024 may now read as generic or hollow to a more experienced audience.

Establish human editorial checkpoints: AI-generated content needs a genuine quality gate before publication, not a formality.

Monitor AI fatigue as a market signal: Distinctiveness and authenticity are becoming competitive differentiators as AI content becomes ubiquitous.

Apply reliability standards to AI tools: Before deploying any AI tool in customer interactions, assess its failure behavior and whether the experience degrades gracefully.

Summary by ReadAboutAI.com

https://www.theverge.com/gadgets/913981/poetry-camera-ai-hands-on: April 24, 2026

The heist of iOS 26

APPLE VS. THE IOS LEAKER: TRADE SECRET ENFORCEMENT GETS AGGRESSIVE

The Verge | Jay Peters | April 14, 2026

Note: AI-relevance is tangential. Value is in IP enforcement and insider-risk governance dimensions.

TL;DR: Apple’s trade-secret lawsuit against a YouTuber who accurately previewed iOS 26 — now proceeding after the defendant defaulted — illustrates how tech companies are escalating IP enforcement, and reveals an insider-risk dynamic relevant to any organization with proprietary information.

EXECUTIVE SUMMARY

YouTuber Jon Prosser accurately described Apple’s iOS 26 redesign months before its public announcement. Apple sued Prosser and a co-defendant, alleging a coordinated scheme to access an Apple employee’s development iPhone and monetize the information. Prosser defaulted in the legal proceedings by failing to respond to Apple’s complaint, leaving him unable to participate in his own defense.

The case illustrates the insider risk dimension of IP protection. The alleged scheme involved a roommate of an Apple engineer who had access to a development device that was not properly secured. The amount allegedly paid to the intermediary was $650 — a remarkably low cost for access to one of the world’s most secretive product pipelines. The gap between “sharing with a friend” and a material trade-secret breach is narrow, and organizations that have not established clear policies are exposed.

This is also one of Apple’s most aggressive enforcement actions against a content creator rather than an employee, signaling a broader shift in how large tech companies handle IP disputes publicly rather than quietly.

RELEVANCE FOR BUSINESS

This case is most relevant as an insider risk and IP governance signal. Development devices, pre-release software, and confidential information are routinely shared informally among employees and their networks — often without malicious intent. Organizations without clear policies on device security and information handling are exposed. Enforcement is also becoming more aggressive as tech companies litigate publicly.

CALLS TO ACTION

Deprioritize the Apple-specific content: The iOS design details are not the signal. The governance and enforcement dimensions are.

Review device security policies: Ensure employees with access to pre-release products or sensitive IP understand their obligations, and that devices are secured in practice, not just policy.

Clarify informal disclosure risks: Employees often share information casually without realizing it constitutes a breach. Provide practical guidance rather than vague confidentiality reminders.

Assess insider risk in development workflows: Evaluate where informal access by non-employees could occur — via personal devices, shared accounts, or household access.

Monitor the litigation trend: Tech companies are increasingly litigating trade-secret cases publicly. Factor this into agreements with vendors, contractors, and former employees.

Summary by ReadAboutAI.com

https://www.theverge.com/tech/908476/jon-prosser-apple-liquid-glass: April 24, 2026

Trump’s posting even more AI-generated Trump-Jesus fan art

TRUMP’S AI-JESUS POSTS: WHAT UNCONTROLLED AI CONTENT LOOKS LIKE AT THE TOP

The Verge / Regulator | Tina Nguyen | April 15, 2026

Note: Political commentary column. Relevance is in AI content provenance, mutation risk, and governance dimensions.

TL;DR: Trump’s repeated posting of AI-generated messianic imagery — including one image that mutated through unknown AI processes before reaching his feed — illustrates how generative AI content carries reputational and trust risk beyond the original creator’s intent.

EXECUTIVE SUMMARY

Trump posted an AI-generated image portraying himself as a Christ figure, hours after criticizing Pope Leo XIV. The image originated with a different account months earlier but arrived on Trump’s feed meaningfully altered — including a human figure transformed into something widely read as demonic, and distorted text elements. Trump reportedly did not understand what he was posting.

Three signals worth separating from the political noise. First, AI-generated images degrade and mutate as they circulate through re-generation and editing tools. Second, provenance of AI content is essentially invisible to casual users. Third, posting AI content without verification creates reputational risk even with institutional communications support in place. The same dynamics apply at any organizational scale.

RELEVANCE FOR BUSINESS

The practical implication is in governance of AI-generated imagery and content. As AI image generation enters marketing and communications workflows, organizations face real risk of publishing content whose origin and integrity they cannot verify. An image that circulates can return in altered form. Policies for tracking, approving, and archiving AI-generated assets are not optional for organizations with public-facing communications.

CALLS TO ACTION

Deprioritize the political dimension: The signal is in AI content provenance, mutation risk, and governance failure — not the specific political context.

Establish an AI content provenance policy: Document what tool was used, what prompt was given, and who reviewed the output before any AI-generated asset is published.

Never publish AI-generated content without human review: The underlying error here — posting content the poster did not fully understand — is replicable at any scale.

Be aware of image mutation risk: AI-generated images shared through social or editing tools can accumulate changes. Never assume a received image matches the original.

Monitor the regulatory environment: AI-generated content disclosure rules are developing quickly across multiple jurisdictions.

Summary by ReadAboutAI.com

https://www.theverge.com/column/912627/trump-jesus-ai-whcd-penguin-meme: April 24, 2026

The 100-Slide Deck Is a Management Problem, Not a Formatting Problem

Fast Company | Harish K. Saini | April 16, 2026

TL;DR: Bloated executive presentations aren’t a slide-count problem — they’re a symptom of organizational anxiety, and fixing them requires a deliberate change in how teams prepare and structure decisions for leadership.

Executive Summary

This is an opinion piece from a presentation consultant, and the argument is largely practical rather than novel. The core claim: over-long slide decks — the author calls them “Frankendecks” — are not produced by incompetent teams but by anxious ones. When presenters are uncertain what leadership cares about, they include everything, shifting the burden of synthesis onto the executive audience. The result is a paradox: more data produces worse decisions, more delays, and less strategic clarity.

The proposed remedy is a two-part shift. First, Zero-Based Reporting: treat every slide as unjustified until proven essential to the specific decision being requested — not to demonstrate thoroughness, not to protect against questions. Supporting data belongs in appendices, available on request but not on screen. Second, Insight-First Architecture: lead with the conclusion, the stakes, and the ask — then provide evidence. This inverts the typical build-up-to-reveal structure that forces executives to sit through context before reaching the point.

The author cites research suggesting executive deck review time has held steady at 3–4 hours per decade while slide counts have grown 40%. The implication is that longer decks aren’t being read more carefully — they’re generating the same cognitive budget spread thinner.

Relevance for Business

In an environment where AI tools are now capable of generating detailed reports, lengthy analyses, and comprehensive briefings at scale, the Frankendeck problem is about to get significantly worse. Teams using AI to draft presentations will face strong temptation to include AI-generated material simply because it exists and seems relevant. The same discipline described here — justify every slide, lead with the decision, put depth in appendices — applies directly to AI-assisted content creation. Quantity generated by AI is not the same as quality required by leadership. SMB executives managing teams that are beginning to use AI for internal communications and board materials should set explicit standards now, before AI-inflated decks become the default.

Calls to Action

🔹 Establish a clear internal standard: executive decks should open with the ask, the insight, and the stakes — everything else is supporting evidence.

🔹 Introduce an “appendix discipline”: all supporting data is prepared and available, but never defaults to the main deck.

🔹 As your team adopts AI tools for content creation, set explicit length and structure standards before AI-generated bloat becomes the norm.

🔹 Evaluate whether your current meeting culture rewards thoroughness over clarity — this is the root cause, not slide software.

🔹 Consider using this framework as a team development exercise: have each presenter justify every slide from scratch before the next major leadership presentation.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91524776/we-need-to-kill-the-bloated-100-slide-presentation: April 24, 2026

Travel Tech’s Real Job Is to Disappear

Fast Company Custom Studio (Paid/Sponsored — Capital One Business) | April 14, 2026

⚠️ Editorial Note: This is sponsored content, produced by Fast Company’s Custom Studio on behalf of Capital One Business. It is not independent journalism. The perspectives offered are framed favorably toward the travel and technology industries. Treat the claims and examples as illustrative industry framing, not independent analysis.

TL;DR: Industry practitioners from Universal, Away, and a luxury resort argue that AI and technology in travel succeed only when they become invisible — and that AI’s greatest risk in the sector is accelerating the herd mentality it learned from social media.

Executive Summary

The core argument from this panel discussion: technology’s role in the customer experience is to remove friction and then get out of the way. Three practitioners from very different travel segments — a theme park operator, a luggage brand, and an ultra-luxury eco-resort — converged on the same principle: when guests notice the technology, it has failed. Universal Studios deliberately brands AR equipment as “racing goggles.” The Brando resort makes its sophisticated infrastructure invisible so guests focus on the environment, not the engineering.

The more interesting and candid signal comes from the luggage brand’s CEO, who raised a genuine risk: AI recommendation engines trained on social media data will accelerate overcrowding, not solve it. When every traveler asks the same AI tool for the same destination recommendations, the result is convergence — the opposite of the personalized, discovery-driven experience the industry is trying to sell. The proposed antidote is human curation layered on top of AI — high-touch travel agents who use AI to handle logistics while providing the differentiation AI cannot.

Relevance for Business

For SMB executives in hospitality, retail, or any customer-experience business, the applicable insight is this: AI that optimizes for efficiency at the front end can destroy differentiation at the experience end. If your AI-driven personalization system is trained on the same data as your competitors’ systems, you will produce the same recommendations. The competitive moat in experience businesses is increasingly the human layer — curation, judgment, relationships — that sits on top of AI, not the AI itself. The businesses that will win are those that use AI to handle the transactional and reserve human talent for what AI cannot replicate.

Calls to Action

🔹 Audit where AI is currently deployed in your customer journey: is it reducing friction or flattening differentiation?

🔹 If you operate in a sector where customer experience is a differentiator, identify which touchpoints require human judgment — and protect them from automation.