AI Updates May 7, 2026

The week’s reporting lands in three distinct registers, and it is worth naming them clearly before you read. First, there is the legal and governance layer — the Musk v. OpenAI trial in Oakland is now in full swing, and whether or not Musk prevails, the proceedings are producing a live, public record of how AI companies are structured, how founders exercise (or lose) control, and what happens when mission and commercialization pull in opposite directions. That record will matter long after the verdict. Second, there is the capital layer — Anthropic’s $65 billion in new commitments, Anthropic’s Wall Street joint venture, Big Tech’s $700 billion in aggregate AI capex, and the first serious public signals that OpenAI may be missing its own internal targets. The money is still flowing, but the scrutiny is catching up. Third, and most immediately actionable for SMB leaders, is the operational layer: what AI is actually doing inside organizations, to workflows, to human judgment, and to the people whose jobs sit in AI’s path.

This edition gives particular weight to the cognitive and workforce dimensions of AI adoption, because that is where the most rigorous and least-covered reporting currently lives. The TIME feature on AI and cognitive de-skilling is not a think-piece; it reports on peer-reviewed research with direct implications for how you structure AI use inside judgment-heavy teams. The New Yorker’s investigation of AI diagnostic tools in medicine is the most detailed case study available of what happens when AI enters a high-stakes professional domain without structured protocols. Both pieces point to the same finding: the sequence in which AI enters a workflow matters as much as whether it is used at all. That is a governance question, not a technology question, and it belongs on your leadership agenda now.

The remaining stories form a coherent operating picture of the AI landscape your decisions sit inside: a $1.5 billion Anthropic-Wall Street joint venture that accelerates enterprise AI adoption at a scale SMBs will need to track; Amazon’s move to commercialize its logistics infrastructure in direct competition with UPS and FedEx; Oracle’s 30,000-person layoff as a documented model of AI-driven workforce transition done badly; and the Economist’s report on an AI supply crunch that challenges the assumption that AI will simply get cheaper and more available. Taken together, this week’s edition is a map of the territory — legal, financial, operational, and human — that AI is actively reshaping. The summaries that follow are calibrated to help you navigate it with clarity and appropriate urgency, not alarm.

White House Eyes AI Model Approvals — And Anthropic’s Unreleased Mythos May Have Triggered It

TL;DR: A New York Times report reveals the White House is considering requiring government approval before AI models can be publicly released — a potential regulatory shift that Anthropic’s withheld “Mythos” model appears to have catalyzed.

Executive Summary

The core development here is straightforward: the federal government is actively discussing whether AI models should require pre-release approval, driven by two concerns — avoiding political liability from an AI-enabled cyberattack, and securing early access to frontier capabilities for defense and intelligence. This isn’t nationalization, but it’s meaningfully closer to formal regulatory oversight than anything the U.S. has attempted before.

Anthropic’s Mythos sits at the center of this. The company withheld the model on safety grounds roughly a month ago, a move the source podcast frames as potentially strategic as much as precautionary. Whether Mythos is genuinely dangerous or whether Anthropic’s public posture was designed to force the conversation, the outcome is the same: a safety-framed AI narrative has now reached the White House policy level. Anthropic’s CEO had previously called for regulation publicly; the Mythos episode may have accelerated that timeline considerably.

Two secondary signals are worth noting. First, a Harvard-affiliated trial found AI outperforming physicians on emergency triage decisions — a concrete, peer-reviewed capability demonstration that cuts against the ambient negativity around AI. Second, AI-generated micro-dramas are proliferating rapidly in China and beginning to reach U.S. platforms (ByteDance’s PineDrama is already in the App Store). The format — short, serialized, low-cost, AI-produced — is beginning to disrupt video production economics, though content quality remains uneven.

Relevance for Business

On the regulatory front: Pre-release government review would primarily affect AI developers, not enterprise users — but it would slow the pace of new model availability and create new dependencies on federal approval timelines. SMBs that have built workflows around rapid model iteration should monitor this closely. Any approval regime that delays frontier model releases will affect vendor roadmaps and, by extension, the competitive tools available to your teams.

On AI narratives: Public skepticism about AI is measurable and growing — a point the podcast illustrates with an NBA team’s anti-AI tweet drawing 16,000 likes in hours. Leaders using or deploying AI tools internally should be prepared for employee and customer resistance that doesn’t track with actual capability. Sam Altman’s acknowledgment that the industry has failed to communicate benefits in human terms is notable — the narrative problem is now recognized at the top.

On AI video/content production: The micro-drama trend signals that AI video is crossing from novelty into volume production. For businesses in marketing, media, or content-adjacent functions, the cost of producing short-form serialized video content is dropping sharply — but so is the signal-to-noise ratio. Saturation is arriving faster than quality.

Calls to Action

🔹 Monitor the pre-release approval story — assign someone to track legislative or executive action following the NYT report. If your operations depend on specific model capabilities, vendor access timelines could become a planning variable.

🔹 Don’t conflate the regulatory debate with your tool decisions — pre-release review, if implemented, targets AI labs, not enterprise users. Avoid over-reacting to headlines that aren’t yet operational.

🔹 Prepare internal AI communication — public skepticism is real. If you’re deploying AI tools internally or with customers, develop straightforward messaging around what the tools do and don’t do. Don’t assume enthusiasm.

🔹 Evaluate AI-assisted video for marketing use cases — if your business produces short-form content, the cost curve on AI video production is worth a practical test now. Quality varies widely; assess with clear criteria.

🔹 Watch the Harvard triage study for your sector. AI outperforming physicians on emergency triage decisions is a signal that applies beyond medicine. Identify where AI-assisted decision support could reduce error or cost in your own operations.

Summary by ReadAboutAI.com

https://www.youtube.com/watch?v=FMoO1ndNpNI: May 7, 2026

WSJ Future of Everything 2026

Source: WSJ Future of Everything Conference Program | Published: May 4–5, 2026

TL;DR: The WSJ’s flagship annual conference featured Colin Angle — Roomba co-founder — revealing his next robotics company in a session that signals physical AI for the home is moving into the mainstream business conversation.

Executive Summary

The WSJ Future of Everything is the Journal’s marquee live event, convening CEOs, policymakers, and founders across finance, technology, health, and beyond. The 2026 edition — held May 4–5 — included a dedicated session in which Colin Angle, co-founder of iRobot and now CEO of Familiar Machines & Magic, revealed his next venture to WSJ Technology Columnist Christopher Mims.

The placement of this session matters editorially. The WSJ Future of Everything is a curated signal of what institutional business media considers consequential enough to feature alongside banking leaders, AI workforce panels, and macroeconomic discussions. The Angle session — titled “The Guy Who Brought Us The Roomba Has Something New Up His Sleeve” — was not a tech-track niche event. It was on the main Future Stage, alongside conversations with Amazon’s Panos Panay and Bank of America’s Brian Moynihan.

The program itself offers no reportable detail beyond the session description: “Roomba’s Creator Unveils His Next Venture.” The substance of what Angle announced, a bear-shaped quadruped companion robot under the brand “Familiar Machines & Magic,” comes from separate reporting on the event, not from the conference program.

What the conference context adds is framing: consumer physical AI, specifically the emotional and relational dimension of home robotics, is now being treated as a serious business topic at the level of Wall Street Journal leadership programming — not just a tech enthusiast story.

Relevance for Business

The WSJ Future of Everything program functions as a rough proxy for which technology themes are crossing from early-adopter conversation into executive-level awareness. Physical AI entering the home — not just as a labor tool, but as a presence designed for human connection — is now in that conversation. Leaders who have been monitoring AI primarily through a productivity and automation lens should note that a second vector, centered on care, companionship, and behavioral engagement, is gaining institutional visibility.

For SMBs, the practical implication is timing: this is the moment to begin forming a point of view, not yet a moment to act. The fact that this topic reached the WSJ main stage suggests the window between “emerging” and “mainstream business consideration” is narrowing.

Calls to Action

🔹 Treat this as a signal about the AI conversation shifting. When the WSJ features consumer companion robotics alongside banking and workforce AI, it reflects a broadening of what counts as executive-relevant AI. Update your internal scanning accordingly.

🔹 Review the substantive reporting from this event (Forbes, The Verge) for the product and market details the conference program itself does not provide.

🔹 Note the WSJ Future of Everything as an annual calibration tool. The session mix each year reflects which technology themes are moving from specialist to mainstream business priority. Add it to your annual intelligence-gathering calendar.

🔹 Begin forming your organization’s position on physical AI in care and home settings — even if action is 2–3 years away. The speed at which physical AI is mainstreaming suggests that waiting for the technology to be proven before forming a view may compress your preparation window.

Summary by ReadAboutAI.com

https://www.wsj.com/future-of-everything: May 7, 2026

https://www.dowjones.com/press-room/the-wall-street-journals-the-future-of-everything-returns-to-new-york-city/: May 7, 2026

https://futureofeverything.wsj.com/event/the-future-of-everything/: May 7, 2026

AI IS CHANGING HOW DIRECTORS AND CINEMATOGRAPHERS WORK — BUT NOT THE WAY YOU MIGHT THINK

Fast Company | May 1, 2026

TL;DR: Despite headlines about AI-generated video, working filmmakers are using AI primarily as a back-office and pre-production tool — not a replacement for human craft — and their experience offers a practical template for how knowledge workers in any industry can integrate AI without ceding creative or professional control.

Executive Summary

Fast Company’s Shreya Chaganti profiles working cinematographers and directors who describe AI’s actual impact on their day-to-day work — and the picture is considerably more modest, and more useful, than the hype suggests. The consensus among practitioners: AI-generated video tools like Google’s Veo3, Pika Labs, and Kling AI are improving, but they remain limited to short-form content, struggle with creative precision, and are not displacing production professionals in any meaningful volume.

Where AI is genuinely delivering value is in workflow support: storyboarding and visual referencing via Midjourney and Runway, shot list generation, pre-production logistics, email drafting, budget management, and negotiation prep via ChatGPT. One cinematographer describes using AI to simulate the role of a talent agent walking him through a negotiation; another uses it to generate rough shot lists she then refines in her own voice. Former American Society of Cinematographers president Michael Goi captures the practical consensus: AI won’t elevate a mediocre filmmaker — but it can help a skilled one sharpen their vision.

The piece also notes a viral data point worth watching: an AI-generated TikTok microdrama called Fruit Love Island amassed 300 million views before being flagged for low quality, suggesting AI-generated content can achieve reach at scale, but audience tolerance for low quality remains a limiting factor.

Relevance for Business

This is one of the most practically useful pieces for SMB leaders in this batch. The filmmaking context is a direct analog for any knowledge-intensive profession: the AI tools that deliver measurable ROI right now are organizational and pre-production — research, drafting, structuring, logistics. The tools that promise to replace human creative output are still limited, inconsistent, and audience-tested with mixed results. For leaders managing creative, marketing, or professional services teams, the lesson is clear: AI as a workflow accelerator is deployable now; AI as a creative substitute requires much more caution and oversight.

Calls to Action

🔹 Identify your organization’s pre-production analog — wherever planning, drafting, and logistics happen before skilled execution, AI tools are likely ready to provide measurable time savings now.

🔹 Test AI for internal workflows before client-facing use — the cinematographers here use AI for shot lists and emails, not finished products; apply the same sequencing in your own operations.

🔹 Do not expect AI to compensate for skill gaps — the practitioners quoted are clear that AI amplifies competence but doesn’t manufacture it; assess what baseline quality your team brings before deploying.

🔹 Monitor AI video generation for marketing use cases — the technology is advancing rapidly for short-form content; assign someone to track quarterly developments, particularly in tools like Veo3 and Kling AI.

🔹 Watch the Fruit Love Island data point — 300M views followed by a quality flag is a useful benchmark: AI-generated content can achieve reach, but audience tolerance for slop is not infinite.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91533937/director-cinematographer-ai-tools: May 7, 2026

AI IS TURNING EVERY STORY INTO RAW MATERIAL

Fast Company | May 1, 2026

TL;DR: “Liquid content” — the AI-driven ability to convert any piece of content into any other format — is now a real production capability, but the article’s most valuable contribution is its honest accounting of why the business case is harder than it looks.

Executive Summary

Media journalist Pete Pachal examines the concept of “liquid content” — the idea that AI tools can transform content across formats (article to video, podcast to social clips, archive to multimedia) cheaply and at scale. He reports from NAB Show and Adobe Summit, where production systems that do exactly this are already commercially available. The technology is real: companies like Amagi and Stringr’s Genna are actively converting live newscasts and written articles into short-form video for social distribution, near-automatically.

But the piece earns its credibility by identifying three structural limits that most vendor pitches omit. First, generative AI content underperforms with audiences: synthetic voices and AI-generated visuals drive lower engagement than human-produced equivalents, and organizations that lean too far into generation rather than assembly may find the audience numbers don’t justify the investment. Second, AI-driven content repurposing only works if the underlying data is clean: messy metadata, broken tagging systems, and archive migrations that corrupted records will hamper any AI system built on top of them. Third, expansion into new platforms still requires human strategy: AI can produce the content, but it cannot replace the judgment needed to build and retain an audience on a new channel.

The article’s most useful insight: archives are an undervalued asset. Organizations with large content libraries and clean metadata are better positioned to benefit from liquid content strategies than those starting from scratch.

Relevance for Business

This piece applies directly to any SMB that creates content — whether for marketing, thought leadership, training, or customer education. The liquid content opportunity is real, but the preconditions are often unmet. Before investing in AI-driven content repurposing tools, leaders should audit two things: the quality of their existing content metadata, and whether their team has the strategic capacity to manage a new platform or format, not just produce for it. The piece is also a useful corrective to vendor claims that AI will deliver immediate content ROI — the reality involves data cleanup, quality thresholds, and ongoing human oversight.

Calls to Action

🔹 Audit your content archive and metadata quality — this is the precondition for any AI-driven content repurposing strategy; if your tags, dates, and categorizations are incomplete or inconsistent, fix that first.

🔹 Distinguish between AI-assembled content and AI-generated content — assembling existing footage and text into new formats performs better with audiences than fully synthetic output; plan accordingly.

🔹 Do not launch a new platform on AI content alone — expansion to video, podcast, or social requires audience strategy, not just production capability; make sure you have both before committing resources.

🔹 Evaluate liquid content tools with a pilot, not a platform commitment — test a specific use case (e.g., converting blog posts to short video clips) before investing in a broader content transformation infrastructure.

🔹 Monitor ROI expectations carefully — as more organizations adopt AI repurposing strategies, supply of reformatted content will increase and audience attention will dilute; niche publications with strong brand identity are better positioned than general-interest ones.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91532289/ai-is-turning-every-story-into-raw-material: May 7, 2026

THE AI SUPPLY CRUNCH IS HERE

The Economist | April 30, 2026

TL;DR: AI demand is outpacing infrastructure capacity across every layer of the stack — chips, power, and data centers — and the economics of who gets access, and at what cost, are shifting fast.

Executive Summary

The AI industry is facing a genuine supply constraint. Token consumption — the basic unit of AI output — quadrupled in just three months, driven heavily by AI coding tools. Major providers are already rationing: adjusting usage terms, shelving product lines, and publicly acknowledging that processing capacity is limiting growth. These aren’t temporary friction points; they reflect structural delays measured in years, not months.

The constraint is multi-layered. Advanced chips remain scarce, but the bottleneck extends to memory, CPUs, power equipment, and physical data center construction — all of which carry multi-year lead times. Supply chains are not positioned to respond quickly, and equipment manufacturers are still investing cautiously relative to what hyperscalers need.

This scarcity is concentrating power rapidly. The five largest cloud providers are committing over $750 billion in capital expenditure this year — a scale that effectively locks up available hardware before smaller players can reach it. The companies best positioned are those with the balance sheets to reserve capacity years in advance. Pricing power at the chip layer is extraordinary: leading manufacturers are operating at gross margins that would be remarkable in any industry, and that dynamic is not about to reverse. For businesses that depend on AI services, the direction of pricing pressure is up — and the era of falling inference costs may not last.

Relevance for Business

The widely held assumption that AI will simply get cheaper and more available is increasingly at odds with structural reality. SMBs that have built workflows, cost models, or productivity assumptions around current AI pricing and availability should stress-test those assumptions now. Vendor dependence is rising, not falling — and the gap between what large enterprises can lock in versus what SMBs can access is likely to widen. Efficiency — not just adoption — is becoming the relevant measure.

Calls to Action

🔹 Audit your AI cost exposure. If current workflows assume stable or declining AI costs, build contingency into your planning for pricing increases over the next 12–24 months.

🔹 Assess vendor concentration risk. If your business depends on a single AI provider, understand their capacity commitments and what service degradation or rationing could mean operationally.

🔹 Monitor usage efficiency now. Treat AI consumption the way you’d treat any constrained resource — track it, set budgets, and establish internal standards before cost pressure forces the discipline.

🔹 Watch for new pricing tiers and access restrictions. Providers under capacity pressure will change terms. Stay current on the service agreements that govern your AI tools.

🔹 Deprioritize infrastructure-layer investment decisions. Custom chip development or alternative compute strategies are not viable for SMBs — this is a strategic space to observe, not enter.
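The first and third actions above (auditing cost exposure and budgeting AI consumption) can be sketched as a simple stress test. This is a minimal illustration, not a forecasting model: the starting spend and every scenario percentage below are hypothetical placeholders rather than figures from The Economist, and the function name `project_monthly_cost` is invented for this example. It assumes price and usage changes compound smoothly month over month.

```python
def project_monthly_cost(current_monthly_cost, annual_price_change,
                         annual_usage_growth, months):
    """Project monthly AI spend `months` from now.

    Annual rates are converted to equivalent monthly factors and compounded.
    A rate of 0.25 means +25% per year; -0.20 means -20% per year.
    """
    monthly_price_factor = (1 + annual_price_change) ** (1 / 12)
    monthly_usage_factor = (1 + annual_usage_growth) ** (1 / 12)
    return current_monthly_cost * (monthly_price_factor * monthly_usage_factor) ** months


# Hypothetical starting point: replace with your own billing data.
CURRENT_MONTHLY_COST = 2_000.0

# Hypothetical scenarios: (annual price change, annual usage growth).
SCENARIOS = {
    "prices fall 20%/yr, usage flat": (-0.20, 0.0),
    "prices flat, usage +50%/yr": (0.0, 0.50),
    "prices rise 25%/yr, usage +50%/yr": (0.25, 0.50),
}

for label, (price_change, usage_growth) in SCENARIOS.items():
    for horizon in (12, 24):  # the 12 to 24 month planning window
        cost = project_monthly_cost(
            CURRENT_MONTHLY_COST, price_change, usage_growth, horizon
        )
        print(f"{label:<36} month {horizon}: ${cost:,.0f}")
```

Comparing the scenarios side by side shows how much of a budget depends on the falling-price assumption. If the plan only works in the first scenario, that is the exposure to flag.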

Summary by ReadAboutAI.com

https://www.economist.com/leaders/2026/04/30/the-ai-supply-crunch-is-here: May 7, 2026

AMERICA’S LARGEST LANDOWNER IS USING AI TO DIGITIZE THE FOREST

The Wall Street Journal | April 23, 2026

TL;DR: Weyerhaeuser’s AI deployment across its Indiana-sized timberland operation — from autonomous logging equipment to individual-tree digital twins — is a concrete example of how AI creates genuine competitive advantage in capital-intensive, data-rich, old-economy industries, not just in tech.

Executive Summary

Weyerhaeuser, the 125-year-old timber company and the country’s largest private landowner, is betting that AI can deliver $1 billion in incremental annual profit by 2030 — roughly doubling its 2025 earnings — without relying on any increase in lumber prices. Its shares have fallen roughly 40% from their 2022 peak as housing demand softened, making the AI-driven efficiency strategy a structural response to market conditions, not an opportunistic add-on.

The company’s AI initiatives span three distinct layers. Operational AI — predictive maintenance on mill equipment, real-time demand matching, and route optimization for 5,000 daily logging trucks — mirrors what other manufacturing-sector companies are deploying. More distinctive is its digital twin program: using satellite imagery, drone photography, and lidar mapping to build a tree-by-tree database of its timberlands. This enables seedling survival monitoring without field foresters, and will eventually support harvest planning optimized for financial returns decades out. Most forward-looking is its autonomous equipment program: a remotely operated logging skidder, controlled from 400 miles away, is already in pilot; the company’s SVP of timberlands stated it puts them on a path to full autonomy across the logging process.

The hire of a former Amazon Alexa executive to lead AI deployment signals that Weyerhaeuser is treating this as a serious technology transformation, not a departmental experiment.

Relevance for Business

This piece matters for SMB leaders in two ways. First, as a template for AI value extraction in asset-intensive, data-rich industries: Weyerhaeuser’s competitive advantage stems from 125 years of proprietary forest data that no competitor can replicate. SMBs with deep operational data in niche industries — agriculture, logistics, construction, manufacturing — may have similar untapped advantages. Second, as a labor displacement signal: the path toward one operator running multiple autonomous machines is explicit here, not speculative. Leaders in industries with significant field, logistics, or equipment-operating workforces should be tracking autonomous operations closely, even if full deployment is still years away.

Calls to Action

🔹 Assess your proprietary data assets — if your organization has accumulated years of operational data (equipment performance, customer behavior, field conditions), evaluate whether that data is structured well enough to support AI-driven decision-making.

🔹 Track autonomous equipment developments in your sector — the Weyerhaeuser example is forestry, but similar trajectories are active in agriculture, construction, warehousing, and logistics; assign someone to monitor quarterly.

🔹 Consider AI for operational optimization before transformation — Weyerhaeuser’s most immediate gains come from predictive maintenance and route optimization, not autonomous logging; these near-term applications are worth evaluating now regardless of industry.

🔹 Watch for the “digital twin” model in your industry — creating a detailed digital representation of physical assets (inventory, equipment, facilities, land) is becoming a foundation for AI-driven operational planning; assess feasibility for your context.

🔹 Note the executive hire signal — Weyerhaeuser brought in a senior Amazon AI executive to lead this work; if competitors in your industry are making similar hires, that is an early indicator of accelerating AI investment.

Summary by ReadAboutAI.com

https://www.wsj.com/tech/ai/americas-largest-landowner-is-using-ai-to-digitize-the-forest-bd3eec86: May 7, 2026

Big Tech’s $700 Billion Spending on AI This Year Is Called the ‘Greatest Capital Misallocation in History’

MarketWatch (via WSJ) | April 30, 2026

TL;DR: The four largest technology companies have collectively raised their 2026 AI capital expenditure plans to nearly $700 billion — even as free cash flow collapses and critics question whether the underlying economics of large language models can support the investment.

Executive Summary

Alphabet, Amazon, Meta, and Microsoft have each revised their AI capital spending upward following Q1 earnings, bringing the four-company total to approximately $700 billion for 2026 alone. This is not a stable plateau — it represents an escalation from the $650 billion these companies had already announced. All four cited capacity constraints as the driver; they cannot deploy AI fast enough to meet demand, and chip and memory supply chain bottlenecks are adding to costs.

The financial strain is visible and accelerating. Amazon’s free cash flow fell 95% year-over-year to $1.2 billion in Q1. Alphabet’s dropped 47%. Meta, which unlike the others has no public cloud platform to monetize excess compute, has now raised over $50 billion in debt in the past two quarters to fund AI spending it must recoup primarily through advertising. The pattern of declining cash generation alongside rising borrowing is being flagged by credit analysts.

AI researcher Gary Marcus’s characterization of this as the greatest misallocation of capital in history is an opinion, not a consensus view. Wall Street has not abandoned the AI trade, but analysts are becoming more selective — focusing on whether revenue is accelerating proportionally and whether cost discipline exists outside of AI capex. The core structural concern: large language models are increasingly commoditized, pricing pressure is intensifying, and customer ROI remains uncertain.

Relevance for Business

For SMB executives, this story carries a counterintuitive signal: the AI price war that critics predict is good for buyers. If hyperscalers are overbuilding and competing aggressively, AI compute and API pricing are likely to fall. SMBs should expect continued commoditization of AI tools — which strengthens the case for adoption, but weakens the case for locking into any single provider’s ecosystem.

The debt and cash flow dynamics, however, introduce a different risk: financial stress at hyperscaler scale can lead to product rationalization, pricing shifts, and reduced support for lower-value customer segments. SMBs that depend on specific cloud AI services should be aware that the economics of those platforms are under pressure.

The “capacity constrained” framing from all four earnings calls also signals continued delays and costs in AI infrastructure, which affects everything from data center availability to model access and pricing for enterprise customers.

Calls to Action

🔹 Resist locking into a single cloud AI provider long-term — the competitive dynamics being described suggest pricing will continue to shift and model availability will broaden. Maintain flexibility.

🔹 Expect continued AI tool price compression — use this environment to negotiate better terms on cloud AI services or to evaluate alternatives before committing to multi-year agreements.

🔹 Ask your AI vendors directly about their pricing roadmap for the next 12–18 months. The current investment environment makes near-term pricing instability likely.

🔹 Treat the ROI question seriously — if the world’s largest technology companies are struggling to demonstrate returns on AI investment, scrutinize your own AI deployment’s measurable impact with the same discipline.

🔹 Monitor Meta’s bond issuance and credit ratings — if financial stress at hyperscale becomes a story, it will have downstream effects on cloud service terms and reliability for commercial customers at every size.

Summary by ReadAboutAI.com

https://www.wsj.com/wsjplus/dashboard/articles/big-techs-700-billion-spending-on-ai-this-year-is-called-the-greatest-capital-misallocation-in-history-7d44aa4b: May 7, 2026

So, About That AI Bubble

The Atlantic | May 1, 2026 | By Rogé Karma

TL;DR: AI coding agents — particularly Anthropic’s Claude Code — have driven revenue growth so sharp and fast that the “AI bubble” narrative has structurally reversed, though profitability and expansion beyond software remain unresolved questions.

Executive Summary

Six months ago, the dominant concern was that AI investment had outpaced any plausible return. That framing is now harder to sustain. Anthropic’s annualized revenue has jumped from $14 billion to $30 billion in just two months, with growth rates the article compares — credibly, not hyperbolically — to Google and Zoom at their peaks. The driver is not consumer enthusiasm but enterprise adoption of AI coding agents. Goldman Sachs researchers found software companies routinely exceeding their AI budgets “by orders of magnitude,” with some allocating up to 10 percent of total engineering labor costs to AI tools. The share of U.S. businesses with a paid AI subscription has reportedly crossed 50 percent, up from roughly 25 percent at the start of 2025.
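To put the revenue jump in perspective, the implied compound monthly growth rate can be worked out directly from the two figures quoted above. The calculation below is an illustration added here, not something stated in the article. It assumes smooth compounding between the two data points, and the function name `implied_monthly_growth` is made up for this sketch.

```python
def implied_monthly_growth(start_value, end_value, months):
    """Return the constant monthly growth rate that turns start_value
    into end_value over the given number of months."""
    return (end_value / start_value) ** (1 / months) - 1


# Figures as quoted in the summary: $14 billion to $30 billion in two months.
rate = implied_monthly_growth(14e9, 30e9, 2)
print(f"Implied monthly growth: {rate:.1%}")  # prints "Implied monthly growth: 46.4%"
```

A rate of that size describes a short burst, not a sustainable trend. Treat it as a measure of how abrupt the shift was, not as a basis for extrapolation.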

The article credits a specific capability shift: AI agents that can autonomously complete multi-step programming tasks — previously requiring days of human work — in hours, with minimal correction. Controlled research from METR found that the same developers who were 20 percent slower with earlier AI tools completed tasks nearly 20 percent faster using current tools. That reversal is the core signal. It suggests earlier skepticism wasn’t wrong for its time, but the tools have materially changed.

The skeptic case isn’t dismissed — it’s restated fairly. Leading AI firms are still not profitable (Anthropic projects 2028, OpenAI 2030), and the current revenue boom is heavily concentrated in software development. Whether AI agents can deliver comparable productivity gains across legal, marketing, finance, and other knowledge work remains genuinely uncertain. A cited MIT study suggests AI completion rates for general white-collar tasks are rising fast (50% → 65% in roughly a year), but that trajectory hasn’t yet closed the gap with coding. The bubble risk hasn’t disappeared — it has shifted. If demand plateaus at coding, the infrastructure buildout now underway could produce significant overcapacity.

Relevance for Business

For SMB executives, the practical implication is straightforward but consequential: AI coding tools have crossed from “interesting” to “operationally significant” for any business that builds or maintains software. Teams are reporting 4x output with the same headcount. That’s not a rounding error — it’s a workforce planning variable.

More broadly, the article raises a live question for all knowledge-work-dependent businesses: how quickly will AI agents move from coding into your workflows? Agentic tools capable of running product research, drafting deliverables, and managing schedules overnight are already in use. The pace of capability improvement — not just in coding but across general white-collar tasks — is faster than most vendor timelines suggested.

Two second-order risks deserve attention: First, infrastructure constraints are real — Anthropic has already begun rationing access to its coding tools during peak hours, meaning reliability is not guaranteed as adoption scales. Second, vendor dependence is deepening rapidly; companies overrunning AI budgets “by orders of magnitude” are building workflows around tools they don’t control, at price points that may shift.

Calls to Action

🔹 If your business builds or maintains software, evaluate current AI coding tools seriously — the productivity evidence is now controlled and material, not anecdotal.

🔹 Audit your AI spend against actual output gains — enterprise budgets are overrunning projections; understand where you are before that becomes a governance problem.

🔹 Monitor AI agent tools for non-coding workflows (research, scheduling, document creation) — capability is advancing faster than most internal roadmaps assume.

🔹 Don’t over-index on coding benchmarks as a proxy for your industry — the article’s core debate is whether AI productivity generalizes beyond software; that question is open, and your answer should depend on your specific workflows.

🔹 Track infrastructure access as a business continuity issue — rationed access during peak hours is an early warning; build vendor redundancy into any AI-dependent workflow.

Summary by ReadAboutAI.com

https://www.theatlantic.com/economy/2026/05/ai-bubble-revenue-anthropic/687022/: May 7, 2026

IF A.I. CAN DIAGNOSE PATIENTS, WHAT ARE DOCTORS FOR?

The New Yorker | September 22, 2025

TL;DR: A deeply reported New Yorker investigation finds that AI diagnostic tools can already outperform physicians on complex cases — but that the same systems hallucinate, misdiagnose, and underperform in the messy, open-ended conditions of real clinical practice, making human oversight not just valuable but structurally necessary.

Note: This is a September 2025 piece included for its depth and ongoing relevance to AI in healthcare.

Executive Summary

Physician and journalist Dhruv Khullar’s long-form investigation into AI in medicine is one of the most rigorous assessments of demonstrated AI capability — and its limits — published in any mainstream outlet. The piece anchors around a live demonstration at Harvard’s Countway Library in which an AI diagnostic system called CaBot, built on OpenAI’s o3 reasoning model, correctly solved a complex diagnostic case in six minutes that a human expert had spent six weeks preparing. CaBot correctly solved roughly 60% of a large set of comparable cases — a meaningfully higher rate than human doctors achieved in prior studies.

The AI’s capabilities are real and, in some respects, superior: it processes imaging data differently than human physicians, sometimes identifying diagnostically relevant features that experienced clinicians miss. But the piece is equally rigorous about failure modes. Consumer-grade medical chatbots misdiagnosed the majority of complex pediatric cases in one study. A man ended up on a psychiatric hold after following ChatGPT’s suggestion to replace table salt with bromide — an early anti-seizure drug. The same AI that solved a complex sarcoidosis case hallucinated lab values and imaging findings when given disorganized patient information, and arrived at the wrong diagnosis. The key variable: AI diagnostic performance degrades sharply when input data is unstructured, incomplete, or presented by non-clinicians who lack the judgment to flag what’s salient.

The most practically useful finding comes from Harvard’s Adam Rodman: when doctors were given specific protocols for using AI — either reading AI output before their own analysis, or presenting their working diagnosis to AI for a second opinion — their diagnostic accuracy improved. When doctors simply used AI without structured protocols, they performed no better than those who used no AI at all. The tool alone does not improve outcomes; the protocol does.

Relevance for Business

The implications extend well beyond healthcare. This piece is the most detailed documented case study of what happens when AI enters a high-stakes professional knowledge domain without structured protocols — and it applies directly to how SMB leaders should think about AI deployment in legal, financial, compliance, and advisory functions. The pattern is consistent: AI performs best when professionals use it as a second opinion after forming their own view, or when it operates within carefully defined workflow constraints. Unstructured AI access in high-stakes contexts — where employees can ask anything and get confident-sounding answers — creates the conditions for costly errors that may go undetected.

There is also a skill atrophy risk documented here: gastroenterologists who used AI to detect polyps became measurably worse at finding polyps themselves. This is likely to recur in any profession where AI takes over tasks that used to require expert judgment to perform.

Calls to Action

🔹 Do not treat AI access as equivalent to AI deployment — giving employees access to medical-grade or professional-grade AI tools without structured protocols is unlikely to improve outcomes and may introduce new errors.

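🔹 Design structured AI workflows, such as AI second opinions, rather than open-ended access — in the Harvard research, accuracy improved under either defined protocol (reviewing AI output before the doctor’s own analysis, or presenting a working diagnosis to AI for a second opinion) and did not improve when AI was used without one.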

🔹 Assess skill atrophy risk in AI-assisted roles — identify functions where AI is taking over tasks that once required expert judgment, and build in regular unassisted practice or review to preserve underlying competence.

🔹 Treat confident AI output with calibrated skepticism — AI systems can sound authoritative while being wrong in ways that are difficult to detect without domain expertise; build human review into any AI-assisted decision with meaningful consequences.

🔹 Monitor AI healthcare tools as a bellwether — medicine is the domain where AI capability and AI failure modes are being studied most rigorously; the patterns emerging there will arrive in other professional domains within 12–24 months.

Summary by ReadAboutAI.com

https://www.newyorker.com/magazine/2025/09/29/if-ai-can-diagnose-patients-what-are-doctors-for: May 7, 2026

I LET AI LOOK AT MY BREASTS — AND I’M GLAD I DID

The Wall Street Journal | May 4, 2026

TL;DR: WSJ technology columnist Joanna Stern’s first-person account of AI-assisted mammography screening offers the most human-scale illustration yet of AI’s role as a clinical augmentation tool — catching what radiologists miss while still requiring experienced physician judgment to interpret results responsibly.

Note: This is an excerpt from Stern’s forthcoming book, adapted for WSJ Magazine. It is personal narrative with reported detail, not a clinical study.

Executive Summary

Joanna Stern, a veteran tech journalist with an elevated breast cancer risk due to family history, underwent AI-assisted mammography at Mount Sinai Hospital and documented what she found. The experience is reported with characteristic clarity: the AI tools she encountered — ScreenPoint’s Transpara for mammography, Koios DS Breast for ultrasound — function as real-time second readers, flagging areas of concern and assigning probability scores that radiologists then evaluate against their own clinical judgment.

The key finding is not that AI replaces radiologists, but that the combination outperforms either alone under the right conditions. An independent UCLA-led study found that Transpara could flag early signs of cancers that develop between routine screenings, potentially reducing certain cancer risk by up to 30%. ScreenPoint’s own (internal) studies claim 20% greater accuracy on dense breast tissue than radiologists alone. The experienced radiologist Stern worked with trusted AI’s “benign” ratings readily but remained appropriately skeptical of its “suspicious” flags — which are correct only 30–40% of the time by design, calibrated to minimize missed cancers rather than minimize false positives.
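
The trade-off behind those “suspicious” flags can be worked through with Bayes’ rule. The sketch below is illustrative only: the prevalence, sensitivity, and specificity values are hypothetical round numbers, not figures from the article, from ScreenPoint, or from Koios, and the function name is invented for this example.

```python
def positive_predictive_value(prevalence: float, sensitivity: float, specificity: float) -> float:
    """Share of positive flags that turn out to be true positives (Bayes' rule)."""
    true_positives = prevalence * sensitivity
    false_positives = (1.0 - prevalence) * (1.0 - specificity)
    return true_positives / (true_positives + false_positives)


# Hypothetical screening population: 5 cancers per 1,000 people screened.
PREVALENCE = 0.005

# Setting A: tuned to miss few cancers (high sensitivity, more false alarms).
ppv_sensitive = positive_predictive_value(PREVALENCE, sensitivity=0.90, specificity=0.99)

# Setting B: tuned to raise fewer false alarms, at the cost of missing more cancers.
ppv_strict = positive_predictive_value(PREVALENCE, sensitivity=0.70, specificity=0.998)

print(f"A: flags correct {ppv_sensitive:.0%} of the time, misses 10% of cancers")
print(f"B: flags correct {ppv_strict:.0%} of the time, misses 30% of cancers")
```

Under these assumed inputs, the setting that misses fewer cancers produces flags that are right only about a third of the time, because true cases are rare relative to the number of people screened. A low hit rate on flags can therefore be a deliberate design choice and not, by itself, a sign of a weak tool.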

The most resonant moment in the piece is Stern’s mother’s observation: her own DCIS calcifications appeared on a mammogram taken six months before they were caught — and an AI system trained to scan both images simultaneously might have caught them earlier, sparing her a lumpectomy and radiation. That claim is speculative but plausible, and it anchors the piece’s emotional argument. The piece closes with a radiologist confirming that AI has both saved lives and missed cancers in her practice — neither outcome is exclusive.

Relevance for Business

For SMB leaders, this piece operates on two levels. Practically: AI-assisted screening tools are becoming standard in leading medical institutions, and the experience of navigating health benefits, coverage decisions, and preventive care will increasingly involve AI-augmented diagnostics. Employers making benefits decisions should understand what AI-assisted care looks like in practice, and whether their plans cover facilities using it.

More broadly, this piece is the most accessible illustration of the human-AI centaur model — skilled human judgment plus AI pattern recognition — operating in a high-stakes domain. The pattern applies directly to any professional service context: AI catches what humans miss; humans catch what AI misflags; the protocol for combining them determines outcomes more than either tool alone.

Calls to Action

🔹 Update employee benefits literacy around AI-assisted diagnostics — if your organization offers health benefits, consider communicating that AI-augmented screening is increasingly available at leading institutions, and what employees should ask their providers.

🔹 Use this piece as a change management asset — Stern’s first-person narrative is one of the most accessible accounts of AI augmentation in action; it is useful for communicating to skeptical employees what responsible human-AI collaboration actually looks like.

🔹 Note the false-positive design trade-off — AI screening tools calibrated to minimize missed cases will generate more false positives; the same design principle applies to AI risk and compliance tools in business contexts; understand the sensitivity/specificity trade-off in any AI tool before deploying it.

🔹 Watch AI diagnostic coverage expand — the same pattern-recognition capability in mammography is already being applied to lung nodule detection, thyroid screening, and colonoscopy; the pace of expansion will affect both employee health outcomes and healthcare cost structures.

🔹 File this as a reference case for AI augmentation conversations — when internal stakeholders ask what responsible AI deployment looks like, this piece provides a concrete, human-scale answer: the human remains in the loop, the AI expands what the human can detect, and the protocol for combining them is designed deliberately.

Summary by ReadAboutAI.com

https://www.wsj.com/tech/ai/joanna-stern-i-am-not-a-robot-ai-book-8e54657e: May 7, 2026

HOW CHATGPT FRACTURED OPENAI FROM WITHIN

The Atlantic | Karen Hao and Charlie Warzel | November 19, 2023

Editorial note: This article is from November 2023 — it covers the Sam Altman firing crisis in real time. It is included here as historical context, not current news. Its value is as a primary source record of the ideological fracture that continues to shape OpenAI’s governance and the broader AI industry today. Readers following the current Musk v. OpenAI trial (covered in Batch 1) will find this account particularly relevant.

TL;DR: ChatGPT’s runaway success in late 2022 didn’t unify OpenAI — it tore it apart, exposing an irreconcilable clash between the company’s mission-driven safety culture and the commercial machine it had inadvertently built.

Executive Summary

This contemporaneous account of the events surrounding Sam Altman’s brief firing in November 2023 remains one of the most revealing documents about how the world’s most influential AI lab actually operates. Based on interviews with ten current and former employees, it traces how OpenAI’s founding tension — between those who believed AI must be developed with extreme caution and those who saw rapid commercialization as the mission — became unmanageable precisely when the company achieved its greatest external success.

ChatGPT was not a carefully planned product launch. It was, by OpenAI’s own internal framing, a “low-key research preview” intended to gather user data for GPT-4. It hit 1 million users in five days — far beyond any internal projection — and immediately overwhelmed the company’s infrastructure, safety teams, and governance structures simultaneously. The commercial side responded by accelerating: a paid tier within three months, an API shortly after, GPT-4 weeks later. The safety and alignment side, already stretched thin, found itself unable to keep pace. Trust-and-safety staff were reassigned from abuse monitoring to fraud prevention. Internal communication collapsed. The company’s “tribes” — as Altman himself had called them in an earlier staff memo — were no longer coexisting.

The ideological fault line was real: Chief Scientist Ilya Sutskever had become increasingly focused on the risks of the very technology OpenAI was racing to commercialize, while Altman framed commercial revenue as the mechanism that would fund safety work. Both positions had internal logic. The board’s decision to fire Altman — citing a lack of candor in communications rather than any financial or legal misconduct — and then the rapid reversal under pressure from investors and employees, demonstrated that governance at OpenAI existed more as architecture than as operating reality. The nonprofit board nominally had total control; in practice, it did not.

Relevance for Business

For SMB executives, this article functions as a case study in what happens when an organization’s stated mission and its actual incentive structure diverge under growth pressure. OpenAI is not unique in this — the pattern appears in any company that begins with a clear purpose and then encounters a product that generates unexpected scale. The AI industry lesson is specific: the governance structures of major AI labs are more fragile and contested than their public postures suggest. For businesses that have built operational dependencies on OpenAI’s products, the underlying instability documented here — however it has since been managed — is a long-term vendor risk factor that deserves weight. The current Musk trial is, in many respects, a continuation of the same governance dispute this article first documented.

Calls to Action

🔹 Read this article in conjunction with the Musk v. OpenAI trial coverage — the two together provide the most complete picture of OpenAI’s structural governance risks available in the public record.

🔹 Use this as a template for evaluating AI vendor stability — look beyond product quality and pricing to ask: what is the actual governance structure, who has real decision-making authority, and what happens under pressure?

🔹 Monitor OpenAI’s governance evolution through its planned IPO — the shift to a public-benefit corporation structure resolves some tensions documented here but does not eliminate them.

🔹 If your business depends on OpenAI products operationally, assign periodic review of organizational signals — executive departures, public governance disputes, and mission-versus-profit statements are leading indicators, not noise.

🔹 Treat the safety-versus-commercialization tension as a live, unresolved issue across the AI industry — it shapes product decisions, release timelines, and regulatory relationships at every major lab, including Anthropic and Google DeepMind.

Summary by ReadAboutAI.com

https://www.theatlantic.com/technology/archive/2023/11/sam-altman-open-ai-chatgpt-chaos/676050/: May 7, 2026

ANTHROPIC’S LITTLE BROTHER: HOW THE AI RACE FLIPPED

The Atlantic | Matteo Wong | April 28, 2026

TL;DR: Anthropic has quietly overtaken OpenAI in revenue growth and enterprise credibility, forcing its larger rival to abandon its consumer-first strategy and copy Anthropic’s business playbook.

Executive Summary

For most of the generative AI era, Anthropic was the scrappy challenger — smaller, quieter, and overshadowed by OpenAI’s brand dominance. That dynamic has visibly shifted. Anthropic recently reported an annualized revenue run-rate of $30 billion, apparently surpassing OpenAI’s, driven by the breakout success of Claude Code and strong enterprise sales. Its private market valuation has crossed $1 trillion in some estimates.

The more telling signal is behavioral: OpenAI is now systematically imitating Anthropic. After Anthropic launched Claude Code, OpenAI followed with Codex. After Anthropic’s safety-focused “Constitution” update, OpenAI launched a parallel campaign around its own equivalent document. After Anthropic’s Pentagon standoff elevated its public profile, OpenAI released a cybersecurity-restricted model echoing Anthropic’s own restricted release. This is not coincidence — a leaked internal OpenAI memo called Anthropic’s enterprise traction a “wake-up call” and directed the company to eliminate “side quests” and refocus on business customers.

OpenAI has hired a former Slack CEO to lead enterprise sales, formed consulting alliances with McKinsey and BCG, and quietly wound down Sora, its consumer video product. The pivot is real, but incomplete — the company still spent hundreds of millions acquiring a podcast and continues flirting with ads and e-commerce. Meanwhile, Anthropic is not standing still; it is scaling data-center infrastructure through Amazon Web Services, a move its own CEO once characterized as performative when rivals did it.

Relevance for Business

For SMB leaders evaluating AI vendors, this competitive shift has practical consequences. Anthropic is no longer a niche “safety-first” alternative — it is an enterprise revenue machine with serious institutional backing. OpenAI is restructuring to compete on that same ground. The two companies are converging on the same enterprise model, which means pricing pressure, feature parity, and more aggressive sales outreach are likely ahead for business buyers. Neither company is yet profitable, and both are racing toward IPOs — meaning their strategic priorities will continue to shift as they manage investor expectations. Vendor lock-in risk is real: businesses building deeply on either platform should track stability and roadmap changes closely.

Calls to Action

🔹 Evaluate both platforms on enterprise merit, not brand familiarity — the competitive gap has narrowed and Anthropic’s tools deserve direct assessment if you haven’t done so recently.

🔹 Avoid over-committing to a single AI vendor while both OpenAI and Anthropic are in pre-IPO mode and actively reshaping their product strategies.

🔹 Monitor the IPO timelines for both companies — public listings will bring new financial pressures that may affect pricing, support quality, and product prioritization.

🔹 Track Claude Code and Codex if your teams use AI for software development; this is the current front line of enterprise AI competition and the space most likely to see rapid feature changes.

🔹 Assign someone to watch the safety/governance messaging from both companies — not because it’s marketing, but because government procurement, regulated industries, and enterprise contracts increasingly require documented AI governance standards.

Summary by ReadAboutAI.com

https://www.theatlantic.com/technology/2026/04/openai-imitating-anthropic/686975/: May 7, 2026

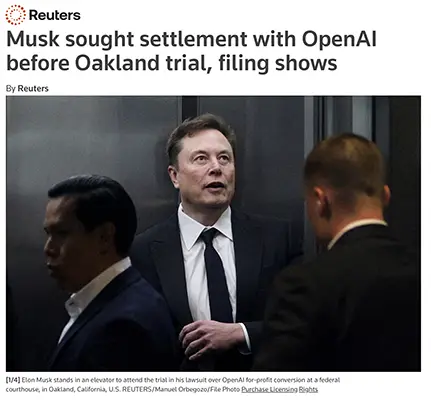

MUSK SOUGHT LAST-MINUTE SETTLEMENT WITH OPENAI BEFORE OAKLAND TRIAL BEGAN

Reuters | May 4, 2026

TL;DR: Court filings reveal Elon Musk privately explored settlement with OpenAI just two days before the Oakland trial opened — then allegedly issued a threat when the overture failed, underscoring the high-stakes and highly personal nature of a case that could reshape how AI companies are structured and governed.

Executive Summary

The Musk v. OpenAI trial, now underway in federal court in Oakland, took a new turn when a Sunday filing revealed that Musk contacted OpenAI President Greg Brockman shortly before proceedings began to gauge interest in settlement. When Brockman proposed that both sides simply drop their claims, Musk allegedly responded with a threat rather than a counter-offer. The exchange, now part of the court record, reinforces the combative dynamic that has defined the trial’s opening days.

The core legal dispute centers on Musk’s claim that OpenAI’s conversion from a nonprofit to a for-profit structure betrayed its founding mission — and that its leaders personally profited from his early charitable contributions. OpenAI’s defense counters that Musk walked away from the organization when he couldn’t assume full control, and that his lawsuit is motivated by competitive rivalry with his own AI venture, xAI. Musk is seeking $150 billion in damages from OpenAI and Microsoft, as well as changes to OpenAI’s leadership.

The trial began April 28 before U.S. District Judge Yvonne Gonzalez Rogers and is expected to last several weeks, with a verdict potentially arriving by mid-May. Sam Altman, Greg Brockman, and Microsoft CEO Satya Nadella are all expected to testify.

Relevance for Business

This case is not just a personality conflict — it is a legal stress test of the nonprofit-to-for-profit conversion model that OpenAI pursued, and that other AI ventures may emulate. The outcome could affect how AI companies raise capital, structure governance, and define fiduciary obligations to founders and early donors. For SMB leaders who rely on OpenAI products — or who are evaluating AI vendor relationships more broadly — the case surfaces a genuine governance risk: what happens when a dominant AI platform is in prolonged legal and reputational turbulence? A protracted trial or adverse ruling could create instability in product roadmaps, partnership structures, or investor confidence at OpenAI and Microsoft.

Calls to Action

🔹 Monitor the trial’s trajectory — a verdict expected by mid-May could carry significant implications for OpenAI’s corporate structure and its relationship with Microsoft.

🔹 Flag OpenAI vendor exposure internally — if your organization depends on OpenAI APIs or Microsoft Copilot products, assign someone to track material developments from this case.

🔹 Watch for governance precedent — the court’s treatment of nonprofit-to-for-profit AI conversions may influence how regulators and boards evaluate AI company structures industry-wide.

🔹 Do not over-index on the theatrics — the courtroom drama is real but secondary; the underlying legal question about AI governance and fiduciary duty is the signal worth tracking.

🔹 Revisit this after testimony from Altman and Nadella — their appearances will likely surface new material facts about the OpenAI-Microsoft relationship and may affect market sentiment.

Summary by ReadAboutAI.com

https://www.reuters.com/legal/litigation/musk-sought-settlement-with-openai-before-oakland-trial-filing-shows-2026-05-04/: May 7, 2026

ELON MUSK FACED OPENAI IN COURT. SO FAR, THE CASE IS ALL ABOUT HIM.

The Washington Post | Gerrit De Vynck and Faiz Siddiqui | May 2, 2026

TL;DR: The Washington Post’s trial coverage reveals that Musk’s courtroom conduct — erratic, theatrical, and repeatedly rebuked by the judge — is actively undermining his own case by making witness credibility, not legal substance, the central story.

Executive Summary

The Washington Post’s courtroom account of the Musk v. OpenAI trial’s first week focuses less on legal arguments than on what happens when a high-profile plaintiff can’t control the environment. Federal Judge Yvonne Gonzalez Rogers reprimanded Musk on the opening day for a flood of social media posts attacking opposing parties — including one rendering Sam Altman’s first name as “Scam” — and warned both sides to restrain themselves publicly. The judge’s exasperation was palpable: she asked Musk directly how proceedings could move forward without him making things worse outside the courtroom.

Musk’s three days of testimony were marked by visible frustration, off-script remarks, pop culture references, and repeated judicial warnings. A Stanford business professor and a corporate litigation attorney quoted in the piece both identified the same risk: Musk’s credibility as a witness is the central variable. Any perceived dishonesty or inconsistency in his testimony could be fatal to a case he himself initiated. OpenAI’s defense has framed Musk as a sore loser who abandoned the organization, later weaponizing litigation when it became a competitor to his own AI company, xAI.

The article also notes a broader pattern: Musk’s reputation routinely complicates legal proceedings involving his companies, from Tesla shareholder litigation to jury selection challenges driven by his political associations.

Relevance for Business

For executives, this coverage carries a clear governance signal: founder credibility matters enormously when legal disputes involve mission, fiduciary duty, and organizational integrity. The case also reinforces that AI company behavior at the top is becoming a business-relevant risk factor — not just a media story. Companies evaluating AI partnerships or vendor relationships should account for reputational volatility at the leadership level of their providers. The OpenAI-Microsoft relationship is itself on trial in ways that go beyond this specific lawsuit.

Calls to Action

🔹 Track credibility developments in testimony — the legal outcome of this case will likely hinge on Musk’s perceived truthfulness as a witness, not just legal arguments.

🔹 Note the governance pattern — founder-led disputes over AI mission and control are not unique to Musk; similar tensions exist at other AI firms and may surface as legal or reputational events.

🔹 Consider reputational risk in vendor selection — leadership volatility at AI providers can translate into product, partnership, or regulatory risk downstream.

🔹 Monitor Altman’s and Nadella’s testimony — their upcoming appearances will add material context about OpenAI’s governance choices and Microsoft’s role.

🔹 Deprioritize the spectacle — the trial’s entertainment value is real but not the executive signal; focus on what the legal record reveals about AI governance norms.

Summary by ReadAboutAI.com

https://www.washingtonpost.com/technology/2026/05/02/musk-altman-openai-trial/: May 7, 2026

MUSK VS. OPENAI: A HIGH-STAKES TRIAL WITH LONG ODDS

The Wall Street Journal | Keach Hagey | April 26, 2026

TL;DR: Elon Musk’s lawsuit against OpenAI, seeking up to $180 billion and the removal of its CEO, begins trial this week — but legal experts and prediction markets rate his chances as well below even odds.

Executive Summary

The Musk-OpenAI trial is simultaneously a legal long shot and a governance stress test for the AI industry. Musk’s core argument — that he funded OpenAI under a charitable mission that was later abandoned when the company converted to a for-profit structure — has been narrowed from 26 original claims down to two: breach of charitable trust and unjust enrichment. Legal scholars find the breach-of-charitable-trust theory interesting but legally strained, particularly because both California’s attorney general and a state court have already reviewed and approved OpenAI’s restructuring. Musk’s standing to bring the case at all — as a donor rather than a board member or officer — is itself contested by nonprofit law specialists.

The remedies Musk seeks are sweeping: damages potentially exceeding $180 billion flowing from OpenAI’s for-profit arm to its nonprofit parent, the removal of CEO Sam Altman and President Greg Brockman, and an unwinding of the company’s recent corporate restructuring. Even a partial win on any of these fronts would significantly complicate OpenAI’s path to a public listing expected later this year. The judge has already indicated that the jury’s role on the size of any damages would be advisory, not binding — a structural hedge that limits Musk’s upside even in a favorable verdict.

Legal analysts suggest that even if Musk prevails, the most realistic outcome is a modest financial award combined with targeted governance adjustments — not the organizational demolition he is seeking. Prediction markets currently put his odds of victory at roughly 40%.

Relevance for Business

This trial matters beyond its headline drama for three reasons. First, it is a live test of whether nonprofit governance commitments made by tech founders are legally enforceable — a question with broader implications for how AI mission statements and governance pledges are treated in courts and contracts. Second, any outcome that disrupts OpenAI’s IPO timeline creates uncertainty for enterprise customers and partners who have built roadmaps around OpenAI’s product continuity. Third, the case is drawing attention to the governance structures of AI companies generally — a conversation that will affect how regulated industries, government buyers, and cautious enterprise customers evaluate AI vendor risk going forward.

Calls to Action

🔹 Monitor the trial outcome, but avoid operational decisions based on its progress — the most likely outcomes do not fundamentally alter OpenAI’s near-term product availability.

🔹 Flag this for your legal and compliance teams if your organization has contracts, data agreements, or API dependencies tied to OpenAI — any governance disruption carries downstream risk.

🔹 Use this moment to review your own AI vendor contracts for provisions around change of control, corporate restructuring, or mission drift.

🔹 Watch OpenAI’s IPO timeline — delays or complications stemming from the trial could affect pricing, support terms, and product investment signals.

🔹 Treat the governance argument as a precedent to monitor — how courts handle mission-versus-profit claims in AI companies will shape the regulatory environment for the sector.

Summary by ReadAboutAI.com

https://www.wsj.com/tech/elon-musk-is-an-underdog-in-his-180-billion-fight-against-openai-32a74332: May 7, 2026

ARE WE LOSING OUR MINDS TO AI?

Fast Company | May 1, 2026

TL;DR: The AI debate has crossed from professional disagreement into cultural polarization — and the Musk-Altman trial, including a violent attack on Altman’s home, is the most visible symptom of a broader social fracture that business leaders should understand and not dismiss.

Executive Summary

Fast Company’s Chris Stokel-Walker uses the Musk-OpenAI trial — and specifically a violent attack on Sam Altman’s home by a man who cited opposition to AI development — as a frame for examining how AI discourse has deteriorated from debate into division. The piece argues that public sentiment around AI is increasingly binary: enthusiasts who treat skeptics as Luddites, and critics who view AI advocates as indifferent to the harm the technology causes. The middle ground, the article suggests, is shrinking.

Technology historian Mar Hicks at the University of Virginia offers the most analytically grounded perspective in the piece: when a technology is marketed on promises it doesn’t deliver, backlash follows. She also argues that the disproportionate concentration of AI’s benefits among the wealthy — while its costs fall on those with less power — is historically predictable and helps explain the current intensity of opposition. The Musk-Altman conflict itself, she suggests, is less about AI philosophy than about two powerful men competing for control over society’s future.

The article is opinion-adjacent — it diagnoses a cultural mood more than it reports a discrete development. The core claim to evaluate: AI anxiety is no longer a fringe concern, and its social and political consequences are arriving faster than many leaders anticipated.

Relevance for Business

The polarization described here has direct workforce and organizational implications for SMB leaders. Employees hold strong views on AI — both for and against — and those views are increasingly charged. Leaders who deploy AI tools without acknowledging employee concerns risk accelerating internal friction, eroding trust, and triggering resistance that slows adoption. Externally, companies that are publicly enthusiastic about AI may face reputational risk with customers who are skeptical or fearful. The middle path — transparent, measured, human-centered AI deployment — is not just an ethical preference; it’s a risk management strategy.

Calls to Action

🔹 Acknowledge the social context — understand that employees and customers are absorbing a polarized media narrative about AI; communicate your organization’s AI approach with that awareness.

🔹 Audit internal AI communication — assess whether your messaging around AI tools sounds like vendor promotion or genuine leadership; the former accelerates distrust.

🔹 Build space for dissent — employees who are skeptical of AI should have legitimate channels to raise concerns; suppressing dissent creates compliance theater, not adoption.

🔹 Monitor AI backlash as a reputational variable — in customer-facing contexts, being associated with aggressive AI deployment may carry brand risk that outweighs efficiency gains.

🔹 Do not conflate urgency with recklessness — the pressure to adopt AI quickly is real, but moving without employee and stakeholder trust creates execution risk that negates the speed advantage.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91534884/are-we-losing-our-minds-to-ai: May 7, 2026

ARE WE LOSING OUR MINDS TO AI?

TIME | April 30, 2026

TL;DR: A well-researched TIME feature — grounded in cognitive science, not opinion — surfaces a genuinely consequential finding for leaders deploying AI at work: the same tools that improve output can quietly degrade the thinking capacity behind it, and the sequence in which AI enters a workflow may matter as much as whether it’s used at all.

Executive Summary

This piece by TIME editorial fellow Tharin Pillay takes the “cognitive offloading” debate seriously, drawing on peer-reviewed research rather than cultural anxiety. The core finding is specific and actionable: researchers at the University of Chicago and University of Toronto found that using AI early in a task — before thinking through the problem independently — worsened performance, caused participants to remember less, and narrowed their analytical range. Using AI later, after independent thought, led to deeper engagement and broader responses.

This is not the same argument as “AI makes you lazy.” It is a more precise claim: the timing and sequencing of AI in a workflow affects cognitive outcomes, not just task completion. A University of Pennsylvania researcher who coined the term “cognitive surrender” draws a useful line between structured tasks (coding, formatting, data extraction) — where AI accuracy is high and offloading is appropriate — and judgment-dependent work, where premature AI reliance can produce confident but shallow outputs.

A countervailing voice from UCL is worth noting: Sam Gilbert argues that reduced incentive to use a cognitive faculty is not the same as reduced capacity, just as GPS reduced our incentive to memorize routes without eliminating our ability to do so. The debate is genuinely unsettled. What is settled: Anthropic’s own study of 80,000 users found that nearly half of lawyers reported relying on AI for judgment while also experiencing AI errors firsthand, a pattern likely to apply across professional contexts.

Relevance for Business

For SMB leaders managing knowledge workers, this research carries direct implications. Deploying AI as a first-pass tool across judgment-heavy roles — legal review, financial analysis, strategic planning, client communication — may produce faster outputs while quietly eroding the human judgment needed to catch AI errors. This is not a reason to avoid AI; it is a reason to be intentional about where in a workflow AI is introduced. Organizations that establish sequencing norms — think first, then prompt — are likely to preserve both quality and accountability better than those that treat AI as a default starting point.

The piece also surfaces a governance gap: if employees are using AI to form judgments they then present as their own, organizations lack visibility into where AI reasoning has displaced human reasoning — until something goes wrong.

Calls to Action

🔹 Establish AI sequencing norms for judgment-heavy roles — instruct teams to form an independent view before consulting AI on decisions involving client advice, financial assessment, or strategic recommendations.

🔹 Audit where AI is entering workflows earliest: in roles where AI is used from the start of a task, assess whether output quality has improved, declined, or stayed the same while the work simply moves faster.

🔹 Distinguish task types in your AI policy — structured, verifiable tasks (formatting, data extraction, summarization) are lower risk for early AI use; judgment-dependent tasks warrant a different protocol.

🔹 Include AI sequencing in onboarding and training — employees who learn to think first and prompt second are better positioned to catch AI errors and develop the expertise needed to oversee the tools.

🔹 Monitor this research space — longitudinal studies on AI’s cognitive impact are still early; revisit in 12 months as more data emerges on skill atrophy and professional performance in AI-assisted roles.

Summary by ReadAboutAI.com

https://time.com/article/2026/04/30/ai-thinking-cognitive-offloading/: May 7, 2026

THE SECRET WEAPON AGAINST AI DOMINANCE

The Atlantic | By Jacob Noti-Victor and Xiyin Tang

TL;DR: Whether AI-generated work can be copyrighted — not whether AI training infringes on human work — is the legal question that will actually determine if human creative labor survives the AI era.

Executive Summary

The article’s central argument is a reframe: the dominant legal fight over AI and copyright — whether AI companies can train on human-created work without permission — is largely the wrong battle. The authors contend that the more consequential question is whether AI-generated output can itself receive copyright protection, and that the answer to that question may do more to preserve (or eliminate) human creative jobs than any number of infringement lawsuits.

A 2025 federal appellate ruling (Thaler v. Perlmutter) established that fully autonomous AI output cannot be copyrighted, since copyright requires a human author. The Supreme Court declined to revisit that ruling. What remains unsettled, and commercially consequential, is where the line falls for hybrid work: how much human involvement is needed before AI-assisted output becomes protectable? That threshold question is now the central battleground, and the authors argue that industry will aggressively lobby to define “human authorship” as loosely as possible, potentially reducing it to a prompt or a light editorial review.

The practical stakes are concrete. Copyright is what makes entertainment and media IP commercially viable: licensing, adaptation, and distribution all depend on it. This has quietly created a financial incentive for major studios, labels, and publishers to keep humans employed, not out of principle, but because AI-only output can’t be licensed or monetized the same way. The authors cite Netflix’s internal production guidelines and Hachette’s withdrawal of a book suspected of containing AI-written content as examples of business pragmatism rather than moral commitment. The collapse of OpenAI’s Sora, which the authors attribute in part to the fact that AI-generated video can’t produce licensable IP, is offered as further evidence that copyright law currently provides a real, if fragile, brake on wholesale labor displacement.

Relevance for Business

This piece matters to SMB executives across any industry that creates, licenses, commissions, or depends on creative content — which is a broader category than it might first appear.

The legal uncertainty is the operational risk. Businesses producing marketing content, branded media, training materials, or customer-facing creative assets with significant AI involvement may be generating work with unclear ownership and limited legal protection. AI-assisted output that lacks sufficient human authorship may not be protectable — and could be freely copied by competitors.

The article also surfaces a genuine strategic question for any business using AI in content workflows: where exactly is the human contribution, and is it substantive enough to establish authorship? That question will soon move from theoretical to legal. The Copyright Office has already signaled that prompting alone is insufficient; courts have not yet endorsed that position, but the pressure is building. Organizations that assume AI-generated content carries the same IP protections as human-authored work are carrying unexamined legal exposure.

Finally, the enforcement dimension is worth noting. As AI output becomes harder to distinguish from human work, misrepresentation in copyright filings — whether intentional or inadvertent — carries increasing risk.

Calls to Action

🔹 Audit your AI content workflows for IP exposure — if your business produces AI-assisted creative output and treats it as proprietary, have legal counsel evaluate whether it meets the emerging “human authorship” threshold. Don’t assume current protections hold.

🔹 Document human creative contribution — where AI is used in content production, establish and record what human authors actually contributed. This documentation will matter if ownership is disputed.

🔹 Monitor the copyright threshold litigation closely — the Thaler ruling settled the easy question. The harder cases — how much human involvement is enough — are coming. Assign someone to track Copyright Office guidance and relevant court decisions.

🔹 Be cautious about fully AI-generated marketing or branded content — work that lacks human authorship may be freely reproduced by others. Understand what you are and aren’t protecting before scaling AI content production.

🔹 If you license or commission creative work, revisit vendor contracts — ensure agreements specify human authorship requirements and address AI use disclosure. Licensing arrangements built on AI-only output may carry hidden legal fragility.

Summary by ReadAboutAI.com

https://www.theatlantic.com/ideas/2026/04/creative-labor-ai-copyright/687000/: May 7, 2026

HOW SUNDAR PICHAI PUSHED GOOGLE TO THE FRONT OF THE AI RACE

TIME | TIME100 Most Influential Companies of 2026 | April 30, 2026

TL;DR: Google has quietly assembled the most complete AI stack of any company — research, chips, cloud, software, and distribution at scale — and Sundar Pichai’s decade-long, low-drama strategy is now its primary competitive advantage.

Executive Summary

This is a profile piece written for TIME’s most influential companies list, and it reads accordingly — admiring, narrative-heavy, and generous with anecdote. Discounting for that framing, the underlying business picture it traces is substantively interesting for leaders tracking the competitive AI landscape.

Google’s position is genuinely unusual. It controls its own AI chips (TPUs), runs one of the world’s largest cloud platforms, owns the world’s highest-traffic search engine and second-largest video platform, houses the Nobel Prize–winning AI lab that produced breakthrough models, and has embedded AI into products that over two billion people use monthly, many without specifically seeking it. Gemini now accounts for roughly a quarter of global AI traffic, up from six percent a year ago. Search revenue grew 17% year-over-year in late 2025, quieting fears that AI would cannibalize Google’s core business. The company crossed $4 trillion in market cap earlier this year.

The article’s most strategically significant point — though stated matter-of-factly — is that Google may win the AI competition not by having the best model, but by having the best distribution. One analyst quoted in the piece puts it plainly: people need something dramatically better to change behavior, and Google’s AI is good enough, deeply embedded, and everywhere. That is a meaningful competitive moat. The piece also surfaces real tensions: ongoing employee resistance to military contracts, a pending lawsuit related to a user who died by suicide after a Gemini interaction, and internal debate about how quickly to ship versus how carefully to govern. These are not footnotes — they are the governance risks that will shape Google’s AI trajectory.

Relevance for Business