AI Updates May 15, 2026

The stories in this week’s post share a common undertone that’s easy to miss when you read each one in isolation: AI is no longer a horizon story. It is a pressure-in-the-room story. The technology has moved fast enough that the systems built to govern it — legal, regulatory, corporate, clinical, cultural — are visibly straining to keep pace. That strain is the defining theme of this week’s coverage, and it carries direct operational implications for every business that is either adopting AI tools or managing people who are.

Several of this week’s summaries deal with governance failures in plain view. The Musk v. Altman trial is producing a documented public record of how the world’s most consequential AI company was run without adequate conflict-of-interest controls or board independence. A peer-reviewed Harvard study found that AI now outperforms physicians on clinical reasoning benchmarks — yet the researchers themselves caution that benchmark performance is not the same as deployment readiness. Meanwhile, courts are absorbing a 158% increase in AI-generated legal filings, including fictitious citations submitted by trained professionals at major firms. These are not warnings about a future risk. They are descriptions of things happening now, with real consequences for the organizations caught in them.

The week’s business-facing stories reinforce a more nuanced version of the same message. OpenAI’s $4 billion deployment arm signals that the AI market is shifting from capability competition to implementation competition — a change that raises switching costs and deepens vendor dependence for any organization that signs on. Big Tech is financing $700 billion in AI infrastructure through global debt markets. Anthropic is securing exclusive access to over 220,000 Nvidia GPUs to fix a reliability problem its own growth created. AI cybersecurity threats have crossed a threshold where attackers are using AI to autonomously discover unknown vulnerabilities. Agentic AI features are already embedded in many enterprise software platforms — often quietly, without explicit adoption decisions. And research from Carnegie Mellon, Oxford, MIT, and UCLA finds that just ten minutes of AI-assisted problem-solving measurably reduces independent performance when the AI is removed. The question this week is not whether AI is transforming business. It is whether your organization’s governance, measurement, and risk practices are moving as fast as the technology itself.

Summaries

Rolling Stones Deploy Commercial-Grade Deepfake in Mainstream Music Video

Rolling Stone | May 14, 2026

TL;DR: The Rolling Stones’ new “In the Stars” video uses commercially available deepfake technology to convincingly de-age three living artists — a quiet signal that AI-driven identity manipulation has moved from experiment to routine creative production.

Executive Summary

The Rolling Stones released a music video in which director François Rousselet used Deep Voodoo deepfake technology to render Mick Jagger, Keith Richards, and Ronnie Wood as they appeared roughly 50 years ago. The production is a high-profile, consent-based deployment of AI identity transformation, which distinguishes it from unauthorized deepfakes but normalizes the underlying capability at scale.

What matters here is less the music industry story and more the production reality it signals: a major creative team commissioned a named deepfake vendor, integrated the output into a polished commercial release, and published it without friction. The technology worked well enough that it anchors a mainstream promotional campaign for an album — Foreign Tongues, out July 10.

The consent factor is doing significant work here. This is de-aging with full artist participation, not impersonation. But the ease of execution, the vendor commoditization (Deep Voodoo is a known, accessible studio), and the absence of any apparent legal or reputational backlash indicate the barrier to this class of production has dropped materially.

Relevance for Business

For SMB executives, the headline isn’t the Rolling Stones — it’s the normalization signal. When a project of this visibility uses deepfake de-aging as a standard production tool rather than a novelty, it accelerates stakeholder expectations across industries: marketing teams will be asked about it, clients will reference it, and internal policy gaps will widen.

Key implications:

- Brand and identity risk rises as the technology becomes cheaper and more accessible — your executives, spokespeople, or brand assets could be targets, not just celebrities

- Vendor ecosystem maturing: Deep Voodoo’s commercial engagement here suggests the market for AI identity tools is professionalizing — including for misuse

- Consent and governance gaps: most organizations have no policy covering employee or executive likeness in AI-generated content, internal or external

- Marketing and creative teams will increasingly encounter client or leadership requests to use similar tools — without guardrails in place, execution risk is real

Calls to Action

🔹 Assign a policy owner for AI-generated likeness and identity use — even a lightweight internal guideline reduces exposure before a request lands without one

🔹 Brief your marketing and communications leads that consent-based deepfake production is now commercially viable; have a position ready before a vendor pitches it

🔹 Audit existing contracts with spokespeople, employees in public-facing roles, or brand ambassadors for language covering AI-generated representations — most older agreements are silent on this

🔹 Monitor Deep Voodoo and peer vendors as indicators of where pricing and accessibility are heading — this is a useful proxy for the broader AI identity-manipulation market

🔹 Deprioritize alarm, prioritize preparation: this specific use is consensual and creative — the business risk is in the gap between capability normalization and your internal readiness

https://www.rollingstone.com/music/music-news/rolling-stones-in-the-stars-music-video-odessa-azion-1235562708/: May 15, 2026

The Rolling Stones – In The Stars (Official Video)

https://youtu.be/oT5LwwEHgnc?si=FLDNMJAR8BD-CO-G: May 15, 2026

The Comeback’s Series Finale Puts AI’s Hollywood Takeover on Trial

The Comeback Official Podcast (HBO/OBB Sound) | May 2026

TL;DR: The final season of HBO’s The Comeback uses its fictional studio universe to dramatize the AI displacement debate in Hollywood — depicting an industry executive who openly tells the press that AI can write sitcoms so studios don’t have to pay for quality, and a veteran actress who publicly calls it out.

Executive Summary

The finale of The Comeback — and the podcast unpacking it — offers something unusual for a legacy comedy series: a surprisingly direct depiction of how AI economics are reshaping creative labor in Hollywood. The show’s storyline hinges on a studio streaming head who, at a press event, declares that AI can handle sitcom writing so that studios can reserve budgets for prestige “auteur” projects. The message embedded in the fiction: AI is being positioned not as a creative tool, but as a cost-reduction mechanism that explicitly devalues certain categories of work.

The writers built the AI subplot around real research. According to series co-creator Michael Patrick King, the “paywall” concept — the idea that studios allocate a fixed budget cap for AI usage per project, and when that cap is hit, the AI simply stops — emerged from actual industry reporting. The show’s fictional AI system, “Al Assist,” shuts down mid-production when its allotted budget runs out. The paywall detail is not satire; it reflects a genuine structural constraint in how enterprise AI is being deployed in media production.

The central dramatic conflict is that the show’s protagonist is simultaneously dependent on the AI-written show for her career comeback, and the public face being pressured to either defend or condemn AI on behalf of writers. When she speaks honestly at the press event — acknowledging the AI’s limitations — the studio head reacts with visible alarm. Her eventual refusal to continue under those terms is met with the line: “Digital Valerie will.” The show frames this not as dystopia, but as the present-tense reality of an industry mid-transition.

The resolution — Valerie pivoting to a human-led quality project — is optimistic, but the creators are clear that her closing line is the actual thesis: “AI’s here. Gotta move on.”

Relevance for Business

The Comeback is fiction, but its AI storyline is drawn from current industry dynamics that apply well beyond entertainment. The show surfaces several patterns SMB leaders are already encountering or will encounter soon:

The “good enough” mandate. The studio head’s line — “We’re not looking for great, we’re just looking for good enough that people leave it on” — is the AI deployment logic now appearing across customer service, marketing content, and internal communications. Leaders should be honest about where that logic applies in their own operations, and where it creates reputational or quality risk.

Budget caps as a structural reality. The “paywall” mechanic — AI stops when the allotted spend runs out — mirrors what many organizations are discovering: AI costs are real, variable, and not always predictable. Fixed-budget AI deployments can fail at inopportune moments.

The displacement framing is accelerating. The show depicts elite creative professionals (represented by the “Mount Rushmore” of TV writers) pressuring others to publicly oppose AI — while quietly protecting their own positions. The same dynamic is visible in many knowledge-work industries: senior roles may be safer, while mid-tier production work is first in line for AI substitution.

Human expertise still has a rebuttal. The show’s counterargument — made explicitly by the protagonist — is that domain expertise matters, that there is a difference between technically functional output and culturally resonant work, and that the people who know the difference are an asset. That argument is available to SMB leaders defending quality-differentiated positions.

Calls to Action

🔹 Audit where “good enough” logic is already operating in your business — AI-generated content that saves cost but degrades brand trust or customer experience is a hidden liability. Name it explicitly before it becomes a problem.

🔹 Plan for AI budget variability — If your organization uses AI tools with usage-based pricing or enterprise caps, map what happens operationally when those limits are hit mid-project. Build contingencies.

🔹 Watch the labor displacement signal in your sector — The pattern The Comeback depicts (AI replacing mid-tier production work while senior roles are repositioned as “auteur”) is not unique to Hollywood. Identify which roles in your organization fit that profile.

🔹 Document your domain expertise — The show’s most practical business argument is that the person who knows the most about a discipline should be in the room when AI is being evaluated for that discipline. Make sure that expertise is represented in your AI adoption decisions, not just your IT or finance teams.

🔹 Don’t wait for the industry consensus — Valerie’s closing line is the most executive-relevant moment in the entire transcript. AI adoption in your competitive space is not pausing for debate. The strategic question is how to move forward with it without sacrificing what actually differentiates you.
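The budget-variability point above can be made concrete with a small planning sketch. This is a minimal illustration, not how any particular vendor enforces caps: the `BudgetGuard` class, the 80% warning ratio, and the dollar figures are all hypothetical. It shows one way to decide in advance what happens when a usage cap is approached or hit, so that work is rerouted rather than simply stopping mid-project.

```python
from dataclasses import dataclass


@dataclass
class BudgetGuard:
    """Tracks spend against a fixed cap (all figures are hypothetical)."""
    cap_usd: float
    warn_ratio: float = 0.8   # assumed policy: warn once 80% of the cap is used
    spent_usd: float = 0.0

    def status(self, next_cost_usd: float) -> str:
        """Classify a planned call as 'ok', 'warn', or 'blocked'."""
        projected = self.spent_usd + next_cost_usd
        if projected > self.cap_usd:
            return "blocked"
        if projected >= self.cap_usd * self.warn_ratio:
            return "warn"
        return "ok"

    def charge(self, cost_usd: float) -> str:
        """Record the cost only if it fits under the cap; return the status."""
        result = self.status(cost_usd)
        if result != "blocked":
            self.spent_usd += cost_usd
        return result


def run_task(guard: BudgetGuard, cost_usd: float) -> str:
    """Route work to the AI tool, or to a manual fallback when blocked."""
    outcome = guard.charge(cost_usd)
    if outcome == "blocked":
        return "fallback: queue for human handling"
    if outcome == "warn":
        return "ai: completed, notify budget owner"
    return "ai: completed"
```

The design choice worth noting is the explicit fallback path: the contingency is written down before the cap is reached, which is the operational mapping the call to action asks for.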

Summary by ReadAboutAI.com

https://www.youtube.com/watch?v=eKDVV29wmUc&list=PLSduohp5OGX6vxflDRyigjYGdCOQKHhm: May 15, 2026

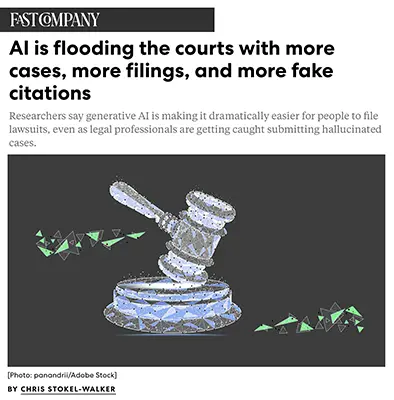

Trump’s China Summit Brings 16 CEOs to Beijing

The New York Times | May 11, 2026

TL;DR: A high-profile U.S. business delegation spanning finance, tech, defense, and semiconductors is joining President Trump in Beijing for meetings with Xi Jinping this week. Nvidia’s CEO was not initially invited, a telling signal given the company’s pending chip-export approval from both governments, although Jensen Huang later joined the delegation in China.

Executive Summary

President Trump departed for China this week accompanied by 16 corporate leaders representing a broad cross-section of American industry, from Apple and Goldman Sachs to Boeing and Qualcomm. The stated U.S. agenda includes establishing formal boards for bilateral investment and trade — framing this less as a diplomatic visit and more as a structured commercial negotiation at the highest level.

Two roster details matter most. Jensen Huang of Nvidia, whose company is awaiting regulatory clearance from both the U.S. and China to ship H200 AI chips, was not on the initial invitation list, although he later joined the delegation in China. Whether the initial omission reflects deliberate diplomatic choreography or simple oversight is unclear, but the optics are significant: the world’s most valuable company was left off the original roster for a summit explicitly designed around trade and investment. Separately, Cisco’s CEO was listed and then withdrew, a minor but notable last-minute shift.

Elon Musk’s inclusion signals the completion of his political rehabilitation with the Trump administration following their 2025 falling out. He returns as a participant in high-stakes geopolitical commerce — though in his capacity as Tesla CEO, not as a policy figure.

Relevance for Business

For SMB executives, this summit is a leading indicator of U.S.-China trade trajectory. The composition of the delegation — semiconductors, finance, aerospace, payments — maps directly onto the sectors where tariff relief, market access, or new restrictions are most likely to emerge from these talks. Qualcomm, Micron, and Apple all have deep China exposure; any bilateral agreements affecting supply chains or market access will ripple downstream to smaller vendors and customers in their ecosystems.

The Nvidia situation is the sharpest signal to watch: AI chip access to China remains a live policy variable, and the outcome of these discussions could accelerate or further constrain the AI hardware market. For businesses planning AI infrastructure investments, supply availability and pricing may shift based on what does or doesn’t get resolved this week.

Calls to Action

🔹 Monitor summit outcomes closely — any announced investment or trade board framework will likely include sector-specific provisions with downstream effects on U.S.-China supply chains.

🔹 Flag China-exposed vendors in your stack — if your business relies on companies like Apple, Qualcomm, or Micron, assess how potential new trade terms could affect pricing, availability, or roadmap timing.

🔹 Watch the Nvidia AI chip decision specifically — H200 export approval (or denial) will have direct implications for AI infrastructure costs and availability in the near term.

🔹 Avoid over-interpreting the delegation’s presence as resolution — attendance signals intent, not outcome; treat any announcements as starting points for monitoring, not settled policy.

🔹 No immediate SMB action required — but assign someone to track this story through end of week as follow-on announcements emerge.

WASHINGTON (Reuters) – More than one dozen CEOs and top executives will join the U.S. delegation as President Donald Trump travels to China this week, according to a White House official. The companies include:

- Apple (Tim Cook)

- BlackRock (Larry Fink)

- Blackstone (Stephen Schwarzman)

- Boeing (Kelly Ortberg)

- Cargill (Brian Sikes)

- Citi (Jane Fraser)

- Cisco (Chuck Robbins)

- Coherent (Jim Anderson)

- GE Aerospace (H Lawrence Culp)

- Goldman Sachs (David Solomon)

- Illumina (Jacob Thaysen)

- Mastercard (Michael Miebach)

- Meta (Dina Powell McCormick)

- Micron (Sanjay Mehrotra)

- Qualcomm (Cristiano Amon)

- Tesla/SpaceX (Elon Musk)

Summary by ReadAboutAI.com

https://www.nytimes.com/2026/05/11/us/politics/trump-china-musk-cook.html: May 15, 2026

https://www.reuters.com/business/finance/apple-boeing-citi-tesla-meta-executives-join-trumps-china-trip-2026-05-11/: May 15, 2026

Ten Minutes Is Enough: AI Use Impairs Independent Problem-Solving, Research Finds

Fast Company | News | May 11, 2026 | By Jude Cramer

TL;DR: A multi-university study found that just 10 minutes of AI-assisted problem-solving measurably reduced participants’ independent performance when AI access was removed — with the sharpest declines among those who asked AI for direct answers rather than hints.

Executive Summary

Researchers from Carnegie Mellon, Oxford, MIT, and UCLA conducted a controlled study in which participants solved math problems with and without an AI assistant, then had the assistant removed without warning. The AI-assisted group outperformed the control group while AI was available — but once access was cut, their solve rate dropped roughly 20% below the control group. Participants who had used AI were also twice as likely to simply abandon problems rather than attempt them independently.

A follow-up experiment testing reading comprehension produced similar results. Critically, the effects were not uniform across all AI usage patterns: participants who used AI to request direct answers saw the largest decline, while those who used AI only for hints or clarifications performed comparably to the control group. This distinction matters — it’s not AI use that creates the impairment; it’s dependency on AI as a replacement for thinking.

The study adds to a growing body of research, including an MIT brain-activity study on essay writing, suggesting that regular AI reliance — even brief — may reduce cognitive engagement and independent reasoning capacity over time. The researchers explicitly flag concerns about accumulating effects in daily workflows.

Note: This is peer-reviewed research from credible institutions, not advocacy. The study used controlled conditions; real-world effects in complex work environments will be more variable. The finding is directionally significant but should not be overstated.

Relevance for Business

This has direct implications for how SMB leaders deploy AI across their teams, particularly in roles requiring judgment, analysis, and problem-solving. If employees are routinely offloading cognitive work to AI tools, the business may be building operational dependency risk: performance that looks strong on AI-assisted metrics but degrades if AI access is disrupted, downgraded, or inaccessible in time-sensitive moments.

There are also talent development implications: if AI is used as an answer machine rather than a thinking aid in training, onboarding, or skill-building contexts, the organization may be producing employees who are less capable independently — not more. This is particularly relevant in analytical, legal, financial, and customer-facing roles where independent judgment under pressure matters.

Calls to Action

🔹 Audit how your team is actually using AI tools. Are people asking for direct outputs or using AI to sharpen their own thinking? The difference has measurable downstream effects.

🔹 Build AI usage guidelines that distinguish answer-seeking from reasoning-support. This is not about limiting AI — it’s about protecting the independent judgment capacity that AI cannot replace.

🔹 Do not assume AI-augmented performance reflects team capability. Before reducing headcount or restructuring roles based on AI productivity gains, evaluate how teams perform when AI is unavailable or unreliable.

🔹 Treat this research as a hiring and development signal. In roles requiring independent reasoning, candidates and employees who can think clearly without AI assistance remain strategically valuable — and may be increasingly rare.

🔹 Monitor this research area. Longitudinal studies on cumulative cognitive effects of daily AI use are still limited. The early evidence warrants caution; it does not yet warrant alarm.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91539907/cognitive-science-scientists-found-using-generative-ai-chatgpt-impairs-brain-performance-thinking-problem-solving-skills: May 15, 2026

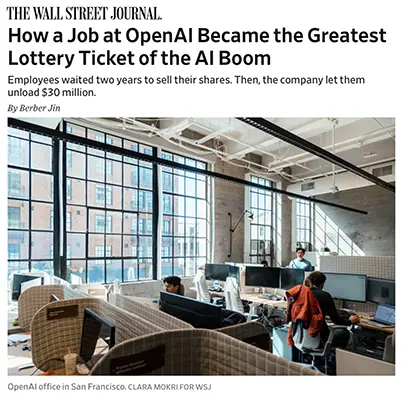

How a Job at OpenAI Became the Greatest Lottery Ticket of the AI Boom

The Wall Street Journal | Berber Jin | May 10, 2026

TL;DR: More than 600 current and former OpenAI employees collectively realized $6.6 billion in a single share sale last October — with roughly 75 walking away with $30 million each — establishing unprecedented pre-IPO wealth creation that is reshaping AI talent competition, compensation norms, and the broader labor market for technical workers.

Executive Summary

The Wall Street Journal reports the financial mechanics and scale of OpenAI’s October 2025 employee tender offer, in which the company tripled its per-employee share sale cap to $30 million to meet investor demand. The resulting $6.6 billion collective payout across 600+ participants — before any public listing — has no historical precedent in technology. Early Google and Facebook employees made comparable wealth, but only after IPOs that came years into their companies’ histories. OpenAI employees realized this wealth while the company remains private, valued at $852 billion as of its latest financing round.

The compensation context surrounding this event matters as much as the event itself. OpenAI offers some technical roles annual salaries exceeding $500,000, provides equity packages that dwarf industry norms, and in August 2025 gave select staff one-time bonuses worth millions. Meta matched with $300 million packages for some top researchers. These figures are not representative of the broader AI labor market, but they define the ceiling that AI companies are competing toward — and that ceiling is rising.

The structural implication for all employers: the talent war for AI specialists has decoupled from conventional compensation frameworks. Organizations that cannot offer equity upside at these scales are competing for a narrowing pool of candidates who are choosing between life-changing wealth at AI labs and conventional technology employment elsewhere. This isn’t just a Big Tech problem — it cascades down into mid-market and SMB hiring as expectations set at the top reshape norms throughout the sector.

The article also notes second-order effects: rising San Francisco rental prices, growing class divides within the city, and the anticipation of further wealth release when OpenAI and Anthropic complete what are expected to be historically large IPOs.

Relevance for Business

For SMB leaders, the most direct implication is AI talent scarcity and compensation pressure. The extreme wealth being generated at the top of the AI market is creating a gravitational pull that affects hiring well below the elite level. Software engineers, data scientists, and ML practitioners who might previously have considered SMB or mid-market roles are increasingly orienting toward companies — or adjacent roles — with equity exposure to AI upside. This is a structural market shift, not a cyclical one.

The IPO signal is also relevant: when OpenAI and Anthropic go public, thousands of newly liquid employees will enter the market with significant capital. Some will start companies (increasing startup competition), some will fund others (accelerating the AI startup ecosystem), and some will simply leave the workforce temporarily. The downstream effects on the AI vendor landscape will be material.

Calls to Action

🔹 Recalibrate your AI talent acquisition strategy. You will not win on cash compensation against AI labs. Identify what you can offer — mission, scope, equity in your own business, flexibility, autonomy — and compete on those dimensions explicitly.

🔹 Assess your current AI/ML talent retention posture. If you have technical staff with skills that are valuable to AI companies, understand what would retain them and whether you’re positioned to act.

🔹 Watch the OpenAI and Anthropic IPO timelines. The liquidity event that follows will reshape the AI startup and talent landscape. Plan for increased competition for AI-adjacent roles in the 12–24 months post-IPO.

🔹 Consider partnership or vendor relationships as an alternative to hiring. If recruiting and retaining elite AI talent is not viable at your scale, a well-structured vendor or API relationship may deliver more value with less execution risk.

🔹 Use this as a board-level conversation trigger. If AI capability is part of your competitive strategy, the talent economics described here should inform your workforce planning assumptions and compensation structure discussions.

Summary by ReadAboutAI.com

https://www.wsj.com/wsjplus/dashboard/articles/openai-employee-stock-sales-71ed10bd: May 15, 2026

The Hidden Rules Behind AI Chatbots — And How to Use Them

The Washington Post | Kevin Schaul | May 11, 2026

TL;DR: AI companies embed thousands of words of invisible instructions — called system prompts — into every chatbot conversation, shaping behavior in ways most users never see; understanding this architecture gives business users meaningful practical control.

Executive Summary

Every interaction with a commercial AI chatbot — ChatGPT, Claude, Gemini, Grok — operates within a hidden instruction layer set by the AI company before the user types a word. These system prompts, which range from 2,300 to 27,000 words across major platforms, govern personality, content policies, tool access, and specific behavioral guardrails. The user’s own input is processed only after this prior instruction layer has already shaped the context.

The practical implications are direct. System prompts can override or constrain user requests, sometimes in ways that are opaque to the person interacting with the tool. They can also be changed rapidly — without model retraining — which means chatbot behavior can shift overnight without any public announcement. This is both a feature (companies can fix problems quickly) and a risk (the tool you deployed last month may not behave exactly the same today). The article cites examples of documented behavioral shifts tied to system prompt changes, including Grok’s antisemitic content episode and ChatGPT’s fixation on goblins.

What users can do: Major platforms offer customization features — within the limits of the vendor’s system prompt — that allow users to adjust tone, format, verbosity, and reasoning style. These adjustments don’t change core capabilities, but they can meaningfully improve output relevance for specific business use cases. The article also notes that system prompts are not always reliably followed by the models themselves, adding a layer of behavioral unpredictability.

The transparency question is unresolved. Most AI companies decline to publish complete system prompts, and the ones that have been extracted by researchers reveal that companies actively embed commercial, legal, and political priorities into the instruction layer — priorities that may not align with enterprise user needs. The article notes OpenAI began serving ads in ChatGPT in February 2026, with system prompt guidance on how the model should respond when asked about those ads.

Relevance for Business

For SMB executives deploying or evaluating AI tools, this piece has direct governance and procurement relevance. The system prompt architecture means the AI tool you’re evaluating is not a neutral instrument: it reflects the vendor’s priorities, commercial relationships, and policy choices. That’s not disqualifying, but it warrants understanding. When evaluating chatbot tools for workflows that touch compliance, legal, customer communication, or any sensitive domain, ask vendors what behavioral constraints are embedded and whether those can be overridden by enterprise customers through system prompt access.

The stability risk is also material: if your team’s workflows depend on consistent AI behavior, the vendor’s ability to alter that behavior silently through system prompt changes represents an operational dependency worth flagging in vendor agreements.

Calls to Action

🔹 Explore the customization features available in whichever AI platform your team uses (ChatGPT, Claude, Gemini all offer user-level instruction customization). Even modest adjustments can improve output quality for specific business tasks.

🔹 Ask vendors about system prompt access and stability. For enterprise deployments, understand whether custom system prompts are available, what behavioral constraints are fixed, and whether prompt changes are communicated in advance.

🔹 Build behavioral testing into your AI vendor evaluation process. System prompts can shift; test your tools periodically against a consistent set of business-relevant queries to detect behavioral drift.

🔹 Flag the advertising and commercial interest layer. If your AI tool vendor has introduced or is considering advertising, assess whether that creates conflicts with your use case — particularly in customer-facing or compliance-adjacent workflows.

🔹 Assign to your AI/IT governance lead for review of current vendor agreements relative to system prompt transparency and change notification.
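The behavioral-testing recommendation above can be sketched as a small regression check. This is a rough illustration under stated assumptions: the `ask` function is a placeholder for however your team queries the chatbot (no vendor API is assumed), the 0.6 threshold is arbitrary, and character-level similarity is a crude proxy that a real evaluation would likely supplement with human review.

```python
from difflib import SequenceMatcher
from typing import Callable, Dict, List


def similarity(a: str, b: str) -> float:
    """Rough text similarity in [0, 1] based on matching character runs."""
    return SequenceMatcher(None, a, b).ratio()


def detect_drift(
    baseline: Dict[str, str],
    ask: Callable[[str], str],
    threshold: float = 0.6,  # assumed cutoff; tune for your own prompts
) -> List[str]:
    """Return the prompts whose current answer differs notably from baseline.

    `ask` is injected by the caller, so this sketch does not depend on
    any specific chatbot product or SDK.
    """
    drifted = []
    for prompt, old_answer in baseline.items():
        new_answer = ask(prompt)
        if similarity(old_answer, new_answer) < threshold:
            drifted.append(prompt)
    return drifted
```

In use, a team would save answers to a fixed set of business-relevant prompts once, then rerun `detect_drift` on a schedule and review any prompts it flags.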

Summary by ReadAboutAI.com

https://www.washingtonpost.com/technology/interactive/2026/chatbots-hidden-rules-system-prompts/: May 15, 2026

AI Shopping Is a Multi-Trillion-Dollar Race, With a Cautionary Tale Already Baked In

Fast Company | May 7, 2026 (Subscriber Exclusive)

TL;DR: Google and OpenAI are both racing to own AI-powered shopping, but the early scorecard — including OpenAI’s quietly shelved Instant Checkout — reveals that building AI commerce infrastructure is significantly harder than announcing it, and the winner won’t be known for months.

Executive Summary

This is one of the more substantively reported pieces in this batch, drawing on interviews with executives at Google, OpenAI, Stripe, Walmart, and multiple startups. The core finding: the gap between AI commerce announcements and working AI commerce is large, and the underlying reason is structural. Large language models were trained on text, not on product data — they were never designed to handle the real-time inventory, loyalty systems, shipping logic, return workflows, and merchant-specific rules that a functional shopping experience requires. Building that plumbing is a multiyear infrastructure project, not a feature.

OpenAI’s Instant Checkout — announced with Shopify in September 2025 as a native in-ChatGPT purchasing experience — was quietly discontinued in March 2026 after fewer than 30 of Shopify’s millions of merchants actually went live. The retreat is significant: it signals that even the most-hyped AI applications encounter hard limits when they collide with existing commercial infrastructure. OpenAI’s revised position is that merchants should own checkout themselves; ChatGPT will help users discover products and hand off to merchant-controlled purchase flows.

Google currently holds the structural advantage for several reasons: its Shopping Graph — a real-time database of product pricing, inventory, and merchant relationships built over two decades — gives it product data that no competitor can replicate quickly. Its Gemini platform also has access to years of user data across Gmail, Photos, and Drive (with user permission via its Personal Intelligence feature), enabling personalization that ChatGPT, which learns only from conversation history, cannot match today. Google already has working in-chat checkout via Google Pay, with Gap and Ulta Beauty live as of March and April 2026. Meanwhile, two competing infrastructure protocols have emerged — OpenAI and Stripe’s Agentic Commerce Protocol (ACP), and a Google-led coalition’s Universal Commerce Protocol (UCP) — and the market has not settled on a standard.

Relevance for Business

If you sell products online, this is the most important category to monitor in 2026. The shift to agentic commerce — where AI agents research, compare, and complete purchases on behalf of users — would represent one of the most significant channel disruptions in retail history. Businesses that depend on Google Shopping, paid search, or direct-to-consumer web traffic should understand that the discovery layer is being rebuilt. Who controls discovery in an agentic shopping world controls customer acquisition — and that power is shifting toward platform incumbents with data advantages. SMB retailers without direct relationships to Google Merchant Center or Shopify’s integrations may find themselves increasingly invisible to AI shopping agents that surface products from structured, machine-readable data sources. The protocol war (ACP vs. UCP) also means that early integration decisions carry real switching-cost risk — supporting both is currently the recommended posture, but that adds cost and complexity.

Calls to Action

🔹 If you sell products online, audit how your product data is structured — real-time inventory, pricing, and product attributes need to be machine-readable to be discoverable by AI shopping agents. This is not optional in the medium term.

🔹 Ensure your products are live and well-optimized on Google Merchant Center — Google’s Shopping Graph advantage means Gemini-driven discovery will likely favor merchants already in its ecosystem.

🔹 Monitor the ACP vs. UCP protocol competition — do not make a major platform commitment based on either standard alone until the market converges. Ask your e-commerce platform vendor which they support and what their roadmap looks like.

🔹 Treat OpenAI’s Instant Checkout failure as useful data — it is evidence that AI commerce hype is running well ahead of infrastructure reality. Evaluate vendor claims in this space with appropriate skepticism and a 12–18 month patience window.

🔹 If you are not in retail, file this as a second-order signal: AI shopping agents will change customer behavior, supplier relationships, and potentially pricing dynamics in nearly every B2C category over the next three to five years.
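To make the first call to action above concrete, here is a minimal sketch of what "machine-readable" product data can look like. It assumes the widely used schema.org Product and Offer vocabulary serialized as JSON-LD; the source article does not say which format any given shopping agent reads, and this is not the ACP or UCP schema. The function name, SKU, price, and URL are hypothetical.

```python
import json


def product_jsonld(name, sku, price, currency, in_stock, url):
    """Build a schema.org Product record with a nested Offer."""
    availability = "InStock" if in_stock else "OutOfStock"
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "url": url,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",  # price as a fixed two-decimal string
            "priceCurrency": currency,
            "availability": f"https://schema.org/{availability}",
        },
    }


# Hypothetical catalog entry, for illustration only.
record = product_jsonld(
    name="Example Trail Shoe",
    sku="EX-1001",
    price=89.5,
    currency="USD",
    in_stock=True,
    url="https://shop.example.com/p/ex-1001",
)
print(json.dumps(record, indent=2))
```

The useful audit question is whether price and availability in a record like this are generated from the live inventory system or typed in by hand. Only the former stays accurate enough for an agent to act on.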

Summary by ReadAboutAI.com

https://www.fastcompany.com/91533534/shop-til-you-bot-google-openai-and-the-race-to-build-agentic-commerce: May 15, 2026

Artisan’s “Stop Hiring Humans” Ads Are Getting Attention, Mostly the Wrong Kind

Fast Company | May 8, 2026

TL;DR: AI sales startup Artisan is running provocative anti-human ads in New York and San Francisco subway systems — generating viral backlash that reveals as much about public AI anxiety as it does about the company’s actual product.

Executive Summary

Artisan, which sells an AI sales agent designed to replace entry-level outbound sales reps, has built its brand around deliberately inflammatory advertising. Its subway billboards, including taglines urging companies to stop hiring humans, have generated significant social media attention, the overwhelming majority of it negative. With 71% of Americans reporting concern about AI-driven job displacement (Gallup, 2025), and Gen Z anger toward AI rising while excitement falls, Artisan’s campaign is touching a genuine public nerve, just not in the way a product launch typically aims to.

The backlash is substantive, not just emotional. Critics online raised legitimate points about AI output quality versus quantity — specifically, whether an AI sales agent that books meetings or researches prospects is producing reliable, actionable work or inflated activity metrics. These are real operational questions any leader would ask before deploying such a tool. Artisan’s CEO frames the campaign as honest provocation — arguing that AI should take over roles that were never good for humans in the first place, like cold outbound sales. That’s a coherent position, but it sidesteps the harder questions about output quality, oversight, and what replaces the human judgment that even low-level sales roles involve.

This is a marketing story, not a product capability story. The article provides no independent evidence of Artisan’s actual performance. The signal here is cultural and strategic, not technical.

Relevance for Business

Two things matter here for SMB leaders. First, the AI-replacement framing is a real strategic conversation, not just a stunt — companies are actively evaluating whether AI agents can handle entry-level sales, customer support, and research functions. The question isn’t whether to consider it, but how to evaluate it with appropriate rigor. Second, the public reaction is a workforce management signal: employees are watching how AI vendors position these tools, and leaders who adopt AI for headcount reduction without a thoughtful communication strategy risk eroding trust with their remaining teams. The Artisan backlash illustrates how quickly “efficiency” narratives can become morale and reputation problems.

Calls to Action

🔹 Evaluate AI sales tools on output quality, not activity volume — before piloting any AI agent for outbound sales or research, define what “good” looks like and build verification into the process.

🔹 Separate the vendor’s marketing from the product’s actual capability — Artisan’s ads are designed to generate attention, not to accurately represent what AI sales agents can and cannot do reliably.

🔹 Develop an internal communication posture on AI and roles — if you’re exploring AI tools that touch headcount, your team deserves a clear, honest framing before they see a billboard.

🔹 Monitor the AI agent sales category — it is maturing rapidly, and vendors beyond Artisan (many less controversial) are building comparable tools worth evaluating on their merits.

🔹 Take the Gen Z sentiment data seriously — if your workforce skews younger, declining enthusiasm for AI combined with growing anger is a change management signal that warrants attention in how you introduce AI tools.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91539104/artisan-ai-asks-companies-to-replace-human-workers-its-strategy-is-working-well-ad-campaign-stop-hiring-humans: May 15, 2026

Anthropic–SpaceX Deal Signals Compute Is the Choke Point for AI Growth

MarketWatch | May 6, 2026

TL;DR: Anthropic’s deal for exclusive access to SpaceX’s Colossus 1 data center — and interest in orbital compute — reveals that raw infrastructure capacity, not model quality, is now the primary constraint on AI product delivery.

Executive Summary

Anthropic has secured exclusive use of SpaceX’s Colossus 1 facility in Memphis, gaining over 220,000 Nvidia GPUs and 300 megawatts of power within the month. The company has also signaled interest in future orbital data center capacity, a speculative but notable long-range signal about where the compute arms race may head.

The business context matters: Anthropic’s annualized revenue has reportedly surged past $30 billion, up from $9 billion at year-end 2025. That growth created a reliability problem. Claude’s core services had been running at roughly 99.1% uptime, which translates to nearly 80 hours of downtime annually (0.9% of the 8,760 hours in a year). The “five nines” benchmark often cited as the industry standard is 99.999%, or about five minutes a year. Rationed access and peak-hour caps on Claude Code and the API were visible symptoms of a supply constraint, not a product limitation. This deal is primarily about fixing that.
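The uptime arithmetic above is easy to reproduce for any vendor. The sketch below converts an uptime percentage into annual downtime, assuming a 365-day year; the function name and the list of comparison tiers are illustrative choices, not figures from the article beyond the 99.1% and 99.999% it cites.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years


def annual_downtime_hours(uptime_percent):
    """Hours per year a service is unavailable at the given uptime percentage."""
    return (100.0 - uptime_percent) / 100.0 * HOURS_PER_YEAR


# Compare the article's 99.1% figure with common availability tiers.
for uptime in (99.1, 99.9, 99.99, 99.999):
    hours = annual_downtime_hours(uptime)
    print(f"{uptime}% uptime -> {hours:.2f} hours ({hours * 60:.1f} minutes) per year")
```

At 99.1% the result is about 78.8 hours a year, which matches the "nearly 80 hours" in the summary. At 99.999% it is a little over five minutes.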

The political texture is worth noting: Musk had publicly criticized Anthropic as recently as earlier this year. His reversal — and SpaceX’s willingness to lease compute capacity to a direct competitor to its xAI subsidiary — prompted observers including Wharton’s Ethan Mollick to question whether it signals that xAI is deprioritizing frontier model competition. That is a claim, not a confirmed strategic shift, and should be treated as such.

Relevance for Business

For SMB leaders using Claude Code or building on the Claude API, the practical near-term effect is fewer rate limit interruptions and higher usage ceilings. If your team has been hitting five-hour caps or peak-hour restrictions, expect that friction to ease.

More broadly, this deal illustrates a structural dynamic that affects every AI vendor relationship: the companies building AI products are themselves constrained by infrastructure, and those constraints shape what you can reliably deploy. Reliability — not capability — is becoming the competitive differentiator at the product layer. Leaders evaluating AI vendors should be asking about uptime commitments and infrastructure roadmaps, not just model benchmarks.

The Musk angle creates an unusual governance footnote: Anthropic’s primary infrastructure partner is now also the parent company of a direct AI competitor. That dependency is worth tracking.

Calls to Action

🔹 If you use Claude Code or the Claude API, revisit rate limits and plan for expanded usage — the constraint should ease materially within weeks.

🔹 When evaluating any AI vendor, add uptime history and infrastructure resilience to your due diligence checklist alongside model performance.

🔹 Monitor the xAI/Grok competitive trajectory — if SpaceX is effectively ceding compute to Anthropic, that may shift the frontier model landscape in ways that affect which tools your organization should be building on.

🔹 Note the orbital data center signal as speculative — it’s an Anthropic “expression of interest,” not a commitment. File it under long-range infrastructure risk awareness, not near-term planning.

🔹 Assign someone to track AI vendor reliability metrics — 80 hours of annual downtime is operationally significant. Know your vendors’ numbers before you deepen dependency.

Summary by ReadAboutAI.com

https://www.wsj.com/wsjplus/dashboard/articles/anthropic-strikes-spacex-deal-to-fuel-claude-code-growth-and-for-data-centers-in-space-e11819e4: May 15, 2026

Robot Lawn Mowers and the IoT Security Crisis: When AI-Adjacent Devices Become Physical Threats

The Verge | May 7, 2026

TL;DR: A security researcher demonstrated that he could remotely control every Yarbo robot lawn mower in the world — accessing owners’ GPS coordinates, Wi-Fi passwords, and live camera feeds — exposing a systemic IoT security failure with direct implications for any organization deploying connected physical devices.

Executive Summary

The Verge published an investigation documenting serious, demonstrated security vulnerabilities in Yarbo robot lawn mowers. A security researcher named Andreas Makris, working from Germany, was able to take remote control of Yarbo robots across the United States and Europe — reportedly tracking over 11,000 devices worldwide. The vulnerabilities include a hardcoded root password shared across all devices, a manufacturer-installed remote access backdoor that cannot be disabled by owners and is restored after firmware updates, and the ability to extract owners’ home GPS coordinates, Wi-Fi passwords, and email addresses. Makris demonstrated the vulnerabilities live to the reporter, who independently verified key findings by visiting owners’ homes using data extracted from the devices.

The manufacturer — which markets itself as a New York company but is actually Shenzhen-based Hanyang Tech — initially dismissed the concerns and had no security contact or bug bounty program. Following publication, Yarbo issued partial remediation commitments, though the researcher’s assessment is that the backdoor architecture is by design, not accident.

This is not a Yarbo story. This is an IoT story. The article frames Yarbo as an extreme example of a widespread problem: consumer and commercial connected devices — from robot vacuums to industrial equipment — are frequently shipped with security practices that would fail any reasonable enterprise review. The physical dimension of this case — bladed machinery that can be remotely redirected — makes the risk concrete in a way that network-only vulnerabilities do not.

Relevance for Business

The Yarbo case is most directly relevant to SMBs in three ways.

First, any organization deploying connected physical devices — on premises, in facilities, or in customer environments — faces the same underlying risk pattern. The question is not whether your robotic or IoT devices are as poorly secured as Yarbo’s; the question is whether you have verified their security posture through your own due diligence rather than assuming the manufacturer’s claims are accurate.

Second, the network exposure extends beyond the device itself. Yarbo’s vulnerabilities allowed extraction of Wi-Fi passwords and home network access points. In a commercial setting, a compromised connected device is a potential entry point into your broader network. Connected physical devices should be treated as potential network security risks, not just hardware purchases.

Third, the supply chain and transparency issue is notable: Yarbo markets itself as a US company but operates from China, has no security disclosure infrastructure, and attempted to impose non-disparagement agreements on reviewers. When evaluating IoT and robotics vendors, the presence of a security disclosure program, a bug bounty mechanism, and transparent ownership is a basic due diligence requirement — not a nice-to-have.

Calls to Action

🔹 Audit any connected physical devices currently operating in your facilities — robotic equipment, IoT sensors, smart locks, environmental controls — and verify their security posture against basic standards: default password policies, remote access architecture, firmware update practices.

🔹 Isolate connected devices on separate network segments rather than allowing them to share credentials or access points with core business systems.

🔹 Add security disclosure program and bug bounty existence to your vendor checklist for any IoT or robotics purchase — their absence is a meaningful red flag about a vendor’s security culture.

🔹 Treat country-of-manufacture and actual ownership transparency as procurement factors, particularly for devices with cameras, GPS, or network access in sensitive environments.

🔹 Monitor CVE disclosures relevant to devices already deployed — the Yarbo vulnerabilities were published without prior vendor remediation, meaning organizations may not receive proactive notification. Assign responsibility for tracking disclosures for all networked physical devices in your environment.
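The audit and segmentation steps above can start as a simple inventory check. The following is a minimal sketch, not a security tool: the network ranges, device records, and field names are all hypothetical, and the answers to each question would have to come from your own verification or from the vendor.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network

# Hypothetical address plan: core business systems and an isolated IoT segment.
CORE_NETWORK = ip_network("10.0.0.0/24")
IOT_NETWORK = ip_network("10.0.50.0/24")


@dataclass
class Device:
    name: str
    ip: str
    unique_password: bool  # no shared or factory-default credential
    remote_access_can_be_disabled: bool
    vendor_has_disclosure_program: bool


def audit(device):
    """Return a list of findings for one device; an empty list means no flags."""
    findings = []
    addr = ip_address(device.ip)
    if addr in CORE_NETWORK:
        findings.append("shares a network segment with core systems")
    elif addr not in IOT_NETWORK:
        findings.append("not on the designated IoT segment")
    if not device.unique_password:
        findings.append("uses a default or shared password")
    if not device.remote_access_can_be_disabled:
        findings.append("vendor remote access cannot be disabled")
    if not device.vendor_has_disclosure_program:
        findings.append("vendor has no security disclosure program")
    return findings


# Illustrative inventory, not real devices.
inventory = [
    Device("lobby-camera", "10.0.50.12", True, True, True),
    Device("yard-robot", "10.0.0.77", False, False, False),
]

for device in inventory:
    issues = audit(device)
    status = "OK" if not issues else "; ".join(issues)
    print(f"{device.name}: {status}")
```

Even a spreadsheet with these five columns would serve the same purpose. The value is in forcing an explicit answer for every connected device instead of relying on the manufacturer's claims.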

Summary by ReadAboutAI.com

https://www.theverge.com/tech/925696/yarbo-robot-lawn-mower-hack-remote-control-camera-access-mqtt: May 15, 2026

Microsoft’s Legal Agent: AI Enters the Contract Review Workflow — With Caveats

The Verge | May 1, 2026

TL;DR: Microsoft has launched an AI agent inside Word specifically for legal contract review — a narrow, workflow-specific tool built on acquired talent, not general AI — and its arrival signals that agentic AI is moving into professional services faster than most firms have planned for.

Executive Summary

Microsoft has released a Legal Agent inside Word, currently limited to participants in its Frontier program in the United States. The tool is designed to review contracts clause by clause, track negotiation history, flag risks and obligations, and work with documents that already contain tracked changes. According to Microsoft, the agent follows structured legal workflows rather than relying on open-ended AI interpretation — a design choice that positions it as a specialized tool rather than a general assistant applied to legal documents.

The origin of the technology matters: Microsoft built this on the work of engineers from Robin AI, a venture-backed startup that failed despite significant investment in AI-powered contract review. Acquiring talent from failed specialized AI startups and embedding their capabilities into existing enterprise platforms is becoming a standard Microsoft playbook. For the engineers, it removes the risk of competing against Microsoft’s distribution advantage; for Microsoft, it supplies a credible technical foundation. Leaders should watch for this pattern across other professional domains.

The article is brief and leans toward announcement coverage, so the business framing requires some editorial inference. The key question the article doesn’t fully answer: what is the liability posture when the Legal Agent misreads a contract clause? That question is unresolved and will matter significantly as adoption scales.

Relevance for Business

For any SMB that relies on contract review — vendor agreements, client terms, employment contracts, lease agreements — this development is directly relevant. It signals that AI-assisted legal review is moving from experimental to embedded, with Microsoft’s distribution ensuring rapid, often invisible, deployment to organizations already using Microsoft 365.

The risk is not that the tool is bad. It may be quite good for routine, lower-stakes document review. The risk is deploying it without updated policies around AI-assisted legal decisions: Who reviews the AI’s output before a contract is signed? What is the error liability when the agent misses an obligation? Is your organization’s legal counsel aware that this tool is being used in your Word environment?

For SMBs without in-house legal teams, the temptation to use a tool like this as a substitute for legal review — rather than a supplement to it — is real and should be explicitly addressed in policy.

Calls to Action

🔹 If your organization uses Microsoft 365, determine whether Legal Agent features are rolling out to your environment and whether your team is already using them — awareness often lags deployment.

🔹 Establish explicit policy on AI-assisted contract review before the tool reaches your staff: define what it can supplement, what still requires human legal review, and who is accountable for errors.

🔹 For SMBs without in-house counsel, treat this as a prompt to clarify your legal review process generally — AI tools in this space are multiplying, and an undisciplined adoption creates liability exposure.

🔹 Monitor the Frontier program rollout — current access is limited, but broad availability is likely within one to two product cycles. Use the window to prepare governance before deployment.

🔹 Watch how legal professional associations respond — bar associations and legal regulators in several jurisdictions are actively developing guidance on AI-assisted legal work. Their positions will shape the liability landscape for tools like this.

Summary by ReadAboutAI.com

https://www.theverge.com/news/921944/microsoft-word-legal-agent-ai: May 15, 2026

Rave Sues Apple for Anti-Competitive App Store Removal — A Case That Mirrors Broader Platform Power Disputes

Reuters | May 7–8, 2026

TL;DR: A video-sharing app developer has sued Apple for allegedly removing its product to eliminate competition with Apple’s own SharePlay feature — a case that resurfaces persistent questions about App Store power and what recourse smaller developers have.

Executive Summary

Rave, a Canadian developer whose app allows cross-platform shared video viewing, has filed an antitrust lawsuit against Apple in U.S. federal court, alleging its removal from the App Store in 2025 was motivated by competitive self-interest rather than the guideline violations Apple cited. Rave claims Apple removed it after Apple launched its own competing SharePlay feature, and is seeking reinstatement and substantial damages. Parallel legal actions have been filed in Canada, Russia, the Netherlands, and Brazil.

Apple’s public response is direct and serious: the company states the app was removed for repeated policy violations including hosting pornographic and pirated content, and for user reports involving child sexual abuse material. Rave disputes these characterizations. The facts in dispute are severe and unresolved — this is an active legal proceeding, not a settled dispute, and the competing claims cannot be evaluated from public filings alone.

What is not in dispute is the structural dynamic: Apple controls the primary distribution channel for iOS apps in most markets, and that control gives it broad discretion over which competing products survive. This dynamic, explored extensively in the Epic Games v. Apple litigation, remains legally contested and commercially consequential. The Rave case adds another data point to the growing pattern of App Store removal disputes, at a moment when regulatory scrutiny of platform gatekeeping is intensifying in multiple jurisdictions.

Relevance for Business

For SMB leaders, the direct operational lesson is one of platform dependency risk. Any business with revenue or customer engagement tied to a single app marketplace — Apple App Store, Google Play, Amazon Marketplace — faces the same structural exposure Rave is now litigating. The remedy, if any, comes through courts and regulators over years. The more immediate practical question is whether your business continuity would survive a platform removal, and whether you have direct customer relationships that don’t route through a third-party store. This case also reinforces the argument for cross-platform strategies — Rave’s Android and Windows presence meant the company wasn’t entirely eliminated, even after iOS removal.

Calls to Action

🔹 Audit your platform dependencies: if a meaningful share of revenue or customer acquisition runs through a single marketplace or platform, map that exposure and identify what would happen if access were suspended.

🔹 Invest in direct customer relationships — email lists, owned web properties, and direct sales channels reduce platform concentration risk regardless of what happens in app stores.

🔹 If you develop or sell software through the App Store, review Apple’s current developer guidelines with legal counsel — and document your compliance posture in case of future disputes.

🔹 Monitor the outcome of this case and the Epic v. Apple remand — regulatory or judicial changes to App Store policies could affect your distribution economics within 12–24 months.

🔹 No immediate action required for businesses without App Store presence — but file this as a relevant case study in platform governance risk.

Summary by ReadAboutAI.com

https://www.reuters.com/world/rave-files-antitrust-lawsuit-against-apple-over-removal-video-sharing-app-from-2026-05-07/: May 15, 2026

Enterprise AI Won’t Feel Like AI When It Actually Works

Fast Company | Tech | May 11, 2026 | By Enrique Dans

TL;DR: The organizations beginning to extract real value from AI are not deploying better tools — they are redesigning how their companies operate, and the AI they’re building is increasingly invisible infrastructure, not a visible interface.

Executive Summary

This is a substantive opinion piece — part three of a series — making a structural argument about where enterprise AI is actually heading. The core claim: the companies gaining ground are not those with the most sophisticated AI interfaces. They are the ones redesigning workflows, embedding AI into operational processes, and treating it as organizational infrastructure rather than a productivity layer on top of existing work.

The piece draws on McKinsey, Deloitte, Microsoft, Accenture, and Anthropic’s own engineering guidance to support a convergent conclusion: layering AI onto legacy processes doesn’t work. What works is rebuilding operations with AI’s capabilities and constraints built in from the start — persistent context, governance, feedback loops, and memory across functions. The framing shift the author pushes is meaningful: the question is no longer “what should I ask the AI?” but “what does the system need to already know before any question is asked?”

The competitive divide the author anticipates is sharp: companies that use AI as a visible tool layer versus those that embed it as a systemic capability will diverge in operational outcomes — not gradually, but in ways that may appear sudden when they become visible. The warning for laggards is that by the time the gap is obvious, it will have been building quietly for months or years.

Note: This is a well-argued perspective piece. The directional thesis is well-supported by the cited research, but the author’s certainty about timing and competitive discontinuity should be treated as a reasoned forecast, not a settled outcome.

Relevance for Business

SMB leaders face a practical decision embedded in this piece: whether AI deployment is a tool procurement question or an operational redesign question. Most current enterprise AI spending answers the first question. The research cited here suggests the second is where outcomes actually differ.

The implications for SMBs specifically are real but require nuance. Full-scale operational redesign demands resources, change management capacity, and often architectural IT changes that are harder for smaller organizations to execute. However, the underlying principle — design workflows around AI capabilities rather than attaching AI to existing ones — is actionable at a departmental or process level without enterprise-scale transformation. The risk of inaction is vendor lock-in to tools that produce outputs but don’t change outcomes.

Calls to Action

🔹 Shift the AI conversation internally from “what tools are we using?” to “what workflows are we redesigning?” If the answer to the second question is “none yet,” that’s the gap to close.

🔹 Pilot workflow-embedded AI in one high-friction operational area before expanding. Choose a process where AI can carry persistent context — not just answer one-off questions.

🔹 Be skeptical of vendors selling interfaces. Evaluate AI investments based on whether they change operational outcomes, not on demo quality or feature counts.

🔹 Monitor the McKinsey, Deloitte, and Anthropic research threads cited here — they represent the most credible current evidence on what enterprise AI deployment actually produces.

🔹 Assign someone to track your AI-to-workflow integration ratio. If AI is being used widely but only at the interface layer, you are likely generating outputs without changing outcomes.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91536400/when-enterprise-ai-finally-works-it-wont-look-like-ai: May 15, 2026

AI-Enabled Hacking Has Crossed a New Threshold: Attackers Are Using It to Find Unknown Vulnerabilities

Reuters | A.J. Vicens & Sam Tabahriti | May 11, 2026

TL;DR: Google’s Threat Intelligence Group has documented the first confirmed case of attackers using AI to autonomously discover a previously unknown software vulnerability and build an exploit — a development that signals AI is moving from a hacker research aid to an active component in offensive cyber operations.

Executive Summary

This report from Google’s Threat Intelligence Group describes a confirmed, not hypothetical, development: a prominent cybercrime group used AI to identify a zero-day vulnerability in a widely used open-source system administration tool and construct an exploit. The attack was blocked before it could be deployed at scale, but the significance is in the method, not the outcome. This is the first time Google has publicly documented AI being used in the full vulnerability discovery-to-exploit pipeline.

Google’s chief threat analyst characterized this as likely the tip of a much larger pattern, with criminal organizations and state-linked hacking groups from China, Russia, and North Korea actively integrating AI into attack workflows. The current techniques are described as early-stage, but the trajectory is clear: AI reduces both the time and expertise required to execute sophisticated cyberattacks. Tasks that previously required specialized human analysts — scanning for novel vulnerabilities, generating malicious code, selecting targets — are being delegated to AI systems operating with limited human oversight.

The report does not identify the cybercrime group or the specific tool targeted, which limits independent verification. However, the structural warning is consistent with prior assessments from European financial regulators and other intelligence bodies: AI is asymmetrically lowering the barrier to offensive cyber capability while defenders still largely operate with human-speed response cycles.

Relevance for Business

For SMB executives, this is an urgent operational signal, not a background trend. The practical implications:

Attack surface expansion is accelerating. If AI can now autonomously discover unknown vulnerabilities in widely used open-source tools, the window between a vulnerability existing and being exploited is compressing. Many SMBs rely on open-source components throughout their technology stack — often without systematic inventory or patch monitoring.

Security vendor claims need re-evaluation. AI-powered threat detection tools are a growing market. The escalation described here raises the bar for what “adequate” security monitoring means and creates commercial pressure to upgrade, though not every upgrade pitched on the back of it will be warranted.

Calls to Action

🔹 Conduct or commission an open-source dependency audit. Identify which open-source tools are embedded in your technology stack and whether you have visibility into their vulnerability status and patch cadence.

🔹 Accelerate patch and update discipline. The window between a zero-day being discovered and weaponized is narrowing. Manual or ad hoc patching cycles are increasingly insufficient.

🔹 Review your incident response readiness. If a tool you depend on is exploited before a patch is available, what is your containment and recovery plan? If you don’t have a documented answer, this is the moment to develop one.

🔹 Treat this as a vendor conversation trigger. Ask your managed security or IT providers specifically what their approach is to zero-day threat detection — not just known vulnerability scanning.

🔹 Monitor the Google Threat Intelligence Group’s reporting cadence. They have committed to publishing findings in this area; treat their reports as a leading indicator of the threat landscape your defenses will need to address.
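The dependency audit in the first call to action begins with an inventory. As one narrow illustration, the sketch below lists every package installed in the current Python environment using the standard library. It covers only Python packages in one environment, says nothing about the unnamed tool in Google's report, and leaves the comparison against a vulnerability database as a separate step; the function name is hypothetical.

```python
from importlib.metadata import distributions


def installed_packages():
    """Return a sorted list of (name, version) for packages in this environment."""
    seen = {}
    for dist in distributions():
        name = dist.metadata.get("Name")
        if name:  # skip entries with missing or broken metadata
            seen[name.lower()] = dist.version
    return sorted(seen.items())


packages = installed_packages()
print(f"{len(packages)} packages installed")
for name, version in packages:
    print(f"{name}=={version}")
```

Saving this output on a schedule gives a dated record of what was installed when, which is the minimum needed to answer "were we exposed?" once a new vulnerability is disclosed.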

Summary by ReadAboutAI.com

https://www.reuters.com/legal/litigation/hackers-pushing-innovation-ai-enabled-hacking-operations-google-says-2026-05-11/: May 15, 2026

‘It’s Not You, It’s My Startup’ — Founder Mode Is Ending Relationships

Business Insider | Amanda Yen | May 10, 2026

TL;DR: A cultural dispatch from inside the AI boom reveals that a growing cohort of young founders — convinced the window to build is narrow and closing — are deprioritizing or ending romantic relationships as a deliberate strategic choice.

Executive Summary

This is a cultural trend piece, not a technology or business operations story. Its signal for executives is indirect but real: it illuminates the psychology and social costs driving the current generation of early-stage founders, many of whom are in their early-to-mid 20s and building AI-adjacent companies. The median age of Y Combinator participants dropped to 24 in 2025 (from 30 in 2022), and the piece captures what that shift looks like on the ground — founders who frame personal relationships as competing resources against startup momentum, treating emotional bandwidth and financial capital as zero-sum.

The behavior being described is not fringe. Dating coaches, startup psychologists, and the founders themselves describe a self-reinforcing logic: the AI boom feels like a narrow window, which justifies extreme focus, which crowds out sustained personal investment, which in turn shapes how these founders approach hiring, teams, and leadership. The piece is anecdotal and first-person in places, but the pattern it identifies aligns with documented founder psychology research.

The second-order implication is worth noting. Founders who operate this way tend to build cultures in their own image: high output, low tolerance for ambiguity, and a preference for quantification over relationship management. If you’re hiring from this cohort, partnering with their companies, or competing against them, understanding their operating logic has practical value.

Relevance for Business

For SMB leaders, this piece is most useful as a talent and partnership lens. If you’re evaluating early-stage AI startups as vendors, partners, or acquisition targets, the cultural signals here matter: extreme founder focus can accelerate early execution but often creates succession risk, burnout cycles, and culture fragility at scale. If you’re recruiting from this talent pool or managing Gen Z employees shaped by this ethos, it’s worth understanding the values — and limits — they carry in.

Calls to Action

🔹 Monitor, don’t act. This is a cultural observation, not an operational directive. File it as useful context on the psychological profile of the current founder cohort.

🔹 Apply to vendor/partner evaluation. When assessing early-stage AI startups, factor in founder sustainability and team depth — not just product capability.

🔹 Consider talent culture implications. If you’re managing or hiring people who embrace “monk mode” productivity norms, build explicit structures for collaboration and communication that don’t depend on individual emotional availability.

🔹 Deprioritize as a standalone business story. The article is a cultural essay with limited direct operational relevance. Read the original if the topic is personally or organizationally relevant; otherwise, this summary is sufficient.

Summary by ReadAboutAI.com

https://www.businessinsider.com/hot-new-breakup-line-startups-founder-mode-2026-5: May 15, 2026

Logitech Bets on AI-Enabled Hardware and Business Customers Despite Macro Headwinds

Reuters | May 8, 2026

TL;DR: Logitech is increasing R&D and marketing investment to capture AI-hardware demand from business customers and gamers — a confident forward posture, though supply chain pressure from Middle East disruptions is creating near-term friction.

Executive Summary

Logitech CEO Hanneke Faber announced plans to sustain elevated spending on product development and go-to-market efforts this fiscal year, targeting AI-enabled peripherals, gaming, and enterprise customers as the company’s primary growth vectors. The company projects 2–4% sales growth in constant currencies, reflecting cautious optimism even as geopolitical disruption, specifically Middle East logistics bottlenecks, trims an estimated $15 million from the current quarter’s revenue on top of a $5 million hit in the previous quarter.

The strategic logic is straightforward: enterprise hardware refresh cycles are accelerating as companies reinvest after recent strong earnings, and AI-optimized peripherals (webcams, headsets, keyboards tuned for AI-assisted workflows) represent an emerging product category where Logitech has established distribution advantages. Gaming remains a resilient segment due to demographic tailwinds. The company also cites 78% recycled plastic content as a cost shield against oil-price-driven input inflation, an operationally meaningful detail rather than just an ESG talking point.

This is largely a company-framed investor communication. The AI hardware opportunity is real but early; what exactly “AI-enabled devices” means at the peripheral level is still being defined by the market.

Relevance for Business

For SMB executives managing office technology budgets, this signals that AI-optimized peripheral hardware is becoming a defined product category, not a future aspiration. Procurement decisions made in the next 12–18 months may need to account for whether standard peripherals will integrate smoothly with AI-assisted workflows on platforms like Microsoft Copilot or similar tools. Logitech’s increased R&D spend suggests new product launches are likely — it may be worth deferring large hardware refresh purchases by a cycle to see what ships. The Middle East supply disruption is also a reminder that global hardware supply chains remain vulnerable to regional instability, even for non-semiconductor goods.

Calls to Action

🔹 Hold off on large-scale peripheral hardware refreshes if your current equipment is functional — new AI-optimized devices are likely in the pipeline within 12 months.

🔹 Add “AI compatibility” to your hardware procurement checklist — ask vendors how their peripherals integrate with your AI productivity stack before purchasing.

🔹 Flag supply chain risk if your business sources or distributes hardware through Gulf region logistics routes — disruption affecting Logitech may indicate broader exposure.

🔹 Monitor Logitech’s product announcements over the next two quarters if video conferencing, gaming peripherals, or enterprise hardware are significant line items in your budget.

🔹 No urgent action required — this is a vendor strategy story with medium-term relevance; watch and reassess at your next hardware planning cycle.

Summary by ReadAboutAI.com

https://www.reuters.com/business/logitech-ceo-plans-boost-spending-rd-marketing-2026-05-08/: May 15, 2026

AWS Suffers Second Major Overheating Outage in Months, Raising Infrastructure Reliability Questions

Reuters | May 7–8, 2026

TL;DR: An overheating failure at a single AWS data center in Virginia disrupted services for multiple businesses — including Coinbase — and represents the second such incident in recent months, pointing to a structural infrastructure risk tied directly to rising AI compute demand.

Executive Summary

A temperature spike at an AWS facility in northern Virginia knocked out power to one of its Availability Zones, causing service disruptions for downstream customers and requiring hours of recovery. The immediate cause was a cooling failure; AWS rerouted traffic away from the affected zone and brought supplemental cooling capacity online, though restoration took longer than anticipated. Coinbase, among the affected platforms, confirmed services were restored.

The more consequential signal is the pattern: this was the second overheating-driven outage in recent months, following a cooling failure at a CyrusOne data center that disrupted CME Group in November 2025. The underlying driver is AI-related compute density: modern AI workloads generate significantly more heat per rack than conventional cloud infrastructure, and cooling systems across the industry are under pressure they were not originally designed to handle. Data center operators are actively shifting toward liquid and specialized coolant systems, but that transition is capital-intensive and ongoing.

For businesses relying on cloud services, this event is a reminder that cloud resilience is not automatic. AWS’s Availability Zone architecture is designed to isolate failures — but workloads that weren’t configured for multi-zone redundancy experienced real downtime.
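The zone-isolation point can be made concrete with a small sketch. It assumes you can list each workload along with the Availability Zones where it has live capacity; the `Workload` type, the inventory, and the zone labels below are hypothetical illustrations, not AWS APIs or real resource names. The check flags critical workloads that would go down if a single zone failed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    critical: bool
    zones: frozenset[str]  # zones where this workload has live capacity


def survives_zone_loss(workload: Workload, failed_zone: str) -> bool:
    """True if the workload still has capacity somewhere after one zone fails."""
    return bool(workload.zones - {failed_zone})


def at_risk(workloads: list[Workload], failed_zone: str) -> list[str]:
    """Names of critical workloads that would go down with the failed zone."""
    return [
        w.name
        for w in workloads
        if w.critical
        and failed_zone in w.zones
        and not survives_zone_loss(w, failed_zone)
    ]


# Hypothetical inventory; names and zone labels are illustrative only.
INVENTORY = [
    Workload("payments-api", True, frozenset({"zone-a", "zone-b"})),
    Workload("order-db", True, frozenset({"zone-a"})),
    Workload("internal-wiki", False, frozenset({"zone-a"})),
    Workload("reporting", True, frozenset({"zone-b"})),
]

print(at_risk(INVENTORY, "zone-a"))  # ['order-db']
```

In this toy inventory, only `order-db` is both critical and confined to the failed zone. A real review would pull the workload list from your cloud console or IT provider rather than a hand-written table.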

Relevance for Business

Any SMB whose operations depend on AWS-hosted services — SaaS platforms, payment systems, internal tools — should treat this as a practical prompt to review business continuity assumptions. Cloud availability is typically high, but not unconditional, and vendors’ SLAs rarely cover all categories of lost revenue or operational disruption. The frequency of large-scale outages — CrowdStrike in 2024, AWS in October 2025, now this — suggests that infrastructure risk deserves a permanent seat in operational planning, not just a checkbox in vendor contracts. The AI-driven heat problem also signals rising data center operating costs industry-wide, which may eventually affect cloud pricing.

Calls to Action

🔹 Review your AWS architecture (or ask your IT provider to do so) — verify which critical workloads are configured for multi-zone redundancy and which are not.

🔹 Check your SaaS vendors’ infrastructure dependencies: if key business tools run in AWS us-east-1 (Northern Virginia), assess their redundancy posture and historical uptime.

🔹 Revisit your business continuity plan for cloud service disruptions: what is the fallback, and how long can operations sustain reduced access to cloud-based tools?

🔹 Do not overreact: AWS outages remain rare relative to overall uptime, and multi-cloud hedging carries its own cost and complexity. Calibrate your response to actual risk exposure.

🔹 Monitor whether the frequency of overheating incidents increases as AI compute density rises — this is a trend to track, not yet a crisis to solve.

Summary by ReadAboutAI.com

https://www.reuters.com/business/retail-consumer/amazon-cloud-unit-says-data-center-overheating-north-virginia-disrupts-services-2026-05-08/: May 15, 2026

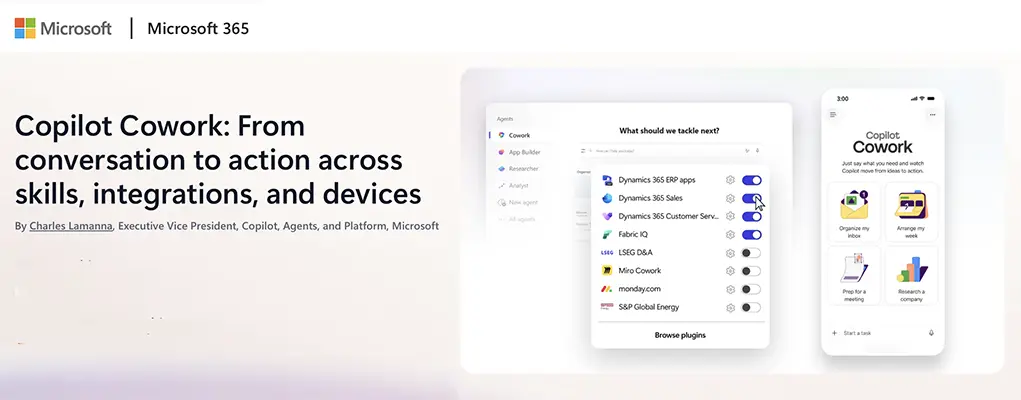

Microsoft Copilot Cowork Expands to Mobile, Adds Skills and Integrations

Microsoft 365 Blog | May 5, 2026

TL;DR: Microsoft is pushing Copilot Cowork from a chat-based assistant toward an AI that executes multi-step work tasks autonomously — now on mobile and connected to third-party business tools.

Executive Summary

Microsoft’s Copilot Cowork, available through its early-access Frontier program, represents a strategic shift in how the company positions AI within the enterprise: less about answering questions, more about completing work. The new capabilities announced include mobile access on iOS and Android, a “Skills” layer that lets teams encode reusable workflows, and integrations with both Microsoft’s own stack (Dynamics 365, Power BI via Fabric IQ) and third-party tools such as monday.com, Miro, LSEG, and S&P Global Energy.

The architectural claim here is significant: Cowork is built on “Work IQ,” Microsoft’s layer that ingests organizational data, tools, and context, meaning the AI acts on knowledge specific to your business, not just the public internet. That is the differentiator Microsoft is staking its position on, and the one most worth evaluating critically, since actual performance depends heavily on how well enterprise data is structured and governed. The Skills feature is the most immediately practical element: it allows teams to capture standard operating procedures as reusable AI instructions, which could meaningfully reduce rework and inconsistency in high-volume, repeatable tasks.

This is a company announcement, written by a Microsoft executive. The capabilities described are real but early, access remains gated through the Frontier program, and Microsoft openly acknowledges it is “still early and moving fast.” Leaders should treat this as a credible preview of where the Microsoft 365 platform is heading — not a production-ready deployment.

Relevance for Business

For SMBs already inside the Microsoft 365 ecosystem, this matters directly. Cowork is not a separate product to buy — it is evolving within the platform you may already use. The vendor lock-in implication is real: as Microsoft deepens AI integration across Teams, Outlook, Dynamics, and Power BI, the cost of switching platforms rises. The Skills layer also introduces a governance question — who owns, audits, and updates the AI workflows your team encodes? That is an internal process question, not a technology question, and it needs an owner. The third-party integrations signal Microsoft’s intent to position Cowork as a cross-tool orchestration layer, which would concentrate significant workflow control in a single vendor.

Calls to Action

🔹 If you’re a Microsoft 365 subscriber, apply for Frontier program access to evaluate Cowork in a limited, controlled use case — prioritize a repeatable, low-risk workflow for initial testing.

🔹 Assign someone to document your team’s most repetitive task sequences now — these are the candidates for Skills automation once Cowork is more widely available.

🔹 Assess your current Dynamics 365 or Power BI footprint — deeper integration with Cowork may change the ROI calculus on those tools.

🔹 Begin an internal conversation on AI workflow governance: who approves, reviews, and retires Skills before they spread across teams?

🔹 Monitor third-party integrations, but do not over-invest in evaluating them until Cowork exits the Frontier early-access stage and general availability timelines are clear.

Summary by ReadAboutAI.com

https://www.microsoft.com/en-us/microsoft-365/blog/2026/05/05/copilot-cowork-from-conversation-to-action-across-skills-integrations-and-devices/: May 15, 2026

AI Hallucinations Are Now a Legal Liability — and Courts Are Feeling the Volume

Fast Company | Tech | May 11, 2026 | By Chris Stokel-Walker

TL;DR: AI is simultaneously flooding courts with more cases filed by non-lawyers and producing fictitious citations in filings by trained professionals — creating a dual pressure on the legal system that researchers say is approaching unsustainable levels.

Executive Summary

Two converging trends are reshaping the legal system. First, AI tools are enabling people without legal training to file civil lawsuits on their own — MIT research shows self-represented civil cases climbed from roughly 11% to 18% of the federal caseload in the post-AI period, while AI-generated text in legal filings rose from near zero to approximately 18% by early 2026. Second, AI hallucinations are producing fabricated legal citations in filings from trained professionals — including a recent incident involving Sullivan & Cromwell, one of the most prominent U.S. law firms, which submitted fictitious case names, fabricated quotes, and incorrect statutory references.

The volume problem is already significant: the number of filings judges must review has risen roughly 158%, though case resolution times have not yet increased materially. Researchers warn that the legal system is absorbing pressure now, but that the runway is shorter than it appears — particularly as AI tools improve and more people realize they can generate legal documents with minimal effort or expertise.

The practical risk for businesses is two-sided. As a potential defendant or counterparty, you may face more AI-generated litigation from unrepresented parties. As a party relying on legal counsel, you face new exposure from AI-generated errors in your own filings if your legal team, internal or external, isn’t applying rigorous human review to AI-assisted work product.

Relevance for Business

For SMB leaders, the implications cut across legal risk, vendor oversight, and governance. Any business with exposure to litigation (contract disputes, employment matters, regulatory filings) should ask whether its legal team has a clear AI usage policy and a human verification protocol for AI-generated content. The Sullivan & Cromwell incident demonstrates that this risk is not limited to small or unsophisticated firms.