AI Updates April 9, 2026

The week’s coverage arrives at a moment when the AI story is splitting in two directions at once. On one side: explosive growth, record investment, and tools that are genuinely changing how work gets done. On the other: a mounting accumulation of governance failures, financial instability, regulatory friction, and evidence that the productivity gains being promised don’t always survive contact with real organizations. For SMB leaders, the practical challenge isn’t picking a side — it’s learning to hold both realities at once, and making decisions that are robust to either outcome.

This mid-week edition’s 28 articles span that full range. You’ll find a deeply reported investigation into whether Sam Altman can be trusted to lead the world’s most consequential AI company, alongside a look at how Disney’s Imagineers are using reinforcement learning to train free-roaming animatronic characters. There’s a cautionary tale about a seven-year FDA clearance effort that ended in shutdown and open-sourcing, and a useful corrective to the “lines of code as productivity” narrative that’s spreading through tech leadership circles. The financial picture for OpenAI and Anthropic (explosive revenue growth, structural losses through the early 2030s, and IPOs that will test public markets’ appetite for that math) deserves particular attention from any executive who has embedded either company’s tools in core workflows.

The through-line, if there is one, is accountability: who bears it when AI-generated content causes harm, when a vendor raises prices after an IPO, when a benchmark score doesn’t translate to real-world performance, when an AI coding tool ships 169 server requests where 7 would do. The organizations that will navigate this period well are those that treat AI adoption as a governance question, not just a capability question — and that build the internal literacy to ask harder questions of their tools, their vendors, and themselves.

Summaries

OpenAI: Three Documents, One Question

On April 6, 2026, three things landed simultaneously: the most damaging investigation into Sam Altman’s leadership ever published, a 13-page policy blueprint calling for a New Deal-scale restructuring of the AI economy, and a high-profile Axios interview in which Altman compared himself to FDR while warning of imminent cyberattacks. The co-arrival of the New Yorker investigation and the policy offensive was not coincidental — it was, as multiple analysts noted immediately, a classic narrative substitution play. When the story is “can this man be trusted,” the counter-move is to make the story “here is this man’s sweeping vision for the future.” Whether that vision is sincere, cynical, or some mixture of both is genuinely unresolvable from the outside. What is resolvable is the pattern: Altman has now publicly advocated for strict AI regulation, then regulation that “does not slow us down,” and now a New Deal. Each position arrived at a moment of political or reputational pressure and delivered a commercial benefit. That track record is itself the due-diligence finding.

For SMB executives, the actionable posture is neither dismissal nor deference. The policy proposals in the blueprint — automated labor taxes, incident reporting requirements, auditing regimes — will shape the regulatory environment your AI vendors operate in, regardless of who authored them or why. The cyberattack warning in the Axios interview reflects OpenAI’s own preparedness tracking and deserves to be acted on independent of the messenger. And the New Yorker investigation is the most thoroughly documented account yet of how OpenAI’s governance actually works — which matters directly if OpenAI products are embedded in your operations. Read all three. Weight them appropriately. And maintain enough vendor optionality that the answer to “what happens if OpenAI’s IPO restructures its priorities or its pricing” is not “we have no idea.”

The timing is not a coincidence. On April 6 — the same day the New Yorker investigation published — OpenAI released a 13-page policy paper titled “Industrial Policy for the Intelligence Age: Ideas to Keep People First,” and Altman gave a high-profile interview to Axios comparing the moment to the New Deal. Multiple critics noted the simultaneous release as transparently strategic.

SAM ALTMAN MAY CONTROL OUR FUTURE — CAN HE BE TRUSTED?

The New Yorker | Ronan Farrow & Andrew Marantz | April 6, 2026

TL;DR: A deeply reported New Yorker investigation — drawing on previously undisclosed internal documents, depositions, and more than a hundred interviews — concludes that Sam Altman has built the world’s most consequential AI company through a consistent pattern of misrepresentation, eroding safety commitments, and strategic manipulation of regulators, investors, and employees.

Executive Summary

The article’s central finding is not that Altman is merely aggressive or ambitious, but that multiple senior colleagues, including OpenAI co-founder Ilya Sutskever and former OpenAI research executive Dario Amodei, independently concluded that he cannot be trusted to lead an organization whose stated mission is to develop potentially civilization-altering technology safely. The evidence assembled includes roughly seventy pages of internal Slack messages and HR documents (the “Ilya Memos”), more than two hundred pages of contemporaneous notes compiled by Amodei, board depositions, and accounts from current and former Microsoft executives. The pattern described is not one dramatic breach but an accumulation: contradictory representations to different stakeholders, safety commitments announced and then quietly abandoned, and a post-firing investigation structured, according to insiders, to produce acquittal rather than accountability. No written report was produced. Altman rejoined the board shortly after being cleared.

The business context has grown significantly more consequential since the 2023 boardroom drama. OpenAI is now reportedly pursuing a trillion-dollar IPO valuation, has secured sweeping government contracts touching immigration enforcement and autonomous weapons, and is constructing AI infrastructure in Gulf autocracies — moves that raised enough national-security concern during the Biden Administration that Altman withdrew from a security-clearance process. The safety infrastructure that originally defined OpenAI has been systematically dismantled: the superalignment team dissolved, key safety leaders resigned, and the company’s most recent IRS filing omitted “safety” from its list of significant activities. On an independent scorecard from the Future of Life Institute, OpenAI now receives an F for existential safety.

The article also documents a significant political pivot. Altman moved from Democratic donor and advocate for AI regulation to a close Trump Administration ally — donating to the inaugural fund, supporting the rollback of Biden’s AI executive order, and positioning OpenAI to absorb Pentagon contracts after Anthropic was blacklisted for refusing to remove limits on autonomous weapons and mass surveillance. The speed and completeness of that transition — and the commercial benefit Altman derived from it — is presented as evidence of the larger pattern: stated principles held until they become inconvenient.

Relevance for Business

For SMB executives, this article matters less as a character study and more as a structural risk briefing about the vendor ecosystem you are building on:

- Vendor governance risk is real. OpenAI’s internal safety architecture has been hollowed out. Leaders who assumed OpenAI’s nonprofit origins signaled unusual accountability now have documented evidence to the contrary. Decisions about AI vendor selection should account for governance quality, not just capability benchmarks.

- Regulatory environment is actively shifting. Altman’s pivot to the Trump Administration, combined with the rollback of Biden’s AI executive order, has reduced near-term federal oversight. That creates short-term operational flexibility but elevates longer-term policy whiplash risk — especially for businesses in regulated industries or those operating internationally under EU frameworks.

- Concentration risk is growing. U.S. AI infrastructure is increasingly dependent on a small number of highly leveraged companies, some of which, by the article’s account and by Altman’s own past statements, may be in a bubble. Dependency on a single AI vendor without fallback options is a material operational risk, particularly as IPO pressures reshape product and pricing priorities.

- The labor and ethics signals matter for hiring. Senior AI researchers continue to exit OpenAI over safety and governance concerns. For companies competing to attract technical talent, the reputational trajectory of major AI labs is relevant to who will work for — and with — you.

Calls to Action

🔹 Audit your AI vendor dependencies. If OpenAI products are embedded in core workflows, map the exposure and identify what a vendor transition would require. Optionality is worth maintaining.

🔹 Separate capability claims from governance claims. When evaluating AI tools and vendors, treat safety and ethics commitments as claims to verify — not features to assume. Ask providers directly about their current safety governance structure.

🔹 Monitor the OpenAI IPO process. A public offering at trillion-dollar valuations will introduce new financial pressures and disclosure requirements. Watch for what the S-1 reveals — and what it omits — about governance, liability, and product safety posture.

🔹 Prepare a regulatory monitoring brief. The policy environment around AI — export controls, military contracting, content liability — is changing faster than most internal compliance calendars. Assign someone to track material changes quarterly, not annually.

🔹 Revisit AI ethics and use policies internally. As AI tools embed more deeply in operations, the governance gap at major AI labs increases the responsibility that falls on business users. A lightweight internal policy on acceptable AI use is now a risk management basic, not an optional governance nicety.

Summary by ReadAboutAI.com

https://www.newyorker.com/magazine/2026/04/13/sam-altman-may-control-our-future-can-he-be-trusted: April 9, 2026

“Industrial Policy for the Intelligence Age: Ideas to Keep People First”

OpenAI | April 6, 2026

TL;DR: Released the same day as the New Yorker’s damaging investigation into Sam Altman, OpenAI’s 13-page policy blueprint calls for sweeping government intervention in the AI economy — but the timing, the source’s obvious self-interest, and the document’s own vagueness demand that leaders read it as agenda-setting rather than policy.

Executive Summary

The document’s core argument is that approaching superintelligence constitutes a disruption comparable to the Industrial Revolution — one that markets alone cannot manage and that requires a new generation of industrial policy. OpenAI organizes its proposals around two pillars: building a more equitable AI economy, and building a more resilient society. The economic proposals include a national public wealth fund giving every citizen a stake in AI-driven returns, taxes shifted from labor toward capital and automated production, portable benefits decoupled from employers, four-day workweek pilots, adaptive safety nets triggered automatically by displacement metrics, and expanded access to AI tools framed as a near-universal right. The resilience proposals include mandatory AI incident reporting to a public authority, independent auditing regimes for frontier models, model-containment playbooks for dangerous systems that can’t be recalled, guardrails on government use of AI, and an international information-sharing network modeled on multilateral safety bodies.

The document is not a policy proposal in the legislative sense; it explicitly frames itself as a conversation starter. Individual proposals are often vague on implementation, sequencing, or enforcement. Several (portable benefits, labor voice mechanisms, public wealth funds) have been debated for years without resolution, and the paper doesn’t engage with why they’ve stalled. The section calling for “mission-aligned corporate governance” at frontier AI companies, including protection against “insider capture” so that no individual can use AI to concentrate power, reads as particularly incongruous given that the New Yorker investigation, published the same day, documents precisely that concern about OpenAI’s own leadership.

The conflict of interest is structural, not incidental. OpenAI is the world’s largest AI company, heading toward an IPO, operating under federal contracts, and shaping the regulatory environment in which it competes. A policy framework designed by that company — however substantive in parts — will inevitably reflect the regulatory perimeter OpenAI finds tolerable. Critics noted immediately that proposals emphasizing voluntary standards, industry-led auditing, and light-touch market regulation create conditions where OpenAI operates with significant freedom under rules it helped define. That does not make every proposal wrong, but it is the essential frame for evaluating any of them.

Relevance for Business

For SMB leaders, this document matters less as a policy roadmap and more as a signal of where the regulatory conversation is heading — and who is trying to lead it. Several proposals, if enacted in any form, would directly affect business operations: taxes on automated labor would change the cost calculus for AI-driven workflow automation; portable benefits mandates would reshape employer obligations; AI incident reporting requirements could extend to companies deploying, not just building, AI tools; and auditing standards for frontier models could propagate downstream into enterprise procurement requirements. None of these are imminent — but organizations that wait for regulations to finalize before thinking through their implications will be behind.

The deeper business signal is that the regulatory vacuum around AI is beginning to fill — not from legislators, but from the industry itself. When the largest player in a market publishes the framework for its own governance, the terms of that framework tend to anchor subsequent debate. SMB executives should understand what OpenAI is proposing and why, because the policy environment their AI vendors operate in will increasingly reflect some version of it.

Calls to Action

🔹 Read the document with the source in mind — treat proposals that limit regulatory scope or concentrate governance authority in industry bodies with particular skepticism, given OpenAI’s direct financial interest in the outcome.

🔹 Flag the tax and labor proposals for your CFO and HR leads — automated labor taxes, portable benefits frameworks, and worker voice mechanisms are early-stage ideas now, but they signal the direction of future compliance obligations for AI-adopting businesses.

🔹 Monitor incident reporting and auditing proposals — if mandatory AI incident disclosure requirements emerge from this debate, they will likely extend to enterprise deployers, not just model developers. Begin mapping what you would need to report and to whom.

🔹 Treat this as a benchmark document — compare it to Anthropic’s policy blueprint (released six months earlier) and watch for where Congressional or regulatory proposals converge with or diverge from the OpenAI framework. The gaps will reveal where genuine policy debate is happening versus where industry consensus is simply being ratified.

🔹 Do not treat vagueness as safety — the document’s exploratory framing means no specific regulation is imminent. But the issues it raises — who benefits from AI productivity gains, who bears the costs of displacement, who audits powerful systems — will not stay exploratory for long.

Summary by ReadAboutAI.com

https://openai.com/index/industrial-policy-for-the-intelligence-age/: April 9, 2026

https://cdn.openai.com/pdf/561e7512-253e-424b-9734-ef4098440601/Industrial%20Policy%20for%20the%20Intelligence%20Age.pdf: April 9, 2026

“OPENAI’S WARNING: WASHINGTON ISN’T READY FOR WHAT’S COMING”

Axios / Mike Allen interview with Sam Altman | April 6, 2026

TL;DR: In a wide-ranging interview timed to his policy blueprint release, Sam Altman warns that the next generation of AI models will be a qualitative leap beyond today’s, that a major cyberattack is likely within the year, and that AI pricing will eventually follow a utility model — while deflecting, with practiced smoothness, every hard question about his own trustworthiness and the conflicts embedded in his policy agenda.

Executive Summary

Altman’s central claim is that the window for preparing society for superintelligence is narrowing faster than governments realize. He describes the coming model generation not as an incremental upgrade but as a transition: where current models help scientists make small discoveries, the next class may enable “the most important discovery of a researcher’s decade.” On productivity, he suggests individuals with advanced models and sufficient compute will soon be able to match the output of entire software teams. These are extraordinary claims, made without supporting evidence — but they come from the CEO of the company building the systems in question, which is itself the governance problem the interview never fully addresses.

The most immediately actionable signal is Altman’s candid assessment of near-term threats. He explicitly states he believes a “world-shaking cyberattack” is possible within the current year, driven by rapidly advancing AI capabilities — and that biosecurity is not far behind. His framing here is notably direct compared to the hedged language elsewhere in the conversation: he is not speculating about a distant future but flagging what OpenAI’s own preparedness framework is actively tracking. He acknowledges that company-level safety measures are insufficient once highly capable open-source biology models exist — a concession that the problem is already partially beyond the industry’s control.

On the policy blueprint itself, Altman is candid that the most politically achievable proposals are the least transformative: energy infrastructure expansion has genuine bipartisan support; the larger structural proposals — wealth funds, labor taxes, four-day workweeks — sit “at the edges of the Overton window.” He frames the blueprint as a conversation-starter rather than a platform, and declines to take ownership of the harder implementation questions. On pricing, he offers the clearest signal of the interview: he expects base AI pricing to continue falling but premium frontier-model pricing to remain elevated and possibly rise as demand for “bigger, smarter” models outpaces supply. For businesses that have built workflows assuming current pricing, this is a material planning consideration.

What the interview does not resolve is the central tension the New Yorker investigation raised on the same day. Asked directly why people should trust him, Altman pivots to a collective argument (“no one person should be making decisions alone”), which is a reasonable institutional principle but not an answer to the personal accountability question. The juxtaposition of that response with an internal document whose first item begins with “Lying” is something no amount of policy framing fully dissolves.

Relevance for Business

Three signals from this interview carry direct operational weight for SMB leaders. First, the cyberattack warning is not generic threat-landscape boilerplate — it is the CEO of the company that builds and sees the attack-enabling capabilities naming this as a live near-term risk his own preparedness team is tracking. If you haven’t reviewed your cybersecurity posture recently, this is the nudge to do it this quarter, not next year. Second, Altman’s pricing comments are the most specific public guidance OpenAI has offered on its commercial direction: falling base prices, rising premium for frontier capability, utility-style billing in the medium term. Any business with significant AI spend or a vendor relationship dependent on current pricing should stress-test that assumption. Third, the interview’s political subtext matters: Altman’s acknowledgment that even staunch free-market Republicans are privately open to rethinking the labor-capital balance in an AI economy is a signal that the regulatory environment is more unstable than current federal inaction suggests, and that SMB executives should treat AI policy as a variable, not a constant.

Calls to Action

🔹 Treat the cyberattack warning as an operational signal, not background noise — review your cybersecurity infrastructure, incident response plan, and vendor dependencies this quarter. Altman is describing a threat his own systems are actively monitoring.

🔹 Stress-test your AI cost assumptions — Altman’s pricing comments suggest base commodity AI costs will fall, but frontier-model access will command a premium. If your workflows are built on current pricing tiers for high-capability models, model the impact of a 30–50% increase in that segment.

🔹 File the productivity claims for your own testing — “a person plus compute doing the work of a whole team” is a vendor’s forward-looking statement, not a demonstrated benchmark. Run your own pilots before making staffing or workflow decisions based on it.

🔹 Monitor biosecurity regulation — Altman’s candid acknowledgment that highly capable open-source biology models are close at hand, and that company-level safeguards won’t be sufficient, is a signal that biosecurity regulation is coming and may intersect with industries — healthcare, pharma, research — that use AI in life-sciences contexts.

🔹 Separate the messenger from the message — the policy blueprint and the interview contain genuinely useful framing for what AI governance could look like. Read them as a map of where the conversation is going, while holding the source’s conflicts of interest clearly in view.
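
For teams that want to act on the stress-test suggestion above, the arithmetic can be sketched in a few lines of Python. This is a minimal illustration, not a budgeting tool: the function name, the split between base and frontier spend, and the dollar figures are all hypothetical placeholders. Only the 30% and 50% scenarios come from the recommendation itself.

```python
def stress_test(base_spend, frontier_spend, increases=(0.30, 0.50)):
    """Project monthly AI spend if only frontier-model pricing rises.

    base_spend and frontier_spend are hypothetical monthly dollar amounts;
    replace them with figures from your own billing data.
    """
    results = {}
    for pct in increases:
        # Apply the price increase to the frontier segment only.
        projected_frontier = frontier_spend * (1 + pct)
        results[pct] = round(base_spend + projected_frontier, 2)
    return results


# Placeholder figures for illustration only.
scenarios = stress_test(base_spend=2000.0, frontier_spend=6000.0)
for pct, total in scenarios.items():
    print(f"+{pct:.0%} frontier pricing -> ${total:,.2f} per month")
```

Swapping in actual invoice totals for the two placeholder figures gives a quick read on how much budget headroom a frontier-model price increase would consume.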

Summary by ReadAboutAI.com

https://www.youtube.com/watch?v=B21KxGs8zDI: April 9, 2026

https://www.axios.com/2026/04/06/behind-the-curtain-sams-superintelligence-new-deal: April 9, 2026

The Sam Altman story this week is ultimately a single question wearing three different outfits: when the world’s most powerful AI company is led by someone whose own senior colleagues documented a pattern of deception, what governance structures actually protect the rest of us, and who gets to design them? Until that question has a better answer than “OpenAI, largely,” every business that has embedded these tools in its operations carries a governance exposure that no terms-of-service agreement resolves.

HOW DISNEY IMAGINEERS ARE USING AI AND ROBOTICS TO RESHAPE THE COMPANY’S THEME PARKS

Fast Company | Chris Morris | April 2, 2026

TL;DR: Disney’s deployment of AI-powered robotics in theme parks — from a free-roaming Olaf to reinforcement-learning-trained animatronics — offers a concrete, well-resourced example of how AI and physical automation are being integrated into high-stakes, experience-critical operations.

Executive Summary

This is a reported feature, largely promotional in framing but substantively informative. Disney’s Imagineering division is using AI in two distinct ways: as a creative acceleration tool (compressing months of concept work into days for experienced designers) and as a training mechanism for physical robotics (reinforcement learning enabling characters like Olaf to navigate real-world movement without constant manual reprogramming). The robotic Olaf debuted at Disney Adventure World in Paris, replicating the character’s on-screen movement through AI-trained motion modeling. Imagineers plan to expand robotic characters globally across parks and cruise ships, with free-roaming interactions and meet-and-greets as the long-term target.

The organizational signal is as important as the technical one. Disney’s Imagineering leadership describes a deliberate cultural shift, away from precedent-based process and toward startup-style opportunity-seeking, enabled by proximity to technology partners like Nvidia and Epic Games (maker of Unreal Engine). The company’s direct relationship with Nvidia’s CEO is explicitly cited as a competitive advantage in a market where GPU access is otherwise constrained. The article is candid that Imagineers see AI as a supplement to human creativity, not a replacement, a claim consistent with the described workflow, where AI ideation is handed to skilled human designers for development.

The animatronics-to-robotics convergence described here — static, bolted characters evolving toward free-roaming, AI-trained mobile robots — represents a meaningful shift in what physical AI deployment looks like at consumer scale, in a setting where safety, reliability, and character fidelity are non-negotiable constraints.

Relevance for Business

For most SMB executives, Disney’s Imagineering operation is not a direct operational analog. The relevant takeaways are more general. First, AI-assisted ideation is already compressing creative timelines at a large enterprise — the “days vs. months” framing from Disney’s own designers is a benchmark worth testing in your own creative workflows. Second, reinforcement learning for physical automation is moving from research into consumer-facing deployment; businesses in hospitality, retail, events, or any physical experience sector should track how this capability scales and commoditizes. Third, Disney’s explicit dependency on a preferred Nvidia relationship as a competitive moat is a reminder that AI infrastructure access is not neutral — organizations with preferential hardware or model access have structural advantages that others will struggle to replicate.

Calls to Action

🔹 Test AI-assisted ideation in your own creative workflows — Disney’s experience suggests skilled practitioners who adopt AI tools see significant compression of early-stage concept time. Pilot this before drawing conclusions.

🔹 Monitor physical AI and robotics developments — If your business involves physical customer experience — retail, hospitality, events, facilities — reinforcement-learning-trained robots are moving toward commercial deployment on a faster timeline than most realize.

🔹 Treat AI infrastructure access as a strategic variable — Hardware availability and vendor relationships are not commodity inputs. Organizations that secure preferred access to compute or model capabilities are building durable advantages.

🔹 Read promotional framing with calibration — This article is largely a Disney-controlled narrative. The “AI as supplement, not replacement” framing is consistent with how most large organizations present AI adoption publicly, regardless of internal reality. Monitor what the workforce implications actually look like over time.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91519970/disney-imagineers-ai-and-robotics-paris-park: April 9, 2026

Really, You Made This Without AI? Prove It.

The Verge | April 4, 2026

TL;DR: At least 12 competing “human-made” certification schemes have emerged to help creators distinguish their work from AI-generated content — but fragmented standards, unenforceable verification, and no regulatory backing mean none of them work reliably yet.

Executive Summary

The proliferation of AI-generated content has created a trust problem with real commercial stakes: audiences increasingly can’t tell human-made from machine-made work, and many platforms aren’t helping. The existing industry standard meant to address this — C2PA content credentials, backed by Adobe, Microsoft, and Google — has largely failed in practice. The core reason is structural: those who profit from undisclosed AI content have no incentive to label it.

In response, a fragmented market of “human-made” certification labels has emerged, each with different eligibility rules, verification methods, and enforcement capacity. Approaches range from honor-system badges anyone can download, to AI detection tools (which experts acknowledge are unreliable), to manual audits of creative process documentation, to blockchain-based provenance certificates. None has achieved critical mass, and even the most rigorous lack regulatory teeth. The deeper problem is definitional: as AI becomes embedded in standard creative tools, “human-made” is increasingly difficult to define, let alone verify. Researchers quoted in the piece suggest the concept of authorship itself may need to be rethought.

The economic incentive cuts both ways. Creators have strong motivation to signal authenticity — human-origin work may increasingly command a premium. But bad actors, including AI content farms generating commercial revenue at scale, have equally strong motivation to misuse whatever label wins out.

Relevance for Business

This is a trust and provenance issue that touches any SMB that commissions, publishes, or purchases creative content — marketing materials, copy, design, photography, video. If a “human-made” standard eventually gains traction (regulatory or market-driven), businesses using AI-assisted content without disclosure could face reputational exposure or procurement friction. Conversely, organizations that can credibly demonstrate human authorship may gain a differentiation advantage in content-saturated markets. For now, no standard is worth adopting at cost — but the direction of travel is clear enough to warrant a policy position.

Calls to Action

🔹 Develop an internal AI content disclosure policy now — not because regulation requires it today, but because being caught without one later carries more risk than having one early.

🔹 Do not invest in any specific “human-made” certification scheme at this stage; the market is too fragmented and no standard has regulatory or platform-level backing.

🔹 Monitor C2PA adoption — if major platforms (Meta, Google, Adobe ecosystems) begin surfacing provenance labels to end users, this moves from background issue to customer-facing one quickly.

🔹 Consider the reputational positioning question: in your market, does “human-made” carry a premium your customers would pay for or respond to? If yes, get ahead of how you’d substantiate that claim.

🔹 Watch for regulatory movement — governments are largely absent from this conversation today, but EU AI Act implementation and similar frameworks may force the issue within 2–3 years.

Summary by ReadAboutAI.com

https://www.theverge.com/tech/906453/human-made-ai-free-logo-creative-content: April 9, 2026

WHY AN AI-AUGMENTED WORKFORCE WILL STILL NEED YOU

Fast Company | R. Justin Martin | March 19, 2026

TL;DR: An experienced AI practitioner and business school professor argues that human judgment, problem framing, and accountability remain irreplaceable — and that organizations succeed with AI when they treat it as a capability multiplier, not a workforce replacement.

Executive Summary

This is an opinion piece by a Wake Forest business school professor with over a decade of applied AI experience. It is openly reassuring in tone — which is both its value and its limitation. The argument is structured but familiar: AI amplifies output without replacing the judgment needed to direct it; foundational skills matter more, not less, when AI handles execution; and organizations that treat AI as a replacement rather than an augmentation tool will underperform those that don’t. The piece is strongest in its observation about abstraction risk: as AI inserts more layers between human intent and final output, the need for verification, contextual oversight, and accountability actually increases rather than decreases.

The author makes a useful distinction between organizations and individuals. At the organizational level, adoption is experimental and uneven — leaders are still calibrating where AI creates value versus risk. At the individual level, the productivity ceiling has shifted upward: employees who use AI tools can now produce what previously required more time or more people. The gap between adopters and non-adopters is therefore growing, even if no one is yet being replaced.

The piece avoids the extremes of both AI doom and AI hype, which is appropriate for its audience. It is, however, a single practitioner’s perspective — not a data-driven analysis — and should be read accordingly. Its core prescription (engage with the tools, maintain critical thinking, expect growing pains) is sound but not particularly novel at this stage.

Relevance for Business

The article reinforces a consistent signal across this week’s coverage: AI changes what humans need to do well, not whether humans are needed. For SMB leaders, the most actionable implication is the abstraction point — as AI-generated outputs become more prevalent in your workflows, verification and quality oversight become more important, not less. Managers who assume AI-generated work requires no review are taking on silent risk. The piece also reinforces the case for treating AI adoption as a gradual organizational learning process rather than a one-time implementation event.

Calls to Action

🔹 Build verification into every AI-assisted workflow — as AI adds layers between intent and output, human review of final work product is a quality control requirement, not an optional extra step.

🔹 Focus AI deployment on capacity expansion, not headcount reduction — SMB leaders who frame AI as a growth enabler will have an easier adoption experience and retain staff trust.

🔹 Invest in problem-framing skills across your organization — as execution becomes more automated, the ability to define problems clearly before deploying AI becomes a primary source of competitive differentiation.

🔹 Expect and budget for a learning curve — the article is correct that organizations learn through trial and error, and allocating time and tolerance for that process will produce better long-term outcomes than demanding immediate ROI.

🔹 Use this piece as a communication tool — its tone and framing are well-suited to sharing with employees who are anxious about AI’s impact on their roles.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91510296/why-an-ai-augmented-workforce-will-still-need-you: April 9, 2026

SILICON VALLEY IS IN A FRENZY OVER BOTS THAT BUILD THEMSELVES

The Atlantic | Matteo Wong and Lila Shroff | April 3, 2026

TL;DR: Leading AI companies are publicly claiming — with limited detail — that AI is now helping build itself, but the reality is piecemeal automation of discrete tasks, not self-improving recursive intelligence, and expert opinion on timelines ranges from measured to resigned.

Executive Summary

This is a well-reported piece that separates genuine signal from industry framing on a topic generating outsized excitement and fear: AI systems that accelerate their own development. The verified facts are modest. Anthropic says Claude writes a large share of its codebase (with unspecified human supervision). OpenAI recently released a model it describes as involved in its own creation and plans to release an “intern-level AI research assistant” within six months — a system that, per the company’s own description, would help with literature reviews and experiment interpretation. Google DeepMind’s AlphaEvolve achieved a 0.7% improvement in data center efficiency and trimmed Gemini’s training time by 1%. These are real, incremental gains — not recursive self-improvement.

What’s missing from current AI research automation is what insiders call “research taste” — the human capacity for creative hypothesis generation, strategic prioritization, and recognizing what’s worth pursuing. DeepMind’s VP of science notes that a bot can optimize things, but needs humans to define what to optimize for. The article usefully notes that the “wild” claims (automated AI research by 2028 per Altman, by 2032 per an AI Futures Project researcher) are speculative and self-interested, while 20 of 25 leading researchers interviewed in an independent academic study identified this area as among the most severe and urgent AI risks.

The article’s sharpest observation is that the predictions don’t need to be true to be consequential: even marginal acceleration of AI development outpaces governments’ and institutions’ ability to regulate, compete, or adapt. Policy is already lagging.

Relevance for Business

For SMB leaders, the practical takeaways are calibration and timing. The near-term reality is that AI coding tools are genuinely accelerating software development — a 15–20% productivity gain in research-heavy technical organizations is documented and meaningful. The longer-term scenario — AI that autonomously drives its own capability improvements — remains unbuilt and dependent on breakthroughs that have not arrived. Leaders should resist both the hype (imminent AGI) and the dismissal (this is just chatbots) and instead focus on what is demonstrable today. The governance concern is real and worth monitoring: if AI development does meaningfully accelerate, regulatory frameworks will lag further, increasing uncertainty for businesses operating in regulated industries.

Calls to Action

🔹 Treat claims about self-improving AI as forward-looking speculation, not operational fact — the current reality is targeted automation of discrete research tasks, not autonomous AI development.

🔹 Take the productivity signal seriously: AI coding and research assistance tools are producing documented efficiency gains in technical workflows — explore where analogous gains exist in your operations.

🔹 Monitor how leading AI companies describe their own automation progress — the gap between their public claims and what they’ll confirm on record is itself a signal worth tracking.

🔹 Factor accelerating AI development into your planning horizon: even incremental improvements to AI research speed will shorten the time between capability generations — compress your technology review cycles accordingly.

🔹 Assign someone to track the governance dimension: legislative and regulatory response to AI development acceleration is lagging, and businesses in regulated sectors should anticipate governance uncertainty as a persistent operating condition.

Summary by ReadAboutAI.com

https://www.theatlantic.com/technology/2026/04/ai-industry-self-improving-bots/686686/: April 9, 2026

Why Are Designers, Engineers, and Product Managers in a ‘Three-Way Standoff’?

Fast Company | Grace Snelling | April 2, 2026

TL;DR: Hiring data shows design roles in tech have stagnated since early 2023 while PM and engineering demand has grown, fueling a wider debate about whether AI is structurally shrinking the designer’s role — with real implications for how product teams are staffed.

Executive Summary

Data from tech job tracker TrueUp, surfaced through Lenny Rachitsky’s widely read product newsletter, shows that open design roles across more than 9,000 tech companies have been essentially flat since early 2023, while product manager openings are at their highest point since 2022 and software engineering roles continue to recover. The PM-to-designer demand ratio, which favored designers as recently as mid-2023, has now reversed. The author and industry commentators attribute the shift — cautiously — to AI enabling engineers to prototype and ship faster, reducing both the opportunity and the organizational appetite to route work through traditional design processes.

The debate this data sparked is instructive. Those closer to AI development tend to see the design function as having failed to demonstrate strategic indispensability; defenders argue that human taste and systems thinking will become more valuable, not less, as AI-generated output homogenizes. Marc Andreessen’s framing — that AI now performs well enough as a coder, designer, and product manager that each role believes it can subsume the others — captures the organizational ambiguity that many product teams are already navigating. Anthropic’s chief design officer offered a more measured read: role boundaries are blurring in early-stage development, but remain meaningful in later product stages.

This is opinion-and-data commentary, not a definitive structural analysis. The TrueUp data tracks open postings at tech companies specifically and does not reflect the broader economy, agency work, or non-tech industries.

Relevance for Business

For SMB leaders, the signal is practical: if you are staffing or restructuring a product or marketing team, the traditional design-engineer-PM triad is under pressure, and role definitions are in flux. Engineers with AI tools are increasingly able to produce functional, aesthetically adequate interfaces without dedicated design involvement. That changes the business case for dedicated design headcount, particularly at the early stages of product development. At the same time, businesses that deprioritize design entirely risk output that is functional but undifferentiated — a risk that grows as AI-generated sameness increases across the market. The strategic question is not whether to hire designers, but where in the workflow human design judgment still creates competitive value.

Calls to Action

🔹 Revisit your product team structure — If you haven’t reviewed how design, engineering, and product management interact since AI tools became embedded in workflows, it’s time. Role overlap is real and worth mapping.

🔹 Don’t eliminate design on the basis of trend data alone — The TrueUp data reflects tech-company hiring dynamics; your business context may differ. Evaluate where design creates margin, not just where it creates cost.

🔹 Assess whether AI tools are already changing how your team works — Engineers and PMs may already be performing design tasks informally. Surfacing that is better than ignoring it.

🔹 Monitor the debate, not the hype — This is an active, unresolved conversation among practitioners. Treat vendor claims about AI replacing any of these roles with proportionate skepticism.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91519219/why-are-designers-engineers-and-product-managers-in-a-three-way-stand-off: April 9, 2026

HOW GENERATIVE AI IS QUIETLY RESHAPING OUR WORK CULTURES

Fast Company | Laëtitia Vitaud | April 2, 2026

TL;DR: Beyond productivity and jobs, generative AI is reshaping the deeper fabric of how organizations communicate, build trust, lead, and decide — with a documented gender gap in adoption that leaders should treat as an equity and performance risk, not a side note.

Executive Summary

This is an opinion-forward analytical piece that applies a cross-cultural framework (Erin Meyer’s Culture Map) to trace how generative AI is shifting workplace norms across eight dimensions. The argument is substantive and worth taking seriously, even where it is speculative. The core thesis: AI’s cultural effects are already underway and largely unmanaged. Organizations focused exclusively on productivity and job displacement are missing a slower, deeper transformation.

The most decision-relevant observations for leaders: AI is rewarding explicit communication and eroding the value of contextual, implicit cues — a shift that benefits some communication styles and disadvantages others. AI is also flattening the traditional authority of expertise, since knowledge access is no longer a scarce leadership asset. Decision-making is accelerating in ways that may be outrunning deliberation; the author raises the genuine concern that teams are endorsing AI recommendations without examining them. And trust dynamics are inverting — when polished output becomes universal, human presence and genuine attention become the premium signal, not the quality of the work product itself.

The gender gap finding carries the most direct governance implication. A Harvard Business School meta-analysis cited in the piece found women are 20–25% less likely to use generative AI tools than men. A separate study found that when engineers submitted identical AI-assisted code for review, women received competence ratings 13% lower than men using the same tools — an asymmetric penalty the author frames as a “digital Matilda effect.” If AI adoption is gendered, productivity gains from AI will be distributed unevenly, and organizations that do not account for this will compound existing inequality while believing they are being neutral.

Relevance for Business

For SMB executives, the most immediately actionable signal is the gender adoption gap. If your AI tool deployment is not tracking adoption by role, team, and demographic, you are likely accumulating an invisible performance and equity gap. Beyond that, the cultural shifts described here — toward explicitness, toward relationship-based trust, toward collective decision-making — have direct implications for how you design team workflows, evaluate performance, and structure leadership. The manager whose authority rested on proprietary expertise is losing a structural advantage. The leader who can synthesize, direct, and decide with AI-augmented teams is gaining one.

Calls to Action

🔹 Audit AI tool adoption across your organization — If you are not measuring who is using AI tools and who is not, you cannot manage the equity or productivity implications.

🔹 Address the gender adoption gap proactively — Create structured onboarding and psychological safety for AI tool use; do not assume adoption will equalize on its own.

🔹 Review how AI-generated recommendations are being approved — If your team is routinely endorsing AI outputs without scrutiny, that is a governance risk. Build deliberate review steps into high-stakes workflows.

🔹 Reconsider how trust and performance are signaled internally — As AI-generated work becomes ubiquitous, the markers of genuine effort and human judgment become more valuable, not less. Update how you recognize and reward those qualities.

🔹 Watch for the “frictionless consensus” problem — Teams where AI mediates most communication may be suppressing productive disagreement. Deliberately create space for unfiltered challenge.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91517087/ai-changing-work-culture-gender-gap: April 9, 2026

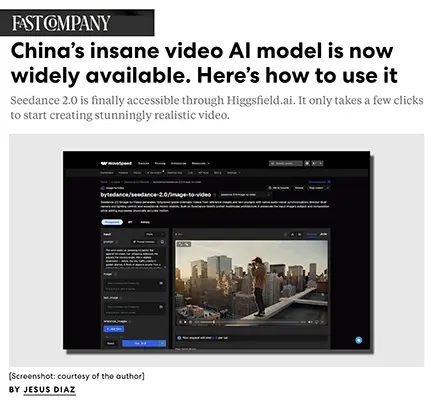

China’s High-Realism Video AI Model Is Now Widely Available. Here’s How to Use It.

Fast Company | Jesus Diaz | April 3, 2026

TL;DR: ByteDance’s Dreamina-Seedance 2.0 — a Chinese-built AI video generator capable of producing cinematic, photorealistic short clips from text and image inputs — is now accessible globally through third-party platforms, at meaningful but manageable per-clip costs.

Executive Summary

This is a how-to article, not an investigative piece, and should be read as such. The core news is that Seedance 2.0, ByteDance’s advanced generative video model, has become broadly accessible outside China through aggregator platforms like Wavespeed and Higgsfield. The model accepts multiple simultaneous inputs (images, video clips, audio), generates clips up to 15 seconds long at up to 2K resolution, and handles character consistency, camera movement, and lip-sync without separate post-production tools. Per-generation pricing at Wavespeed runs roughly $1.80–$2.70 for a five-second clip at 720p–1080p, with no subscription required.

The article is enthusiastic and lightly editorialized (“go down in flames as a species”). What it confirms factually is that high-quality AI video generation — previously accessible primarily to researchers or well-funded production teams — is now available to any small business or individual with a credit card, with results that rival professional production in some narrow use cases.

The strategic signal here is less about Seedance specifically and more about the pace at which AI video generation is becoming a commodity. ByteDance is one of several global players (Google Veo, Kling, others) competing in this space, and access is increasingly mediated by aggregator platforms that commoditize the underlying models.

Relevance for Business

For SMBs, the immediate opportunity is in low-cost content production — product demos, social media video, training clips, ad creative — where photorealism and production polish previously required agency spend or specialized staff. At roughly $1.80–$2.70 per five-second clip (Wavespeed’s 720p–1080p pricing), the economics are accessible for experimentation.

The broader concern is the competitive compression this creates in industries where visual content quality has been a differentiator. When any competitor can generate polished video at marginal cost, the bar for content quality rises without a corresponding barrier to entry. Leaders in marketing, e-commerce, and media-adjacent roles should expect this dynamic to accelerate. Note also that ByteDance (TikTok’s parent) is the developer — data governance and platform dependency questions apply, particularly for organizations with any sensitivity around intellectual property or brand assets.

Calls to Action

🔹 Assign someone to run a small test — one or two video assets for internal use or low-stakes social content — to build organizational familiarity with current AI video capability and cost.

🔹 Evaluate aggregator platforms (Wavespeed, Higgsfield) as a procurement category, with attention to pricing transparency, data handling, and model provenance before committing budgets.

🔹 Review your content strategy in light of AI video commoditization — competitive advantage will increasingly depend on creative direction and brand consistency, not production access.

🔹 Flag the ByteDance provenance if your organization has sensitivity around China-linked vendors — apply existing vendor risk frameworks rather than treating AI tools as a separate category.

🔹 Revisit in 6 months — the model and pricing landscape is changing fast enough that any detailed vendor assessment today will need refreshing.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91520507/seedance-china-video-ai-model-available-in-the-us: April 9, 2026

Baltimore Is Pushing Back Against AI’s Worst Excesses. What Happens Next Could Reshape American Tech.

Fast Company | Sarah Bregel | April 3, 2026

TL;DR: Baltimore has become the first U.S. city to sue Elon Musk’s xAI over AI-generated harmful imagery, while simultaneously resisting new data center construction — a preview of how local governments may increasingly fill the AI governance vacuum left by federal inaction.

Executive Summary

Two distinct but related conflicts are playing out in Baltimore. The first is legal: the city filed suit against xAI, SpaceX, and the X social network, alleging that the Grok chatbot was used to generate 3 million sexualized images in roughly ten days — including approximately 23,000 that researchers identified as depicting minors. This makes Baltimore the first U.S. municipality to sue a major AI company over harmful content generation. Separately, Tennessee teenagers have filed suit over non-consensual image generation involving their likenesses. These cases test whether existing local legal frameworks can reach AI platform behavior in the absence of federal legislation.

The second conflict is infrastructural. Baltimore is already home to 17 data centers and is the 44th-largest data center hub nationally. A City Council bill now proposes a one-year moratorium on new data center construction, citing energy consumption, water usage, and environmental impact disproportionately affecting minority communities. BGE electricity rates have risen 92% since 2010, with two additional rate increases already in 2026. A parallel campaign advocates for converting to public utility power. Johns Hopkins University’s new AI research institute — while not a data center — has drawn protests over tree removal and neighborhood disruption, despite promising roughly $505 million in economic impact and thousands of construction jobs.

The through-line is this: in the absence of meaningful federal AI oversight, local governments and communities are beginning to assert themselves — through litigation, legislation, and public pressure. The legal theories are untested, the moratorium may not pass, and the outcomes are uncertain. But the political dynamic they represent is durable.

Relevance for Business

For SMB leaders, this story has three practical layers. First, data center costs and energy availability are real infrastructure variables for businesses in regions where AI-driven energy demand is straining grids — rate increases in Baltimore are a local example of a national pattern. Second, organizations that use or deploy AI tools producing user-generated or AI-generated visual content should assess their exposure to harmful content liability, particularly in light of how quickly litigation is moving from individual plaintiffs to municipal governments. Third, the regulatory environment is shifting from federal to local, which means AI governance obligations may soon vary meaningfully by geography — a planning consideration for multi-location businesses.

Calls to Action

🔹 Review your exposure to AI-generated content risks — if your platforms, tools, or products involve generative imagery, assess your content moderation policies against the liability theories emerging in these suits.

🔹 Monitor local AI ordinances in your operating cities — the Baltimore moratorium and similar actions in other cities signal a trend toward municipal-level AI regulation that may affect data infrastructure, vendor contracts, or compliance obligations.

🔹 Factor energy cost volatility into AI infrastructure planning — data center energy demand is a documented driver of utility rate increases, and this risk is not limited to Baltimore.

🔹 Track the xAI litigation — while the outcome is uncertain, the legal framing (platform liability for AI-generated harmful content) could set precedents relevant to any business deploying third-party AI tools in customer-facing contexts.

🔹 Assign internal review of vendor agreements for AI tools that generate content — particularly around indemnification clauses and content policy enforcement responsibilities.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91518398/baltimore-fights-back-ai-grok-johns-hopkins-data-institute: April 9, 2026

The Gig Workers Who Are Training Humanoid Robots at Home

MIT Technology Review | Michelle Kim | April 1, 2026

TL;DR: A global at-home gig economy has emerged to supply the physical movement data that humanoid robotics companies need — raising serious questions about data quality, worker privacy, and how long the data supply chain can sustain a multi-billion-dollar industry.

Executive Summary

Companies like Tesla, Figure AI, and Agility Robotics are racing to build humanoid robots capable of navigating real environments. The training bottleneck isn’t compute — it’s physical-world movement data, which simulations can’t replicate accurately enough. The current solution is a rapidly scaling gig workforce: thousands of contractors in Nigeria, India, Argentina, and elsewhere are wearing iPhones on their heads and recording themselves doing household chores. Platforms like Micro1 (Palo Alto), Scale AI, and Encord aggregate and sell this footage to robotics firms. Industry estimates put annual spending on such data at over $100 million and climbing.

The model raises compounding concerns. On quality: researchers note that what constitutes good robot training data is still poorly understood, and the scale of footage makes rigorous quality control difficult. On privacy: workers record intimate domestic spaces, and many have no clarity on how their data is stored, used by downstream clients, or whether deletion requests are honored. On labor: workers are paid at rates that are attractive by local standards — $15/hour in Nigeria, for example — but the work is repetitive, poorly understood by those doing it, and contingent with no guaranteed tenure.

A UC Berkeley roboticist quoted in the article notes that language models trained on internet text consumed the equivalent of 100,000 years of human reading — robots may need proportionally more physical data, and the infrastructure to collect it is still embryonic. The data supply chain for humanoid robots is a long-duration dependency with unresolved quality, consent, and scale questions.

Relevance for Business

For most SMBs, the direct action is limited. But the story carries two useful signals. First, humanoid robots are closer than they appear — $6 billion invested in 2025 alone, with multiple enterprise deployments targeting warehouses and factory floors within the next few years. Businesses in logistics, light manufacturing, and facilities management should begin monitoring what’s deployable, not just what’s announced. Second, this story is an early indicator of emerging regulatory and reputational risk in AI supply chains: the data underpinning these systems raises informed consent and privacy questions that regulators in the EU and elsewhere are likely to examine. Organizations that procure or deploy robotics at scale will eventually be asked about their supply chain.

Calls to Action

🔹 Monitor humanoid robotics deployment timelines if your business operates warehouses, manufacturing, or facilities — this moves from research story to procurement decision within 3–5 years for some sectors.

🔹 Note the data supply chain risk — as AI supply chain scrutiny grows, understanding where your AI tools’ training data came from (and under what consent conditions) may become a compliance question.

🔹 Treat current progress claims with appropriate skepticism — the data collection infrastructure needed is still being built, and leading experts suggest commercially capable general-purpose humanoids remain years away.

🔹 No immediate action required for most SMBs — revisit when leading robotics vendors publish concrete commercial pricing and deployment timelines for your sector.

Summary by ReadAboutAI.com

https://www.technologyreview.com/2026/04/01/1134863/humanoid-data-training-gig-economy-2026-breakthrough-technology/: April 9, 2026

THE AI SKILLS GAP IS ALREADY WIDENING, REPORT SUGGESTS

Fast Company | Moises Mendez II | March 26, 2026

TL;DR: Anthropic’s own research — with the inherent conflict of interest that entails — suggests that workers with sustained AI experience are pulling ahead of those without it, and organizations deploying AI without structured training are generating internal friction rather than productivity gains.

Executive Summary

This is a short article summarizing an Anthropic report on emerging workforce divergence tied to AI proficiency. The core finding: workers with at least six months of hands-on experience using Claude perform better in AI-assisted tasks than those without that history. The implication is a growing competency gap inside organizations — between employees who have adopted AI tools deeply and those who haven’t — and potentially in the labor market between companies that have structured adoption programs and those that haven’t.

Anthropic’s own head of economics is careful to note the report doesn’t yet show broad displacement: no material difference in unemployment rates has been observed for roles where AI can automate core tasks. But he also warns that displacement effects could accelerate quickly — and calls for building monitoring frameworks now, before disruption materializes. This is a measured but meaningful signal from the company building the tool.

The article also cites reporting that teams with uneven AI adoption create internal tension: high-adopters outpace colleagues, workflows become inconsistent, and confusion about responsibilities grows. This is an organizational management problem, not just a skills problem — and it’s already appearing in companies across banking, retail, and professional services.

The source conflict matters here: Anthropic has a clear commercial interest in encouraging wider AI use. The finding that their product’s users perform better than non-users is not independent research. That said, the organizational friction point — uneven adoption causing team-level friction — is consistent with reporting from multiple independent sources.

Relevance for Business

For SMB executives, this article surfaces a talent and operations risk that is already present, not hypothetical. If some employees are using AI extensively while others aren’t, the productivity gap between them is growing — and the workflow mismatches that gap creates are a real management burden. More broadly, this signals that AI adoption is not self-executing: without structured support and consistent deployment, you get uneven results and team friction rather than organizational lift. The competitive implication is that companies that invest in structured AI capability-building now will compound that advantage over time.

Calls to Action

🔹 Audit current AI adoption across your team — identify who is using tools extensively, who isn’t, and whether that unevenness is creating workflow inconsistency or interpersonal tension.

🔹 Build a structured onboarding and practice program for AI tools rather than assuming self-directed adoption will produce consistent results — six months of guided experience appears to be a meaningful threshold.

🔹 Treat the Anthropic report as a directional signal, not settled research — the conflict of interest is real, and independent corroboration of the skills gap finding should be sought before making major workforce decisions based on it.

🔹 Design for the augmentation frame, not the automation frame: the goal for most SMBs is producing more with the same team, not reducing headcount — communicate this clearly to reduce employee anxiety and resistance.

🔹 Begin building a monitoring framework now for how AI adoption is affecting your key roles — tracking output quality, throughput, and role evolution will be more useful than waiting for visible disruption.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91516734/the-ai-skills-gap-is-already-widening-report-suggests: April 9, 2026

Art Schools Are Being Torn Apart by AI

The Verge | Jess Weatherbed | March 31, 2026

TL;DR: Creative institutions are integrating generative AI into curricula over significant student and faculty opposition, signaling that AI literacy is becoming a baseline professional requirement across creative fields — with direct implications for hiring, training, and creative vendor relationships.

Executive Summary

Leading art and design schools — including CalArts, the Royal College of Art, MassArt, and Arizona State University — are incorporating generative AI into coursework, even as the majority of students at some institutions report negative attitudes toward the technology. The institutional rationale is pragmatic: employers increasingly expect AI fluency, and creative professionals who cannot work with or critically evaluate these tools may find themselves sidelined. The curriculum focus is not on wholesale AI adoption but on critical engagement — understanding what the tools can and cannot do, their ethical and legal implications, and how human creativity remains the differentiating input.

The resistance is substantive, not merely sentimental. Students and faculty raise concerns about training data scraped without consent, potential job displacement as companies use AI to reduce creative headcount, and the mismatch between the cost of a creative education and a job market that increasingly values prompt engineering over craft. A 2023 survey at Ringling College found 70% of students held negative views of AI — a data point that predates significant further capability gains and likely understates current anxiety.

The article also illustrates the acceleration of generative AI capability across creative modalities — image, music, video — making it increasingly difficult to predict which creative subfields will face disruption next. The creative labor market is being reshaped in real time, and institutions are making a calculated bet that fluency is a better hedge than resistance.

Relevance for Business

SMB executives who hire creative professionals — designers, writers, video producers, brand and marketing staff — should recognize that the talent market is fragmenting. A portion of recent graduates will arrive with strong AI tool proficiency; others will have principled objections; most will have some exposure but uneven skills. This has implications for onboarding, role definition, and team composition. More urgently, businesses using agencies or freelancers for creative work should be evaluating whether those partners are using AI tools, how, and what that means for quality, originality, and potential copyright exposure. The cost of creative production is falling in some categories; the risk of legal and reputational exposure from AI-generated content is rising.

Calls to Action

🔹 Audit your creative vendor relationships — Ask agencies and freelancers directly whether AI-generated content is part of their output, and under what terms.

🔹 Update job descriptions and hiring criteria — Determine whether AI tool proficiency is a requirement, a preference, or a concern for each creative role you hire.

🔹 Develop an internal AI-in-creative-work policy — Establish what AI-assisted or AI-generated content is acceptable for your brand, marketing, and communications output, and communicate it clearly.

🔹 Monitor copyright developments — The training-data legal landscape is still unsettled. Creative output from AI tools carries ongoing legal uncertainty, especially at commercial scale.

🔹 Recognize the talent divide is arriving now — Build onboarding and evaluation processes that account for varying levels of AI exposure among new creative hires.

Summary by ReadAboutAI.com

https://www.theverge.com/tech/903954/art-schools-generative-ai-education-creative-jobs: April 9, 2026

Does Big Tech Actually Care About Fighting AI Slop?

The Verge | Jess Weatherbed | February 23, 2026

TL;DR: The primary industry standard for labeling AI-generated content (C2PA) is fragmented, poorly implemented, and structurally conflicted — because the companies promoting it are also the ones profiting from the AI content flood it is supposed to address.

Executive Summary

This is an analytical piece — opinion-forward but substantively reported — arguing that Big Tech’s anti-AI-slop posture is largely performative. The central exhibit is C2PA (Coalition for Content Provenance and Authenticity), an industry standard designed to authenticate media as human-made rather than AI-generated. Despite backing from Microsoft, Meta, Google, OpenAI, and others, C2PA suffers from incomplete adoption, easily stripped metadata, inconsistent platform implementation, and a fundamental design limitation: it requires every participant in the media creation and distribution chain to be enrolled, which is not achievable at scale. The article notes X (formerly Twitter) withdrew from C2PA after Musk’s acquisition, removing a major content-distribution channel from the standard entirely.

More pointedly, the article argues that the companies steering C2PA have a direct financial incentive to produce the synthetic content the standard is meant to label — and that this conflict of interest makes robust enforcement structurally unlikely. Meta, OpenAI, and Google are all simultaneously expanding generative AI tools and claiming to support content authenticity. The author characterizes C2PA as functioning more as reputational cover than as a working solution.

The practical takeaway for anyone relying on platform-level protections against AI-generated misinformation is clear: those protections are inconsistent, hard to use, and built by parties whose business interests run counter to meaningful enforcement.

Relevance for Business

For SMBs, this story has two practical dimensions. First, any business that relies on social media content — for marketing, customer research, brand monitoring, or competitive intelligence — should treat AI-generated content as a default risk, not an edge case. Platform-level labeling is unreliable. Second, businesses that produce and distribute original content — text, images, video, audio — should consider how they establish and protect provenance. The credibility gap between AI-generated and human-made content is widening, and audience trust in the authenticity of branded content is becoming a competitive variable. Organizations that can credibly demonstrate their content is human-originated may gain a trust premium over time.

Calls to Action

🔹 Do not rely on platform AI labels for content verification — Treat all digital content from social channels as potentially AI-generated, regardless of labels. Apply editorial judgment accordingly.

🔹 Assess your brand’s content authenticity posture — Consider whether your audience values human-made content, and whether your workflows and vendor relationships can credibly support that claim.

🔹 Evaluate C2PA readiness if you operate in media or publishing — If your business depends on content provenance (journalism, legal, brand protection), track C2PA adoption on your key distribution platforms but do not assume coverage.

🔹 Monitor regulatory developments — Governments may eventually mandate AI content disclosure. Businesses that build disclosure-readiness now will face lower compliance costs later.

🔹 Build internal media literacy — Staff who consume social media for business purposes need frameworks for skepticism. Brief your teams on the limitations of current AI labeling systems.

Summary by ReadAboutAI.com

https://www.theverge.com/ai-artificial-intelligence/882956/ai-deepfake-detection-labels-c2pa-instagram-youtube: April 9, 2026

Chatbots Are Now Prescribing Psychiatric Drugs

The Verge | Robert Hart | April 3, 2026

TL;DR: Utah has authorized an AI chatbot to renew certain psychiatric prescriptions without physician involvement — a narrowly scoped pilot that clinical experts say is unlikely to reach those most in need of care, and may introduce new safety risks in the process.

Executive Summary

Utah has granted Legion Health’s AI chatbot authority to handle prescription renewals for a limited list of lower-risk psychiatric maintenance medications — antidepressants and anti-anxiety drugs already prescribed by a physician — for patients deemed clinically stable. The program, structured as a one-year pilot under state oversight, charges patients $19/month and is positioned as a solution to Utah’s mental health access shortage. The scope is deliberately constrained: no new prescriptions, no controlled substances, no patients with recent hospitalizations or medication changes, and mandatory check-ins with a human provider every six months or ten refills.

Despite the tight guardrails, psychiatrists interviewed by The Verge question the fundamental premise. The patients eligible for this service — stable, already on a treatment plan — are generally those whose physicians are most willing to issue refills without an appointment anyway. The tool may therefore serve the least underserved segment of the population it claims to help. More substantively, clinical experts warn that even routine psychiatric care involves contextual judgment that self-report screening cannot fully replace: patients may game the system, miss side effects, or present signals a chatbot cannot read. Utah’s prior AI prescribing pilot with a different vendor was exploited within weeks of launch, raising questions about system robustness under adversarial conditions.

Legion has signaled national ambitions, stating the service will be available “nationwide 2026.” The regulatory and liability implications of AI-issued prescription renewals scaling across state lines remain largely unresolved. The company’s framing — that this is “the beginning of something much bigger than refills” — is promotional and should be weighed against the limited and contested evidence base for the pilot itself.

Relevance for Business

For SMB executives, this story has two distinct dimensions. First, any business operating in behavioral health, telehealth, or employee wellness should monitor how AI-prescribing pilots develop, both as a potential cost-reduction lever and as a governance and liability signal. Second, and more broadly, this pilot illustrates a pattern: AI being deployed in regulated, high-stakes domains with thin clinical evidence, limited transparency, and expansive commercial ambitions. That pattern is relevant to any organization evaluating AI for sensitive workflows — healthcare, HR, legal, or financial — where the cost of a failure is not just operational but reputational or regulatory.

Calls to Action

🔹 Monitor, don’t act — The Legion pilot is too early-stage and clinically contested to inform vendor decisions. Track outcomes over the one-year term.

🔹 If you provide employee mental health benefits, ask your vendors whether any AI-driven prescription or care automation is embedded in their workflows, and what clinical oversight exists.

🔹 Use this as a governance reference case — Prepare internal criteria for evaluating AI in sensitive or regulated domains: What clinical or legal oversight is in place? Who bears liability? What are the escalation protocols?

🔹 Watch for national expansion — Legion says the service will be available nationwide in 2026. If that proceeds, the regulatory and liability landscape for AI-assisted clinical care will shift quickly.

🔹 Be skeptical of access-to-care framing — When vendors position AI as solving underserved-population problems, assess whether the product design actually reaches those populations or primarily serves the already-engaged.

Summary by ReadAboutAI.com

https://www.theverge.com/ai-artificial-intelligence/906525/ai-chatbot-prescribe-refill-psychiatric-drugs: April 9, 2026

Chatbots Are Struggling With Suicide Hotline Numbers

The Verge | December 10, 2025

TL;DR: A structured test found that most major AI chatbots — including products from Meta, xAI, Character.AI, and Anthropic — failed to provide geographically accurate crisis resources to someone disclosing self-harm, exposing a concrete safety gap with real reputational and regulatory stakes.

Executive Summary

A reporter at The Verge tested more than a dozen AI chatbots by disclosing suicidal ideation and requesting crisis hotline numbers. The results were notably inconsistent. Only ChatGPT and Gemini responded accurately and immediately without additional prompting, providing location-appropriate resources. Most others defaulted to US-centric numbers regardless of the user’s location, refused to engage, or — in one case — ignored the disclosure entirely.

The failure pattern matters beyond the individual products tested. Experts cited in the piece warn that providing wrong or unhelpful crisis information can actively increase risk by introducing friction at the moment a person is most vulnerable. Unlike most AI product failures, this one carries direct human harm potential. The technical fix is not complex — asking for location before responding — but most systems haven’t implemented it consistently.
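For teams that want to see what "asking for location before responding" could look like in practice, here is a minimal Python sketch. It is an illustration under stated assumptions, not how any of the tested chatbots work: the `CRISIS_LINES` directory, the `crisis_reply` function, and the reply wording are all hypothetical, and the directory entries are placeholders that would need to be replaced with numbers verified by a qualified source.

```python
from typing import Optional

# Hypothetical directory keyed by country code. The values are PLACEHOLDERS,
# not real phone numbers; a real deployment would load verified resources.
CRISIS_LINES = {
    "US": "[verified US crisis line]",
    "GB": "[verified UK crisis line]",
}

ASK_LOCATION = (
    "I'm really glad you told me. Which country are you in, "
    "so I can share the right support line?"
)


def crisis_reply(country_code: Optional[str]) -> str:
    """Reply to a distress disclosure, asking for location before giving a number."""
    code = (country_code or "").strip().upper()
    if not code:
        # Location unknown: ask first instead of defaulting to one country.
        return ASK_LOCATION
    line = CRISIS_LINES.get(code)
    if line is None:
        # No verified entry: do not guess a number for another country.
        return (
            "I don't have a verified line for your location. "
            "Please contact local emergency services or someone you trust."
        )
    return f"You can reach {line}. If you are in immediate danger, contact local emergency services."


if __name__ == "__main__":
    print(crisis_reply(None))   # asks for location
    print(crisis_reply("gb"))   # returns the GB placeholder entry
```

The design choice worth noting is the fallback: when the location is missing or unrecognized, the sketch asks or defers rather than returning a default number, which is the US-centric failure the test documented.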

The business implications extend to trust and liability. Companies deploying AI-powered tools in customer-facing, HR, wellness, or employee support contexts face real exposure if those tools handle sensitive disclosures poorly. This is not a distant regulatory risk; it’s a current operational one.

Relevance for Business

Any SMB using AI in roles where employees or customers might disclose distress — HR chatbots, internal helpdesks, customer service tools, mental wellness platforms — should treat this as an active governance issue, not a future one. The gap between stated safety features and actual performance is documented and specific. Regulators and plaintiffs’ attorneys read the same publications your leadership does. Organizations that deploy AI without evaluating crisis-response behavior are taking on reputational and potentially legal risk they may not have assessed.

Calls to Action

🔹 Audit any AI tools currently deployed in employee-facing or customer-facing contexts for how they handle sensitive disclosures — test them directly, not just by reviewing vendor documentation.

🔹 Do not take vendor safety claims at face value — this report shows a consistent gap between what companies say their systems do and what they actually do under testing.

🔹 Establish a clear policy for which use cases AI tools in your organization are — and are not — authorized to handle, especially those touching mental health, HR, or crisis scenarios.

🔹 Assign ownership of AI safety testing to a specific person or function; this task can no longer be handled informally.

🔹 Monitor regulatory developments around AI mental health applications — legislative and liability attention in this area is increasing.

Summary by ReadAboutAI.com

https://www.theverge.com/report/841610/ai-chatbot-suicide-safety-failure: April 9, 2026

It’s Not Easy to Get Depression-Detecting AI Through the FDA

The Verge | Robert Hart | April 2, 2026

TL;DR: A seven-year effort to bring speech-based depression screening AI to market ended in shutdown and open-sourcing after the FDA’s approval framework proved structurally mismatched with how AI systems actually work — a cautionary signal for any business betting on regulated AI health products.

Executive Summary

Kintsugi, a California startup, spent seven years developing AI that analyzes vocal patterns to detect signs of depression and anxiety, positioning it as a scalable complement to existing clinical screening tools. The company pursued FDA clearance through the “De Novo” pathway — designed for novel, low-risk medical devices — but the framework created a fundamental problem: FDA approval assumes a device design that remains fixed, while AI systems are intended to be continuously updated. Regulatory structures built for static hardware do not map cleanly onto adaptive software. Compounding this, government shutdowns delayed review, and the company ran out of funding before securing final approval.