AI Updates March 24, 2026

This week’s briefing points to a more mature and consequential phase of the AI cycle: the conversation is shifting from breakthrough models to operational power. Across these summaries, the real story is not simply that AI tools are improving. It is that AI is becoming embedded in the systems that shape how organizations build products, manage labor, govern risk, secure data, influence behavior, and compete. From enterprise partnerships and agent failures to regulatory clashes, workforce restructuring, infrastructure dependencies, and new AI-assisted development platforms, the pattern is clear: AI is no longer an emerging capability sitting at the edge of the business. It is becoming part of the business environment itself.

Three themes stand out for SMB leaders this week. The first is control: who governs the models, platforms, values, permissions, and vendor relationships that organizations are increasingly relying on. That issue appears in multiple forms across this set — from the U.S. government’s extraordinary move against Anthropic, to healthcare concerns around unauthorized AI use, to agentic system failures, vendor concentration, and rising dependence on a small number of powerful platforms. Even seemingly upbeat stories, such as Google’s expanded AI Studio capabilities, point to the same structural reality: as AI tools become easier to use, they also become easier to build into the organization before governance is fully in place. The strategic risk is no longer just whether AI works, but who controls its behavior, continuity, and boundaries once it does.

The second and third themes are execution and human adaptation. Execution matters because the distance between AI access and AI value remains wide: many organizations now have tools, pilots, and vendor options, but still struggle to translate them into durable returns, secure workflows, or reliable competitive advantage. At the same time, the human dimension is becoming harder to ignore. Workforce restructuring, uneven adoption, culture strain, shifting skill expectations, and oversight burdens all show that AI success is not primarily a technical problem. It is a management problem. The leaders best positioned in this environment will not be the ones chasing every announcement, but the ones building the discipline to evaluate tools clearly, govern them early, and align them with actual business priorities.

Google AI Studio Grows Up: AI for Humans Podcast Signals a Shift From Toy Prototypes to More Complete AI App Building

AI for Humans — March 20, 2026

TL;DR / Key Takeaway: Google’s AI Studio upgrade matters less as a flashy product launch than as a sign that AI-assisted app creation is moving from rough prototyping toward more usable, persistent, and collaborative software building—but the real risks remain platform dependence, product instability, and the widening advantage of large ecosystems.

Executive Summary

The core signal from this AI for Humans episode is that Google is trying to close the gap between AI-assisted coding demos and software that can actually persist, authenticate users, store data, and support collaboration. The hosts focus on new AI Studio capabilities such as Firebase integration, authentication, databases, multiplayer support, persistent sessions, and Next.js compatibility, framing them as a meaningful step beyond lightweight “vibe coding” into more complete application creation. That matters because the bottleneck is no longer just generating code; it is helping non-experts assemble the surrounding infrastructure—data storage, identity, deployment frameworks, and continuity across sessions—without getting lost in technical setup.

The episode also surfaces an important market dynamic: the AI coding stack is consolidating around a few major platforms with deep ecosystems. Google is presented as catching up aggressively, Anthropic is described as shipping useful coding tools quickly, and OpenAI is portrayed as narrowing focus to defend its position in coding and enterprise use cases. Beneath the banter is a serious business point: AI development is becoming less about isolated models and more about integrated workflow environments. The more these platforms bundle tools, hosting, authentication, voice, image generation, and app frameworks together, the more they can lock in users, reduce friction, and shift power toward incumbents with full-stack control.

At the same time, the transcript repeatedly hints at constraints that leaders should not ignore. The hosts themselves question Google product longevity, highlight uncertainty over which OpenAI products will actually be maintained, and point to security and governance problems through Meta’s rogue-agent issues. The larger takeaway is that while AI tools are making software creation more accessible, execution risk has not disappeared—it has simply moved. Instead of asking whether teams can generate code, leaders now have to ask whether they can audit it, secure it, govern it, maintain it, and avoid overcommitting to fast-moving vendors whose priorities may change quickly.

Relevance for Business

For SMB executives and managers, this matters because AI-assisted software creation is becoming more practical for internal tools, lightweight apps, workflow automation, prototypes, and customer-facing experiments. The real value is not that “everyone is a developer now,” but that smaller teams may be able to produce useful digital tools faster and with less specialized labor at the earliest stages.

That said, the episode also points to several business realities. First, ease of building does not remove the need for oversight. Security, scaling, authentication, and data handling still require judgment, even if the setup becomes more automated. Second, vendor dependence is increasing. Choosing Google, Anthropic, or OpenAI is no longer just a model decision; it is increasingly a decision about ecosystem, workflow, and future switching costs. Third, as app creation becomes cheaper, the competitive advantage shifts away from merely producing software and toward identifying the right use case, governing it well, and integrating it into actual operations.

The podcast’s broader collection of side stories reinforces this pattern. Faster video generation, AI-driven design tools, open-source game modification, and agentic tools all point in the same direction: AI is compressing experimentation cycles. But the Meta example and the discussion around product de-prioritization show that governance, reliability, and product continuity may become bigger differentiators than raw novelty.

Calls to Action

🔹 Test AI app-building tools on low-risk internal workflows first, especially for prototypes, dashboards, lightweight client tools, or process automations that do not expose sensitive data.

🔹 Evaluate platforms as ecosystems, not just models—compare hosting, authentication, integration options, pricing, portability, and long-term roadmap risk before committing.

🔹 Assign technical and governance review even for “easy” AI-built tools, especially where customer data, permissions, or external deployment are involved.

🔹 Avoid assuming rapid prototyping equals production readiness; build a checkpoint process for security, scalability, maintenance, and vendor lock-in before wider rollout.

🔹 Monitor which vendors are narrowing focus and which are expanding infrastructure support, because product continuity may matter as much as feature quality over the next 12 months.

Summary by ReadAboutAI.com

https://www.youtube.com/watch?v=gnJ6SD2734Y: March 24, 2026

Google Stitch: Design to Code with AI

https://stitch.withgoogle.com/: March 24, 2026

OpenAI’s First Artist-in-Residence Launches Phyzify to Turn Ideas Into Physical Products

Fast Company — March 13, 2026

TL;DR: Phyzify is an early-stage startup using AI to convert creative concepts into physical goods via automated manufacturing — an interesting signal about where AI-to-physical pipelines are heading, but not yet a business-relevant tool for most SMB operators.

Executive Summary

Alexander Reben, who served as OpenAI’s inaugural artist-in-residence, has launched Phyzify, a pre-seed startup aiming to automate the journey from creative idea to manufactured physical product. The current focus is fabric looms — translating AI-generated designs into woven textiles — with a stated ambition to expand across fashion, music, food, gaming, and other creative categories. The company is also exploring handling backend product-development tasks like domain registration and patent filing.

This is genuinely early-stage. Phyzify has closed a pre-seed round, is working with five creative collaborators, and aims for a consumer product launch within a year. The demo described involves a MIDI controller, a webcam feed, AI-generated pattern options, and a real-time loom — creative and technically interesting, but not yet a scalable commercial platform.

The more substantive signal is philosophical: Reben’s stated intent is to keep humans in the role of asking questions and exercising creative judgment, while AI handles execution. He explicitly pushes back on what he calls “synthetic capitalism” — fully automated product creation and distribution without human involvement. Whether Phyzify executes on that vision is unproven. The investor framing — 2026 as “a huge year” for physical AI — is promotional. The underlying trend it points to (AI shortening the gap between ideation and physical production) is real, though the timeline and accessibility for SMBs remain unclear.

Relevance for Business

This story is most relevant for SMBs in creative, manufacturing, or consumer product industries as a directional signal: the pipeline from digital design to physical output is compressing. Companies that currently rely on long product development cycles, manual prototyping, or outsourced manufacturing should track this category. For most SMB executives today, Phyzify itself is not actionable because it is still pre-product. But the trend it represents warrants a place on your radar.

Calls to Action

🔹 No action required now for most SMBs — Phyzify is pre-commercial and unproven at scale.

🔹 Monitor if you’re in fashion, custom goods, consumer products, or creative services — the AI-to-physical pipeline is shortening and will affect sourcing, prototyping, and production timelines.

🔹 Flag for revisit in 12–18 months when Phyzify or comparable platforms have demonstrated commercial viability and unit economics.

🔹 Track the broader category, not just this company — similar capabilities are likely to emerge from multiple directions (3D printing platforms, AI design tools, on-demand manufacturing networks).

Summary by ReadAboutAI.com

https://www.fastcompany.com/91507430/openai-first-artist-in-residence-launches-phyzify: March 24, 2026

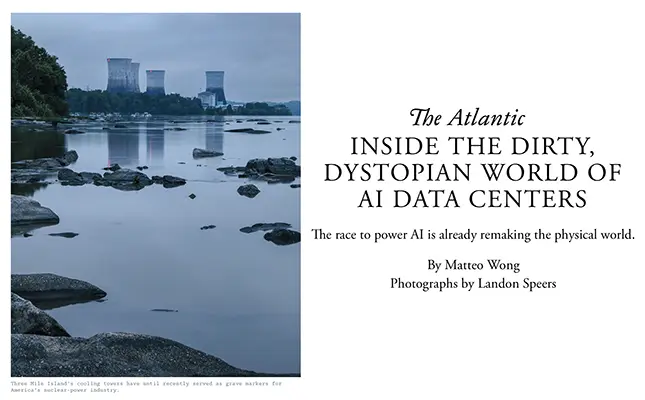

Inside the Dirty, Dystopian World of AI Data Centers

The Atlantic — March 13, 2026

TL;DR: The AI industry’s infrastructure buildout is already driving a measurable increase in fossil fuel dependency, local environmental harm, and grid stress — with costs being externalized onto communities and ratepayers, not AI vendors.

Executive Summary

This is an on-the-ground investigation, not an analyst brief. The Atlantic’s Matteo Wong reports from Memphis, Loudoun County (Virginia), and Three Mile Island to document the physical and environmental footprint of the AI infrastructure race. The core findings are specific and sourced: xAI’s Colossus data center in southwest Memphis consumes as much electricity annually as 200,000 homes, was built in under three months without standard permitting, and sits in a low-income Black neighborhood already carrying a disproportionate industrial pollution burden. Satellite data from University of Tennessee researchers shows elevated nitrogen dioxide near the site since its launch.

At the macro level, the piece surfaces credible projections: by 2030, U.S. data centers may consume more electricity than all heavy industry combined, according to an IEA analyst. Capital expenditures from Amazon, Microsoft, Meta, and Google have exceeded $600 billion since ChatGPT’s launch — more, inflation-adjusted, than the interstate highway system. The default energy source is natural gas, not renewables, and the IEA estimates data center emissions could more than double by 2030.

The piece is careful to surface the counterargument — that nuclear and efficiency improvements could change the trajectory — but is equally clear that “add gas now, add nuclear later” is the current market behavior, not a plan. It also raises a legitimate historical caution: internet-era energy demand projections in the 1990s proved badly overestimated, leaving stranded assets. The generative AI boom could follow a similar pattern — or not.

Relevance for Business

For SMB leaders, the direct operational implication is energy cost and reliability risk. As data centers compete for grid capacity — particularly in Virginia, Texas, Phoenix, Atlanta, and Dallas — regional electricity costs and reliability for businesses in those corridors may be affected. Utilities in Virginia are already projecting 5.5% annual demand growth, with overall demand potentially doubling by 2039.
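As a rough sanity check on those utility figures, the sketch below computes how long a quantity takes to double under steady compound growth. It assumes the 5.5% rate compounds annually and stays constant, which the summary does not state; the function name is illustrative only.

```python
import math


def doubling_time_years(annual_growth_rate: float) -> float:
    """Years for a quantity to double under constant compound annual growth."""
    if annual_growth_rate <= 0:
        raise ValueError("annual_growth_rate must be positive")
    # Solve (1 + r) ** t == 2 for t.
    return math.log(2) / math.log(1 + annual_growth_rate)


# Assumption: the projected 5.5% growth compounds annually and holds steady.
years = doubling_time_years(0.055)
print(f"Doubling time at 5.5% annual growth: {years:.1f} years")
```

Under those assumptions demand doubles in about 13 years, which is broadly consistent with a doubling by 2039 if the projection window starts around 2026. The base year is an assumption here, not a figure from the article.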

There is also a vendor dependency signal: the AI tools your business relies on are built on infrastructure with uncertain cost structures, permitting exposure, and potential regulatory scrutiny. If large AI providers face energy constraints, regulatory backlash, or are forced to internalize environmental costs, pricing and capacity availability for downstream customers could shift. This is not imminent, but it is a real second-order risk to monitor.

Finally, for any SMB with ESG commitments or stakeholder expectations around sustainability, the energy sourcing of AI tools is becoming a legitimate governance question — one that vendor marketing currently obscures.

Calls to Action

🔹 Flag for ESG/sustainability governance if your organization reports on or is evaluated by environmental criteria — AI tool usage is a legitimate scope consideration.

🔹 Do not assume AI infrastructure costs are stable — energy constraints, permitting battles, or forced internalization of environmental costs could affect vendor pricing within 3–5 years.

🔹 Monitor the regulatory environment around data center permitting and emissions — the SELC lawsuit against xAI and state-level scrutiny are early signals of a developing policy front.

Summary by ReadAboutAI.com

https://www.theatlantic.com/magazine/2026/04/ai-data-centers-energy-demands/686064/: March 24, 2026

The Next Phase of AI Must Start Solving Everyday Problems

Fast Company | Matt Rogers | March 16, 2026

TL;DR: The founder of Nest and Mill argues that AI will only achieve durable adoption when it reliably solves practical problems for ordinary people — and that the industry’s current focus on model capabilities and enterprise use cases is delaying the broader transformation AI can actually deliver.

Executive Summary

This is an opinion piece by Matt Rogers, the co-founder of Nest and current CEO of Mill (an AI-powered food waste company). It is advocacy for a specific view of technology adoption, grounded in Rogers’ operational experience rather than independent research. The argument should be read as an informed practitioner perspective, not empirical analysis.

Rogers’ core claim is that technology adoption follows a fixed sequence: consumer education → expanded adoption → societal transformation — and that AI, despite enormous investment, has not yet completed the first stage for most people. He argues that the products most likely to win are not the most technically impressive but the ones that quietly make real-world processes faster, cheaper, and more resilient. His examples — the iPhone’s ecosystem, Nest’s energy management, Mill’s industrial food waste reduction — illustrate products that succeeded by solving a problem people could recognize, not by showcasing technical sophistication.

The implicit critique of the current AI moment is measured but clear: the industry is optimizing for benchmark performance, model launches, and enterprise infrastructure while ordinary people remain unconvinced. Rogers’ own company claims to illustrate the alternative — Mill’s food waste systems have been adopted by Amazon and Whole Foods, which he frames as evidence that AI has crossed a threshold from novelty to industrial utility in at least one domain. That claim is self-serving but not implausible.

The piece ends with a useful provocation: hype fades, models commoditize, and what remains will be determined by whether AI made real problems easier to solve.

Relevance for Business

This is one of the most directly actionable frameworks in this week’s batch for SMB leaders. The education → adoption → transformation sequence gives leaders a practical diagnostic: Where is your organization on that curve? Many SMBs are stuck between curiosity and adoption because no one has made the specific value proposition clear to the people doing the work. Rogers’ argument suggests that AI deployment fails not because the technology isn’t ready, but because the organizational education step is being skipped. The second implication is for vendor selection: tools that quietly reduce friction in known workflows are more likely to generate durable ROI than tools that require significant behavioral change or new mental models. Shiny and powerful is not the same as useful.

Calls to Action

🔹 Audit your AI deployments against actual problem-solving — For each AI tool in use, ask: what specific problem does this solve, and does the user understand that? Tools that can’t answer that question clearly are unlikely to stick.

🔹 Prioritize education before expansion — Before rolling out AI tools more broadly, ensure the use case is understood by the people being asked to use it. Adoption without education produces low utilization and poor ROI.

🔹 Favor boring-reliable over impressive-complex — When evaluating new AI tools, weight real-world friction reduction over feature counts and benchmark performance. The Nest thermostat beat more technically capable competitors by being simpler and more useful.

🔹 Use the commoditization signal — Rogers predicts model capabilities will commoditize. Plan for a future where AI capability is table stakes and competitive advantage comes from how well you’ve integrated it into your specific workflows.

🔹 Assign a “so what” test to every AI initiative — Before committing budget, require a clear answer to: what does this make faster, cheaper, or less risky? If the answer is vague, the initiative is premature.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91508482/the-next-phase-of-ai-must-start-solving-everyday-problems: March 24, 2026

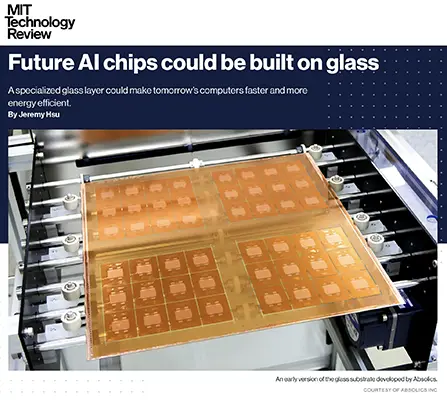

Future AI Chips Could Be Built on Glass

MIT Technology Review — March 13, 2026

TL;DR: Glass substrates are moving from semiconductor research to commercial production in 2026, promising AI chips that are faster, more energy-efficient, and more thermally stable — a supply chain and infrastructure development worth tracking as it matures over the next 3–5 years.

Executive Summary

This is a technology supply chain story, not a product announcement. The core development: Absolics, a subsidiary of South Korean materials company SKC, is beginning commercial production in 2026 at a US facility in Covington, Georgia, of glass-based chip substrates — the foundational layer on which multiple chips are combined into a single computing package. Intel is also pursuing glass substrates in its next-generation chip packaging. The US government’s CHIPS Act provided $175 million in grants to the Absolics/Georgia Tech partnership.

The technical case is substantive: glass substrates offer significantly better thermal stability than the fiberglass-reinforced epoxy that has been the industry standard since the 1990s. The practical benefits include the ability to create 10 times more connections per millimeter, allowing 50% more silicon chips in the same package area, better power routing efficiency, and the potential to use light (rather than copper wire) for signal transmission — which could dramatically reduce energy consumption. The energy efficiency angle is directly relevant to the data center energy crisis documented elsewhere in this batch.

The commercial reality check is also important: glass is fragile. Substrates are only 0.7–1.4 mm thick and prone to cracking in manufacturing. Intel reports it was cracking hundreds of panels per day in early testing. That problem has been substantially mitigated, but manufacturing yield at commercial scale is unproven. Absolics’s current capacity is estimated at 2–3 million chip packages annually, which is significant but modest relative to the scale of AI data center demand. IDTechEx projects the glass substrate market growing from $1 billion (2025) to $4.4 billion by 2036: meaningful growth, but spread over a horizon of roughly a decade.
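To put the IDTechEx projection in annual terms, the sketch below derives the compound annual growth rate implied by the two endpoints quoted above. It assumes smooth compounding over the 11 years between 2025 and 2036, which is a simplification rather than anything the forecast itself states; the function name is illustrative.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by a start value, end value, and span."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("values and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Endpoints from the summary: $1 billion (2025) to $4.4 billion (2036).
rate = implied_cagr(1.0, 4.4, 2036 - 2025)
print(f"Implied annual growth: {rate:.1%}")
```

On these assumptions the implied rate is roughly 14% a year: healthy for a materials market, but gradual compared with the pace of AI data center spending described elsewhere in this batch.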

Relevance for Business

For most SMBs, this is a background infrastructure trend to understand, not act on. The practical relevance is indirect but real: if glass substrates deliver on their energy efficiency and performance promises, they are one of the mechanisms by which AI compute costs could decrease and AI tool performance could improve over the 3–5 year horizon. That affects the cost trajectory of AI services your business relies on.

For SMBs in the semiconductor supply chain, electronics manufacturing, or advanced materials, this is a more immediate competitive intelligence item. A new supply chain ecosystem is forming around glass substrates, with Samsung, LG Innotek, JNTC, and others entering the space, and US government backing through CHIPS Act funding adds a signal of supply chain stability.

Calls to Action

🔹 No immediate action required for most SMBs — this is a 3–5 year infrastructure story with indirect implications for AI compute costs and capability.

🔹 If you’re in semiconductor supply chain, electronics, or advanced materials, add glass substrate development to your technology roadmap monitoring — a new supply chain ecosystem is forming quickly.

🔹 Use this as context when evaluating AI vendor cost trajectories — chip efficiency improvements are one mechanism that could moderate AI infrastructure costs over the medium term.

🔹 Flag for revisit in 12–18 months — Absolics’s first commercial production run and Intel’s packaging announcements will clarify whether the technology scales at acceptable yield rates.

🔹 Pair with the data center energy story (Article 3 in this batch) — glass chips and nuclear energy restarts are both part of the same underlying question: can AI infrastructure costs and emissions be brought under control?

Summary by ReadAboutAI.com

https://www.technologyreview.com/2026/03/13/1134230/future-ai-chips-could-be-built-on-glass/: March 24, 2026

Is This Product ‘Human-Made’? The Race to Establish an AI-Free Logo

BBC News — March 17, 2026

TL;DR: At least eight competing “human-made” certification initiatives have emerged globally, but the absence of a universal standard, inconsistent auditing, and a technically murky definition of “AI-free” currently make these labels more signal than guarantee.

Executive Summary

Backlash against AI-generated content has sparked a cottage industry of certification schemes — including No A.I., AI-free.io, NotByAI, ProudlyHuman, Books by People, and others — aimed at letting creators and brands signal that their work is human-originated. The analogy being pursued is the Fair Trade logo: a trusted, universally recognized mark that carries consumer weight. The publishing and film industries are the most active early adopters, with Faber and Faber applying “Human Written” stamps to select books and film distributors adding “No AI” credits.

The critical problem, which the article surfaces clearly, is fragmentation and verification credibility. Some labels can be downloaded by anyone without any vetting. Others, like aifreecert and ProudlyHuman, require payment, questionnaires, and periodic content auditing. But even the more rigorous systems face a fundamental definitional challenge: AI is already embedded in spell-checkers, grammar tools, image editors, search, and countless software platforms. Determining what truly constitutes “AI-free” creation is not a binary question, and experts quoted in the piece explicitly flag this.

The market signal here is real: as AI content floods channels, human-made provenance is developing economic value. One film distributor quoted in the article frames it explicitly as a pricing premium opportunity. But without a dominant, trusted, audited standard, these labels risk becoming marketing claims rather than verifiable facts — potentially accelerating the trust problem they’re meant to solve.

Relevance for Business

For SMB leaders, this story is most immediately relevant in two directions. First, if your business produces content, creative services, marketing, or professional outputs, the emergence of human-made certification is a positioning and differentiation opportunity worth evaluating now — before a standard is established and the window to be an early adopter closes. Second, if your business purchases content, creative work, or professional services, the question of whether those outputs are human-generated is becoming a legitimate procurement and quality consideration.

There is also a brand and reputation dimension: companies that use AI-generated content without disclosure — particularly in marketing, creative, or advisory contexts — face growing risk as consumer and client expectations around transparency increase. The lack of a universal standard today does not eliminate that risk; it may amplify it if competitors establish credible human-made credentials first.

Calls to Action

🔹 If you produce creative, written, or advisory content as a core offering, evaluate whether a credible human-made certification is a competitive differentiator worth pursuing — the window for early positioning is open now.

🔹 Do not self-certify or use unaudited labels — they provide false confidence and may create liability if challenged; if you pursue certification, use a system with genuine third-party auditing.

🔹 Establish an internal AI disclosure policy for content your organization produces or publishes — define what you will and won’t use AI for, and communicate it proactively.

🔹 Monitor for an emerging dominant standard (the Fair Trade equivalent) — when one emerges, the cost of not being early will rise sharply for brands where human provenance matters.

🔹 For content procurement, add AI usage disclosure as a standard clause in contracts with freelancers, agencies, and creative vendors.

Summary by ReadAboutAI.com

https://www.bbc.com/news/articles/cj0d6el50ppo: March 24, 2026

The Futurist Who Helped Define Tech Trend Reports Just Killed Them (Literally)

Fast Company | Max Ufberg | March 14, 2026

TL;DR: At SXSW, influential futurist Amy Webb declared the annual trend report obsolete and replaced it with a “convergence” framework — arguing that leaders who track individual trends are missing the structural collisions that actually reshape industries, and that the next internet is being built for machines, not people.

Executive Summary

This is a reported feature on a conference presentation, and the primary voice is Amy Webb, a consultant whose clients include Mastercard, Ford, and NASA. Her argument carries practitioner credibility, but it is advocacy for her firm’s methodology, not independent research. That framing matters when evaluating the specific claims — though the underlying structural observations are sound and worth leaders’ attention.

Webb’s core argument is methodological: annual trend reports capture a moment in a landscape moving too fast for annual snapshots. The replacement she proposes is a “convergence” framework — tracking not individual trends but the collisions between them. She identifies AI, energy infrastructure, robotics, biotechnology, and geopolitical competition as forces currently colliding in ways that create structural, often irreversible change. Her meteorological framing is useful: trends are data points; convergences are the storm systems that form when forces combine. Companies that see these storms coming and still fail to act — which Webb argues is the norm — are the target of her warning.

Two convergences are highlighted in the article that carry direct business relevance. The first is the “agentic economy”: as AI systems improve at autonomous task execution, the internet may shift from a search-and-browse model to a delegation model, with digital agents handling purchasing, subscription management, and decision-making on behalf of users. In that world, whoever controls the agents controls the economic gateway. The second is AI’s expanding role as emotional companion and adviser — therapist, dating coach, life guide — raising what Webb frames as an underappreciated dependency risk: people relinquishing decision-making to opaque, profit-driven systems. She also notes, pointedly, that automation’s labor impact may arrive not as sudden mass layoffs but as slow erosion through hiring freezes, attrition, and software absorption of office tasks — harder to see coming, and harder to respond to.

Relevance for Business

For SMB leaders, this article offers two kinds of value. First, Webb’s convergence framework is a genuinely useful planning tool, not because her firm, Future Today Strategy Group (FTSG), should be hired, but because the underlying logic is sound: evaluating AI in isolation from energy costs, geopolitical supply chain risk, and labor market shifts produces incomplete strategy. Leaders who are only tracking “AI trends” may be missing the more consequential structural picture. Second, the agentic economy signal deserves serious attention: if AI agents become the primary interface through which consumers and businesses discover and transact, the companies owning those agents, not the underlying vendors, capture the value. SMBs that depend on search-driven discovery, price comparison, or subscription models should be thinking now about how they show up in an agent-mediated world, not just a search-indexed one. Webb’s warning about AI dependency (users relinquishing agency to opaque systems) is also a governance and brand consideration for organizations deploying AI in customer-facing or advisory roles.

Calls to Action

🔹 Shift from trend-tracking to convergence-watching — Assign someone to monitor how AI intersects with your energy costs, labor market, regulatory environment, and supply chain simultaneously, not in isolation.

🔹 Prepare for the agentic economy — If your business relies on customer discovery through search, comparison, or recommendation, develop a strategy for how you’re represented and prioritized by AI agents making decisions on customers’ behalf.

🔹 Anticipate slow-burn labor displacement — Webb’s framing of gradual erosion through attrition and software absorption is a more accurate model than sudden mass layoffs. Workforce planning should account for this pattern.

🔹 Evaluate AI dependency risk in your own deployments — If you’re deploying AI tools that users rely on for advice, guidance, or decisions, consider what happens when that system is wrong, changes its behavior, or is discontinued.

🔹 Revisit your strategic planning cadence — If your organization still runs annual strategy cycles as the primary response to technology change, the pace of AI development has made that cadence insufficient. Build in quarterly environmental scans.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91507234/amy-webb-trend-report-death-sxsw: March 24, 2026

AI Firm Anthropic Seeks Weapons Expert to Stop Users From ‘Misuse’

BBC | Zoe Kleinman | March 17, 2026

TL;DR: Anthropic is hiring a chemical weapons and explosives policy expert to harden its AI guardrails against catastrophic misuse — a move that signals the AI safety problem is real and still unsolved, while also highlighting a regulatory vacuum that no company or government has yet addressed.

Executive Summary

Anthropic has posted a role for a policy manager with expertise in chemical weapons, high-yield explosives, and radiological dispersal devices. The explicit purpose is to prevent its AI systems from providing users with information that could enable mass-casualty events. OpenAI has posted a similar position, offering a salary nearly double Anthropic’s. Both moves reflect a recognized, non-hypothetical risk: that commercial AI models, without robust domain-specific oversight, can provide technically meaningful assistance toward weapons development.

The article surfaces a significant structural concern: expert critics question whether training AI systems on sensitive weapons information — even to build guardrails — is itself a risk vector. There is currently no international treaty or regulatory framework governing this type of AI safety work. It is proceeding entirely through voluntary, company-led action, with no independent verification that guardrails are effective. The article also provides important context: Anthropic is simultaneously fighting a Pentagon designation labeling it a national security supply chain risk — a consequence of refusing to allow its AI to be used for autonomous weapons or mass surveillance. The combination of proactive safety hiring and aggressive government pushback illustrates how Anthropic is navigating a narrowing middle ground between safety credibility and commercial/political viability.

Relevance for Business

For SMB leaders, the direct operational implication is limited — this is not about your workflows.

The governance signal, however, is important. AI companies are acknowledging that their products pose risks serious enough to require weapons-domain expertise on staff. That is a useful data point when evaluating vendor claims about safety, reliability, and responsible deployment. More practically: the absence of external regulation means vendor self-policing is the only check currently in place. Leaders making AI vendor decisions should ask not just about features, but about what guardrails exist, how they are maintained, and who is accountable when they fail. As AI tools become more capable, the gap between what they can do and what they should do will require active vendor scrutiny — not passive trust.

Calls to Action

🔹 Assign vendor due diligence on safety practices — Review how your primary AI vendors handle misuse prevention, especially in sensitive industries (healthcare, legal, finance, defense-adjacent).

🔹 Prepare an internal acceptable use policy — If you haven’t defined what your employees are permitted to ask AI tools, do so now. Vendor guardrails are imperfect and not a substitute for internal governance.

🔹 Monitor regulatory developments — The current regulatory vacuum will not last indefinitely. Watch for EU AI Act enforcement and US executive action that may impose liability or compliance requirements on AI users, not just providers.

🔹 Treat vendor safety claims skeptically — Safety commitments without independent verification are marketing. Ask vendors what third-party audits or red-team testing backs their safety assurances.

🔹 Track the Anthropic-DoD legal outcome — If the court rules against Anthropic, it will further normalize the position that AI companies cannot impose safety conditions on government use — a precedent with broad implications.

Summary by ReadAboutAI.com

https://www.bbc.com/news/articles/c74721xyd1wo: March 24, 2026

Fake AI Content About the Iran War Is All Over X

WIRED | March 10, 2026

TL;DR: X’s Grok AI actively worsened the information environment during the Iran conflict by misidentifying real footage and generating fake imagery to “support” its incorrect answers — a documented case of a platform’s own AI tool amplifying disinformation rather than checking it.

Source note: Reported journalism with named expert sources and ISD research. Specific examples are verifiable. Read alongside the Verge Netanyahu article (Summary 8) for the full picture of this week’s AI-and-war-disinformation cluster.

EXECUTIVE SUMMARY

When disinformation expert Tal Hagin asked Grok to verify a post about Iranian missile strikes, the chatbot repeatedly misidentified the video’s location and date — then generated an AI image to “prove” its incorrect answer. This is not AI used by propagandists; it is the platform’s own AI generating and spreading disinformation in response to a verification request.

Separately, Iranian officials, pro-regime propaganda networks, and politically motivated U.S. accounts circulated AI-generated war imagery reaching millions of views before removal. An image of a U.S. B-2 bomber being shot down was viewed over a million times; images of Delta Force soldiers allegedly captured by Iran were viewed over five million times. AI detection tools cannot reliably identify AI-generated content, according to a NewsGuard analyst quoted directly. X responded by demonetizing unlabeled AI conflict videos from blue-check accounts but did not disclose how many accounts were actually affected.

Meta’s Oversight Board separately found that Meta’s AI content labeling was “neither robust nor comprehensive enough to handle the scale and speed of AI-generated misinformation, particularly during crises and conflicts.”

RELEVANCE FOR BUSINESS

The Grok failure has a direct enterprise analog. AI tools used for internal research, competitive intelligence, or regulatory monitoring can produce the same failure mode: confidently incorrect answers supported by plausible-looking generated content. The stakes and platform differ; the mechanism is identical to an AI assistant “verifying” a supplier claim or competitor announcement with fabricated supporting detail.

CALLS TO ACTION

▹ Establish a policy that AI tools are not authoritative sources for verification — consequential claims confirmed by AI must be independently validated against primary sources.

▹ Brief your team on the Grok failure mode explicitly — AI that generates supporting “evidence” for wrong answers is a documented risk, not a theoretical one.

▹ Evaluate your team’s information sourcing during fast-moving events — assess how much competitive or market monitoring relies on AI-curated or AI-generated content.

▹ Do not treat blue-check marks, high view counts, or AI-generated images as credibility signals — all three were actively associated with false content in documented examples.

▹ Monitor platform AI labeling policy changes — Meta’s Oversight Board finding of inadequate crisis-condition labeling signals active regulatory pressure that will change how AI content is disclosed across platforms your business uses.

Summary by ReadAboutAI.com

https://www.wired.com/story/fake-ai-content-about-the-iran-war-is-all-over-x/: March 24, 2026

Benjamin Netanyahu Is Struggling to Prove He’s Not an AI Clone

The Verge | March 16, 2026

TL;DR: Debunked deepfake conspiracy theories about Netanyahu couldn’t be definitively put to rest because video authentication infrastructure doesn’t yet scale — illustrating that AI has created a trust deficit that burdens genuine content as much as fake content.

Source note: Reported news feature with editorial commentary. Factual claims are specific; closing passages on the Trump administration reflect the author’s perspective.

EXECUTIVE SUMMARY

Professional fact-checkers at Snopes and PolitiFact debunked claims that Netanyahu’s press conference video was AI-generated (the “extra finger” was a video compression artifact; the nearly 40-minute runtime exceeds current AI video model capabilities). Netanyahu posted a proof-of-life video. That too was immediately accused of being fake. The article’s substantive point: neither clip carries C2PA Content Credentials or SynthID metadata that could verify authenticity or track AI tool use. Platforms that pledged to label AI-generated content provided no such labeling on either clip.

The result: it is now “almost impossible to definitively prove” whether even professionally fact-checked videos are genuine. AI-generated fakes have become credible enough that genuine content now struggles to prove its own authenticity — an authentication infrastructure gap that is symmetric and structural. AI tools can generate convincing video; the infrastructure to authenticate real video does not yet scale.

RELEVANCE FOR BUSINESS

If a head of state cannot authenticate a video of himself within 24 hours, any business whose leadership uses video for communications faces the same structural challenge. The risk is not only that your content could be faked — it’s that authentic content could be accused of being fake, with no reliable mechanism to quickly disprove the claim. Existing tools are not yet equipped to handle this at scale.

CALLS TO ACTION

▹ Investigate C2PA Content Credentials — the emerging standard for embedding provenance metadata in video and image content. Evaluate whether your video production workflow can support it for high-stakes communications.

▹ Establish an executive video authentication response protocol now — before you need it, define how your organization would respond to a deepfake accusation against authentic content.

▹ Do not rely on platform AI labeling pledges as a verification backstop — platforms that pledged AI content labeling provided none on clips subject to active deepfake accusations.

▹ Monitor C2PA and SynthID adoption — authentication standards are being written now; early adoption may become a trust signal, and late adoption a liability.

▹ Treat deepfake defense as a response planning issue, not yet an active attack threat — for most SMBs, the near-term risk is being without a plan when the question arises, not being targeted directly.

Summary by ReadAboutAI.com

https://www.theverge.com/tech/895453/ai-deepfake-netanyahu-claims-conspiracy: March 24, 2026

Anthropic Said No. OpenAI Said Yes. One Weekend, One Decision — and a Masterclass in Brand Building.

Fast Company | March 13, 2026

TL;DR: Anthropic’s refusal to sign the Pentagon’s AI contract produced a measurable, rapid transfer of market share from OpenAI to Anthropic — evidence that in AI, trust and values positioning are now compounding competitive advantages, not just PR.

Source note: Brand strategy opinion piece. Market data (app analytics, revenue run rate) references Bloomberg and app analytics — directionally credible but not independently audited.

EXECUTIVE SUMMARY

The reported figures are striking: ChatGPT U.S. app uninstalls surged 295% in a single day after the Pentagon deal news. Claude downloads jumped 51% in the same window. Anthropic’s app reached No. 1 on the U.S. App Store, gaining 20 positions in under a week. Anthropic’s revenue run rate reportedly doubled — from $9B at end of 2025 to nearly $20B by mid-March. The commercial cost was real: the Pentagon contract was valued at approximately $200 million, and the supply chain risk designation — historically only applied to foreign adversaries like Huawei, never before to an American company — threatens hundreds of millions more in broader government contracts.

The article’s central argument: AI platforms are moving toward a high switching-cost category driven not only by technical lock-in but by what it calls “relational cost” — accumulated context, workflow integration, and organizational trust that deepens over time. Values signals and product signals are becoming inseparable.

Evidence cited: Anthropic’s Claude Constitution (a publicly inspectable training framework, not merely a mission statement) and its Economic Index are described as “operationalized values” — choices with visible cost. A telling data point: Claude holds 32% of enterprise AI usage despite only a 3.5% consumer footprint — framed as a trust premium that preceded and was amplified by the Pentagon incident.

RELEVANCE FOR BUSINESS

The signal for SMB leaders: AI platform decisions are increasingly long-term commitments, not commodity software swaps. As AI tools accumulate organizational context — your workflows, data, and team patterns — switching costs compound. Vendor trust and values alignment are not soft factors; they are risk factors. If your AI vendor’s ethics come under public scrutiny, that exposure travels upstream to every business visibly associated with them.

CALLS TO ACTION

🔹 Treat AI vendor selection as a long-term brand and risk decision — evaluate vendors on governance posture and track record under pressure, not capability alone.

🔹 Audit your current AI vendor associations — assess what it signals to customers, partners, and employees to be identified with a particular AI platform.

🔹 Apply the “operationalized values” test to vendor due diligence — distinguish between companies that articulate safety commitments and those that demonstrate them with visible cost.

🔹 Plan for switching costs before they compound — early in AI adoption, establish vendor criteria that include governance. After deep workflow integration, the cost of changing rises significantly.

🔹 Monitor Anthropic’s legal challenge to the supply chain risk designation — the outcome will clarify whether AI companies can effectively resist government pressure, a precedent with broad industry implications.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91506253/anthropic-said-no-openai-said-yes-one-weekend-one-decision-and-a-masterclass-in-brand-loyalty-anthropic-openai-department-of-defense-brand-loyalty: March 24, 2026

How AI Is Turning the Iran Conflict into Theater

MIT Technology Review | March 9, 2026

TL;DR: AI tools have made it cheap and fast for civilians to build war intelligence dashboards — but these feeds create an illusion of informed situational awareness while spreading inaccuracy, fake imagery, and financially incentivized speculation dressed up as analysis.

EXECUTIVE SUMMARY

Following U.S.-Israel strikes against Iran, a new ecosystem of AI-assembled intelligence dashboards emerged rapidly — some reportedly built in days with minimal technical expertise. These dashboards pull together satellite imagery, ship tracking, news feeds, prediction market links, and AI-generated summaries. The author reviewed over a dozen, including one built by two Andreessen Horowitz-affiliated individuals that attracted the attention of a Palantir founder. Palantir is the platform through which the U.S. military is reportedly accessing AI models like Claude during the conflict.

The article surfaces a critical distinction: assembling data is not the same as understanding it. Intelligence agencies pair raw feeds with experts who supply historical context and proprietary information. These dashboards do neither. Their AI-generated summaries introduce factual errors. The Financial Times documented AI-generated fake satellite imagery spreading during the conflict — and satellite imagery carries high perceived credibility, making fakes particularly damaging.

Digital investigations expert Craig Silverman (tracking 20 such dashboards) describes the core risk as “an illusion of being on top of things” while pulling in undifferentiated signals. A compounding distortion: many dashboards are directly linked to prediction markets (Kalshi, Polymarket) where users bet on conflict outcomes. This creates an incentive structure that conflates financial speculation with analysis and turns active conflict into entertainment.

RELEVANCE FOR BUSINESS

For SMB leaders, the immediate signal is epistemological, not geopolitical. AI-assembled information feels authoritative but is not inherently reliable. The same failure mode — AI aggregates without verifying, summarizes without expertise, presents confidence without warrant — applies to AI-generated market research, competitive intelligence summaries, and news digests your team may already be using. Any business that has integrated AI-generated content without editorial review processes faces this structural problem at smaller scale.

CALLS TO ACTION

🔹 Audit how AI-generated summaries enter your decision-making — identify where your team is treating AI output as verified vs. as a starting point for human review.

🔹 Require primary source backing for consequential decisions — vendor selection, market entry, and competitive strategy should not rest on AI summaries of secondary sources.

🔹 Treat AI-generated imagery with elevated skepticism — credible fake visuals can now be produced at low cost with commercially available, general-purpose tools.

🔹 Do not integrate prediction market outputs into strategic planning — the incentive structure creates deliberate signal distortion, not insight.

🔹 Develop a formal policy for AI-assisted research and competitive monitoring — most SMBs have not yet addressed this governance gap.

Summary by ReadAboutAI.com

https://www.technologyreview.com/2026/03/09/1134063/how-ai-is-turning-the-iran-conflict-into-theater/: March 24, 2026

Is the Pentagon Allowed to Surveil Americans with AI?

MIT Technology Review | March 6, 2026

TL;DR: The Anthropic–Pentagon standoff exposed a genuine legal gap: existing U.S. law may not actually prohibit the military from using AI to conduct mass surveillance on Americans, and the contracts AI companies sign may not close that gap.

EXECUTIVE SUMMARY

The dispute began when the Pentagon sought to use Anthropic’s Claude to analyze bulk commercial data on American citizens. Anthropic refused, citing mass domestic surveillance as a hard limit. Negotiations collapsed and the Pentagon designated Anthropic a supply chain risk — a label historically reserved for foreign adversaries. OpenAI moved in and signed a deal allowing its AI to be used for “all lawful purposes,” triggering a wave of user uninstalls and public protests. OpenAI later amended the contract to explicitly prohibit domestic surveillance.

The deeper issue remains unresolved. Legal experts make clear that U.S. surveillance law has not kept pace with AI capabilities. Much of what people consider surveillance — aggregating social media, location data, browsing records, voter registration files, and commercially purchased data — is not legally classified as surveillance. The Fourth Amendment was written for physical searches; subsequent statutes addressed wiretapping and email. None were designed for AI systems capable of assembling granular behavioral profiles from thousands of individually innocuous data points.

OpenAI’s revised contract language is complicated by the fact that the Pentagon can use AI for any “lawful purpose,” and the law itself is ambiguous about what that permits. One law professor quoted in the article was explicit: companies are largely unable to stop the Pentagon from using contracted technology in whatever way it deems lawful.

RELEVANCE FOR BUSINESS

SMB leaders using AI platforms that also hold government contracts are operating in a trust environment shaped by those contracts’ terms. Vendor ethics decisions are no longer abstract — they carry measurable market consequences. Any business processing personal data using AI tools should be aware that the legal framework governing what governments can do with that same data remains unsettled. This governance gap is directly relevant to risk assessments in regulated industries and enterprise AI procurement.

CALLS TO ACTION

🔹 Review your AI vendor contracts for broad “lawful purposes” clauses or language permitting government use of your data.

🔹 Assign someone to track federal surveillance legislation — active bills could directly affect what data AI vendors are permitted to process on your behalf.

🔹 Include governance posture in AI vendor evaluation — willingness to set hard limits is now a differentiating, market-tested factor.

🔹 Do not assume contract language protects you — legal experts are explicit that contracts do not reliably constrain government use of AI tools.

🔹 Revisit data handling and AI vendor policies quarterly through at least 2027 as this legislative and judicial space evolves.

Summary by ReadAboutAI.com

https://www.technologyreview.com/2026/03/06/1134012/is-the-pentagon-allowed-to-surveil-americans-with-ai/: March 24, 2026

WHERE OPENAI’S TECHNOLOGY COULD SHOW UP IN IRAN

MIT Technology Review | James O’Donnell | March 16, 2026

TL;DR: OpenAI has entered a Pentagon agreement with weaker safeguards than advertised, and its AI may soon assist in active combat targeting, drone defense, and military administration — raising unresolved questions about accountability, oversight, and what AI companies will and won’t do for government contracts.

Executive Summary

OpenAI’s recent Pentagon agreement allows its models to operate in classified military environments, including potential use in the ongoing US conflict with Iran. The stated guardrails — no autonomous weapons, no domestic surveillance — are weaker than they sound: the agreement defers to the military’s own permissive guidelines rather than imposing independent constraints. MIT Technology Review’s reporting treats these assurances skeptically, and that skepticism is warranted.

Three likely use cases are identified: (1) target prioritization — AI assists human analysts in ranking strike targets by synthesizing text, image, and video intelligence; (2) drone defense — through the existing OpenAI/Anduril partnership, conversational AI may be layered onto counter-drone systems to support real-time soldier queries; (3) back-office administration — OpenAI models are already on the GenAI.mil platform for drafting contracts and policy documents. Notably, Anthropic was designated a Pentagon supply chain risk after refusing to allow its AI to be used for “any lawful use” — a designation it is contesting in court. OpenAI negotiated different terms and is now being integrated where Anthropic was not.

The speed and completeness of OpenAI’s pivot from civilian AI company to active military contractor is significant. The practical question — whether human review of AI targeting recommendations actually slows decisions or merely provides political cover — remains unanswered.

Relevance for Business

This development matters for SMB leaders primarily as a vendor transparency and risk signal. The AI tools many businesses use daily are now embedded in active combat systems with contested oversight. Leaders relying on OpenAI or similar providers should understand that vendor ethics commitments are not fixed — they shift with commercial and political pressure. The Anthropic case also illustrates that refusing government use cases carries real business consequences, including supply chain designations that could affect enterprise procurement. For companies operating in defense-adjacent industries, this sets a precedent for what AI providers will negotiate away.

Calls to Action

🔹 Monitor vendor policy shifts — Review your primary AI vendor’s current government use policies; these have changed rapidly and will continue to evolve.

🔹 Assess dependency risk — If your operations depend on a specific AI provider, understand what disruption looks like if that vendor is politically or contractually constrained.

🔹 Prepare an internal AI use policy — As AI is normalized for high-stakes decisions in other sectors, establish your own standards for where AI-assisted decisions require human review and sign-off.

🔹 Track the Anthropic precedent — The outcome of Anthropic’s legal challenge against its Pentagon “supply chain risk” designation may affect how AI companies position ethical constraints in future contracts.

🔹 Ignore for now — The specific military applications described here have no direct operational impact on most SMBs, but the vendor behavior pattern is worth noting.

Summary by ReadAboutAI.com

https://www.technologyreview.com/2026/03/16/1134315/where-openais-technology-could-show-up-in-iran/: March 24, 2026

The Fake Images of a Real Strike on a School

The Atlantic | March 13, 2026

TL;DR: AI-generated disinformation in the Iran conflict is demonstrating a new and more dangerous pattern: not mass deception, but deliberate erosion of evidentiary trust — making the question “is this real?” functionally unanswerable.

Executive Summary

This piece by Mahsa Alimardani documents a specific, well-sourced sequence of events: an AI-generated image (with a visible Google Gemini watermark) circulated on Instagram the day before a real school in Iran was hit in a strike that a U.S. military investigation preliminarily attributed to American forces. The fake image primed audiences to see schools as legitimate military targets. When footage of the real strike circulated, it was immediately contested — and an AI chatbot (Grok) confidently corroborated a false denial, citing major news outlets that actually contradicted it.

The article’s central argument is analytically sharp and worth taking seriously: AI disinformation does not need to fool everyone. It needs to make “is this real?” close to unanswerable. Real photos of civilian deaths get labeled fake. Fake images illustrate real deaths. Correct identification of one fabricated image is used to discredit authentic ones. The cycle runs faster than any newsroom, fact-checker, or platform can process.

This is a geopolitical story, not a business story — but it has direct downstream relevance. The same dynamics that are making evidentiary truth unstable in conflict zones are operating in commercial, reputational, and legal contexts. AI-generated images, audio, and video are already being used in fraud, reputation attacks, and market manipulation. The article provides a clear-eyed model for how that erosion works in practice.

Relevance for Business

The business relevance is not the Iran conflict itself. It is the demonstrated operational playbook for AI-enabled trust erosion: fabricated content doesn’t need to be believed — it only needs to contaminate the evidentiary environment enough that truth becomes difficult to establish quickly. For SMBs, the implications span several domains:

Reputational risk: Fabricated images or audio of your leadership, products, or operations could circulate faster than you can respond.

Fraud exposure: Deepfake audio and video are already being used in business email compromise and vendor impersonation schemes.

Vendor/partner trust: Verifying the authenticity of communications, contracts, and identity is a growing operational challenge.

Legal and compliance: Evidentiary standards in disputes, HR investigations, and regulatory matters are being complicated by the same dynamics described here.

Calls to Action

🔹 Implement internal verification protocols for high-stakes communications — especially wire transfers, executive instructions, and vendor changes — that do not rely solely on digital channels.

🔹 Brief leadership on the operational model described here: AI disinformation doesn’t require mass deception — it requires sustained doubt. Prepare a response posture, not just detection tools.

🔹 Review your cyber and fraud insurance coverage for deepfake-enabled impersonation and business email compromise — policy language is lagging the threat.

🔹 Assign someone to monitor deepfake detection tools and media authentication standards (e.g., C2PA/content provenance frameworks) as they mature.

🔹 Do not assume that watermarks, platform labels, or AI detection tools provide reliable protection at this time — the article documents cases where all of these failed or were weaponized.

Summary by ReadAboutAI.com

https://www.theatlantic.com/ideas/2026/03/ai-imagery-iran-war/686347/: March 24, 2026

Rise of the AI Soldiers

TIME | March 10, 2026

TL;DR: Humanoid AI combat robots are moving from concept to active military testing and limited deployment — raising profound ethical, legal, and escalation risks that current governance frameworks are not equipped to manage.

Executive Summary

TIME’s reporting from Foundation’s San Francisco headquarters documents the Phantom MK-1, a humanoid robot being developed specifically for military applications — the first such system, its makers claim. Foundation holds $24 million in U.S. military research contracts across the Army, Navy, and Air Force, has deployed two units to Ukraine for reconnaissance, and is preparing for potential combat deployment. The Phantom MK-2 is due in April with expanded capabilities; the company aims to eventually manufacture 30,000 units per year at under $20,000 each.

The article situates this within a broader, well-documented trend: Ukraine has become the world’s primary testing ground for AI-enabled warfare, with up to 9,000 drones launched daily and autonomous systems increasingly operating without human intervention when communications fail. Scout AI, another firm profiled, claims to have demonstrated a fully automated kill chain — identify, locate, and neutralize a target — without human involvement at any stage.

The governance picture is deteriorating, not improving. The Trump administration revoked Biden-era AI safety requirements, and a February executive order terminated Anthropic’s federal contracts specifically because they prohibited use of AI to surveil citizens or program autonomous lethal weapons without human involvement. The U.N. Secretary-General has called for a legally binding treaty prohibiting autonomous weapons systems without meaningful human control; over 120 nations support the measure; the U.S., Russia, and Israel have not committed. Current Pentagon protocols require human authorization for lethal engagement — but the article documents cases in Ukraine where that standard is already operationally bypassed.

The strategic and ethical risks are clearly articulated: autonomous systems lower the political cost of initiating conflict; AI hallucinations and algorithmic bias in lethal systems are not theoretical; captured or hacked humanoid robots represent significant intelligence and security exposure; and legal accountability for autonomous war crimes is unresolved in international law.

Relevance for Business

This is the furthest-from-daily-operations story in this batch — but it has real business relevance across several dimensions:

Defense and government contractors face both opportunity and significant compliance and reputational complexity as this ecosystem grows rapidly.

AI vendors and enterprise software firms should note that the rollback of AI safety guardrails at the federal level — including the termination of Anthropic’s contracts for maintaining ethical restrictions — signals a shift in what the government will and will not require from AI suppliers.

Dual-use risk is rising: AI tools built for commercial applications are being adapted for military use faster than governance frameworks can respond.

For most SMB executives, the immediate takeaway is situational awareness: the regulatory and ethical environment around AI is actively shifting, and the direction at the federal level is toward fewer constraints, not more. That has downstream implications for what tools are permissible, what vendors are stable, and what governance burden may eventually fall on private operators.

Calls to Action

🔹 If you operate in defense, government contracting, or dual-use technology, treat AI governance as a live compliance risk — the regulatory environment is shifting faster than contract cycles.

🔹 Monitor federal AI policy, particularly executive orders and DOD procurement standards — the rollback of safety requirements affects what AI systems are permissible in government-adjacent work.

🔹 For most SMBs, no immediate action is required — but assign this to your strategic risk watch list; autonomous systems and AI-in-warfare governance will create regulatory spillover into commercial AI over time.

🔹 If your business uses AI tools from vendors with government contracts, understand whether changing federal AI standards affect the terms or capabilities of those tools.

🔹 Revisit in 6–12 months — the Phantom MK-2 launch, Geneva treaty negotiations, and DOD procurement decisions in H1 2026 will clarify the trajectory meaningfully.

Summary by ReadAboutAI.com

https://time.com/article/2026/03/09/ai-robots-soldiers-war/: March 24, 2026

AGI Isn’t the ‘Holy Grail’ for Women in AI. It’s Gender-Purpose AI, and It’s Already Here.

Fast Company | March 13, 2026

TL;DR: A growing cohort of women-led AI ventures is building narrowly targeted, purpose-driven AI rather than racing toward general-purpose AGI — and the underlying procurement risk for businesses is real: who builds AI determines what it does and who it actually serves.

Source note: Opinion-forward advocacy from a female founder. The “gender-purpose AI” framing is the author’s construct, not an established industry taxonomy. The underlying bias documentation and funding data are real and separately verifiable.

EXECUTIVE SUMMARY

SXSW 2026 featured 185 AI sessions — more than double 2024’s count. Companies with at least one female founder raised $38.8B in VC in 2024, up 27% year-over-year, but still well below the 2021 peak of $62.5B. The article profiles women founders building AI with explicit design intent: Rana el Kaliouby (Affectiva, Blue Tulip Ventures) built AI that reads human emotion; Valerie Chapman (Ruth AI) targets the $1.6 trillion gender wage gap.

Joy Buolamwini’s 2018 “Gender Shades” research is the article’s most important citation. The original study empirically documented accuracy gaps across gender and skin-tone lines in commercial facial analysis systems from Microsoft, IBM, and Face++, and a 2019 follow-up audit found similar gaps in Amazon’s system. Together they showed that widely deployed enterprise AI was measurably less accurate for women and people of color until external researchers forced corrections. This is a risk profile, not just a philosophical concern.

Three claims in the piece deserve separation: (1) Fact — women-led AI ventures remain underfunded and gender bias in AI systems has been empirically documented. (2) Framing — “gender-purpose AI” as a distinct category is the author’s construct. (3) Speculation — claims about 2026 outcomes are forward-looking projections.

RELEVANCE FOR BUSINESS

The operative signal for SMB leaders is not the AGI vs. gender-purpose framing — it’s the procurement risk. If your workforce is majority female or your product serves women, AI tools built without explicit bias testing carry operational and reputational exposure. The Gender Shades case is a concrete precedent: enterprise AI was demonstrably less accurate across demographics until forced to change. That is a vendor liability concern, not a political one.

CALLS TO ACTION

🔹 Request bias testing documentation from AI vendors — especially for tools used in hiring, customer service, or demographic-adjacent decision-making.

🔹 Monitor the gender-purpose AI segment — early-stage platforms with explicit inclusion design may fit female-majority workforces or customer bases better than general-purpose tools.

🔹 Treat AI accuracy gaps as a procurement and liability issue, not a political one — documented failures are measurable and vendor-attributable.

🔹 Note the funding gap as a market signal — women-led AI ventures remain underfunded relative to opportunity; competitive positioning here is still early.

🔹 Deprioritize the AGI framing for SMB planning — whether AGI arrives in 5 or 15 years is not actionable. The relevant question is whether your current AI tools were designed with your users in mind.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91497946/agi-isnt-the-holy-grail-for-women-in-ai-its-gender-purpose-ai-and-its-already-here-women-ai-technoogy-leaders: March 24, 2026

OpenAI’s Bid to Allow X-Rated Talk Is Freaking Out Its Own Advisers

The Wall Street Journal | March 15, 2026

TL;DR: OpenAI is delaying but not abandoning plans to allow sexually explicit chatbot conversations, despite warnings from its own advisory council — including concerns that inadequate age verification could expose millions of minors and that the feature risks creating what one adviser called a “sexy suicide coach.”

Source note: Original WSJ investigative reporting based on reviewed documents and sourced individuals. Substantially factual; some details reflect internal company framing.

EXECUTIVE SUMMARY

OpenAI CEO Sam Altman announced “adult mode” publicly in late 2025 without informing his own staff, and did so on the same day he launched an advisory council charged with defining healthy AI interactions for all ages. At its January 2026 meeting, the council was unanimous in its opposition and furious about the decision. Members cited emotional dependence risks, minor access concerns, and prior cases of ChatGPT users dying by suicide after forming intense bonds with the chatbot.

The technical challenges are real and unresolved: OpenAI’s age-prediction system was misclassifying minors as adults approximately 12% of the time — a rate that would expose millions of its ~100 million weekly under-18 users to explicit content. The company has also struggled to technically separate permitted erotica from prohibited content (nonconsensual depictions, child abuse material). The launch, originally slated for Q1 2026, has been delayed; internal estimates suggest at least another month.
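The scale implied by those two figures can be checked with simple arithmetic. The sketch below is a rough upper-bound estimate only: it assumes the 12% error rate applies uniformly to all of the roughly 100 million weekly under-18 users, which the reporting does not confirm. The function name `minors_misclassified` is hypothetical and used purely for illustration.

```python
# Back-of-the-envelope estimate using the figures quoted above.
# Assumption (not from the source): the error rate applies uniformly
# to every weekly under-18 user.

def minors_misclassified(minor_users: int, error_rate: float) -> int:
    """Estimate how many minors would be treated as adults."""
    return round(minor_users * error_rate)

weekly_minor_users = 100_000_000  # "~100 million weekly under-18 users"
reported_error_rate = 0.12        # "approximately 12% of the time"

exposed = minors_misclassified(weekly_minor_users, reported_error_rate)
print(f"Estimated minors misclassified as adults: {exposed:,}")

# For comparison: even a 1% error rate leaves a large absolute number.
print(f"At a 1% error rate: {minors_misclassified(weekly_minor_users, 0.01):,}")
```

Under these assumptions, the reported rate corresponds to about 12 million minors, and even a twelvefold improvement in accuracy would still leave about 1 million misclassified users.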

Industry context: Character.AI faced a wrongful death lawsuit after a 14-year-old user died by suicide following explicit chatbot exchanges. xAI’s Grok has been permissive with explicit content. Meta allows romantic role play on its AI. OpenAI says it plans to release adult mode eventually, framing it as textual “smut” rather than pornography, while restricting erotic images, voice, and video.

RELEVANCE FOR BUSINESS

Two distinct risks for SMB leaders: First, any business deploying ChatGPT in customer-facing or employee-facing contexts needs to understand what the platform will permit when adult mode launches — especially where minors or sensitive use cases are involved. Second, the article documents a structural governance failure: Altman announced a product direction publicly before informing staff or the advisory council he had just created. For businesses evaluating OpenAI as an enterprise partner, this is a signal about how safety commitments are operationalized in practice.

CALLS TO ACTION

🔹 Review your ChatGPT deployment terms and access controls now — before adult mode launches, understand how it could affect your use case, especially where minors or vulnerable users are present.

🔹 Assign an internal owner to track the adult mode rollout — specifically what enterprise controls will be available and the timeline for age-verification improvements.

🔹 Evaluate whether the platform fits your users’ wellbeing needs: if your AI chatbot deployment touches HR, wellness, or customer care, assess whether OpenAI’s product direction is compatible with your risk tolerance.

🔹 Use this as a governance model check — the advisory council situation illustrates the gap between having safety structures and integrating them into actual decisions.

🔹 Monitor litigation developments — the Character.AI wrongful death precedent signals that platform liability for chatbot harms is an active legal risk not fully reflected in current enterprise AI procurement decisions.

Summary by ReadAboutAI.com

https://www.wsj.com/tech/ai/openai-adult-mode-chatgpt-f9e5fc1a: March 24, 2026

Should You Be Able to Have Sex With ChatGPT?

New York Intelligencer | John Herrman | March 16, 2026

TL;DR: OpenAI is moving toward enabling adult/erotic content in ChatGPT despite significant internal opposition — a decision that will force every AI platform, and every organization deploying AI tools, to define acceptable use policies in a space that no industry standard currently governs.

Executive Summary

This is an analytical opinion column, not a news report, and should be read as such. The author’s framework is useful: every major internet platform eventually has to answer the “porn question,” and the answers companies choose shape both user behavior and the parallel industries that form around them. AI chatbots are not exempt from this dynamic — explicit adult chat was among ChatGPT’s earliest use cases and remains in active demand.

The article documents that OpenAI’s leadership, specifically Sam Altman, has expressed interest in enabling erotic content in ChatGPT, citing user autonomy. An internal advisory council whose members have backgrounds in psychology and neuroscience raised objections that went beyond the familiar concerns about child safety and harmful content to AI-specific risks, including emotional overreliance and displacement of real-world relationships. Despite those objections, the article reports OpenAI is proceeding. Other platforms have already drawn their own lines: Grok is permissive, with documented abuse; Meta allows “romantic” role-play with ineffective guardrails; Google is cautious but has commercial relationships with platforms that are not; Anthropic is avoiding the category entirely given its enterprise focus.

The column identifies a structurally important point for organizational leaders: OpenAI’s choice doesn’t prevent the use case — it only determines whether OpenAI captures the users pursuing it or whether they migrate to less-governed alternatives. The parallel adult AI industry built on open-source models is already proliferating independently of what any major platform decides.

The article raises emotional dependency and addictive engagement as underexplored risks distinct from traditional content concerns — a class of harm that does not fit neatly into existing HR, legal, or IT governance frameworks.

Relevance for Business

The direct organizational risk for SMB leaders is not hypothetical. Employees are using AI tools — including tools deployed by employers — in ways that include personal, intimate, and potentially inappropriate interactions. The absence of clear organizational policy on acceptable use of AI tools creates HR, legal, and reputational exposure. The spectrum of platform choices already in market (from Anthropic’s restriction to Grok’s permissiveness) means employees can and do access AI companions and adult-oriented AI on personal devices and, potentially, work devices. Leaders should not assume that enterprise tool selection resolves the issue — the question is also about what your employees are doing with AI broadly and whether your policies address it. The emotional dependency risk flagged by OpenAI’s own advisors is also worth taking seriously: AI tools designed to maximize engagement are not neutral productivity tools, and uncritical deployment without use guidelines creates risks beyond content.

Calls to Action

🔹 Review or draft an AI acceptable use policy — If your organization doesn’t have one, this development makes it urgent. Address what platforms employees can use, on what devices, and for what purposes.

🔹 Separate enterprise AI tools from personal AI behavior — Establish clear distinctions between employer-provisioned AI tools (with defined use cases) and employee personal AI use. Don’t assume enterprise tool selection governs the latter.

🔹 Brief HR and legal on AI-specific risks — Emotional dependency, inappropriate relationships with AI systems, and related harms don’t map neatly to existing harassment or conduct policies. Review whether your current frameworks are adequate.

🔹 Monitor OpenAI’s policy rollout — If your organization uses ChatGPT, the content policy changes under consideration will directly affect what your employees and customers can access through that platform.

🔹 Treat this as governance infrastructure, not a content problem — The underlying issue is that AI tools are becoming deeply personal engagement platforms. That requires governance thinking, not just content filtering.

Summary by ReadAboutAI.com

https://nymag.com/intelligencer/article/should-you-be-able-to-have-sex-with-chatgpt.html: March 24, 2026

OpenAI’s Own Mental Health Experts Unanimously Opposed “Naughty” ChatGPT Launch

Ars Technica | March 16, 2026

TL;DR: Follow-up reporting on the OpenAI adult mode controversy adds three critical new risk layers: a fired safety executive, content filters that have already failed in production, and an age-verification system that routes users’ biometric data through a third-party vendor with its own documented security vulnerabilities.

Source note: Reported journalism extending the WSJ investigation with additional sourcing. Editorially framed in places; factual claims are well-sourced.

EXECUTIVE SUMMARY

OpenAI fired a safety executive who opposed the adult mode rollout (OpenAI denied the connection). That executive’s public criticism focused on the company’s inability to block minors from prohibited content — the central issue behind the feature’s delay. A second former safety employee subsequently warned parents publicly not to trust OpenAI’s adult mode claims.

On the technical side, a pre-launch bug had already allowed minors to access graphic erotica on ChatGPT when content filters failed. OpenAI deployed a fix, but content restriction failures are not hypothetical: they have already occurred in production. The article also notes that Altman himself admitted in August that ChatGPT’s core chat use case was “saturated,” which frames adult mode as a financially motivated engagement driver rather than a product maturity milestone.

The age-verification mechanism introduces a separate privacy risk for all users: those whose ages cannot be predicted algorithmically must submit biometric data (selfies or government IDs) to a third-party service called Persona, which temporarily stores that data. Developers have flagged unexplained verification errors and limited recourse. Discord recently dropped Persona as a vendor and paused its own age-verification rollout after user backlash and a hacking attempt against Persona’s systems.

RELEVANCE FOR BUSINESS