AI Updates March 10, 2026

Models Models Models

This week’s AI signal is less about dazzling demos and more about control, constraints, and consequences. Across the week’s coverage, the technology kept advancing (faster models, better coding, more capable agents, and new media-generation tools), but the deeper story was about what happens when those capabilities collide with real institutions. The most important question is no longer “Can the model do it?” but “Who governs it, who depends on it, who pays for it, and what breaks when it becomes embedded in everyday operations?” For executives, that marks a meaningful shift: AI is no longer just a product story or innovation story. It is increasingly a management, policy, and infrastructure story.

The labor and organizational signal also became harder to ignore. AI is no longer being framed only as a productivity assistant; it is being positioned, tested, and in some cases openly discussed as a substitute for portions of routine knowledge work, entry-level white-collar tasks, and production workflows. At the same time, the newest generation of tools is improving in ways that matter to actual operations—stronger coding, better tool use, improved workflow execution, and broader multimodal capabilities. But the practical business issue is not simply whether these tools save time. It is whether organizations can capture efficiency without weakening talent pipelines, overcommitting to fragile vendors, or removing human judgment from decisions that still require accountability.

This week also reinforced that AI adoption now sits inside a larger system of power, capital, regulation, and trust. Infrastructure constraints, energy demand, platform concentration, licensing battles, and national-security tensions are no longer side issues; they are shaping the market itself. The public clash over military AI boundaries, alongside a stream of product launches and ecosystem moves, made one point especially clear: AI is becoming too consequential to be managed casually. For SMB leaders, the takeaway is straightforward: AI should now be treated less like a standalone tool and more like a strategic operating dependency—one that requires governance, cost awareness, vendor discipline, and a clear understanding of where automation helps, where it misleads, and where human oversight still matters most.

Model

A model is the AI system that has been trained to do something with information — such as answer questions, write text, create images, summarize documents, translate language, or make predictions. In simple terms, it is the brain behind the AI tool.

When people talk about a “new model,” they usually mean a newer version of that AI brain, one that may be smarter, faster, cheaper, more accurate, or better at certain tasks than the previous one. Different models are built for different strengths, so one may be better at writing, another at coding, and another at generating images or analyzing data.

Another way of thinking about models:

Model: The trained AI system that powers an AI tool’s ability to understand inputs and produce outputs like text, images, code, or predictions. In everyday use, it is the underlying “brain” that determines what the AI can do and how well it does it.

Why it matters:

When AI companies announce new models, they are usually improving the core system that affects the tool’s quality, speed, cost, and usefulness.

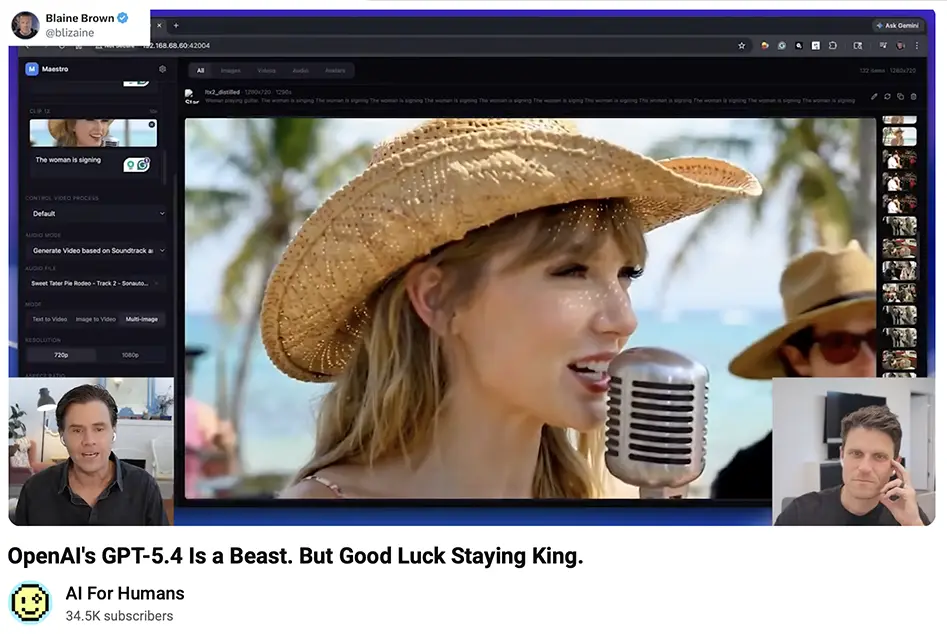

OPENAI’S GPT-5.4 IS A BEAST. BUT GOOD LUCK STAYING KING.

AI FOR HUMANS (MARCH 5, 2026)

TL;DR / Key Takeaway: GPT-5.4 appears to mark another meaningful jump in real-world AI capability—especially in coding, computer use, and efficiency—but the bigger executive signal is that model leadership is becoming more fragile, more political, and more operationally consequential.

EXECUTIVE SUMMARY

This AI for Humans episode frames OpenAI’s GPT-5.4 launch as both a product milestone and a reminder that state-of-the-art leadership in AI may now last only days or weeks, not quarters. According to the discussion, GPT-5.4 delivers stronger coding performance, lower hallucination rates, improved tool efficiency, and better “computer use” capabilities, including the ability to interact with interfaces in ways that increasingly resemble average human digital work. The hosts emphasize that the most important signal is not just benchmark improvement, but the continued movement of models into economically useful tasks—website building, workflow execution, document handling, and tool orchestration. For business leaders, this reinforces that AI is no longer just a chatbot layer; it is becoming an execution layer.

The episode also highlights a second, more strategic point: the market is shifting from “who has the best model?” to “who can sustain trust, distribution, policy alignment, and real-world utility?” GPT-5.4 may be better on paper and possibly cheaper than competing premium models in some coding use cases, but the broader environment is unstable. The hosts describe Anthropic’s growing momentum with users, Claude’s rising adoption, and the increasingly political backdrop around military contracts and national-security alignment. In that context, technical lead alone is not enough. AI leadership now depends on product usability, ecosystem fit, public trust, and government relationships—all of which can change quickly.

The episode also surveys new tools from Kling, Grok, NotebookLM, and others, showing how fast adjacent categories are improving: AI video manipulation, cinematic explainers, production tools, anti-recording devices, and creative workflow automation are all moving forward at once. Not all of these tools are production-ready, but together they show that the AI stack is broadening beyond text generation into media creation, workflow execution, and real-world sensory environments. The practical takeaway for executives is that competitive pressure is no longer coming from one flagship chatbot. It is coming from a widening field of tools that can reshape marketing, operations, content production, customer interaction, and knowledge work at the same time.

RELEVANCE FOR BUSINESS

For SMB executives and managers, this episode matters because it points to a more demanding phase of AI adoption. The question is no longer whether AI is improving—it is whether your organization can keep up with the pace, evaluate tools soberly, and avoid locking into yesterday’s assumptions. Model quality is improving, but so are the operational risks: vendor churn, pricing changes, policy shocks, inconsistent real-world performance, and growing dependence on outside platforms.

It also reinforces that practical utility is becoming the real battleground. Tools that can code, use software, generate assets, and coordinate tasks will begin to affect staffing models, vendor selection, software purchasing, and training priorities. Leaders do not need to chase every launch, but they do need a working view of where AI can now replace friction, reduce turnaround time, or compress specialized work into general management workflows.

CALLS TO ACTION

🔹 Reassess your current AI stack based on real workflow utility, not brand familiarity or benchmark headlines alone.

🔹 Pilot AI tools on specific business tasks such as drafting, research, internal reporting, website updates, asset creation, or workflow automation.

🔹 Prepare for vendor volatility by avoiding overdependence on a single model provider where possible.

🔹 Track political and regulatory entanglements around major AI firms, especially where government relationships could affect product access, trust, or enterprise adoption.

🔹 Treat rapid product improvement as a planning issue, not just a technology story; revisit assumptions about workforce tasks and software capabilities more often than you did last year.

Summary by ReadAboutAI.com

https://www.youtube.com/watch?v=eA6HMK3DWDY: March 10, 2026

Full Interview: Anthropic CEO Responds to Trump Order, Pentagon Clash

CBS News (March 2026)

TL;DR / Key Takeaway: Anthropic is arguing that it supports U.S. national security but will not remove two AI red lines—domestic mass surveillance and fully autonomous weapons—turning a contract dispute into a broader test of how much control private AI firms, the Pentagon, and elected government should have over frontier AI deployment.

Executive Summary

In this CBS News interview, Anthropic CEO Dario Amodei presents the company’s dispute with the Pentagon as a conflict not over whether AI should support national security, but over where limits should remain in place as military use expands. Amodei repeatedly stresses that Anthropic has already worked extensively with the U.S. government and military, including use on classified systems and national-security applications. But he says the company has held firm on two narrow red lines: no use of its models for domestic mass surveillance and no support for fully autonomous weapons that can act without human oversight. His argument is that AI capability is advancing faster than law and oversight, making these boundaries necessary until Congress or another legitimate democratic process catches up.

The interview also surfaces a deeper structural tension in the AI market: frontier model providers are no longer just software vendors—they are becoming gatekeepers for capabilities that governments view as strategically essential. Amodei argues that the Pentagon’s response was punitive, including a threatened or implied “supply chain risk” designation that could restrict Anthropic’s role in military-related contracting. Whether or not one agrees with Anthropic’s stance, the larger signal is clear: AI companies now sit in an unstable position between national-security expectations, commercial incentives, public values, and political retaliation risk. This is not a normal vendor disagreement. It is a preview of how AI policy may increasingly be made through procurement fights, public pressure, and executive action rather than slow legislative clarity.

For business leaders, the most important takeaway is that AI governance is no longer a side conversation about ethics statements or safety language. It is becoming a live operational issue that affects contracts, market access, platform trust, and reputational alignment. The interview also underscores a practical point Amodei makes several times: today’s AI systems are still not reliably predictable enough for the highest-stakes autonomous use cases, even as they are already useful enough to become indispensable in lower-risk military and enterprise environments. That gap—between usefulness and reliability—will matter just as much in business as it does in government.

Relevance for Business

For SMB executives and managers, this interview matters because it shows how quickly AI can move from productivity tool to governance liability. If a major model provider can become entangled in national-security disputes, then businesses should expect similar tension—at smaller scale—around procurement, acceptable use, compliance, data practices, and vendor dependency. Leaders should not assume that the biggest or most advanced model will always be the safest operational choice.

This also reinforces a broader governance lesson: just because AI can technically do something does not mean the rules, oversight, or institutional readiness exist to support it responsibly. Companies adopting AI for internal monitoring, workforce analytics, customer profiling, or automated decision-making should recognize the same pattern Amodei highlights: capability is often arriving before policy, process, and accountability. That creates exposure for firms that move too casually.

Calls to Action

🔹 Review your AI vendors for governance risk, not just feature quality, pricing, or speed.

🔹 Set explicit internal red lines for uses involving surveillance, automated decision-making, or safety-critical actions.

🔹 Avoid assuming legal compliance equals strategic wisdom; some uses may be technically legal yet still reputationally or operationally damaging.

🔹 Reduce single-vendor dependency where possible, especially if a provider’s access, public standing, or government relationship could shift suddenly.

🔹 Build oversight into high-impact AI use cases now, before speed and convenience normalize decisions your organization cannot easily defend later.

Summary by ReadAboutAI.com

https://www.youtube.com/watch?v=MPTNHrq_4LU: March 10, 2026

AI CAN WRITE NOW. WHAT HAPPENS TO REPORTERS?

FAST COMPANY (FEB 26, 2026)

TL;DR / Key Takeaway: As models get good at competent prose, “writing” becomes cheaper—so the scarce value in journalism shifts toward reporting, judgment, and trust, while the career ladder (skill-building) becomes a new risk.

Executive Summary:

The piece uses a newsroom case (Cleveland.com) to illustrate a controversial workflow: reporters gather material, and an “AI rewrite specialist” drafts stories, reportedly freeing up an extra workday per week. Backlash frames this as “content farming,” but the author argues it’s better understood as a full separation of reporting and writing, with reporters becoming supervisors/operators of AI drafting—still responsible for accuracy and final copy.

The tension is long-term: if juniors stop writing daily, how do they develop the craft of storytelling and editorial judgment? Writing is not just transcription; it’s curation and prioritization with an audience in mind. The risk is a workforce where fewer people can do high-quality narrative work, even as “good-enough” copy is abundant.

Relevance for Business:

For SMBs, this is a broader knowledge-work template: AI can generate competent output, but the differentiators become inputs, verification, and accountability. If AI drafts customer comms, proposals, or reports, you still need humans who can think clearly, check facts, and choose what matters—and you need a plan to develop those skills over time.

Calls to Action:

🔹 If using AI for writing, define who owns accuracy and approvals (and log it).

🔹 Build “verification muscle”: require sources, citations, and quick fact-check routines.

🔹 Protect skill development—don’t outsource all drafting for juniors; rotate “write vs. supervise.”

🔹 Expect stakeholder backlash where authenticity matters; disclose and set expectations appropriately.

🔹 Treat AI writing as a productivity tool, not a strategy—strategy is what you do with saved time.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91495946/ai-can-write-now-what-happens-reporters: March 10, 2026

‘AI; didn’t read’: AI;DR is the new TL;DR

Fast Company (Feb 26, 2026)

TL;DR / Key Takeaway: Public fatigue with AI-generated content is evolving into a norm: “AI;DR” signals that AI-suspected writing is being ignored, raising reputational risk for brands and leaders who over-automate communications.

Executive Summary:

Fast Company frames “AI;DR” as shorthand for a cultural backlash: people skip content once they detect “AI slop,” especially on social platforms where incentives favor volume and engagement over quality. The article cites a 2024 study suggesting a large share of long-form LinkedIn posts are AI-assisted, and argues we’re moving into an “AI unless proven otherwise” environment.

The business-relevant implication is not just annoyance—it’s trust decay. If audiences assume automation, they may discount credibility, authenticity, and competence. “AI;DR” becomes a lightweight enforcement mechanism: a way to punish low-effort content and signal rising expectations for genuine voice, specificity, and proof of human accountability.

Relevance for Business:

For SMBs, this is a brand and sales risk. If your outbound marketing, CEO posts, customer emails, or support updates read “machiney,” you can lose attention and confidence—especially in high-trust categories (health, finance, B2B services). The winning move is not “no AI,” but AI with editorial standards and human ownership.

Calls to Action:

🔹 Add a simple rule: every external post must include specifics only a human would know (real numbers, decisions, constraints).

🔹 Keep AI as a drafting tool, but enforce human revision for voice, accountability, and claims.

🔹 For thought leadership, prioritize fewer, higher-quality pieces over volume.

🔹 Train teams to avoid “generic uplift language” that triggers AI suspicion.

🔹 Use trust signals: named authors, documented sources, and clear revision/approval ownership.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91498062/ai-didnt-read-aidr-is-the-new-tldr: March 10, 2026

The Market’s AI Obsession Is Starting to Bring Out the Bears

WSJ (Feb 25, 2026)

TL;DR / Key Takeaway: Wall Street’s AI trade is entering a “prove it” phase—more investors are betting that AI capex and debt loads won’t translate into profits, making hyperscaler spending discipline (or lack of it) a key risk signal.

Executive Summary:

After years of AI-led market enthusiasm, a cohort of traders and strategists are positioning for an AI shakeout, arguing that valuations and spending assumptions are stretched. Bears aren’t just shorting single names; they’re increasingly focusing on who is financing the build-out and whether the cash flows will support it—especially as big tech invests extraordinary sums in AI infrastructure.

The article highlights a shift toward betting against AI-linked balance sheets and debt rather than only stocks—partly because shorting equities can be punished by sudden headline-driven spikes. Oracle becomes a focal point as investors scrutinize its funding plans and its relationship to OpenAI, framed by some traders as effectively “a bet on OpenAI’s trajectory.”

Relevance for Business:

For SMB leaders, this matters because capital markets sentiment can quickly shape pricing, availability, and sales pressure across the AI stack: cloud contracts, “AI infrastructure” bundles, and vendor roadmaps. If markets tighten, expect harder ROI requirements, more bundling/lock-in incentives, and potentially pullbacks in expansion plans that affect service reliability, support, and long-term commitments.

Calls to Action:

🔹 Treat vendor promises as financing-dependent: ask what happens to pricing/support if capital costs rise.

🔹 Require ROI cases that survive slower growth assumptions (not just “AI transformation” narratives).

🔹 Watch for data-center spend pullback signals (contract pauses, delayed rollouts, revised capex guidance).

🔹 Avoid long commitments that create single-vendor dependency without exit options.

🔹 Build a “Plan B” for critical workflows if a provider reprioritizes products during a market reset.

Summary by ReadAboutAI.com

https://www.wsj.com/wsjplus/dashboard/articles/skeptical-investors-are-hunting-for-ways-to-short-the-ai-frenzy-8790baf9: March 10, 2026

Why AI Companies Are Suddenly So Worried About Theft

Intelligencer (Feb 26, 2026)

TL;DR / Key Takeaway: “Model theft” via distillation/extraction is becoming a competitive weapon—pushing AI labs toward lockdown behaviors, regulation-seeking, and geopolitical framing that could reshape access, pricing, and openness.

Executive Summary:

The article describes a surge of public warnings from major AI labs about “model extraction” and “distillation attacks”—methods that use large volumes of interactions to replicate or approximate proprietary model capabilities. It cites Anthropic’s claim that multiple Chinese firms used 24,000 fraudulent accounts to generate 16 million exchanges to extract Claude’s capabilities, alongside similar concern-signals from Google and OpenAI.

Beyond the security issue, the piece highlights the strategic narrative battle: labs frame distillation as (a) a safety risk (capabilities without guardrails), (b) an IP theft issue, and/or (c) a national competitiveness threat. It also calls out the reputational irony: companies built on scraped data now object to being “scraped,” which fuels backlash and strengthens pro–open model sentiment.

Relevance for Business:

Expect downstream impacts: tighter usage controls, more aggressive anti-abuse monitoring, possible rate limits, changing terms, and a continuing split between closed, guarded enterprise models vs. fast-commoditizing open models. SMB buyers may see more vendor friction—especially if vendors fear customer usage patterns could enable “distillation” (even inadvertently).

Calls to Action:

🔹 Assume vendor access may become more restricted; avoid architectures that depend on a single model provider.

🔹 Review contracts for usage limits, monitoring rights, and termination triggers tied to “abuse” definitions.

🔹 Keep an “open model option” on your roadmap for cost control and leverage—even if you don’t deploy it yet.

🔹 Separate sensitive workflows so model/provider changes don’t break core operations.

🔹 Monitor policy moves: “model theft” may become a justification for new regulation that affects procurement.

Summary by ReadAboutAI.com

https://nymag.com/intelligencer/article/why-ai-companies-are-suddenly-worried-about-theft.html: March 10, 2026

HAVE YOU HEARD THE ONE ABOUT MUSK, BEZOS, AND ALTMAN WALKING INTO A GYM?

FAST COMPANY (02-27-2026)

TL;DR / Key Takeaway: A viral “Energym” fake ad hits because it mirrors a real fear: AI may externalize its costs onto workers and communities—job loss, “purpose” narratives, and energy demand.

Executive Summary:

The piece unpacks a widely shared mock ad imagining a future gym chain (“Energym”) where the newly unemployed generate electricity to power the AI servers that displaced them—a dystopian loop presented as “efficient.”

Even as satire, the scenario lands because it compresses multiple anxieties into a single image: automation-driven job displacement, a social narrative that reframes exploitation as “purpose,” and the uncomfortable reality that AI growth is tethered to physical infrastructure and energy.

Relevance for Business:

For SMB leaders, this is a reminder that AI adoption isn’t just a tooling decision—it’s a trust and legitimacy decision. When customers and employees suspect AI is being used mainly to cut labor while shifting costs (burnout, retraining, service degradation), brand and retention risks rise fast.

Calls to Action:

🔹 Pressure-test your AI roadmap for “value creation vs. labor replacement” optics—then communicate the difference clearly.

🔹 Build a light workforce transition plan (reskilling, role redesign) before large workflow changes.

🔹 Track AI-related energy and infrastructure dependencies if your stack is becoming more model-heavy.

🔹 Add a governance check: “Will this implementation increase perceived unfairness?”

🔹 Treat viral narratives as early signals—monitor what stories are resonating with your employees/customers.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91499319/have-you-heard-the-one-about-musk-bezos-and-altman-walking-into-a-gym: March 10, 2026

WHY AI’S FLAWS ARE HURTING GIRLS MOST

FAST COMPANY (02-27-2026)

TL;DR / Key Takeaway: AI harms aren’t abstract—failures in safety guardrails, healthcare bias, and hiring systems can produce disproportionate risk for girls and women, and the gap is worsened by underrepresentation in AI jobs.

Executive Summary:

The author argues that AI is amplifying uneven outcomes: recent incidents include a model generating explicit images of real people (including women and children), exposing how brittle guardrails can be. The piece also flags youth mental-health concerns tied to chatbot interactions and parental perceptions of tech’s impact.

In higher-stakes domains, the article points to healthcare and hiring: biased data can degrade diagnostic performance for women, and automated hiring tools can embed new discrimination and privacy risks. A key structural driver: women represent a small share of the AI workforce, increasing the chance that systems ship without sufficient perspective and testing.

Relevance for Business:

SMBs adopting AI in HR, customer support, marketing, and health-adjacent services inherit these risks. If your tools produce disparate outcomes (or even credible allegations of them), you face legal exposure, employee trust loss, and brand damage—often before you see measurable ROI.

Calls to Action:

🔹 If you use AI in hiring, require bias audits and documentation from vendors before expanding use.

🔹 Add harm testing focused on vulnerable groups (not just “average user” accuracy).

🔹 Treat “guardrails” as operational controls: define who approves, who monitors, and escalation steps.

🔹 Don’t let AI replace mentorship/training pathways—protect human skill-building in roles being automated.

🔹 Track workforce composition: ensure women are present in tool selection, testing, and governance decisions.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91487699/why-ais-failures-are-impacting-girls-first-ai-girls-inequality: March 10, 2026

IN DEFENSE OF NOT PAYING FOR AI

FAST COMPANY (02-27-2026)

TL;DR / Key Takeaway: For many users, free tiers already include near–state-of-the-art models; the real constraint is usage limits and paywalled premium tools, so leaders should demand proof of incremental value before paying.

Executive Summary:

This piece pushes back on “everyone should pay $20/month” advice, arguing it reverses normal SaaS logic: it’s not the customer’s job to spend time and money to convince themselves a product is worth more.

It claims free offerings are not “a year behind,” citing that free tiers can include current models (examples listed: access to GPT-5.2, Gemini Pro 3.1, and Claude Sonnet 4.6, with some premium models excluded). The practical difference becomes limits and gating: even paid plans can hit caps that push users to higher-priced tiers, which the author frames as a warning against FOMO-driven subscriptions.

Relevance for Business:

SMBs can waste money by subscribing broadly before understanding where AI actually improves throughput or quality. The smarter pattern is: prove value on specific workflows, then pay selectively (or negotiate) once benefits are measurable.

Calls to Action:

🔹 Run short pilots using free tiers first; require a before/after comparison on real tasks.

🔹 Only pay when you can name the constraint: limits, governance features, security, admin controls, or premium capabilities.

🔹 Track hidden costs: time spent “prompting around” limitations and context switching across tools.

🔹 Consider a “portfolio approach” (multiple tools) to avoid lock-in and keep leverage in pricing talks.

🔹 Watch which capabilities are becoming paywalled (e.g., specialized agentic/coding tools) before committing.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91499445/in-defense-of-not-paying-for-ai: March 10, 2026

AN OHIO NEWSPAPER HAS A NEW STAR WRITER. IT ISN’T HUMAN.

THE WASHINGTON POST (MAR 01 2026)

TL;DR / Key Takeaway: A major local newsroom is letting AI draft full articles (“Express Desk”), boosting traffic—but triggering quality, credibility, training, and workforce fears that mirror what many SMBs face when automation shifts from assistive to substitutive.

Executive Summary:

Cleveland.com / The Plain Dealer is publishing stories drafted by AI under a shared byline (“Advance Local Express Desk”), with disclosures that humans reviewed the output. The push intensified after editor Chris Quinn argued that removing writing from reporters’ workloads “frees up” time—sparking industry backlash and internal anxiety about automation.

Staff describe moving goalposts (including performance reviews citing insufficient AI usage), morale concerns, and fears the newsroom becomes a “content farm” while junior journalists lose skill-building reps. Researchers warn systematic automation of writing can threaten credibility because audiences often prefer human-written journalism, and because AI struggles with nuance—what matters to a community and why it matters.

Relevance for Business:

This is a clean case study in “automation of craft work.” For SMBs, the analog is AI drafting emails, proposals, reports, support responses, and marketing content. The gain is speed and volume; the risk is quality drift, brand voice erosion, hidden rework, and loss of human capability over time.

Calls to Action:

🔹 If AI drafts external-facing content, define quality gates (fact checks, tone, legal review) and measure rework.

🔹 Avoid “goalpost shifts”: document what “good AI usage” means before tying it to performance.

🔹 Protect training loops—ensure juniors still do real writing, not only “feeding the model.”

🔹 Use AI to augment discovery (summaries, transcript scanning) but keep humans owning judgment and narrative.

🔹 Monitor customer trust signals—automation that increases volume but reduces nuance can erode loyalty.

Summary by ReadAboutAI.com

https://www.washingtonpost.com/technology/2026/03/01/ai-journalism-writing-cleveland-plain-dealer/: March 10, 2026

THE AI BOOM IS REALLY AN ENERGY STORY

FAST COMPANY CUSTOM STUDIO (WILLIAMS-SPONSORED) (FEB 10, 2026)

TL;DR / Key Takeaway: If AI is your strategy, power is your bottleneck—electricity availability, speed-to-power, and permitting/community trust will determine who can scale.

Executive Summary:

This piece frames AI readiness as fundamentally constrained by energy infrastructure. Panelists at a Clean Energy and Technology Expo argue that demand from next-gen data centers, especially AI training clusters, requires a major reversal of decades-long underinvestment in electricity generation. Leaders are urged to treat power not as a utility line item but as a board-level variable that shapes location, cost, and timing.

A major message is speed-to-power: U.S. timelines for building power infrastructure can stretch toward a decade due to federal rules, local permits, and opposition—making early partnership between data-center developers and utilities essential, alongside reform. The article also stresses community trust: local pushback is already causing planning commissions to reject projects, so “what’s in it for us” (jobs, bills, resilience) must be visible.

Relevance for Business:

Even SMBs that “just use cloud AI” are exposed: power scarcity and grid constraints can translate into higher cloud prices, regional capacity limits, and slower rollout of new AI services. Your AI roadmap may be shaped less by model breakthroughs and more by infrastructure reality.

Calls to Action:

🔹 Treat AI vendor roadmaps as energy-dependent; ask about capacity, region availability, and price sensitivity.

🔹 Expect higher volatility in electricity and pass-through costs embedded in AI services.

🔹 If you run heavy compute on-prem/colo, revisit location strategy as a power strategy.

🔹 Monitor permitting and local backlash as an early indicator of data-center supply constraints.

🔹 Prioritize efficiency: optimization and workload discipline are a strategic advantage when power is scarce.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91489959/the-ai-boom-is-really-an-energy-story: March 10, 2026

MICHAEL POLLAN PUNCTURES THE AI BUBBLE

THE ATLANTIC (FEB 24, 2026)

TL;DR / Key Takeaway: A cultural counter-case is hardening: AI may be extremely useful while still failing at the “big claim” (human-like consciousness), which could deflate overpromises and sharpen buyer skepticism.

Executive Summary:

This is a book review that uses Michael Pollan’s new work on consciousness to argue that the computer-as-brain metaphor breaks down in important ways. It frames consciousness as an “unconquered” frontier and suggests that some AI marketing implies an imminent leap across it—claims the reviewer considers likely to be memorably wrong.

The review’s business-relevant point isn’t metaphysics—it’s narrative correction. It positions AI as an economic revolution wrapped in utopian rhetoric, and implies that “bubble talk” is fueled by grand promises that blur useful automation with human equivalence. The outcome may be a growing cultural permission structure to say: AI is powerful, but not “human.”

Relevance for Business:

For SMB leaders, this is a reminder to separate (1) tool value from (2) mythology. Over-indexing on consciousness/singularity narratives can drive poor buying decisions (overpaying, overcommitting, under-governing). The safer posture is: pursue measurable productivity gains while resisting vendor claims that require belief.

Calls to Action:

🔹 Reframe AI internally as capability + limits, not “replacement for humans.”

🔹 Demand evidence: pilots should prove value on your workflows, not on demos or rhetoric.

🔹 Avoid “anthropomorphic governance” (treating systems as people); govern them like software with risks.

🔹 Use cultural backlash as a signal: overhyped vendors may face trust/retention issues.

🔹 Monitor how “AI bubble” narratives influence employees and customers—expect mixed reactions.

Summary by ReadAboutAI.com

https://www.theatlantic.com/books/2026/02/michael-pollans-new-book-pops-ai-bubble/686119/: March 10, 2026

TESLA’S SECRET WEAPON IS A GIANT METAL BOX

THE ATLANTIC (03-04-2026)

TL;DR / Key Takeaway: Tesla’s most “real” near-term leverage on the AI boom may be energy storage, not robotaxis, because data centers need reliable electrons and Tesla’s Megapack is positioned to benefit.

Executive Summary

The Atlantic argues Tesla’s flashy autonomy and robotics narrative remains legally and technically uncertain (e.g., questions about selling a car without a steering wheel, and an unproven robotaxi track record). Meanwhile, the company’s quieter “metal box”—the Megapack, a grid-scale battery system—has become central to its energy division, storing solar energy and stabilizing supply.

The piece ties this directly to AI infrastructure: America needs more electricity, “in large part” for data centers powering the AI boom, and batteries are being deployed to steady grids and provide contingency power—including for data centers themselves. It also notes Tesla’s internal flywheel: Tesla sold Megapacks to xAI, meaning Musk’s own batteries are powering AI usage.

Relevance for Business

SMB takeaway: AI is making energy reliability a core business dependency. Even if you never buy a Tesla product, the economics of AI (and your cloud costs) are increasingly linked to grid constraints, storage, and regional power politics.

Calls to Action

🔹 Treat AI adoption as infrastructure-dependent: ask cloud vendors about regional capacity constraints and resiliency planning.

🔹 If you run physical operations, consider storage/backup power as a resilience investment—not just an IT concern.

🔹 For any “autonomous” or high-claim AI initiative, separate hype timelines from legally shippable reality (Tesla is the cautionary case).

🔹 Monitor how energy divisions inside “tech-forward” companies become profit stabilizers when core bets wobble.

🔹 What to monitor: storage deployment rates near major data center hubs and resulting pricing signals.

Summary by ReadAboutAI.com

https://www.theatlantic.com/technology/2026/03/tesla-energy-elon-musk-batteries/686236/: March 10, 2026

THE WEEK THE DREADED AI JOBS WIPEOUT GOT REAL

WSJ (02-28-2026)

TL;DR / Key Takeaway: Block’s AI-cited layoffs became a public inflection point: leaders are realizing that AI job displacement can trigger employee backlash, community resistance, and market volatility—not just “efficiency.”

Executive Summary

WSJ frames Block’s move—4,000 layoffs tied to AI—as the kind of highly visible, S&P 500–level shock that turns abstract fear into workplace reality. The article emphasizes the social reaction: executives and workers interpreted it as a signal that companies could be materially smaller going forward, and that employees would absorb much of the pain (“pitchforks and torches”).

Importantly, the piece broadens the risk from “jobs” to second-order effects: public fear showing up as stock jitters, local resistance to data centers (due to utility costs and job anxiety), and a shift in how executives talk—more openly acknowledging that productivity gains can create destabilizing derivative effects that may outpace society’s ability to adjust.

Relevance for Business

For SMBs, the takeaway is that “AI-driven efficiency” now has an externalities problem: workforce trust, retention, employer brand, and even local infrastructure politics can become constraints. The operational win is real—but the rollout and messaging have become strategic.

Calls to Action

🔹 If AI changes staffing, communicate role redesign + reskilling plans early—silence will be interpreted as replacement.

🔹 Separate “AI as excuse” from “AI as strategy”: document what changed in workflows and why.

🔹 Add a reputational risk check to AI initiatives that touch jobs, pricing, or service quality.

🔹 If you depend on cloud/data centers, track local opposition and power-cost narratives—they can slow expansion.

🔹 What to monitor: executive rhetoric shifting from “benefits only” to “disruption management” and policy responses.

Summary by ReadAboutAI.com

https://www.wsj.com/tech/ai/the-week-the-dreaded-ai-jobs-wipeout-got-real-3ba5057b: March 10, 2026

WHAT AI EXECUTIVES TELL THEIR OWN KIDS ABOUT THE JOBS OF THE FUTURE

WSJ (02-26-2026)

TL;DR / Key Takeaway: AI leaders aren’t “calm because it’s fine”—they’re calm because they expect disruption and advise kids to build adaptability, broad education, and responsibility-heavy skills rather than betting on a single narrow track.

Executive Summary

WSJ interviews AI-adjacent leaders (including Anthropic’s Daniela Amodei) on what they tell their children about careers. The through-line: worry is real, but panic is not—because careers are long and humans adapt. Leaders emphasize agility and openness to change, warning that today’s technical skills may become obsolete quickly, while critical thinking and ethical judgment remain durable.

Several respondents argue for “generalist” bundles of skills (jobs with many components) and for fields where accountability and responsibility are central—because AI can generate recommendations but cannot “take responsibility.” The article also highlights sectoral bets some leaders are making for their own families—like energy (including nuclear) and healthcare—based on expected shortages and demand.

Relevance for Business

For SMB executives, this reads like a hiring and workforce design memo: prioritize employees who can re-tool, supervise AI, and own outcomes, not just produce commodity outputs that models can replicate.

Calls to Action

🔹 Update hiring rubrics toward adaptability + judgment + ownership, not only tool-specific experience.

🔹 Build “AI fluency” expectations: employees should know how to use models effectively even if they don’t build them.

🔹 Preserve and reward roles that carry accountability (compliance, finance sign-off, legal review)—these become more important as AI output volume rises.

🔹 Invest in cross-training that creates “generalist bundles” (customer + ops + analytics), not single-task specialists.

🔹 What to monitor: where your org is confusing “AI writes it” with “AI owns the outcome.”

Summary by ReadAboutAI.com

https://www.wsj.com/lifestyle/careers/what-ai-executives-tell-their-own-kids-about-the-jobs-of-the-future-1ba43f65: March 10, 2026

STOP CALLING IT INEVITABLE: THE AI JOB CRISIS IS BEING BUILT, NOT BORN

FAST COMPANY (03-02-2026)

TL;DR / Key Takeaway: The “AI job crisis” isn’t weather—it’s the downstream result of boardroom incentives, and leaders can still steer AI toward augmentation over replacement if they choose.

Executive Summary

This piece challenges the passive framing that “jobs will just go away,” arguing that displacement is being manufactured by incentive structures (pricing models, tax treatment, procurement, and governance choices), not forced by physics. The author uses a sharp analogy: when the same actors warning about job losses are also the ones building the systems that cause them, “inevitability” becomes a convenient shield against accountability.

The business risk isn’t only layoffs—it’s demand destruction and a weakened consumer base if automation meaningfully erodes white-collar employment at scale. In that world, the article argues, the “pro-market” position is not maximum automation; it’s keeping humans economically productive—otherwise you drift toward large-scale redistribution to keep the economy functioning.

Relevance for Business

For SMB leaders, this is a reminder that AI strategy is not just tooling—it’s operating model design. If your “AI plan” is mostly headcount reduction, you may win short-term cost optics but increase medium-term exposure to weaker customers, churn, and price sensitivity—and you’ll also face higher reputational and retention risk internally.

Calls to Action

🔹 Audit where your AI roadmap replaces roles vs. removes friction (augmentation)—and require business-case justification for each “replacement” move.

🔹 Build a “humans stay in the loop” policy for customer-impacting decisions (pricing, eligibility, support resolutions).

🔹 Pressure-test your growth plan against a scenario of softening consumer demand (if AI-driven displacement accelerates).

🔹 Invest in reskilling tied to workflows (not generic training): who does what, what gets automated, and what escalates.

🔹 What to monitor: policy and tax debates that shift incentives toward augmentation vs. automation.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91498615/stop-calling-it-inevitable-the-ai-job-crisis-is-being-built-not-born: March 10, 2026

TESLA’S CHINA EV RIVALS HIT BY LUNAR NEW YEAR HOLIDAYS, BUT NIO STANDS OUT

INVESTOR’S BUSINESS DAILY (MAR 2, 2026)

TL;DR / Key Takeaway: February’s China EV “slump” looks seasonal (Lunar New Year), but the bigger signal is intensifying feature + price competition—including next-gen driver-assistance and faster charging—raising the bar for any player betting on software/AI differentiation.

Executive Summary

China EV sales typically dip early in the year, and this year’s Feb 15–23 Lunar New Year likely amplified the month-over-month softness across BYD, XPeng, Nio, Xiaomi, and Li Auto. Even within that softness, some brands posted notable year-over-year performance—e.g., Nio’s February deliveries rose ~58% YoY (despite falling vs. January), suggesting that model refresh cycles and incentives can still cut through seasonal demand drops.

The more durable signal is where competition is heading: BYD teased a March 5 technology event with speculation around more advanced driver-assistance plus major charging improvements, and XPeng is set to unveil a second-generation Vision-Language-Action “smart-driving” system—technology that Volkswagen is adopting. This points to a market where AI-enabled autonomy features and ecosystem partnerships may matter as much as (or more than) pure vehicle hardware.

Relevance for Business (SMB leaders)

- If your business touches fleets, logistics, insurance, field service, or vehicle-adjacent tech, China’s EV market is previewing a world where driver-assistance capabilities are a “must-have,” not a premium add-on—and where platform partnerships (e.g., VW + XPeng) accelerate feature diffusion.

- Even outside automotive, this is a reminder that “AI differentiation” quickly becomes table stakes, forcing competitors into pricing/incentive pressure and faster iteration cycles.

Calls to Action

🔹 If you operate fleets, start planning for ADAS-driven policy updates (safety, driver training, incident reporting) as features proliferate.

🔹 Monitor VLA-style autonomy stacks and partnerships—these can become de facto standards faster than expected.

🔹 Treat seasonal volatility as noise; track model refresh + incentive strategy as the leading indicator of demand capture.

🔹 For auto-adjacent vendors: pressure-test your roadmap against a market where “smart driving” is bundled and margins shift elsewhere.

Summary by ReadAboutAI.com

https://www.investors.com/news/teslas-china-ev-rivals-byd-xpeng-xiaomi-lunar-new-year-nio-strong/: March 10, 2026

DATA CENTERS IN SPACE: LESS CRAZY THAN YOU THINK

THE ECONOMIST (MAR 2, 2026)

TL;DR / Key Takeaway: “Orbital data centers” are still speculative, but terrestrial constraints (permits, grid hookups, backlash, power scarcity) are so severe that space-based compute is being treated as a serious option—if launch costs fall and cooling/radiation reliability works.

Executive Summary:

The Economist frames the debate as a response to terrestrial friction: it’s getting harder to build data centers, with an estimate that 30–50% of global capacity due online this year could be delayed (up from 26% in 2025), due to permitting, grid connection delays, public opposition, proposed moratoriums, and soaring electricity demand.

The economics hinge on a few variables: launch cost per kg, “specific power” (watts per kg), and satellite cost per watt—driven by solar panels and radiators, plus radiation effects on chips. A cited calculator suggests a 1GW terrestrial data center over five years could cost ~$15.9B, while an orbital equivalent could be ~$51.1B under conservative assumptions—excluding GPU costs (which are huge in either case).

But the piece argues those assumptions may improve: Starcloud tested an Nvidia H100 in orbit and trained a small model; AI satellites may not need Starlink-style ground communication, enabling higher watts/kg and lower cost. Assuming 70 W/kg and a satellite cost of $5/W, an orbital 1 GW data center could cost ~$16.7B (near parity), and if launch costs drop (Starship working, reusable), the economics could turn favorable (e.g., $200/kg implies ~$12.1B). Cooling remains a key unknown; Starcloud is testing deployable radiators after early overheating limitations.
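To make the three variables above concrete, here is a minimal Python sketch of how specific power, satellite cost per watt, and launch cost per kg interact. It is not the calculator the article cites: it covers only satellite hardware and launch, so its totals will not match the article's figures, which presumably include other cost lines. The 1 GW size, 70 W/kg, $5/W, and $200/kg inputs come from the summary above; the class name, function names, and the other launch prices in the sweep are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OrbitalAssumptions:
    power_watts: float              # compute power to deliver in orbit
    specific_power_w_per_kg: float  # watts delivered per kg of satellite mass
    satellite_cost_per_watt: float  # satellite hardware cost, USD per watt
    launch_cost_per_kg: float       # launch price, USD per kg


def launch_mass_kg(a: OrbitalAssumptions) -> float:
    """Mass that must reach orbit: power divided by specific power."""
    return a.power_watts / a.specific_power_w_per_kg


def satellite_cost_usd(a: OrbitalAssumptions) -> float:
    """Hardware cost: power times cost per watt."""
    return a.power_watts * a.satellite_cost_per_watt


def launch_cost_usd(a: OrbitalAssumptions) -> float:
    """Launch cost: mass to orbit times price per kg."""
    return launch_mass_kg(a) * a.launch_cost_per_kg


# Inputs taken from the summary: 1 GW, 70 W/kg, $5/W, $200/kg.
base = OrbitalAssumptions(
    power_watts=1e9,
    specific_power_w_per_kg=70.0,
    satellite_cost_per_watt=5.0,
    launch_cost_per_kg=200.0,
)

print(f"mass to orbit: {launch_mass_kg(base) / 1e6:.1f} million kg")
print(f"satellite hardware: ${satellite_cost_usd(base) / 1e9:.2f}B")

# Sweep launch prices; only $200/kg appears in the summary, the rest are hypothetical.
for price in (200.0, 500.0, 1000.0):
    scenario = OrbitalAssumptions(1e9, 70.0, 5.0, price)
    print(f"launch at ${price:,.0f}/kg: ${launch_cost_usd(scenario) / 1e9:.2f}B")
```

The sketch shows why launch price matters so much: the mass to orbit is fixed by specific power, so the launch bill scales one-for-one with the price per kg, while the hardware bill does not move.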

Relevance for Business:

SMBs don’t need “space compute,” but the strategic signal is important: AI growth is pushing against physical constraints so hard that the industry is exploring extreme solutions. That means capacity and power scarcity can continue shaping cloud pricing, availability, and where AI services can scale.

Calls to Action:

🔹 Treat this as an indicator of how binding the power/permit bottleneck has become—not as a near-term migration plan.

🔹 In AI budgeting, assume infrastructure constraints persist; plan for cost volatility and regional capacity issues.

🔹 Ask vendors about how they mitigate capacity risk (multi-region, power sourcing, long-term PPAs).

🔹 What to monitor: Starship reliability and cooling breakthroughs (radiators) as gating milestones.

🔹 Use the “space DC” idea as a forcing function: if the industry is considering orbit, your team should at least optimize workload efficiency and governance on Earth.

Summary by ReadAboutAI.com

https://www.economist.com/science-and-technology/2026/03/02/data-centres-in-space-less-crazy-than-you-think: March 10, 2026

BIG TECH’S DEALS FOR AI DATA-CENTER POWER PRESENT ACCOUNTING QUESTIONS

WSJ CFO JOURNAL (MAR 4, 2026)

TL;DR / Key Takeaway: AI’s power deals are becoming financial statement risk, but disclosure is inconsistent—investors want clearer reporting on gigawatts committed, pricing, backstops, and liquidity exposure.

Executive Summary:

WSJ reports that investors are pressing Big Tech for more detail on massive power commitments used to run AI data centers, as projects face delays due to power constraints and grid equipment shortages. The article adds a policy accelerant: the President announced guidelines requiring tech companies to provide their own electricity for AI data centers.

A core issue is governance and comparability: there’s no specific disclosure requirement for power purchase agreements (PPAs) despite the “city-sized” electricity volumes involved. Alphabet disclosed a $9.9B, 20-year PPA expected to be treated as a lease starting in 2027, and disclosed a $3.5B one-time payment backstop (if conditions aren’t met) that would transfer ownership of power-generation assets.

The accounting risk is timing mismatch: PricewaterhouseCoopers warned of a potentially significant disconnect between expense recognition and cash-flow timing depending on how PPAs are valued and when power is delivered and paid. Moody’s wants clearer tables of PPAs (timing, commitments, pricing/terms) and warns liabilities may not fully reflect the range of risks around AI data centers.

Relevance for Business:

Even for SMBs, this matters because power constraints and long-term PPAs feed directly into cloud pricing, regional compute availability, and the stability of vendors you depend on. “AI infrastructure” is increasingly capital + power + accounting, not just chips and models.

Calls to Action:

🔹 Ask cloud/AI vendors how power constraints affect regional capacity, SLAs, and pricing—don’t treat it as invisible infrastructure.

🔹 If you’re evaluating AI-heavy vendors, include “power exposure” in due diligence (dependency + pass-through pricing risk).

🔹 Watch for contract terms that create hidden risk (backstops, termination payments) in major providers.

🔹 For compute-intensive SMBs (media, analytics, AI products), plan for volatility: multi-cloud or workload portability can reduce surprise.

🔹 What to monitor: whether reporting standards change and whether vendors begin publishing PPA tables voluntarily.

Summary by ReadAboutAI.com

https://www.wsj.com/cfo-journal/big-techs-deals-for-ai-data-center-power-present-accounting-questions-28bbb4e0: March 10, 2026

BRANDS MAY ACTUALLY BENEFIT FROM ADVERTISING NEXT TO AI CONTENT, PER STUDY

ADWEEK (MAR 3, 2026)

TL;DR / Key Takeaway: “AI slop” isn’t uniformly toxic for advertisers—ads placed next to certain AI-generated formats can perform positively, but adjacency to spam/misinformation remains a brand-risk cliff.

Executive Summary:

A study from OM Media Trials (Omnicom) and Zefr surveyed ~5,000 people in the U.S. and Canada, testing reactions to ads shown after eight types of AI-generated video. It found consumers responded positively when ads followed AI satire, youth depictions, or artistic content—associating brands with being “refreshing” or “innovative.”

But negative adjacency still matters: respondents reacted poorly when ads appeared next to AI spam or misinformation involving public figures—and the article flags particular sensitivity for categories like financial services.

A crucial operational insight: disclosure helps. The piece reports 41% of respondents said their brand opinion improved when AI content was clearly labeled. It also notes a perception problem: 32% suspected human-made content was actually AI, and audiences were especially likely to believe AI-generated misinformation about public figures is real.

Relevance for Business:

For SMB leaders running digital marketing, the “safe strategy” may shift from blanket avoidance of AI content to selective adjacency plus controls. The real risk isn’t “AI content” broadly—it’s context quality, misinfo proximity, and the platform’s ability to classify and label content reliably.

Calls to Action:

🔹 Update brand-safety rules from “AI = no” to “AI environment tiers” (art/satire vs. spam/misinformation).

🔹 Require partners/platforms to provide AI labeling and adjacency reporting; treat transparency as a performance lever.

🔹 For regulated categories (finance/health), keep stricter adjacency exclusions—misinfo risk is asymmetric.

🔹 Add monitoring for “false AI suspicion” (consumers thinking your real content is AI) and adjust creative accordingly.

🔹 What to monitor: Gartner’s claim that AI content will dominate the web—if true, brand safety becomes a routing problem, not a binary choice.

Summary by ReadAboutAI.com

https://www.adweek.com/brand-marketing/brands-may-actually-benefit-from-advertising-next-to-ai-content-per-study/: March 10, 2026

HOW QUANTUM DATA CAN TEACH AI TO DO BETTER CHEMISTRY

IEEE SPECTRUM (03-02-2026)

TL;DR / Key Takeaway: “Quantum-enhanced AI” is being pitched as a way to generate high-accuracy chemistry data with quantum computers, then use it to train AI that can design new materials faster and cheaper than today’s simulation stacks.

Executive Summary

The authors frame computational chemistry as “Jacob’s Ladder”: higher accuracy requires dramatically more compute, making top-rung methods impractical at scale. Their proposal is to bend the ladder by using quantum computers to produce exquisitely accurate electron-behavior data, then train classical AI models on that data to predict materials properties quickly.

If it works, the upside is broad: better catalysts, battery chemistries, removal of “forever chemicals,” and faster drug discovery—because the AI would start from more truthful physics than today’s approximations. The key caveat is timeline and feasibility: meaningful chemistry simulations beyond classical reach require fault-tolerant quantum hardware at a scale far beyond today (the article describes needing extremely low error rates and very large qubit counts).

Relevance for Business

For SMB leaders, this is not “buy quantum now.” It’s a signal that the next AI wave could be less about chat and more about materials, energy, and biotech breakthroughs—with competitive impacts arriving through suppliers (batteries, chemicals, pharmaceuticals) before it arrives through internal IT.

Calls to Action

🔹 Treat “quantum + AI” as a mid/long-term innovation signal, not a near-term procurement item.

🔹 If you’re in manufacturing/energy/health-adjacent sectors, identify where material constraints (batteries, coatings, chemicals) are strategic bottlenecks.

🔹 Ask key suppliers how they’re using advanced simulation/AI for R&D and what that means for product roadmaps.

🔹 Watch for evidence of real-world validation (lab results, pilot materials) vs. conceptual promises.

🔹 What to monitor: progress toward fault-tolerant quantum and credible timelines (hardware scale remains the gating factor).

Summary by ReadAboutAI.com

https://spectrum.ieee.org/quantum-chemistry: March 10, 2026

GOOGLE’S NEW MINNESOTA DATA CENTER COMES WITH THE WORLD’S LARGEST BATTERY—AND WON’T RAISE ELECTRIC BILLS

FAST COMPANY (MAR 2, 2026)

TL;DR / Key Takeaway: Hyperscalers are starting to “pay their way” for AI-era power—Google’s Minnesota plan pairs 1,900 MW of new clean energy with long-duration storage to reduce ratepayer backlash and grid risk.

Executive Summary

Fast Company reports Google is supporting a new data center in Pine Island, Minnesota by funding major grid additions through Xcel Energy: 1,400 MW wind + 200 MW solar + other clean resources totaling 1,900 MW. The strategic intent is explicit: avoid higher electric bills and prevent the buildout from locking in older coal resources—two common flashpoints in community opposition to data centers.

A standout element is the Form Energy iron-air battery: designed for ~100 hours of storage, sized to deliver 300 MW and store 30 GWh—described as the largest announced battery by capacity, and more storage than all U.S. battery projects built in 2024 added together. Xcel frames long-duration storage as a tool for multi-day renewables gaps (e.g., winter doldrums), and the article notes the technology is positioned as cost-competitive with natural gas.
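The figures above can be sanity-checked with simple arithmetic. The Python sketch below divides stored energy by rated power to recover the discharge duration, and subtracts the named wind and solar capacity from the 1,900 MW total to see how much falls under "other clean resources." The function names are illustrative; the inputs are the numbers quoted in the summary.

```python
def storage_duration_hours(energy_gwh: float, power_mw: float) -> float:
    """Hours a battery can discharge at full rated power (1 GWh = 1,000 MWh)."""
    return energy_gwh * 1000.0 / power_mw


def unnamed_capacity_mw(total_mw: float, *named_mw: float) -> float:
    """Capacity left over after subtracting the explicitly named resources."""
    return total_mw - sum(named_mw)


# 30 GWh of storage delivered at 300 MW
duration = storage_duration_hours(energy_gwh=30, power_mw=300)
print(f"discharge duration: {duration:.0f} hours")  # prints "discharge duration: 100 hours"

# 1,900 MW total, of which 1,400 MW is wind and 200 MW is solar
other = unnamed_capacity_mw(1900, 1400, 200)
print(f"other clean resources: {other:.0f} MW")  # prints "other clean resources: 300 MW"
```

The 100-hour result matches the duration the article attributes to the iron-air battery, and the remaining 300 MW equals the battery's rated power, though the summary does not say whether the two are the same resource.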

Relevance for Business (SMB leaders)

- The AI buildout is increasingly constrained by power availability + community permission. Even if you’re not building data centers, your AI tool costs and vendor reliability will be shaped by whether hyperscalers can secure power without political and local backlash.

- This also hints at a new norm: major buyers may be expected to fund incremental grid capacity (not just sign PPAs), which can ripple into pricing and regional capacity decisions for cloud services.

Calls to Action

🔹 When evaluating AI vendors/cloud commitments, ask what assumptions they’re making about power constraints and regional capacity (delivery risk is becoming infrastructure-driven).

🔹 For facilities-heavy SMBs, consider whether local utilities are seeing similar “large load” proposals—expect possible rate or reliability debates in some regions.

🔹 Track long-duration storage (100+ hours) as a stabilizer for renewables-heavy grids; it can affect energy price volatility over time.

🔹 Treat “who pays for power” as a governance question: contract language, pass-through costs, and timelines matter as much as model features.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91500104/google-minnesota-data-center-electric-bills: March 10, 2026

CLAUDE COWORK, AI HYPE, AND ITS REAL IMPACT ON WHITE-COLLAR WORK

FAST COMPANY (FEB 23, 2026)

TL;DR / Key Takeaway: “Agentic” desktop AI (Claude Cowork) is positioned to automate entry-level knowledge work tasks first, risking a collapsed talent pipeline even before full job automation arrives.

Executive Summary

Fast Company frames Anthropic’s “Claude Cowork” as a packaging shift: moving beyond terminal-based “Claude Code” into a friendlier desktop interface that can execute broad computer tasks for subscribers (notably tied here to a $100/month tier). The practical capabilities described are not just writing text—they include organizing large file sets, converting invoice screenshots into spreadsheets, synthesizing material across websites, and handling slide comments—i.e., the kind of operational “glue work” many organizations assign to junior staff.

The article’s core claim is not “AI will replace everyone tomorrow,” but that it may remove the first rungs of the ladder: if AI takes junior-level research, reading, and drafting tasks, companies may hire fewer entry-level workers—creating downstream shortages in mid/senior talent later. It points to signals already moving: a cited data point suggests a 35% drop in U.S. entry-level openings since 2023.

Relevance for Business (SMB leaders)

- For SMBs, the near-term opportunity is real: these tools can compress “small team” overhead (research, reporting, admin workflows). But the risk is also real: if you reduce junior hiring too far, you may create a future capability gap and overdependence on tools that still require oversight and can make broader errors when connected to files/apps.

- This is less about abstract job-loss debate and more about operating model redesign: who reviews work, how quality is assured, and what internal skills you stop building.

Calls to Action

🔹 Identify 3–5 “junior workload” processes (research briefs, spreadsheet cleanup, first-draft memos) and pilot automation with explicit review gates.

🔹 Protect the talent pipeline: if you reduce entry-level tasks, replace them with higher-value apprenticeships (client work shadowing, QA, ops analytics) rather than eliminating roles outright.

🔹 Treat “desktop agents with file access” as a security decision: define what folders, data, and tools are in-bounds before deployment.

🔹 Watch for second-order vendor impacts: if in-house teams can use agents for specialized work, some category software demand may soften.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91495393/claude-cowork-ai-hype-and-its-real-impact-on-white-collar-work: March 10, 2026

NEWS CORP, META IN AI CONTENT LICENSING DEAL WORTH UP TO $50 MILLION A YEAR

WSJ (MAR 3, 2026)

TL;DR / Key Takeaway: Premium news content is becoming AI infrastructure: Meta is paying News Corp up to $50M/year to use content for retrieval (current info) and training, signaling a maturing market for content licensing—and a widening gap between “licensed” and “scraped.”

Executive Summary:

WSJ reports a multi-year (at least three years) deal that gives Meta access to News Corp content from the U.S. and U.K. Current content can be used to retrieve up-to-date information for users of Meta’s AI products, while other content, such as archives, can be used for training.

The size and structure are the signal: publishers increasingly view licensing as a way to be paid for content that trained models and now powers real-time chat experiences. WSJ notes News Corp previously signed an OpenAI content deal expected to exceed $250M over five years, and that other outlets are mixing partnerships with litigation—News Corp subsidiaries sued Perplexity; NYT sued OpenAI/Microsoft; NYT also signed an AI licensing agreement with Amazon reportedly worth $20M–$25M per year.

Relevance for Business:

SMBs using AI search/chat tools should expect an ecosystem split: some answers are built on licensed, high-quality sources; others rely on uncertain provenance. This impacts reliability, compliance (especially for regulated industries), and potentially pricing—because licensed data becomes a cost line item.

Calls to Action:

🔹 Ask your AI vendors what content is licensed vs. scraped and whether outputs can cite sources in your domain.

🔹 For customer-facing knowledge tools, prefer providers with transparent licensing and retrieval practices (lower litigation and trust risk).

🔹 Expect pricing pressure as premium content licensing expands—plan budgets accordingly.

🔹 If you publish content, watch this market: licensing may become a meaningful revenue stream—but contracts and exclusivity terms matter.

🔹 What to monitor: whether courts/regulators push the market further toward licensing as the default.

Summary by ReadAboutAI.com

https://www.wsj.com/business/media/news-corp-meta-in-ai-content-licensing-deal-worth-up-to-50-million-a-year-d4fbf244: March 10, 2026

SOFTWARE STOCKS JUST QUIETLY TROUNCED CHIP STOCKS… BUT DON’T GET TOO EXCITED

MARKETWATCH WSJ (MAR 3, 2026)

TL;DR / Key Takeaway: A short burst of software outperformance is more likely a sentiment/positioning blip than a durable shift—markets are still wrestling with the “AI ghost trade” fear that AI will erode seat-based SaaS models.

Executive Summary:

MarketWatch notes a six-session stretch in which the iShares software ETF (IGV) rose 9.3% versus 5.3% for the semiconductor ETF (SMH), the largest six-session outperformance on record. But it emphasizes that this barely registers over the longer arc, in which software has lagged chips for years.

The broader story remains unresolved: software stocks have been battered by fears that AI will eat into traditional business models—particularly seat-based pricing—and analysts cite recent AI tool launches (e.g., Anthropic updates) as intensifying that narrative. Wedbush calls this the “AI ghost trade,” arguing investors are chained to a fictional concept that businesses will drastically cut traditional software budgets by using cheaper AI alternatives.

Some analysts suspect the bounce could be driven by short covering rather than fresh long-term conviction, while chip enthusiasm has cooled after a multi-year run (even strong earnings didn’t lift some names).

Relevance for Business:

For SMB executives, the investable angle translates into an operating reality: vendors may respond to this fear by rebundling pricing, pushing AI add-ons, or shifting contracts away from simple per-seat economics. Your software bills—and your leverage in negotiations—may change even if your usage doesn’t.

Calls to Action:

🔹 Review major SaaS renewals for “AI repackaging”: identify what’s truly new vs. what’s being priced as fear.

🔹 Ask vendors how AI changes pricing long-term: per-seat vs. usage-based vs. outcome-based—model the cost curve.

🔹 Keep procurement leverage: avoid multi-year lock-ins if the pricing model is clearly in flux.

🔹 If you’re building internal AI alternatives, budget for the full cost (governance, maintenance, security)—not just model tokens.

🔹 What to monitor: “AI automation” product announcements that target high-value work previously done in SaaS workflows.
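For the "model the cost curve" step above, a rough Python sketch like the following can help compare per-seat, usage-based, and outcome-based pricing as workload volume grows. Every number and name here is a hypothetical placeholder, not a figure from the article or from any vendor; substitute the terms from your own contracts.

```python
def per_seat_cost(seats: int, price_per_seat: float) -> float:
    """Flat monthly cost: independent of how much the tool is used."""
    return seats * price_per_seat


def usage_cost(units: int, price_per_unit: float, included_units: int = 0) -> float:
    """Monthly cost that grows with usage beyond an included allowance."""
    billable = max(0, units - included_units)
    return billable * price_per_unit


def outcome_cost(outcomes: int, price_per_outcome: float) -> float:
    """Monthly cost charged per successful outcome (e.g., a resolved ticket)."""
    return outcomes * price_per_outcome


def cost_curve(monthly_units, seats, seat_price, unit_price,
               included_units, success_rate, outcome_price):
    """Return one row per volume level with the cost under each pricing model."""
    rows = []
    for units in monthly_units:
        rows.append({
            "units": units,
            "per_seat": per_seat_cost(seats, seat_price),
            "usage": usage_cost(units, unit_price, included_units),
            "outcome": outcome_cost(round(units * success_rate), outcome_price),
        })
    return rows


# Hypothetical inputs for illustration only.
curve = cost_curve(
    monthly_units=[1_000, 5_000, 20_000],
    seats=10, seat_price=50.0,
    unit_price=0.10, included_units=2_000,
    success_rate=0.5, outcome_price=0.25,
)
for row in curve:
    print(f"{row['units']:>6} units | per-seat ${row['per_seat']:,.2f} | "
          f"usage ${row['usage']:,.2f} | outcome ${row['outcome']:,.2f}")
```

The useful output is the crossover point: the volume at which usage-based or outcome-based pricing overtakes the flat per-seat bill, which indicates how much growth a renewal can absorb before the pricing model matters.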

Summary by ReadAboutAI.com

https://www.marketwatch.com/story/software-stocks-just-quietly-trounced-chip-stocks-to-a-historic-extent-but-dont-get-too-excited-5babf59c: March 10, 2026

PATIENTS ARE DIAGNOSING THEMSELVES WITH HOME TESTS, DEVICES AND CHATBOTS

WSJ (Sept 30, 2025)

TL;DR / Key Takeaway: Healthcare is shifting toward consumer-led, DIY decision-making, and AI chatbots are becoming part of that toolkit—creating new demand and new liability/privacy risks for employers and service providers.

Executive Summary:

The article describes a growing DIY healthcare trend driven by clinician shortages, long waits, and chronic disease prevalence—patients increasingly order tests, track data via devices, and use chatbots for symptom and condition guidance. Major lab providers are expanding direct-to-consumer test menus, while wearables and emerging home devices generate more personal health data outside traditional clinical channels.

AI chatbots (including general assistants like ChatGPT and provider-built tools) are positioned as “always available” explainers of medical jargon and decision support—yet reliability, regulation gaps, misinformation, and privacy risks remain central concerns. The piece notes medical community warnings about safe use and highlights the likely evolution toward more agent-like tools that organize data and support ongoing monitoring.

Relevance for Business:

Even if you’re not in healthcare, SMB leaders will feel this through benefits costs, workforce expectations, and HR risk. Employees may bring AI-derived health claims into workplace accommodations, leave requests, and benefit disputes. Employers should anticipate increased use of health apps and AI tools—and the related data privacy and duty-of-care questions (especially if the company recommends tools).

Calls to Action:

🔹 If you offer wellness benefits, review which tools you endorse—avoid creating implied medical guidance liability.

🔹 Update privacy guidance: clarify what employee health data the company does not want collected/shared.

🔹 Train managers/HR on handling AI-driven health information with consistency and sensitivity.

🔹 For benefits providers, ask about safeguards for chatbot guidance, escalation paths, and data handling.

🔹 Treat this as a workforce trend: more self-service healthcare may reduce friction or increase confusion—plan communications accordingly.

Summary by ReadAboutAI.com

https://www.wsj.com/health/healthcare/patient-self-medical-treatment-tools-85b43eaa: March 10, 2026

AGENTIC AI IS THE FUTURE OF SALES: HERE’S HOW TO GET IT RIGHT

FAST COMPANY (03-03-2026)

TL;DR / Key Takeaway: Agentic AI can automate large portions of the sales cycle, but the limiting factor is organizational readiness, data quality, and control policies—not the model.

Executive Summary

The article positions “agentic AI” as the next step beyond assistants: systems that can pursue goals, run sequences, and collaborate with humans across the sales cycle. It argues this will shift sellers into “managers of AI agents,” delegating account research, outreach sequencing, and pipeline optimization—while humans remain essential for trust-heavy negotiations and final agreement.

The practical warning: the biggest barrier is not capability, but readiness. If your CRM data is fragmented or your systems don’t integrate, agentic workflows will fail in predictable ways (the “bad gasoline” problem: poor inputs produce poor results no matter how capable the engine). The article emphasizes the need for clear rules for autonomy, escalation, and accountability to avoid loss of control, unwanted outreach, or brand damage.

Relevance for Business

For SMBs, agentic sales tools can improve speed-to-lead and consistency—but they also increase exposure to compliance mistakes, tone-deaf messaging at scale, and CRM hygiene debt. The ROI depends less on vendor selection and more on your process discipline.

Calls to Action

🔹 Start with a narrow, measurable slice (lead enrichment + follow-up sequencing) before expanding autonomy.

🔹 Clean your CRM: dedupe, standardize fields, and integrate source systems—agentic AI is only as good as your records.

🔹 Define guardrails: what agents can send, what requires approval, and when they must escalate.

🔹 Keep humans on “trust moments”: pricing exceptions, negotiation, and relationship repair.

🔹 What to monitor: rising “automation resentment” on sales teams—this can quietly sabotage adoption.
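
The "clean your CRM" step above can be sketched as a small dedupe-and-standardize pass. This is a minimal illustration, not the article's method: the field names (email, phone, company) and the choice of a normalized email as the matching key are assumptions, and a real CRM export would need richer matching rules.

```python
import re


def normalize_email(email):
    """Lowercase and trim an email so trivially different spellings match."""
    return (email or "").strip().lower()


def normalize_phone(phone):
    """Keep digits only, so '(555) 010-1234' and '555-010-1234' compare equal."""
    return re.sub(r"\D", "", phone or "")


def dedupe_contacts(records):
    """Merge records sharing a normalized email; return (deduped, needs_review).

    The first record seen for an email wins; blank fields are filled from
    later duplicates. Records with no email are set aside for manual review
    rather than silently dropped.
    """
    merged = {}
    needs_review = []
    for rec in records:
        key = normalize_email(rec.get("email"))
        if not key:
            needs_review.append(rec)
            continue
        clean = {**rec, "email": key, "phone": normalize_phone(rec.get("phone"))}
        if key not in merged:
            merged[key] = clean
        else:
            for field, value in clean.items():
                if not merged[key].get(field):
                    merged[key][field] = value
    return list(merged.values()), needs_review
```

Keeping a separate needs_review list is a deliberate choice here: an agent acting on records a script guessed at is exactly the failure mode the article warns about, so ambiguous rows go to a person.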

Summary by ReadAboutAI.com

https://www.fastcompany.com/91487972/agentic-ai-is-the-future-of-sales-heres-how-to-prepare_ai-sales-success: March 10, 2026

AI WON’T REPLACE STRATEGY: IT WILL EXPOSE IT

FAST COMPANY (03-03-2026)

TL;DR / Key Takeaway: AI is a strategy stress test—it will magnify your data fragmentation, incentive misalignment, and vague priorities faster than it delivers “magic intelligence.”

Executive Summary

The article argues against the idea that “intelligence” can be imported like software licenses. Organizations aren’t empty containers; they’re systems of incentives, legacy processes, tacit assumptions, and fragmented data flows—and AI doesn’t float above that system; it interacts with it.

When AI meets a messy org, it scales the mess: fragmented data becomes visible at scale, misaligned incentives get optimized, and vague strategies get wrapped in fluent outputs that feel “smart” but aren’t coherent. The warning is practical: asking “How can AI improve this process?” is often the wrong first question—because optimizing a broken workflow just means you’re automating confusion.

Relevance for Business

For SMB leaders, the signal is timing and sequencing: the winners aren’t the ones who “deploy AI fastest,” but those who clarify decision rights, integrate data, and tighten feedback loops so AI outputs become hypotheses—not directives.

Calls to Action

🔹 Treat AI rollout as a diagnostic: map where it will surface broken handoffs, duplicate systems, and unclear ownership.

🔹 Before buying more tools, fix the basics: shared definitions, clean data flows, and explicit escalation rules.

🔹 Require “decision memos” for AI projects: what assumption is being tested, what metric changes, who is accountable.

🔹 Avoid “pilot sprawl”: consolidate experiments around 2–3 business outcomes, not departmental novelty.

🔹 What to monitor: where AI outputs are being treated as answers—especially in pricing, hiring, and risk decisions.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91498609/ai-wont-replace-strategy-it-will-expose-it: March 10, 2026

THERE’S ANOTHER AI-DOOM POST DOING THE ROUNDS. THIS TIME, THE S&P 500 DIVES NEARLY 40%

MARKETWATCH WSJ/MARKETPLACE (FEB 23, 2026)

TL;DR / Key Takeaway: A viral “future lookback” scenario argues successful AI adoption could still be bearish by triggering a non-cyclical white-collar demand shock—a reminder that AI risk is as much macroeconomic as it is technical.

Executive Summary:

MarketWatch summarizes a widely shared commentary (Citrini Research + a guest contributor) written as if from June 2028: unemployment rises to 10.2% and the S&P 500 is down ~38% from late-2026 highs. The mechanism starts with agentic AI-driven dislocation, especially in software/SaaS, where companies respond to disruption by cutting headcount and using savings to buy more AI.

The scenario emphasizes a feedback loop: AI agents reduce consumer friction (constantly optimizing subscriptions and choices), compressing company margins. Meanwhile, displaced workers can’t simply “shift jobs” because AI improves at the very tasks they would redeploy to—so layoffs reduce consumption, which pressures more firms to automate further. The piece then layers in systemic fragility via private credit exposure to software-backed loans and correlated financial bets.

Importantly, the commentary itself claims it’s not a prediction, but a stress-test scenario to explore under-modeled risk; it also spurred pushback arguing governments could respond with large-scale reindustrialization investment.

Relevance for Business:

For SMB leaders, the practical takeaway isn’t “the market will crash.” It’s that AI adoption can reshape demand, pricing power, and labor markets in ways that hit revenue assumptions and customer behavior. If AI makes buyers more price-sensitive (agents shopping constantly) and reduces white-collar spending, growth plans built on inertia and loyalty become fragile.

Calls to Action:

🔹 Treat “AI success” as a double-edged risk: plan for margin compression and churn pressure.

🔹 Build resilience: diversify acquisition channels; don’t rely on habitual subscription behavior.

🔹 Stress-test your business for a demand dip driven by white-collar employment softness.

🔹 If deploying AI to cut costs, invest some savings into growth moats (service, trust, differentiated expertise)—not just more automation.

🔹 What to monitor: labor-market indicators, margin pressure, and credit-tightening signals.

Summary by ReadAboutAI.com

https://www.wsj.com/wsjplus/dashboard/articles/theres-another-ai-doom-post-doing-the-rounds-this-time-the-s-p-500-dives-nearly-40-13162b42: March 10, 2026

The AI-Powered Hacking Spree Is Here

Intelligencer (Feb 26, 2026)

TL;DR / Key Takeaway: As AI coding tools improve, cybercrime is likely to get cheaper, faster, and more scalable, accelerating a near-term wave of opportunistic attacks and raising the baseline for security readiness.

Executive Summary:

The article argues that the newest generation of AI coding tools is moving from “assistive” to software-producing, and one early real-world outcome is an offensive one: attackers can generate bespoke, good-enough hacking tools on demand. A cited case involves an alleged Claude-assisted campaign targeting Mexican government agencies, used to identify vulnerabilities, write exploit scripts, and automate data theft—illustrating how “AI + automation” compresses attacker effort.

The broader claim: when code becomes cheaper to produce, malicious code does too. That lowers barriers for less-skilled actors and increases productivity for skilled ones. The piece references a recent IBM report flagging “AI-enabled vulnerability scanning” as a driver of increased attacks—suggesting the next phase may look like more volume at the low end and faster iteration at the high end.

Relevance for Business:

For SMBs, the risk isn’t “Hollywood hacking”—it’s more frequent, more customized attacks: credential stuffing tuned to your stack, phishing that matches your internal language, and automated scanning for common misconfigurations. The cost of being “average” at security rises because the attacker’s unit economics improve.

Calls to Action:

🔹 Assume a higher cadence of attacks and tighten basic hygiene (patching SLAs, MFA everywhere, least privilege).

🔹 Run a fast audit of internet-exposed assets (old sites, admin panels, shadow SaaS).

🔹 Add monitoring for abnormal auth behavior (impossible travel, MFA fatigue, new device anomalies).

🔹 Rehearse incident response for data theft scenarios (not just ransomware).

🔹 If adopting AI coding internally, add controls to prevent secret leakage and insecure code patterns.
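
The "impossible travel" check mentioned in the list above can be illustrated with a short sketch: flag two logins by the same user when the implied travel speed between them is faster than a plane. The Login record, the 900 km/h speed limit, and the 100 km noise floor are all assumptions chosen for illustration; commercial identity tools apply their own, more elaborate logic.

```python
from dataclasses import dataclass
from datetime import datetime
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0


@dataclass
class Login:
    """Hypothetical login event with a geolocated source (e.g. from IP lookup)."""
    user: str
    when: datetime
    lat: float
    lon: float


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points, in kilometres."""
    p1, p2 = radians(lat1), radians(lat2)
    dphi = p2 - p1
    dlmb = radians(lon2 - lon1)
    a = sin(dphi / 2) ** 2 + cos(p1) * cos(p2) * sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * asin(sqrt(a))


def is_impossible_travel(prev, curr, max_kmh=900.0, min_km=100.0):
    """True if moving from prev to curr would require exceeding max_kmh.

    Distances under min_km are ignored because IP geolocation is imprecise.
    """
    distance = haversine_km(prev.lat, prev.lon, curr.lat, curr.lon)
    if distance < min_km:
        return False
    hours = (curr.when - prev.when).total_seconds() / 3600
    if hours <= 0:
        return True  # far apart at the same instant (or out of order)
    return distance / hours > max_kmh
```

A flag from a check like this is a prompt for step-up authentication or review, not proof of compromise, since VPNs and mobile carriers routinely produce misleading locations.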

Summary by ReadAboutAI.com

https://nymag.com/intelligencer/article/the-ai-powered-hacking-spree-is-here.html: March 10, 2026

RECRUITERS SAY JOB BOARDS LIKE LINKEDIN ARE DEAD. HERE’S HOW TO HIRE INSTEAD

FAST COMPANY / INC. (FEB 2, 2026)

TL;DR / Key Takeaway: “Spray-and-pray” job boards are collapsing into bot noise; the hiring advantage is shifting to companies that tighten the funnel via networks, niche communities, and targeted outreach.

Executive Summary:

The article argues that major job boards (LinkedIn/Indeed) have devolved into an over-automated applicant swamp, where filtering for fit has become a time sink—especially as bots and low-signal applications surge. A CTO anecdote illustrates the operational cost: automation didn’t “find people quickly,” it produced backlog and chaos, driving leaders to question why they bought so much hiring tech in the first place.

A core theme is “results-first hiring”: treat hires as “critical machine parts,” not interchangeable labor. Companies described in the piece are bypassing job boards—posting on their own sites (or not at all) and sourcing through employees/investors, smaller recruiters, trade associations, colleges, and niche online communities (e.g., Reddit, Hacker News). The goal is 100 targeted resumes, not 10,000 noisy ones.

Relevance for Business:

For SMBs, this is a practical operating model: your hiring bottleneck is increasingly screening time and signal extraction, not “top-of-funnel volume.” AI can help, but the risk is automating the wrong thing and importing more noise. The strategic shift is to design a hiring motion that optimizes for fit and speed, not application counts.

Calls to Action:

🔹 Replace “job board-first” with a targeted sourcing plan (employee referrals + niche communities + industry groups).

🔹 Tighten job requirements and success metrics to reduce poor-fit applicants and mis-hires.

🔹 Use AI for research and outreach personalization, not to mass-produce generic postings and filters.

🔹 Audit your ATS/hiring stack: eliminate tools that create friction without improving signal.

🔹 Track “time-to-shortlist” and “quality-of-shortlist” as the key KPIs—not number of applicants.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91482196/recruiters-say-job-boards-like-linkedin-are-dead-heres-how-to-hire-instead: March 10, 2026

NO, AI IS NOT ABOUT TO KILL THE SOFTWARE INDUSTRY

FAST COMPANY (02-27-2026)