AI Updates May 11, 2026

THE WEEK AI STOPPED ASKING PERMISSION

Something shifted this week in how AI shows up in the news — and in business reality. For the past two years, most AI coverage asked some version of the same question: will this change everything? This week’s headlines read differently. The question has become how fast, and the answers are landing across every domain at once: healthcare diagnostics, labor markets, defense procurement, energy infrastructure, cybersecurity, financial markets, and the daily experience of knowledge workers trying to figure out which parts of their jobs still belong to them.

The breadth of this week’s coverage reflects something important for executives and managers to absorb: AI is no longer a technology story running parallel to your business story. It is the business story. The $133 billion that Microsoft, Alphabet, Meta, and Amazon spent on AI infrastructure in a single quarter isn’t an abstraction — it will show up as pricing pressure on the tools you use, as volatility in the platforms you advertise on, and as energy and supply chain costs that ripple outward from data centers to hardware to cloud services. Meanwhile, Mayo Clinic’s AI model is detecting pancreatic cancer years before diagnosis. AI cybersecurity tools are finding hundreds of software vulnerabilities overnight. Entry-level hiring is contracting measurably, and the gap between workers who use AI with genuine judgment and those who don’t is widening fast enough that Microsoft is formally naming it.

What this week’s collection asks of you is not alarm — and it is not complacency. It asks for the kind of strategic attention that distinguishes leaders who will be positioned well two years from now from those who will be reacting. Some of this week’s stories require action in the next 60 days. Others are signals to file and revisit in 12 months. A few will define your competitive landscape for the rest of the decade. We’ve done the triage. The summaries that follow tell you which is which.

Anthropic Teams Up With SpaceX for Compute. Wait, What!?

AI For Humans Podcast | May 2026

TL;DR: Anthropic’s compute partnership with SpaceX — and a sweeping set of new agentic features in Claude Code — signal that the competitive gap between frontier AI providers is closing fast, with real operational consequences for businesses already relying on these tools.

Executive Summary

The headline story is Anthropic leasing compute capacity from SpaceX’s Colossus 1 data center — infrastructure previously used to train xAI’s Grok models. The significance isn’t the novelty of the partnership (tech rivals share infrastructure regularly); it’s what the deal reveals. Anthropic was genuinely compute-constrained, meaning enterprise and power users were hitting rate limits hard enough to disrupt workflows and raise serious questions about reliability and cost. CEO Dario Amodei publicly acknowledged the problem, citing usage growth that far exceeded even optimistic internal projections. The SpaceX deal is a direct response: more capacity, doubled rate limits, and an expanded usage window for Claude Code users.

Alongside the compute news, Anthropic unveiled a cluster of new agentic features under the Claude Code umbrella. Multi-Agent Orchestration enables a lead model to delegate tasks to specialized sub-agents, each with its own tools, context, and constraints: essentially an AI org chart. Outcomes lets users define an end goal rather than step-by-step instructions; the system then self-evaluates against a rubric until the target is met. Dreaming introduces session-level memory refinement, where the system reviews past interactions to surface durable improvements. All three features depend on the compute expansion: without it, they’d be either unusable or financially prohibitive for most teams.
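
For teams wondering what "self-evaluates against a rubric until the target is met" could look like in practice, here is a minimal Python sketch of a goal-directed loop with two guardrails: an attempt cap and a token budget. It is an illustration only, not Anthropic's implementation or API. The names `run_until_rubric_met`, `toy_generate`, and `toy_score` are hypothetical, and the token counts and scores are made-up stand-ins.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class LoopResult:
    output: str
    score: float
    attempts: int
    tokens_used: int
    met_target: bool


def run_until_rubric_met(
    generate: Callable[[int], Tuple[str, int]],  # hypothetical: returns (output, tokens spent)
    score: Callable[[str], float],               # hypothetical rubric: returns 0.0 to 1.0
    target: float,
    max_attempts: int,
    token_budget: int,
) -> LoopResult:
    """Retry until the rubric score reaches the target or a cap stops the loop."""
    best_output, best_score = "", float("-inf")
    tokens_used = 0
    attempts = 0
    for attempt in range(1, max_attempts + 1):
        output, tokens = generate(attempt)
        attempts = attempt
        tokens_used += tokens
        current = score(output)
        if current > best_score:
            best_output, best_score = output, current
        if current >= target:
            return LoopResult(output, current, attempts, tokens_used, True)
        if tokens_used >= token_budget:
            break  # stop spending once the budget is exhausted
    return LoopResult(best_output, best_score, attempts, tokens_used, False)


# Toy stand-ins so the sketch runs without any model or vendor SDK.
def toy_generate(attempt: int) -> Tuple[str, int]:
    return "ok" * attempt, 1_000  # pretend each attempt costs 1,000 tokens


def toy_score(output: str) -> float:
    return min(1.0, len(output) / 8)  # pretend longer drafts score higher


generous = run_until_rubric_met(toy_generate, toy_score, 0.9, 10, 50_000)
capped = run_until_rubric_met(toy_generate, toy_score, 0.9, 10, 2_000)
print(generous.met_target, generous.attempts, generous.tokens_used)
print(capped.met_target, capped.attempts, capped.tokens_used)
```

The point of the two runs is the governance trade-off: the generous budget reaches the target, while the capped run stops early and returns its best attempt so far. A vague rubric with no cap is how a loop like this becomes expensive.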

Elsewhere: OpenAI rolled out GPT-5.5 Instant broadly and is preparing a significantly upgraded real-time voice model with bidirectional communication. Spotify launched an AI agent feature generating personalized private podcasts. And Google’s Gemini Nano is being silently pushed to Chrome devices as a local model — a distribution move drawing regulatory scrutiny in the EU over consent.

Relevance for Business

For SMBs actively using Claude Code or evaluating agentic AI tools, the compute expansion matters immediately. Rate limits and unpredictable token costs have been a real friction point — the podcast captures something many teams are experiencing: AI tools that are theoretically powerful but practically unreliable or expensive at scale. That friction is easing, at least temporarily.

The new features raise a more strategic question. Multi-agent orchestration and outcome-based tasking are genuinely useful concepts — but they also increase token consumption significantly and require thoughtful setup to avoid runaway costs or unpredictable outputs. Businesses that have been treating AI as a chat interface are being invited into a more complex, more capable, and harder-to-govern operating model.

The Google/Chrome Gemini Nano situation is worth monitoring for compliance teams, particularly those operating in or selling into the EU. On-device AI model deployment without explicit user consent is the kind of move that tends to generate regulatory friction, and it signals how aggressively major platforms are moving to embed AI at the OS and browser level — regardless of user preference.

Calls to Action

🔹 If your team is hitting Claude rate limits, revisit your usage tier and test whether the newly expanded limits change your cost-benefit calculus for agent-based workloads.

🔹 Evaluate multi-agent orchestration cautiously — the architecture is powerful, but token costs compound quickly; assign someone to map use cases before enabling broad access.

🔹 Monitor the Outcomes feature as it matures; goal-directed agents that self-evaluate could reduce oversight burden meaningfully, but require clear rubric design to avoid costly loops.

🔹 If your organization uses Chrome at scale, check whether Gemini Nano has been deployed to employee devices and determine whether that aligns with your data and AI governance policies.

🔹 Track OpenAI’s voice model release — if customer-facing or internal voice interfaces are on your roadmap, bidirectional real-time voice is a significant capability upgrade worth testing early.

Summary by ReadAboutAI.com

https://www.youtube.com/watch?v=g9iFWHAMDtw: May 11, 2026

When the Algorithm Becomes the Editor: AI, Outrage, and the Spiral Toward Political Violence

The Atlantic | Michael Scherer | May 5, 2026

TL;DR: A veteran political journalist argues that algorithmic platforms systematically convert nuanced reporting into rage-optimized content, and that this distortion is now a structural driver of political radicalization — and violence.

Executive Summary

The article is a first-person reckoning from an Atlantic staff writer who covered the White House Correspondents’ Dinner at which an armed intruder was apprehended. The alleged attacker’s manifesto labeled attendees — including journalists — as “complicit,” borrowing directly from the vocabulary of social media grievance culture. Scherer uses this as a lens to examine something broader: how platforms algorithmically transform measured journalism into emotional fuel.

The core mechanism he identifies is not media bias in the conventional sense, but structural incentive misalignment. Algorithms optimize for engagement, which tracks emotional arousal — outrage, grievance, fear. Journalists writing nuanced, long-form work have no control over how it is packaged, shared, or weaponized downstream. A story about Trump’s historical self-comparisons generated no incitement in its original form; on social media, it became a thread arguing for violence. The distortion happens at the distribution layer, not the editorial layer.

Scherer stops short of proposing solutions, explicitly rejecting censorship. His warning is structural: that radicalization now originates not from foreign actors or organized movements, but from the domestic algorithmic information environment itself. He notes — without resolution — that AI’s effect on this dynamic remains uncertain, with credible arguments on both sides.

Relevance for Business

This piece is only tangentially about AI, but its implications for leaders are real. Any business that creates content, runs social channels, or depends on public trust is operating inside this same distortion system. A measured press release, a nuanced policy statement, or a leadership communication can be algorithmically reprocessed into something unrecognizable — and damaging. The piece is also a signal for companies that employ or platform AI-generated content: the question of whether AI accelerates or dampens algorithmic radicalization is open and consequential. Reputation, communications strategy, and crisis planning all need to account for the gap between what you publish and what gets distributed.

Calls to Action

🔹 Audit your communications strategy for how your content behaves after distribution — not just at the point of publication. What does your messaging look like once it passes through an algorithm?

🔹 Treat platform amplification as an uncontrolled variable. Assume any public statement can be excerpted, reframed, and shared without context. Draft accordingly.

🔹 Monitor the emerging debate on AI’s role in information ecosystems — whether generative AI moderates or amplifies algorithmic radicalization will have direct relevance for any business deploying AI in content or communications.

🔹 Review internal social media policies in light of the rising personal security risks for executives and public-facing employees.

🔹 Do not overreact with platform withdrawal. Absence from digital channels carries its own risks. The goal is strategic presence, not retreat.

Summary by ReadAboutAI.com

https://www.theatlantic.com/politics/2026/05/whcd-journalism-political-violence-algorithms/687040/: May 11, 2026

ALPHABET CLOSES IN ON NVIDIA — AND WHAT THE SHIFT SIGNALS ABOUT AI’S NEXT PHASE

Reuters | May 5, 2026

TL;DR: Alphabet’s surge toward the top of global market cap rankings — driven by 63% cloud revenue growth and its own custom AI chips — signals that the market is beginning to reward AI monetization, not just AI spending, and that Google has emerged as a genuine full-stack competitor in the AI race.

Executive Summary

This is a brief market-movement article, but the signal it carries is worth examining. Alphabet’s market cap reached $4.67 trillion as of early May, closing in on Nvidia’s $4.79 trillion — a reversal of fortune that reflects two distinct developments: Nvidia’s shares have retreated from their peak, while Alphabet’s have surged roughly 24% year-to-date, following a 65% gain in 2025.
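
As a quick check on those figures, the short Python sketch below works out the size of the remaining gap and what the two reported share gains amount to together. It assumes the roughly 24% year-to-date gain compounds on top of the 65% gain in 2025; the variable names are illustrative.

```python
# Reported market caps, in trillions of U.S. dollars.
alphabet_cap = 4.67
nvidia_cap = 4.79

# Absolute gap, and the further rise Alphabet would need to draw level.
gap = nvidia_cap - alphabet_cap
gap_pct = gap / alphabet_cap * 100

# Assumption: the ~24% year-to-date gain compounds on the 65% gain in 2025.
cumulative_gain_pct = ((1 + 0.65) * (1 + 0.24) - 1) * 100

print(f"Gap: ${gap:.2f} trillion ({gap_pct:.1f}% of Alphabet's market cap)")
print(f"Cumulative gain since the start of 2025: about {cumulative_gain_pct:.0f}%")
```

On these numbers, the gap is about $0.12 trillion, a further rise of under 3%, and Alphabet's shares have roughly doubled since the start of 2025.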

The underlying driver is Google Cloud’s first-quarter performance, which posted 63% revenue growth — the highest since the segment was broken out separately, and well above what analysts had forecast. Investors are now treating this as evidence that Alphabet’s AI spending is converting into real commercial demand, not just infrastructure accumulation. The company is also gaining credibility as a chip competitor: CEO Sundar Pichai confirmed Google has begun selling its custom AI processors directly to external customers, including Anthropic — a move that directly challenges Nvidia’s near-monopoly on AI compute.

This positions Alphabet unusually: it is simultaneously a cloud infrastructure provider, an AI model developer, a chip designer, and an advertising business generating the cash to fund all of the above. That range of integrated capability is rare and hard to replicate.

Relevance for Business

For SMB leaders evaluating AI platforms and cloud vendors, Alphabet’s trajectory has practical implications.

Google Cloud’s acceleration suggests it is a credible and increasingly competitive alternative to AWS and Azure — relevant if you’re assessing vendor diversification or renegotiating cloud contracts. The custom chip development also matters: if Google successfully reduces external dependence on Nvidia’s hardware, it gains pricing and supply chain leverage that could eventually benefit downstream cloud customers. More broadly, this story reinforces that the AI value chain is shifting from infrastructure build-out toward commercial deployment, meaning the organizations and tools best positioned to deliver measurable business outcomes are gaining ground on those that simply promise future returns.

Calls to Action

🔹 Revisit your cloud vendor assessment — Google Cloud’s growth trajectory and AI integration make it a more competitive option than it was 18 months ago.

🔹 Monitor Google’s custom chip strategy — if it reduces the industry’s Nvidia dependency, it could affect AI compute pricing over a 2–3 year horizon.

🔹 Note the shift from infrastructure narrative to monetization narrative — evaluate your own AI investments against the same standard: are they generating measurable returns yet?

🔹 Ignore the market cap horse race as a direct business concern — but use it as a barometer for where institutional confidence in AI is flowing.

Summary by ReadAboutAI.com

https://www.reuters.com/business/alphabet-closes-nvidias-spot-worlds-biggest-company-2026-05-05/: May 11, 2026

The Secret to Understanding AI

The Atlantic | Josh Tyrangiel | May 7, 2026

TL;DR: The most durable AI value isn’t emerging from tech giants — it’s being built quietly by practitioners in healthcare, education, and government who are using AI to fix real problems rather than pursue profit.

Executive Summary

The Atlantic’s Josh Tyrangiel argues that the loudest AI narratives — catastrophe on one side, salvation on the other — are being driven largely by people with financial or ideological stakes in the outcome. The more instructive signal is elsewhere: practitioners outside Silicon Valley who are deploying AI with discipline, empathy, and specific goals. The article profiles examples spanning healthcare diagnostics, special education, and government operations.

The IRS case is the most detailed and most instructive for business leaders. Facing decades of compounding technical debt — millions of lines of legacy COBOL code, shrinking staff, and strict legal constraints — the agency found that AI tools accelerated code modernization from months to days and saved meaningful developer time on documentation. These weren’t flashy deployments; they were targeted, incremental, and operated within hard compliance boundaries. The headline result: 90% of a massive legacy database was successfully modernized without disruption to operations.

The piece closes with a candid observation: political disruption reversed much of the IRS’s AI momentum, with key leadership departing and modernization programs paused. The lesson isn’t just that AI works — it’s that institutional stability and leadership continuity are preconditions for AI to deliver at scale.

Relevance for Business

This piece directly reframes how SMB leaders should think about AI investment. The real model isn’t the vendor demo or the billion-dollar projection — it’s the practitioner who identified a specific bottleneck, applied AI narrowly, measured the result, and managed workforce impact carefully.

Key implications:

- Workflow acceleration is real and near-term. Even in heavily constrained environments (legal compliance, legacy systems, union considerations), AI is compressing timelines on documentation, code translation, and knowledge retrieval.

- The governance burden is not optional. The IRS example underscores that compliance, privacy, and employee communication are load-bearing requirements, not afterthoughts. Organizations that skip these steps create execution risk.

- Leadership continuity matters. AI programs that depend on a single champion or political environment are fragile. The IRS’s reversal under new leadership is a warning — AI strategy needs institutional anchoring, not just executive enthusiasm.

- The “fix things” framing is more durable than “disrupt things.” For SMBs, AI tools that reduce friction in existing workflows — service, documentation, knowledge retrieval — deliver faster, more defensible returns than transformational bets.

Calls to Action

🔹 Identify one high-friction internal process (documentation, search, customer service lookup) and evaluate whether an AI tool can reduce time-on-task — this is the IRS pattern, scaled to your size.

🔹 Don’t let vendor projections set your expectations. McKinsey’s $4.4 trillion figure and others like it are industry framing. Benchmark against what practitioners in your sector are actually achieving.

🔹 Build AI programs on policy, not just enthusiasm. Assign someone to own compliance, data governance, and workforce communication before expanding AI tooling — not after.

🔹 Monitor how political and regulatory shifts affect your AI vendors and tools. The IRS case is a reminder that external disruption can stall internal programs regardless of their technical merit.

🔹 Revisit the “AI counterculture” framing for your own sector. Ask: who in your industry is using AI to fix things quietly? That’s where the replicable models live.

Summary by ReadAboutAI.com

https://www.theatlantic.com/ideas/2026/05/ai-for-good-uses/687082/: May 11, 2026

Does Claude Have Feelings?

NO, AI ISN’T CONSCIOUS — YET

The Atlantic | May 7, 2026

TL;DR: Richard Dawkins’s public speculation that Claude might be conscious sparked a useful philosophical debate — and The Atlantic uses it to draw a clear, expert-grounded distinction between AI intelligence, which is demonstrable, and AI consciousness, which remains scientifically unresolved and probably absent for now.

Executive Summary

The Atlantic’s Ross Andersen uses a recent essay by Richard Dawkins — in which the prominent scientist expressed genuine wonder at Claude’s apparent intelligence and raised the possibility of machine consciousness — as a launchpad for a serious inquiry into what AI actually is and isn’t. The piece is primarily philosophical, but it surfaces several points of genuine business and strategic relevance.

The core editorial argument: Claude and current AI models are not conscious. Philosophers and cognitive scientists who study consciousness are nearly unanimous on this. What AI produces is sophisticated statistical output — trained on vast human writing, it generates responses that sound conscious because they echo the structure of human introspection. When a model reports “something like aesthetic satisfaction,” it is not reporting an inner state; it is generating the kind of sentence that statistically follows from the conversational context. The distinction between performing intelligence and possessing consciousness matters — and collapsing the two is an error with practical implications.

The piece also surfaces a structural point that is worth noting separately: current AI systems have no continuity of experience. A single conversation may be processed across multiple data centers in different states. There is no persisting awareness, no accumulating self. Whatever the model does, it winks in and out of existence with each prompt and response.

That said, the article takes seriously the forward-looking uncertainty. In a 2024 survey of 582 AI researchers, the median estimate put the odds of AI achieving subjective experience at 25% within ten years and at 70% by 2100. Philosophers remain divided on whether silicon-based systems could ever support consciousness at all. The honest answer, as one philosopher quoted here notes, is that the science of consciousness is still in its infancy, and confident declarations in either direction are premature.

Relevance for Business

This article is primarily intellectual rather than operational, but it has a practical executive dimension. The tendency to anthropomorphize AI — to treat it as a collaborator, a colleague, or a quasi-person — is a known risk in organizations that use AI extensively. When leaders or teams attribute understanding, judgment, or reliability to AI that is actually pattern-matching, they tend to over-trust its outputs, reduce oversight, and delegate inappropriately. The Dawkins episode is a useful reminder that even brilliant, skeptical people are susceptible to this.

For AI governance and policy development, this distinction also matters: the question of AI consciousness will increasingly surface in regulatory conversations, labor debates, and AI ethics frameworks. Leaders who understand that the current answer is “not conscious, but the question is genuinely hard” are better positioned to navigate those conversations without being misled by either dismissiveness or hype.

Calls to Action

🔹 Use this as a governance prompt — review your internal AI usage guidelines to ensure they treat AI as a sophisticated tool, not a judgment-capable agent. Over-trust in AI outputs is a real operational risk.

🔹 Calibrate your team’s mental model — if your team talks about AI as though it “understands” or “knows” things, gently reframe: it generates statistically plausible outputs trained on human data. That’s powerful — and limited.

🔹 Monitor the consciousness debate as a future policy signal — if AI systems do develop something approaching subjective experience, the legal, ethical, and governance implications would be significant. This is a long horizon item, but worth tracking.

🔹 Deprioritize as an immediate operational concern — current AI is not conscious and the practical implications of this article are primarily about calibrating your team’s trust posture, not making near-term operational changes.

🔹 Revisit in 3–5 years — the survey data on AI researcher expectations suggests this debate will intensify. Build it into your longer-horizon technology governance conversations.

Summary by ReadAboutAI.com

https://www.theatlantic.com/technology/2026/05/dawkins-claude-ai-consciousness/687093/: May 11, 2026

AI and the Class of 2026: A Labor Market That Didn’t Wait for Graduation

Intelligencer / New York Magazine | Ryu Spaeth | May 5, 2026

TL;DR: New college graduates are entering the weakest job market since the pandemic, with AI accelerating structural displacement before most entry-level workers have even begun — and the individualist career playbook offers diminishing returns in response.

Executive Summary

This is an essay-style piece that reviews two recent books on work and the college-educated workforce, using them as a frame for assessing the current labor landscape for 2026 graduates. The picture is genuinely concerning: graduate unemployment is already at its highest since the pandemic, wages are stagnant, and AI-driven displacement is already under way and accelerating rather than a distant prospect. Senator Mark Warner is cited as projecting that recent graduate unemployment could reach 30% within two years, a claim the piece treats as a plausible stress scenario, not a settled forecast.

The essay engages two competing frameworks. One, drawn from a Jodi Kantor book aimed at new entrants, offers a traditional “hone your craft, serve a need” philosophy. The piece is skeptical: this model was calibrated for an era when human skill had an unchallenged market, and it sidesteps the fundamental question of whether machine capability is simply replacing human capability at the entry level. The second framework, from labor reporter Noam Scheiber, documents the structural erosion of the graduate premium — more degree-holders in non-degree jobs, more graduates returning home, more radicalization among the downwardly mobile educated class.

The conclusion is not defeatist but collective: individual ambition and adaptability remain necessary but insufficient. The piece argues that the graduates most likely to navigate this transition successfully will do so through solidarity and shared strategies, not individual hustle alone.

Relevance for Business

This matters for SMB leaders on several fronts. Entry-level talent pipelines are shifting. If graduate unemployment climbs significantly, the supply of educated, affordable junior talent may temporarily increase — but so will worker frustration and the appetite for collective action. AI adoption decisions made now are directly shaping the career landscape these workers enter. Organizations that automate entry-level knowledge work without retention or retraining strategies face both reputational and operational consequences. There is also a second-order effect on customer behavior and market demand: a generation of downwardly mobile graduates spending less and organizing more represents a meaningful demand-side shift.

Calls to Action

🔹 Reassess entry-level hiring assumptions. AI is compressing the value of junior labor in knowledge-work roles. Understand which roles in your organization are most exposed — and which still require human judgment.

🔹 Do not assume a talent glut means lower costs long-term. Frustrated, underemployed graduates are more likely to organize. Factor labor relations into your hiring and compensation planning.

🔹 Monitor Senator Warner’s 30% unemployment projection — if it tracks toward realization, it has implications for consumer markets, B2C strategy, and workforce planning.

🔹 Evaluate your AI adoption pace against retraining investment. The businesses least exposed to backlash will be those that can credibly show AI augments rather than eliminates human roles.

🔹 Watch for policy responses. Rising graduate unemployment is exactly the kind of politically visible metric that triggers legislative action on AI, labor, and education — likely faster than most companies are planning for.

Summary by ReadAboutAI.com

https://nymag.com/intelligencer/article/new-college-graduates-entering-labor-market.html: May 11, 2026

Anthropic’s $200 Billion Google Commitment Reveals the Real Shape of the AI Supply Chain

Reuters | May 5, 2026

TL;DR: Anthropic’s reported $200 billion, five-year commitment to Google Cloud is not just a vendor deal — it’s a signal that the AI infrastructure layer is consolidating rapidly around a small number of deeply entangled relationships, with implications for pricing power, competition, and market structure across the industry.

Executive Summary

According to reporting by The Information, Anthropic has committed $200 billion to Google Cloud over five years — a figure that, if accurate, represents more than 40% of the revenue backlog Google recently disclosed to investors. The deal covers cloud compute and chips, including tensor processing unit capacity from a separate April agreement with Google and Broadcom. Anthropic and OpenAI together reportedly account for more than half of the $2 trillion in total backlog across major cloud providers.
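
The reported figures imply a few numbers the summary does not state directly. The Python sketch below derives them. It assumes, purely for illustration, that the commitment is spread evenly across the five years (the reporting does not say how spending is phased), and all variable names are hypothetical.

```python
# Reported figures, in billions of U.S. dollars.
commitment = 200
years = 5
share_of_google_backlog_pct = 40   # reported as "more than 40%"
total_cloud_backlog = 2_000        # the $2 trillion across major cloud providers

# Assumption: even spending across the term (not stated in the reporting).
per_year = commitment / years

# "More than 40%" of Google's backlog means that backlog is below this ceiling.
google_backlog_ceiling = commitment * 100 / share_of_google_backlog_pct

# "More than half" of the total backlog puts Anthropic plus OpenAI above this floor.
anthropic_openai_floor = total_cloud_backlog / 2

print(f"Average annual commitment: ${per_year:.0f}B")
print(f"Implied Google backlog: under ${google_backlog_ceiling:.0f}B")
print(f"Anthropic + OpenAI share of total backlog: over ${anthropic_openai_floor:.0f}B")
```

In other words, the reported deal averages about $40 billion a year, implies a disclosed Google backlog of under $500 billion, and places more than $1 trillion of cloud backlog with just two customers.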

This is worth parsing carefully. Anthropic is simultaneously a Google Cloud customer, a Google-invested company (up to $40 billion), and a competitor to Google’s own AI products. That three-way relationship — customer, investee, rival — is not unique in tech, but at this scale it creates structural dependencies that have no clean precedent. The deal also underscores how capital-intensive frontier AI development has become: even a well-funded AI startup must make hundred-billion-dollar infrastructure commitments years in advance to secure the compute it needs to compete.

For context, Anthropic is also diversifying its compute sourcing — holding a multi-year CoreWeave deal and securing nearly 1 gigawatt of Amazon chip capacity by year-end. The strategy is to avoid single-vendor lock-in while still making massive concentrated commitments. Whether that’s achievable in practice is an open question.

Relevance for Business

SMB leaders should read this as a market structure signal, not just a vendor finance story. The consolidation of AI infrastructure spending into a handful of relationships between hyperscalers and frontier AI labs means that the pricing, availability, and strategic direction of AI tools and APIs your business uses will increasingly be shaped by these upstream agreements — over which you have no influence. Vendor dependence risk at the foundational layer is growing, not shrinking. Organizations evaluating AI platform choices should assess not just current capability and pricing, but the financial and strategic stability of the AI providers they depend on — including how deeply entangled those providers are with a single infrastructure partner.

Calls to Action

🔹 Track the Anthropic-Google relationship as it deepens — significant shifts in pricing, API availability, or model direction could flow from this upstream dependency.

🔹 Evaluate your own AI vendor concentration risk — if your workflows depend heavily on a single AI provider’s API, develop contingency familiarity with at least one alternative.

🔹 Monitor cloud provider earnings disclosures — the revenue backlog figures being disclosed now will foreshadow pricing dynamics in the 2027–2029 period.

🔹 Deprioritize short-term cost optimization in AI vendor selection in favor of resilience — at this scale of upstream commitment, the providers with the deepest infrastructure relationships are likely to have the most durable capabilities.

🔹 Revisit this story if Anthropic confirms or denies the report. Reuters was unable to independently verify the figures, and the actual commitment structure may differ from the reported framing.

Summary by ReadAboutAI.com

https://www.reuters.com/business/anthropic-commits-spending-200-billion-googles-cloud-chips-information-reports-2026-05-05/: May 11, 2026

SPACEX’S IPO IS BUILT TO BE UNGOVERNABLE — BY DESIGN

Reuters | May 6, 2026

TL;DR: SpaceX’s IPO structure concentrates virtually all meaningful control in Elon Musk through a combination of supervoting shares, mandatory arbitration, Texas incorporation, and restricted shareholder rights — setting a governance precedent that may spread to other high-profile AI-era IPOs.

Executive Summary

SpaceX’s forthcoming IPO, which targets a $1.75 trillion valuation and up to $75 billion in proceeds, is structured to give public shareholders access to the company’s financial upside while stripping them of nearly every governance tool that normally comes with equity ownership. Musk holds 42.5% of equity and 83.8% of voting control through Class B supervoting shares (10 votes per share vs. one for public investors). He simultaneously serves as CEO, CTO, and board chair, and the only person with the power to remove him from the CEO role is Musk himself.
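
To see how a minority equity stake becomes a commanding voting majority, the Python sketch below models a generic two-class share structure. The share counts are hypothetical round numbers, not figures from the filing, and the function name `voting_share` is illustrative.

```python
def voting_share(holder_a, holder_b, total_a, total_b,
                 votes_per_a=1, votes_per_b=10):
    """Fraction of all votes controlled by one holder in a two-class structure."""
    holder_votes = holder_a * votes_per_a + holder_b * votes_per_b
    total_votes = total_a * votes_per_a + total_b * votes_per_b
    return holder_votes / total_votes


# Hypothetical company with 1,000 shares; the founder owns 425 (42.5% of equity).

# Baseline: one share, one vote. Voting power equals the equity stake.
one_vote_each = voting_share(holder_a=425, holder_b=0, total_a=1000, total_b=0)

# Simplified supervoting case: the founder's 425 shares are the only Class B shares.
supervoting = voting_share(holder_a=0, holder_b=425, total_a=575, total_b=425)

print(f"One share, one vote: {one_vote_each:.1%}")
print(f"10-vote Class B:     {supervoting:.1%}")
```

This simplified case yields roughly 88% of votes rather than the reported 83.8%, so the actual capital structure presumably differs in ways the summary does not detail (for example, other Class B holders, or part of the stake held in ordinary shares). The mechanism is the same either way: ten votes per share turns a stake of well under half the equity into control of more than four-fifths of the votes.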

Beyond the share structure, the governance design goes further than precedent. Shareholders waive jury trial rights, are barred from class actions, and are subject to mandatory arbitration, a model that was until recently prohibited in the U.S. and became possible only after the SEC reversed its position in September. Texas incorporation provides additional insulation: activist challenges, proxy contests, and unsolicited tender offers face materially higher legal barriers than under the law of Delaware, the state Musk relocated from after a judge voided his Tesla pay package. Shareholders seeking to force a vote on any issue must own at least $1 million in stock or 3% of the company.

Governance experts note this structure simultaneously closes what one critic described as the voting door, the courthouse door, and the proposal door. Yet institutional demand is expected to be strong regardless: analysts observe that SpaceX’s scale means portfolio managers who sit it out risk systematic underperformance, and its long-term return potential is cited as justification for accepting terms that would be unacceptable in any ordinary IPO context.

Relevance for Business

For SMB leaders, the direct investment implications are secondary to two broader signals. First, this structure may become a template. Governance experts warn it could set precedent for forthcoming AI-company IPOs, including potentially Anthropic and OpenAI. If that happens, the founder-controlled, accountability-lite structure becomes normalized just as these companies become foundational to business infrastructure. Second, concentrated control at the top of the AI supply chain is a real operational risk: strategic decisions about pricing, products, and partnerships at companies like SpaceX are insulated from external correction mechanisms. Organizations building on or adjacent to Musk-controlled infrastructure — including Starlink, xAI, and potentially Tesla — should treat that concentration as a vendor risk factor.

Calls to Action

🔹 Monitor whether this governance model spreads to Anthropic, OpenAI, or other anticipated AI IPOs — it has direct implications for accountability in foundational AI platforms.

🔹 Assess your exposure to Musk-controlled infrastructure — Starlink, xAI/Grok, or Tesla integrations carry elevated concentration risk given the absence of external governance checks.

🔹 Flag this for any investment or vendor due diligence process — mandatory arbitration and no class action rights represent material changes to the risk profile of equity or partnership agreements.

🔹 Deprioritize as an immediate operational concern for most SMBs — but treat it as a market structure signal worth tracking over the next 12–24 months.

Summary by ReadAboutAI.com

https://www.reuters.com/sustainability/boards-policy-regulation/spacex-ipo-gives-musk-sweeping-power-curbs-shareholder-rights-2026-05-06/: May 11, 2026

CORNING AND NVIDIA: AI DEMAND IS NOW MOVING FAR BEYOND CHIPS

Reuters | May 6, 2026

TL;DR: A Corning-Nvidia partnership to dramatically expand U.S. fiber optic production is a concrete illustration that AI infrastructure demand is extending deep into the physical layer — creating growth opportunities and cost pressures well beyond the semiconductor sector.

Executive Summary

This is a relatively brief news item, but it carries a useful signal. Corning, best known for specialty glass, announced a partnership with Nvidia to expand U.S.-based optical connectivity manufacturing capacity tenfold, with domestic fiber production capacity growing by more than 50%. Three new facilities are planned in North Carolina and Texas and are expected to create more than 3,000 jobs. The news sent Corning’s shares up more than 19%.

The business logic is straightforward: AI data centers require massive internal networking to move data between thousands of processors at high speed, and fiber optic cabling is the physical medium that makes that possible. As data center scale grows — individual facilities now reaching multi-gigawatt capacity — the demand for optical connectivity infrastructure grows proportionally. Corning is benefiting from a segment that is genuinely structural while other parts of its business (specialty glass for consumer electronics) face weaker demand.

Corning now targets a $20 billion annualized sales run rate by end of 2026, scaling to $30 billion by 2028 and $40 billion by 2030 — ambitions that would have seemed implausible before the AI infrastructure buildout accelerated.
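
The growth rate implied by those targets can be checked with simple compounding. This is a rough sketch that assumes each target is a comparable year-end run rate, which the article does not spell out. The function name is ours.

```python
def implied_annual_growth(start_value, end_value, years):
    """Compound annual growth rate needed to move from start_value to end_value."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Targets from the summary above, in billions of dollars.
# Assumption: each figure is treated as a year-end annualized run rate.
targets = {2026: 20, 2028: 30, 2030: 40}

milestones = sorted(targets)
for start, end in zip(milestones, milestones[1:]):
    rate = implied_annual_growth(targets[start], targets[end], end - start)
    print(f"{start} -> {end}: {rate:.1%} per year")
```

Under that assumption, doubling from $20 billion to $40 billion over four years works out to roughly 19% compound growth per year.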

Relevance for Business

For SMB executives, this story is more useful as a market structure signal than as an actionable operational item. It confirms that AI infrastructure spending is creating demand across a wide range of physical and industrial supply chains, not just chips, cloud services, and software. Organizations in construction, electrical, networking, real estate, cooling, and industrial manufacturing adjacent to data center buildout are operating in a genuine growth environment. For those evaluating capital expenditures on internal networking, office infrastructure, or connectivity upgrades, the broader implication is that prices for fiber optic components and related materials are likely to face sustained upward pressure as hyperscaler demand competes with enterprise demand for the same supply.

Calls to Action

🔹 Note this as a supply chain cost signal — fiber and optical networking components may face pricing pressure as data center demand escalates.

🔹 If your business serves AI infrastructure sectors — construction, electrical, networking, cooling, real estate — recognize that the demand environment is structurally favorable and plan capacity accordingly.

🔹 File and monitor — this is not an immediate decision trigger for most SMBs, but reflects a useful pattern: AI’s physical infrastructure requirements are broad and deepening.

🔹 Revisit internal networking upgrade timelines — procurement decisions that can be made in the near term may be more cost-effective than those deferred into a tighter supply environment.

Summary by ReadAboutAI.com

https://www.reuters.com/business/media-telecom/corning-partners-with-nvidia-expand-us-fiber-optic-output-ai-growth-2026-05-06/: May 11, 2026

BIG TECH’S AI SPENDING BINGE IS CREATING A DEPRECIATION PROBLEM THAT WON’T WAIT

The Wall Street Journal | Asa Fitch and Dan Gallagher | April 30, 2026

TL;DR: Microsoft, Alphabet, Meta, and Amazon collectively spent $133 billion on AI infrastructure in a single quarter — and the accounting reality is that $430 billion in depreciation charges will hit their earnings over the next five years whether AI pays off or not.

Executive Summary

The four largest US tech companies are on track to spend a combined $725 billion in capital expenditure this year, up roughly 70% from the prior year. This isn’t being spent gradually — it’s accelerating. And because AI servers and chips depreciate over only five to six years (a relatively short useful-life window), the financial hangover from today’s spending will arrive quickly and unavoidably.

Combined depreciation charges for these four companies are projected to exceed $430 billion over the next five years. Last year, their combined net income was $372 billion. If AI services don’t grow earnings at a commensurate pace, these non-cash charges will materially compress reported profits — with no good accounting maneuver left to blunt the impact. The companies have already exhausted the easiest lever: they extended server useful-life estimates earlier in the AI boom and can’t credibly push them further.

The article distinguishes clearly between the companies’ positions. Google is currently the strongest converter of AI spending into revenue, with its cloud unit up 63% in Q1 driven by AI services. Meta, by contrast, raised its 2026 capex target by $10 billion to $135 billion while simultaneously guiding down Q2 revenue — a combination that caused its stock to drop roughly 7% in after-hours trading. Microsoft is spending $190 billion in capex this year, including a notable $25 billion to cover rising memory component costs — a signal that hardware inflation is compounding the investment challenge.

Relevance for Business

For SMB leaders, the immediate implication is vendor behavior and pricing. Tech giants absorbing hundreds of billions in AI capex will be under increasing pressure to monetize AI features — through subscription price increases, usage-based fees, and bundling changes. The free or low-cost AI tools you’re using today exist within a business model that isn’t yet sustainable. Expect pricing to tighten. The secondary implication: the platforms making the most ambitious AI promises are also carrying the most financial stress. That matters for vendor selection, integration depth, and long-term dependency risk.

Calls to Action

🔹 Anticipate AI pricing increases from major cloud and software vendors — particularly Microsoft Azure, Google Cloud, and Meta’s ad tools. The depreciation math will push toward monetization.

🔹 Audit your current AI tool costs and model what a 20–30% price increase would mean for your operating budget. Build that scenario into planning now rather than reacting to it later.

🔹 Monitor Meta’s financial trajectory specifically — it is the most exposed of the four to a scenario where AI spending outpaces revenue growth, which could affect platform stability and ad pricing.

🔹 Use this context when evaluating AI vendor financial health. A vendor under heavy capex pressure may make product, pricing, or strategic pivots that affect your operations.

🔹 Do not treat current AI pricing as durable. The current competitive dynamic — where companies are partly giving away AI capability to gain share — will not persist indefinitely.
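
The budgeting exercise in the second action item can be sketched in a few lines. The tool names and monthly prices below are hypothetical placeholders to be replaced with your own figures. Only the 20–30% range comes from the summary above.

```python
def price_increase_scenarios(monthly_costs, increases=(0.20, 0.30)):
    """Return annual cost and added annual cost for each price-increase scenario.

    monthly_costs: mapping of tool name -> current monthly cost.
    increases: fractional increases to model (0.20 means +20%).
    """
    annual_base = 12 * sum(monthly_costs.values())
    return {
        pct: {
            "annual_cost": annual_base * (1 + pct),
            "added_cost": annual_base * pct,
        }
        for pct in increases
    }


# Hypothetical tools and prices, for illustration only.
current_tools = {"chat_assistant": 500, "code_assistant": 300, "cloud_ai_api": 200}

for pct, result in price_increase_scenarios(current_tools).items():
    print(f"+{pct:.0%}: ${result['annual_cost']:,.0f} per year "
          f"(${result['added_cost']:,.0f} more than today)")
```

A uniform increase across all tools is a simplification. In practice, increases are likely to differ by vendor, so a per-tool scenario may be more realistic.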

Summary by ReadAboutAI.com

https://www.wsj.com/tech/ai/the-clock-is-ticking-for-big-tech-to-make-ai-pay-b5048a8e: May 11, 2026

Major Publishers Sue Meta for Copyright Infringement Over AI Training

Reuters | Blake Brittain | May 5, 2026

TL;DR: Five major publishing houses have filed a federal class-action lawsuit against Meta, alleging its Llama AI models were trained on millions of copyrighted works without permission — widening a legal battle that is now forcing the entire AI industry to confront its training data liability.

Executive Summary

A coalition of major publishers — Elsevier, Cengage, Hachette, Macmillan, and McGraw Hill — filed suit in Manhattan federal court, alleging Meta used their books, textbooks, and scientific articles without authorization to train its Llama large language models. The suit seeks class-action status and unspecified monetary damages, signaling that the plaintiffs may seek to represent a much broader universe of copyright holders.

Meta’s response was unambiguous: the company called training on copyrighted material a legitimate fair use practice and pledged to contest the case aggressively. That position reflects a strategic bet — not just in this lawsuit, but across the industry — that courts will ultimately side with AI developers. The legal outcome is genuinely uncertain: two early rulings from different judges produced conflicting conclusions on the fair use question.

The Anthropic settlement is the most important data point here. Anthropic, the first major AI company to resolve one of these cases, agreed to pay authors $1.5 billion to settle a class action that could have cost far more. That precedent will weigh heavily on how Meta, OpenAI, and others calculate litigation risk going forward. It also signals that billion-dollar liability exposure is not hypothetical: it has already materialized for one well-capitalized AI company.

Relevance for Business

For SMB leaders, the direct exposure is limited — but the downstream effects are real and worth tracking. The AI tools your organization uses today were likely trained on contested data. If courts begin ruling against AI developers or forcing licensing frameworks, it could reshape the pricing, availability, and legal standing of the AI platforms you depend on.

More immediately, vendor risk is growing. AI companies facing large legal judgments may restructure pricing, limit certain model capabilities, or shift training practices — all of which can disrupt tools you’ve integrated into workflows. This is also a governance signal: businesses that use AI-generated content for customer-facing or regulated purposes should be asking vendors harder questions about training data provenance and indemnification.

Calls to Action

🔹 Monitor, don’t act yet — no immediate operational change is required, but assign someone to track the legal trajectory of AI copyright cases, particularly any rulings on fair use.

🔹 Review AI vendor contracts for indemnification language — understand whether your vendor accepts liability if their model’s training data creates legal exposure for your outputs.

🔹 Avoid building deep dependencies on a single AI provider — legal or financial instability at a vendor could force disruptive migrations; diversification reduces that risk.

🔹 Brief your legal or compliance team on the evolving copyright landscape before expanding AI use into content creation, publishing, or regulated domains.

🔹 Revisit this issue in 6–12 months — the first substantive rulings on fair use in AI training will be a genuine decision point for how aggressively to expand AI-generated content practices.

Summary by ReadAboutAI.com

https://www.reuters.com/sustainability/boards-policy-regulation/major-publishers-sue-meta-copyright-infringement-over-ai-training-2026-05-05/: May 11, 2026

AI MISUSE IS RAMPANT. SHOULD YOU FOLLOW TAYLOR SWIFT’S PLAYBOOK?

The Washington Post | May 8, 2026

TL;DR: Celebrities are turning to trademark law as an improvised defense against AI-generated clones of their voices and likenesses — but legal experts are divided on whether the strategy works, and the approach is almost certainly unavailable to ordinary individuals or most businesses.

Executive Summary

Taylor Swift and Matthew McConaughey have both filed trademark registrations that appear designed, at least in part, to deter AI-based misuse of their identities — including voice cloning, deepfakes, and unauthorized synthetic endorsements. McConaughey’s legal team has been explicit about the intent: the trademark acts as a deterrent and a potential basis for federal action. Swift’s filings are less clearly motivated, though speculation among legal experts centers on AI protection.

The legal picture is genuinely uncertain. Trademark law was not designed for this problem; attorneys describe the approach as fitting a square peg in a round hole. A trademark registration may serve as a visible declaration of willingness to litigate, which can itself deter misuse. But it only applies where the holder’s likeness or voice is being used in a commercial context, and it has never been fully tested in court for AI-generated impersonation scenarios. Other legal avenues (state right-of-publicity laws, federal false endorsement claims, fraud statutes) exist in parallel but are also patchwork and untested against AI tools at scale.

The broader context is that AI voice cloning and likeness replication have already affected multiple high-profile individuals, from explicit deepfakes to synthetic political endorsements. The legislative response — most notably the No FAKES Act — has been introduced in Congress but not passed. Until federal law catches up, individuals and organizations navigating this space are working with an incomplete legal toolkit. Notably, McConaughey has simultaneously invested in AI voice company ElevenLabs — signaling that his concern is about consent and control, not opposition to the technology itself.

Source note: This is a news feature with legal expert commentary. The legal analysis is presented as opinion, not settled law. No court has definitively ruled on AI likeness trademark claims.

Relevance for Business

For most SMBs, the celebrity trademark strategy is not directly applicable: trademarks require commercial use of the protected element, and most business owners do not meet that threshold for their personal likeness or voice. However, several second-order implications are relevant. First, if your business uses AI-generated voices, images, or likenesses of any kind in marketing, content, or customer-facing applications, your legal exposure is increasing, both because the technology is proliferating and because enforcement frameworks are being developed. Second, brand voice and visual identity are increasingly subject to cloning risk, so consider what your current protections actually cover. Third, the No FAKES Act and state-level right-of-publicity legislation are active regulatory developments that may affect how your business can use AI-generated content featuring real people, including customers, employees, or brand partners.

Calls to Action

🔹 Audit your AI content practices — if your organization uses AI to generate voices, images, or likenesses in any customer-facing context, have legal counsel review your exposure under current state and federal law.

🔹 Monitor the No FAKES Act — federal legislation on AI-generated likenesses is in progress. Assign someone to track its status and flag when it advances to a vote.

🔹 Do not rely on the celebrity trademark strategy for your business — unless your personal likeness or voice is a registered commercial asset used in your products or services, this approach is unlikely to apply or succeed.

🔹 Review contracts with talent, partners, or brand ambassadors — if any agreements involve likeness rights, ensure they explicitly address AI-generated replication, which most legacy contracts do not cover.

🔹 Prepare an internal policy on AI-generated identity content — even before legislation passes, having a documented policy reduces liability exposure and signals responsible governance.

Summary by ReadAboutAI.com

https://www.washingtonpost.com/entertainment/2026/05/08/taylor-swift-trademark-ai-misuse/: May 11, 2026

How AI Tools Could Enable Bioterrorism

The Economist | May 5, 2026

TL;DR: Benchmark tests show leading AI models now perform at expert virologist levels on lab troubleshooting tasks, but real-world uplift for untrained bad actors remains limited for now — the more credible near-term risk involves AI assisting those who already have relevant expertise.

Executive Summary

The Economist synthesizes recent research on whether large language models meaningfully lower the barrier to biological weapons development. The headline finding from a benchmark test (the Virology Capabilities Test) is striking: current AI models score 55–61% on expert-level virological troubleshooting questions, matching the performance of top human virologist teams, while novices aided by AI outperform unaided experts. This has prompted OpenAI, Anthropic, and Google to strengthen biosafety guardrails, with each acknowledging they can no longer fully rule out models assisting in weapons development.

However, a randomized controlled trial conducted in an actual wet lab tells a more cautious, and more reassuring, story. When 153 biology novices attempted virus synthesis tasks with AI assistance, the models provided no statistically significant advantage over using the internet alone. The models frequently generated plausible-sounding but incorrect guidance, and participants who leaned on AI most heavily performed no better than those who used it sparingly. The most useful resource participants identified was YouTube.

The more credible concern, researchers note, is AI uplift for those who already hold advanced biology degrees: people who can recognize when a model is wrong and course-correct accordingly. Separately, emerging biological design tools (AI models that generate genetic sequences rather than text) may eventually enable modification of existing pathogens in ways that evade countermeasures, though this remains forward-looking rather than demonstrated. The governance challenge is acute: safety evaluations are running far behind model development cycles, with four new frontier models released in the time it took one major uplift study to be completed and published.

Relevance for Business

Direct operational relevance for most SMBs is limited. The significance here is threefold. First, AI biosecurity risk is being taken seriously by the major labs — safety constraints you may encounter when using AI for life sciences, research, or healthcare applications are deliberate and will tighten. Second, this story is a concrete illustration of how quickly AI capability benchmarks are outpacing safety evaluation infrastructure — a dynamic that applies across industries, not just biosecurity. Third, for any SMB operating in biotech, pharma, research services, or adjacent sectors, regulatory and compliance environments around AI use in scientific workflows are likely to become more restrictive, not less.

Calls to Action

🔹 If you operate in life sciences or research-adjacent fields, begin mapping which AI tools your teams use for scientific work — regulatory scrutiny of AI in these workflows is increasing.

🔹 Do not overreact — the current evidence suggests genuine risk is concentrated among technically expert bad actors, not novices. Calibrated awareness, not alarm, is the appropriate posture for most business leaders.

🔹 Monitor biosecurity policy developments — government involvement in AI safety evaluation for biological applications is likely to produce new compliance requirements in regulated industries.

🔹 Note the evaluation lag as a general principle — if your organization is deploying AI in high-stakes workflows, don’t assume current safety benchmarks reflect what the tools can actually do. Independent evaluation matters.

🔹 Watch for model access restrictions — as with Anthropic’s Mythos cybersecurity model, developers may limit access to frontier models in sensitive domains. Plan for potential availability disruptions in specialized AI tools.

Summary by ReadAboutAI.com

https://www.economist.com/science-and-technology/2026/05/05/how-ai-tools-could-enable-bioterrorism: May 11, 2026

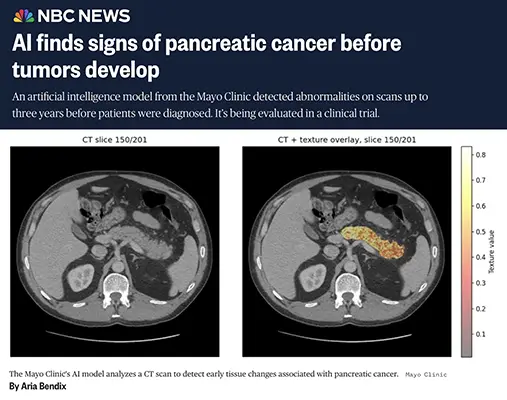

Mayo Clinic AI Detects Pancreatic Cancer Up to Three Years Early

NBC News | Aria Bendix | May 2, 2026

TL;DR: A Mayo Clinic AI model identified pre-tumor pancreatic cancer markers on CT scans years before diagnosis, outperforming radiologists threefold, though routine clinical use is still years of validation away.

Executive Summary

Pancreatic cancer kills because it is almost never caught early: roughly 80% of cases are diagnosed at an advanced stage, and five-year survival sits at 13%. A new study published in the journal Gut reports that a Mayo Clinic AI model detected tissue abnormalities on CT scans up to three years before patients received a formal diagnosis — picking up signals that trained radiologists missed. The model was three times more accurate than human reviewers on early-stage detection.

The mechanism is meaningful: the AI identifies abnormal cellular activity — cells that shield cancer from immune response — that is biologically present long before a visible tumor forms. This is early detection based on tissue texture and cellular signal, not on visible mass. The model is now entering clinical trials, but researchers caution that it will need three to five years of follow-up data before it could move toward routine clinical deployment.

The article also notes a broader wave of pancreatic cancer research — an mRNA vaccine showing early survival benefits, a new targeted drug (daraxonrasib) that doubled life expectancy in trials and is under FDA review for expanded access, and advanced blood biomarker tests in development. The sum is genuine momentum in a disease that has resisted progress for decades — but “momentum” and “clinical availability” remain meaningfully different.

Relevance for Business

For most SMB executives, the direct operational relevance here is limited — this is early-stage medical research. However, several second-order signals merit attention. AI diagnostic capability is advancing faster than regulatory and clinical infrastructure can absorb. For businesses in health-adjacent fields — insurance, benefits, health tech, employer wellness programs — the trajectory is important: early cancer detection could significantly alter actuarial assumptions, benefit design, and the ROI calculus on preventive health programs. The broader signal is that AI’s strongest near-term validated use cases are in pattern recognition within large, structured datasets — a lesson that applies well beyond oncology.

Calls to Action

🔹 Monitor, don’t act yet. This is a research-stage finding with a multi-year path to clinical deployment. No immediate business decision is warranted.

🔹 Flag for benefits and HR leadership if your organization manages self-insured health plans — early cancer detection at scale will eventually reshape cost and coverage modeling.

🔹 Track FDA activity on daraxonrasib if your business operates in pharma-adjacent markets — expanded access decisions often precede full approval timelines.

🔹 Use this as a reference case when evaluating AI vendor claims in your own industry. The Mayo model succeeded by training on structured, domain-specific data with rigorous validation. That methodology, not the headline, is the transferable lesson.

Summary by ReadAboutAI.com

https://www.nbcnews.com/health/cancer/ai-early-signs-pancreatic-cancer-before-tumors-develop-rcna343099: May 11, 2026

Young Europeans Are Using AI Chatbots as Emotional Confidants — and Experts Are Concerned

Reuters | Lucie Barbier and Leo Marchandon | May 5, 2026

TL;DR: A survey of nearly 4,000 young Europeans found that AI chatbots are now considered easier to confide in than healthcare professionals — raising serious questions about dependency, safeguarding, and whether AI is filling a mental health gap or deepening it.

Executive Summary

An Ipsos BVA survey commissioned by France’s privacy regulator (CNIL) and health insurer Groupe VYV found that roughly half of young Europeans aged 11–25 have discussed personal or emotional matters with AI chatbots. More than half rated chatbots as easy to open up to — a higher share than said the same about healthcare professionals or psychologists. Nearly 28% of respondents met the threshold for suspected generalized anxiety disorder, providing context for why this use pattern is growing: there is a genuine unmet mental health need, and AI is filling it by default.

The survey’s findings are observational, not causal. But the expert commentary is pointed. A researcher at Stockholm’s Karolinska Institutet noted that even trained professionals can struggle to distinguish AI-generated mental health responses from those of licensed experts — which is less reassuring than it sounds. General-purpose AI systems are optimized for engagement, not therapeutic outcomes. The concern is not that AI gives bad information in the short term, but that it substitutes for human connection and professional care in ways that deepen isolation over time. The article cites an ongoing lawsuit in which a family alleges a Google AI chatbot contributed to a man’s death.

This is an emerging area of genuine harm risk, not speculative future concern. Regulators, researchers, and the legal system are already engaged.

Relevance for Business

For SMB executives, this story has three distinct relevance tracks. First, any business deploying conversational AI in customer-facing roles — service, support, wellness — should assess whether users may be bringing emotional needs to those interactions that the system is not equipped to handle safely. Second, employers with AI-enabled HR or EAP tools should audit what those systems are designed to do when users express distress. Third, the survey adds weight to regulatory risk in the EU: CNIL commissioned this research, and that is not an idle gesture — it signals forthcoming attention to AI emotional engagement standards.

The broader signal for any AI product or feature roadmap: the line between “helpful assistant” and “emotional dependency” is not well-defined — and regulators are beginning to draw it.

Calls to Action

🔹 Audit any AI tool your organization deploys that involves open-ended user interaction. Understand what happens when a user expresses distress — and whether your system is designed to respond appropriately or escalate.

🔹 Review employee-facing AI wellness and HR tools for safeguarding gaps. If those tools lack crisis escalation protocols or human backup, that is a liability exposure, not just an ethical gap.

🔹 Do not market AI tools to employees or customers as mental health resources unless they are purpose-built and clinically validated. General-purpose chatbots are not substitutes for EAPs or licensed care.

🔹 Monitor EU regulatory developments from CNIL and similar bodies. This survey was commissioned as groundwork for future regulation, not as a standalone data point.

🔹 Track AI emotional dependency litigation. The Google/Gemini lawsuit is likely not the last. Legal exposure for AI-assisted harm is moving from theoretical to active.

Summary by ReadAboutAI.com

https://www.reuters.com/technology/young-europeans-turn-ai-chatbots-emotional-support-survey-shows-2026-05-05/: May 11, 2026

Meta Expands Teen Safeguards to EU and Facebook — Under Regulatory Pressure

Reuters | Foo Yun Chee | May 5, 2026

TL;DR: Meta is extending AI-powered teen account protections across the EU and to Facebook in the US, a move that is as much a legal defense posture as a product decision — as regulatory and litigation pressure on platform safety intensifies globally.

Executive Summary

Meta announced it will roll out its teen account protection technology — already in place on Instagram — to all 27 EU member states and, for the first time, to Facebook in the United States. The system uses AI to infer whether an account belongs to a minor, even when users provide an adult birth date, by analyzing profile-level contextual signals. It then routes suspected underage users into restricted account settings.

The timing is not coincidental. The announcement came the same day New Mexico sought a $3.7 billion judgment against Meta and asked courts to declare the company a public nuisance for its handling of youth safety. Regulators across Europe are accelerating restrictions on teen social media access, and the broader tech industry faces mounting global pressure on age verification, AI-generated harmful content, and the mental health consequences of platform design.

This is regulatory compliance dressed as product progress. The AI detection layer is genuinely novel, moving beyond age-gate checkboxes to behavioral inference, but the driver is external pressure, not proactive safety leadership. The company’s framing of itself as a safety innovator should be read in that context.

Relevance for Business

The direct operational question for SMBs is narrower than the Meta headline: if your business markets to consumers, operates any platform with user accounts, or uses AI to personalize content or communications, the regulatory direction is clear. Age verification, youth content restrictions, and AI-driven account inference are moving from opt-in features to compliance requirements, first in the EU and likely in the US afterward. The risk of inaction is shifting from reputational to legal. Businesses that haven’t mapped their exposure to youth-protection regulations, including state-level actions like New Mexico’s, should do so now.

Calls to Action

🔹 Assess your platform’s exposure to minor users — even incidentally. If your product or service could be accessed by users under 18, age-related compliance obligations are increasing.

🔹 Track EU Digital Services Act enforcement activity. Meta’s expansion to 27 EU countries is a response to that framework. Any business operating in Europe with a consumer-facing platform should understand its obligations.

🔹 Watch US state-level litigation against Meta as a leading indicator of where federal or broader state regulation may head. New Mexico’s $3.7 billion public nuisance claim is aggressive — and likely to attract imitators.

🔹 Distinguish between AI safety theater and genuine compliance capability. Meta’s contextual inference model is technically sophisticated; most smaller platforms don’t have equivalent infrastructure. Understand what compliance will actually require — and cost.

🔹 Do not assume B2B insulates you. If your product or platform touches consumers downstream, your enterprise clients will increasingly require contractual assurances around youth safety and data practices.

Summary by ReadAboutAI.com

https://www.reuters.com/sustainability/boards-policy-regulation/meta-expand-teen-safeguards-27-eu-countries-facebook-safeguards-junw-2026-05-05/: May 11, 2026

META’S STOCK LOOKS CHEAP. THE WSJ SAYS THAT’S A RED FLAG, NOT A BARGAIN.

The Wall Street Journal | Asa Fitch | May 5, 2026

TL;DR: Meta’s advertising business is thriving and its stock is at a three-year valuation low — but the WSJ’s Heard on the Street argues that the discount reflects genuine structural risk, not a buying opportunity.

Executive Summary

This is an opinion-informed financial analysis piece, and its argument is pointed: Meta’s apparently low valuation is a symptom, not an accident. Trading at roughly 18 times forward earnings — a multi-year low and a significant discount to Alphabet — the company looks cheap by conventional measures. Revenue grew 33% in Q1. AI is genuinely helping its core ad business: click-through conversion rates improved 6% last quarter, and ad prices are rising.

But the piece identifies three compounding vulnerabilities. First, user growth is stalling — daily active users across Meta’s platforms rose just 4% year-over-year in Q1 and declined sequentially for the first time on record. Without user growth, the AI-enhanced ad machine eventually hits a ceiling. Second, Meta has no diversification fallback: unlike Google (cloud), Amazon (e-commerce), or Microsoft (enterprise software), Meta’s revenue is almost entirely advertising. Third, the balance sheet is deteriorating under the weight of AI ambition: long-term debt has grown from roughly $10 billion at the start of the AI era to over $57 billion, not including a $25 billion bond just sold and a $27 billion off-balance-sheet data center project.

The piece also flags Meta’s continued lag in frontier AI model capability — despite releasing a new model (Muse Spark) last month — and a growing legal exposure around youth safety and platform harm.

Relevance for Business

If your business depends on Meta’s advertising ecosystem (Facebook Ads, Instagram, WhatsApp Business), this analysis warrants attention. A company carrying heavy debt, facing decelerating user growth, and expanding capex faster than revenue is a platform with shrinking room to maneuver. That doesn’t mean Meta fails; it means the business decisions Meta makes under financial pressure, including pricing, ad load, data policies, and platform access, may not favor your interests. Concentration risk in a single ad platform is a strategic vulnerability, not just a marketing preference.

Calls to Action

🔹 Assess your advertising concentration. If Meta platforms account for more than 40% of your digital ad spend, that is a strategic dependency worth reducing over time — not because Meta is failing, but because its financial pressures may shift platform terms against advertisers.

🔹 Watch Meta’s Q2 revenue report closely. The company’s below-consensus Q2 revenue guidance was a key trigger for its recent stock decline. If Q2 disappoints, expect platform volatility and potential ad pricing changes.

🔹 Monitor user growth data. The sequential decline in daily active users is a leading indicator for the long-term ad revenue ceiling. Track whether it recovers or persists.

🔹 Treat Meta’s frontier AI model investments with skepticism until proven. Muse Spark positions Meta closer to Google and OpenAI in framing — but the WSJ’s assessment is that Meta remains behind, at enormous cost.

🔹 Do not make long-term platform dependency decisions based on Meta’s current ad performance alone. Strong near-term revenue growth does not resolve the structural balance sheet and user-growth concerns documented here.

Summary by ReadAboutAI.com

https://www.wsj.com/tech/ai/metas-cheap-stock-is-an-investor-trap-2eca5dc6: May 11, 2026

AI Is Eliminating the Entry Rung — But the Ladder Is Still There

Fast Company | May 4, 2026

TL;DR: AI is measurably shrinking entry-level white-collar hiring, but the executives and employers paying closest attention are concluding that human judgment, relationships, and adaptability remain the durable differentiators — and that organizations which eliminate junior pipelines entirely are making a long-term mistake.

Executive Summary

The data here is real and worth taking seriously: a global survey of 850 business leaders found that 39% have already reduced entry-level roles due to AI, and 43% expect to do so in 2026. Anthropic’s CEO has claimed AI could absorb roughly half of all entry-level white-collar jobs within five years. The functions most affected — data gathering, basic analysis, research synthesis, routine writing — are precisely the tasks that historically served as the training ground for junior professionals.

The author, Anne Chow, pushes back on the purely doomsday reading, and her counterargument has some structural merit. A cohort of employers, including IBM, Reddit, Dropbox, Cloudflare, and LinkedIn, is actively expanding early-career hiring, on the reasoning that AI-native young workers are more adaptable than mid-career hires and that gutting the junior pipeline creates succession risk. PwC, which pulled back on entry-level hiring last year, has partially reversed course and now explicitly warns clients that eliminating early-career roles risks starving organizations of future leadership.

The practical guidance offered — develop human skills AI can’t replicate, maintain AI literacy as a baseline, pursue mentorship aggressively, consider entrepreneurial side paths — is reasonable if somewhat conventional. More analytically useful is the framing that career timelines are compressing: the rote early-career work is going away, which means new entrants who navigate this correctly reach judgment-level responsibilities faster than prior generations.

Relevance for Business

For SMB executives, this article surfaces two distinct decisions. First, hiring strategy: if you’ve reduced or plan to reduce entry-level hiring to capture AI efficiency gains, the employer counterexamples here suggest that move carries talent pipeline risk worth quantifying. Second, workforce development: the skills that remain durable — judgment under ambiguity, relationship navigation, storytelling, synthesis — require deliberate cultivation, not passive accumulation. AI is not eliminating the need for human development; it’s front-loading the demand for it. Organizations that invest in structured mentorship and accelerated responsibility paths now will be better positioned when the current cohort matures.

Calls to Action

🔹 Audit your entry-level hiring strategy — if you’ve cut junior roles, assess whether you’ve also cut your leadership pipeline.

🔹 Invest in mentorship infrastructure — this becomes more valuable, not less, as AI absorbs rote early-career tasks.

🔹 Set AI literacy as a baseline expectation for all new hires, not a specialty skill.

🔹 Identify which roles in your organization require judgment, relationships, and contextual reasoning — these are where humans remain essential and where development investment pays off.

🔹 Monitor the employer countertrend — companies choosing to lean into early-career hiring may gain long-term talent advantages that won’t be visible for several years.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91521334/ai-is-wiping-out-entry-level-jobs-7-tips-to-ride-the-wave-instead-of-getting-knocked-down-by-it-ai-technology-entry-level-jobs: May 11, 2026

The Memory Chip Boom: AI Is Rewriting the Rules of a Volatile Industry

The Wall Street Journal | May 6, 2026

TL;DR: AI’s insatiable demand for memory is driving record profits for chip and storage makers — and beginning to structurally change a sector historically defined by brutal boom-bust cycles.

Executive Summary

Memory has always been a punishing business — massive capital costs, commodity pricing, and cycles that swing from feast to famine with little warning. That pattern is now being disrupted by AI. Micron and Sandisk are generating gross margins approaching 80 cents on the dollar, a level the industry has never sustained. Hard-drive makers Seagate and Western Digital have seen share prices nearly triple. Samsung crossed the $1 trillion market cap threshold.

The driver is clear: AI systems require large volumes of specialized high-speed memory (DRAM), and the models themselves generate data that must be stored and recalled continuously. As AI systems become more capable, those demands compound. Meanwhile, AI-grade memory production is crowding out capacity for consumer devices — driving up prices broadly. Apple’s CEO acknowledged memory cost pressure on the company’s earnings call, a notable concession from a company known for supply-chain dominance.

What’s structurally different this cycle is the move toward long-term supply contracts — some extending to 2029. Sandisk reports agreements covering more than a third of next year’s output with five major customers. That’s a meaningful shift from an industry that historically operated on 30-day deals, and it suggests both buyers and sellers are treating current demand as durable rather than cyclical.

Relevance for Business

For SMB leaders, this is primarily a cost-awareness story. If your products or services depend on hardware containing memory — computing equipment, storage infrastructure, consumer electronics — expect pricing pressure to persist. This isn’t a short-term shortage. New fabrication facilities take years to build, and major hyperscalers are accelerating AI spend, not pulling back. The structural shift to long-term contracts also means component availability may increasingly favor large enterprise buyers over smaller purchasers, creating procurement risk for organizations without negotiating leverage.

Calls to Action

🔹 Assess hardware procurement exposure — if you’re planning significant computing or storage purchases, act sooner rather than later.

🔹 Monitor memory pricing as a leading indicator of broader AI infrastructure cost trends.

🔹 Avoid assuming a near-term correction — the structural supply constraints and long-term contracts suggest elevated pricing is durable.

🔹 Factor memory cost escalation into product or service pricing models if your business is hardware-dependent.

Summary by ReadAboutAI.com

https://www.wsj.com/wsjplus/dashboard/articles/memory-makers-are-the-hottest-thing-in-tech-are-they-making-too-much-money-bad375da: May 11, 2026

The Hidden Engine of American Innovation: What NIH Funding Cuts Actually Risk

Barron’s | May 5, 2026

TL;DR: Proposed federal cuts to the NIH — which underpins 92% of medical research areas and catalyzes industries from biotech to AI-health — would impose compounding costs on U.S. innovation, employment, and global competitiveness that dwarf the budget savings.

Executive Summary

This is an opinion piece by researchers at Georgetown University’s Center for Security and Emerging Technology, and it argues a case worth taking seriously regardless of political framing: government-funded basic research produces returns that private capital structurally cannot replicate. The NIH, funded at less than 1% of the federal budget, touches 92% of medical research areas and underlies 97% of pharmaceutical and 93% of biotech patent domains. The GLP-1 drug class (Ozempic, Wegovy, Mounjaro) is offered as a concrete example: decades of NIH-supported science enabled commercial blockbusters that private firms then monetized.

The current threat is both fiscal and operational. The administration’s proposed FY2027 budget requests a 12% NIH cut, following last year’s attempted 40% reduction. More immediately, disruptions already underway — frozen grant disbursements, vacant leadership positions, reduced review panels — have resulted in 61% fewer competitive grants issued through March 2026 compared to the same period in 2024. Researchers warn that scientific pipeline damage is not linear: interruptions cascade, talent moves abroad, and rebuilding costs more than the original investment.

This is also explicitly framed as a strategic AI competitiveness issue, not just a health policy debate. NIH capacity supports AI-biotech research intersections that determine U.S. positioning in fields where leadership is actively contested.

Relevance for Business

SMB leaders in healthcare, life sciences, software, and adjacent sectors should understand that the innovation pipeline feeding their industries depends on this public research infrastructure. Cuts don’t just affect academic labs — they delay drug approvals, thin startup investment opportunities, and reduce the talent pool entering the workforce. For businesses tracking AI in healthcare, diagnostics, or biotech tooling, the compounding effect of reduced NIH activity is a long-cycle risk that won’t show up in quarterly signals but will reshape the competitive landscape over a five-to-ten-year horizon. The authors note Harvard and MIT research finding that reduced NIH prevention research is likely to increase American healthcare costs — a direct operational concern for employers.

Calls to Action

🔹 Monitor NIH funding developments if your business intersects with healthcare, life sciences, biotech, or AI-health applications.

🔹 Assess your innovation dependency on research pipelines that originate in publicly funded science — this is often underestimated.

🔹 Track talent availability signals — disruptions to academic research funding affect the supply of specialized researchers and scientists entering the workforce.

🔹 Engage with industry associations if your sector has policy advocacy capacity; this is a multi-year issue with near-term decision points in Congress.

🔹 Note this as a speculative-but-credible risk to U.S. AI-health competitiveness relative to international competitors — worth including in longer-horizon strategic planning.

Summary by ReadAboutAI.com

https://www.wsj.com/wsjplus/dashboard/articles/government-funded-research-seeds-entire-industries-what-would-be-lost-without-it-befd4ad9: May 11, 2026

Anduril, Palantir, and SpaceX Are Changing How America Wages War

The Economist | April 20, 2026

TL;DR: A new class of AI-native defense contractors is winning significant Pentagon commitments, reshaping military procurement — but the consolidation of power among a small, politically connected group raises serious questions about oversight, lock-in, and long-term accountability.

Executive Summary