ReadAboutAI.com Anniversary Week: Day 5 – Money, Spending and Return on Investment

A look back: relevant articles from the past year on the massive sums of money fueling AI development.

The AI Boom Became a Battle of Spending, Scale, and ROI

AI Economics & Market Power

The question that defined 18 months of AI coverage was never really about the technology. It was about the money — who was spending it, on what assumptions, and whether those assumptions were holding. When ReadAboutAI.com began tracking AI developments in late 2023, the dominant story was capability: models improving faster than anyone expected, market valuations climbing to reflect a technology that appeared to have no ceiling. By early 2025, a second story had emerged alongside it, quieter but more durable — a story about returns that were not arriving on schedule, costs that were rising faster than revenue, and a business case for AI that, at both the macro and firm level, was proving harder to verify than anyone had publicly acknowledged.

What the coverage consistently showed was a gap between two parallel economies. The first was the infrastructure economy — Nvidia’s valuation surge, hyperscaler capital expenditure commitments running into the hundreds of billions, data center buildouts stretching across the country. That economy was real, measurable, and concentrated in a small number of companies. The second was the productivity economy — the actual returns showing up in enterprise operations, firm margins, and national economic output. There, the evidence was fragmentary, contested, and in some cases running directly counter to the narrative that had justified the infrastructure spending in the first place. Goldman Sachs concluded that AI-related investment contributed close to nothing to U.S. GDP growth in 2025. A major MIT-affiliated report found that 95% of AI pilots had failed to scale or deliver measurable ROI. Harvard Business Review’s most recent survey found that the organizations achieving the highest returns were distinguished not by the sophistication of their technology, but by the rigor of their management discipline.

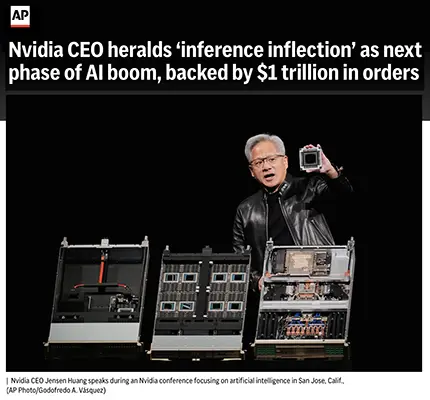

Nvidia CEO Heralds ‘Inference Inflection’ as Next Phase of AI Boom, Backed by $1 Trillion in Orders

AP News | March 16, 2026

TL;DR: Jensen Huang’s GTC keynote introduced “inference” as Nvidia’s next strategic bet — a pivot from building AI to running it efficiently at scale — and announced a projected $1 trillion chip order backlog, but Nvidia’s stock remains down 6% from pre-earnings levels, signaling market uncertainty about the sustainability of the current AI infrastructure buildout pace.

Source note: AP wire reporting from a corporate event. Huang’s forward-looking claims reflect executive interest in AI optimism; the $1 trillion backlog is a forecast, not a disclosed order book.

EXECUTIVE SUMMARY

The structural distinction matters: training AI models requires enormous one-time compute. Inference is the ongoing cost of running those models for every query or automated process. As AI moves from being built to being embedded in daily operations, inference compute grows continuously. Huang’s argument is that this market is entering a structural growth phase requiring specialized chips — and that Nvidia intends to lead it. The company struck a multi-billion dollar licensing deal with inference specialist Groq, acquiring its top engineers, to accelerate this transition.

Nvidia’s financial trajectory is real: revenue grew from $27B in 2022 to $216B last year; market cap is $4.5 trillion. But the stock is down 6% from pre-earnings levels, despite beating analyst forecasts — signaling that the market is pricing in uncertainty about demand sustainability, custom chip competition from Google and Meta, and U.S. export restrictions on China sales.

RELEVANCE FOR BUSINESS

Every AI tool your organization uses generates recurring inference costs — API calls, tokens, usage fees. As AI embeds into more workflows, these costs accumulate proportionally. Nvidia’s infrastructure bet signals meaningful pricing changes ahead, either through increased competition (favorable for buyers) or supply constraints during infrastructure transitions (a continuity risk). The China export restriction is a systemic supply chain risk for global AI services.

What to Monitor: The gap between Nvidia’s stellar earnings and its declining stock is a leading indicator worth watching. It suggests investors believe the current pace of infrastructure investment may slow, which would affect the availability and pricing of AI compute for the tools you use, with a lag of 12–24 months.

CALLS TO ACTION

▹ Audit AI usage costs by category — separate one-time training costs from recurring inference costs; track inference as an operational budget line, not a technology expense.

▹ Build cost-scaling assumptions into AI tool ROI models — if usage doubles, inference costs roughly double. Model this before deepening workflow integration.

▹ Monitor Google and Meta custom chip development — success here could shift AI service pricing dynamics over the medium term.

▹ Track U.S.-China AI chip trade restrictions — disruptions affect not just Nvidia but all AI services that depend on the compute infrastructure it enables.

▹ Treat the $1 trillion backlog projection as directional, not guaranteed — the market’s subdued response to strong earnings reflects genuine uncertainty. Do not base multi-year AI commitments on the assumption that current growth rates continue unchanged.
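The cost-scaling point in the calls to action above can be sketched in a few lines. This is a minimal illustration, assuming purely linear per-token pricing. The class `InferenceUsage`, the function names, and every figure (request volume, tokens per request, price per million tokens) are hypothetical placeholders, not numbers from the AP article or any vendor's price list.

```python
from dataclasses import dataclass


@dataclass
class InferenceUsage:
    """Hypothetical monthly usage profile for one AI-enabled workflow."""
    requests_per_month: int
    tokens_per_request: int
    price_per_million_tokens: float  # USD; placeholder, not a real vendor price


def monthly_inference_cost(usage: InferenceUsage) -> float:
    """Recurring monthly cost, assuming purely linear per-token pricing."""
    total_tokens = usage.requests_per_month * usage.tokens_per_request
    return total_tokens / 1_000_000 * usage.price_per_million_tokens


def project_costs(usage: InferenceUsage, multipliers: list[float]) -> dict[float, float]:
    """Monthly cost at each usage multiple (1.0 = today, 2.0 = usage doubles)."""
    return {
        m: monthly_inference_cost(
            InferenceUsage(
                requests_per_month=round(usage.requests_per_month * m),
                tokens_per_request=usage.tokens_per_request,
                price_per_million_tokens=usage.price_per_million_tokens,
            )
        )
        for m in multipliers
    }


# Illustrative baseline only: 50,000 requests a month at 2,000 tokens each.
baseline = InferenceUsage(
    requests_per_month=50_000,
    tokens_per_request=2_000,
    price_per_million_tokens=5.0,
)

for multiple, cost in project_costs(baseline, [1.0, 2.0, 4.0]).items():
    print(f"{multiple:.0f}x usage: ${cost:,.2f} per month")
```

Under these assumptions the cost line moves in lockstep with usage. Real contracts may include volume discounts, minimum commitments, or tiered pricing, so the linear model is best treated as a starting point for a budget conversation rather than a forecast.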

Summary by ReadAboutAI.com

https://apnews.com/article/nvidia-ceo-jensen-huang-artificial-intelligence-conference-846f7d4aada068e92516665c6993ea29: Day 5: May 24, 2026

What OpenAI’s $110 Billion Funding Round Says About the AI Bubble

Fast Company | Thomas Smith | March 10, 2026

TL;DR: OpenAI closed one of history’s largest private funding rounds — $110 billion from Amazon, NVIDIA, and SoftBank — suggesting the AI investment cycle is not collapsing yet, but the underlying revenue-to-valuation gap that defines a bubble remains unresolved.

Executive Summary

OpenAI raised $110 billion in late February, valuing the company at $730–$840 billion. The round was led by credible institutional investors: Amazon contributed $50 billion, NVIDIA and SoftBank each $30 billion. These are not speculative retail bets — they are strategic commitments from companies with deep AI infrastructure interests. As part of the deal, OpenAI agreed to use Amazon’s cloud infrastructure and secured expanded chip access from NVIDIA, embedding new vendor dependencies into OpenAI’s operations.

The funding round silenced short-term bubble predictions. Analysts who had warned that failure to close the round would signal a burst — including those citing OpenAI’s governance instability and NVIDIA’s initial hesitation — were proven wrong for now. The capital will sustain OpenAI’s operations and reinforce the broader AI investment flywheel: Google and Meta face implicit pressure to match this spending or explain to investors why they didn’t.

However, the article is explicit that the fundamental tension remains unchanged: OpenAI’s 2025 revenue of $20 billion is modest relative to its valuation — roughly comparable to a mid-tier consumer goods company. Investors are pricing in a transformative labor displacement scenario that has not yet materialized. If that scenario falters, the article suggests large investors like Amazon could redirect capital quickly. NYU professor Gary Marcus is quoted warning this is a financial bubble — the timing of the burst is unknown, but the structure is there.
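The revenue-to-valuation gap can be made concrete with simple arithmetic. The sketch below uses only the figures quoted in this summary ($20 billion in 2025 revenue, a $730–$840 billion valuation range). The 10x comparison multiple is a hypothetical benchmark chosen for illustration and does not come from the article.

```python
def revenue_multiple(valuation_billions: float, revenue_billions: float) -> float:
    """Valuation expressed as a multiple of annual revenue."""
    return valuation_billions / revenue_billions


def revenue_needed(valuation_billions: float, target_multiple: float) -> float:
    """Annual revenue (in billions) that would justify a valuation at a given multiple."""
    return valuation_billions / target_multiple


REVENUE_2025 = 20.0          # USD billions, as quoted in the summary
VALUATION_RANGE = (730.0, 840.0)  # USD billions, as quoted in the summary
HYPOTHETICAL_MULTIPLE = 10.0      # assumption for illustration only

for valuation in VALUATION_RANGE:
    multiple = revenue_multiple(valuation, REVENUE_2025)
    needed = revenue_needed(valuation, HYPOTHETICAL_MULTIPLE)
    print(
        f"${valuation:.0f}B valuation = {multiple:.1f}x 2025 revenue; "
        f"at {HYPOTHETICAL_MULTIPLE:.0f}x, revenue would need to reach ${needed:.0f}B"
    )
```

The point of the exercise is the size of the gap, not the specific benchmark: whatever multiple a reader considers reasonable, the same two functions show how much revenue growth the valuation is pricing in.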

Relevance for Business

For SMB executives, this story has two practical implications. First, AI tools and vendors are not at risk of sudden disappearance in the near term — the capital base is stable enough to sustain continued product development from the major players. Second, the concentration of AI infrastructure power is intensifying. Amazon, NVIDIA, and OpenAI are now bound together through this deal in ways that will shape pricing, availability, and access to AI capabilities for years. Organizations making long-term commitments to specific AI platforms should factor in these entangled dependencies. The bubble question is real but not yet urgent — the more operational concern for leaders is vendor lock-in, not platform collapse.

Calls to Action

🔹 Monitor, don’t act: The AI investment cycle is stable for now — no need to accelerate or pause AI initiatives based on bubble fears alone.

🔹 Assess vendor dependency risk: If your organization is building on OpenAI’s API or AWS AI infrastructure, document what a vendor disruption scenario would require operationally.

🔹 Track the revenue signal: When reports indicate OpenAI’s revenue growth is plateauing or investor confidence is shifting, treat that as a leading indicator — not lagging news.

🔹 Ignore bubble speculation as a planning input: Decisions about AI adoption should rest on operational ROI, not macro funding narratives that remain unresolved.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91502821/openai-110-billion-funding-round-ai-bubble: Day 5: May 24, 2026

Even Silicon Valley Says That AI Is a Bubble

The Atlantic | Lila Shroff | March 12, 2026

TL;DR / Key Takeaway: The article’s core signal is that parts of Silicon Valley are no longer denying AI-bubble risk; instead, many are reframing a possible crash as an acceptable cost of building strategic infrastructure and accelerating AI progress, which means leaders should treat today’s AI boom as both a real capability build-out and a real financial-risk cycle.

Executive Summary

Lila Shroff’s Atlantic piece is less about whether AI is “real” and more about a notable shift in elite tech thinking: some of the people funding and shaping the AI boom now openly accept bubble dynamics, while arguing that the resulting excess investment may still be socially or economically worthwhile. The article cites figures such as Hemant Taneja, Jeff Bezos, and Sam Altman as examples of leaders willing to tolerate major losses, layoffs, and company failures if the spending surge leaves behind transformative infrastructure, stronger models, and enduring firms.

That framing matters because it separates demonstrated reality from ideological justification. The reality is that AI capability has advanced quickly, helped by massive spending on compute, talent, and start-ups. The article also notes the scale of the build-out: OpenAI is described as still unprofitable yet valued above several major legacy corporations combined, while Big Tech is expected to spend roughly $650 billion this year on AI infrastructure. But the “good bubble” thesis is still a thesis. It assumes that overinvestment today will create durable value tomorrow, even though chips depreciate quickly, data centers can be overbuilt, and demand may not justify current expectations on the timeline investors want.

The strongest editorial takeaway is that this is not a simple pro- or anti-AI article. It argues that two things can be true at once: AI may be a foundational technology, and the current financing model may still create severe collateral damage if it breaks. The article explicitly raises the risk that a crash could spread beyond tech into retirement accounts, broader markets, and the wider economy—especially if still-unprofitable AI firms move into public markets and pull more ordinary investors into the cycle. For executives, the important distinction is this: AI progress is real; market pricing, spending discipline, and long-term returns remain much less certain.

Relevance for Business

For SMB executives and managers, this matters because the AI market is being shaped by capital intensity, infrastructure concentration, and investor expectations that are far above the day-to-day needs of most businesses. A bubble environment can still produce useful tools, better models, and falling software costs over time. But it also creates vendor dependence, unstable pricing assumptions, aggressive roadmap promises, and a higher chance that some suppliers, partners, or AI-native startups will not survive a market correction.

It also reinforces a practical leadership point: do not confuse sector enthusiasm with enterprise readiness. The article suggests that much of the current spending is justified by future productivity gains that may take time to materialize. For SMBs, that means the right question is not whether AI will matter, but which current use cases produce measurable value now without locking the company into fragile tools, inflated contracts, or workflows that depend on a vendor still burning cash.

Calls to Action

🔹 Treat AI vendors like cyclical suppliers, not permanent fixtures. Review financial durability, dependency risk, and switching costs before embedding a tool deeply into operations.

🔹 Prioritize near-term ROI over visionary positioning. Focus on workflow improvements you can measure in the next 6–12 months, not on broad promises tied to future model breakthroughs.

🔹 Build contingency plans for vendor disruption. Identify where a startup failure, pricing change, or API instability would interrupt work.

🔹 Separate capability adoption from market narrative. A frothy investment cycle can still produce useful products; adopt the product only when the business case stands on its own.

🔹 Monitor public-market spillover. If more AI firms go public while remaining unprofitable, market volatility could affect budgets, customer sentiment, and enterprise spending conditions beyond tech.

Summary by ReadAboutAI.com

https://www.theatlantic.com/technology/2026/03/ai-bubble-defenders-silicon-valley/686340/: Day 5: May 24, 2026

10 AI Business Use Cases That Produce Measurable ROI

TechTarget / Search Enterprise AI | January 30, 2026

TL;DR: Despite widespread AI adoption, most enterprise implementations have not yet generated measurable profit impact — but a specific set of use cases consistently delivers ROI, and SMBs that focus there will outperform those chasing novelty.

Executive Summary

The framing of this TechTarget feature is practical and useful: it synthesizes McKinsey, MIT, and Wharton research to identify where AI actually pays off. The headline finding is sobering — MIT analysis of over 300 public AI implementations found only 5% generated millions of dollars in measurable value, and nearly half of GenAI investment went to sales and marketing, even though back-office automation delivers stronger ROI.

The ten use cases identified as most likely to produce measurable returns are: customer service automation, sales and marketing optimization, personalized customer experiences, predictive analytics, predictive maintenance, IT operations automation, document and workflow automation, software development, supply chain and inventory management, and fraud detection. The common thread across high-ROI deployments is automating high-volume, repetitive processes with clear, measurable outcomes — not experimental GenAI initiatives. Wharton’s 2025 survey found 72% of executives are measuring ROI through productivity gains and incremental profits, and about three-quarters reported positive returns from AI integrated into coding, writing, and data analysis workflows.

Critical caveat: Cost reductions in areas like AI-assisted software development are not automatic. They depend on governance maturity, adoption discipline, and whether AI outputs reduce rework or create new technical debt. The article also flags a structural bias in how companies prioritize AI — metric attribution ease (sales dashboards are easier to measure) drives investment decisions, not actual value potential.

Relevance for Business

This is directly actionable for SMB leaders. The research confirms that the highest-ROI AI investments for most organizations are operational and back-office — invoice processing, IT ticket automation, document handling, customer service support, and predictive maintenance — not frontier GenAI experiments. SMBs with limited budgets should resist pressure to invest in visible but low-ROI AI showcases (brand-only marketing tools, generative content at scale) and instead build the data quality and process clarity required to make operational AI work. The 6-to-24 month ROI window for operational efficiency use cases is a realistic planning benchmark.

Calls to Action

🔹 Audit current AI spend against this ROI framework — identify whether investments are concentrated in high-visibility, low-ROI areas like brand marketing AI versus high-return operations automation.

🔹 Prioritize back-office automation (invoicing, document processing, IT support, customer service) as the most reliable path to near-term, measurable AI returns.

🔹 Establish clear KPIs before deploying AI in any function — if you can’t measure it before AI, you won’t be able to attribute ROI after.

🔹 Assess data quality in targeted functions; most operational AI ROI failures trace back to poor or unstructured data, not the AI model itself.

🔹 Set realistic timelines: plan for 6–24 months to demonstrate operational ROI, longer for strategic or competitive advantage plays.

Summary by ReadAboutAI.com

https://www.techtarget.com/searchenterpriseai/feature/10-AI-business-use-cases-that-produce-measurable-ROI: Day 5: May 24, 2026

7 Factors That Drive Returns on AI Investments, According to a New Survey

Harvard Business Review | Thomas H. Davenport and Laks Srinivasan | March 17, 2026

Editorial Note: This study was sponsored by Scaled Agile’s AI training business. The authors are credible researchers — Davenport is a widely cited authority on enterprise AI — and the methodology (1,006 senior executives surveyed, 12 interviews) is substantive. Sponsor involvement in a survey does not automatically invalidate findings, but readers should weigh that context when interpreting results, particularly those that might favor investment in AI training and structured frameworks.

TL;DR: A survey of over 1,000 senior executives finds that AI value is primarily a management challenge, not a technology one — and that the organizations achieving the most are distinguished by governance discipline, financial accountability, and measurement rigor, not technical sophistication.

Executive Summary

This is among the most practically useful research pieces on enterprise AI ROI published to date. The survey results push back against the prevailing narrative that AI is failing to deliver value: 90% of respondents reported achieving at least moderate value. But the more important finding is what is actually driving that value — and what is not.

What is not driving value is worth naming first. Headcount reduction from AI is nearly nonexistent in practice: the researchers find that only 2% of announced workforce reductions or hiring slowdowns are actually attributable to deployed AI capabilities. The rest reflects either anticipated future benefits or cost reductions made for unrelated reasons and attributed to AI. Creating a Chief AI Officer role is also not correlated with value creation. Generative AI — the category receiving the most attention — is producing the least measured value of any AI category surveyed.

What is driving value is a set of organizational disciplines that have little to do with technology choice. Organizations with the highest AI returns share three characteristics: they define clearly what value they are seeking and hold someone accountable for it; they measure outcomes — not just pre-implementation projections — and treat that measurement as a management function, not a reporting formality; and they involve the finance function directly, not just IT or AI-specific leadership.

The finance finding is striking. Only 2% of organizations assign AI value accountability to the CFO. But in organizations where the CFO carries that accountability, 76% report achieving a “great deal” of value — compared to 53% under CIOs or CTOs, and just 32% under functional executives. Finance brings credibility, rigor, and organizational authority that other roles cannot replicate.

The research also identifies a maturity model with six stages, from unmeasured pilots (where only 4% of organizations achieve high value) to formal external reporting (where 85% do). The most important inflection point is the move from pre-implementation projections to post-implementation measurement — a step that more than doubles the proportion of organizations reporting high value. A second major inflection occurs when value reporting becomes formal and externally disclosed.

Relevance for Business

This research is directly actionable for SMB executives, and it requires no technical expertise to apply. The message is clear: the gap between organizations that are and are not getting returns from AI is not primarily a technology gap. It is a management gap.

For leaders currently running AI pilots, the most consequential question this research raises is whether those pilots are producing any production deployments — and whether those deployments are being measured against real business outcomes. Organizations that stay in the pilot phase indefinitely generate no economic value, regardless of technical quality.

The CFO accountability finding has particular relevance. Finance involvement in AI governance is rare, but the correlation with value achievement is the strongest in the dataset. Leaders who want to accelerate AI returns should consider pulling the finance function into AI measurement now — not as a compliance exercise, but as a value-creation lever.

Calls to Action

🔹 Define what value means for your AI investments before deploying further. Clarity on the target matters more than tool selection.

🔹 Move at least one AI initiative from pilot to production. The research shows the transition itself produces a step-change in value, independent of the use case.

🔹 Assign AI value measurement to someone with authority to certify outcomes — ideally the CFO or finance function, not only the technology lead.

🔹 Build post-implementation measurement into every AI deployment. Pre-implementation projections alone produce almost no incremental value over unmeasured pilots.

🔹 Do not treat headcount reduction as an AI ROI metric. The research is clear that this is largely a forward-looking claim disconnected from current deployed capability.

Summary by ReadAboutAI.com

https://hbr.org/2026/03/7-factors-that-drive-returns-on-ai-investments-according-to-a-new-survey: Day 5: May 24, 2026

The AI Economy Story May Have Been Wrong

The Washington Post | Shira Ovide | February 23, 2026

TL;DR: A growing consensus among prominent economists, including those at Goldman Sachs, Morgan Stanley, and JPMorgan, holds that the widely accepted narrative of AI driving significant U.S. economic growth in 2025 was overstated, and that AI’s net contribution may have been close to zero.

Executive Summary

For much of 2025, a compelling story circulated in policy and financial circles: AI investment was propping up the U.S. economy, accounting for a substantial portion of GDP growth at a time when other indicators were weak. That story was embraced across the political spectrum — used by the Trump administration as evidence against regulation, and by critics as evidence of dangerous economic dependency.

The problem, as economists are now calculating, is that the story may not have been true. Goldman Sachs concluded that AI-related investment spending contributed “basically zero” to U.S. economic growth in 2025. Morgan Stanley and JPMorgan reached similarly low estimates. The core methodological issue: a significant share of AI data center spending goes toward imported components — chips manufactured in Asia — and imports subtract from U.S. GDP rather than adding to it. Roughly three-quarters of data center build costs are for computing equipment, much of it foreign-made.
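The accounting mechanism described above follows from the standard expenditure identity, GDP = C + I + G + (X − M): investment spending adds to GDP, but the imported portion of that spending is subtracted back out. The sketch below works one illustrative case. The 75% equipment share is the rough figure quoted in the summary; the $100 billion total and the 80% import share of equipment are hypothetical values chosen for illustration, not estimates from the article or from any of the banks cited.

```python
def net_gdp_contribution(
    total_spend: float,
    equipment_share: float,
    import_share_of_equipment: float,
) -> dict[str, float]:
    """Split data center spending into its gross and net effect on GDP.

    Investment (I) rises by the full spend, while imports (M) rise by the
    foreign-made portion of the equipment, so the net effect is I minus M.
    """
    equipment = total_spend * equipment_share
    imports = equipment * import_share_of_equipment
    return {
        "investment": total_spend,
        "imports": imports,
        "net_contribution": total_spend - imports,
    }


result = net_gdp_contribution(
    total_spend=100.0,              # USD billions, hypothetical
    equipment_share=0.75,           # "roughly three-quarters", per the summary
    import_share_of_equipment=0.8,  # assumption for illustration only
)

for label, value in result.items():
    print(f"{label}: ${value:.1f}B")
```

With these inputs, $100 billion of headline spending nets out to roughly $40 billion of measured GDP contribution. A higher import share pushes the figure lower, which is the direction of the banks' argument.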

The article is careful to note that this is a live methodological debate, not a settled conclusion. A St. Louis Fed economist who calculated AI’s contribution at roughly 39% of 2025 growth acknowledged her figure was probably the upper bound, and that early 2025 data was distorted by tariff-related import surges. The underlying uncertainty is real: the economic data simply does not yet have the resolution to cleanly capture AI’s effects. What’s notable is not that economists disagree — it is that the narrative hardened before the analysis did.

Relevance for Business

The practical implication for SMB executives is not about GDP accounting. It is about the reliability of the information environment surrounding AI. If the macro-level story about AI’s economic contribution was driven more by narrative than by data, the same pattern likely applies to the business-level claims made by vendors, consultants, and analysts. The tools exist; the spending is real. But the return on that spending — at the macro level and at the firm level — is considerably harder to measure than the confident projections suggest. Leaders who are making AI investment decisions should demand outcome evidence, not forecast evidence.

Calls to Action

🔹 Apply the same scrutiny to internal AI ROI claims that Goldman Sachs applied to national AI economic claims: ask whether the measurement methodology actually captures the effect being claimed.

🔹 When AI vendors present productivity or efficiency data, distinguish between input metrics (time saved, tasks automated) and output metrics (revenue, margin, customer value delivered).

🔹 Treat the broader “AI is transforming the economy” narrative as contested, not settled — and factor that uncertainty into multi-year AI investment planning.

🔹 Monitor emerging economic research on AI productivity effects — the measurement tools are improving, and more reliable data is expected over the next 12–18 months.

🔹 Do not deprioritize AI exploration, but resist pressure to accelerate investment based on macro narratives that are now being revised.

Summary by ReadAboutAI.com

https://www.washingtonpost.com/technology/2026/02/23/ai-economic-growth-gdp-mirage/: Day 5: May 24, 2026

At Davos 2026, the AI Conversation Shifted — From Possibility to Proof

Fortune / Eye on AI | Jeremy Kahn | January 20, 2026

TL;DR: By Davos 2026, the dominant AI conversation among global business leaders had moved decisively from what AI might do to whether current investments are actually working — a shift with direct implications for how executives should be framing their own AI decisions.

Executive Summary

Note: This is a newsletter dispatch reporting from the World Economic Forum in Davos, January 2026. It reflects the author’s direct conversations with executives and incorporates attributed quotes from named leaders. It should be read as informed journalism, not a formal survey or research report.

The most significant signal from Kahn’s dispatch is not any single development — it is the aggregate tone shift. A year earlier at Davos, the conversation had centered on AI agents and the possibility that Chinese AI models might undercut US AI economics. In January 2026, neither of those conversations dominated. Instead, the executives Kahn spoke with were focused on a more practical question: how do we make this pay?

The “bottom-up” AI deployment model has largely failed at scale. The approach of giving every employee access to AI tools and letting them find uses — popular in 2023 and 2024 — produced gains that were hard to quantify and rarely added up to meaningful changes in revenue or cost structure. The emerging consensus at Davos was that returns require CEO-led, top-down transformation of core business processes. The Siemens chairman described the required posture as CEO-as-“dictator” on AI deployment priorities. Research commissioned by process-mining firm Celonis found that establishing a dedicated center of excellence for AI process optimization produced returns eight times greater than approaches that did not.

A second major theme was the emerging competition among enterprise software vendors — Workday, Salesforce, Microsoft, Snowflake — to become the orchestration layer for AI agents within large organizations. Each company is positioning itself as the essential interface through which enterprise AI workflows are managed. The outcome of that competition will determine which platforms become indispensable — and which become optional.

The ROI conversation has arrived. The age of pilots and experimentation — in Kahn’s framing — is ending. What replaces it is a more demanding environment in which AI investments are expected to justify themselves against business outcomes, not just technical capability.

Relevance for Business

This source is among the most directly actionable in this set for SMB leaders. The pattern described at Davos — where broad-access, bottom-up AI deployment underdelivered, and CEO-led process transformation outperformed — maps directly onto decisions SMBs are making right now. If your AI deployment is still characterized by individual employees exploring tools on their own, the evidence increasingly suggests that approach will not scale into material business value without senior-led direction and structured process targeting.

The competition among Workday, Salesforce, Microsoft, and Snowflake to become the AI agent orchestration layer also matters for SMBs evaluating enterprise software. The vendor that wins that position will have significant influence over how your workflows are structured and how your data is accessed — choices with long-term consequences.

Calls to Action

🔹 Assess whether your AI deployment is still primarily bottom-up and individual-led — if so, the emerging evidence strongly favors moving toward CEO or senior-leader-defined priorities for AI deployment.

🔹 Identify two or three core business processes where AI-driven transformation could produce measurable revenue or cost impact — and assign executive ownership to those initiatives.

🔹 Consider establishing an internal center of excellence or working group for AI process optimization, even at SMB scale — the research cited at Davos suggests this structure meaningfully improves returns.

🔹 Monitor which enterprise software vendor — Microsoft, Salesforce, Workday, or Snowflake — is winning the AI agent orchestration competition in your industry; the winner will shape how AI integrates into your core workflows.

🔹 Apply an ROI frame to every active AI initiative: what business outcome is it targeting, how will you measure it, and when do you expect to see it?

Summary by ReadAboutAI.com

https://fortune.com/2026/01/20/davos-world-economic-forum-leaders-shift-focus-to-ai-roi/: Day 5: May 24, 2026

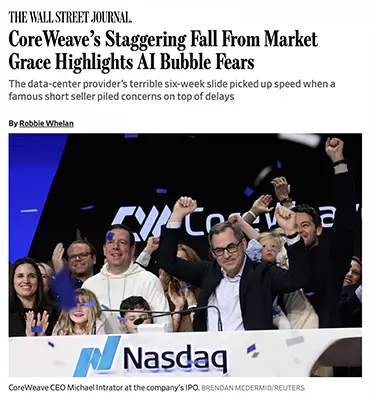

CoreWeave’s Collapse Puts a Face on the AI Infrastructure Risk

The Wall Street Journal | Robbie Whelan | December 15, 2025

TL;DR: CoreWeave’s 46% share-price decline in six weeks — driven by construction delays, a failed acquisition, and mounting debt concerns — is the clearest case study yet of the structural fragility underneath the AI infrastructure boom.

Executive Summary

CoreWeave built its business on a straightforward but high-stakes model: borrow heavily to buy advanced chips from Nvidia, install them in leased data centers, and rent compute access to AI companies. That model produced rapid revenue growth — sales more than doubled year over year to nearly $1.4 billion in its most recent quarter — but also generated significant losses and a balance sheet that one analyst described as “the ugliest in technology, by far.”

The company’s problems arrived from multiple directions simultaneously. Construction delays at a major Texas data center, caused initially by weather and compounded by design revisions, pushed back a key project for OpenAI by several months. A failed acquisition attempt of Core Scientific — a major construction partner — collapsed after a hedge fund publicly opposed the deal. Short seller Jim Chanos, known for his pre-collapse Enron call, then added public criticism. Each event, on its own, might have been manageable. Together, they revealed how little margin for error exists in a capital structure dependent on continuous high-utilization demand from a small number of large customers.

The most consequential detail is not the stock price: it is the operating margin. CoreWeave’s margins of roughly 4% are less than half of what the company pays in interest on its debt. The bull case is that scale will close that gap. The bear case is that this is a company already at scale.

Relevance for Business

SMBs do not invest in CoreWeave. But the companies that do, and the infrastructure those investments are meant to build, are what your AI tools run on. The fragility of the AI infrastructure layer is a direct operational risk for any business that depends on cloud-based AI services. Delays, financial stress, and consolidation among infrastructure providers could affect service availability, pricing, and reliability. This story also signals something broader: the confident projections that have justified AI spending at the macro level are now being tested by physical reality, including construction timelines, debt markets, and weather.

Calls to Action

🔹 If your business depends on AI services from providers that rely on third-party compute infrastructure (such as CoreWeave), monitor their financial stability as a vendor risk factor.

🔹 Build service redundancy into any AI-dependent workflows — infrastructure delays and provider consolidation are real near-term risks, not hypothetical ones.

🔹 Do not assume AI infrastructure capacity will expand smoothly to meet demand; planning timelines for AI-dependent projects should include supply-side uncertainty.

🔹 Use this case as a reference point when evaluating AI vendor stability — revenue growth alone is not a reliable indicator of operational soundness.

🔹 Monitor the broader AI infrastructure debt market; if financing costs rise further, the speed of AI buildout — and therefore AI product availability — may slow.

Summary by ReadAboutAI.com

https://www.wsj.com/tech/ai/coreweave-stock-market-ai-bubble-a3c8c321: Day 5: May 24, 2026

Finding Return on AI Investments Across Industries

MIT Technology Review (Sponsored by Intel) | Lynn Comp | October 28, 2025

⚠️ Editorial Note: This is a sponsored article. It was produced by Intel and published in MIT Technology Review’s branded content section. It was not written by MIT Technology Review’s editorial staff. The framing and recommendations reflect Intel’s commercial perspective and should be read accordingly. The article references legitimate third-party data points — including the MIT NANDA report and McKinsey findings — but embeds them within a vendor argument. Treat the three principles it advances as Intel’s framing, not independent research findings.

TL;DR: Three years after the generative AI surge began, most enterprise deployments are still failing to deliver measurable returns — and this sponsored piece, despite its vendor framing, offers a practically grounded argument for why most organizations are approaching AI adoption backwards.

Executive Summary

Writing in late 2025, Comp acknowledges what had become an uncomfortable consensus: the MIT NANDA report found that 95% of AI pilots had failed to scale or deliver measurable ROI. McKinsey had separately pointed to next-generation automation approaches as the likely path to enterprise returns. Some voices at the Wall Street Journal’s Technology Council Summit had gone further, advising CIOs to stop trying to measure AI returns at all, on the grounds that measurement was too difficult and likely to produce misleading results.

The article pushes back on that last position by advancing three operational principles. The first is data governance as a precondition: before AI can deliver value, organizations need to understand what proprietary data they have, who can access it, and — critically — what it is worth as a negotiating asset with model vendors. The article notes that major AI companies have been completing large deals with enterprise data owners because publicly available training data is increasingly insufficient for frontier model development. This creates an underacknowledged leverage point for enterprises with valuable proprietary datasets.

The second principle — stability over novelty — challenges the assumption that organizations should continuously update to the latest AI models. The article observes that 182 new AI models were introduced in 2024 alone, and that when GPT-5 arrived in 2025, earlier models were deprecated, disrupting workflows built on them. Organizations that prioritized the newest capabilities over workflow stability paid an operational price. Back-office processes require reliability, not the latest benchmark performance.

The third principle — right-sizing AI to actual consumption — argues against adopting AI infrastructure based on vendor benchmarks rather than what the business can actually absorb. The article notes that organizations chasing maximum performance often generated substantial operational run-rate costs without proportional benefit, while those that designed for more modest throughput deployed successfully at scale.

Relevance for Business

For SMB leaders, this article’s most useful contribution — despite its Intel provenance — is the practical argument for conservative AI adoption design. The three principles align with failure modes that appear repeatedly across independent research: over-ambitious pilots, unstable tooling, and vendor-driven rather than need-driven procurement.

The data leverage point is particularly underappreciated. SMBs with specialized operational data — in legal, financial, logistics, or healthcare contexts — may be in a stronger negotiating position with AI vendors than they realize. This is not an argument to expose proprietary data carelessly; it is an argument to treat data assets as something to be governed and potentially leveraged, not simply fed into vendor systems by default.

Calls to Action

🔹 Audit your proprietary data assets before signing standard AI vendor contracts. What you have may be more valuable than what you are paying for.

🔹 Design AI workflows for stability, not for novelty. Prioritize vendors and configurations that offer durability over those chasing the latest model release.

🔹 Size AI infrastructure to what your team can actually use at current scale. Do not buy capacity based on vendor benchmarks.

🔹 Do not treat failure to measure AI ROI as acceptable. If a tool cannot be connected to a business outcome, that is a signal to pause or redesign — not to stop measuring.

🔹 Read this article with Intel’s commercial interests in mind. The practical advice is sound, but the framing is vendor-originated. Cross-reference with independent sources before building policy on it.

Summary by ReadAboutAI.com

https://www.technologyreview.com/2025/10/28/1126693/finding-return-on-ai-investments-across-industries/: Day 5: May 24, 2026

Big Tech’s Q3 2025 Earnings: Record Revenue, Escalating AI Costs, and a Warning About What Comes Next

Fortune Tech | Andrew Nusca | October 30, 2025

TL;DR: Meta, Microsoft, and Alphabet all reported record third-quarter revenues in October 2025, but each simultaneously announced significantly higher AI capital expenditure commitments for 2026 — a pattern that signals the industry’s spending is accelerating even as the return timeline remains uncertain.

Executive Summary

Note: This source is a newsletter dispatch — conversational in tone and not independently verified. It provides a useful snapshot of reported earnings figures and executive statements, but should be read as editorial summary rather than detailed financial analysis.

The three results, reported on the same day, told a consistent story: strong revenue, growing AI-driven costs, and forward guidance that promises even more spending. Meta posted over $51 billion in quarterly revenue — a 26% year-over-year increase — but raised its 2026 capital expenditure outlook to as much as $72 billion, driven primarily by AI infrastructure and talent costs. Microsoft reported cloud revenue of $49 billion for the quarter, with Azure up 40% year-over-year, but acknowledged it will remain capacity-constrained for the rest of its fiscal year despite significant investment. Alphabet crossed $100 billion in quarterly revenue for the first time and promptly raised its 2025 capital expenditure forecast to between $91 billion and $93 billion, with the CFO flagging a further significant increase expected in 2026.

The pattern that runs across all three results is the same: AI investment is outpacing current monetization. Meta’s Zuckerberg was explicit that returns from new AI products — as opposed to AI-enhanced advertising — are “years” away. Microsoft’s capacity constraints suggest demand is real but that supply cannot keep up. Alphabet’s numbers show cloud acceleration, but the scale of announced future spending suggests executives are not yet confident they have built enough.

Relevance for Business

For SMB leaders, these numbers have two direct implications. First, the AI tools embedded in the platforms you use — Microsoft 365, Google Workspace, Meta’s advertising system — are being subsidized by capital expenditure that has not yet been recouped. Pricing for those tools will eventually reflect actual costs. Second, capacity constraints at Microsoft are not an abstraction: they mean your Azure-based AI workloads are competing for resources that the world’s largest cloud company cannot currently supply on demand.

Calls to Action

🔹 Anticipate that AI-embedded software pricing will rise as platform providers begin to recoup infrastructure investment — factor this into multi-year budget planning.

🔹 If your organization relies on Azure or other constrained cloud capacity for AI workloads, assess whether current service level agreements reflect actual availability risks.

🔹 Monitor Meta’s advertising AI performance closely — it remains the short-term revenue driver, but structural changes to the platform’s AI model could affect ad efficacy.

🔹 Use the gap between current revenue and future AI product monetization as a calibration tool: if the platforms building these systems expect years before returns materialize, your internal AI investment timelines should be similarly realistic.

Summary by ReadAboutAI.com

https://fortune.com/2025/10/30/meta-revenue-soars-but-so-do-ai-expenses/: Day 5: May 24, 2026

The Web of Circular Deals Holding Up the AI Boom

Bloomberg | Emily Forgash and Agnee Ghosh | October 7–8, 2025

TL;DR: A pattern of large, interlocking investment deals between Nvidia, OpenAI, AMD, Oracle, and CoreWeave has raised serious questions about whether the trillion-dollar AI market is being sustained by genuine demand or by a self-reinforcing loop of companies funding each other.

Executive Summary

The deals in question follow a recognizable structure: Nvidia invests in an AI company, which then commits to buying Nvidia chips, which generates revenue that funds further Nvidia investments. In the weeks covered by this article, a $100 billion investment agreement between Nvidia and OpenAI was followed immediately by OpenAI’s partnership with AMD — in which OpenAI is positioned to become one of AMD’s largest shareholders — followed by a separate $300 billion data center agreement with Oracle, which in turn is purchasing Nvidia chips for those facilities. The money flows in a circle.

The concern is not simply that the deals are large; it is that the underlying cash flows do not yet justify them. OpenAI, the central actor in most of these arrangements, does not expect to be cash-flow positive until late this decade. Its computing commitments with Nvidia, AMD, and Oracle could collectively exceed $1 trillion. Nvidia, by contrast, is highly profitable and has the financial capacity to sustain the cycle, but analysts note that it has taken stakes in dozens of AI startups, many of which depend on Nvidia chips to function, creating a portfolio where the investor and the primary vendor are the same entity.

A Harvard Kennedy School researcher quoted in the article draws the parallel to late-1990s dot-com cross-selling, while acknowledging a key difference: today’s AI companies have real products and real customers. The question the article does not resolve — and cannot — is whether those customers will generate enough return to justify the scale of the infrastructure being built for them.

Relevance for Business

SMBs are not parties to these deals. But the AI tools, cloud services, and software platforms SMBs use daily are built on this infrastructure — and priced into a market that is partly sustained by circular investment logic. If the cycle contracts — whether through rising debt costs, reduced investor patience, or a demand shortfall — pricing, availability, and vendor stability across the AI tool stack could shift. The signal here is not panic; it is awareness. The AI market has structural fragility underneath the revenue numbers.

Calls to Action

🔹 Maintain awareness of the financial health of AI vendors whose services you depend on — circular financing is a real risk factor, not a theoretical one.

🔹 Avoid long-term contractual commitments to AI infrastructure or platforms that cannot demonstrate independent cash-flow sustainability.

🔹 When evaluating AI vendor partnerships, ask: does this company have paying customers outside its own investment ecosystem?

🔹 Monitor how the OpenAI–Nvidia–Oracle relationship evolves; disruption in any leg of that arrangement could ripple through cloud pricing and availability.

🔹 No immediate operational action required, but include AI vendor financial stability in your next vendor risk review.

Summary by ReadAboutAI.com

https://www.bloomberg.com/news/features/2025-10-07/openai-s-nvidia-amd-deals-boost-1-trillion-ai-boom-with-circular-deals: Day 5: May 24, 2026

Nvidia Rockets to $1.72 Trillion, Just Shy of Amazon as Fourth Most Valuable U.S. Company

Fortune | Ryan Vlastelica and Bloomberg | February 9, 2024

TL;DR: Nvidia’s extraordinary valuation surge in early 2024 was the clearest market signal that AI infrastructure demand was real — but the pace of that rise was already raising durability questions even among investors who remained bullish.

Executive Summary

By early February 2024, Nvidia had added an amount roughly equal to Tesla’s entire market capitalization over just two months, pushing its valuation to $1.72 trillion. Its shares had risen more than 40% in the year to that point, making it the top performer in both the Nasdaq 100 and the so-called Magnificent Seven group of major tech stocks. The company was within striking distance of Amazon, with Alphabet not far behind.

The underlying driver was AI chip demand. Nvidia’s revenue for fiscal year 2024 was projected to grow roughly 120%, with another 60% expected the following year. Wall Street analyst consensus was firmly positive — more than 90% carried buy ratings. But the surge had also pushed the stock’s valuation back toward its highest level in months, reigniting a familiar question: how long can this rate of growth be sustained, and at what multiple is it already priced in?
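To put those two projections together: growth rates compound, so 120% followed by 60% is not 180%. The short Python sketch below multiplies out the two quoted figures. The variable names are illustrative, and the base year is normalized to 1.0 because the summary above does not quote a dollar figure.

```python
# Compound the two projected growth rates quoted above.
# Base-year revenue is normalized to 1.0 (an assumption for illustration;
# no dollar base is given in the summary).
base_revenue = 1.0
growth_fy2024 = 1.20      # "roughly 120%" projected growth
growth_next_year = 0.60   # "another 60%" expected the following year

after_fy2024 = base_revenue * (1 + growth_fy2024)
after_next_year = after_fy2024 * (1 + growth_next_year)

print(f"After fiscal 2024: {after_fy2024:.2f}x the base year")
print(f"After the following year: {after_next_year:.2f}x the base year")
```

On those projections, revenue would reach roughly three and a half times its starting level within two years, which is the pace the durability question was about.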

The article surfaces a meaningful signal for leaders watching AI infrastructure: the stock had risen past the average analyst price target for the first time since the prior spring, suggesting that even institutions bullish on Nvidia’s fundamentals did not expect further near-term upside at that pace. One fund manager trimmed his position, describing the stock as “too rich” despite continued confidence in its long-term prospects.

Relevance for Business

Nvidia’s trajectory matters to SMB leaders not as an investment story but as a market structure signal. The concentration of AI compute capability in a single supplier — whose chips power the training and operation of virtually every major AI model — represents a structural dependency with real business implications.

When a supplier’s stock is pricing in several years of compounding growth, that valuation reflects both the company’s leverage and its customers’ limited alternatives. AI model providers and cloud platforms that rely on Nvidia hardware pass that cost structure through to enterprise buyers. Understanding who controls the physical layer of AI is relevant context for anyone making multi-year commitments to AI tooling or infrastructure.

The article also illustrates the speed at which market consensus can form around a single narrative. That consensus, as this article noted even in early 2024, was already showing early signs of strain.

Calls to Action

🔹 Recognize Nvidia’s dominance as an infrastructure dependency, not just a market story. If your AI vendors rely on Nvidia hardware, that cost structure is already embedded in your pricing.

🔹 Monitor for signs of Nvidia alternative adoption — AMD and custom chip programs from Google and Amazon are relevant if they affect supply dynamics or pricing.

🔹 Treat the early-2024 AI infrastructure boom as historical context for current vendor pricing. The costs were real and have been passed forward.

🔹 Do not conflate stock-market momentum with product durability. High valuations reflect expectations, not guarantees. Design vendor relationships accordingly.

Summary by ReadAboutAI.com

https://fortune.com/2024/02/09/how-valuable-nvidia-amazon-billionaire-jensen-huang/: Day 5: May 24, 2026

Microsoft’s OpenAI Bet Was Driven by Fear of Falling Behind Google

Bloomberg | Leah Nylen and Shirin Ghaffary | April 30, 2024

TL;DR: A 2019 internal Microsoft email, surfaced in federal court, reveals that the company’s massive OpenAI investment was not a strategic vision play — it was a defensive move born from a frank acknowledgment that Microsoft had fallen dangerously behind Google in AI capability.

Executive Summary

The email, written by Microsoft CTO Kevin Scott to CEO Satya Nadella and co-founder Bill Gates, stated plainly that Microsoft was “multiple years behind the competition in terms of machine learning scale.” Scott described having made a mistake by underestimating earlier AI efforts by competitors, and Nadella endorsed the email, forwarding it internally as an explanation for why the OpenAI partnership needed to happen. The document was released as part of the Justice Department’s antitrust case against Google.

The real issue is not the investment itself — it is what the email reveals about the competitive logic driving AI spending. The largest technology decisions of this era are being made not from confident strategic positioning, but from fear of being left behind. That dynamic tends to produce large, fast bets that may not be subjected to ordinary financial scrutiny.

The DOJ used the email to support its argument that Google’s dominance in search had concentrated AI development in ways that distorted competition — a framing with implications well beyond this single case.

Relevance for Business

For SMB leaders watching AI vendor relationships and platform decisions, this source matters for one specific reason: the tools you are evaluating were built in competitive panic, not deliberate design. Investment decisions at Microsoft, which shapes tools used by hundreds of millions of businesses, were driven by fear of Google. That competitive pressure has not abated. It continues to shape roadmap priorities, partnership terms, and product stability for the platforms SMBs depend on.

Calls to Action

🔹 Treat AI vendor roadmaps with appropriate skepticism — product decisions at major platforms are driven as much by competitive anxiety as by customer need.

🔹 Monitor the ongoing Google antitrust proceedings; outcomes may affect the competitive structure of AI tools you use today.

🔹 When evaluating Microsoft Copilot or similar AI-embedded tools, distinguish between features developed for genuine business utility and those built to match a competitor’s capability.

🔹 No immediate action required, but assign someone to track antitrust developments in AI — rulings could affect licensing, data practices, and market access.

Summary by ReadAboutAI.com

https://www.bloomberg.com/news/articles/2024-05-01/microsoft-concern-about-google-s-lead-drove-investment-in-openai: Day 5: May 24, 2026

Regulators Signal Concern Over Big Tech’s Grip on AI — by Excluding Them from the Room

Bloomberg | Leah Nylen | May 30, 2024

TL;DR: When the Biden administration held its major AI competition workshop in May 2024, it deliberately excluded Google, Microsoft, Meta, Amazon, and Nvidia from the speakers list — a signal that antitrust enforcers view these companies not as contributors to healthy AI competition, but as the source of the problem.

Executive Summary

The workshop, co-hosted with Stanford University, featured antitrust agencies from the US, UK, and EU alongside venture capital firms and smaller AI players such as France’s Mistral AI. The exclusion of the dominant platforms was intentional, according to Assistant Attorney General Jonathan Kanter. The event marked a visible shift: where congressional hearings had positioned Big Tech CEOs as the authoritative voices on AI governance, regulators were now deliberately holding a different conversation — one that centered on structural concerns about market concentration.

The core concern regulators expressed was dependency. Many of the most promising AI startups rely on the dominant platforms for financing, computing infrastructure, and distribution. That structural dependency raises questions about whether independent AI competition is even possible without the approval — and infrastructure — of a handful of incumbents. The EU’s representative at the workshop noted the contrast with the early internet era: this time, the largest companies are leading the innovation, not being disrupted by it.

The FTC and DOJ were also, at this point, actively examining the investment relationships between Google, Amazon, and Microsoft and their AI startup partners — a line of inquiry with direct implications for how AI supply chains and vendor dependencies are structured.

Relevance for Business

SMBs that rely on AI tools embedded in large platforms — Microsoft, Google, Amazon — are operating within a supply chain that regulators believe is insufficiently competitive. That does not mean those tools should be abandoned. It does mean that vendor lock-in risk is real, that pricing and access terms are set by companies with enormous structural advantages, and that regulatory intervention — which could disrupt platform AI offerings — is a live possibility rather than a remote one.

Calls to Action

🔹 Audit your AI tool dependencies: which of your critical AI capabilities run through a single platform provider?

🔹 Monitor antitrust proceedings involving Google, Amazon, and Microsoft — outcomes could affect product availability, pricing, or access to AI services.

🔹 When evaluating AI partnerships or new tools, ask whether the vendor depends on a single large platform for its infrastructure — that dependency is a risk factor.

🔹 Begin documenting your AI vendor relationships now; governance requirements in this area are likely to expand.

🔹 No immediate action required on this specific development, but assign someone to track EU AI Act implementation — it may set precedents that reach US operations.

Summary by ReadAboutAI.com

https://www.bloomberg.com/news/articles/2024-05-30/openai-microsoft-meta-google-all-left-off-us-backed-event-on-competition: Day 5: May 24, 2026

Wall Street Blessed Meta’s AI Spending — Then the Bill Got Bigger

Fortune / Data Sheet | Sharon Goldman | August 1, 2024

TL;DR: In August 2024, Meta’s strong advertising revenue was giving Wall Street patience for its AI infrastructure spending — but Zuckerberg’s own language made clear that returns from generative AI products were years away, and the capital expenditure trajectory was already pointing significantly higher.

Executive Summary

Note: This source is a newsletter dispatch covering Meta’s Q2 2024 earnings call. It draws directly on company statements and analyst reactions, with appropriate attribution. Read it as editorial summary of a public earnings event, not independent financial analysis.

Meta’s Q2 2024 results showed strong underlying performance — digital advertising revenue drove the beat — and the company raised its 2024 capital expenditure guidance to between $37 billion and $40 billion, with infrastructure costs identified as the primary driver of further expense growth in 2025. The scale of the hardware commitment — reportedly including around 600,000 Nvidia GPUs at roughly $30,000 each — underlines both the ambition and the cost structure of Meta’s AI push.
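As a rough check on the scale of that hardware commitment, the reported figures can be multiplied out. This is a back-of-envelope sketch in Python using only the approximate numbers quoted above. The variable names are illustrative, and comparing the result to a single year's guidance is an assumption made purely for scale, since the source does not say the GPU purchases fall entirely within 2024.

```python
# Back-of-envelope estimate from the figures reported in the summary above.
gpu_count = 600_000          # "around 600,000 Nvidia GPUs" (reported, approximate)
price_per_gpu_usd = 30_000   # "roughly $30,000 each" (reported, approximate)

gpu_outlay_usd = gpu_count * price_per_gpu_usd

# 2024 capital expenditure guidance range, in US dollars.
capex_low_usd = 37_000_000_000
capex_high_usd = 40_000_000_000

# Assumption for scale only: treat the GPU outlay as if it fell in one year.
share_of_high = gpu_outlay_usd / capex_high_usd
share_of_low = gpu_outlay_usd / capex_low_usd

print(f"Implied GPU outlay: ${gpu_outlay_usd / 1e9:.0f} billion")
print(f"Share of 2024 capex guidance: {share_of_high:.0%} to {share_of_low:.0%}")
```

On these approximate inputs, the GPUs alone come to about $18 billion, or a little under half of the 2024 guidance range.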

The critical disclosure came not from the revenue numbers but from Zuckerberg’s own framing of the timeline. He was explicit that improving existing Meta products with AI — primarily the recommendation and advertising systems — would drive results over the next two years. But returns from newer generative AI products, such as Meta AI and AI Studio, were characterized as requiring the company’s “relatively long business cycle” of scaling to a billion users before meaningful monetization. He estimated that timeline in “years.”

Reading this article alongside the October 2025 Fortune earnings dispatch (Summary 7 in this set) reveals a consistent pattern: Meta’s AI spending continued to escalate on schedule, while the return timeline for generative AI products kept being pushed out. What Wall Street was patient about in August 2024, it was becoming less patient about by late 2025, when Meta shares dropped 8% after-hours on its latest capital expenditure upgrade.

Relevance for Business

For SMBs that use Meta’s advertising platform — and the many who do — the distinction Zuckerberg drew between advertising AI (working now, generating returns) and generative AI products (years away from monetization) is operationally important. The ad targeting capabilities you are paying for today are built on established machine learning, not the frontier AI models Meta is spending billions to develop. That may change. But it has not changed yet, and the timeline is measured in years, not quarters.

Calls to Action

🔹 If your business depends on Meta advertising, understand that the AI driving your current ad performance is not the same technology Meta is spending its billions on — and that your current returns may shift as Meta evolves its model approach.

🔹 Do not extrapolate Meta’s current advertising AI performance to its future generative AI products — Zuckerberg was explicit that the timelines are different.

🔹 Monitor Meta’s AI product rollouts (Meta AI, AI Studio) as leading indicators of how the platform may evolve — and what new capabilities or risks may emerge for business users.

🔹 Use this source in combination with the Q3 2025 earnings summary: the pattern of escalating costs and delayed generative AI returns has been consistent and is now being tested by investor patience.

Summary by ReadAboutAI.com

https://fortune.com/2024/08/01/meta-ai-spending-spree-q2-2024-earnings/: Day 5: May 24, 2026

Big Tech Stocks Lose Some of Their Aura as Earnings Growth Slows

Fortune | Jeran Wittenstein, Ryan Vlastelica, and Bloomberg | October 27, 2024

TL;DR: The five largest US tech companies were heading into Q3 2024 earnings with their slowest collective profit growth in a year and a half — and investors were starting to ask whether AI spending justified the prices they were paying.

Executive Summary

Through most of 2023 and into 2024, the largest technology companies — Apple, Nvidia, Microsoft, Alphabet, and Amazon — led the US equity market with outsized earnings growth. That dynamic was shifting by late October 2024. Collective earnings growth among these companies, while still projected to outpace the broader S&P 500, was expected to reach its slowest pace in six quarters. The gap between Big Tech and the rest of the market was narrowing, and analysts were forecasting it would continue to shrink into 2025.

The central tension was the AI spending question. In just the prior quarter, Microsoft, Alphabet, Amazon, and Meta together had directed an estimated $56 billion into capital expenditures, up more than 50% year over year. Investors broadly accepted that these outlays represented long-term positioning. But with little visible payoff from AI-integrated products at that stage, concern was mounting that peak margins might have already passed. Bloomberg Intelligence analysts wrote that AI-related capital spending was beginning to offset top-line revenue gains.

Valuations added to the unease. Apple and Microsoft were trading at earnings multiples significantly above their ten-year historical averages, raising the question of whether momentum alone was sustaining the premium. Hedge funds had been reducing their exposure to these stocks for several months. Still, Wall Street analyst consensus remained overwhelmingly bullish — roughly 90% of analysts covering Microsoft, Alphabet, and Nvidia carried buy ratings at the time of publication.

Relevance for Business

For SMB leaders, the significance here is not portfolio management — it is the emerging accountability gap at the infrastructure layer. The companies that supply AI tools and cloud compute to smaller businesses were themselves under scrutiny for returns on AI investment. That scrutiny would intensify, and it would eventually flow downstream. Vendors under margin pressure tend to pass costs forward, adjust product roadmaps, or shift priorities toward enterprise accounts. Leaders buying AI services from these platforms should understand that the economics of the supply chain were already under stress by late 2024.

Additionally, valuation concentration carries systemic risk. When the five largest companies by market cap are all simultaneously cycling through questions about AI ROI, cost structures, and regulatory exposure, the downstream effects on software pricing, infrastructure availability, and vendor stability become relevant operational questions — not just investor concerns.

Calls to Action

🔹 Monitor vendor economics. Suppliers facing margin pressure may raise prices, restrict features, or restructure contracts. Build this into your vendor review cycles.

🔹 Do not assume AI pricing stability. The cloud and AI tool pricing environment is linked to the financial performance of a small number of providers. Benchmark your current contracts while alternatives exist.

🔹 Treat capital expenditure announcements from major AI providers with skepticism until outcomes materialize. Spending size is not evidence of returns.

🔹 Use this moment to develop internal clarity on what AI is actually delivering for your organization — before external pressure from boards and CFOs arrives in the form of formal ROI demands.

Summary by ReadAboutAI.com

https://fortune.com/2024/10/27/big-tech-earnings-preview-apple-amazon-microsoft-google-alphabet-meta/: Day 5: May 24, 2026

AI Giants Seek New Tactics Now That ‘Low-Hanging Fruit’ Is Gone

Bloomberg Businessweek | Rachel Metz | December 19, 2024

TL;DR: By late 2024, the dominant model-scaling strategy that drove the AI boom was running into real constraints — and the industry’s response was a set of experimental workarounds whose long-term viability remained genuinely uncertain.

Executive Summary

This article, published as part of Bloomberg Businessweek’s “Year Ahead 2025” issue, documents a turning point in AI development that was underway even before it was widely acknowledged publicly. The core finding: the approach that had powered AI progress since ChatGPT’s launch — using more computing power, more data, and larger models to produce consistent capability gains — was delivering diminishing returns.

Three concrete constraints had emerged by late 2024. First, the supply of high-quality, human-generated training data was becoming scarce. AI companies had largely consumed what was publicly available, and acquiring more required deals with private data holders, a shift reflected in the data partnership agreements that major AI developers had been pursuing. Second, the cost trajectory was becoming difficult to justify. Anthropic’s CEO stated publicly that training a leading-edge model already cost approximately $100 million and could reach $100 billion within a few years. OpenAI’s CFO confirmed that the company’s next generation model would cost billions to develop. Third, even as costs rose, improvements were becoming harder to achieve and harder to translate into proportional product gains.

The article identifies three paths the industry was exploring in response. The first — inference-time computation, exemplified by OpenAI’s o1 model — shifts processing from training (a fixed, upfront cost) to the moment a user poses a question. The model takes more time to reason through problems before responding, which improves accuracy on complex tasks. Google, Databricks, and others were developing versions of this approach. Second, companies were turning to synthetic data — AI-generated content used to train AI systems — as a substitute for scarce human-generated data. The technique produced measurable improvements in some cases, though researchers acknowledged they did not fully understand why, and concerns about compounding errors in AI-trained-on-AI content were live. Third, the article notes a potential shift back toward task-specific models — narrower systems designed to excel at defined functions rather than general-purpose intelligence. This mirrors the chip industry’s pattern of reaching apparent limits, then finding new architectural paths forward.

Google CEO Sundar Pichai’s framing at the NYT DealBook Summit is worth noting for its candor: the easy gains in AI development were behind the industry, and progress would become harder.

Relevance for Business

For SMB executives, the significance of this article is not technical — it is structural. The era of consistent, rapid, and relatively cheap AI capability improvement that characterized 2022–2024 was already showing signs of slowing by the time of publication. That has several implications for leaders making AI-related decisions.

Costs were going up, not down. The assumption that AI tools would become dramatically cheaper and more capable over short time horizons was being tested by the underlying economics of frontier model development. Organizations that built business cases premised on rapid continued improvement should review those assumptions.

The vendor landscape was becoming more complex. The shift toward inference-time compute, synthetic data, and task-specific models means AI vendors would be making material architecture changes — some of which would affect pricing, performance, and compatibility with existing integrations. Workflow stability was becoming a legitimate procurement consideration, not a conservative afterthought.

The gap between frontier AI development and practical enterprise application was not closing as quickly as many had anticipated. The technology was maturing, but the most transformative promises remained dependent on problems the industry had not yet solved.

Calls to Action

🔹 Revisit AI business cases built on assumptions of rapid, continuous capability improvement. The pace of progress at the frontier was already slowing by late 2024.

🔹 Monitor the inference-time compute shift. If AI tools you rely on adopt this approach, response times and usage-based pricing structures may change.

🔹 Be cautious about vendor roadmap commitments. The technical direction of the industry was genuinely uncertain at the time of this article; vendors offering firm promises were offering them in uncertain conditions.

🔹 Distinguish between frontier AI development news and practical enterprise tool readiness. They operate on different timelines.

🔹 Monitor the synthetic data debate. If training quality becomes an unresolved issue at the model layer, it will eventually affect the reliability of tools built on top of those models.

Summary by ReadAboutAI.com

https://www.bloomberg.com/news/articles/2024-12-19/anthropic-microsoft-openai-seek-new-ways-to-advance-ai: Day 5: May 24, 2026

The $1.3 Trillion Forecast: Bloomberg Intelligence Projects a Decade of Generative AI Growth

Bloomberg Intelligence Press Release | June 1, 2023

TL;DR: A 2023 Bloomberg Intelligence forecast projecting the generative AI market to reach $1.3 trillion by 2032 is worth revisiting not as a reliable prediction, but as a document of the assumptions that were shaping capital allocation decisions at the start of the AI investment surge.

Executive Summary

Note: This source is a Bloomberg Intelligence press release — a promotional document for a proprietary research product available only to Bloomberg Terminal subscribers. It should be read accordingly: the figures are Bloomberg’s own projections, not independent or peer-reviewed findings.

The report projected generative AI growing from roughly $40 billion in 2022 to $1.3 trillion by 2032, at a compound annual growth rate of 42%. The largest projected revenue drivers were infrastructure-as-a-service for model training, digital advertising, and specialized software assistants. On the hardware side, AI servers, storage, and conversational devices were expected to be significant contributors. The report identified AWS, Microsoft, Google, and Nvidia as the likely primary beneficiaries — a prediction that has held reasonably well at the platform level, even as the broader market trajectory remains unverified.

What this source does not tell you matters as much as what it does. Forecasts of this scale — and at 42% compound annual growth — carry enormous uncertainty, particularly when made at the beginning of a technology cycle and sold as investment guidance. The report was published in June 2023, before the first serious questions about AI’s economic return on investment had entered mainstream discourse. Reading it alongside the February 2026 Goldman Sachs analysis (included in this source set) is instructive.
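The compounding arithmetic behind a forecast like this can be checked in a few lines. The sketch below is illustrative only: the starting value ($40 billion in 2022), the ten-year horizon, and the 42% rate come from the press release as summarized above, while the function names and the alternative growth rates of 30% and 36% are assumptions chosen purely to show how sensitive the 2032 figure is to the rate.

```python
def project(base_billion: float, cagr: float, years: int) -> float:
    """Compound a starting market size forward at a constant annual rate."""
    return base_billion * (1 + cagr) ** years


def implied_cagr(start_billion: float, end_billion: float, years: int) -> float:
    """Back out the constant annual growth rate linking two market sizes."""
    return (end_billion / start_billion) ** (1 / years) - 1


BASE_2022 = 40.0   # roughly $40 billion, per the press release summary
YEARS = 10         # 2022 through 2032

# 0.42 is the forecast's stated rate; 0.30 and 0.36 are hypothetical
# alternatives used only to illustrate sensitivity.
for rate in (0.30, 0.36, 0.42):
    size = project(BASE_2022, rate, YEARS)
    print(f"{rate:.0%} CAGR -> ${size / 1000:.2f} trillion in 2032")

# Sanity check: the rate implied by $40 billion growing to $1.3 trillion.
print(f"Implied CAGR: {implied_cagr(BASE_2022, 1300.0, YEARS):.1%}")
```

At the stated 42% rate the sketch lands near $1.3 trillion, while a hypothetical 30% rate lands near $0.55 trillion. A twelve-point change in the assumed growth rate cuts the ten-year figure by more than half, which is one reason headline projections of this kind are better read as directional than as precise.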

Relevance for Business

Many vendor pricing models, software contract terms, and boardroom AI investment cases were built on forecasts like this one. SMB executives should treat projections of this kind as directional indicators, not operational plans. The question is not whether AI will be a significant market — it will. The question is who captures the value, at what pace, and whether the companies currently spending the most will be the ones that benefit most. History suggests the answer is rarely simple.

Calls to Action

🔹 When vendors or consultants cite large AI market projections to justify spending recommendations, ask for the methodology and who funded the research.

🔹 Distinguish between AI infrastructure growth (real and measurable) and AI productivity return for your specific business (still highly variable and unproven at scale).

🔹 Use market-size forecasts as context for understanding where investment is flowing — not as justification for internal AI commitments.

🔹 Revisit any internal AI business cases built on analyst forecasts from 2022–2023; the assumptions underlying those projections deserve fresh scrutiny.

Summary by ReadAboutAI.com

https://www.bloomberg.com/company/press/generative-ai-to-become-a-1-3-trillion-market-by-2032-research-finds/: Day 5: May 24, 2026

The AI Field in Early 2023: A Landscape That No Longer Exists

Bloomberg | Dina Bass and Priya Anand | February 6, 2023

TL;DR: This February 2023 account of the competitive field challenging OpenAI is most valuable today not as a current assessment, but as a historical baseline — a snapshot of a market that has since been substantially consolidated, disrupted, and in some cases dismantled.

Executive Summary

Editorial note: This article was published in February 2023, more than three years before the Anniversary Week coverage period. Its value for Day 5 is archival and contextual — it documents the starting conditions of a competitive landscape that has since changed substantially. It should be framed as a baseline, not a current assessment.

At the time of publication, a large field of challengers was assembling around OpenAI. The article profiled Stability AI, Anthropic, AI21 Labs, Character.AI, Cohere, Google, Amazon, and Baidu as significant contenders. The framing was competitive and optimistic: venture capital was flowing, more than 150 AI startups had been counted, and OpenAI’s lead appeared contestable.

What the three years since have revealed is more instructive than the article itself. Stability AI, once valued at $1 billion and celebrated for open-source image generation, later ran into severe financial and legal difficulties. Character.AI’s founders and part of its research team were hired by Google under a licensing deal that drew regulatory scrutiny. Anthropic, backed initially by Google and later by Amazon, emerged as one of two credible frontier model competitors. Cohere pivoted heavily toward enterprise. AI21 narrowed its scope. Google consolidated its AI operations dramatically. The open competitive field the article described gave way to a market dominated by two or three frontier model providers and a handful of vertically integrated cloud incumbents.

The pattern here is important for leaders to internalize: the AI competitive landscape changes faster than most business planning cycles. Companies profiled as major competitors in early 2023 either no longer exist in their described form, have been absorbed, or have narrowed significantly.

Relevance for Business

The lesson from this archival piece is not nostalgia — it is caution about current market maps. Any vendor landscape assessment your organization conducted in 2023 or 2024 requires fresh review. The AI tool market is still consolidating. Companies that appeared independent may be owned by or deeply dependent on one of a small number of large incumbents. Due diligence on AI vendors should include ownership structure, investor relationships, and financial sustainability — not just product capability.

Calls to Action

🔹 Treat any AI vendor landscape assessment older than 12 months as potentially outdated — review it before making new commitments.

🔹 When evaluating an AI tool or vendor, research current ownership structure; several companies that appeared independent in 2022–2023 are now subsidiaries or deeply dependent on a single large investor.

🔹 Use this piece as a reference point for how rapidly the landscape can shift — and build that assumption into vendor risk and strategy planning.

🔹 Do not assume that market position or funding in the AI sector signals long-term stability; the history of the past three years argues otherwise.

Summary by ReadAboutAI.com

https://www.bloomberg.com/news/articles/2023-02-06/openai-s-growing-list-of-competitors-anthropic-google-stability-ai-and-more: Day 5: May 24, 2026

Additional Sources

Day 5 — The AI Boom Became a Battle of Spending, Scale, and ROI.

Articles from the past year: What the Magnificent 7 are spending and making, and the growing tension between those numbers.

THE MAGNIFICENT 7:

Fortune — Big tech earnings: Meta, Microsoft and Tesla signal the next phase of AI-driven markets (January 2026) https://www.investmentnews.com/equities/magnificent-7-earnings-meta-microsoft-and-tesla-signal-the-next-phase-of-ai-driven-markets/265042 (Note: this ran in InvestmentNews, drawing on Fortune/Bloomberg earnings.)

Fortune — Magnificent 7’s stock market dominance shows signs of cracking (January 2026) https://fortune.com/2026/01/11/magnificent-7-stock-market-dominance-cracking-nvidia-microsoft-apple-meta-alphabet-amazon-tesla/

Fortune — Big Tech’s latest earnings show the AI spending spree isn’t over (October 2024) https://fortune.com/2024/10/31/big-tech-earnings-ai-spending-spree/

Fortune — Big tech’s earnings problem: estimates may be way too high (April 2025) https://fortune.com/article/big-tech-earnings-problem-estimates-apple-meta-microsoft-amazon-mag/

Fortune — Meta’s AI spending spree is just fine with Wall Street (August 2024) https://fortune.com/2024/08/01/meta-ai-spending-spree-q2-2024-earnings/

Fortune — Meta revenue soars but so do AI expenses (October 2025) https://fortune.com/2025/10/30/meta-revenue-soars-but-so-do-ai-expenses/

Bloomberg — Amazon, Apple, Meta, Alphabet, Microsoft: Watch these five AI signals (October 2025) https://www.bloomberg.com/opinion/articles/2025-10-29/amazon-apple-meta-alphabet-microsoft-earnings-watch-these-five-ai-signals

NVIDIA: THE COMPANY THAT COLLECTS THE TOLL

Fortune — Nvidia shares jump as AI boom fuels another blockbuster quarter (May 2024) https://fortune.com/2024/05/22/nvidia-shares-jump-4-as-the-ai-boom-fuels-a-another-blockbuster-quarter-and-better-than-expected-forecast/

Fortune — Nvidia smashes Q4 2026 with $68 billion in revenue (February 2026) https://fortune.com/2026/02/25/nvidia-nvda-earnings-q4-results-jensen-huang/

Fortune — Nvidia is about to pass Amazon as the 4th-most valuable U.S. company (February 2024) https://fortune.com/2024/02/09/how-valuable-nvidia-amazon-billionaire-jensen-huang/

Bloomberg — OpenAI, Nvidia fuel $1 trillion AI market with web of circular deals (October 2025) https://www.bloomberg.com/news/features/2025-10-07/openai-s-nvidia-amd-deals-boost-1-trillion-ai-boom-with-circular-deals

Bloomberg — AI circular deals: How Microsoft, OpenAI and Nvidia keep paying each other (March 2026) https://www.bloomberg.com/graphics/2026-ai-circular-deals/

THE SPENDING-VS-REVENUE GAP

Bloomberg — Why fears of a trillion-dollar AI bubble are growing (November 2025) https://www.bloomberg.com/news/articles/2025-11-24/why-ai-bubble-concerns-loom-as-openai-microsoft-meta-ramp-up-spending

Fortune — A huge chunk of U.S. GDP growth is being kept alive by AI spending ‘with no guaranteed return’ (December 2025) https://fortune.com/2025/12/23/us-gdp-alive-by-ai-capex/