ReadAboutAI.com Anniversary Week: Day 4 – AI Everyday Expansion

A look back: the most relevant articles of the past year on everyday AI use.

Anniversary Week, Day 4: AI Moved From Experiment to Everyday Work

For most of the past decade, AI in the workplace was a project — something that lived in a lab, a pilot program, or a PowerPoint deck. What the coverage of the past eighteen months showed, consistently and across sources, is that this changed. AI moved into the daily operations of real organizations: into customer service queues, legal documentation, physician notes, HR workflows, and attorney bios. The question stopped being whether AI could work in business settings and became whether organizations were building the right conditions for it to work well.

The answer, just as consistently, was: not yet. What changed was not the technology’s readiness. What changed was the visibility of the gap between where AI was deployed and where the organizational capability to use it actually stood. A year ago, the conversation was about adoption rates. Today, it is about the distance between what leaders believe is happening and what employees experience on the ground — and about the very real costs of closing that gap too slowly, or not at all.

The pattern that kept returning in this coverage was a version of the same finding: organizations that treated AI as a technology purchase underperformed those that treated it as an organizational design question. The tools were not the bottleneck. The structures, expectations, training investments, governance habits, and workforce models built around those tools were. That gap — between deployment and capability — is where most of the meaningful action in this theme took place.

WHAT CHANGED IN ONE YEAR

- AI use roughly doubled across the U.S. workforce between mid-2024 and mid-2025, according to Gallup — but daily use remains at just 10%, and adoption is heavily concentrated among senior workers and knowledge-intensive roles.

- Entry-level employment in AI-exposed roles has declined 16% since late 2022, according to Stanford research using ADP payroll data. This is the first data-grounded evidence of measurable displacement, and the decline is invisible in aggregate unemployment figures.

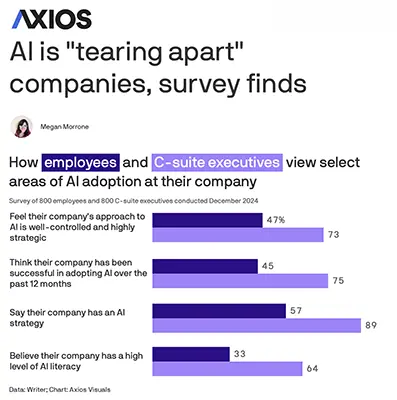

- The gap between executive and employee perception of AI rollouts became hard to ignore. A vendor-commissioned survey by Writer found that 75% of C-suite leaders called their AI adoption successful; fewer than half of employees at those same companies agreed.

- High-profile “AI-first” commitments began to unravel publicly. Klarna reversed its customer service automation strategy. Duolingo faced sustained backlash. The cost of aggressive substitution — in quality and reputation — became harder to ignore.

- Agentic AI (systems that take autonomous action rather than only generating text) moved from concept onto enterprise planning horizons, with Gartner forecasting that more than 40% of such projects will be cancelled by 2027 due to cost, inaccuracy, or governance failures.

Summary by ReadAboutAI.com

AI Skills Demand Has Doubled — But Employers Want Human Judgment Most

Demand for AI-related skills is up 109% since last year. What that means for you

Fast Company | Jared Lindzon | February 16, 2026

TL;DR: Demand for AI-related skills has surged over 100% in a year, but the most sought-after qualities in 2026 are human — adaptability, creativity, and judgment — signaling that the near-term AI labor story is augmentation, not replacement.

Executive Summary

Drawing on Upwork’s In-Demand Skills 2026 report and corroborating McKinsey research, this piece argues that the AI-driven labor market disruption is taking a different shape than many feared. AI skill demand has more than doubled year-over-year, but the fastest-growing categories are application and integration — using AI within existing workflows — not AI development or model building. AI video and content creation skills saw the largest jump, followed by AI integration into business processes and AI data annotation.

Simultaneously, nearly half of surveyed employers are placing a premium on non-automatable human capabilities: creativity, emotional intelligence, learning agility, and adaptability. The McKinsey research cited offers a useful framework: roughly 70% of common workplace skills can be enhanced by AI but still require human expertise; 12% remain entirely human; just 18% can be fully handed over to machines. The implication is that the majority of workplace skills are not being replaced — they’re being reshaped.

The piece acknowledges — but doesn’t fully stress-test — the dynamic of employers pausing hiring while they assess AI’s impact, then re-entering the market once they understand what skills they need. That hiring pause is real and has real costs for workers and teams navigating role uncertainty. The optimistic framing of “augmentation over replacement” is directionally supported by the data cited, but should not be taken as settled — the pace of AI capability development may outrun these projections.

Relevance for Business

For SMB leaders managing teams and making hiring decisions, the signal is practical: technical AI expertise is not the primary hiring priority — the ability to work effectively with AI tools while applying human judgment is. This reframes upskilling investment. Rather than sending staff to technical AI training, the higher-value investment may be in developing critical thinking, contextual reasoning, and workflow redesign capabilities. On hiring, candidates who demonstrate adaptability and applied AI fluency are becoming more valuable than those with narrow technical credentials.

Calls to Action

🔹 Audit your current team’s AI fluency — not whether they can build AI tools, but whether they’re using available tools effectively in their daily work.

🔹 Reframe your upskilling investment toward applied AI use and judgment-dependent skills, not technical AI development unless your business requires it.

🔹 Update job descriptions and hiring criteria to reflect demand for adaptability, AI-assisted workflow competency, and applied critical thinking.

🔹 Use the McKinsey framework (70% enhanced / 12% human-only / 18% automatable) as a rough internal lens when evaluating which roles and tasks to redesign.

🔹 Monitor AI capability advances quarterly — the augmentation picture is likely to shift as model capabilities expand, and planning assumptions should be revisited regularly.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91492708/demand-for-ai-related-skills-is-up-109-since-last-year-what-that-means-for-you: Day 4: May 23, 2026

AI at Home: Real People, Real Time Savings — and a Research Base to Back It Up

The Wall Street Journal | Julie Jargon | March 28, 2026

TL;DR: Academic research and real-world examples confirm that AI tools are delivering measurable personal productivity gains at home — a signal that employee expectations about AI at work will accelerate.

Executive Summary

A UCLA/Stanford/USC study analyzing household internet browsing data from 2021–2024 found that people using AI tools gained measurable free time. The WSJ profiles several individuals deploying AI agents for tasks that would otherwise consume hours: comparing health insurance plans, managing grocery orders, coordinating household labor, and building personalized fitness coaching. The use cases span a wide range — from simple automation (grocery ordering) to more complex agent-to-agent coordination (AI calendars negotiating a date night).

What makes this notable is the qualitative shift. Early AI productivity narratives focused on screen time substitution. The individuals profiled here describe AI enabling them to reclaim time for physical and social activities — not just more scrolling. One researcher summarized the dynamic well: the data shows people getting things done faster, though whether they redirect that time toward genuinely enriching activities varies.

The risk of over-indexing on these anecdotes is real — the article profiles a narrow slice of technically sophisticated, motivated early adopters. The research base, while directionally useful, does not yet generalize to all demographics or use patterns.

Relevance for Business

The gap between consumer AI fluency and workplace AI adoption is closing. Employees who are using AI agents at home to manage insurance, groceries, fitness, and chores will arrive at work expecting similar tools — and will grow impatient when workplace systems lag behind. For SMB leaders, this creates both an opportunity and a pressure point: the internal case for AI adoption is getting easier to make, but so is the potential for talent friction if tools aren’t provided.

There is also an emerging competitive signal: businesses that help employees use AI to reduce routine cognitive load — not just automate back-office tasks — will likely see morale and retention benefits alongside productivity gains.

Calls to Action

🔹 Audit your current AI tooling against what employees are already doing on their own. The gap between personal and professional AI access is a retention and morale risk.

🔹 Pilot AI for high-friction employee tasks — benefits comparison, scheduling, research synthesis — where time savings are measurable and adoption resistance is low.

🔹 Don’t over-read the anecdotes. These are early adopters. Design AI rollouts around your actual workforce capabilities, not the most technically sophisticated examples.

🔹 Monitor the research. This UCLA/Stanford/USC study is one of the first to use behavioral data (not self-report) to measure personal AI productivity. Watch for follow-up research with broader samples.

🔹 Consider AI literacy as a workplace benefit. As consumer AI tools mature, offering structured onboarding or access — rather than waiting for organic adoption — positions you as an employer ahead of the curve.

Summary by ReadAboutAI.com

https://www.wsj.com/tech/ai/the-people-who-are-using-ai-at-home-to-free-up-their-time-30940cec: Day 4: May 23, 2026

Why AI Backlash Is a Leadership Problem — Not a Tech One

TechTarget | Alison Roller | March 24, 2026

TL;DR: Employee and customer resistance to AI adoption is primarily a trust and accountability failure by leadership — not a technology failure — and organizations that treat it as an IT problem will accelerate the very resistance they are trying to overcome.

Executive Summary

This is a practitioner-oriented feature drawing on CTO and CIO perspectives. The core argument is well-supported and analytically sound: 44% of respondents in an Edelman Trust Barometer report described themselves as skeptical of businesses’ AI use, and over 40% of organizations cite trust, ethics, and legal concerns as top barriers to AI implementation (TEKsystems). The resistance isn’t primarily about AI being misunderstood — it’s about leadership failures in communication, accountability, and governance design.

The article identifies five leadership gaps that consistently amplify AI backlash: lack of clear ownership, rushed integration that outpaces governance, poor communication about intent and impact, failure to address displacement and surveillance fears, and treating AI as an IT project rather than a business transformation. The prescription is constructive: frame AI initiatives around business outcomes rather than technology; establish human accountability for AI-assisted decisions; create genuine feedback loops; and build observability into AI workflows before expanding them. One framing is particularly sharp: “You can’t automate accountability.” AI can inform decisions; humans must own them.

The piece does not offer original research; it synthesizes practitioner quotes and survey data. The framing, however, is accurate and actionable. Only 22% of organizations prioritize change management as part of their AI transformation agenda (TEKsystems), which helps explain why technically sound deployments repeatedly fail on adoption.

Relevance for Business

For SMB leaders, this is directly executable guidance. The failure modes described — rolling out tools without governance, letting IT own what is really a culture problem, ignoring workforce fears — are common at every company size. SMBs often face an additional risk: fewer resources to dedicate to change management means backlash can move faster and harder than at large enterprises. The key insight for smaller organizations: the speed of rollout and the quality of communication are more predictive of adoption success than the quality of the tool itself. If your team is resistant to AI tools you’ve already deployed, the problem is almost certainly leadership and communication — not the technology.

Calls to Action

🔹 Assign explicit human ownership to every AI-assisted workflow or decision in your organization — document who is accountable when the AI output is wrong.

🔹 Slow down rollouts that have outpaced governance — establish acceptable use boundaries, data handling rules, and incident response before expanding AI access.

🔹 Create a structured feedback channel for employees to raise AI concerns, and visibly act on it — performative listening accelerates distrust.

🔹 Reframe AI initiatives internally around workflow outcomes and employee benefit, not efficiency metrics and cost reduction.

🔹 Treat AI adoption as change management, not a technology deployment — assign a non-IT owner for the human and cultural dimensions.

Summary by ReadAboutAI.com

https://www.techtarget.com/searchcio/feature/Why-AI-backlash-is-a-leadership-problem-not-a-tech-one: Day 4: May 23, 2026

America’s CFOs Say AI Is Coming for Admin Jobs

The Wall Street Journal | March 24, 2026

TL;DR: A survey-based study of ~750 CFOs finds they expect AI to primarily eliminate clerical and administrative roles rather than knowledge work, at least for now. But the erosion of “stepping stone” jobs raises a real workforce planning issue for managers today.

Executive Summary

A working paper published through the National Bureau of Economic Research, based on a survey of approximately 750 CFOs across finance, tech, manufacturing, and professional services, delivers the most grounded near-term picture yet of AI’s workforce impact. The findings: AI had essentially no measurable employment effect in 2025, and most CFOs expect only a modest headcount reduction of roughly 0.4% in 2026 relative to what it otherwise would have been. This is not a wave — it is a measured shift.

The more significant finding is directional: CFOs were twice as likely to say AI would reduce jobs in clerical and administrative roles (bookkeeping, customer service, data entry) as they were to say it would enhance them. For technical, skilled roles, the inverse is true: AI is more likely to complement than replace. This mirrors the pattern economists call “skill-biased technological change,” most recently seen when personal computers hollowed out routine office work in the 1980s and 1990s. Those jobs didn’t disappear overnight, but their share of employment shrank steadily.

A notable split by company size: larger firms (500+ employees) are actively cutting routine roles while keeping technical headcount flat, using AI to extract efficiency. Smaller companies are doing the opposite, keeping routine staff while adding technical workers to pursue growth. The study has one important limitation: it only surveys established companies, missing the job creation that often comes from AI-native startups.

Relevance for Business

This study matters to SMB leaders on two levels. First, workforce planning: the window to reskill or redeploy employees in clerical functions is open now but narrowing. Waiting until roles are clearly redundant creates harder transitions. Second, hiring: roles that were once reliable entry points into organizations — administrative assistants, data clerks, customer service reps — are under structural pressure. Companies need to decide deliberately whether to fill those roles as they turn over, or redesign the workflow.

The size-based divergence is particularly relevant: SMBs that treat AI as a growth accelerator rather than a cost-cutter are in a stronger strategic position, both for talent retention and competitive differentiation. The risk of following large-enterprise playbooks — pure efficiency-through-reduction — is that it hollows out organizational capability and morale without building new capacity.

Calls to Action

🔹 Act now on workforce mapping: Audit which roles in your organization are primarily clerical or routine-cognitive. These are the first to be structurally pressured, and planning now costs less than reactive reductions later.

🔹 Reframe AI deployment as capability-building, not just cost reduction — particularly relevant for SMBs competing against larger, leaner enterprises.

🔹 Review hiring decisions for admin/clerical backfills: Before replacing a departing admin or customer service employee, evaluate whether AI tools can redistribute the workflow.

🔹 Prepare manager-level guidance on how to discuss AI and job security with teams. Employee anxiety is real and affects productivity.

🔹 Monitor: Track the next quarterly edition of the Duke/Atlanta Fed/Richmond Fed CFO survey for updated employment expectations as 2026 progresses.

Summary by ReadAboutAI.com

https://www.wsj.com/tech/ai/ai-admin-job-market-6a1c3436: Day 4: May 23, 2026

Data Privacy, AI Safety Assurances Key to Physician Adoption of AI

TechTarget | March 13, 2026

TL;DR: Physician AI adoption is rising quickly, but trust remains conditional: privacy protections, validated safety, clear liability, and training are becoming the real gatekeepers of sustained healthcare AI use.

Executive Summary

This article reports that physician AI use has increased substantially, with an AMA survey showing adoption rising to 72% in 2026, up sharply from prior years. The most common use cases are practical and workflow-oriented rather than futuristic: summarizing research, generating discharge instructions and notes, documenting billing codes, and creating chart summaries. That matters because it suggests healthcare AI is becoming embedded first through administrative and information tasks, not wholesale clinical autonomy.

At the same time, the survey makes clear that adoption does not equal trust. Physicians remain cautious, with persistent concern about patient privacy, skill loss, liability, and the quality of AI tools. The strongest drivers of future adoption are not broader enthusiasm or more vendor marketing, but data privacy assurances from employers and EHR vendors, plus validation of safety and efficacy by trusted entities and continuous monitoring. Physicians also want more oversight, post-market surveillance, audits, and a clearer voice in implementation decisions.

That is the key executive signal: healthcare AI is no longer blocked primarily by awareness. It is now constrained by governance credibility. What is real now is growing usage in day-to-day clinical work. What remains unresolved is whether organizations can create the governance, training, and accountability structures necessary to scale that usage responsibly.

Relevance for Business

For healthcare organizations, this matters because AI rollout strategies that focus only on productivity gains may stall if clinicians do not trust the privacy, oversight, and liability framework around the tools. The article suggests the next phase of adoption will depend less on feature expansion and more on institutional assurance. Leaders who treat AI as a procurement exercise rather than a governance program may face internal resistance and uneven uptake.

For SMB executives more broadly, the lesson travels beyond healthcare: in regulated sectors, AI adoption increasingly depends on whether users believe the system is safe, reviewable, auditable, and aligned with professional responsibility. Trust architecture may become as important as technical capability.

Calls to Action

🔹 Prioritize privacy and oversight language in vendor selection and internal rollout plans.

🔹 Require evidence of safety, validation, and ongoing monitoring before expanding use cases.

🔹 Build role-specific training, since user confidence and skill preservation are now adoption issues.

🔹 Create clear accountability structures for liability, escalation, and adverse-event review.

🔹 Include frontline professionals in implementation decisions so adoption is shaped by actual workflow needs.

Summary by ReadAboutAI.com

https://www.techtarget.com/healthtechanalytics/news/366640254/Data-privacy-AI-safety-assurances-key-to-physician-adoption-of-AI: Day 4: May 23, 2026

Bridging the Operational AI Gap

MIT Technology Review Insights | In partnership with Celigo | March 4, 2026

⚠ Source flag: This is sponsored content produced by MIT Technology Review Insights in partnership with Celigo, an integration platform vendor. The findings favor integration platforms — Celigo’s core product — and should be read in that context. The survey data and structural observations have independent value; the implicit product recommendation does not.

TL;DR: Most enterprises are still running AI as isolated projects rather than connected systems, and a Gartner forecast cited in the piece suggests that without better operational infrastructure, a significant share of AI initiatives will be cancelled within two years.

Executive Summary

Based on a December 2025 survey of 500 senior IT leaders at mid-to-large U.S. companies, this report identifies a structural gap between AI experimentation and enterprise-wide deployment. Three-quarters of surveyed organizations have at least one AI workflow fully in production — a meaningful improvement over earlier surveys that found almost no operational AI. But the distribution is uneven: most AI success is concentrated in well-defined, already-automated processes. Only a quarter of organizations are succeeding with genuinely new processes.

The report’s most telling finding is organizational: two-thirds of companies lack a dedicated team responsible for maintaining AI workflows. Responsibility is scattered — sometimes with central IT, sometimes with departmental operations, sometimes unclear. This is not a technology gap. It is a governance and accountability gap, and it creates operational fragility as AI moves from pilots into production environments.

The central structural argument — that organizations with enterprise-wide integration platforms see better AI outcomes — aligns with what Celigo sells. That said, the underlying logic holds independently: AI systems that cannot reliably access clean, connected data across an organization will produce inconsistent results. A Gartner forecast referenced in the piece predicts that more than 40% of agentic AI projects (AI systems that take autonomous actions, as opposed to just generating text) will be cancelled by 2027 due to cost overruns, inaccuracy, or governance failures. This figure reflects analyst opinion, not demonstrated outcome data.

Relevance for Business

For SMB executives, the operational accountability finding is the most directly useful. AI projects tend to accumulate without clear ownership: initiated by one team, inherited by another, quietly abandoned when results disappoint. The discipline of assigning explicit maintenance ownership before deployment is a low-cost risk-reduction step that most organizations skip. The Gartner cancellation forecast is worth noting as a directional signal rather than a precise prediction: it reflects how poorly most AI initiatives are currently governed.

Calls to Action

🔹 Evaluate: Before adding any new AI workflow, assign explicit ownership for ongoing maintenance and quality monitoring.

🔹 Assess your data environment: if your key systems do not share data cleanly, AI performance will reflect that fragmentation — regardless of which tools you deploy.

🔹 Monitor the Gartner forecast on agentic AI project cancellations — it is a useful benchmark for setting realistic expectations with your board or leadership team.

🔹 No immediate action required on enterprise integration platforms — but if you are experiencing inconsistent AI results, data connectivity is a likely contributing factor.

🔹 Read this source critically: the integration-platform conclusion reflects Celigo’s commercial interest. The governance and accountability observations are independently valid.

Summary by ReadAboutAI.com

https://www.technologyreview.com/2026/03/04/1133642/bridging-the-operational-ai-gap/: Day 4: May 23, 2026

AI Is “Tearing Apart” Companies, Survey Finds

Axios | Megan Morrone | March 18, 2025

Source: Reporting on a survey of 800 C-suite executives and 800 employees, conducted December 2024 by Writer, an enterprise AI company. This is a vendor-commissioned survey and should be read accordingly — the findings align with Writer’s commercial interest in framing current AI tools as inadequate and its own platform as the alternative.

⚠ Source flag: Vendor-produced survey. The sample was limited to organizations already permitting generative AI use, and employee respondents were required to be active AI users, both factors that skew the results toward more AI-engaged populations than the workforce at large. The directional findings on internal division are consistent with other independent reporting and are worth noting; the specific statistics should be treated as illustrative rather than definitive.

TL;DR: A vendor-commissioned survey finds that executives and employees hold sharply different views of how AI rollouts are going — a division significant enough that half of C-suite leaders describe it as fracturing their organizations.

Executive Summary

The survey’s most striking finding is a perception gap of unusual scale. Among executives, 75% describe their company’s AI adoption over the past year as successful. Among employees at those same organizations, fewer than half agree. The divergence extends further: 89% of C-suite leaders say their company has a coherent AI strategy, while only 57% of employees believe one exists. Whether these gaps reflect genuine disagreement, different information, or simply different definitions of “success” is not resolved by the survey.

The internal friction documented is multi-directional. Executives report frustration: 94% say they are not satisfied with their current AI solutions, and 71% describe AI applications as being built “in a silo.” Employees report frustration of a different kind: around half say that AI-generated outputs they encounter are inaccurate, confusing, or biased. Perhaps most significant operationally, 41% of Millennial and Gen Z employees acknowledge actively resisting their company’s AI strategy by refusing to use tools or outputs. And 35% report paying out of pocket for AI tools they find more effective than what their employer provides.

The article quotes the CEO of Writer (the survey’s sponsor) extensively and ends with an implicit pitch for her company’s product. This framing should be accounted for when weighing the conclusions.

Relevance for Business

Independent of the vendor framing, the gap between executive and employee perception is a real and well-documented phenomenon that appears across multiple independent sources in this Day 4 series. The operational risk is concrete: if employees distrust AI outputs, resist adoption, or route around employer tools with personal subscriptions, the organizational investment in AI is not translating into organizational capability. This is a governance and change management problem, not a technology problem.

Calls to Action

🔹 Evaluate: Survey your own team’s perceptions of your AI rollout — expect the results to differ from leadership’s view.

🔹 If employees are using personal AI tools for work, treat this as a signal of unmet need, not a compliance violation.

🔹 Assess whether AI tools deployed internally meet a basic quality bar — inaccurate or unreliable outputs generate distrust that extends beyond the specific tool.

🔹 Monitor: The 41% active-resistance figure is directional; even a fraction of that level of quiet non-adoption would materially undermine an AI deployment.

🔹 Read this source critically: the survey was commissioned by Writer, and the conclusions align with its commercial interest. Cross-reference with independent sources before drawing firm conclusions.

Summary by ReadAboutAI.com

https://www.axios.com/2025/03/18/enterprise-ai-tension-workers-execs: Day 4: May 23, 2026

Behind the Curtain: A White-Collar Bloodbath

Axios | Jim VandeHei and Mike Allen | May 28, 2025

Source: Reported opinion column (“Behind the Curtain” is an Axios editorial series). The central interview is with Dario Amodei, CEO of Anthropic, a leading AI development company. This is an important source to handle carefully: Amodei is simultaneously one of the most informed people alive on this topic and one of its principal commercial beneficiaries. His warnings are not disinterested. They should be taken seriously and read critically at the same time.

TL;DR: The CEO of one of the world’s leading AI companies warned, on the record, that AI could eliminate half of entry-level white-collar jobs within five years — and that neither government nor corporate leaders are preparing for it.

Executive Summary

This is the most consequential and the most complicated piece in the Day 4 set. Dario Amodei told Axios that AI could plausibly eliminate half of all entry-level white-collar jobs across technology, finance, law, and consulting within one to five years, potentially driving unemployment to levels not seen in generations. He framed this not as a fringe scenario but as a realistic trajectory that other AI executives share privately but will not say publicly. The reporting reinforces this: VandeHei and Allen report that every CEO they have spoken with across industries is actively working to determine when AI agents can displace human workers at scale.

The mechanism described is specific and worth understanding. Today, most AI use inside companies involves augmentation — AI helping humans complete work. Amodei argues this will shift toward automation — AI completing work independently — and that this shift will happen faster than most people expect. He points to autonomous AI agents (software systems that take sequences of actions without human direction at each step) as the operational vehicle for this displacement. These agents are already operating inside companies; more are in active development.

The article also documents a real irony that readers should register: Amodei is describing the potential harms of technology his company is racing to build and sell. He offers solutions — a proposed “token tax” to redistribute AI-generated wealth, better-informed policymakers, accelerated public awareness — but acknowledges none of these would stop the underlying dynamic. Market forces and competitive pressure, including from China, will continue propelling AI capability regardless of governance choices made in the U.S.

Sam Altman of OpenAI is offered briefly as a counterpoint: technology historically creates more jobs than it destroys, and the prosperity of any given era would have seemed unimaginable to those living through its predecessor.

Relevance for Business

The operative question for SMB executives is not whether Amodei’s worst-case scenario materializes; it is what the intermediate scenario looks like, and whether their organizations are prepared for it. What independent data already shows (including the Stanford study summarized earlier in this series) is that entry-level white-collar employment in AI-exposed roles is declining. The debate is about magnitude and speed, not direction. For leaders responsible for hiring pipelines, talent development, and workforce planning, the question of how to build organizational capability when entry-level work is structurally contracting deserves a place on the strategic agenda now, not after the trend is undeniable.

Calls to Action

🔹 Monitor: Track the Anthropic Economic Index and the Stanford occupational dashboard as they develop — these are among the most credible real-world signals on AI’s labor market effects.

🔹 Evaluate: Review your five-year workforce plan for exposure to entry-level white-collar role displacement — particularly in software, legal, finance, and customer service functions.

🔹 Assign someone in your leadership team to own the AI-and-workforce question explicitly — not as an HR issue alone, but as a strategic planning issue.

🔹 Read Amodei’s warning seriously but not uncritically: he is both an unusually informed source and a commercially interested one. Distinguish his factual claims from his projections, and his projections from his proposed solutions.

🔹 No immediate operational action required — but this piece warrants a structured leadership conversation about AI’s medium-term effect on your workforce model.

Summary by ReadAboutAI.com

https://www.axios.com/2025/05/28/ai-jobs-white-collar-unemployment-anthropic: Day 4: May 23, 2026

Going ‘AI First’ Appears to Be Backfiring on Klarna and Duolingo

Fast Company | Chris Morris | May 12, 2025

TL;DR: Two companies that publicly committed to replacing human workers with AI — Klarna and Duolingo — ran into quality failures, customer backlash, and reputational damage that forced a visible retreat or rapid communications repair.

Executive Summary

Klarna and Duolingo each staked a high-profile position as leaders of the “AI-first” workplace. Klarna’s CEO had announced years earlier that the company wanted to be OpenAI’s “favorite guinea pig,” and Klarna instituted a hiring freeze while replacing as many workers as possible with AI. By mid-2025, the company was reversing course, announcing a hiring push focused on human customer service representatives, with the CEO acknowledging that cost had been weighted too heavily against quality. Separately, Duolingo announced it would stop using contractors for work AI could handle. The social media backlash was swift and sustained, concentrated on Duolingo’s consumer-facing channels.

The article documents the tension between two real phenomena: genuine cost efficiency gains from AI substitution (Klarna had claimed its AI was doing the work of hundreds of customer service agents) and the reputational and quality costs that accumulate when the substitution is visible and abrupt. Klarna’s CEO is quoted acknowledging that quality degraded. Duolingo’s representative, by contrast, argues the public misunderstood what “AI-first” meant — that AI tools are used by human experts, not in place of them.

The broader context the article includes is worth noting. A World Economic Forum study found that 40% of employers expect to reduce workforce headcount through AI automation. And Harvard Business School research cited in the piece points to a consistent psychological resistance: people resist AI tools more strongly when they appear to fully replace human agency rather than assist it. That distinction — augmentation versus replacement — turns out to matter not just to employees but to customers.

Relevance for Business

The signal here is not that AI-for-cost-reduction is wrong. It is that the communication strategy and sequencing matter as much as the operational decision itself. Klarna’s reversal was not primarily a technology failure — it was a quality-management failure that became a public-relations problem. For SMB executives, the risk of reputational exposure from aggressive AI substitution in customer-facing roles is real and often underweighted in cost-benefit calculations. Customers notice when service quality drops, and they now have immediate, visible channels to say so.

Calls to Action

🔹 If using AI in customer-facing or contractor-heavy workflows, assess what quality signals you are monitoring — and who is accountable if quality degrades.

🔹 Evaluate how your AI substitution decisions would read publicly if announced — not to avoid them, but to prepare the right framing and sequence.

🔹 Distinguish internally between augmentation (AI assisting human workers) and substitution (AI replacing them) — and be clear about which you are doing before communicating externally.

🔹 Monitor: Consumer sentiment around AI labor displacement is a real and growing variable in brand reputation, not just an internal HR issue.

🔹 No immediate action required — but treat the Klarna and Duolingo cases as cautionary templates, not as outliers.

Summary by ReadAboutAI.com

https://www.fastcompany.com/91332763/going-ai-first-appears-to-be-backfiring-on-klarna-and-duolingo: Day 4: May 23, 2026

Want AI-Driven Productivity? Redesign Work

MIT Sloan Management Review | Ravin Jesuthasan | May 1, 2025

Note: This article is behind a paywall. The copy available for this summary covered only the introduction and framing; the full piece requires a subscription. This summary is based only on that available text.

TL;DR: AI will not deliver the productivity gains organizations expect unless they first dismantle and rebuild the underlying structures of work — layering AI onto existing job roles is the wrong approach.

Executive Summary

The article’s central argument is that most organizations are misapplying AI: they are adding it on top of existing roles and processes rather than using it as a reason to rethink how work is organized. The author, a senior partner at a major consulting firm, frames this as the difference between a “tech-forward” and a “work-backward” approach. The tech-forward approach asks: how can AI help people do their current jobs faster? The work-backward approach asks: given what AI can now do, which tasks should still be done by humans, which should be handed off, and which should be redesigned entirely?

The piece argues that work has long been structured around rigid job definitions — a model that made sense when technology could only automate narrow, mechanical tasks. Generative AI changes this because it operates at human scale, across language, reasoning, and knowledge work. The mismatch between old organizational structures and new AI capabilities is where productivity gains are being lost. Organizations that reorganize around tasks — rather than titles — will capture more value.

The author proposes a three-step discipline: deconstruct existing work into component tasks; redeploy those tasks to the best available resource (human, machine, or hybrid); then reconstruct a new operating model from those decisions. This is organizational design work, not technology work.

Because the author writes from a consulting perspective, the framing should be read as strategic opinion, not independent research.

Relevance for Business

For SMB executives, the practical implication is uncomfortable: the question is not which software to buy, but whether your organizational structure is capable of absorbing AI at all. Companies that skip the redesign step — deploying AI tools without rethinking workflows — are likely to see cost without corresponding output gain. This is the hidden execution risk in most AI rollouts: the org chart was not built for the tool being added to it.

Calls to Action

🔹 Evaluate: Before your next AI investment, map the specific tasks it affects — not the roles it touches.

🔹 Identify two or three workflows where human and AI tasks are currently blurred or duplicated, and clarify who (or what) should own each step.

🔹 Treat AI adoption as an organizational design question, not only a technology procurement decision.

🔹 Monitor: Track whether AI deployments are generating output gains or just shifting where time is spent.

🔹 No immediate action required on wholesale restructuring — but begin the diagnostic work now.

Summary by ReadAboutAI.com

https://sloanreview.mit.edu/article/want-ai-driven-productivity-redesign-work/: Day 4: May 23, 2026

AI IS ALREADY TAKING JOBS AWAY FROM ENTRY-LEVEL WORKERS

Axios | Ina Fried | August 26, 2025

Source: Reporting on a Stanford study using ADP payroll data. This is journalism covering academic research built on actual payroll records, a stronger evidentiary standard than survey data or analyst opinion.

TL;DR: A Stanford study using real payroll data found a 16% drop in employment for workers ages 22–25 in AI-exposed roles since late 2022 — the first measurable, data-grounded evidence of AI displacing entry-level jobs at scale.

Executive Summary

This article carries more evidentiary weight than most AI-and-jobs coverage because it is based on ADP payroll data — actual employment records — rather than surveys or projections. Stanford researchers found that employment among workers aged 22–25 in roles directly exposed to AI substitution (software development and customer support are the two cited examples) declined by 16% since late 2022. The speed of this shift is what distinguishes it: economist Erik Brynjolfsson, one of the researchers, described it as the fastest and broadest labor market change he has observed, comparable in pace only to the COVID-19 shift to remote work.

One methodological detail stands out: when the researchers looked at aggregate job data, the AI impact was not visible. It only became clear when they segmented by job type and experience level. This suggests that aggregate employment figures will mask AI’s labor impact for some time, and that waiting for broad unemployment numbers to confirm the trend may mean acting too late.

Brynjolfsson offers a structural explanation for why entry-level workers are disproportionately affected: AI, like a new graduate, has broad knowledge but lacks the tacit, unwritten expertise that experienced workers accumulate over years. The implication is that more experienced workers are, for now, relatively protected — but this protection is not permanent. The researchers are developing a near-real-time economic dashboard to track AI’s effect on hiring and wages by occupation.

Relevance for Business

The most consequential second-order effect in this piece is one the article names directly: if entry-level positions disappear, there will be no pipeline of experienced workers in five to ten years. For SMB executives, this creates two concerns worth addressing now. First, if competitors are shrinking entry-level headcount through AI, the pool of experienced talent you will later want to hire from may shrink with it. Second, if your own AI deployment is quietly reducing entry-level work, you may be eliminating the positions that develop your future mid-level talent.

Calls to Action

🔹 Evaluate: If your organization has reduced entry-level hiring in the past two years, assess whether this is a deliberate strategic choice or an unintended consequence of AI tool adoption.

🔹 Monitor the Stanford dashboard when it becomes available — it will provide near-real-time hiring and wage data by occupation, a more reliable signal than headline unemployment figures.

🔹 If you rely on entry-level roles as a talent pipeline, assess the medium-term supply risk to your own workforce development.

🔹 Distinguish between roles where AI substitution is deliberate and monitored versus roles where it is happening passively and without governance.

🔹 No immediate action required on policy — but begin internal tracking of how AI deployment correlates with headcount changes by role level.

Summary by ReadAboutAI.com

https://www.axios.com/2025/08/26/ai-entry-level-jobs: Day 4: May 23, 2026

Companies Need to Take a Human-Centric Approach to AI, Experts Say

Fortune | Angelica Ang | November 17, 2025

TL;DR: Most organizations are still in the experimentation phase with AI, and the bottleneck is not the technology — it is the absence of sustained, intentional investment in people.

Executive Summary

A McKinsey report cited in the article found that roughly two-thirds of companies have yet to move AI beyond limited experimentation. A parallel Accenture survey put the number even higher: around 85% of organizations remain at the pilot or early-adoption stage, applying AI only to contained use cases. Just 8% qualify as what Accenture calls “front-runners” — organizations pursuing AI end-to-end with genuine workforce redesign underway.

The consistent message from executives at Johnson & Johnson, Standard Chartered, and Accenture is that AI literacy is the missing link between experimentation and results. J&J’s experience is instructive: after broadly deploying AI tools, the company found that only 15% of its use cases were generating 90% of the value, leaving the other 85% to account for roughly a tenth. That kind of concentration argues for discipline in selection, not breadth of deployment.

Standard Chartered’s approach — piloting AI internally on HR processes before broader rollout — is worth noting as a sequencing model. It created organizational dialogue and surfaced employee sentiment before the stakes were higher. One academic voice in the piece, a professor from the University of South Australia, adds a useful caution: some human-facing functions, particularly performance evaluation and feedback, should remain primarily human-led, both for quality and employee trust reasons.

Relevance for Business

For SMB executives, the cost implication in this article is concrete: an Accenture managing director quoted here suggests organizations should spend three dollars on people for every dollar spent on technology. That ratio is rarely reflected in actual AI budgets. The risk for smaller organizations is spending on tools while underinvesting in the capability to use them, generating visible costs and invisible returns. The J&J finding about value concentration also applies at smaller scale: a handful of well-chosen use cases is likely to outperform a scattered deployment.

Calls to Action

🔹 Audit your current AI use cases against actual value generated — not just adoption rates or usage hours.

🔹 Before expanding AI deployment, assess whether your team has the foundational literacy to use existing tools well.

🔹 If piloting AI in people-facing processes (performance reviews, hiring support), build in human review as a structural requirement, not an afterthought.

🔹 Monitor: Watch whether the ratio of people investment to tech investment in your AI budget reflects realistic expectations for return.

🔹 Treat the 15%-drives-90% finding as a diagnostic prompt — not all use cases are worth scaling.

Summary by ReadAboutAI.com

https://fortune.com/2025/11/17/ai-adoption-human-centered-approach-literacy-accenture/: Day 4: May 23, 2026

THE 3 TRENDS THAT DOMINATED COMPANIES’ AI ROLLOUTS IN 2025

Fortune | Sage Lazzaro | December 15, 2025

Source: Reported opinion piece based on the author’s interviews with business leaders and consultants throughout 2025. This is journalism drawing on first-person reporting, not survey data or peer-reviewed research.

TL;DR: After a year of frontline observation, a consistent pattern emerged: AI initiatives succeed when they start with a defined business problem, and fail when they start with the technology.

Executive Summary

This piece synthesizes a year of reported conversations with executives, consultants, and practitioners into three durable patterns. The first and most emphasized: companies that led with AI as a goal tended to produce expensive experiments that solved nothing, while those that started with a specific operational problem and worked backward to the solution tended to find genuine value. The construction equipment rental company BigRentz and industrial conglomerate Honeywell are offered as examples of disciplined problem-first thinking. An unnamed client of consulting firm West Monroe is the cautionary opposite: it tasked a data-science team with finding AI use cases and got interesting but useless output.

The second pattern is that back-end, administrative applications — unglamorous by definition — are consistently producing the most measurable results. A law firm example is specific: using AI to rewrite 1,600 attorney bios during a merger saved a reported $200,000 in staff time. Healthcare examples include AI-assisted transcription of physician-patient conversations, reducing documentation burden. The pattern holds across sectors: operational friction, not frontier innovation, is where AI is currently delivering.

The third pattern concerns people management, and it carries a warning for leaders who are overselling AI capabilities internally. The article documents frustration among software engineers in particular, who report feeling burdened by inflated productivity expectations from executives who do not understand day-to-day implementation realities. The piece also raises a longer-range concern: if AI handles entry-level work, the next generation of experienced professionals may not have the foundational period they need to develop judgment.

Relevance for Business

This article functions well as a diagnostic frame. The three patterns it identifies — problem-first versus AI-first, back-end value, and people friction — map directly onto where SMB AI initiatives tend to succeed or break down. The most common and costly mistake described is deploying AI capability without a clear theory of which problem it solves and how that solution will be measured. For SMBs with limited AI budgets, that failure mode is more consequential than it is for large enterprises with room to absorb failed experiments.

Calls to Action

🔹 Act: For any current or planned AI initiative, require a written problem statement before procurement — not after.

🔹 Evaluate your current portfolio of AI use cases: which ones have measurable outcomes attached, and which are exploratory without clear success criteria?

🔹 Identify two or three back-end administrative workflows where AI could reduce friction — these are lower-risk starting points with clearer ROI.

🔹 Audit internal communications about AI to ensure expectations set by leadership match what implementation teams say is realistic.

🔹 Monitor: If AI is reducing entry-level task volume in your organization, assess the downstream effect on talent development.

Summary by ReadAboutAI.com

https://fortune.com/2025/12/15/three-trends-companies-ai-enterprise-tech-aiq/: Day 4: May 23, 2026

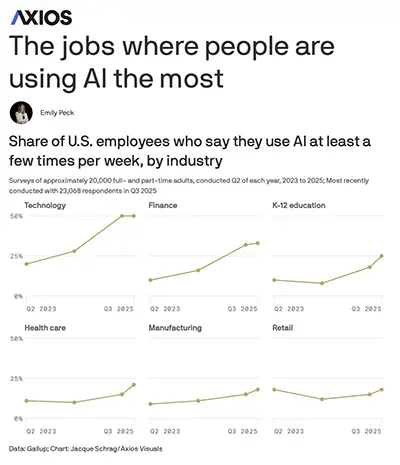

THE JOBS WHERE PEOPLE ARE USING AI THE MOST

Axios | Emily Peck | December 15, 2025

Source: Reporting on Gallup survey data (approximately 23,000 respondents, Q3 2025). This is journalism based on third-party survey data, not an independent study.

TL;DR: Gallup data shows workplace AI adoption rising sharply, with weekly use nearly doubling in roughly 18 months, but use remains heavily concentrated in knowledge-intensive roles, and most workers are still not regular AI users.

Executive Summary

The headline number is striking: the share of U.S. workers using AI at least a few times a year jumped from 27% to 45% between mid-2024 and mid-2025. Weekly use nearly doubled over the same period. But the aggregate figure obscures a significant stratification by role and industry. Among technology workers, roughly half report using AI at least several times per week. Finance and professional services workers follow at around a third. In manufacturing, retail, and healthcare, the numbers are materially lower — in the high teens to low twenties.

Two patterns in the data deserve attention from leaders. First, the higher a worker’s seniority, the more likely they are to be using AI — which means adoption is not trickling down through organizations on its own. Second, chatbots remain the dominant use case, accounting for the majority of AI tool use across all categories. The article draws a comparison to the early adoption curve of internet search tools, while noting that AI has not yet reached the same breadth of workplace penetration.

The 10% daily-use figure is the most grounding single data point in the piece: despite all the noise around AI, only one in ten workers uses it as a routine daily tool. That is a meaningful base, but still a narrow share of the workforce.

Relevance for Business

For SMB executives, the stratification finding is the most operationally relevant. If adoption is concentrated at senior levels, organizations are not capturing productivity gains across the workforce — they are concentrating capability at the top, where it has the least leverage on volume output. The gap between executive AI use and frontline AI use is not a communications problem. It is a training, access, and workflow design problem.

Calls to Action

🔹 Monitor: Track AI adoption rates by level and function within your organization — not just overall usage.

🔹 Evaluate whether tools are accessible and relevant to frontline workers, not only knowledge workers and senior staff.

🔹 Do not treat chatbot adoption as evidence of meaningful AI integration — it is a starting point, not a destination.

🔹 No immediate action required on the broader adoption statistics — but use the Gallup benchmarks to calibrate where your organization sits relative to its peers.

🔹 If adoption is concentrated at the top of your org, treat this as a workflow and training gap, not a technology gap.

Summary by ReadAboutAI.com

https://www.axios.com/2025/12/15/ai-chatgpt-jobs: Day 4: May 23, 2026

Making Generative AI Work in the Enterprise

MIT Sloan Ideas Made to Matter (digest of MIT Sloan Management Review research) | Brian Eastwood | July 16, 2024

TL;DR: Getting AI to work in organizations requires changes to management structure, quality-verification habits, and governance philosophy — none of which come bundled with the technology.

Executive Summary

This piece synthesizes three distinct findings from MIT Sloan Management Review research. The first draws on work by Wharton professor Ethan Mollick, who argues that generative AI is categorically different from previous technologies because it operates at human cognitive scale — meaning it disrupts coordination, not just execution. His three principles for adaptation are worth distilling: surface who in your organization is already using AI (at any level); give teams the latitude to develop their own working methods rather than centralizing AI governance; and plan for the pace of AI model development to outrun any fixed policy you set today.

The second finding comes from MIT research into how users interact with AI-generated text. The study found that highlighting likely errors in AI outputs — a form of deliberate friction — helped users catch more mistakes without meaningfully slowing them down. The implication for managers is specific: people tend to over-trust AI outputs by default, and that trust is not self-correcting without structural prompts. The researchers call this “beneficial friction” — designed interruptions that improve accuracy.

The third section examines the Mayo Clinic as an AI adoption case study. The key variable in Mayo’s approach is a shift from governance-first to enablement-first: rather than dictating what AI can and cannot do, Mayo gives clinical staff the tools to build and test AI applications in their own domains, with the staff themselves responsible for data quality. A 60-person internal team supports this infrastructure. The lesson is not that governance is unimportant — it is that overly restrictive governance becomes an adoption barrier before AI has had a chance to prove its value.

Relevance for Business

All three findings in this piece address problems that SMB executives are more likely to encounter than they expect: shadow AI use among employees, unchecked errors in AI-generated work product, and governance structures that throttle adoption before it starts. The most actionable signal: assume your team is already using AI tools without a formal policy in place, and design around that reality rather than against it.

Calls to Action

🔹 Act: Survey or informally assess which AI tools your team is already using — before setting policy.

🔹 Evaluate: If AI outputs are used in client-facing or decision-relevant work, design a review checkpoint into the workflow — do not rely on individuals to self-correct.

🔹 Review your current AI governance posture: is it primarily restrictive, or does it enable structured experimentation?

🔹 Give individual teams or departments room to develop their own AI working norms, rather than waiting for centralized policy.

🔹 Monitor: Check whether the Mayo model’s core principle holds in your own teams: when domain experts own their AI tools, quality tends to improve.

Summary by ReadAboutAI.com

https://mitsloan.mit.edu/ideas-made-to-matter/making-generative-ai-work-enterprise-new-mit-sloan-management-review: Day 4: May 23, 2026

Sources

Experiment to Everyday Work: 25 Candidate Articles | Links Only

Harvard Business Review

- “AI Doesn’t Reduce Work — It Intensifies It” (Feb. 2026) https://hbr.org/2026/02/ai-doesnt-reduce-work-it-intensifies-it

- “Research: The Hidden Penalty of Using AI at Work” (Aug. 2025) https://hbr.org/2025/08/research-the-hidden-penalty-of-using-ai-at-work

- “AI-Generated ‘Workslop’ Is Destroying Productivity” (Sept. 2025) https://hbr.org/2025/09/ai-generated-workslop-is-destroying-productivity

- “How Work Changed in 2025, According to HBR Readers” (Dec. 2025) https://hbr.org/2025/12/how-work-changed-in-2025-according-to-hbr-readers

- “AI Is Changing How We Learn at Work” (Dec. 2025) https://hbr.org/2025/12/ai-is-changing-how-we-learn-at-work

MIT Sloan Management Review / MIT Sloan

- “Want AI-Driven Productivity? Redesign Work” (MIT Sloan Management Review, May 2025) https://sloanreview.mit.edu/article/want-ai-driven-productivity-redesign-work/

- “Making Generative AI Work in the Enterprise” (MIT Sloan, July 2024) https://mitsloan.mit.edu/ideas-made-to-matter/making-generative-ai-work-enterprise-new-mit-sloan-management-review

- “Generative AI Changes How Employees Spend Their Time” (MIT Sloan, March 2026) https://mitsloan.mit.edu/ideas-made-to-matter/generative-ai-changes-how-employees-spend-their-time

- “How Generative AI Can Boost Highly Skilled Workers’ Productivity” (MIT Sloan, Jan. 2026) https://mitsloan.mit.edu/ideas-made-to-matter/how-generative-ai-can-boost-highly-skilled-workers-productivity

- “The Productivity Paradox of AI Adoption in Manufacturing Firms” (MIT Sloan, Jan. 2026) https://mitsloan.mit.edu/ideas-made-to-matter/productivity-paradox-ai-adoption-manufacturing-firms

- “How Artificial Intelligence Impacts the US Labor Market” (MIT Sloan, Oct. 2025) https://mitsloan.mit.edu/ideas-made-to-matter/how-artificial-intelligence-impacts-us-labor-market

- “What 2 MIT Experts Are Thinking About AI and Work” (MIT Sloan, March 2026) https://mitsloan.mit.edu/ideas-made-to-matter/what-2-mit-experts-are-thinking-about-ai-and-work

- “AI Implementation Strategies: 4 Insights from MIT Sloan Management Review” (MIT Sloan, Oct. 2025) https://mitsloan.mit.edu/ideas-made-to-matter/ai-implementation-strategies-4-insights-mit-sloan-management-review

- “Reskilling the Workforce With AI: Harvard Business School’s Raffaella Sadun” (MIT Sloan Management Review) https://sloanreview.mit.edu/audio/reskilling-the-workforce-with-ai-harvard-business-schools-raffaella-sadun/

Fortune

- “The 3 Trends That Dominated Companies’ AI Rollouts in 2025” (Dec. 2025) https://fortune.com/2025/12/15/three-trends-companies-ai-enterprise-tech-aiq/

- “Companies Must Take a Human-Centric Approach to AI Adoption” (Nov. 2025) https://fortune.com/2025/11/17/ai-adoption-human-centered-approach-literacy-accenture/

- “‘Human Skills’ Are at a Premium Again Now That Big Companies Are Backpedaling on Error-Prone AI” (Sept. 2025) https://fortune.com/2025/09/10/ai-adoption-declines-big-companies-human-skills-premium-education-gen-z/

- “Klarna’s CEO Warns AI Will Replace Human Workers — and His Company Is Already Living It” (Feb. 2025) https://fortune.com/2025/02/03/klarna-ceo-ai-replacing-human-workers/

Axios

- “ChatGPT in the Workplace: The Jobs Where People Use AI the Most” (Dec. 2025) https://www.axios.com/2025/12/15/ai-chatgpt-jobs

- “AI Is Already Taking Jobs Away From Entry-Level Workers” (Aug. 2025) https://www.axios.com/2025/08/26/ai-entry-level-jobs

- “AI Rollout Divides Execs and Staff, Survey Finds” (March 2025) https://www.axios.com/2025/03/18/enterprise-ai-tension-workers-execs

- “Behind the Curtain: Top AI CEO Foresees White-Collar Bloodbath” (May 2025) https://www.axios.com/2025/05/28/ai-jobs-white-collar-unemployment-anthropic

- “MIT Study Challenges AI Job Apocalypse Narrative” (April 2026) https://www.axios.com/2026/04/02/ai-jobs-mit-study-workforce-impact

Fast Company

- “Going ‘AI First’ Appears to Be Backfiring on Klarna and Duolingo” (May 2025) https://www.fastcompany.com/91332763/going-ai-first-appears-to-be-backfiring-on-klarna-and-duolingo

MIT Technology Review

- “Bridging the Operational AI Gap” (March 2026) https://www.technologyreview.com/2026/03/04/1133642/bridging-the-operational-ai-gap/

List provided by ReadAboutAI.com

Closing: Anniversary Week, Day 4: AI Moved From Experiment to Everyday Work

‘WHY THIS MATTERED MORE THAN IT FIRST APPEARED’

The story most people missed in this theme was not about AI capability. It was about organizational readiness — and the cost of pretending the two move at the same speed. Across ten distinct sources spanning consulting research, payroll data, vendor surveys, and CEO interviews, the same structural failure repeated: organizations deployed tools faster than they built the conditions for those tools to generate real value. The 15%-drives-90% finding from Johnson & Johnson’s experience was not an outlier. The underlying pattern, with value concentrated in a few well-chosen use cases while broad deployment added cost, recurred across industries and at different scales. What the coverage collectively revealed is that AI in the workplace is not primarily a technology story. It is a management story — about who owns the deployment, who verifies the output, who carries accountability when results do not materialize, and what happens to the workers who absorb the consequences when those questions go unanswered.

The Stanford occupational dashboard and the Anthropic Economic Index, both still evolving, are likely to provide more reliable near-real-time signals on AI’s labor effects than surveys can. Watch for whether entry-level employment in AI-exposed roles stabilizes, contracts further, or begins to bifurcate by sector. The more consequential question over the next twelve months is not how many workers use AI — it is whether the organizations deploying it at scale are building the governance, training, and accountability structures that determine whether it creates value or quietly accumulates risk.

A year ago, the dominant question around AI at work was whether employees would use it at all. Today, the question has shifted — and the shift is the story. Across knowledge work, the evidence from 18 months of coverage points to a transition that is real but uneven: AI moved from something companies announced to something workers quietly reached for. Weekly AI use at work nearly doubled between mid-2024 and mid-2025, according to Gallup. Coding, summarization, document handling, internal research, and customer support were the first functions where the change became visible — not because companies mandated it, but because individual workers found the tools useful enough to keep using them. The pattern that kept returning was adoption from the edges inward: employees experimented on their own, often without formal programs, and the organization followed. What once looked like a technology rollout increasingly looked like a behavior change.

Beyond those first functions, AI also became embedded in search, planning, and workflow assistance. It increasingly stopped being something people tested in demos and became part of normal work habits. That does not mean the value is always proven or adoption always smooth, but AI now looks much more like operational integration than isolated experimentation.

What the coverage also consistently showed, however, is that integration is not the same as value — and adoption is not the same as transformation. The Klarna reversal became the clearest illustration: a company that moved aggressively to replace customer service workers with AI was publicly announcing a push to hire human representatives by mid-2025, its CEO acknowledging that cost had crowded out quality. Research from MIT, Harvard, and BCG told a more granular story: AI helped skilled workers go faster on certain tasks, but the productivity gains were jagged, unequal across roles, and often accompanied by new burdens — more validation work, more output volume, and in some cases, longer total task times. The organizational friction proved as significant as the technical capability. A year ago, this looked like experimentation. Today, it looks like integration — but integration with real limits, real costs, and a reckoning still in progress over who captures the value and who absorbs the disruption.

All Summaries by ReadAboutAI.com
